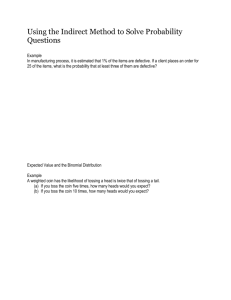

Topic 7: Probability

advertisement

Topic 6: Probability

Dr J Frost (jfrost@tiffin.kingston.sch.uk)

Last modified: 18th July 2013

Slide guidance

Key to question types:

• SMC: Senior Maths Challenge (www.ukmt.org.uk). The level, 1 being the easiest, 5 the hardest, will be indicated.
• BMO: British Maths Olympiad. Those with high scores in the SMC qualify for BMO Round 1. The top hundred students from this go through to BMO Round 2. Questions in these slides will have their round indicated.
• MAT: Maths Aptitude Test. Admissions test for those applying for Maths and/or Computer Science at Oxford University.
• Uni: University Interview. Questions used in university interviews (possibly Oxbridge).
• Frost: A Frosty Special. Questions from the deep dark recesses of my head.
• Classic: Well known problems in maths.
• STEP: STEP Exam. Exam used as a condition for offers to universities such as Cambridge and Bath.


Part 1 – Manipulating Probabilities

Part 2 – Random Variables
  a. Random Variables
  b. Discrete and Continuous Distributions
  c. Mean and Expected Value
  d. Uniform Distributions
  e. Standard Deviation and Variance

Part 3 – Common Distributions
  a. Binomial
  b. Bernoulli
  c. Poisson
  d. Geometric
  e. Normal/Gaussian

Some starting notes

Only some of those reading this will have done a Statistics module at A Level, so only GCSE knowledge is assumed.

There is some overlap with the field of Combinatorics. For probability problems relating to ‘arrangements’ of things, look there instead.

Probability and Stats questions in…

• University interviews: Probability questions frequently come up (although they don't technically require any more than GCSE theory). In my experience, applicants tend to do particularly badly at these questions.
• SMC: Harder probability questions are quite rare (although one appeared towards the end of 2012’s paper).
• STEP: Two questions at the end of every paper. You could avoid these, but you broaden your choice if you prepare for them.
• BMO: Used to be moderately common, but less so nowadays.

But some basic probability/statistics will broaden your maths ‘general knowledge’. You’ll know, for example, what scientists at CERN mean when they refer in the news to the “5𝜎 test” needed to verify that a new particle has been discovered!

Topic 6 – Probability

Part 1: Manipulating Probabilities

Events and Sets

An event is a set of outcomes. For example, “even number thrown on a die” = {2, 4, 6}.

Given that events can be represented as sets, we can use set operations. Suppose $E = \{2, 4, 6\}$, say the event of throwing an even number, and $P = \{2, 3, 5\}$, say the event of throwing a prime number. Then:

$$E \cap P = \{2\}$$
$$E \cup P = \{2, 3, 4, 5, 6\}$$

∩ means set intersection. It gives the items which are members of both sets. It represents “numbers that are even AND prime”.

∪ means set union. It gives the items which are members of either set (or both). It represents “numbers that are even OR prime”.
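The same operations can be tried directly with Python's built-in `set` type. This is a minimal sketch using the die events $E$ and $P$ above; the helper `prob` is a hypothetical name, and it assumes a fair die so that probabilities can be found by counting equally likely outcomes.

```python
from fractions import Fraction

# Sample space for one throw of a fair die
omega = {1, 2, 3, 4, 5, 6}
E = {2, 4, 6}  # even number thrown
P = {2, 3, 5}  # prime number thrown

def prob(event):
    """Probability of an event when every outcome in omega is equally likely."""
    return Fraction(len(event & omega), len(omega))

both = E & P    # intersection: even AND prime
either = E | P  # union: even OR prime

print(both, prob(both))      # {2} 1/6
print(either, prob(either))  # {2, 3, 4, 5, 6} 5/6
```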

GCSE Recap

When $A$ and $B$ are mutually exclusive (i.e. $A$ and $B$ can’t happen at the same time, or more formally $A \cap B = \emptyset$, where $\emptyset$ is the ‘empty set’):
$$P(A \cup B) = P(A) + P(B)$$

When $A$ and $B$ are independent (i.e. $A$ and $B$ don’t influence each other):
$$P(A \cap B) = P(A) \times P(B)$$

When $A$ and $B$ are mutually exclusive:
$$P(A \cap B) = 0$$

More useful identities

When $A$ and $B$ are not mutually exclusive (in fact this holds for any two events):
$$P(A \cup B) = P(A) + P(B) - P(A \cap B)$$

When $A$ and $B$ are independent, substituting $P(A \cap B) = P(A)P(B)$ gives:
$$P(A \cup B) = P(A) + P(B) - P(A)P(B)$$
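Both identities can be checked by brute-force counting on a fair die. In this sketch $E$ and $P$ (from the sets example) test the general rule, while $A$ = even and $B$ = {1, 2} are a hypothetical pair chosen because they happen to be independent ($\frac{1}{6} = \frac{1}{2} \times \frac{1}{3}$).

```python
from fractions import Fraction

omega = {1, 2, 3, 4, 5, 6}

def prob(event):
    # Fair die assumed: all outcomes equally likely
    return Fraction(len(event), len(omega))

# General addition rule: P(E ∪ P) = P(E) + P(P) - P(E ∩ P)
E, P = {2, 4, 6}, {2, 3, 5}
assert prob(E | P) == prob(E) + prob(P) - prob(E & P)

# Independent events: P(A ∩ B) = P(A)P(B), so P(A ∪ B) = P(A) + P(B) - P(A)P(B)
A, B = {2, 4, 6}, {1, 2}
assert prob(A & B) == prob(A) * prob(B)  # 1/6 == 1/2 * 1/3
union_prob = prob(A) + prob(B) - prob(A) * prob(B)
print(union_prob, prob(A | B))  # 2/3 2/3
```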

Conditional probabilities

We might want to express the probability of an event given that another event occurred:

$P(A \mid B)$: the probability that $A$ occurred given that $B$ occurred.

To appreciate conditional probabilities, consider a probability tree:

[Figure: probability tree for picking two counters without replacement. 1st pick: Red 4/7, Green 3/7. 2nd pick after a Red: Red 3/6, Green 3/6.]

The 3/6 on the Red → Green branch represents the probability that a green counter was picked second GIVEN that a red counter was picked first.
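The branch probabilities can be recovered by listing every ordered pair of picks. This sketch assumes a bag of 4 red and 3 green counters picked without replacement (the composition implied by the fractions 4/7 and 3/6 on the tree, not stated on the slide).

```python
from fractions import Fraction
from itertools import permutations

# Assumed bag: 4 red and 3 green counters, picked without replacement
bag = ["R"] * 4 + ["G"] * 3

# Every ordered (1st pick, 2nd pick) pair of distinct counters is equally likely
pairs = list(permutations(range(len(bag)), 2))

first_red = [(i, j) for i, j in pairs if bag[i] == "R"]
red_then_green = [(i, j) for i, j in first_red if bag[j] == "G"]

p_first_red = Fraction(len(first_red), len(pairs))
p_green_given_red = Fraction(len(red_then_green), len(first_red))
print(p_first_red, p_green_given_red)  # 4/7 1/2
```

Restricting attention to the pairs where the first counter is red is exactly what “given” means: the conditional probability is a proportion within that smaller set of outcomes.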

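Using the tree (a first branch with probability $P(A)$ followed by a second branch with probability $P(B \mid A)$, ending at $P(A \cap B)$), we can construct the following identity for conditional probabilities:
$$P(A \cap B) = P(A)\,P(B \mid A) \quad\text{or}\quad P(B \mid A) = \frac{P(A \cap B)}{P(A)}$$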

If $A$ and $B$ are independent, then what is $P(A \mid B)$?

The Common Sense Method: If $A$ and $B$ are independent, then the probability of $A$ occurring is not affected by whether $B$ occurred, so:
$$P(A \mid B) = P(A)$$

The Formal Method:
$$P(A \mid B) = \frac{P(A \cap B)}{P(B)} = \frac{P(A)\,P(B)}{P(B)} = P(A)$$
where the second step holds because $A$ and $B$ are independent.

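Examples (Source: Edexcel)

The events $A$ and $B$ are independent with $P(A) = \frac{1}{4}$ and $P(A \cup B) = \frac{2}{3}$. Find:
1. $P(B)$
2. $P(B' \mid A)$
3. $P(A' \cap B)$

1. $P(A \cup B) = P(A) + P(B) - P(A)P(B)$, so
$$\frac{2}{3} = \frac{1}{4} + P(B) - \frac{1}{4}P(B) \;\Rightarrow\; \frac{5}{12} = \frac{3}{4}P(B) \;\Rightarrow\; P(B) = \frac{5}{9}$$
2. $P(B' \mid A) = P(B')$ because $A$ and $B$ are independent. So $P(B' \mid A) = \frac{4}{9}$.
3. $A'$ and $B$ are also independent, so
$$P(A' \cap B) = P(A') \times P(B) = \frac{3}{4} \times \frac{5}{9} = \frac{15}{36} = \frac{5}{12}$$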
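The three answers can be double-checked with exact fractions. This is a sketch that simply replays the algebra above; the variable names are illustrative.

```python
from fractions import Fraction

p_A = Fraction(1, 4)
p_union = Fraction(2, 3)

# Independence: P(A ∪ B) = P(A) + P(B) - P(A)P(B), so P(B)(1 - P(A)) = P(A ∪ B) - P(A)
p_B = (p_union - p_A) / (1 - p_A)

p_notB_given_A = 1 - p_B          # equals P(B') by independence
p_notA_and_B = (1 - p_A) * p_B    # A' and B are also independent

print(p_B, p_notB_given_A, p_notA_and_B)  # 5/9 4/9 5/12
```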

Bayes’ Rule

Bayes’ Rule relates causes and effects. It allows us to find the probability of the cause given the effect, if we know the probability of the effect given the cause:
$$P(C \mid E) = \frac{P(E \mid C)\,P(C)}{P(E)}$$

Dr House is trying to find the cause of a patient’s disease. He suspects Lupus (as he always does) because of the patient’s kidney failure. The probability that someone has this symptom if they do have Lupus is 0.2. The probability that a random patient has kidney damage is 0.001, and the probability they have Lupus is 0.0001. What is the probability they have Lupus given their observed symptom?
$$P(L \mid K) = \frac{0.2 \times 0.0001}{0.001} = 0.02$$
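Bayes’ Rule is a one-line function. This sketch (the function name `bayes` is illustrative) reproduces the Dr House calculation.

```python
def bayes(p_e_given_c, p_c, p_e):
    """P(C|E) = P(E|C) P(C) / P(E)."""
    return p_e_given_c * p_c / p_e

# E = kidney damage, C = Lupus
p_lupus_given_kidney = bayes(p_e_given_c=0.2, p_c=0.0001, p_e=0.001)
print(round(p_lupus_given_kidney, 6))  # 0.02
```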

But we don’t always need to know the probability of the effect.
$$P(C \mid E) = \frac{P(E \mid C)\,P(C)}{P(E)}$$

Notice that in the distribution $P(C \mid E)$, $E$ is fixed, and the distribution is over different causes, where $\sum_{c \in C} P(c \mid E) = 1$. This suggests we can write:
$$P(C \mid E) = k\,P(E \mid C)\,P(C)$$
where $k$ is a normalising constant that is set to ensure our probabilities add up to 1, i.e. $P(C \mid E) + P(C' \mid E) = 1$. (Comparing with the formula above, $k = 1/P(E)$.)

Question: The probability that a game is called off if it’s raining is 0.7. The probability it’s called off if it didn’t rain (e.g. due to player illness) is 0.05. The probability that it rains on any given day is 0.2.

Andy Murray’s game is called off. What’s the probability that rain was the cause?


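Write down information:
$$P(C \mid R) = 0.7,\quad P(C \mid R') = 0.05,\quad P(R) = 0.2, \text{ so } P(R') = 0.8$$

Then using Bayes’ Rule:
$$P(R \mid C) = k\,P(C \mid R)\,P(R) = 0.14k$$
$$P(R' \mid C) = k\,P(C \mid R')\,P(R') = 0.04k$$

But $P(R \mid C) + P(R' \mid C) = 1$. So $0.18k = 1$, so $k = \frac{50}{9}$.

Then
$$P(R \mid C) = 0.14 \times \frac{50}{9} = \frac{7}{9}$$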
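The normalising-constant trick generalises to any number of causes: multiply each likelihood by its prior, then rescale so the results sum to 1. This is a minimal sketch; the function `posterior` and its dictionary-based interface are illustrative choices, and exact fractions are used so that $k = \frac{50}{9}$ comes out exactly.

```python
from fractions import Fraction

def posterior(likelihoods, priors):
    """Return (k, {cause: P(cause | effect)}) using P(C|E) = k P(E|C) P(C).

    k is chosen so that the posterior probabilities sum to 1.
    """
    unnormalised = {c: likelihoods[c] * priors[c] for c in priors}
    k = 1 / sum(unnormalised.values())
    return k, {c: k * v for c, v in unnormalised.items()}

# P(called off | rain), P(called off | no rain), and P(rain) = 0.2
likelihoods = {"rain": Fraction(7, 10), "no rain": Fraction(5, 100)}
priors = {"rain": Fraction(2, 10), "no rain": Fraction(8, 10)}

k, post = posterior(likelihoods, priors)
print(k, post["rain"])  # 50/9 7/9
```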

Topic 6 – Probability

Part 2: Random Variables

Random Variables

A random variable is a variable which can have multiple values, each with an associated probability.

The variable can be thought of as a ‘trial’ or ‘experiment’, representing something which can have a number of outcomes.

A random variable has 3 things associated with it:
1. The outcomes: the values the random variable can have (e.g. outcomes of the throw of a die), formally known as the ‘support’.
2. A probability function: the probability associated with each outcome.
3. Parameters: constants used in our probability function that can be set (e.g. number of throws).

Example random variables (the symbol ∀ means “for all”):

• The single throw of a fair die. Outcomes: {1, 2, 3, 4, 5, 6}. Parameters: none. Probability function: $P(X = x) = \frac{1}{6}\ \forall x$.
• The single throw of an unfair die. Outcomes: {1, 2, 3, 4, 5, 6}. Parameters: we can set the probability of each outcome, $p_1, p_2, \ldots, p_6$. Probability function: $P(X = x_i) = p_i\ \forall i$.

We use capital letters for random variables. Parameters are values we can control, but they do not change across different outcomes. We’ll see plenty more examples.

For an outcome $x$ and a random variable $X$, we express the probability as $P(X = x)$, meaning “the probability that the random variable $X$ has the outcome $x$”. We sometimes write $p(x)$ for short, with a lowercase p.

For the unfair die, the probability function just picks out the parameter associated with the particular outcome. We sometimes use $x_i$ to mean the $i$th outcome.
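In code, a discrete random variable can be represented as a mapping from outcomes to probabilities. The sketch below is one possible representation; the function names `fair_die` and `unfair_die` and the biased probabilities are hypothetical. Exact fractions are used so the “sum to 1” check is not upset by floating-point rounding.

```python
from fractions import Fraction

def fair_die():
    # No parameters: P(X = x) = 1/6 for all x
    return {x: Fraction(1, 6) for x in range(1, 7)}

def unfair_die(p1, p2, p3, p4, p5, p6):
    # Parameters p1..p6 set the probability of each outcome: P(X = x_i) = p_i
    pmf = dict(zip(range(1, 7), (p1, p2, p3, p4, p5, p6)))
    if sum(pmf.values()) != 1 or any(not 0 <= p <= 1 for p in pmf.values()):
        raise ValueError("probabilities must lie in [0, 1] and sum to 1")
    return pmf

X = fair_die()
# Hypothetical biased die that favours a six
Y = unfair_die(*[Fraction(1, 10)] * 5, Fraction(1, 2))
print(X[3], Y[6])  # 1/6 1/2
```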

Sketching the probability function

It’s often helpful to show the probability function as a graph. Suppose a random variable X represents the single throw of a biased die:

[Figure: bar chart of the probabilities P(X) against the outcomes X = 1, 2, …, 6 for a biased die.]

Discrete vs Continuous Distributions

Discrete distributions are ones where the outcomes are discrete, e.g. the throw of a die, the number of Heads seen in 10 throws, etc.

In contrast, continuous distributions allow us to model things like height, weight, etc.

Here are two possible probability functions:

[Figure, two panels. Discrete: P(X=k) (0.1 to 0.4) against number of times target hit (k = 1 to 4). Continuous: P(X=h) against height of randomly picked person (h, 1.2m to 2.4m).]

Discrete (e.g. P(X=k) against the number of times a target is hit, k):

• Probabilities add up to 1, i.e. $\sum_x p(x) = 1$.
• All probabilities must be between 0 and 1, i.e. $0 \le p(x) \le 1\ \forall x$.

We call the probability function the probability mass function (PMF for short).

Because our probability function is ultimately just a plain old function (provided it meets the above properties), we often see the function written as $f(x)$ rather than $p(x)$.

Continuous Distributions

[Figure: probability density P(X=h) against height of randomly picked person (h), from 1.0m to 2.6m.]

Does it make sense to talk about the probability of someone being exactly 2m? Clearly not, but we could for example find the probability of a height being in a particular range:
$$p(1.4 \le h < 1.6) = \int_{1.4}^{1.6} p(h)\,dh$$
i.e. we find the area under the graph. Note that the area under the entire graph will be 1:
$$\int_{-\infty}^{+\infty} p(x)\,dx = 1$$

The probability associated with a particular value is known as the probability density. Its value alone is not particularly meaningful (and can be greater than 1!), but finding the area in a range gives us a probability mass. This is similar to histograms, where the y-axis is the ‘frequency density’, and finding the area under the bars gives us the frequency.

Probability Density

Question: Archers fire arrows at a target. The probability (density) of an arrow landing a certain distance from the centre of the target is proportional to this distance. No archer is terrible enough that his arrow will be more than 1m from the centre. What’s the probability that an arrow is less than 0.5m from the centre?

Source: Frosty Special

Answer: $p(x \le 0.5\text{m}) = 0.25$

[Figure: P(X=x) against distance of arrow from centre (x); a straight line rising from 0 at x = 0 to 2 at x = 1.]

Probability is proportional to distance, and the maximum distance is 1m. Since the area under the graph must be 1, the maximum probability density must be 2, so that the area of the triangle is ½ × 2 × 1 = 1.

We’re finding the probability of the arrow being between 0m and 0.5m, so we find the area under the graph in this region. This is a triangle with base 0.5 and height 1, so the probability is ½ × 0.5 × 1 = 0.25.


Alternatively, using a cleaner integration approach:

Step 1: Use the information to express the proportionality relationship: $p(x) \propto x$, so $p(x) = kx$.

Step 2: Determine the constant by using the fact that $\int_a^b p(x)\,dx = 1$:
$$\int_0^1 kx\,dx = \frac{k}{2}$$
So $\frac{k}{2} = 1$ and thus $k = 2$.

Step 3: Finally, integrate over the desired range:
$$\int_0^{0.5} 2x\,dx = 0.25$$
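The two integrals can be checked numerically. This sketch uses a simple midpoint-rule integrator (a generic numerical method swapped in for the exact integration above; `integrate` is a hypothetical helper, not a library function). For a linear density like $2x$ the midpoint rule is exact up to floating-point rounding.

```python
def integrate(f, a, b, n=100_000):
    """Approximate the definite integral of f from a to b with the midpoint rule."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))

k = 2  # from Step 2: the integral of k*x over [0, 1] is k/2 = 1

def pdf(x):
    # p(x) = kx on [0, 1], zero elsewhere
    return k * x if 0 <= x <= 1 else 0.0

total = integrate(pdf, 0, 1)            # should be 1
p_within_half = integrate(pdf, 0, 0.5)  # should be 0.25
print(round(total, 6), round(p_within_half, 6))  # 1.0 0.25
```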

Mean and Expected Value

Mean of a Sample

The process of using a random variable to give us some values is known as sampling. For example, we might have measured the heights of a sample of people. The mean of a sample you’ve known how to do since primary school:
$$\bar{x} = \frac{\sum x_i}{n}$$
Archery scores: 57, 94, 25, 42
$$\bar{x} = \frac{57 + 94 + 25 + 42}{4} = 54.5$$

Mean of a Random Variable

But what about the mean of a random variable X? This is known as the “expected value of X”, written E[X]. It can be calculated using
$$E[X] = \sum_x x\,p(x) \quad\text{or}\quad E[X] = \int_{-\infty}^{\infty} x\,p(x)\,dx$$
depending on whether your variable is discrete or continuous.

Example: X is “times target hit out of 3 shots”.

x: 0, 1, 2, 3
P(X=x): 0.25, 0.5, 0.05, 0.2

$$E[X] = 0 \times 0.25 + 1 \times 0.5 + 2 \times 0.05 + 3 \times 0.2 = 1.2$$
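The contrast between a sample mean and an expected value can be seen side by side. This is a sketch with an illustrative helper `expected_value` that weights each outcome by its probability, using the archery table above.

```python
from fractions import Fraction

def expected_value(pmf):
    """E[X] = sum over outcomes of x * p(x) for a discrete random variable."""
    return sum(x * p for x, p in pmf.items())

# X = times target hit out of 3 shots
pmf = {0: Fraction(25, 100), 1: Fraction(50, 100), 2: Fraction(5, 100), 3: Fraction(20, 100)}
assert sum(pmf.values()) == 1

# Sample mean of observed archery scores
scores = [57, 94, 25, 42]
sample_mean = sum(scores) / len(scores)

print(expected_value(pmf), sample_mean)  # 6/5 54.5
```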

Expected Value

Question: Two people randomly think of a real number between 0 and 100. What is the expected difference between their numbers? (i.e. the average range)

(Source: Frosty Special)

(Hint: Make your random variable the difference between the two numbers.)

As with many problems, it’s easier to consider a simpler scenario. Consider, say, just integers between 0 and 10. How many ways can the numbers be chosen if the range is 0? Or the range is 1? Or 2? What do you notice?

Step 1: Use the information to express the proportionality relationship:
$$p(x) \propto 100 - x$$
We can consider the two numbers (with range $x$) as a ‘window’ which we can ‘slide’ in the 0 to 100 region. The bigger the window, the less we can slide it. If they were to choose 0 and 100, we can’t slide at all.

Step 2: Determine the constant by using the fact that $\int_a^b p(x)\,dx = 1$. We have $p(x) = 100k - kx$. Integrating, we get $100kx - \frac{k}{2}x^2$. If the limits are 0 and 100, we get $5000k = 1$, so $k = \frac{1}{5000}$.


Step 3: Finally, given our known PDF, find E[X]:
$$E[X] = \int_0^{100} x\,p(x)\,dx = \int_0^{100} x \cdot k(100 - x)\,dx = \frac{1}{5000}\left[50x^2 - \frac{x^3}{3}\right]_0^{100} = 33\tfrac{1}{3}$$

One of the harder problem sheet exercises is to consider what happens when we introduce a 3rd number!
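A Monte Carlo simulation is a handy sanity check on the integral. This sketch assumes both numbers are drawn independently and uniformly from [0, 100] and uses a fixed seed so runs are reproducible; the estimate should land close to $33\tfrac{1}{3}$ but will not match it exactly.

```python
import random

def estimate_expected_difference(trials=200_000, upper=100.0, seed=1):
    """Monte Carlo estimate of E|U - V| for U, V independent and uniform on [0, upper]."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        total += abs(rng.uniform(0, upper) - rng.uniform(0, upper))
    return total / trials

def exact_expected_difference(upper=100.0):
    # Integral of x * k(upper - x) with k = 2 / upper**2 (k = 1/5000 when upper = 100)
    return upper / 3

est = estimate_expected_difference()
print(round(est, 2), round(exact_expected_difference(), 2))
```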

Modifying Random Variables

We often modify the value of random variables.

Example: X = outcome of a single throw of a die, Y = outcome of another die.

Consider X + 1. What does it mean? We add 1 to all the outcomes of the die (i.e. we now have 2 to 7). The probabilities remain unaffected.

How does the expected value change? Clearly the mean value will also increase by one, i.e.:
$$E[X + 1] = E[X] + 1$$
In general:
$$E[aX + b] = aE[X] + b$$


Now consider X + Y. What does it mean? We consider all possible outcomes of X and Y, and combine them by adding them. The new set of outcomes is 2 to 12. Clearly we need to recalculate the probabilities.
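The difference between the two modifications shows up clearly in code: $aX + b$ just relabels outcomes, whereas $X + Y$ needs new probabilities built from every pair of outcomes. This sketch assumes two fair, independent dice; `transform` and `add_independent` are illustrative names.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

die = {x: Fraction(1, 6) for x in range(1, 7)}

def expected_value(pmf):
    return sum(x * p for x, p in pmf.items())

def transform(pmf, a, b):
    """PMF of aX + b: outcomes move, probabilities are unchanged (assumes a != 0)."""
    return {a * x + b: p for x, p in pmf.items()}

def add_independent(pmf_x, pmf_y):
    """PMF of X + Y for independent X and Y: probabilities must be recalculated."""
    out = Counter()
    for (x, px), (y, py) in product(pmf_x.items(), pmf_y.items()):
        out[x + y] += px * py
    return dict(out)

print(expected_value(transform(die, 1, 1)))  # 9/2, i.e. E[X] + 1
total = add_independent(die, die)
print(min(total), max(total), total[7])      # 2 12 1/6
```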

Uniform Distribution

A uniform distribution is one where all outcomes are equally likely.

Discrete Example: You throw a fair die. What’s the probability of each outcome?
$$p(\text{any outcome}) = \frac{1}{6}$$
(This ensures the probabilities add up to 1.)

Continuous Example: You’re generating a random triangle. You pick an angle in the range $0 < \theta < \frac{\pi}{2}$ to use to construct your triangle, chosen from a uniform distribution. What is the probability (density) of picking a particular angle?
$$p(\theta) = \begin{cases} \frac{2}{\pi}, & \text{if } 0 < \theta < \frac{\pi}{2} \\ 0, & \text{otherwise} \end{cases}$$
This ensures the area under your PDF graph (a rectangle with width $\frac{\pi}{2}$ and height $\frac{2}{\pi}$) is 1.

Standard Deviation and Variance

Standard deviation gives a measure of ‘spread’. It can roughly be thought of as the average distance of values from the mean. It’s often represented by the letter $\sigma$. The variance is the standard deviation squared, i.e. $\sigma^2$.

Variance of a Sample

We find the average of the squares of the displacements from the mean.

Example: 1cm, 4cm, 7cm, 12cm. Mean = 6cm. Displacements are −5, −2, 1, 6. So the variance is:
$$\frac{(-5)^2 + (-2)^2 + 1^2 + 6^2}{4} = \frac{66}{4} = 16.5\ \text{cm}^2$$

Variance of a Random Variable

This is very similar to the sample variance. We’re finding the average of the squared displacement from the mean, i.e.:
$$\mathrm{Var}[X] = E[(X - \mu)^2]$$
Using the linearity of expectation ($E[aX + b] = aE[X] + b$, and the expected value of a sum is the sum of the expected values):
$$\begin{aligned}
E[(X - \mu)^2] &= E[X^2 - 2\mu X + \mu^2] \\
&= E[X^2] - E[2\mu X] + E[\mu^2] && \text{(the expected value of a constant is just the constant itself)} \\
&= E[X^2] - 2\mu E[X] + \mu^2 \\
&= E[X^2] - 2E[X]^2 + E[X]^2 && \text{(since } \mu = E[X]\text{)} \\
&= E[X^2] - E[X]^2
\end{aligned}$$
i.e. we can find the “mean of the squares minus the square of the mean”.

Example: Find the variance of this biased spinner (which just has the values 1 and 2), represented by the random variable X.

k: 1, 2
P(X=k): 0.6, 0.4

$$E[X] = 0.6 \times 1 + 0.4 \times 2 = 1.4$$
$$E[X^2] = 0.6 \times 1^2 + 0.4 \times 2^2 = 2.2$$
So
$$\mathrm{Var}[X] = E[X^2] - E[X]^2 = 2.2 - 1.4^2 = 0.24$$
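Both routes to the variance (the definition $E[(X - \mu)^2]$ and the shortcut $E[X^2] - E[X]^2$) can be compared on the spinner. This sketch uses illustrative function names, and it also recomputes the earlier sample variance, dividing by $n$ as the slides do.

```python
from fractions import Fraction

def expected_value(pmf):
    return sum(x * p for x, p in pmf.items())

def variance_by_definition(pmf):
    # Var[X] = E[(X - mu)^2]
    mu = expected_value(pmf)
    return sum((x - mu) ** 2 * p for x, p in pmf.items())

def variance_shortcut(pmf):
    # Var[X] = E[X^2] - E[X]^2 ("mean of the squares minus the square of the mean")
    return sum(x * x * p for x, p in pmf.items()) - expected_value(pmf) ** 2

spinner = {1: Fraction(6, 10), 2: Fraction(4, 10)}
print(variance_by_definition(spinner), variance_shortcut(spinner))  # 6/25 6/25

# Sample variance from the lengths example (dividing by n)
lengths = [1, 4, 7, 12]
mean = sum(lengths) / len(lengths)
print(sum((v - mean) ** 2 for v in lengths) / len(lengths))  # 16.5
```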

STEP Question

Fire extinguishers may become faulty at any time after manufacture and are tested annually on the anniversary of manufacture. The time T years after manufacture until a fire extinguisher becomes faulty is modelled by the continuous probability density function:
$$f(t) = \begin{cases} \dfrac{2t}{(1 + t^2)^2}, & \text{for } t \ge 0 \\ 0, & \text{otherwise} \end{cases}$$
A faulty fire extinguisher will fail an annual test with probability p, in which case it is destroyed immediately. A non-faulty fire extinguisher will always pass the test. All of the annual tests are independent.

a) Show that the probability that a randomly chosen fire extinguisher will be destroyed exactly three years after its manufacture is $p(5p^2 - 13p + 9)/10$.

b) Find the probability that a randomly chosen fire extinguisher that was destroyed exactly three years after its manufacture was faulty 18 months after its manufacture.

(We’ll do part (b) a bit later.)

What might be going through your head:
• “I need to consider each of the 3 cases.”
• “I have a PDF. This requires me to use definite integration.”

Since we have a PDF, it makes sense to integrate it so we can find the probability of the extinguisher becoming faulty within some range of times:
$$\int \frac{2t}{(1 + t^2)^2}\,dt = -\frac{1}{1 + t^2} + c$$
The probability the extinguisher becomes faulty sometime in the first year is $\left[-\frac{1}{1+t^2}\right]_0^1 = \frac{1}{2}$, during the second year $\left[-\frac{1}{1+t^2}\right]_1^2 = \frac{3}{10}$, and during the third year $\left[-\frac{1}{1+t^2}\right]_2^3 = \frac{1}{10}$.

Let’s consider the three cases:

Case 1: If it becomes faulty during the first year, it must survive the first two tests before failing the third. This gives a probability of $\frac{1}{2}(1 - p)^2 p$.

Case 2: If it becomes faulty during the second year, it must survive the second test and fail the third, giving $\frac{3}{10}(1 - p)p$ (note that at the first test it is not yet faulty, so the probability of it surviving is 1).

Case 3: If it becomes faulty during the third year, it simply has to fail the third test. We get $\frac{1}{10}p$.

Adding these probabilities together gives us the desired probability:
$$\frac{1}{2}p(1-p)^2 + \frac{3}{10}p(1-p) + \frac{1}{10}p = \frac{p(5p^2 - 13p + 9)}{10}$$
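The algebra in part (a) can be checked with exact fractions. This sketch uses $F(t) = 1 - \frac{1}{1+t^2}$ (the antiderivative above, shifted so that $F(0) = 0$) for the yearly probabilities, then compares the three-case sum against the claimed cubic at eight values of $p$. Two cubics that agree at four or more points are identical, so this confirms the identity rather than just spot-checking it. The function names are illustrative.

```python
from fractions import Fraction

def F(t):
    """Probability of having become faulty by time t: 1 - 1/(1 + t^2)."""
    return 1 - Fraction(1, 1 + t * t)

def p_destroyed_at_year_3(p):
    year1 = F(1) - F(0)  # 1/2
    year2 = F(2) - F(1)  # 3/10
    year3 = F(3) - F(2)  # 1/10
    return (year1 * (1 - p) ** 2 * p   # faulty in year 1: pass tests 1 and 2, fail test 3
            + year2 * (1 - p) * p      # faulty in year 2: pass test 2, fail test 3
            + year3 * p)               # faulty in year 3: fail test 3

def claimed(p):
    return p * (5 * p ** 2 - 13 * p + 9) / 10

for p in [Fraction(n, 7) for n in range(8)]:
    assert p_destroyed_at_year_3(p) == claimed(p)
print("formula verified")
```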

Mean and Variance of Random Variables

A point P is chosen (with uniform distribution) on the circle $x^2 + y^2 = 1$. The random variable $X$ denotes the distance of $P$ from $(1, 0)$. Find the mean and variance of X. [Source: STEP1 1987]

An important first question is how we could choose a random point on the circle with uniform distribution.

Question: Could we for example choose the x coordinate randomly between −1 and 1, and use $x^2 + y^2 = 1$ to determine $y$?

[Figure: points on the circle obtained by choosing x uniformly between −1 and 1.]

No: because the circle is steeper near either side ($x = \pm 1$), a given range of $x$ there covers a longer stretch of arc, so we’d get fewer points per unit length of arc in these regions. Thus we haven’t chosen a point with uniform distribution around the circle. We’d have a similar problem if we were trying to pick a random point on a sphere and picked a random latitude/longitude coordinate (we’d favour the poles).


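In which case, how can we make sure we pick a point randomly?

Introduce a parameter $\theta$ for the angle anticlockwise from the x-axis, say, chosen uniformly from $[0, 2\pi)$. Clearly this doesn’t give bias to certain regions of the arc (satisfying the ‘uniform distribution’ bit).

So what is the distance X?
$$X = 2\sin\frac{\theta}{2}$$
(The triangle formed with the origin is isosceles, with two sides of length 1 and angle $\theta$ between them, so split it into two right-angled triangles.)

So what is E[X]?
$$E[X] = \int_0^{2\pi} 2\sin\frac{\theta}{2} \cdot \frac{1}{2\pi}\,d\theta = \frac{1}{\pi}\int_0^{2\pi} \sin\frac{\theta}{2}\,d\theta = \frac{4}{\pi}$$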
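The mean can be checked numerically, and the same code gives a value to aim for in the variance part. This sketch uses a midpoint-rule integrator (a hypothetical helper) over $\theta$ with density $\frac{1}{2\pi}$. It also estimates $E[X^2]$; since $4\sin^2\frac{\theta}{2} = 2 - 2\cos\theta$, that should come out near 2, giving a variance near $2 - \frac{16}{\pi^2}$.

```python
import math

def integrate(f, a, b, n=100_000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))

def distance(theta):
    # Distance from (cos θ, sin θ) to (1, 0): 2 sin(θ/2)
    return 2 * math.sin(theta / 2)

density = 1 / (2 * math.pi)  # θ uniform on [0, 2π)
mean = integrate(lambda t: distance(t) * density, 0, 2 * math.pi)
second_moment = integrate(lambda t: distance(t) ** 2 * density, 0, 2 * math.pi)
variance = second_moment - mean ** 2

print(round(mean, 6), round(4 / math.pi, 6))
print(round(variance, 6), round(2 - 16 / math.pi ** 2, 6))
```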

Summary

• Random variables have a number of possible outcomes, each with an associated probability.
• Random variables can be discrete or continuous.
• Discrete random variables have an associated probability mass function. We require that $\sum f(x) = 1$ across the domain of the function (i.e. the possible outcomes).
• Continuous random variables have an associated probability density function. Unlike ‘conventional’ probabilities, these can have a value greater than 1. We require that $\int_{-\infty}^{\infty} f(x)\,dx = 1$, i.e. the total area under the graph is 1. We can find a probability mass (i.e. the ‘conventional’ kind of probability) by finding the area under the graph in a particular range, using definite integration.
• While we have a ‘mean’ for a sample, we have an ‘expected value’ for a random variable, written $E[X]$. It can be calculated using $\sum_x x\,p(x)$ for a discrete random variable, and $\int_{-\infty}^{\infty} x\,p(x)\,dx$ for a continuous random variable. The expected value for a fair die, for example, is 3.5.
• The variance gives a measure of spread. More specifically, it’s the average squared distance from the mean. We can calculate it using $\mathrm{Var}[X] = E[X^2] - E[X]^2$, which can be remembered using the mnemonic “mean of the square minus the square of the mean”, or “msmsm”.
• $E[aX + b] = aE[X] + b$, i.e. scaling or shifting our outcomes has the same effect on the mean.

Topic 6 – Probability

Part 3: Common Distributions

Common Distributions

We’ve seen so far that we can build whatever random variable we like using two essential ingredients: specifying the outcomes, and specifying a PMF/PDF that associates a probability with each outcome. But there are a number of well-known distributions for which we already have the outcomes and probability function defined: we just need to set some parameters.

• Bernoulli: e.g. throw of a (possibly biased) coin.
• Multivariate (more commonly called the categorical distribution): e.g. throw of a (possibly biased) die.
• Binomial: e.g. counts the number of heads and tails in 10 throws.
• Multinomial: e.g. counting the number of each face in 10 throws of a die.
• Poisson: e.g. number of cars which pass in the next hour given a known average rate.
• Geometric: e.g. the number of times I have to flip a coin until I see a heads.

We won’t explore these:
• Exponential: e.g. the possible time before a volcano next erupts.
• Dirichlet: e.g. the possible probability distributions for the throw of a die, given I threw a die 60 times and saw 10 ones, 10 twos, 10 threes, 10 fours, 10 fives and 10 sixes.

Bernoulli Distribution

The Bernoulli Distribution is perhaps the simplest distribution. It models an experiment with just two outcomes, often referred to as ‘success’ and ‘failure’.

It might represent the single throw of a coin (where ‘Heads’ could represent a ‘success’).

• Description: a single trial with two outcomes.
• Outcomes: “Failure”/“Success”, or {0, 1}.
• Parameters: p, the probability of success.
• Probability Function:
$$P(X = x) = \begin{cases} 1 - p, & x = 0 \\ p, & x = 1 \end{cases}$$

A trial with just two outcomes is known as a Bernoulli Trial.

A sequence of Bernoulli Trials (all independent of each other) is known as a Bernoulli Process. An example is repeatedly flipping a coin, and recording the result each time.

Binomial Distribution

Suppose I flip a biased coin. Let heads be a ‘success’ and tails be a ‘failure’. Let there be a probability $p$ that I have a success in each throw.

The Binomial Distribution allows us to determine the probability of a given number of successes in n (Bernoulli) trials: in this case, the number of heads in n throws.

Question: If I throw a biased coin (with probability of heads p) 8 times, what is the probability I see 3 heads?

Consider one particular sequence: H H H T T T T T. The probability of this particular sequence is $p^3(1 - p)^5$.

But there are $\binom{8}{3}$ ways in which we could see 3 heads in 8 throws.

Therefore
$$p(3\ \text{Heads}) = \binom{8}{3}p^3(1 - p)^5$$

Therefore, in general, the probability of k successes in n trials is:
$$p(X = k) = \binom{n}{k}p^k(1 - p)^{n-k}$$

• Description: number of ‘successes’ in n trials.
• Outcomes: {0, 1, 2, …, n}, i.e. between 0 and n successes.
• Parameters: $p$, the probability of a single success; $n$, the number of trials.
• Probability Function: $p(X = k) = \binom{n}{k}p^k(1 - p)^{n-k}$

We can write B(n, p) to represent the Binomial Distribution, where n and p are the parameters for the number of trials and probability of a single success.

If we want some random variable X to use this distribution, we can use $X \sim B(n, p)$. The ~ means “has the distribution of”.
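The binomial probability function translates directly into code using `math.comb` (available from Python 3.8). This is a sketch; `binomial_pmf` is an illustrative name, and $p = 0.5$ in the usage line is just an example value for the 3-heads-in-8 question.

```python
from math import comb

def binomial_pmf(k, n, p):
    """P(X = k) for X ~ B(n, p): C(n, k) p^k (1 - p)^(n - k)."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)

# 3 heads in 8 throws of a fair coin (p = 0.5 chosen for illustration)
print(comb(8, 3), binomial_pmf(3, 8, 0.5))  # 56 0.21875
```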

Frost Real-Life Example

While on holiday in Hawaii, I was having lunch with a family, where an unusually high number were left-handed: 5 out of the 8 of us (including myself). I was asked what the probability of this was. (Roughly 10% of the world population is left-handed.)

Suppose X is the random variable representing the number of left-handed people. Then $X \sim B(8, 0.1)$, and
$$P(X = 5) = \binom{8}{5} \times 0.1^5 \times 0.9^3 \approx 1 \text{ in } 2450 \text{ chance}$$

(This example points out one of the assumptions of the Binomial Distribution: that each trial is independent. But this was unlikely to be the case, since most people at the table were related, and left-handedness is in part hereditary. Sometimes when we model a scenario using an ‘off-the-shelf’ distribution, we have to compromise by making simplifying assumptions.)
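The “1 in 2450” figure can be reproduced in a couple of lines. This sketch carries the same simplifying assumption as the slide: the 8 people are treated as independent, each left-handed with probability 0.1.

```python
from math import comb

n, p = 8, 0.1  # 8 people, roughly 10% left-handed (independence assumed)
p_five = comb(n, 5) * p ** 5 * (1 - p) ** 3
print(round(1 / p_five))  # 2450, i.e. roughly a 1 in 2450 chance
```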

Summary of Distributions so far

• Bernoulli (e.g. “What’s the probability of getting a Heads?”). Generalise to n trials: Binomial (e.g. “What’s the probability of getting 3 Heads and 2 Tails?”).
• Generalise Bernoulli to k outcomes: Multivariate (e.g. “What’s the probability of getting a 5?”). Generalise that to n trials: Multinomial (e.g. “What’s the probability of rolling 3 sixes, 2 fours and a 1?”).

Just as a Bernoulli distribution represents a single trial with two outcomes, a multivariate distribution represents a single trial with any number of outcomes.

A multinomial distribution is a generalisation of the Binomial Distribution, which gives us the probability of counts when we have multiple outcomes.

(Use your combinatorics knowledge to try and work out the probability function for this!)

Poisson Distribution

Cars pass you on a road at an average rate of 5 cars a minute. What’s the probability that 3 cars will pass you in the next minute?

When you have a known average ‘rate’ of events occurring, we can use a Poisson Distribution to model the number of events that occur within that period. We use $\lambda$ to represent the average rate, and $k$ is the number of events (e.g. seeing a car) that occur.

[Figure: Poisson probability function P(X = k) plotted against k.]

We can see that when the average rate is 10 (say per minute), we’re most likely to see around 10 cars (for $\lambda = 10$, seeing 9 cars and seeing 10 cars are in fact equally likely). But technically, we could see a million cars (even if the probability is very low!)

Assumptions that the Poisson Distribution makes:
1. All events occur independently (e.g. a car passing you doesn’t affect when the next car will pass you).
2. Events are equally likely to occur at any time (e.g. we’re not any more likely to see cars at the beginning of the period than at the end).

• Description: number of events occurring within a fixed period given an average rate.
• Outcomes: {0, 1, 2, …} up to infinity, i.e. the Poisson Distribution is a DISCRETE distribution.
• Parameters: $\lambda$, the average number of events in that period.
• Probability Function:
$$P(X = k) = \frac{\lambda^k e^{-\lambda}}{k!}$$
where $e$ is Euler’s Number, with the value 2.71…

Example: An active volcano erupts on average 5 times each year. It’s equally likely to erupt at any time.

Q1) What’s the probability that it erupts 10 times next year?
$$p(X = 10) = \frac{5^{10}e^{-5}}{10!} \approx 0.018$$

Q2) What’s the probability that it erupts at all next year?
$$1 - p(X = 0) = 1 - \frac{5^0 e^{-5}}{0!} = 1 - e^{-5} \approx 0.993$$

Q3) What’s the probability that it next erupts between 2 and 3 years after the current date?

i.e. it erupts 0 times in the first year, 0 times in the second year, and at least once in the third year:
$$e^{-5} \times e^{-5} \times (1 - e^{-5}) = e^{-10} - e^{-15}$$
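The Poisson probability function is easy to code with `math.exp` and `math.factorial`. This sketch (with the illustrative name `poisson_pmf`) answers the cars question from the start of the section and repeats the three volcano calculations, treating separate years as independent, as the slide does.

```python
from math import exp, factorial

def poisson_pmf(k, lam):
    """P(X = k) = lam^k e^(-lam) / k! for a Poisson variable with average rate lam."""
    return lam ** k * exp(-lam) / factorial(k)

# Cars: average 5 a minute, probability exactly 3 pass in the next minute
print(round(poisson_pmf(3, 5), 4))  # 0.1404

# Volcano: average 5 eruptions a year
q1 = poisson_pmf(10, 5)            # exactly 10 eruptions next year
q2 = 1 - poisson_pmf(0, 5)         # at least one eruption next year
q3 = poisson_pmf(0, 5) ** 2 * q2   # none in years 1 and 2, at least one in year 3
print(round(q1, 3), round(q2, 3))  # 0.018 0.993
```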

Relationship to the Binomial Distribution

Imagine that we segment this fixed period into a number of smaller chunks of time, in each of which an event can occur (which we’ll describe as a ‘success’), or not occur.

[Figure: a 1 minute period divided into smaller chunks, some labelled “A car passed in this period!”]

If we presumed that we only had at most one car passing in each of these smaller periods of time, then we could use a Binomial Distribution to model the total number of cars that pass across 1 minute, because it models the number of successes.

Of course, multiple cars could actually pass within each smaller segment of time. How would we fix this?

We could simply use smaller chunks of time: in the limit, we have slivers of time so tiny that we couldn’t possibly have two cars passing at exactly the same time.

Now if we’d divided up our time into $n$ chunks where $n$ is large, and we expect an average of $\lambda$ cars to pass, what then is the probability $p$ of a car passing in one chunk of time? (Only Year 8 probability needed!)
$$p = \frac{\lambda}{n}$$

Therefore, as n becomes infinitely large (so our slivers of time become instantaneous moments), we can use the Binomial Distribution to represent the number of events that occur within some period:
$$p(X = k) = \lim_{n \to \infty} \binom{n}{k}\left(\frac{\lambda}{n}\right)^k\left(1 - \frac{\lambda}{n}\right)^{n-k} = \frac{\lambda^k e^{-\lambda}}{k!}$$
We need some fiddly maths to show this; $e$ tends to arise in maths when we have limits.
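The limit can be watched happening numerically: split the period into $n$ chunks, give each chunk success probability $\lambda/n$, and the binomial probability creeps towards the Poisson one as $n$ grows. This is an illustrative sketch only, not a proof; $\lambda = 5$ and $k = 3$ are example values.

```python
from math import comb, exp, factorial

def binomial_pmf(k, n, p):
    return comb(n, k) * p ** k * (1 - p) ** (n - k)

def poisson_pmf(k, lam):
    return lam ** k * exp(-lam) / factorial(k)

lam, k = 5, 3
for n in (10, 100, 1_000, 100_000):
    # n chunks, each with success probability lam / n
    print(n, round(binomial_pmf(k, n, lam / n), 6))
print("Poisson", round(poisson_pmf(k, lam), 6))
```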

Uniform Distribution

We saw earlier that a uniform distribution is where each outcome is equally likely.

• Description: each outcome is equally likely.
• Outcomes: $x_1, x_2, \ldots, x_n$
• Parameters: none.
• Probability Function: $p(X = x_i) = \frac{1}{n}\ \forall i$

Examples: the throw of a fair die, the throw of a fair coin, the possible lottery numbers this week (presuming the ball machine isn’t biased!).

Geometric Distribution

You, Christopher Walken, are captured by the Viet Cong during the Vietnam War, and forced to play Russian Roulette. The gun has 6 slots on the barrel, one of which has a bullet, and the other slots are empty. Before each shot, you rotate the barrel randomly, then shoot at your own head. If you survive, you repeat this ordeal.

Q1) What’s the probability that you die on the first shot? $\frac{1}{6}$

Q2) What’s the probability that you die on the second shot? You survive the first then die on the second: $\frac{5}{6} \times \frac{1}{6} = \frac{5}{36}$

Q3) What’s the probability that you die on the $x$th shot?
$$p(x) = \left(\frac{5}{6}\right)^{x-1} \times \frac{1}{6}$$

If you have a number of trials, where in each trial you can have a ‘success’ or ‘failure’, and you repeat the trial until you have a success (at which point you stop), then the geometric distribution gives you the probability of succeeding on the 1st trial, the 2nd trial, and so on.

• Description: succeeding on the xth trial after previously failing.
• Outcomes: {1, 2, 3, …}, the trial on which you succeed.
• Parameters: the probability $p$ of success.
• Probability Function: $p(x) = (1 - p)^{x-1}p$

Note that if $X \sim \mathrm{Geom}(p)$, then $E[X] = \frac{1}{p}$.

For example, if we threw a fair die until we saw a 1, we’d expect to have to throw the die $1 \div \frac{1}{6} = 6$ times on average before we see a 1 (where the count includes the last throw).

Side Note: The distribution is called ‘geometric’ because if we were to list out the probabilities for $p(1), p(2), p(3)$ and so on, we’d have a geometric series!
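The roulette answers and the $E[X] = \frac{1}{p}$ result can both be checked in code. This sketch (with the illustrative name `geometric_pmf`) evaluates the probability function exactly, then sums $x\,p(x)$ over the first 499 trials; the neglected tail is vanishingly small, so the partial sum sits at 6 to within floating-point rounding.

```python
from fractions import Fraction

def geometric_pmf(x, p):
    """P(first success on trial x) = (1 - p)^(x - 1) p, for x = 1, 2, 3, ..."""
    return (1 - p) ** (x - 1) * p

p = Fraction(1, 6)  # one loaded chamber in six, re-spun before each shot
print(geometric_pmf(1, p), geometric_pmf(2, p))  # 1/6 5/36

# E[X] = 1/p: partial sums of x * P(X = x) approach 6 when p = 1/6
partial = sum(x * geometric_pmf(x, 1 / 6) for x in range(1, 500))
print(round(partial, 6))  # 6.0
```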

Geometric Distribution

Tom and Geri have a competition. Initially, each player has one attempt at hitting a target. If one player hits the target and the other does not then the successful player wins. If both players hit the target, or if both players miss the target, then each has another attempt, with the same rules applying. If the probability of Tom hitting the target is always $\frac{4}{5}$ and the probability of Geri hitting the target is always $\frac{2}{3}$, what is the probability that Tom wins the competition?

A: $\frac{4}{15}$

B: $\frac{8}{15}$

C: $\frac{2}{3}$

D: $\frac{4}{5}$

E: $\frac{13}{15}$

The probability that they both hit or both miss is $\frac{4}{5} \times \frac{2}{3} + \frac{1}{5} \times \frac{1}{3} = \frac{3}{5}$.

So Tom can win by either winning immediately, $\frac{4}{5} \times \frac{1}{3} = \frac{4}{15}$, or initially drawing before winning, $\frac{3}{5} \times \frac{4}{15}$, or drawing twice and then winning, $\left(\frac{3}{5}\right)^2 \times \frac{4}{15}$, and so on. This gives us an infinite geometric series with $a = \frac{4}{15}$ and $r = \frac{3}{5}$. Using $\frac{a}{1-r}$, we get $\frac{2}{3}$.

SMC
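The geometric-series argument for Tom and Geri can be checked both exactly and by simulation. This is a sketch only; the seed and the 100,000 simulated competitions are arbitrary choices, and `tom_wins` is a hypothetical helper.

```python
from fractions import Fraction
import random

tom, geri = Fraction(4, 5), Fraction(2, 3)

a = tom * (1 - geri)                       # Tom hits, Geri misses: 4/15
r = tom * geri + (1 - tom) * (1 - geri)    # both hit or both miss: 3/5
print(a, r, a / (1 - r))                   # 4/15 3/5 2/3

def tom_wins(rng):
    """Play one competition round by round; return True if Tom wins."""
    while True:
        t = rng.random() < 4 / 5   # did Tom hit?
        g = rng.random() < 2 / 3   # did Geri hit?
        if t != g:                 # exactly one hit, so the game ends
            return t

rng = random.Random(7)
n = 100_000
estimate = sum(tom_wins(rng) for _ in range(n)) / n
print(estimate)  # close to 0.667
```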

Frost Real-Life Example

My mum (who works at John Lewis) was selling London Olympics ‘trading cards’, of which there were about 200 different cards to collect, and which could be bought in packs. Her manager was curious how many cards you would have to buy on average before you collected them all. The problem was passed on to me!

(Note: Assume for simplicity that each card is equally likely to be acquired, unlike, say, ‘Pokémon cards’ [a childhood fad I never got into], where lower numbered cards are rarer.)

Hint: Perhaps think of the trials needed to collect the next card as a geometric process?

Then consider these processes all combined together.

Answer: 1176 cards

Explanation on next slide…

Frost Real-Life Example

Answer: 1176 cards

To get the first card, we just need to buy 1 card.

To get the second card, each time we buy a card, we have a $\frac{199}{200}$ chance of buying a new card (if not, we keep buying until we have a new one). Since the number of cards we need to buy to get this next card is geometrically distributed, the expected number of cards is $\frac{1}{p} = \frac{200}{199}$.

Combining the expected numbers of cards we need to buy for each new card, we get

$\frac{200}{200} + \frac{200}{199} + \frac{200}{198} + \cdots + \frac{200}{1} = 200\left(1 + \frac{1}{2} + \frac{1}{3} + \cdots + \frac{1}{200}\right)$

The bracketed expression is the 200th harmonic number, written $H_{200}$ (a partial sum of the ‘Harmonic Series’). Typing “200 * H(200)” into www.wolframalpha.com got me the answer above.
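Instead of Wolfram Alpha, $200 \times H_{200}$ can be computed exactly in Python and compared against a simulation that buys random cards until all 200 have been seen. This is a sketch assuming every card is equally likely, as in the note above; the helper names, seed and 1000 simulated collections are illustrative choices.

```python
from fractions import Fraction
import random

def expected_cards(n):
    """Expected purchases to collect all n equally likely cards: n * H_n."""
    harmonic = sum(Fraction(1, k) for k in range(1, n + 1))  # exact H_n
    return n * harmonic

exact = expected_cards(200)
print(float(exact))  # about 1175.6, i.e. roughly 1176 cards

def simulate_collection(n, rng):
    """Buy uniformly random cards until all n distinct cards have been seen."""
    seen, bought = set(), 0
    while len(seen) < n:
        seen.add(rng.randrange(n))
        bought += 1
    return bought

rng = random.Random(2012)
runs = 1000
average_bought = sum(simulate_collection(200, rng) for _ in range(runs)) / runs
print(average_bought)  # should land near 1176
```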

This problem is more generally known as the “Coupon Collector’s Problem”

http://en.wikipedia.org/wiki/Coupon_collector%27s_problem

Coin Conundrums

How would you model a fair coin given you have just a fair die?

Solution: Easy! Roll the die. If you get, say, an even number, declare ‘Heads’, else declare ‘Tails’.

How would you model a fair die given you have just a fair coin?

Solution: A bit harder! Throw the coin 3 times, giving us 8 possible outcomes. Label the first 6 of these outcomes (e.g. HHH, HHT, …) with the numbers 1 to 6. If we get either of the last two outcomes, reject it and repeat.

An interesting side question is how many times on average we’d expect to have to throw the coin. The probability of being able to stop after a round of 3 throws is $p = \frac{6}{8} = \frac{3}{4}$, so the number of rounds is geometrically distributed with expected value $\frac{1}{p} = \frac{4}{3}$. Since each round uses 3 throws, we expect an average of $3 \times \frac{4}{3} = 4$ throws.
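The rejection scheme above can be sketched in Python as follows, encoding Heads as 0 and Tails as 1 so that three throws give a number from 0 (HHH) to 7 (TTT). The encoding, the seed and the name `fair_die_from_coin` are assumptions made for illustration.

```python
import random

def fair_die_from_coin(rng):
    """Simulate a fair die using only fair coin throws (rejection sampling).

    Returns (face, throws_used). Three throws give 8 equally likely outcomes,
    read as a binary number with H = 0 and T = 1: HHH = 0, ..., THT = 5 map to
    faces 1-6, and the last two outcomes (TTH = 6, TTT = 7) are rejected.
    """
    throws = 0
    while True:
        outcome = 0
        for _ in range(3):
            outcome = 2 * outcome + rng.randint(0, 1)  # one fair throw
            throws += 1
        if outcome < 6:
            return outcome + 1, throws

rng = random.Random(1)
n = 60_000
counts = [0] * 7          # counts[face] for faces 1..6; index 0 unused
total_throws = 0
for _ in range(n):
    face, used = fair_die_from_coin(rng)
    counts[face] += 1
    total_throws += used
print(counts[1:])          # each roughly 10,000
print(total_throws / n)    # close to 4
```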

How would you model a fair coin using an unfair coin?

Solution: Suppose the probability of Heads on the unfair coin is $p$. Then throw this coin two times. We have four outcomes: HH, HT, TH and TT, with probabilities $p^2$, $p(1-p)$, $(1-p)p$ and $(1-p)^2$ respectively. Two of these outcomes have the same probability. So declare ‘Heads’ if you threw HT on the biased coin, ‘Tails’ if you threw TH, and repeat otherwise. (Each pair of throws lets you stop with probability $2p(1-p)$, so you’d expect to have to throw the coin $\frac{2}{2p(1-p)} = \frac{1}{p(1-p)}$ times on average.)
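This pairing idea is commonly known as von Neumann’s trick. Below is a minimal simulation sketch, assuming a bias of $p = 0.8$ purely for illustration; the seed and the name `fair_flip_from_biased` are likewise hypothetical. It checks both that the output is fair and that the average number of throws is near $\frac{1}{p(1-p)} = 6.25$.

```python
import random

def fair_flip_from_biased(p_heads, rng):
    """Throw the biased coin in pairs until the two throws differ.

    HT -> 'H', TH -> 'T'; HH and TT are discarded. Returns (result, throws_used).
    """
    throws = 0
    while True:
        first = rng.random() < p_heads   # True means Heads
        second = rng.random() < p_heads
        throws += 2
        if first != second:
            return ("H" if first else "T"), throws

rng = random.Random(3)
p = 0.8  # assumed bias, for illustration only
n = 50_000
heads = 0
total_throws = 0
for _ in range(n):
    result, used = fair_flip_from_biased(p, rng)
    if result == "H":
        heads += 1
    total_throws += used
print(heads / n)          # close to 0.5
print(total_throws / n)   # close to 1 / (p * (1 - p)) = 6.25
```

The output is fair for any $0 < p < 1$, because HT and TH always have the same probability $p(1-p)$; only the expected number of throws depends on $p$.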

Gaussian/Normal Distribution

A Gaussian/Normal distribution is a continuous distribution which has a ‘bell-curve’ shape. It’s useful for modelling variables whose values cluster around the mean, with the probability dropping off the further you move from it.

IQ is a good example. The mean is (by definition) 100, and the probability drops off symmetrically either side of it, with a Standard Deviation of 15 (by definition).

[Figure: bell curve of IQ (“Intelligence Quotient”) scores $x$, with the axis marked from 70 to 145 in steps of 15]

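Suppose there’s a known mean $\mu$ and a known standard deviation $\sigma$. The distribution is denoted $N(\mu, \sigma^2)$, parameterised by the mean and variance.

Then if $X \sim N(\mu, \sigma^2)$, its probability density function is:

$p(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}$

(Because $X$ is continuous, this is a density rather than a probability: $P(X = x)$ itself is 0 for any single value $x$.)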
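The density formula can be written out directly in Python and cross-checked against `statistics.NormalDist`, which is in the standard library from Python 3.8. This is a sketch; `normal_pdf` is a hypothetical helper name, and the IQ parameters are the ones from the slide.

```python
import math
from statistics import NormalDist

def normal_pdf(x, mu, sigma):
    """Density of N(mu, sigma^2) at x, written out from the formula above."""
    coefficient = 1 / (sigma * math.sqrt(2 * math.pi))
    return coefficient * math.exp(-((x - mu) ** 2) / (2 * sigma ** 2))

# IQ example: mu = 100, sigma = 15
iq_dist = NormalDist(mu=100, sigma=15)
for iq in (70, 100, 130):
    mine = normal_pdf(iq, 100, 15)
    print(iq, round(mine, 6), math.isclose(mine, iq_dist.pdf(iq)))
```

The density is symmetric, so the values at 70 and 130 match, and both are smaller than the peak value at the mean.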

Z-values

We might be interested to know what percentage of the population have an IQ below 130.

The z-value is the number of Standard Deviations above the mean: $z = \frac{x - \mu}{\sigma}$.

Some rather helpful mathematicians have compiled a table of values that give us $P(Z < z)$, i.e. the probability of being below a particular z-value, for different values of $z$. This is, unsurprisingly, known as a z-table.

If $\sigma = 15$ and $\mu = 100$, how many Standard Deviations above the mean is 130?

Answer: 2, since $z = \frac{130 - 100}{15} = 2$.

Z-values

[Figure: z-table] We look up the units and tenths digit of our z-value down the side of the table, and our hundredths digit along the top.

So $P(X \le 130) = 0.9772$.
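In place of a printed z-table, the same lookup can be sketched with Python’s `statistics.NormalDist` (Python 3.8+), whose `cdf` method gives $P(X \le x)$. The variable names are illustrative.

```python
from statistics import NormalDist

mu, sigma = 100, 15
x = 130
z = (x - mu) / sigma          # standard deviations above the mean
print(z)                      # 2.0

# Cumulative probability, either on the standard normal or on N(100, 15^2) directly
p_below = NormalDist().cdf(z)                # P(Z <= 2)
p_below_iq = NormalDist(mu, sigma).cdf(x)    # P(X <= 130), no standardising needed
print(round(p_below, 4), round(p_below_iq, 4))  # 0.9772 0.9772
```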

Z-values

It’s useful to remember that, when a variable is ‘normally distributed’, roughly 68% of values lie within 1 s.d. of the mean, 95% within two, and 99.7% within three.
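The 68/95/99.7 rule of thumb can be recovered from the standard normal cumulative distribution, since the probability of lying within $k$ s.d. of the mean is $P(-k < Z < k)$. A short sketch using `statistics.NormalDist`:

```python
from statistics import NormalDist

std = NormalDist()  # mean 0, standard deviation 1
coverage = {k: std.cdf(k) - std.cdf(-k) for k in (1, 2, 3)}  # P(-k < Z < k)
for k, prob in coverage.items():
    print(k, round(prob * 100, 1))
# 1 68.3
# 2 95.4
# 3 99.7
```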

When scientists referred to the “$5\sigma$” standard needed to officially ‘discover’ the Higgs Boson, they meant this: if the Higgs Boson didn’t exist (known as the null hypothesis), the probability of observing data at least as extreme as theirs purely ‘by chance’ would be no greater than the probability of a normally distributed variable landing $5\sigma$ or more above its mean, about a 1 in 3.5 million chance. A Level students studying S2 will encounter ‘hypothesis testing’.
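The “1 in 3.5 million” figure corresponds to the one-sided tail $P(Z \ge 5)$ of the standard normal. A minimal sketch computing it with `math.erfc`, which avoids the precision lost when subtracting a cumulative probability very close to 1 from 1:

```python
import math

# One-sided tail: probability that a standard normal lands 5 or more s.d. above its mean
p_5_sigma = 0.5 * math.erfc(5 / math.sqrt(2))   # P(Z >= 5) = 1 - Phi(5)
print(p_5_sigma)              # about 2.87e-07
print(round(1 / p_5_sigma))   # about 3.5 million
```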