Maximum likelihood estimates


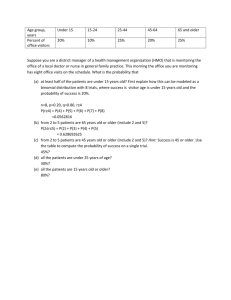

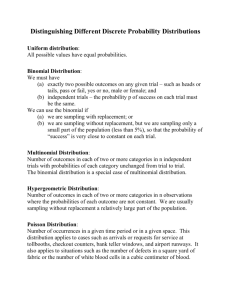

Outline: what maximum likelihood estimates are and why we care, and how they relate to AIC and other model selection criteria.

Maximum Likelihood Estimates (MLE)

Given a model, the MLE(s) are the value(s) of the parameter(s) of interest that make the observed data most probable. That is, they maximize the probability of the data given the model, which is the likelihood of the model given the data. For independent observations, the likelihood of a model is the product of the probabilities of the observations.

Maximum Likelihood Estimation

For linear models (e.g., ANOVA and regression), MLEs are usually determined in closed form by solving the linear equations that minimize the sum of the squared residuals; with normally distributed errors, the least-squares estimates of the coefficients are also the MLEs.

For nonlinear models and some distributions, MLEs can be found in closed form by setting the first derivative of the likelihood (or log-likelihood) equal to zero, then confirming that the solution is a maximum by checking that the second derivative is negative.

Otherwise, we search numerically for the parameter values that maximize the probability of all of the observations (numerical estimation). The search stops when one of several criteria is met:

- the estimate changes by less than a set precision;
- the likelihood changes by less than a set amount;
- a solution seems unlikely, so the search stops after a fixed number of iterations.

Binomial probability: some theory and math, an example, assumptions, adding a link function, and additional assumptions about the βs.

Binomial Sampling

Binomial sampling is characterized by two mutually exclusive events: heads or tails, on or off, dead or alive, used or not used, occupied or not occupied. Such trials are often referred to as Bernoulli trials.

Models

Each trial has an associated parameter p, the probability of success; 1 − p = q is the probability of failure, so p + q = 1. The parameter p also represents a model: a single parameter, p, that is equal for every trial.

p is a continuous variable between 0 and 1 (0 < p < 1), and it is estimated by

$$\hat{p} = \frac{y}{n}$$

where y is the number of successful outcomes and n is the number of trials. This estimator is unbiased, and

$$E(y) = np, \qquad \operatorname{var}(y) = npq = np(1-p),$$

$$\operatorname{var}(\hat{p}) = \frac{pq}{n}, \qquad \widehat{\operatorname{var}}(\hat{p}) = \frac{\hat{p}\hat{q}}{n}, \qquad \widehat{\operatorname{se}}(\hat{p}) = \sqrt{\frac{\hat{p}\hat{q}}{n}}.$$

Binomial Probability Function

$$f(y \mid n, p) = \binom{n}{y} p^{y} (1-p)^{n-y}$$

This is read as "the probability of observing y successes in n trials, given an underlying probability of success p".
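The binomial probability function and the estimator p̂ = y/n translate directly into code. The sketch below is a minimal Python version using only the standard library; the function names `binom_pmf` and `binom_estimates` are our own, chosen for illustration.

```python
from math import comb, sqrt


def binom_pmf(y: int, n: int, p: float) -> float:
    """Binomial probability f(y | n, p) = C(n, y) * p^y * (1 - p)^(n - y)."""
    return comb(n, y) * p**y * (1 - p) ** (n - y)


def binom_estimates(y: int, n: int) -> tuple:
    """Return (p_hat, var_hat, se_hat) with p_hat = y/n and var_hat = p_hat * q_hat / n."""
    p_hat = y / n
    q_hat = 1 - p_hat
    var_hat = p_hat * q_hat / n
    return p_hat, var_hat, sqrt(var_hat)


if __name__ == "__main__":
    # 7 heads in 10 flips of a fair coin.
    print(f"f(7 | 10, 0.5) = {binom_pmf(7, 10, 0.5):.4f}")
    # The probabilities over all possible y sum to 1.
    print(sum(binom_pmf(y, 10, 0.5) for y in range(11)))
    # Estimates for 7 successes in 10 trials.
    p_hat, var_hat, se_hat = binom_estimates(7, 10)
    print(f"p_hat = {p_hat:.2f}, var = {var_hat:.4f}, se = {se_hat:.4f}")
```

For the coin example that follows, `binom_pmf(7, 10, 0.5)` gives 0.1172, the same value Excel's `BINOMDIST(7, 10, 0.5, FALSE)` returns.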
Example: for 10 flips of a fair coin (p = 0.5), 7 of which turn up heads, the probability is written

$$f(7 \mid 10, 0.5) = \binom{10}{7}\, 0.5^{7} (1-0.5)^{10-7}$$

Binomial Probability Function (2)

Evaluated numerically:

$$f(7 \mid 10, 0.5) = \frac{n!}{y!\,(n-y)!}\, p^{y} (1-p)^{n-y} = 120 \times 0.5^{7} \times 0.5^{3} = 0.1172$$

In Excel: =BINOMDIST(y, n, p, FALSE)

Binomial Probability Function (3)

Binomial probabilities for n = 10 and p = 0.5:

| y | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| f(y \| 10, 0.5) | 0.0010 | 0.0098 | 0.0439 | 0.1172 | 0.2051 | 0.2461 | 0.2051 | 0.1172 | 0.0439 | 0.0098 | 0.0010 |

[Figure: f(y | 10, 0.5) plotted against y]

Likelihood Function of Binomial Probability

In reality we have the data (n and y) but do not know the model (p). This leads us to the likelihood function

$$L(p \mid n, y) = \binom{n}{y} p^{y} (1-p)^{n-y}$$

read "the likelihood of p given n and y". It is not a probability function (it need not sum or integrate to 1 over p), but it is a positive function for 0 < p < 1.

Likelihood Function of Binomial Probability (2)

Alternatively, the likelihood of the data given the model can be thought of as the product of the probabilities of the individual observations. The probability of observation i is

$$P(y_i) = p^{f_i} (1-p)^{1-f_i}$$

with f_i = 1 for a success and f_i = 0 for a failure. Therefore

$$L(p \mid n, y) = \prod_{i=1}^{n} p^{f_i} (1-p)^{1-f_i}$$

which differs from the binomial form above only by the constant binomial coefficient.

The binomial probability function and its likelihood

For 10 trials and 7 successes, with the binomial coefficient omitted:

$$L(p \mid 10, 7) = p^{7} (1-p)^{10-7}$$

| p | L(p \| 10, 7) |
|---|---|
| 0.00 | 0.00000000 |
| 0.05 | 0.00000000 |
| 0.10 | 0.00000007 |
| 0.15 | 0.00000105 |
| 0.20 | 0.00000655 |
| 0.25 | 0.00002575 |
| 0.30 | 0.00007501 |
| 0.35 | 0.00017669 |
| 0.40 | 0.00035389 |
| 0.45 | 0.00062169 |
| 0.50 | 0.00097656 |
| 0.55 | 0.00138732 |
| 0.60 | 0.00179159 |
| 0.65 | 0.00210183 |
| 0.70 | 0.00222357 |
| 0.75 | 0.00208569 |
| 0.80 | 0.00167772 |
| 0.85 | 0.00108195 |
| 0.90 | 0.00047830 |
| 0.95 | 0.00008729 |
| 1.00 | 0.00000000 |

[Figure: L(p | 10, 7) plotted against p]

Log likelihood

Although the likelihood function is useful, the log-likelihood has some desirable properties: its terms are additive, and the binomial coefficient, which does not involve p, becomes an additive constant that does not change where the maximum lies.
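As a check on the likelihood table, the following Python sketch (standard library only; the helper names are hypothetical) evaluates L(p | 10, 7) = p^7 (1 − p)^3 on the same grid of p values, without the binomial coefficient as in the table, reports the grid maximum, and confirms that the log of the product equals the sum of the per-trial log-probabilities, which is the additivity that makes the log-likelihood convenient.

```python
from math import log


def likelihood(p: float, n: int, y: int) -> float:
    """L(p | n, y) without the binomial coefficient: p^y * (1 - p)^(n - y)."""
    return p**y * (1 - p) ** (n - y)


def loglik_from_trials(p: float, outcomes: list) -> float:
    """Sum over trials of ln(p^f * (1 - p)^(1 - f)), where f = 1 is a success and f = 0 a failure."""
    return sum(log(p) if f == 1 else log(1 - p) for f in outcomes)


n, y = 10, 7
grid = [i / 20 for i in range(21)]  # p = 0.00, 0.05, ..., 1.00
table = [(p, likelihood(p, n, y)) for p in grid]

for p, lik in table:
    print(f"{p:.2f}  {lik:.8f}")

best_p, best_lik = max(table, key=lambda row: row[1])
print(f"grid maximum: p = {best_p:.2f}, L = {best_lik:.8f}")

# Additivity: ln(product of trial probabilities) equals the sum of ln(trial probabilities).
outcomes = [1] * y + [0] * (n - y)  # 7 successes and 3 failures (order does not matter)
for p in (0.3, 0.5, 0.7):
    print(p, log(likelihood(p, n, y)), loglik_from_trials(p, outcomes))
```

The grid maximum falls at p = 0.70, which is y/n; a finer grid or a numerical search would be needed if the MLE did not happen to lie on the grid.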
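Taking logs of the binomial likelihood gives

$$\ln L = \ln L(p \mid n, y) = \ln\binom{n}{y} + y \ln(p) + (n-y)\ln(1-p)$$

Using the alternative (product) form instead:

$$\ln L(p \mid n, y) = \sum_{i=1}^{n} \ln\left(p^{f_i} (1-p)^{1-f_i}\right)$$

The estimate of p that maximizes the value of ln(L) is the MLE. For the binomial this has a closed form: setting the first derivative to zero,

$$\frac{d \ln L}{dp} = \frac{y}{p} - \frac{n-y}{1-p} = 0 \quad\Longrightarrow\quad \hat{p} = \frac{y}{n},$$

and the second derivative, $-y/p^{2} - (n-y)/(1-p)^{2}$, is negative, so this is a maximum.

Precision

As n increases, precision increases and the variance decreases.

[Figure: likelihood curves L(p | 10, 7) and L(p | 100, 70)]

Properties of MLEs

- Asymptotically normally distributed.
- Asymptotically minimum variance.
- Asymptotically unbiased (as n → ∞).
- One-to-one transformations of MLEs are also MLEs. For example, mean lifespan, $\hat{L} = -1/\ln(\hat{S})$, where $\hat{S}$ is the estimated survival rate, is also an MLE.

Assumptions

- The n trials must be identical, i.e., the population is well defined (e.g., 20 coin flips, 50 Kirtland's warbler nests, 75 radio-marked black bears in the Pisgah Bear Sanctuary).
- Each trial results in one of two mutually exclusive outcomes (e.g., heads or tails, survived or died, successful or failed).
- The probability of success remains constant from trial to trial (homogeneous).
- Trials are independent events (the outcome of one does not depend on the outcome of another).
- y, the number of successes after n trials, is the random variable.

Example: use/non-use survey

Fifty sites (n) were selected at random (or systematically) within a study area.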
Each site was visited once and surveyed for species x. The species was detected at 10 sites (y).

The survey meets the binomial assumptions:

- sites were selected without bias;
- surveys were conducted using the same methods;
- sites could only be used or not used (occupied or not);
- we have no knowledge of habitat differences or species preferences;
- sites are independent.

Additional assumption: perfect detection.

Example: calculating the likelihood

$$L(p \mid 50, 10) = p^{10} (1-p)^{50-10}$$

The variance column is $p(1-p)/n$ with $n = 50$, and SE is its square root.

| p | L(p \| 50, 10) | Variance | SE |
|---|---|---|---|
| 0.00 | 0.00E+00 | 0 | 0 |
| 0.05 | 1.26E-14 | 0.00095 | 0.030822 |
| 0.10 | 1.48E-12 | 0.0018 | 0.042426 |
| 0.15 | 8.66E-12 | 0.00255 | 0.050498 |
| 0.20 | 1.36E-11 | 0.0032 | 0.056569 |
| 0.25 | 9.59E-12 | 0.00375 | 0.061237 |
| 0.30 | 3.76E-12 | 0.0042 | 0.064807 |
| 0.35 | 9.06E-13 | 0.00455 | 0.067454 |
| 0.40 | 1.40E-13 | 0.0048 | 0.069282 |
| 0.45 | 1.40E-14 | 0.00495 | 0.070356 |
| 0.50 | 8.88E-16 | 0.005 | 0.070711 |
| 0.55 | 3.41E-17 | 0.00495 | 0.070356 |
| 0.60 | 7.31E-19 | 0.0048 | 0.069282 |
| 0.65 | 7.80E-21 | 0.00455 | 0.067454 |
| 0.70 | 3.43E-23 | 0.0042 | 0.064807 |
| 0.75 | 4.66E-26 | 0.00375 | 0.061237 |
| 0.80 | 1.18E-29 | 0.0032 | 0.056569 |
| 0.85 | 2.18E-34 | 0.00255 | 0.050498 |
| 0.90 | 3.49E-41 | 0.0018 | 0.042426 |
| 0.95 | 5.45E-53 | 0.00095 | 0.030822 |
| 1.00 | 0.00E+00 | 0 | 0 |

Example: results

MLE = 20% ± 6% of the area is occupied ($\hat{p} = 10/50 = 0.20$, SE ≈ 0.057).

Link functions: adding covariates

A link function connects the covariates, i.e., the data (X), with the response variable (e.g., use or occupancy). This is usually done with the logit link:

$$p_i = \frac{e^{\beta_0 + \beta_1 x_{1i}}}{1 + e^{\beta_0 + \beta_1 x_{1i}}} = \frac{e^{X\beta}}{1 + e^{X\beta}}$$

Nice properties:

- the result is constrained to 0 < p_i < 1;
- the βs can take any value, −∞ < β < +∞.

Additional assumption: the β estimates are normally distributed.

Link function

Binomial likelihood:

$$L(p \mid n, y) = \binom{n}{y} p^{y} (1-p)^{n-y}$$

Substituting the link for p:

$$L(p \mid n, y) = \binom{n}{y} \left(\frac{\exp(X\beta)}{1 + \exp(X\beta)}\right)^{y} \left(1 - \frac{\exp(X\beta)}{1 + \exp(X\beta)}\right)^{n-y}$$

Voilà: logistic regression!

More than one covariate can be included by extending the linear equation inside the logit, e.g. $X\beta = \beta_0 + \beta_1 x_{1i} + \beta_2 x_{2i} + \cdots$.
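To see the link function and numerical estimation working together, here is a minimal Python sketch for the use/non-use example (y = 10 detections at n = 50 sites). It assumes an intercept-only logit model, $p = e^{\beta_0}/(1 + e^{\beta_0})$, with no covariates, and fits β0 by Newton-Raphson, one common numerical search and not necessarily what any particular software uses. Because one-to-one transformations of MLEs are also MLEs, back-transforming the estimate through the link should recover p̂ = y/n = 0.20. The function names are hypothetical.

```python
from math import exp, log, sqrt


def inv_logit(eta: float) -> float:
    """Inverse of the logit link: p = e^eta / (1 + e^eta), strictly between 0 and 1."""
    return exp(eta) / (1.0 + exp(eta))


def fit_intercept_only(y: int, n: int, beta0: float = 0.0,
                       tol: float = 1e-10, max_iter: int = 50) -> float:
    """Newton-Raphson for beta0 in an intercept-only logit model of y successes in n trials.

    Score (first derivative of ln L):          y - n * p
    Information (minus the second derivative): n * p * (1 - p)
    Stops when the step is smaller than tol (precision) or after max_iter iterations.
    """
    for _ in range(max_iter):
        p = inv_logit(beta0)
        step = (y - n * p) / (n * p * (1 - p))
        beta0 += step
        if abs(step) < tol:
            break
    return beta0


y, n = 10, 50  # species detected at 10 of 50 sites
b0_hat = fit_intercept_only(y, n)
p_hat = inv_logit(b0_hat)

print(f"beta0_hat = {b0_hat:.4f}  (closed form logit(y/n) = {log(0.2 / 0.8):.4f})")
print(f"p_hat     = {p_hat:.4f}  (closed form y/n = {y / n:.4f})")
print(f"SE(p_hat) = {sqrt(p_hat * (1 - p_hat) / n):.4f}")
```

With covariates, β0 would become the full linear predictor and the score and information would become a vector and a matrix, but the same iterate-until-the-step-is-small logic applies.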
The βs are the estimated parameters (effects). They may be estimated separately for each period or group, or constrained to be equal, using the data ($x_{ij}$).

Link function

The use rates, or real parameters of interest, are calculated from the βs with the same equation:

$$p_i = \frac{e^{\beta_0 + \beta_1 x_{1i}}}{1 + e^{\beta_0 + \beta_1 x_{1i}}} = \frac{e^{X\beta}}{1 + e^{X\beta}}$$

This is a HUGE concept, and it applies to EVERY estimator we examine. Occupancy and detection probabilities are replaced by a link-function submodel of the covariate(s). Conceivably, every site has a different probability of use, related to the values of its covariates.

Multinomial probability: an example, and adding a link function.

Multinomial Distribution and Likelihoods

The multinomial extends the binomial (and the binomial coefficient) to more than two possible mutually exclusive outcomes. It is nearly always introduced by way of die tossing. Another example is multiple presence/absence surveys at multiple sites.

Binomial Coefficient

The binomial coefficient is the number of ways y successes can be obtained from n trials:

$$\binom{n}{y} = \frac{n!}{y!\,(n-y)!}$$

Example: 7 successes in 10 trials:

$$\binom{10}{7} = \frac{10!}{7!\,3!} = \frac{3\,628\,800}{5040 \times 6} = 120$$

Multinomial coefficient

The multinomial coefficient gives the number of distinct sequences of n die tosses (6 possible faces) that produce the counts $y_1, \ldots, y_6$:

$$\binom{n}{y_1\, y_2\, y_3\, y_4\, y_5\, y_6} = \frac{n!}{y_1!\, y_2!\, y_3!\, y_4!\, y_5!\, y_6!} = \frac{n!}{\prod_{i=1}^{6} y_i!}$$

Example: rolling each die face once in 6 trials:

$$\binom{6}{1\,1\,1\,1\,1\,1} = \frac{6!}{1!\,1!\,1!\,1!\,1!\,1!} = \frac{720}{1} = 720$$

Properties of multinomials

There is dependency among the counts. For example, if a die is thrown and it is not a 1, 2, 3, 4, or 5, then it must be a 6. The counts therefore satisfy

$$\sum_{i=1}^{6} y_i = n$$

| Face | Number | Variable |
|---|---|---|
| 1 | 10 | y1 |
| 2 | 11 | y2 |
| 3 | 13 | y3 |
| 4 | 9 | y4 |
| 5 | 8 | y5 |
| 6 | 9 | y6 |
| TOTAL | 60 | n |

Multinomial probability function

The probability of an outcome or series of outcomes:

$$f(y_i \mid n, p_i) = \binom{n}{y_1\, y_2\, y_3\, y_4\, y_5\, y_6} p_1^{y_1} p_2^{y_2} p_3^{y_3} p_4^{y_4} p_5^{y_5} p_6^{y_6}, \qquad \sum_{i=1}^{6} p_i = 1$$

Die example 1

The probability of rolling a fair die ($p_i = 1/6$) six times (n = 6) and turning up each face exactly once ($y_i = 1$) is:

$$f(1,1,1,1,1,1 \mid 6, \tfrac{1}{6}, \tfrac{1}{6}, \tfrac{1}{6}, \tfrac{1}{6}, \tfrac{1}{6}, \tfrac{1}{6}) = \frac{6!}{1!\,1!\,1!\,1!\,1!\,1!} \left(\tfrac{1}{6}\right)^{1} \left(\tfrac{1}{6}\right)^{1} \left(\tfrac{1}{6}\right)^{1} \left(\tfrac{1}{6}\right)^{1} \left(\tfrac{1}{6}\right)^{1} \left(\tfrac{1}{6}\right)^{1} = 0.01543$$

Dependency:

$$\sum_{i=1}^{6} p_i = \tfrac{1}{6} + \tfrac{1}{6} + \tfrac{1}{6} + \tfrac{1}{6} + \tfrac{1}{6} + \tfrac{1}{6} = 1 \qquad (\text{each } p_i \approx 0.167)$$

Example 2

The probability of rolling two 2s, three 3s, and one 4 in six rolls is:

$$f(0,2,3,1,0,0 \mid 6, \tfrac{1}{6}, \ldots, \tfrac{1}{6}) = \frac{6!}{0!\,2!\,3!\,1!\,0!\,0!} \left(\tfrac{1}{6}\right)^{0} \left(\tfrac{1}{6}\right)^{2} \left(\tfrac{1}{6}\right)^{3} \left(\tfrac{1}{6}\right)^{1} \left(\tfrac{1}{6}\right)^{0} \left(\tfrac{1}{6}\right)^{0} = \frac{720}{12} \left(\tfrac{1}{6}\right)^{6} \approx 0.001286$$

Likelihood

As you might expect, the likelihood of the multinomial is of greater interest to us: we frequently have data ($n, y_1, \ldots, y_m$) and are seeking to determine the model ($p_1, \ldots, p_m$). The likelihood for our die example is:

$$L(p_i \mid n, y_i) = \binom{n}{y_1\, y_2\, y_3\, y_4\, y_5\, y_6} p_1^{y_1} p_2^{y_2} p_3^{y_3} p_4^{y_4} p_5^{y_5} p_6^{y_6} = \binom{n}{y_1 \cdots y_m} \prod_{i=1}^{m} p_i^{y_i}$$

Log-likelihood

This likelihood has all of the same properties we discussed for the binomial case, and we usually solve for the $p_i$ that maximize ln(L):

$$\ln L(p_i \mid n, y_i) = \ln\binom{n}{y_1 \cdots y_m} + y_1 \ln p_1 + y_2 \ln p_2 + \cdots + y_m \ln p_m = \ln\binom{n}{y_1 \cdots y_m} + \sum_{i=1}^{m} y_i \ln p_i$$

Ignoring the multinomial coefficient (a constant):

$$\ln L(p_i \mid n, y_i) = \sum_{i=1}^{m} y_i \ln p_i$$

that is, the log-likelihood is the sum of data × ln(probabilities).

Presence-absence surveys & multinomials

Procedure:

- Select a sample of sites.
- Conduct repeated presence-absence surveys at each site (usually temporal replication, sometimes spatial replication).
- Record the presence or absence of the species during each survey.
- Build encounter histories for each site and species.

Encounter history matrix

Each row represents a site and each column a sampling occasion. On each occasion, each species is recorded as 1 if encountered (captured) and 0 if not encountered.

| Site No. | Occasion 1 | Occasion 2 | Occasion 3 |
|---|---|---|---|
| 211 | 0 | 0 | 1 |
| 212 | 0 | 0 | 1 |
| 213 | 0 | 1 | 0 |
| 214 | 1 | 0 | 0 |
| 215 | 1 | 0 | 1 |
| 216 | 1 | 1 | 0 |
| 217 | 1 | 1 | 1 |
| 218 | 0 | 0 | 1 |
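The die calculations and the log-likelihood kernel Σ y_i ln p_i can be reproduced with a short Python sketch (standard library only; the function names are our own). It computes the multinomial coefficient and probability for the two die examples, then evaluates the kernel for the 60-roll face counts under a fair-die model and under the observed proportions p̂_i = y_i/n, which are the multinomial MLEs and therefore should give the larger value.

```python
from math import factorial, log, prod


def multinomial_coef(counts: list) -> int:
    """n! / (y_1! y_2! ... y_m!), where n is the sum of the counts."""
    return factorial(sum(counts)) // prod(factorial(y) for y in counts)


def multinomial_pmf(counts: list, probs: list) -> float:
    """f(y_1, ..., y_m | n, p_1, ..., p_m) = coefficient * prod(p_i ^ y_i)."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("cell probabilities must sum to 1")
    return multinomial_coef(counts) * prod(p**y for p, y in zip(probs, counts))


def loglik_kernel(counts: list, probs: list) -> float:
    """sum(y_i * ln p_i): the log-likelihood with the constant coefficient dropped."""
    return sum(y * log(p) for y, p in zip(counts, probs) if y > 0)


fair = [1 / 6] * 6
print(multinomial_coef([7, 3]))                            # 120, the binomial special case 10!/(7!3!)
print(multinomial_coef([1, 1, 1, 1, 1, 1]))                # 720, each face once in 6 rolls
print(f"{multinomial_pmf([1, 1, 1, 1, 1, 1], fair):.5f}")  # 0.01543
print(f"{multinomial_pmf([0, 2, 3, 1, 0, 0], fair):.6f}")  # 0.001286

faces = [10, 11, 13, 9, 8, 9]                              # counts from the 60-roll table
p_mle = [y / sum(faces) for y in faces]                    # MLEs: p_i = y_i / n
print(loglik_kernel(faces, fair), loglik_kernel(faces, p_mle))
```

Dropping the coefficient does not move the maximum, so comparing kernels is enough to compare models fitted to the same counts.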
Encounter history: example

For sites sampled on m = 3 occasions there are $2^m = 2^3 = 8$ possible encounter histories (1 = detected during the survey, 0 = not detected during the survey). In this example, 10 sites were sampled 3 times (not enough for a good estimate), and a separate encounter history is kept for each species.

| $y_i$ | Encounter history |
|---|---|
| 1 | 1 0 0 |
| 2 | 1 0 1 |
| 0 | 1 1 0 |
| 1 | 1 1 1 |
| 1 | 0 1 0 |
| 0 | 0 1 1 |
| 3 | 0 0 1 |
| 2 | 0 0 0 |
| 10 | (total sites) |

Each encounter (capture) history is a possible outcome, analogous to one face of the die. The data consist of the number of times each history appears ($y_i$).

Each encounter history also has an associated probability ($p_i$), and each $p_{ij}$ can be different.

Log-likelihood example

To calculate the log-likelihood:

1. Calculate the log of the probability of each encounter history, $\ln(P_i)$.
2. Multiply $\ln(P_i)$ by the number of times the history was observed ($y_i$).
3. Sum the products.

Link function in binomial

As before, substituting the logit link for p in the binomial likelihood,

$$L(p \mid n, y) = \binom{n}{y} \left(\frac{\exp(X\beta)}{1 + \exp(X\beta)}\right)^{y} \left(1 - \frac{\exp(X\beta)}{1 + \exp(X\beta)}\right)^{n-y},$$

gives logistic regression.

Multinomial with link function

Substitute the logit link for the $p_i$:

$$\ln L(p_i \mid n, y_i) = \ln\binom{n}{y_1 \cdots y_m} + \sum_{i=1}^{m} y_i \ln\left(\frac{\exp(X\beta)}{1 + \exp(X\beta)}\right)$$

But wait a minute! Is Pr(occupancy) = Pr(encounter)?

The probability of encounter includes both detection and use (occupancy). Sites with at least one detection (every history except 0 0 0) are known to be used. For the 2 sites with history 0 0 0, the species was either absent or present but not detected. Occupancy analysis estimates each component, detection and occupancy, thus providing conditional estimates of site use.
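The three log-likelihood steps can be made concrete with the 10-site encounter histories. The Python sketch below uses a deliberately naive, hypothetical model in which every site is used and the species is detected with the same probability p on every occasion, so Pr(history) = p^(number of detections) × (1 − p)^(number of non-detections). That is exactly the Pr(occupancy) = Pr(encounter) assumption questioned in the slides: it treats the two 0 0 0 sites as used but missed. It is a baseline for illustration, not an occupancy model, and the function names are our own.

```python
from math import log

# Number of sites (y_i) with each 3-occasion encounter history, from the 10-site example.
counts = {"100": 1, "101": 2, "110": 0, "111": 1,
          "010": 1, "011": 0, "001": 3, "000": 2}


def history_prob(history: str, p: float) -> float:
    """Naive model (assumption): Pr(history) = p^(number of 1s) * (1 - p)^(number of 0s)."""
    detections = history.count("1")
    return p**detections * (1 - p) ** (len(history) - detections)


def loglik(p: float) -> float:
    """Steps 1-3: ln(P_i) for each history, times y_i, summed (coefficient ignored)."""
    return sum(y * log(history_prob(h, p)) for h, y in counts.items() if y > 0)


# The 8 history probabilities form a multinomial: they sum to 1 for any p.
print(sum(history_prob(h, 0.3) for h in counts))

# Grid search for the MLE, compared with the closed form (total detections / total surveys).
grid = [i / 100 for i in range(1, 100)]
p_best = max(grid, key=loglik)
detections = sum(y * h.count("1") for h, y in counts.items())
surveys = sum(y * len(h) for h, y in counts.items())
print(f"grid MLE p = {p_best:.2f}; closed form = {detections}/{surveys} = {detections / surveys:.2f}")
```

In an occupancy model, the probability of a 0 0 0 history would instead combine "used but never detected" and "not used", which is what separates the two components.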