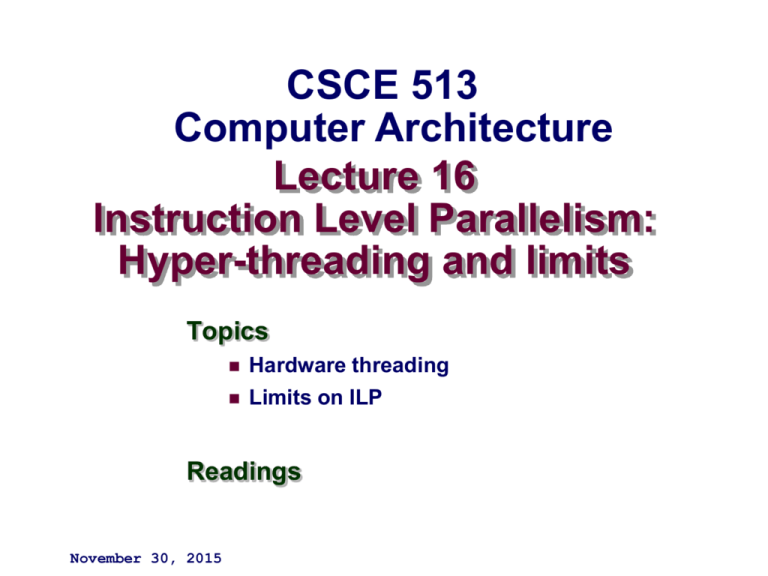

CSCE 513

Computer Architecture

Lecture 16

Instruction Level Parallelism:

Hyper-threading and limits

Topics

Hardware threading

Limits on ILP

Readings

November 30, 2015

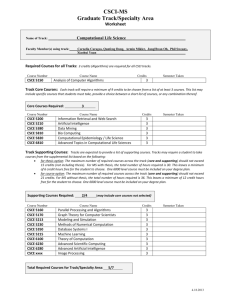

Overview

Last Time

pthreads

Readings for GPU programming

Stanford (iTunes): http://code.google.com/p/stanford-cs193g-sp2010/

UIUC ECE 498 AL : Applied Parallel Programming

http://courses.engr.illinois.edu/ece498/al/

Book (online) David Kirk/NVIDIA and Wen-mei W. Hwu, 2007-2009

http://courses.engr.illinois.edu/ece498/al/Syllabus.html

New

Back to chapter 3

Topics revisited: multiple issue; Tomasulo’s data hazards for the address field

Hyperthreading

Limits on ILP


CSCE 513 Fall 2015

CSAPP – Bryant and O’Hallaron


T1 (“Niagara”)

Target: Commercial server applications

High thread level parallelism (TLP)

Large numbers of parallel client requests

Low instruction level parallelism (ILP)

High cache miss rates

Many unpredictable branches

Frequent load-load dependencies

Power, cooling, and space are major concerns for data centers

Metric: Performance/Watt/Sq. Ft.

Approach: Multicore, Fine-grain multithreading, Simple pipeline, Small L1 caches, Shared L2

T1 Architecture

Also ships with 6 or 4 processors


3/15/2016

CS252 s06 T1


T1 pipeline

Single issue, in-order, 6-deep pipeline: F, S, D, E, M, W

3 clock delays for loads & branches.

Shared units:

L1, L2

TLB

X units

pipe registers

• Hazards:

– Data

– Structural


T1 Fine-Grained Multithreading

Each core supports four threads and has its own level-one caches (16 KB for instructions and 8 KB for data)

Switches to a new thread on each clock cycle

Idle threads are bypassed in the scheduling

A thread is idle when it is waiting due to a pipeline delay or cache miss

Processor is idle only when all 4 threads are idle or stalled

Both loads and branches incur a 3-cycle delay that can only be hidden by other threads

A single set of floating-point functional units is shared by all 8 cores

Floating-point performance was not a focus for T1
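The thread-select policy on this slide can be sketched in C. This is a minimal illustration, not the T1's actual select logic: the function `select_thread`, the `ready` array, and the round-robin starting point are assumptions introduced here. The slide only states that a core switches threads each cycle, bypasses idle or stalled threads, and idles only when all four are unavailable.

```c
#include <assert.h>
#include <stdbool.h>

#define THREADS_PER_CORE 4  /* from the slide: four threads per core */

/*
 * Hypothetical round-robin select (an assumption of this sketch).
 * Starting with the thread after the one that issued last, return the
 * first thread whose ready flag is set. Pass last_issued = -1 before
 * any thread has issued. Returns -1 when every thread is idle or
 * stalled, which is the only case in which the core itself idles.
 */
int select_thread(const bool ready[THREADS_PER_CORE], int last_issued)
{
    assert(last_issued >= -1 && last_issued < THREADS_PER_CORE);

    for (int step = 1; step <= THREADS_PER_CORE; ++step) {
        int candidate = (last_issued + step) % THREADS_PER_CORE;
        if (ready[candidate]) {
            return candidate;  /* not-ready threads were bypassed */
        }
    }
    return -1;  /* all four threads are stalled */
}
```

Called once per cycle with the current ready flags, the function picks a different ready thread each cycle when more than one is available, and falls back to the same thread only when it is the sole ready one.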


Memory, Clock, Power

16 KB 4 way set assoc. I$/ core

8 KB 4 way set assoc. D$/ core

3MB 12 way set assoc. L2 $ shared

4 x 750KB independent banks

crossbar switch to connect

2 cycle throughput, 8 cycle latency

Direct link to DRAM & Jbus

Manages cache coherence for the 8 cores

CAM based directory

Write through

• allocate LD

• no-allocate ST

Coherency is enforced among the L1 caches by a directory associated with each L2 cache block

Used to track which L1 caches have copies of an L2 block

By associating each L2 cache with a particular memory bank and enforcing the subset property, T1 can place the directory at L2 rather than at the memory, which reduces the directory overhead

Because the L1 data cache is write-through, only invalidation messages are required; the data can always be retrieved from the L2 cache

1.2 GHz at 72W typical, 79W peak power consumption

Miss Rates: L2 Cache Size, Block Size

[Figure: chart of L2 miss rate (y-axis 0.0% to 2.5%) for TPC-C and SPECJBB at six L2 configurations (size; block size): 1.5 MB; 32B, 1.5 MB; 64B, 3 MB; 32B, 3 MB; 64B, 6 MB; 32B, 6 MB; 64B. One configuration is annotated "T1".]

Miss Latency: L2 Cache Size, Block Size

[Figure: chart of L2 miss latency (y-axis 0 to 200) for TPC-C and SPECJBB at six L2 configurations (size; block size): 1.5 MB; 32B, 1.5 MB; 64B, 3 MB; 32B, 3 MB; 64B, 6 MB; 32B, 6 MB; 64B. One configuration is annotated "T1".]

CPI Breakdown of Performance

| Benchmark | Per-thread CPI | Per-core CPI | Effective CPI for 8 cores | Effective IPC for 8 cores |
|---|---|---|---|---|
| TPC-C | 7.20 | 1.80 | 0.23 | 4.4 |
| SPECJBB | 5.60 | 1.40 | 0.18 | 5.7 |
| SPECWeb99 | 6.60 | 1.65 | 0.21 | 4.8 |
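The columns of the CPI table are related by simple arithmetic, worked here for the TPC-C row. The sketch assumes the four threads of a core and the eight cores overlap perfectly, which is how the table's values appear to be derived; the subscripted names are introduced here only for illustration.

```latex
\begin{align*}
\text{CPI}_{\text{core}} &= \frac{\text{CPI}_{\text{thread}}}{4} = \frac{7.20}{4} = 1.80 \\
\text{CPI}_{\text{8 cores}} &= \frac{\text{CPI}_{\text{core}}}{8} = \frac{1.80}{8} = 0.225 \approx 0.23 \\
\text{IPC}_{\text{8 cores}} &= \frac{1}{\text{CPI}_{\text{8 cores}}} = \frac{8}{1.80} \approx 4.4
\end{align*}
```

The same steps give 1.40, 0.18, and 5.7 for SPECJBB.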


Not Ready Breakdown

[Figure: chart of the fraction of cycles not ready (y-axis 0% to 100%) for TPC-C, SPECJBB, and SPECWeb99, broken down into L1 I miss, L1 D miss, L2 miss, Pipeline delay, and Other.]

TPC-C - store buffer full is largest contributor

SPEC-JBB - atomic instructions are largest contributor

SPECWeb99 - both factors contribute


Performance: Benchmarks + Sun Marketing

| Benchmark \ Architecture | Sun Fire T2000 | IBM p5-550 with 2 dual-core Power5 chips | Dell PowerEdge |
|---|---|---|---|
| SPECjbb2005 (Java server software), business operations/sec | 63,378 | 61,789 | 24,208 (SC1425 with dual single-core Xeon) |
| SPECweb2005 (Web server performance) | 14,001 | 7,881 | 4,850 (2850 with two dual-core Xeon processors) |
| NotesBench (Lotus Notes performance) | 16,061 | 14,740 | |


HP marketing view of T1 Niagara

1. Sun’s radical UltraSPARC T1 chip is made up of individual

cores that have much slower single thread performance when

compared to the higher performing cores of the Intel Xeon,

Itanium, AMD Opteron or even classic UltraSPARC

processors.

2. The Sun Fire T2000 has poor floating-point performance, by

Sun’s own admission.

3. The Sun Fire T2000 does not support commercial Linux or

Windows® and requires a lock-in to Sun and Solaris.

4. The UltraSPARC T1, aka CoolThreads, is new and unproven,

having just been introduced in December 2005.

5. In January 2006, a well-known financial analyst downgraded

Sun on concerns over the UltraSPARC T1’s limitation to only

the Solaris operating system, unique requirements, and

longer adoption cycle, among other things. [10]

Where is the compelling value to warrant taking such a risk?


Microprocessor Comparison

| Processor | SUN T1 | Opteron | Pentium D | IBM Power 5 |
|---|---|---|---|---|
| Cores | 8 | 2 | 2 | 2 |
| Instruction issues / clock / core | 1 | 3 | 3 | 4 |
| Peak instr. issues / chip | 8 | 6 | 6 | 8 |
| Multithreading | Fine-grained | No | SMT | SMT |
| L1 I/D in KB per core | 16/8 | 64/64 | 12K uops/16 | 64/32 |
| L2 per core/shared | 3 MB shared | 1 MB / core | 1 MB / core | 1.9 MB shared |
| Clock rate (GHz) | 1.2 | 2.4 | 3.2 | 1.9 |
| Transistor count (M) | 300 | 233 | 230 | 276 |
| Die size (mm^2) | 379 | 199 | 206 | 389 |
| Power (W) | 79 | 110 | 130 | 125 |

Performance Relative to Pentium D

[Figure: chart of performance relative to Pentium D (y-axis 0 to 6.5) for +Power5, Opteron, and Sun T1 on SPECIntRate, SPECFPRate, SPECJBB05, SPECWeb05, and TPC-like.]

Performance/mm^2, Performance/Watt

[Figure: chart of efficiency normalized to Pentium D (y-axis 0 to 5.5) for +Power5, Opteron, and Sun T1 on SPECIntRate/mm^2, SPECIntRate/Watt, SPECFPRate/mm^2, SPECFPRate/Watt, SPECJBB05/mm^2, SPECJBB05/Watt, TPC-C/mm^2, and TPC-C/Watt.]

Niagara 2

Improve performance by increasing threads

supported per chip from 32 to 64

8 cores * 8 threads per core

Floating-point unit for each core, not for each chip

Hardware support for encryption standards AES, 3DES, and elliptic-curve cryptography

Niagara 2 will add a number of 8x PCI Express interfaces directly into the chip in addition to integrated 10 Gigabit Ethernet XAUI interfaces and Gigabit Ethernet ports.

Integrated memory controllers will shift support from DDR2 to FB-DIMMs and double the maximum amount of system memory.

Kevin Krewell, “Sun's Niagara Begins CMT Flood: The Sun UltraSPARC T1 Processor Released”, Microprocessor Report, January 3, 2006


Amdahl’s Law Paper

Gene Amdahl, "Validity of the Single Processor Approach to

Achieving Large-Scale Computing Capabilities", AFIPS

Conference Proceedings, (30), pp. 483-485, 1967.

How long is the paper?

How much of it is Amdahl’s Law?

What other comments about parallelism does it make besides Amdahl’s Law?


Parallel Programmer

Productivity

Lorin Hochstein et al "Parallel Programmer Productivity: A Case Study of

Novice Parallel Programmers." International Conference for High

Performance Computing, Networking and Storage (SC'05). Nov. 2005

What did they study?

What is the argument that novice parallel programmers are a good target for High Performance Computing?

How can one account for variability in talent between programmers?

Which programmers were studied?

Which programming styles were investigated?

How big was the multiprocessor?

How was quality measured?

How was cost measured?


GUSTAFSON’s Law

Amdahl’s Law: Speedup = (s + p) / (s + p/N) = 1 / (s + p/N), with time normalized so that s + p = 1

N = number of processors

s = sequential (serial) time

p = parallel time

For N=1024 processors
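For contrast, the scaled-speedup formulation from Gustafson's "Reevaluating Amdahl's Law" can be written as follows. Here $s$ and $p$ are taken as the serial and parallel fractions of the time measured on the $N$-processor machine, with $s + p = 1$. The value $s = 0.01$ is only an illustrative assumption, not a figure from the slide.

```latex
\begin{align*}
\text{Scaled speedup} &= \frac{s + pN}{s + p} = s + pN = N - (N - 1)\,s \\
\text{For } N = 1024,\ s = 0.01:\quad
\text{Scaled speedup} &= 1024 - 1023 \times 0.01 = 1013.77 \\
\text{Amdahl's Law, same } s \text{ and } N:\quad
\text{Speedup} &= \frac{1}{0.01 + 0.99/1024} \approx 91.2
\end{align*}
```

The two results differ because Amdahl's Law holds the problem size fixed, while the scaled form lets the parallel part of the problem grow with $N$.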

http://mprc.pku.edu.cn/courses/architecture/autumn2005/reevaluating-Amdahls-law.pdf

Scale the problem


Matrix Multiplication revisited

for (i=0; i<n; ++i){
    for (j=0; j<n; ++j){
        for (k=0; k<n; ++k){
            C[i][j] = C[i][j] + A[i][k]*B[k][j];
        }
    }
}

Note n^3 multiplications, n^3 additions, 4n^3 memory references?

How can we improve code?

Stride through A?

B?

C?

Do the references to A[i][k] and C[i][j] work together or against each other on the miss rate?

Blocking
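Blocking can be sketched as below. This is a minimal illustration rather than the lecture's own code: the function name `matmul_blocked`, the flat row-major layout, and the tile size `BLOCK` are assumptions introduced here, and a good tile size depends on the cache being targeted.

```c
#include <assert.h>
#include <stddef.h>

#define BLOCK 32  /* assumed tile edge; tune for the target cache */

static size_t min_size(size_t a, size_t b) { return a < b ? a : b; }

/*
 * C += A * B for n x n row-major matrices stored in flat arrays.
 * The three outer loops walk BLOCK x BLOCK tiles so that the tiles of
 * A, B, and C being combined are reused while they are still cached.
 */
void matmul_blocked(size_t n, const double *A, const double *B, double *C)
{
    assert(A != NULL && B != NULL && C != NULL);

    for (size_t ii = 0; ii < n; ii += BLOCK) {
        for (size_t kk = 0; kk < n; kk += BLOCK) {
            for (size_t jj = 0; jj < n; jj += BLOCK) {
                size_t i_end = min_size(ii + BLOCK, n);
                size_t k_end = min_size(kk + BLOCK, n);
                size_t j_end = min_size(jj + BLOCK, n);
                for (size_t i = ii; i < i_end; ++i) {
                    for (size_t k = kk; k < k_end; ++k) {
                        double a = A[i * n + k];  /* reused across the j loop */
                        for (size_t j = jj; j < j_end; ++j) {
                            /* unit stride through both B and C */
                            C[i * n + j] += a * B[k * n + j];
                        }
                    }
                }
            }
        }
    }
}
```

Inside a tile the loops run in i, k, j order, so the innermost loop walks rows of B and C with unit stride instead of striding down a column of B as the original k-innermost loop does.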


GUSTAFSON’s Law model scaling

Example

Suppose a model is dominated by a matrix multiply in which a given n x n matrix is multiplied a large constant number (k) of times.

kn^3 multiplies and adds (ignore memory references)

If a model of size n=1024 can be executed in 10 minutes on one processor, then using Gustafson’s Law, how big can the model be and still execute in 10 minutes

assuming 1024 processors with 0% serial code?

with 1% serial code?

with 10% serial code?
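One way to set up this exercise is sketched below. It assumes that Gustafson's scaled speedup $s + (1 - s)N$ gives the factor by which total work can grow in the same 10 minutes, and that work grows as $kn^3$, so the matrix dimension grows with the cube root of that factor. Treating the serial fraction $s$ as measured on the 1024-processor run is an assumption of this sketch, and the rounded values are approximate.

```latex
\begin{align*}
k\,n'^3 &= \bigl(s + (1 - s)N\bigr)\,k\,n^3
\quad\Longrightarrow\quad
n' = n\,\bigl(s + (1 - s)N\bigr)^{1/3},
\qquad n = 1024,\ N = 1024 \\
s = 0:\quad    & n' = 1024 \times 1024^{1/3} \approx 1024 \times 10.0794 \approx 10{,}321 \\
s = 0.01:\quad & n' = 1024 \times 1013.77^{1/3} \approx 1024 \times 10.0457 \approx 10{,}287 \\
s = 0.10:\quad & n' = 1024 \times 921.7^{1/3} \approx 1024 \times 9.7319 \approx 9{,}965
\end{align*}
```

Under these assumptions the model dimension grows by roughly a factor of ten in every case, because the cubic cost of the multiply absorbs most of the thousandfold increase in available work.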


Figure 3.26 ILP available in a perfect processor for six of the SPEC92 benchmarks. The first three

programs are integer programs, and the last three are floating-point programs. The floating-point programs

are loop intensive and have large amounts of loop-level parallelism.


Copyright © 2011, Elsevier Inc. All rights Reserved.


Figure 3.30 The relative change in the miss rates and miss latencies when executing with one thread per

core versus four threads per core on the TPC-C benchmark. The latencies are the actual time to return the

requested data after a miss. In the four-thread case, the execution of other threads could potentially hide much of

this latency.


Figure 3.31 Breakdown of the status on an average thread. “Executing” indicates the thread issues an

instruction in that cycle. “Ready but not chosen” means it could issue but another thread has been chosen, and

“not ready” indicates that the thread is awaiting the completion of an event (a pipeline delay or cache miss, for

example).


Figure 3.32 The breakdown of causes for a thread being not ready. The contribution to the “other” category varies. In TPC-C, store buffer full is the largest contributor; in SPEC-JBB, atomic instructions are the largest contributor; and in SPECWeb99, both factors contribute.


Figure 3.35 The speedup from using multithreading on one core on an i7 processor averages 1.28 for the Java

benchmarks and 1.31 for the PARSEC benchmarks (using an unweighted harmonic mean, which implies a

workload where the total time spent executing each benchmark in the single-threaded base set was the same).

The energy efficiency averages 0.99 and 1.07, respectively (using the harmonic mean). Recall that anything above 1.0 for

energy efficiency indicates that the feature reduces execution time by more than it increases average power. Two of the

Java benchmarks experience little speedup and have significant negative energy efficiency because of this. Turbo Boost is

off in all cases. These data were collected and analyzed by Esmaeilzadeh et al. [2011] using the Oracle (Sun) HotSpot

build 16.3-b01 Java 1.6.0 Virtual Machine and the gcc v4.4.1 native compiler.


Figure 3.41 The Intel Core i7 pipeline structure shown with the memory system components. The total pipeline

depth is 14 stages, with branch mispredictions costing 17 cycles. There are 48 load and 32 store buffers. The six

independent functional units can each begin execution of a ready micro-op in the same cycle.


Fermi (2010)

~1.5TFLOPS (SP)/~800GFLOPS (DP)

230 GB/s DRAM Bandwidth


© David Kirk/NVIDIA and Wen-mei W. Hwu, 2007-2009

ECE 498AL, Spring 2010 University of Illinois, Urbana-Champaign
