Dynamical Systems, Stochastic Processes, and Probabilistic Robotics

advertisement

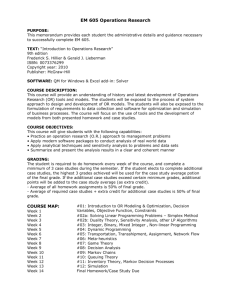

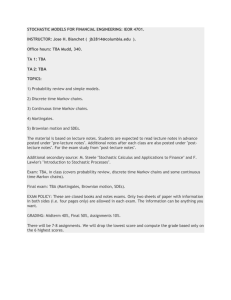

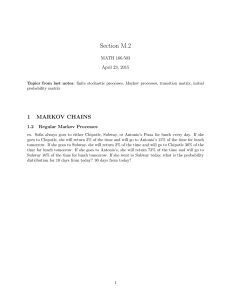

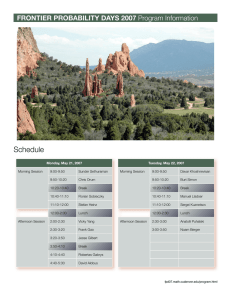

David Rosen

Overview of some of the big ideas in autonomous systems.

Theme: Dynamical and stochastic systems lie at the intersection of mathematics and engineering.

ZOMG ROBOTS!!! Actually, there is no universally accepted definition of a robot. For this talk:
◦ Sensing (what’s going on?)
◦ Decision (what to do?)
◦ Planning (how to do it?)
◦ Actuation & control (follow the plan)

How do we begin to think about this problem?

What’s going on?

How do we know what the state is at a given time? Generally, we have some sensors:
◦ Laser rangefinders
◦ GPS
◦ Vision systems
◦ etc.

Great! Well, not quite...
◦ In general, we can’t measure all state variables directly. Instead, an observation function H : M → O maps the current state x ∈ M to some manifold O of outputs that can be measured directly.
◦ Usually, dim O < dim M.
◦ Given a single observation z = H(x), we can’t determine x uniquely!

Maybe we can use the system dynamics (f) together with multiple observations?

Observability: Is it possible to determine the state of the system from a finite-time sequence of observations?
◦ “Virtual” sensors!

Detectability (weaker): Are all of the unobservable modes of the system stable?

What about noise? In general, uncorrected or unmodeled error accumulates over time.

Stochastic processes: nondeterministic dynamical systems that evolve according to probability distributions.

New model:
$x_t = f(x_{t-1}, u_t, w_t), \qquad z_t = H(x_t, v_t)$
for randomly distributed variables $w_t$ (process noise) and $v_t$ (measurement noise).

We assume that $x_t$ conditionally depends only upon $x_{t-1}$ and the control $u_t$ (completeness):
$p(x_t \mid x_{0:t-1}, z_{1:t-1}, u_{1:t}) = p(x_t \mid x_{t-1}, u_t)$
Stochastic processes that satisfy this condition are called Markov chains.

Similarly, we assume that the measurement $z_t$ conditionally depends only upon the current state $x_t$:
$p(z_t \mid x_{0:t}, z_{1:t-1}, u_{1:t}) = p(z_t \mid x_t)$

Thus, we get a sequence of hidden states and observations like this:
[Figure: hidden states $x_{t-1} \to x_t \to x_{t+1}$, each emitting an observation $z_{t-1}$, $z_t$, $z_{t+1}$]

This is called the hidden Markov model (HMM).

How can we estimate the state of an HMM at a given time? Any ideas? Hint: How might we obtain $p(x_t \mid z_t)$ from $p(z_t \mid x_t)$?
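The following minimal Python sketch makes the HMM concrete. It samples hidden states and observations from a hypothetical two-state model with no control input. The transition matrix `T`, the emission matrix `E`, and the helper names `sample` and `simulate_hmm` are illustrative assumptions, not part of the talk.

```python
import random

# Hypothetical two-state HMM (no control input, for simplicity).
# T[i][j] = p(x_t = j | x_{t-1} = i)   Markov transition model
# E[i][k] = p(z_t = k | x_t = i)       measurement depends only on x_t
T = [[0.9, 0.1],
     [0.2, 0.8]]
E = [[0.8, 0.2],
     [0.3, 0.7]]


def sample(probs, rng):
    """Draw an index according to the probability vector `probs`."""
    return rng.choices(range(len(probs)), weights=probs)[0]


def simulate_hmm(x0, steps, seed=0):
    """Return (states, observations). Each x_t is drawn using only x_{t-1},
    and each z_t is drawn using only x_t."""
    rng = random.Random(seed)
    states, observations = [], []
    x = x0
    for _ in range(steps):
        x = sample(T[x], rng)   # propagate the hidden state
        z = sample(E[x], rng)   # emit a noisy observation of it
        states.append(x)
        observations.append(z)
    return states, observations


states, observations = simulate_hmm(x0=0, steps=10)
print("hidden:  ", states)
print("observed:", observations)
```

An estimator only ever sees the `observed` list. Recovering something about `hidden` from it is exactly the estimation question posed above.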
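Returning to observability: linear time-invariant systems $\dot{x} = Ax$, $z = Cx$ with $n$ state variables have a classical algebraic test (the Kalman rank condition). The system is observable if and only if the matrix stacking $C, CA, \dots, CA^{n-1}$ has rank $n$. The sketch below assumes NumPy and a hypothetical cart with state (position, velocity). It illustrates the “virtual sensor” idea: measuring position alone is enough to recover velocity.

```python
import numpy as np


def observability_matrix(A, C):
    """Stack C, CA, ..., CA^(n-1) for the LTI system x' = Ax, z = Cx."""
    n = A.shape[0]
    return np.vstack([C @ np.linalg.matrix_power(A, k) for k in range(n)])


def is_observable(A, C):
    """Kalman rank test: observable iff the observability matrix has rank n."""
    return np.linalg.matrix_rank(observability_matrix(A, C)) == A.shape[0]


# Hypothetical cart: state x = (position, velocity), position' = velocity.
A = np.array([[0.0, 1.0],
              [0.0, 0.0]])
C_pos = np.array([[1.0, 0.0]])  # sensor reads position only
C_vel = np.array([[0.0, 1.0]])  # sensor reads velocity only

print(is_observable(A, C_pos))  # True: velocity is recoverable ("virtual sensor")
print(is_observable(A, C_vel))  # False: absolute position is never recoverable
```

In the velocity-only case, the unobservable mode (absolute position) has eigenvalue 0 and does not decay. That system is therefore not detectable either.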
Punchline: If we regard probabilities in the Bayesian sense (as degrees of belief), then Bayes’ rule provides a way to optimally update beliefs in response to new data:
$p(x \mid z) = \dfrac{p(z \mid x)\, p(x)}{p(z)}$
This is called Bayesian inference. It also leads to recursive Bayesian estimation.

Define the belief
$bel(x_t) = p(x_t \mid z_{1:t}, u_{1:t})$.
Then by conditional independence in the Markov chain:
$\overline{bel}(x_t) = p(x_t \mid z_{1:t-1}, u_{1:t}) = \int p(x_t \mid x_{t-1}, u_t)\, bel(x_{t-1})\, dx_{t-1}$
and by Bayes’ rule:
$bel(x_t) = \eta\, p(z_t \mid x_t)\, \overline{bel}(x_t)$, where $\eta = 1 / p(z_t \mid z_{1:t-1}, u_{1:t})$ is a normalizing constant.
This shows how to compute $bel(x_t)$ given only $bel(x_{t-1})$, the new measurement $z_t$, and the control input $u_t$.

Recursive filter!
Initialize the filter with the initial belief $bel(x_0) = p(x_0)$.
Recursion step:
◦ Propagate: $\overline{bel}(x_t) = \int p(x_t \mid x_{t-1}, u_t)\, bel(x_{t-1})\, dx_{t-1}$
◦ Update: $bel(x_t) = \eta\, p(z_t \mid x_t)\, \overline{bel}(x_t)$

Benefits of recursion:
◦ Don’t need to remember past observations
◦ Online implementation
◦ Efficient!

Applications:
◦ Guidance
◦ Aerospace tracking
◦ Autonomous mapping (e.g., SLAM)
◦ System identification
◦ etc.

This clip was reportedly sampled from an Air Force training video on missile guidance, circa 1955. It is factually correct. See also:
◦ Turboencabulator
◦ Unobtainium

Rudolf Kalman

How do we identify trajectories of the system with desirable properties?

Controllability: Given two arbitrary specified states p and q, does there exist an admissible control u that drives the system from p to q in finite time?

Reachability: Given an initial state p, what other states can be reached from p along system trajectories in a given length of time?

Stabilizability: Given an arbitrary state p, does there exist an admissible control u that can stabilize the system at p?

Several methods for generating trajectories:
◦ Rote playback
◦ Online synthesis from libraries of moves
◦ etc.

Optimal control: Minimize a cost functional $J[u] = \int_{t_0}^{t_1} L(x(t), u(t))\, dt$ over all admissible controls u whose trajectories have prescribed initial and final states $x(t_0) = x_0$ and $x(t_1) = x_1$.

The Pontryagin Maximum Principle (PMP) provides a set of necessary conditions satisfied by any optimal trajectory. It can often be used to identify the optimal controls of a system.

Lev Pontryagin

Can also derive versions of the PMP for:
◦ State-constrained control
◦ Non-autonomous (i.e., time-dependent) dynamics
◦ etc.

How can we regulate autonomous systems?
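Before turning to regulation, it helps to see the propagate/update recursion on a finite state space. There the integral becomes a sum, giving what is often called a histogram filter. Everything concrete here is a hypothetical assumption for illustration. A robot moves in a circular corridor of `N = 5` cells with doors at cells 0 and 3. A commanded step succeeds with probability 0.8, and otherwise the robot stays put. A door detector is right 90% of the time.

```python
N = 5           # hypothetical circular corridor with 5 cells
DOORS = {0, 3}  # hypothetical map: doors at cells 0 and 3


def motion_model(xt, xp, u):
    """p(x_t | x_{t-1}, u_t): the commanded move of u cells succeeds with
    probability 0.8; otherwise the robot stays put (assumed noise model)."""
    p = 0.0
    if xt == (xp + u) % N:
        p += 0.8
    if xt == xp:
        p += 0.2
    return p


def sensor_model(z, x):
    """p(z_t | x_t): the door detector is right 90% of the time (assumed)."""
    return 0.9 if z == (x in DOORS) else 0.1


def propagate(bel, u):
    """Prediction: bel_bar(x_t) = sum over x_{t-1} of p(x_t | x_{t-1}, u_t) bel(x_{t-1})."""
    return [sum(motion_model(xt, xp, u) * bel[xp] for xp in range(N))
            for xt in range(N)]


def update(bel_bar, z):
    """Correction: bel(x_t) = eta * p(z_t | x_t) * bel_bar(x_t)."""
    unnormalized = [sensor_model(z, x) * b for x, b in enumerate(bel_bar)]
    eta = 1.0 / sum(unnormalized)
    return [eta * w for w in unnormalized]


def bayes_filter(bel0, controls, measurements):
    """Run the recursion; only the current belief is kept between steps."""
    bel = bel0
    for u, z in zip(controls, measurements):
        bel = update(propagate(bel, u), z)
    return bel


bel0 = [1.0 / N] * N                       # uniform initial belief p(x_0)
controls = [0, 1, 1, 1]                    # stay, then step forward three times
measurements = [True, False, False, True]  # door, wall, wall, door
bel = bayes_filter(bel0, controls, measurements)
print([round(b, 3) for b in bel])
print("most likely cell:", max(range(N), key=lambda x: bel[x]))  # -> 3
```

The loop never stores past measurements, which is the “don’t need to remember past observations” benefit listed above. The sequence door, wall, wall, door is only consistent with walking from cell 0 to cell 3, so the belief concentrates on cell 3.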
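Controllability also has a rank test for linear time-invariant systems $\dot{x} = Ax + Bu$. The system is controllable if and only if $[B, AB, \dots, A^{n-1}B]$ has rank $n$. The minimal NumPy sketch below assumes a hypothetical cart with state (position, velocity) and tries two assumed input matrices.

```python
import numpy as np


def controllability_matrix(A, B):
    """[B, AB, ..., A^(n-1) B] for the LTI system x' = Ax + Bu."""
    n = A.shape[0]
    return np.hstack([np.linalg.matrix_power(A, k) @ B for k in range(n)])


def is_controllable(A, B):
    """Kalman rank test: controllable iff the matrix above has rank n."""
    return np.linalg.matrix_rank(controllability_matrix(A, B)) == A.shape[0]


# Hypothetical cart: state x = (position, velocity), position' = velocity.
A = np.array([[0.0, 1.0],
              [0.0, 0.0]])
B_force = np.array([[0.0], [1.0]])  # input acts as an acceleration
B_nudge = np.array([[1.0], [0.0]])  # input only shifts position directly

print(is_controllable(A, B_force))  # True
print(is_controllable(A, B_nudge))  # False: velocity can never be changed
```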
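The PMP’s necessary conditions are described above only in words. The block below sketches the standard form for the normal case of a fixed-endpoint problem. The running cost L, costate λ, and admissible control set U are notation introduced here. The Hamiltonian is written $\mathcal{H}$ to avoid clashing with the observation function H.

```latex
\begin{aligned}
&\text{minimize } J[u] = \int_{t_0}^{t_1} L\big(x(t), u(t)\big)\,dt
  \quad \text{s.t. } \dot{x} = f(x, u),\; x(t_0) = x_0,\; x(t_1) = x_1, \\
&\mathcal{H}(x, u, \lambda) = \lambda^{\top} f(x, u) - L(x, u), \\
&\dot{x}^{*} = \frac{\partial \mathcal{H}}{\partial \lambda}, \qquad
 \dot{\lambda} = -\frac{\partial \mathcal{H}}{\partial x}, \qquad
 u^{*}(t) \in \arg\max_{u \in U} \mathcal{H}\big(x^{*}(t), u, \lambda(t)\big).
\end{aligned}
```

For autonomous dynamics, $\mathcal{H}$ is also constant along an optimal trajectory, and it is zero when $t_1$ is free. In abnormal cases the cost term carries a zero multiplier, which this sketch omits.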
Real-world systems suffer from noise and perturbations. If the underlying system is unstable, even small perturbations can drive the system off the desired trajectory.

We have a desired trajectory that we would like to follow, called the reference, $x_{ref}(t)$. At each time t, we can estimate the actual state of the system, $\hat{x}(t)$. In general, there is some nonzero error $e(t) = x_{ref}(t) - \hat{x}(t)$ at each time t.

Maybe we can find some rule for setting the control input u(t) at each time t as a function of the error e(t) such that the system is stabilized? In that case, we have a feedback control law:
$u(t) = k(e(t))$

Many varieties of feedback controllers:
◦ Proportional-integral-derivative (PID) control
◦ Fuzzy logic control
◦ Machine learning
◦ Model adaptive control
◦ Robust control
◦ H∞ control
◦ etc.

We started with what (at least conceptually) were very basic problems from engineering (e.g., “make this do this”) and ended up investigating all of this:
◦ Dynamical systems
◦ Stochastic processes
◦ Markov chains
◦ The hidden Markov model
◦ Bayesian inference
◦ Recursive Bayesian estimation
◦ The Pontryagin Maximum Principle
◦ Feedback stabilization

...and this is just the introduction!
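PID control, the first of the feedback laws listed above, can be sketched in discrete time as follows. The plant $\dot{x} = a x + u + d$ is hypothetical, with $a = 0.5$ (unstable in open loop) and a constant disturbance $d = -0.3$. The gains, the forward-Euler step, and the names `PID` and `simulate` are also illustrative assumptions. The integral and derivative terms make u depend on the recent history of e, not only on its current value.

```python
class PID:
    """Discrete-time PID controller: u = Kp*e + Ki*integral(e) + Kd*de/dt."""

    def __init__(self, kp, ki, kd, dt):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error = None  # no derivative term on the very first step

    def __call__(self, error):
        self.integral += error * self.dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(controller, x0=0.0, reference=1.0, a=0.5, disturbance=-0.3,
             dt=0.01, steps=1000):
    """Euler-integrate the hypothetical plant x' = a*x + u + d for
    steps*dt seconds, with feedback u = controller(reference - x)."""
    x = x0
    for _ in range(steps):
        u = controller(reference - x)  # feedback: u is computed from the error
        x += dt * (a * x + u + disturbance)
    return x


x_final = simulate(PID(kp=3.0, ki=2.0, kd=0.1, dt=0.01))
x_open = simulate(lambda e: 0.0)  # no feedback at all
print(f"with PID, state after 10 s:  {x_final:.4f}")  # settles near the reference 1.0
print(f"open loop, state after 10 s: {x_open:.1f}")   # drifts far away
```

The integral term is what removes the steady-state offset caused by the constant disturbance. A purely proportional law would settle short of the reference.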