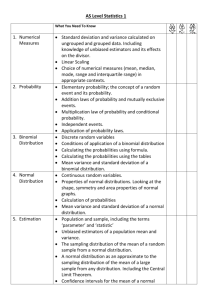

Practical Applications of Statistical Methods in the Clinical Laboratory


Roger L. Bertholf, Ph.D., DABCC
Associate Professor of Pathology
Director of Clinical Chemistry & Toxicology
UF Health Science Center/Jacksonville

“[Statistics are] the only tools by which an opening can be cut through the formidable thicket of difficulties that bars the path of those who pursue the Science of Man.”
[Sir] Francis Galton (1822-1911)

“There are three kinds of lies: Lies, damned lies, and statistics”
Benjamin Disraeli (1804-1881)

What are statistics, and what are they used for?
• Descriptive statistics are used to characterize data
• Statistical analysis is used to distinguish between random and meaningful variations
• In the laboratory, we use statistics to monitor and verify method performance, and interpret the results of clinical laboratory tests

“Do not worry about your difficulties in mathematics, I assure you that mine are greater”
Albert Einstein (1879-1955)

“I don't believe in mathematics”
Albert Einstein

Summation function

$$\sum_{i=1}^{N} x_i = x_1 + x_2 + x_3 + \cdots + x_N$$

Product function

$$\prod_{i=1}^{N} x_i = x_1 \cdot x_2 \cdot x_3 \cdots x_N$$

The Mean (average)
The mean is a measure of the centrality of a set of data.

Mean (arithmetical)

$$\bar{x} = \frac{1}{N}\sum_{i=1}^{N} x_i$$

Mean (geometric)

$$\bar{x}_g = \sqrt[N]{x_1 \cdot x_2 \cdot x_3 \cdots x_N} = \sqrt[N]{\prod_{i=1}^{N} x_i}$$

Use of the Geometric mean: The geometric mean is primarily used to average ratios or rates of change.

Mean (harmonic)

$$\bar{x}_h = \frac{N}{\sum_{i=1}^{N} \dfrac{1}{x_i}}$$

Example of the use of Harmonic mean: Suppose you spend $6 on pills costing 30 cents per dozen, and $6 on pills costing 20 cents per dozen. What was the average price of the pills you bought? You spent $12 on 50 dozen pills, so the average cost is 12/50 = 0.24, or 24 cents per dozen.
This also happens to be the harmonic mean of 20 and 30:

$$\frac{2}{\dfrac{1}{30} + \dfrac{1}{20}} = 24$$

Root mean square (RMS)

$$x_{rms} = \sqrt{\frac{1}{N}\sum_{i=1}^{N} x_i^2} = \sqrt{\frac{x_1^2 + x_2^2 + x_3^2 + \cdots + x_N^2}{N}}$$

For the data set: 1, 2, 3, 4, 5, 6, 7, 8, 9, 10:

| Mean | Value |
|---|---|
| Arithmetic mean | 5.50 |
| Geometric mean | 4.53 |
| Harmonic mean | 3.41 |
| Root mean square | 6.20 |

The Weighted Mean

$$\bar{x}_w = \frac{\sum_{i=1}^{N} x_i w_i}{\sum_{i=1}^{N} w_i}$$

Other measures of centrality
• Mode
• Midrange
• Median

The Mode
The mode is the value that occurs most often.

The Midrange
The midrange is the mean of the highest and lowest values.

The Median
The median is the value for which half of the remaining values are above and half are below it. I.e., in an ordered array of 15 values, the 8th value is the median. If the array has 16 values, the median is the mean of the 8th and 9th values.

Example of the use of median vs. mean: Suppose you’re thinking about building a house in a certain neighborhood, and the real estate agent tells you that the average (mean) size house in that area is 2,500 sq. ft. Astutely, you ask “What’s the median size?” The agent replies “1,800 sq. ft.” What does this tell you about the sizes of the houses in the neighborhood?

Measuring variance
Two sets of data may have similar means, but otherwise be very dissimilar. For example, males and females have similar baseline LH concentrations, but there is much wider variation in females. How do we express quantitatively the amount of variation in a data set?

$$\text{Mean difference} = \frac{1}{N}\sum_{i=1}^{N}(x_i - \bar{x}) = \frac{1}{N}\sum_{i=1}^{N} x_i - \frac{1}{N}\sum_{i=1}^{N}\bar{x} = \bar{x} - \bar{x} = 0$$

The Variance

$$V = \frac{1}{N}\sum_{i=1}^{N}(x_i - \bar{x})^2$$

The variance is the mean of the squared differences between individual data points and the mean of the array. Or, after simplifying, the mean of the squares minus the squared mean:

$$V = \frac{1}{N}\sum_{i=1}^{N}(x_i - \bar{x})^2 = \frac{1}{N}\sum x_i^2 - 2\bar{x}\,\frac{1}{N}\sum x_i + \bar{x}^2\,\frac{1}{N}\sum_{i=1}^{N}(1) = \overline{x^2} - 2\bar{x}^2 + \bar{x}^2 = \overline{x^2} - \bar{x}^2$$

In what units is the variance? Is that a problem?
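The measures of centrality and the variance defined above can be checked numerically. The following is a minimal sketch in plain Python; the function names are illustrative choices, not part of the original slides, and the variance uses the population form (division by N) shown above.

```python
import math

def arithmetic_mean(xs):
    return sum(xs) / len(xs)

def geometric_mean(xs):
    # N-th root of the product, computed through logarithms
    return math.exp(sum(math.log(x) for x in xs) / len(xs))

def harmonic_mean(xs):
    return len(xs) / sum(1.0 / x for x in xs)

def root_mean_square(xs):
    return math.sqrt(sum(x * x for x in xs) / len(xs))

def variance(xs):
    # Population form: mean of the squared deviations from the mean
    m = arithmetic_mean(xs)
    return sum((x - m) ** 2 for x in xs) / len(xs)

data = list(range(1, 11))  # the data set 1, 2, ..., 10 from the slide

print(f"Arithmetic mean  {arithmetic_mean(data):.2f}")
print(f"Geometric mean   {geometric_mean(data):.2f}")
print(f"Harmonic mean    {harmonic_mean(data):.2f}")
print(f"Root mean square {root_mean_square(data):.2f}")

# The simplified form: mean of the squares minus the squared mean
mean_of_squares = sum(x * x for x in data) / len(data)
print(variance(data), mean_of_squares - arithmetic_mean(data) ** 2)
```

Calling `harmonic_mean([20, 30])` reproduces the 24-cent answer from the pill example.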
The Standard Deviation

$$\sigma = \sqrt{V} = \sqrt{\frac{1}{N}\sum_{i=1}^{N}(x_i - \bar{x})^2}$$

The standard deviation is the square root of the variance. Standard deviation is not the mean difference between individual data points and the mean of the array:

$$\frac{1}{N}\sum |x - \bar{x}| \ne \sqrt{\frac{1}{N}\sum (x - \bar{x})^2}$$

In what units is the standard deviation? Is that a problem?

The Coefficient of Variation*

$$CV = \frac{\sigma}{\bar{x}} \times 100$$

*Sometimes called the Relative Standard Deviation (RSD or %RSD)

Standard Deviation (or Error) of the Mean

$$\sigma_{\bar{x}} = \frac{\sigma_x}{\sqrt{N}}$$

The standard deviation of an average decreases by the reciprocal of the square root of the number of data points used to calculate the average.

Exercises
• How many measurements must we average to improve our precision by a factor of 2?

Answer: To improve precision by a factor of 2:

$$\frac{1}{\sqrt{N}} = \frac{1}{2} = 0.5 \qquad \sqrt{N} = \frac{1}{0.5} = 2 \qquad N = 2^2 = 4 \text{ (quadruplicate)}$$

• How many to improve our precision by a factor of 10?

Answer: To improve precision by a factor of 10:

$$\frac{1}{\sqrt{N}} = \frac{1}{10} = 0.1 \qquad \sqrt{N} = \frac{1}{0.1} = 10 \qquad N = 10^2 = 100 \text{ times!}$$

• If an assay has a CV of 7%, and we decide to run samples in duplicate and average the measurements, what should the resulting CV be?

Answer: Improvement in CV by running duplicates:

$$CV_{dup} = \frac{CV}{\sqrt{2}} = \frac{7}{1.414} \approx 4.9\%$$

Population vs. Sample standard deviation
• When we speak of a population, we’re referring to the entire data set, which will have a mean μ:

$$\text{Population mean: } \mu = \frac{1}{N}\sum_i x_i$$

• When we speak of a sample, we’re referring to a subset of the population, whose mean is customarily designated “x-bar”
• Which is used to calculate the standard deviation?

“Sir, I have found you an argument. I am not obliged to find you an understanding.”
Samuel Johnson (1709-1784)

Population vs. Sample standard deviation

$$\sigma = \sqrt{\frac{1}{N}\sum_i (x_i - \mu)^2} \qquad s = \sqrt{\frac{1}{N-1}\sum_i (x_i - \bar{x})^2}$$

Distributions
• Definition

Statistical (probability) Distribution
• A statistical distribution is a mathematically-derived probability function that can be used to predict the characteristics of certain applicable real populations
• Statistical methods based on probability distributions are parametric, since certain assumptions are made about the data

Distributions
• Definition
• Examples

Binomial distribution
The binomial distribution applies to events that have two possible outcomes. The probability of r successes in n attempts, when the probability of success in any individual attempt is p, is given by:

$$P(r; p, n) = p^r (1 - p)^{n - r}\,\frac{n!}{r!\,(n - r)!}$$

Example
What is the probability that 10 of the 12 babies born one busy evening in your hospital will be girls?

Solution

$$P(10; 0.5, 12) = 0.5^{10}\,(1 - 0.5)^{12 - 10}\,\frac{12!}{10!\,(12 - 10)!} = 0.016 \text{ or } 1.6\%$$

Distributions
• Definition
• Examples
– Binomial

“God does arithmetic”
Karl Friedrich Gauss (1777-1855)

The Gaussian Distribution
What is the Gaussian distribution?

[Figure: a list of random numbers (63 81 36 12 28 7 79 52 96 17 22 4 61 85 etc.) with a plot of frequency F against number, 0 to 100]

[Figure: the same list added to a second list of random numbers, with a plot of frequency F against number, 0 to 200, and so on for further sums, approaching a plot of probability against x]

  63  81  36  12  28   7  79  52  96  17  22   4  61  85
+ 22  73  54  33  99   5  61  28  58  24  16  77  43   8
= 85 154  90  45 127  12 140  80 154  41  38  81 104  93

The Gaussian Probability Function
The probability of x in a Gaussian distribution with mean μ and standard deviation σ is given by:

$$P(x; \mu, \sigma) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-(x - \mu)^2 / 2\sigma^2}$$

The Gaussian Distribution
• What is the Gaussian distribution?
• What types of data fit a Gaussian distribution?

“Like the ski resort full of girls hunting for husbands and husbands hunting for girls, the situation is not as symmetrical as it might seem.”
Alan Lindsay Mackay (1926- )

Are these Gaussian?
• Human height
• Outside temperature
• Raindrop size
• Blood glucose concentration
• Serum CK activity
• QC results
• Proficiency results

The Gaussian Distribution
• What is the Gaussian distribution?
• What types of data fit a Gaussian distribution?
• What is the advantage of using a Gaussian distribution?

[Figure: Gaussian probability distribution; probability vs. x from μ-3σ to μ+3σ, with the central areas .67 and .95 marked]

What are the odds of an observation . . .
• more than 1σ from the mean (+/-)
• more than 2σ greater than the mean
• more than 3σ from the mean

Some useful Gaussian probabilities

| Range | Probability | Odds |
|---|---|---|
| +/- 1.00σ | 68.3% | 1 in 3 |
| +/- 1.64σ | 90.0% | 1 in 10 |
| +/- 1.96σ | 95.0% | 1 in 20 |
| +/- 2.58σ | 99.0% | 1 in 100 |

[On the Gaussian curve] “Experimentalists think that it is a mathematical theorem while the mathematicians believe it to be an experimental fact.”
Gabriel Lippman (1845-1921)

Distributions
• Definition
• Examples
– Binomial
– Gaussian

"Life is good for only two things, discovering mathematics and teaching mathematics"
Siméon Poisson (1781-1840)

The Poisson Distribution
The Poisson distribution predicts the frequency of r events occurring randomly in time, when the expected frequency is μ:

$$P(r; \mu) = \frac{e^{-\mu}\,\mu^r}{r!}$$

Examples of events described by a Poisson distribution
• Lightning
• Accidents
• Laboratory?

A very useful property of the Poisson distribution

$$V(r) = \mu \qquad \sigma = \sqrt{\mu}$$

Using the Poisson distribution
How many counts must be collected in an RIA in order to ensure an analytical CV of 5% or less?

Answer
Since

$$CV = \frac{\sigma}{\bar{x}}(100) = \frac{\sqrt{\mu}}{\mu}(100)$$

and

$$\frac{\sqrt{\mu}}{\mu} = 0.05 \quad\Rightarrow\quad \mu = 400 \text{ counts}$$

Distributions
• Definition
• Examples
– Binomial
– Gaussian
– Poisson

The Student’s t Distribution
When a small sample is selected from a large population, we sometimes have to make certain assumptions in order to apply statistical methods.

Questions about our sample
• Is the mean of our sample, x-bar, the same as the mean of the population, μ?
• Is the standard deviation of our sample, s, the same as the standard deviation for the population, σ?
• Unless we can answer both of these questions affirmatively, we don’t know whether our sample has the same distribution as the population from which it was drawn.
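The worked distribution examples above (the binomial probability of 10 girls in 12 births, the table of Gaussian ranges, and the Poisson counting requirement for an RIA) can be reproduced with a few lines of Python. This is a sketch using only the standard `math` module; the function names are illustrative and do not come from the slides.

```python
import math

def binomial_probability(r, p, n):
    # P(r; p, n) = p^r (1-p)^(n-r) n! / (r! (n-r)!)
    return math.comb(n, r) * p**r * (1 - p) ** (n - r)

def gaussian_central_probability(z):
    # Probability that a Gaussian observation lies within +/- z
    # standard deviations of the mean
    return math.erf(z / math.sqrt(2))

def poisson_probability(r, mu):
    # P(r; mu) = e^(-mu) mu^r / r!
    return math.exp(-mu) * mu**r / math.factorial(r)

def poisson_counts_for_cv(cv_percent):
    # For Poisson counting, CV = 100 / sqrt(mu), so mu = (100 / CV)^2
    return (100.0 / cv_percent) ** 2

print(f"P(10 girls of 12) = {binomial_probability(10, 0.5, 12):.3f}")
for z in (1.00, 1.64, 1.96, 2.58):
    print(f"+/- {z:.2f} SD: {100 * gaussian_central_probability(z):.1f}%")
print(f"Counts needed for 5% CV: {poisson_counts_for_cv(5):.0f}")
```

The same `poisson_counts_for_cv` helper shows why halving the CV requires four times as many counts, which parallels the square-root rule for the standard error of the mean.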
Recall that the Gaussian distribution is defined by the probability function:

$$P(x; \mu, \sigma) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-(x - \mu)^2 / 2\sigma^2}$$

Note that the exponential factor contains both μ and σ, both population parameters. The factor is often simplified by making the substitution:

$$z = \frac{(x - \mu)}{\sigma}$$

The variable z in this equation is distributed according to a unit Gaussian, since it has a mean of zero and a standard deviation of 1.

[Figure: unit Gaussian probability distribution; probability vs. z from -3 to 3, with the central areas .67 and .95 marked]

But if we use the sample mean and standard deviation instead, we get:

$$t = \frac{(x - \bar{x})}{s}$$

and we’ve defined a new quantity, t, which is not distributed according to the unit Gaussian. It is distributed according to the Student’s t distribution.

Important features of the Student’s t distribution
• Use of the t statistic assumes that the parent distribution is Gaussian
• The degree to which the t distribution approximates a Gaussian distribution depends on N (the degrees of freedom)
• As N gets larger (above 30 or so), the differences between t and z become negligible

Application of Student’s t distribution to a sample mean
The Student’s t statistic can also be used to analyze differences between the sample mean and the population mean:

$$t = \frac{(\bar{x} - \mu)}{s / \sqrt{N}}$$

Comparison of Student’s t and Gaussian distributions
Note that, for a sufficiently large N (>30), t can be replaced with z, and a Gaussian distribution can be assumed.

Exercise
The mean age of the 20 participants in one workshop is 27 years, with a standard deviation of 4 years. Next door, another workshop has 16 participants with a mean age of 29 years and standard deviation of 6 years. Is the second workshop attracting older technologists?

Preliminary analysis
• Is the population Gaussian?
• Can we use a Gaussian distribution for our sample?
• What statistic should we calculate?

Solution
First, calculate the t statistic for the two means, taking the second workshop as sample 1 (mean 29, s = 6, N = 16) and the first as sample 2 (mean 27, s = 4, N = 20):

$$t = \frac{(\bar{x}_1 - \bar{x}_2)}{\sqrt{\dfrac{s_1^2}{N_1} + \dfrac{s_2^2}{N_2}}} = \frac{(29 - 27)}{\sqrt{\dfrac{6^2}{16} + \dfrac{4^2}{20}}} = 1.15$$

Solution, cont.
Next, determine the degrees of freedom:

$$N_{df} = N_1 + N_2 - 2 = 16 + 20 - 2 = 34$$

Statistical Tables

| df | t0.050 | t0.025 | t0.010 |
|---|---|---|---|
| - | - | - | - |
| 34 | 1.645 | 1.960 | 2.326 |
| - | - | - | - |

Conclusion
Since 1.15 is less than 1.64 (the t value corresponding to the 90% confidence limit), the difference between the mean ages for the participants in the two workshops is not significant.

The Paired t Test
Suppose we are comparing two sets of data in which each value in one set has a corresponding value in the other. Instead of calculating the difference between the means of the two sets, we can calculate the mean difference between data pairs. Instead of:

$$(\bar{x}_1 - \bar{x}_2)$$

we use:

$$\overline{(x_1 - x_2)} = \frac{1}{N}\sum_{i=1}^{N}(x_{1i} - x_{2i})$$

to calculate t:

$$t = \frac{\overline{(x_1 - x_2)}}{\sqrt{s_d^2 / N}}$$

Advantage of the Paired t
If the type of data permit paired analysis, the paired t test is much more sensitive than the unpaired t. Why?

Applications of the Paired t
• Method correlation
• Comparison of therapies

Distributions
• Definition
• Examples
– Binomial
– Gaussian
– Poisson
– Student’s t

The χ² (Chi-square) Distribution
There is a general formula that relates actual measurements to their predicted values:

$$\chi^2 = \sum_{i=1}^{N} \frac{[y_i - f(x_i)]^2}{\sigma_i^2}$$

A special (and very useful) application of the χ² distribution is to frequency data:

$$\chi^2 = \sum_{i=1}^{N} \frac{(n_i - f_i)^2}{f_i}$$

Exercise
In your hospital, you have had 83 cases of iatrogenic strep infection in your last 725 patients. St. Elsewhere, across town, reports 35 cases of strep in their last 416 patients. Do you need to review your infection control policies?

Analysis
If your infection control policy is roughly as effective as St. Elsewhere’s, we would expect that the rates of strep infection for the two hospitals would be similar. The expected frequency, then, would be the average:

$$\frac{83 + 35}{725 + 416} = \frac{118}{1141} = 0.1034$$

Calculating χ²
First, calculate the expected frequencies at your hospital (f1) and St. Elsewhere (f2):

$$f_1 = 725 \times 0.1034 = 75 \text{ cases} \qquad f_2 = 416 \times 0.1034 = 43 \text{ cases}$$

Next, we sum the squared differences between actual and expected frequencies:

$$\chi^2 = \sum_i \frac{(n_i - f_i)^2}{f_i} = \frac{(83 - 75)^2}{75} + \frac{(35 - 43)^2}{43} = 2.34$$

Degrees of freedom
In general, when comparing k sample proportions, the degrees of freedom for χ² analysis are k - 1. Hence, for our problem, there is 1 degree of freedom.

Conclusion
A table of χ² values lists 3.841 as the χ² corresponding to a probability of 0.05. So the variation (χ² = 2.34) between strep infection rates at the two hospitals is within statistically-predicted limits, and therefore is not significant.

Distributions
• Definition
• Examples
– Binomial
– Gaussian
– Poisson
– Student’s t
– χ²

The F distribution
• The F distribution predicts the expected differences between the variances of two samples
• This distribution has also been called Snedecor’s F distribution, Fisher distribution, and variance ratio distribution

The F statistic is simply the ratio of two variances:

$$F = \frac{V_1}{V_2}$$

(by convention, the larger V is the numerator)

Applications of the F distribution
There are several ways the F distribution can be used. Applications of the F statistic are part of a more general type of statistical analysis called analysis of variance (ANOVA). We’ll see more about ANOVA later.

Example
You’re asked to do a “quick and dirty” correlation between three whole blood glucose analyzers. You prick your finger and measure your blood glucose four times on each of the analyzers. Are the results equivalent?

Data

| Analyzer 1 | Analyzer 2 | Analyzer 3 |
|---|---|---|
| 71 | 90 | 72 |
| 75 | 80 | 77 |
| 65 | 86 | 76 |
| 69 | 84 | 79 |

Analysis
The mean glucose concentrations for the three analyzers are 70, 85, and 76. If the three analyzers are equivalent, then we can assume that all of the results are drawn from an overall population with mean μ and variance σ².

Analysis, cont.
Approximate μ by calculating the mean of the means:

$$\frac{70 + 85 + 76}{3} = 77$$

Analysis, cont.
Calculate the variance of the means:

$$V_{\bar{x}} = \frac{(70 - 77)^2 + (85 - 77)^2 + (76 - 77)^2}{3} = 38$$

Analysis, cont.
But what we really want is the variance of the population. Recall that:

$$\sigma_{\bar{x}} = \frac{\sigma}{\sqrt{N}}$$

Analysis, cont.
Since we just calculated

$$V_{\bar{x}} = \sigma_{\bar{x}}^2 = 38$$

we can solve for σ²:

$$\sigma_{\bar{x}}^2 = \frac{\sigma^2}{N} \qquad \sigma^2 = N\sigma_{\bar{x}}^2 = 4 \times 38 = 152$$

Analysis, cont.
So we now have an estimate of the population variance, which we’d like to compare to the real variance to see whether they differ. But what is the real variance? We don’t know, but we can calculate the variance based on our individual measurements.

Analysis, cont.
If all the data were drawn from a larger population, we can assume that the variances are the same, and we can simply average the variances for the three data sets:

$$\frac{V_1 + V_2 + V_3}{3} = 14.4$$

Analysis, cont.
Now calculate the F statistic:

$$F = \frac{152}{14.4} = 10.6$$

Conclusion
A table of F values indicates that 4.26 is the limit for the F statistic at a 95% confidence level (when the appropriate degrees of freedom are selected). Our value of 10.6 exceeds that, so we conclude that there is significant variation between the analyzers.

Distributions
• Definition
• Examples
– Binomial
– Gaussian
– Poisson
– Student’s t
– χ²
– F

Unknown or irregular distribution
• Transform

[Figure: log transform; probability vs. x, and probability vs. log x]

Unknown or irregular distribution
• Transform
• Non-parametric methods

Non-parametric methods
• Non-parametric methods make no assumptions about the distribution of the data
• There are non-parametric methods for characterizing data, as well as for comparing data sets
• These methods are also called distribution-free, robust, or sometimes non-metric tests

Application to Reference Ranges
The concentrations of most clinical analytes are not usually distributed in a Gaussian manner. Why? How do we determine the reference range (limits of expected values) for these analytes?

Application to Reference Ranges
• Reference ranges for normal, healthy populations are customarily defined as the “central 95%”.
• An entirely non-parametric way of expressing this is to eliminate the upper and lower 2.5% of data, and use the remaining upper and lower values to define the range.
• NCCLS recommends 120 values, dropping the two highest and two lowest.

Application to Reference Ranges
What happens when we want to compare one reference range with another? This is precisely what CLIA ‘88 requires us to do. How do we do this?

“Everything should be made as simple as possible, but not simpler.”
Albert Einstein

Solution #1: Simple comparison
Suppose we just do a small internal reference range study, and compare our results to the manufacturer’s range. How do we compare them? Is this a valid approach?

NCCLS recommendations
• Inspection Method: Verify reference populations are equivalent
• Limited Validation: Collect 20 reference specimens
– No more than 2 exceed range
– Repeat if failed
• Extended Validation: Collect 60 reference specimens; compare ranges.

Solution #2: Mann-Whitney*
Rank normal values (x1, x2, x3 ... xn) and the reference population (y1, y2, y3 ... yn) together in a single ordered list:

x1, y1, x2, x3, y2, y3 ... xn, yn

Count the number of y values that follow each x, and call the sum Ux. Calculate Uy also.

*Also called the U test, rank sum test, or Wilcoxon’s test.

Mann-Whitney, cont.
It should be obvious that:

$$U_x + U_y = N_x N_y$$

If the two distributions are the same, then:

$$U_x = U_y = \tfrac{1}{2} N_x N_y$$

Large differences between Ux and Uy indicate that the distributions are not equivalent.

“‘Obvious’ is the most dangerous word in mathematics.”
Eric Temple Bell (1883-1960)

Solution #3: Run test
In the run test, order the values in the two distributions as before:

x1, y1, x2, x3, y2, y3 ... xn, yn

Add up the number of runs (consecutive values from the same distribution). If the two data sets are randomly selected from one population, the values will be well mixed and there will be many short runs; a small number of long runs suggests that the distributions differ.

Solution #4: The Monte Carlo method
Sometimes, when we don’t know anything about a distribution, the best thing to do is independently test its characteristics.
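A standard illustration of the Monte Carlo idea is to estimate π by scattering random points over a square and counting the fraction that fall inside the inscribed circle, since the ratio of the two areas is π/4. The sketch below assumes a unit-radius circle and a fixed random seed; the function name and parameters are illustrative only.

```python
import random

def estimate_pi(n_points, seed=0):
    """Estimate pi from the fraction of random points in a square
    that land inside the inscribed circle (area ratio = pi / 4)."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    inside = 0
    for _ in range(n_points):
        x = rng.uniform(-1.0, 1.0)
        y = rng.uniform(-1.0, 1.0)
        if x * x + y * y <= 1.0:
            inside += 1
    return 4.0 * inside / n_points

for n in (100, 10_000, 1_000_000):
    print(n, estimate_pi(n))
```

The estimate tightens only as the square root of the number of points, the same behavior seen earlier for the standard error of the mean.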
The Monte Carlo method

[Figure: a circle inscribed in a square of side x]

$$A_{sq} = xy = x^2 \qquad A_{cir} = \pi r^2 = \pi\left(\frac{x}{2}\right)^2 \qquad \frac{A_{cir}}{A_{sq}} = \frac{\pi}{4}$$

The Monte Carlo method

[Figure: repeated random samples of N values drawn from the reference population, each summarized by its mean and SD]

The Monte Carlo method
With the Monte Carlo method, we have simulated the test we wish to apply. That is, we have randomly selected samples from the parent distribution, and determined whether our in-house data are in agreement with the randomly-selected samples.

Analysis of paired data
• For certain types of laboratory studies, the data we gather are paired
• We typically want to know how closely the paired data agree
• We need quantitative measures of the extent to which the data agree or disagree
• Examples?

Examples of paired data
• Method correlation data
• Pharmacodynamic effects
• Risk analysis
• Pathophysiology

[Figure: correlation scatter plot; both axes run from 0 to 50]

Linear regression (least squares)
Linear regression analysis generates an equation for a straight line

y = mx + b

where m is the slope of the line and b is the value of y when x = 0 (the y-intercept). The calculated equation minimizes the sum of the squared differences between actual y values and the linear regression line.

[Figure: correlation scatter plot with regression line y = 1.031x - 0.024; both axes run from 0 to 50]

Covariance
Do x and y values vary in concert, or randomly?

$$\text{cov}(x, y) = \frac{1}{N}\sum_i (y_i - \bar{y})(x_i - \bar{x})$$

• What if y increases when x increases?
• What if y decreases when x increases?
• What if y and x vary independently?

Covariance
It is clear that the greater the covariance, the stronger the relationship between x and y. But . . . what about units? E.g., if you measure glucose in mg/dL, and I measure it in mmol/L, who’s likely to have the highest covariance?

The Correlation Coefficient

$$\rho = \frac{\dfrac{1}{N}\sum_i (y_i - \bar{y})(x_i - \bar{x})}{\sigma_y \sigma_x} = \frac{\text{cov}(x, y)}{\sigma_x \sigma_y} \qquad -1 \le \rho \le 1$$

The Correlation Coefficient
• The correlation coefficient is a unitless quantity that roughly indicates the degree to which x and y vary in the same direction.
• ρ is useful for detecting relationships between parameters, but it is not a very sensitive measure of the spread.

[Figure: correlation scatter plot, y = 1.031x - 0.024, ρ = 0.9986; both axes run from 0 to 50]

[Figure: correlation scatter plot, y = 1.031x - 0.024, ρ = 0.9894; both axes run from 0 to 50]

Standard Error of the Estimate
The linear regression equation gives us a way to calculate an “estimated” y for any given x value, given the symbol ŷ (y-hat):

$$\hat{y} = mx + b$$

Standard Error of the Estimate
Now what we are interested in is the average difference between the measured y and its estimate, ŷ:

$$s_{y/x} = \sqrt{\frac{1}{N}\sum_i (y_i - \hat{y}_i)^2}$$

[Figure: correlation scatter plot, y = 1.031x - 0.024, ρ = 0.9986, sy/x = 1.83; both axes run from 0 to 50]

[Figure: correlation scatter plot, y = 1.031x - 0.024, ρ = 0.9894, sy/x = 5.32; both axes run from 0 to 50]

Standard Error of the Estimate
If we assume that the errors in the y measurements are Gaussian (is that a safe assumption?), then the standard error of the estimate gives us the boundaries within which about 68% of the y values will fall. 2sy/x defines the 95% boundaries.

Limitations of linear regression
• Assumes no error in x measurement
• Assumes that variance in y is constant throughout concentration range

Alternative approaches
• Weighted linear regression analysis can compensate for non-constant variance among y measurements
• Deming regression analysis takes into account variance in the x measurements
• Weighted Deming regression analysis allows for both

Evaluating method performance
• Precision

Method Precision
• Within-run: 10 or 20 replicates
– What types of errors does within-run precision reflect?
• Day-to-day: NCCLS recommends evaluation over 20 days
– What types of errors does day-to-day precision reflect?

Evaluating method performance
• Precision
• Sensitivity

Method Sensitivity
• The analytical sensitivity of a method refers to the lowest concentration of analyte that can be reliably detected.
• The most common definition of sensitivity is the analyte concentration that will result in a signal two or three standard deviations above background.

[Figure: signal vs. time, showing the signal/noise threshold]

Other measures of sensitivity
• Limit of Detection (LOD) is sometimes defined as the concentration producing an S/N > 3.
– In drug testing, LOD is customarily defined as the lowest concentration that meets all identification criteria.
• Limit of Quantitation (LOQ) is sometimes defined as the concentration producing an S/N > 5.
– In drug testing, LOQ is customarily defined as the lowest concentration that can be measured within ±20%.

Question
At an S/N ratio of 5, what is the minimum CV of the measurement? If the S/N is 5, 20% of the measured signal is noise, which is random. Therefore, the CV must be at least 20%.

Evaluating method performance
• Precision
• Sensitivity
• Linearity

Method Linearity
• A linear relationship between concentration and signal is not absolutely necessary, but it is highly desirable. Why?
• CLIA ‘88 requires that the linearity of analytical methods be verified on a periodic basis.

Ways to evaluate linearity
• Visual/linear regression

[Figure: signal vs. concentration]

Outliers
We can eliminate any point that differs from its nearest neighboring value by more than 0.765 (p=0.05, the critical value for a set of four values) times the spread between the highest and lowest values (Dixon test).
Example: 4, 5, 6, 13

(13 - 4) x 0.765 = 6.89

The highest value differs from its neighbor by 13 - 6 = 7, which exceeds 6.89, so 13 can be rejected as an outlier.

Limitation of linear regression method
If the analytical method has a high variance (CV), it is likely that small deviations from linearity will not be detected due to the high standard error of the estimate.

[Figure: signal vs. concentration]

Ways to evaluate linearity
• Visual/linear regression
• Quadratic regression

Quadratic regression
Recall that, for linear data, the relationship between x and y can be expressed as

y = f(x) = a + bx

A curve is described by the quadratic equation:

y = f(x) = a + bx + cx²

which is identical to the linear equation except for the addition of the cx² term.

Quadratic regression
It should be clear that the smaller the x² coefficient, c, the closer the data are to linear (since the equation reduces to the linear form when c approaches 0). What is the drawback to this approach?

Ways to evaluate linearity
• Visual/linear regression
• Quadratic regression
• Lack-of-fit analysis

Lack-of-fit analysis
• There are two components of the variation from the regression line
– Intrinsic variability of the method
– Variability due to deviations from linearity
• The problem is to distinguish between these two sources of variability
• What statistical test do you think is appropriate?

[Figure: signal vs. concentration]

Lack-of-fit analysis
The ANOVA technique requires that method variance is constant at all concentrations. Cochran’s test is used to test whether this is the case.
$$\frac{V_L}{\sum_i V_i} < 0.5981 \quad (p = 0.05)$$

where V_L is the largest of the individual variances V_i.

Lack-of-fit method calculations
• Total sum of the squares: the variance calculated from all of the y values
• Linear regression sum of the squares: the variance of y values from the regression line
• Residual sum of the squares: difference between TSS and LSS
• Lack of fit sum of the squares: the RSS minus the pure error (sum of variances)

Lack-of-fit analysis
• The LOF is compared to the pure error to give the “G” statistic (which is actually F)
• If the LOF is small compared to the pure error, G is small and the method is linear
• If the LOF is large compared to the pure error, G will be large, indicating significant deviation from linearity

Significance limits for G
• 90% confidence = 2.49
• 95% confidence = 3.29
• 99% confidence = 5.42

“If your experiment needs statistics, you ought to have done a better experiment.”
Ernest Rutherford (1871-1937)

Evaluating Clinical Performance of laboratory tests
• The clinical performance of a laboratory test defines how well it predicts disease
• The sensitivity of a test indicates the likelihood that it will be positive when disease is present

Clinical Sensitivity
If TP is the number of “true positives”, and FN is the number of “false negatives”, the sensitivity is defined as:

$$\text{Sensitivity} = \frac{TP}{TP + FN} \times 100$$

Example
Of 25 admitted cocaine abusers, 23 tested positive for urinary benzoylecgonine and 2 tested negative. What is the sensitivity of the urine screen?
$$\frac{23}{23 + 2} \times 100 = 92\%$$

Evaluating Clinical Performance of laboratory tests
• The clinical performance of a laboratory test defines how well it predicts disease
• The sensitivity of a test indicates the likelihood that it will be positive when disease is present
• The specificity of a test indicates the likelihood that it will be negative when disease is absent

Clinical Specificity
If TN is the number of “true negative” results, and FP is the number of falsely positive results, the specificity is defined as:

$$\text{Specificity} = \frac{TN}{TN + FP} \times 100$$

Example
What would you guess is the specificity of any particular clinical laboratory test? (Choose any one you want)

Answer
Since reference ranges are customarily set to include the central 95% of values in healthy subjects, we expect 5% of values from healthy people to be “abnormal”; this is the false positive rate. Hence, the specificity of most clinical tests is no better than 95%.

Sensitivity vs. Specificity
• Sensitivity and specificity are inversely related.

[Figure: distributions of marker concentration in subjects without and with disease]

Sensitivity vs. Specificity
• Sensitivity and specificity are inversely related.
• How do we determine the best compromise between sensitivity and specificity?

[Figure: Receiver Operating Characteristic; true positive rate (sensitivity) vs. false positive rate (1 - specificity)]

Evaluating Clinical Performance of laboratory tests
• The sensitivity of a test indicates the likelihood that it will be positive when disease is present
• The specificity of a test indicates the likelihood that it will be negative when disease is absent
• The predictive value of a test indicates the probability that the test result correctly classifies a patient

Predictive Value
The predictive value of a clinical laboratory test takes into account the prevalence of a certain disease, to quantify the probability that a positive test is associated with the disease in a randomly-selected individual, or alternatively, that a negative test is associated with health.
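The sensitivity and specificity definitions above translate directly into code. This is a minimal sketch; the function names are illustrative, and the specificity figures (950 true negatives, 50 false positives) are hypothetical numbers chosen to mirror the "central 95%" argument, not data from the slides.

```python
def sensitivity(tp, fn):
    # Percentage of diseased subjects who test positive
    return 100.0 * tp / (tp + fn)

def specificity(tn, fp):
    # Percentage of disease-free subjects who test negative
    return 100.0 * tn / (tn + fp)

# Urine screen example: 23 of 25 admitted cocaine abusers tested positive
print(f"Sensitivity: {sensitivity(tp=23, fn=2):.0f}%")

# Hypothetical: 1000 healthy subjects, 5% outside the reference range
print(f"Specificity: {specificity(tn=950, fp=50):.0f}%")
```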
Illustration
• Suppose you have invented a new screening test for Addison disease.
• The test correctly identified 98 of 100 patients with confirmed Addison disease (What is the sensitivity?)
• The test was positive in only 2 of 1000 patients with no evidence of Addison disease (What is the specificity?)

Test performance
• The sensitivity is 98.0%
• The specificity is 99.8%
• But Addison disease is a rare disorder: incidence = 1:10,000
• What happens if we screen 1 million people?

Analysis
• In 1 million people, there will be 100 cases of Addison disease.
• Our test will identify 98 of these cases (TP)
• Of the 999,900 non-Addison subjects, the test will be positive in 0.2%, or about 2,000 (FP).

Predictive value of the positive test
The predictive value is the % of all positives that are true positives:

$$PV_{+} = \frac{TP}{TP + FP} \times 100 = \frac{98}{98 + 2000} \times 100 = 4.7\%$$

What about the negative predictive value?
• TN = 999,900 - 2000 = 997,900
• FN = 100 x 0.02 = 2

$$PV_{-} = \frac{TN}{TN + FN} \times 100 = \frac{997{,}900}{997{,}900 + 2} \times 100 \approx 100\%$$

Summary of predictive value
Predictive value describes the usefulness of a clinical laboratory test in the real world. Or does it?

Lessons about predictive value
• Even when you have a very good test, it is generally not cost effective to screen for diseases which have low incidence in the general population. Exception?
• The higher the clinical suspicion, the better the predictive value of the test. Why?

Efficiency
We can combine the PV+ and PV- to give a quantity called the efficiency:

$$\text{Efficiency} = \frac{TP + TN}{TP + FP + TN + FN} \times 100$$

The efficiency is the percentage of all patients that are classified correctly by the test result.
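The Addison screening arithmetic can be reproduced, and the effect of prevalence explored, with a short function. This is a sketch under the slide's stated figures (98% sensitivity, 99.8% specificity, 100 cases per million); the function name is illustrative, and the second scenario (10,000 cases per million) is a hypothetical added only to show how higher prevalence changes the result.

```python
def predictive_values(n_diseased, n_healthy, sensitivity, specificity):
    """Return PV+, PV- and efficiency (all in %) for a screened group.
    sensitivity and specificity are fractions between 0 and 1."""
    tp = n_diseased * sensitivity
    fn = n_diseased - tp
    tn = n_healthy * specificity
    fp = n_healthy - tn
    return {
        "PV+": 100.0 * tp / (tp + fp),
        "PV-": 100.0 * tn / (tn + fn),
        "efficiency": 100.0 * (tp + tn) / (n_diseased + n_healthy),
    }

# Slide scenario: 1 million screened, incidence 1:10,000
addison = predictive_values(100, 999_900, 0.98, 0.998)
print({name: round(value, 1) for name, value in addison.items()})

# Hypothetical higher-prevalence group: 1 in 100 has the disease
suspected = predictive_values(10_000, 990_000, 0.98, 0.998)
print({name: round(value, 1) for name, value in suspected.items()})
```

With the same test, the positive predictive value rises sharply in the higher-prevalence group, which is the point behind the lesson about clinical suspicion.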
Efficiency of our Addison screen

$$\frac{98 + 997{,}900}{98 + 2000 + 997{,}900 + 2} \times 100 = 99.8\%$$

“To call in the statistician after the experiment is done may be no more than asking him to perform a postmortem examination: he may be able to say what the experiment died of.”
Ronald Aylmer Fisher (1890 - 1962)

Application of Statistics to Quality Control
• We expect quality control to fit a Gaussian distribution
• We can use Gaussian statistics to predict the variability in quality control values
• What sort of tolerance will we allow for variation in quality control values?
• Generally, we will question variations that have a statistical probability of less than 5%

“He uses statistics as a drunken man uses lamp posts - for support rather than illumination.”
Andrew Lang (1844-1912)

Westgard’s rules

| Rule | Odds |
|---|---|
| 1_2s | 1 in 20 |
| 1_3s | 1 in 300 |
| 2_2s | 1 in 400 |
| R_4s | 1 in 800 |
| 4_1s | 1 in 600 |
| 10_x | 1 in 1000 |

Some examples

[Figures: four example control charts, each with levels marked at +3sd, +2sd, +1sd, mean, -1sd, -2sd, and -3sd]

“In science one tries to tell people, in such a way as to be understood by everyone, something that no one ever knew before. But in poetry, it's the exact opposite.”
Paul Adrien Maurice Dirac (1902- 1984)
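The slides list Westgard's rules without spelling out their definitions. The sketch below assumes the commonly used definitions (stated in the comments) and applies them to a single series of control results expressed in SD units from the mean; the function name and the simplified handling of R_4s across consecutive results are assumptions, not the author's implementation.

```python
def westgard_violations(z_scores):
    """Return the set of Westgard rules violated by a series of control
    results, each expressed as (value - mean) / SD, oldest first.
    Assumed definitions:
      1_2s: one result beyond +/-2 SD (usually a warning)
      1_3s: one result beyond +/-3 SD
      2_2s: two consecutive results beyond 2 SD on the same side
      R_4s: two consecutive results more than 2 SD out on opposite sides
      4_1s: four consecutive results beyond 1 SD on the same side
      10_x: ten consecutive results on the same side of the mean
    """
    z = list(z_scores)
    violations = set()
    for i, value in enumerate(z):
        if abs(value) > 2:
            violations.add("1_2s")
        if abs(value) > 3:
            violations.add("1_3s")
        if i >= 1:
            prev = z[i - 1]
            if (prev > 2 and value > 2) or (prev < -2 and value < -2):
                violations.add("2_2s")
            if (prev > 2 and value < -2) or (prev < -2 and value > 2):
                violations.add("R_4s")
        if i >= 3:
            last4 = z[i - 3 : i + 1]
            if all(v > 1 for v in last4) or all(v < -1 for v in last4):
                violations.add("4_1s")
        if i >= 9:
            last10 = z[i - 9 : i + 1]
            if all(v > 0 for v in last10) or all(v < 0 for v in last10):
                violations.add("10_x")
    return violations

print(sorted(westgard_violations([0.4, -0.7, 2.3, 2.1])))
print(sorted(westgard_violations([0.2, -0.4, 0.9, -1.1])))
```

In practice the 1_2s result is often treated as a trigger to inspect the other rules rather than as a rejection in its own right; how violations are acted on is a laboratory policy decision outside this sketch.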