EVALUATING MODELS OF PARAMETER SETTING - CUNY

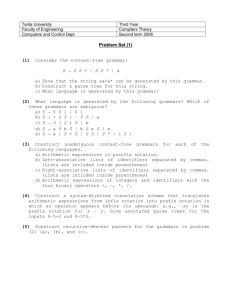

EVALUATING MODELS OF PARAMETER SETTING
Janet Dean Fodor
Graduate Center, City University of New York

On behalf of CUNY-CoLAG
CUNY Computational Language Acquisition Group
With support from PSC-CUNY
William G. Sakas, co-director
Carrie Crowther, Atsu Inoue, Xuan-Nga Kam, Yukiko Koizumi, Eiji Nishimoto, Artur Niyazov, Iana Melnikova Pugach, Lisa Reisig-Ferrazzano, Iglika Stoyneshka-Raleva, Virginia Teller, Lidiya Tornyova, Erika Troseth, Tanya Viger, Sam Wagner
www.colag.cs.hunter.cuny.edu

Before we start…
Warning: I may skip some slides. But not to hide them from you.
Every slide is at our website: www.colag.cs.hunter.cuny.edu

What we have done
A factory for testing models of parameter setting.
UG + 13 parameters → 3,072 languages (simplified but human-like).
Sentences of a target language are the input to a learning model.
Is learning successful? How fast? Why?

Our Aims
A psycho-computational model of syntactic parameter setting.
Psychologically realistic.
Precisely specified.
Compatible with linguistic theory.
And… it must work!

Parameter setting as the solution (1981)
Avoids problems of rule-learning.
Only 20 (or 200) facts to learn.
Triggering is fast & automatic = no linguistic computation is necessary.
Accurate.
BUT: This has never been modeled.

Parameter setting as the problem (1990s)
R. Clark, and Gibson & Wexler, have shown: P-setting is not labor-free, and not always successful. Because…
The parameter interaction problem.
The parametric ambiguity problem.
Sentences do not tell which parameter values generated them.

This evening…
Parameter setting: How severe are the problems? Why do they matter? How to escape them?
Moving forward: from problems to explorations.

Problem 1: Parameter interaction
Even independent parameters interact in derivations (Clark 1988, 1992).
The surface string reflects their combined effects.
So one parameter may have no distinctive, isolatable effect on sentences. = no trigger, no cue (cf. cue-based learner; Lightfoot 1991; Dresher 1999)
Parametric decoding is needed. The learner must disentangle the interactions, to identify which p-values a sentence requires.

Parametric decoding
Decoding is not instantaneous. It is hard work. Because…
To know that a parameter value is necessary, the learner must test it in the company of all other p-values.
So whole grammars must be tested against the sentence. (Grammar-testing ≠ triggering!)
All grammars must be tested, to identify one correct p-value. (Exponential!)

Decoding
O3 Verb Subj O1[+WH] P Adv.
This sets: no wh-movt, p-stranding, head-initial VP, V to I to C, no affix hopping, C-initial, subj initial, no overt topic marking.
Doesn’t set: oblig topic, null subj, null topic.

More decoding
Adv[+WH] P NOT Verb S KA.
This sets everything except ±overt topic marking.
Verb[+FIN].
This sets nothing, not even +null subject.

Problem 2: Parametric ambiguity
A sentence may belong to more than one language.
A p-ambiguous sentence doesn’t reveal the target p-values (even if decoded).
The learner must guess (= inaccurate) or pass (= slow, + when?).
How much p-ambiguity is there in natural language? Not quantified; probably vast.

Scale of the problem (exponential)
P-interaction and p-ambiguity are likely to increase with the # of parameters.
20 parameters → $2^{20}$ grammars = over a million
30 parameters → $2^{30}$ grammars = over a billion
40 parameters → $2^{40}$ grammars = over a trillion
100 parameters → $2^{100}$ grammars = ???
How many parameters are there?

Learning models must scale up
Testing all grammars against each input sentence is clearly impossible.
So research has turned to search methods: how to sample and test the huge field of grammars efficiently.
Genetic algorithms (e.g., Clark 1992)
Hill-climbing algorithms (e.g., Gibson & Wexler’s TLA 1994)

Our approach
Retain a central aspect of classic triggering: Input sentences guide the learner toward the p-values they need.
Decode on-line; parsing routines do the work. (They’re innate.)
Parse the input sentence (just as adults do, for comprehension) until it crashes.
Then the parser draws on other p-values, to find one that can patch the parse-tree.

Structural Triggers Learners (CUNY)
STLs find one grammar for each sentence.
More than that would require parallel parsing, beyond human capacity.
But the parser can tell on-line if there is (possibly) more than one candidate.
If so: guess, or pass (wait for unambiguous input).
Considers only real candidate grammars; directed by what the parse-tree needs.

Summary so far…
Structural triggers learners (STLs) retain an important aspect of triggering (p-decoding).
Compatible with current psycholinguistic models of sentence processing.
Hold promise of being efficient. (Home in on the target grammar, within human resource limits.)
Now: Do they really work, in a domain with realistic parametric ambiguity?

Evaluating learning models
Do any models work? Reliably? Fast? Within human resources?
Do decoding models work better than domain-search (grammar-testing) models?
Within decoding models, is guessing better or worse than waiting?

Hope it works! If not…
The challenge: What is UG good for? All that innate knowledge, only a few facts to learn, but you can’t say how!
Instead, one simple learning procedure: Adjust the weights in a neural network; record statistics of co-occurrence frequencies.
Nativist theories of human language are vulnerable until some UG-based learner is shown to perform well.

Non-UG-based learning
Christiansen, M.H., Conway, C.M. and Curtin, S. (2000) A connectionist single-mechanism account of rule-like behavior in infancy. In Proceedings of the 22nd Annual Conference of the Cognitive Science Society, 83-88. Mahwah, NJ: Lawrence Erlbaum.
Culicover, P.W. and Nowak, A. (2003) A Dynamical Grammar. Vol. Two of Foundations of Syntax. Oxford, UK: Oxford University Press.
Lewis, J.D. and Elman, J.L. (2002) Learnability and the statistical structure of language: Poverty of stimulus arguments revisited. In B. Skarabela et al. (eds.) Proceedings of BUCLD 26. Somerville, Mass: Cascadilla Press.
Pereira, F. (2000) Formal grammar and information theory: Together again? Philosophical Transactions of the Royal Society, Series A 358, 1239-1253.
Seidenberg, M.S. and MacDonald, M.C. (1999) A probabilistic constraints approach to language acquisition and processing. Cognitive Science 23, 569-588.
Tomasello, M. (2003) Constructing a Language: A Usage-Based Theory of Language Acquisition. Harvard University Press.

The CUNY simulation project
We program learning algorithms proposed in the literature. (12 so far)
Run each one on a large domain of human-like languages. 1,000 trials (1,000 ‘children’) each.
Success rate: % of trials that identify the target.
Speed: average # of input sentences consumed until the learner has identified the target grammar.
Reliability/speed: # of input sentences for 99% of trials (= 99% of ‘children’) to attain the target.
Subset Principle violations and one-step local maxima excluded by fiat. (Explained below as necessary.)

Designing the language domain
Realistically large, to test which models scale up well.
As much like natural languages as possible.
Except, input limited like child-directed speech.
Sentences must have fully specified tree structure (not just word strings), to test models like STL.
Should reflect theoretically defensible linguistic analyses (though simplified).
Grammar format should allow rapid conversion into the operations of an effective parsing device.

Language domains created

| Domain | # params | # langs | # sents per lang | Tree structure | Language properties |
|---|---|---|---|---|---|
| Gibson & Wexler (1994) | 3 | 8 | 12 or 18 | Not fully specified | Word order + V2 |
| Bertolo et al. (1997) | 7 | 64 distinct | Many | Yes | G&W + V-raising + degree-2 |
| Kohl (1999) | 12 | 2,304 | Many | Partial | B et al. + scrambling |
| Sakas & Nishimoto (2002) | 4 | 16 | 12-32 | Yes | G&W + null subj/topic |
| Fodor, Melnikova & Troseth (2002) | 13 | 3,072 | 168-1,420 | Yes | S&N + Imp + wh-movt + piping + etc. |

Selection criteria for our domain
We have given priority to syntactic phenomena which:
Occur in a high proportion of known natural languages;
Occur often in speech directed to 2-3 year olds;
Pose learning problems of theoretical interest;
Are a focus of linguistic / psycholinguistic research;
Have a syntactic analysis that is broadly agreed on.

By these criteria
Questions, imperatives.
Negation, adverbs.
Null subjects, verb movement.
Prep-stranding, affix-hopping (though not widespread!).
Wh-movement, but no scrambling yet.

Not yet included
No LF interface (cf. Villavicencio 2000).
No ellipsis; no discourse contexts to license fragments.
No DP-internal structure; Case; agreement.
No embedding (only degree-0).
No feature checking as implementation of movement parameters (Chomsky 1995ff.).
No LCA / Anti-symmetry (Kayne 1994ff.).

Our 13 parameters (so far)

| Parameter | Default |
|---|---|
| Subject Initial (SI) | yes |
| Object Final (OF) | yes |
| Complementizer Initial (CI) | initial |
| V to I Movement (VtoI) | no |
| I to C Movement (of aux or verb) (ItoC) | no |
| Question Inversion (Qinv = I to C in questions only) | no |
| Affix Hopping (AH) | no |
| Obligatory Topic (vs. optional) (ObT) | yes |
| Topic Marking (TM) | no |
| Wh-Movement obligatory (vs. none) (Wh-M) | no |
| Pied Piping (vs. preposition stranding) (PI) | piping |
| Null Subject (NS) | no |
| Null Topic (NT) | no |

Parameters are not all independent
Constraints on p-value combinations:
If [+ObT] then [-NS]. (A topic-oriented language does not have null subjects.)
If [-ObT] then [-NT]. (A subject-oriented language does not have null topics.)
If [+VtoI] then [-AH]. (If verbs raise to I, affix hopping does not occur.)
(This is why there are only 3,072 grammars, not 8,192.)

Input sentences
Universal lexicon: S, Aux, O1, P, etc.
Input is word strings only, no structure.
Except, the learner knows all word categories and all grammatical roles!
Equivalent to some semantic bootstrapping; no prosodic bootstrapping (yet!).

Learning procedures
In all models tested (unless noted), learning is:
Incremental = hypothesize a grammar after each input. No memory for past input.
Error-driven = if Gcurrent can parse the sentence, retain it.
Models differ in what the learner does when Gcurrent fails = grammar change is needed.

The learning models: preview
Learners that decode: STLs
  Waiting (‘squeaky clean’)
  Guessing
Grammar-testing learners
  Triggering Learning Algorithm (G&W)
  Variational Learner (Yang 2000)
…plus benchmarks for comparison: too powerful, too weak.

Learners that decode: STLs
Strong STL: Parallel parse of the input sentence, find all successful grammars. Adopt the p-values they share. (A useful benchmark, not a psychological model.)
Waiting STL: Serial parse. Note any choice-point in the parse. Set no parameters after a choice. (Never guesses. Needs fully unambiguous triggers.) (Fodor 1998a)
Guessing STLs: Serial parse. At a choice-point, guess. (Can learn from p-ambiguous input.) (Fodor 1998b)

Guessing STLs’ guessing principles
If there is more than one new p-value that could patch the parse tree…
Any Parse: Pick at random.
Minimal Connections: Pick the p-value that gives the simplest tree. (MA + LC)
Least Null Terminals: Pick the parse with the fewest empty categories. (MCP)
Nearest Grammar: Pick the grammar that differs least from Gcurrent.

Grammar-testing: TLA
Error-driven random: Adopt any grammar. (Another baseline; not a psychological model.)
TLA (Gibson & Wexler, 1994): Change any one parameter. Try the new grammar on the sentence. Adopt it if the parse succeeds. Else pass.
Non-greedy TLA (Berwick & Niyogi, 1996): Change any one parameter. Adopt it. (No test of the new grammar against the sentence.)
Non-SVC TLA (B&N 96): Try any grammar other than Gcurrent. Adopt it if the parse succeeds.
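
The four grammar-testing variants just listed differ only in two switches: whether the candidate grammar is restricted to a one-parameter change (the Single Value Constraint, SVC) and whether it is tested against the sentence before adoption (Greediness). The following is a minimal Python sketch of that idea, not the CUNY-CoLAG implementation. Everything concrete in it is an assumption made for illustration: the 3-parameter toy domain, the encoding of a sentence as the set of parameter values it requires (so the `can_parse` oracle is trivial), the all-zero starting grammar, and the names `can_parse`, `tla_step` and `run_learner`.

```python
import random
from itertools import product


def can_parse(grammar, sentence):
    """Toy oracle (assumption): a sentence is a tuple of (index, value) pairs
    that the grammar must match. Real learners would run a parser here."""
    return all(grammar[i] == v for i, v in sentence)


def tla_step(g_current, sentence, can_parse, rng, greedy=True, svc=True):
    """One error-driven update; returns the grammar held after this input."""
    if can_parse(g_current, sentence):
        return g_current  # error-driven: retain a grammar that parses the input
    if svc:
        # Single Value Constraint: change exactly one parameter, chosen at random.
        i = rng.randrange(len(g_current))
        candidate = g_current[:i] + (1 - g_current[i],) + g_current[i + 1:]
    else:
        # No SVC: pick any grammar other than the current one.
        others = [g for g in product((0, 1), repeat=len(g_current))
                  if g != g_current]
        candidate = rng.choice(others)
    if greedy and not can_parse(candidate, sentence):
        return g_current  # Greediness: adopt only if the candidate parses; else pass
    return candidate


def run_learner(target, sentences, can_parse, rng, max_inputs=10_000, **variant):
    """Feed random target sentences; return # inputs consumed, or None on failure."""
    g = tuple(0 for _ in target)  # hypothetical all-default starting grammar
    for n in range(1, max_inputs + 1):
        g = tla_step(g, rng.choice(sentences), can_parse, rng, **variant)
        if g == target:  # the target parses every input, so it is never abandoned
            return n
    return None


if __name__ == "__main__":
    target = (1, 0, 1)
    sentences = [((0, 1),), ((1, 0),), ((2, 1),), ((0, 1), (2, 1))]
    variants = {
        "TLA original": dict(greedy=True, svc=True),
        "Non-greedy TLA": dict(greedy=False, svc=True),
        "Non-SVC TLA": dict(greedy=True, svc=False),
        "Error-driven random": dict(greedy=False, svc=False),
    }
    for name, flags in variants.items():
        n = run_learner(target, sentences, can_parse, random.Random(0), **flags)
        print(f"{name}: {n} inputs")
```

Because this toy domain has no parameter interaction and no ambiguity beyond underspecified sentences, it cannot reproduce the local maxima that make the real TLA fail; it only shows how the two switches separate the four learners.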

Grammar-testing models with memory
Variational Learner (Yang 2000, 2002) has memory for the success / failure of p-values.
A p-value is: rewarded if in a grammar that parsed an input; punished if in a grammar that failed.
Reinforcement is approximate, because of interaction. A good p-value in a bad grammar is punished, and vice versa.

With memory: Error-driven VL
Yang’s VL is not error-driven. It chooses p-values with probability proportional to their current success weights. So it occasionally tries out unlikely p-values.
Error-driven VL (Sakas & Nishimoto, 2002): Like Yang’s original, but: First, set each parameter to its currently more successful value. Only if that fails, pick a different grammar as above.

Previous simulation results
TLA is slower than error-driven random on the G&W domain, even when it succeeds (Berwick & Niyogi 1996).
TLA sometimes performs better, e.g., in strongly smooth domains (Sakas 2000, 2003).
TLA fails on 3 of G&W’s 8 languages, and on 95.4% of Kohl’s 2,304 languages.
There is no default grammar that can avoid TLA learning failures. The best starting grammar succeeds only 43% of the time (Kohl 1999).
Some TLA-unlearnable languages are quite natural, e.g., Swedish-type settings (Kohl 1999).
Waiting-STL is paralyzed by weakly equivalent grammars (Bertolo et al. 1997).

Data by learning model

| Algorithm | % failure rate | # inputs (99% of trials) | # inputs (average) |
|---|---|---|---|
| Error-driven random | 0 | 16,663 | 3,589 |
| TLA original | 88 | 16,990 | 961 |
| TLA w/o Greediness | 0 | 19,181 | 4,110 |
| TLA without SVC | 0 | 67,896 | 11,273 |
| Strong STL | 74 | 170 | 26 |
| Waiting STL | 75 | 176 | 28 |
| Guessing STL: Any Parse | 0 | 1,486 | 166 |
| Guessing STL: Minimal Connections | 0 | 1,923 | 197 |
| Guessing STL: Least Null Terminals | 0 | 1,412 | 160 |
| Guessing STL: Nearest Grammar | 80 | 180 | 30 |

Summary of performance
Not all models scale up well.
‘Squeaky-clean’ models (Strong / Waiting STL) fail often. They need unambiguous triggers.
Decoding models which guess are most efficient.
On-line parsing strategies make good learning strategies. (?)
Even with decoding, conservative domain search fails often (Nearest Grammar STL).
Thus: Learning-by-parsing fulfills its promise. Psychologically natural ‘triggering’ is efficient.

Now that we have a workable model…
Use it to investigate questions of interest:
Are some languages easier than others?
Do default starting p-values help?
Does overt morphological marking facilitate syntax learning?
etc.
Compare with psycholinguistic data, where possible. This tests the model further, and may offer guidelines for real-life studies.

Are some languages easier?

| Guessing STL-MC | # inputs (99% of trials) | # inputs (average) |
|---|---|---|
| ‘Japanese’ | 87 | 21 |
| ‘French’ | 99 | 22 |
| ‘German’ | 727 | 147 |
| ‘English’ | 1,549 | 357 |

What makes a language easier?
Language difficulty is not predicted by how many of the target p-settings are defaults.
Probably what matters is parametric ambiguity:
  Overlap with neighboring languages
  Lack of almost-unambiguous triggers
Are non-attested languages the difficult ones? (Kohl, 1999: explanatory!)

Sensitivity to input properties
How does the informativeness of the input affect learning rate?
Theoretical interest: To what extent can UG-based p-setting be input-paced?
If an input-pacing profile does not match child learners, that could suggest biological timing (e.g., maturation).

Some input properties
Morphological marking of syntactic features:
  Case
  Agreement
  Finiteness
The target language may not provide them. Or the learner may not know them.
Do they speed up learning? Or just create more work?

Input properties, cont’d
For real children, it is likely that:
Semantics / discourse pragmatics signals illocutionary force: [ILLOC DEC], [ILLOC Q] or [ILLOC IMP].
Semantics and/or syntactic context reveals SUBCAT (argument structure) of verbs.
Prosody reveals some phrase boundaries (as well as providing illocutionary cues).

Making finiteness audible
[+/-FIN] distinguishes Imperatives from Declaratives. (So does [ILLOC], but it’s inaudible.)
Imperatives have null subject. E.g., Verb O1.
A child who interprets an IMP input as a DEC could mis-set [+NS] for a [-NS] lang.
Does learning become faster / more accurate when [+/-FIN] is audible? No.
Why not? Because the Subset Principle requires the learner to parse IMP/DEC ambiguous sentences as IMP.

Providing semantic info: ILLOC
Suppose real children know whether an input is Imperative, Declarative or Question.
This is relevant to [+ItoC] vs. [+Qinv]. ([+Qinv] = [+ItoC] only in questions.)
Does learning become faster / more accurate when [ILLOC] is audible? No. It’s slower!
Because it’s just one more thing to learn.
Without ILLOC, a learner could get all word strings right, but their ILLOCs and p-values all wrong, and still count as successful.

Providing SUBCAT information
Suppose real children can bootstrap verb argument structure from meaning / local context.
This can reveal when an argument is missing. How can O1, O2 or PP be missing? Only by [+NT].
If [+NT] then also [+ObT] and [-NS] (in our UG).
Does learning become faster / more accurate when learners know SUBCAT? Yes.
Why? SP doesn’t choose between no-topic and null-topic. Other triggers are rare. So triggers for [+NT] are useful.

Enriching the input: Summary
Richer input is good if it helps with something that must be learned anyway (& other cues are scarce).
It hinders if it creates a distinction that otherwise could have been ignored. (cf. Wexler & Culicover 1980)
Outcomes depend on properties of this domain, but it can be tailored to the issue at hand.
The ultimate interest is the light these data shed on real language acquisition.
We can provide profiles of UG-based / input-(in)sensitive learning, for comparison with children.
The outcomes are never quite as anticipated.

This is just the beginning…
Next on the agenda

Next steps ~ input properties
How much damage from noisy input? E.g., 1 sentence in 5 / 10 / 100 not from the target language.
How much facilitation from ‘starting small’?
E.g., probability of occurrence inversely proportional to sentence length.
How much facilitation (or not) from the exact mix of sentences in child-directed speech? (cf. Newport, 1977; Yang, 2002)

Next steps ~ learning models
Add connectionist and statistical learners.
Add our favorite STL (= Parse Naturally), with MA, MCP etc. and a p-value ‘lexicon’. (Fodor 1998b)
Implement the ambiguity / irrelevance distinction, important to Waiting-STL.
Evaluate models for realistic sequence of setting parameters. (Time course data)
Your request here

The end
www.colag.cs.hunter.cuny.edu

REFERENCES
Bertolo, S., Broihier, K., Gibson, E. and Wexler, K. (1997) Cue-based learners in parametric language systems: Application of general results to a recently proposed learning algorithm based on unambiguous 'superparsing'. In M.G. Shafto and P. Langley (eds.) 19th Annual Conference of the Cognitive Science Society. Mahwah, NJ: Lawrence Erlbaum Associates.
Berwick, R.C. and Niyogi, P. (1996) Learning from triggers. Linguistic Inquiry 27(2), 605-622.
Chomsky, N. (1995) The Minimalist Program. Cambridge, MA: MIT Press.
Clark, R. (1988) On the relationship between the input data and parameter setting. NELS 19, 48-62.
Clark, R. (1992) The selection of syntactic knowledge. Language Acquisition 2(2), 83-149.
Dresher, E. (1999) Charting the learning path: Cues to parameter setting. Linguistic Inquiry 30(1), 27-67.
Fodor, J.D. (1998a) Unambiguous triggers. Linguistic Inquiry 29(1), 1-36.
Fodor, J.D. (1998b) Parsing to learn. Journal of Psycholinguistic Research 27(3), 339-374.
Fodor, J.D., Melnikova, I. and Troseth, E. (2002) A structurally defined language domain for testing syntax acquisition models. CUNY-CoLAG Working Paper #1.
Gibson, E. and Wexler, K. (1994) Triggers. Linguistic Inquiry 25, 407-454.
Kayne, R.S. (1994) The Antisymmetry of Syntax. Cambridge, MA: MIT Press.
Kohl, K.T. (1999) An Analysis of Finite Parameter Learning in Linguistic Spaces. Master’s Thesis, MIT.
Lightfoot, D. (1991) How to Set Parameters: Arguments from Language Change. Cambridge, MA: MIT Press.
Sakas, W.G. (2000) Ambiguity and the Computational Feasibility of Syntax Acquisition. PhD Dissertation, City University of New York.
Sakas, W.G. and Fodor, J.D. (2001) The Structural Triggers Learner. In S. Bertolo (ed.) Language Acquisition and Learnability. Cambridge, UK: Cambridge University Press.
Sakas, W.G. and Nishimoto, E. (2002) Search, Structure or Heuristics? A comparative study of memoryless algorithms for syntax acquisition. 24th Annual Conference of the Cognitive Science Society. Hillsdale, NJ: Lawrence Erlbaum Associates.
Villavicencio, A. (2000) The use of default unification in a system of lexical types. Paper presented at the Workshop on Linguistic Theory and Grammar Implementation, Birmingham, UK.
Wexler, K. and Culicover, P. (1980) Formal Principles of Language Acquisition. Cambridge, MA: MIT Press.
Yang, C.D. (2000) Knowledge and Learning in Natural Language. Doctoral dissertation, MIT.
Yang, C.D. (2002) Knowledge and Learning in Natural Language. Oxford University Press.

Something we can’t do: production
What do learners say when they don’t know?
Sentences in Gcurrent, but not in Gtarget. Do these sound like baby-talk?
  Me has Mary not kissed why? (early)
  Whom must not take candy from? (later)
Sentences in Gtarget but not in Gcurrent.
  Goblins Jim gives apples to.

CHILD-DIRECTED SPEECH STATISTICS FROM THE CHILDES DATABASE
The current domain of 13 parameters is almost as much as it’s feasible to work with; maybe we can eventually push it up to 20.
Each ‘language’ in the domain has only the properties assigned to it by the 13 parameters.
Painful decisions: what to include? What to omit?
To decide, we consult adult speech to children in CHILDES transcripts.
Child age approx. 1½ to 2½ years (earliest produced syntax).
Child’s MLU very approx. 2. Adults’ MLU from 2.5 to 5.
So far: English, French, German, Italian, Japanese.
(Child Language Data Exchange System, MacWhinney 1995)

STATISTICS ON CHILD-DIRECTED SPEECH FROM THE CHILDES DATABASE

| | ENGLISH | GERMAN | ITALIAN | JAPANESE | RUSSIAN |
|---|---|---|---|---|---|
| Name + Age (Y;M.D) | Eve 1;8-9.0 | Nicole 1;8.15 | Martina 1;8.2 | Jun (2;2.5-25) | Varvara 1;6.5-1;7.13 |
| File name | eve05-06.cha | nicole.cha | mart03,08.cha | jun041-044.cha | varv01-02.cha |
| Researcher / CHILDES folder name | BROWN | WAGNER | CALAMBRONE | ISHII | PROTASSOVA |
| Number of adults | 4,3 | 2 | 2,2 | 1 | 4 |
| MLU child | 2.13 | 2.17 | 1.94 | 1.606 | 2.8 |
| MLU adults (avg. of all) | 3.72 | 4.56 | 5.1 | 2.454 | 3.8 |
| Total utterances (incl. frags.) | 1304 | 1107 | 1258 | 1691 | 1008 |
| Usable utterances / fragments | 806/498 | 728/379 | 929/329 | 1113/578 | 727/276 |

| USABLES (% of all utterances) | 62% | 66% | 74% | 66% | 72% |
|---|---|---|---|---|---|
| Declaratives | 40% | 42% | 27% | 25% | 34% |
| Deictic declaratives | 8% | 6% | 3% | 8% | 7% |
| Morpho-syntactic questions | 10% | 12% | 0% | 18% | 2% |
| Prosody-only questions | 7% | 5% | 15% | 14% | 5% |
| Wh-questions | 22% | 8% | 27% | 15% | 34% |
| Imperatives | 13% | 27% | 24% | 11% | 11% |
| Exclamations | 0% | 0% | 1% | 3% | 0% |
| Let's constructions | 0% | 0% | 2% | 4% | 2% |

| FRAGMENTS (% of all utterances) | 38% | 34% | 26% | 34% | 27% |
|---|---|---|---|---|---|
| NP fragments | 25% | 24% | 37% | 10% | 35% |
| VP fragments | 8% | 7% | 6% | 1% | 8% |
| AP fragments | 4% | 3% | 16% | 1% | 7% |
| PP fragments | 9% | 4% | 5% | 1% | 3% |
| Wh-fragments | 10% | 2% | 10% | 2% | 6% |
| Other (e.g., stock expressions: yes, huh) | 44% | 60% | 26% | 85% | 41% |

| COMPLEX NPs (not from fragments) | ENGLISH | GERMAN | ITALIAN | JAPANESE | RUSSIAN |
|---|---|---|---|---|---|
| Total number of complex NPs | 140 | 55 | 88 | 58 | 105 |
| Approx. 1 per 'n' utterances | 6 | 13 | 11 | 19 | 7 |
| NP with one ADJ | 91 | 36 | 27 | 38 | 54 |
| NP with two ADJ | 7 | 1 | 2 | 0 | 4 |
| NP with a PP | 20 | 3 | 15 | 14 | 18 |
| NP with possessive ADJ | 22 | 7 | 0 | 0 | 4 |
| NP modified by AdvP | 0 | 0 | 31 | 1 | 6 |
| NP with relative clause | 0 | 8 | 13 | 5 | 5 |

| DEGREE-n utterances | ENGLISH | GERMAN | ITALIAN | JAPANESE | RUSSIAN |
|---|---|---|---|---|---|
| DEGREE 0 | 88% | 84% | 81% | 94% | 77% |
| Degree 0 deictic (e.g., that's a duck) | 8% | 6% | 2% | 8% | 18% |
| Degree 0 (all others) | 92% | 94% | 98% | 92% | 82% |
| DEGREE 1+ | 12% | 16% | 19% | 6% | 33% |
| Infinitival complement clause | 36% | 1% | 31% | 2% | 30% |
| Finite complement clause | 12% | 1% | 40% | 10% | 26% |
| Relative clause | 10% | 16% | 12% | 8% | 3% |
| Coordinating clause | 30% | 59% | 9% | 10% | 41% |
| Adverbial clause | 11% | 18% | 7% | 80% | 0% |

| ANIMACY and CASE | ENGLISH | GERMAN | ITALIAN | JAPANESE | RUSSIAN |
|---|---|---|---|---|---|
| % Utt. with +animate somewhere | 62% | 60% | 37% | 8% | 31% |
| % Subjects (overt) | 94% | 91% | 56% | 63% | 97% |
| % Objects (overt) | 18% | 23% | 44% | 23% | 14% |
| Case-marked NPs | 238 | 439 | 282 | 100 | 949 |
| Nominative | 191 | 283 | 36 | 45 | 552 |
| Accusative | 47 | 79 | 189 | 4 | 196 |
| Dative | 0 | 77 | 57 | 4 | 35 |
| Genitive | 0 | 0 | 0 | 14 | 98 |
| Topic | 0 | 0 | 0 | 34 | 0 |
| Instrumental and Prepositional | 0 | 0 | 0 | 0 | 68 |
| Subject drop | 0 | 26 | 379 | 740 | 124 |
| Object drop | 0 | 4 | 0 | 125 | 37 |

| Negation | ENGLISH | GERMAN | ITALIAN | JAPANESE | RUSSIAN |
|---|---|---|---|---|---|
| Occurrences | 62 | 73 | 43 | 72 | 71 |
| Nominal | 5 | 19 | 2 | 0 | 8 |
| Sentential | 57 | 54 | 41 | 72 | 63 |