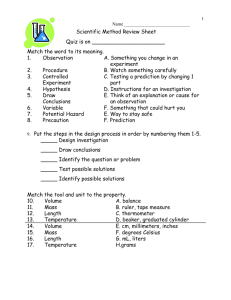

InterimPPT - The University of Texas at Arlington


Interim Presentation on

Topic: Scalable video coding extension of HEVC

(S-HEVC)

A PROJECT UNDER THE GUIDANCE OF DR. K. R. RAO

COURSE: EE5359 - MULTIMEDIA PROCESSING, SPRING 2015

Submitted By:

Aanal Desai

UT ARLINGTON ID: 1001103728

EMAIL ID: aanal.desai@mavs.uta.edu

DEPARTMENT OF ELECTRICAL ENGINEERING

UNIVERSITY OF TEXAS, ARLINGTON

List of Acronyms:

AVC – Advanced Video Coding

AMVP – Advanced motion vector prediction.

BL – Base Layer

BO – Band Offset

CABAC – Context Adaptive Binary Arithmetic Coding

CTB – Coding Tree Block

CTU – Coding Tree Unit

CU – Coding Unit

CIF – Common Intermediate Format.

DASH – Dynamic Adaptive Streaming over HTTP

DC – Direct Current.

DCT – Discrete Cosine Transform

Diff – Difference

DPB – Decoded Picture Buffer

DST – Discrete Sine Transform

EL – Enhancement Layer

ED – Entropy Decoder

EO – Edge Offset.

FPS – Frames per second

Filt – Filter.

FIR – Finite Impulse Response.

GOP – Group of pictures.

HD – High Definition

HDTV – High Definition Television

HEVC – High Efficiency Video Coding

HLS – High Level Syntax

HTTP – Hyper Text Transfer Protocol

ILR – Inter Layer Reference

IEC – International Electrotechnical Commission.

IP – Intra Prediction.

IQ – Inverse Quantization.

IT – Inverse Transform.

ITU-T – International Telecommunication Union, Telecommunication Standardization Sector.

ISO – International Organization for Standardization.

JCTVC – Joint Collaborative Team on Video Coding

JPEG – Joint Photographic Experts Group

LCU – Largest Coding Unit.

LM – Linear Mode.

LP – Loop Filtering.

MANE – Media Aware Network Elements.

Mbps – Megabits per second

MC – Motion compensation.

MPD – Media Presentation Description

MPEG – Moving Picture Experts Group

MV – Motion Vector

PB – Prediction Block.

PDA – Personal Digital Assistant.

PSNR – Peak Signal to Noise Ratio

PU – Prediction Unit

QCIF – Quarter Common Intermediate Format.

QP – Quantization Parameter.

ROI – Region Of Interest.

SAO – Sample Adaptive Offset

SHVC – Scalable High Efficiency Video Coding

SNR – Signal to Noise Ratio

SVC – Scalable Video Coding.

SPIE – Society of Photo-Optical Instrumentation Engineers

TU – Transform Unit

TB – Transform Block.

VCEG – Video Coding Experts Group.

VCL – Video Coding Layer.

VGA – Video Graphics Array.

UHD – Ultra High Definition

URL – Uniform Resource Locator

4CIF – 4x CIF.

Overview

• The increasing demand for video streaming to mobile devices such as smartphones, tablet computers, or notebooks, with their broad variety of screen sizes and computing capabilities, stimulates the need for a scalable extension.

• Modern video transmission systems using the Internet and mobile networks are typically characterized by a wide range of connection qualities, which result from the adaptive resource-sharing mechanisms in use. In such diverse environments, with varying connection qualities and different receiving devices, flexible adaptation of once-encoded content is necessary [2].

• The objective of a scalable extension for a video coding standard is to allow the creation of a video bitstream that contains one or more sub-bitstreams that can be decoded by themselves, with a complexity and reconstruction quality comparable to that achieved using single-layer coding with the same quantity of data as that in the sub-bitstream [2].

Introduction

• SHVC provides a 50% bandwidth reduction for the same video quality

when compared to the current H.264/AVC standard. SHVC further offers a

scalable format that can be readily adapted to meet network conditions or

terminal capabilities. Both bandwidth saving and scalability are highly

desirable characteristics of adaptive video streaming applications in

bandwidth-constrained, wireless networks[3].

• The scalable extension to the current H.264/AVC [4] video coding standard (H.264/SVC) [8] provided a means of readily adapting an encoded video stream to meet the receiving terminal's resource constraints or the prevailing network conditions.

• The JCT-VC is now developing the scalable extension (SHVC) [5] to HEVC in order to bring similar benefits in terms of terminal constraint and network resource matching as H.264/SVC does, but with a significantly reduced bandwidth requirement [3].

Types of Scalabilities

• Temporal, Spatial and SNR Scalabilities

• Spatial scalability and temporal scalability describe cases in which a sub-bitstream represents the source content with a reduced picture size (spatial resolution) or a reduced frame rate (temporal resolution), respectively [1].

• With quality scalability, which is also referred to as signal-to-noise ratio (SNR) scalability or fidelity scalability, the sub-bitstream delivers the same spatial and temporal resolution as the complete bitstream, but with a lower reproduction quality and, thus, a lower bit rate [2].

Block diagram of spatial scalability:

Figure 1[24]

Block Diagram of SNR Scalability:

Figure 2 [24]

• Block diagrams of spatial and SNR scalable coding are depicted in Figs. 1 and 2, respectively. Note that the down-sampling is a non-normative part, i.e. it is not specified in the standard. Normative inter-layer processing ("up-sampling") is present in the spatial scalability case. The key idea proposed in [24] is to replace the trivial copying (dotted in Fig. 1b) with a de-noising inter-layer filter, which improves the quality of inter-layer texture prediction and thereby the coding efficiency of the enhancement layer [24].

• In analyzing the spectral characteristics of both the down-sampling and up-sampling filters used for spatial scalability, the authors of [24] found that removing high-frequency noise from the reference signal before prediction is effective [24].

• The reference signal in the SNR scalability case, i.e. the reconstructed base layer picture, usually contains more coding noise than in the spatial scalability case, since it is coded with a higher QP. Therefore, it is reasonable to design the inter-layer filter for SNR scalability with de-noising properties [24].

High-Level Block Diagram of the Proposed Encoder

Figure 3 [1]

Inter-layer Intra prediction

• A block of the enhancement layer is predicted using the reconstructed

(and up-sampled) base layer signal.[2]

• Inter-layer motion prediction:- The motion data of a block are completely

inferred using the (scaled) motion data of the co-located base layer blocks,

or the (scaled) motion data of the base layer are used as an additional

predictor for coding the enhancement layer motion. [2]

• Inter-layer residual prediction:- The reconstructed (and up-sampled)

residual signal of the co-located base layer area is used for predicting the

residual signal of an inter-picture coded block in the enhancement layer,

while the motion compensation is applied using enhancement layer

reference pictures[2].

Up-sampling filter

• The base-layer pixel samples need to be up-sampled to support inter-layer texture prediction in the spatial scalability case. Presently, SHVC supports spatial scalability ratios of 2:1 and 3:2.

• To support these two configurations of spatial scalability, a set of interpolation filters was introduced in addition to the HEVC motion compensation interpolation filters [24].

• The up-sampling filter is the key part of inter-layer texture prediction in the case of spatial scalability. As shown in SHVC tool experiments, inter-layer texture prediction delivers most of the SHVC gain (~18% in terms of luma BD-rate reduction) [22].

• The phases in Tables 1 and 2 represent the theoretically accurate phase shifts used in the filter coefficient design. In the actual implementation, division-free phase derivation is used [23]. The filters for zero phase shift in Tables 1 and 2 are trivial: their outputs are identical to their inputs [24].
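The relation between scalability ratio and filter phase can be sketched as follows. This is an illustrative example only: it assumes that base and enhancement layer sample grids share the same origin, and it uses a 2-tap linear filter as a stand-in for the SHVC filters of Tables 1 and 2, whose coefficients are not reproduced here. The names `reference_position` and `upsample_1d` are hypothetical.

```python
from fractions import Fraction

def reference_position(x_el, ratio):
    """Map an enhancement layer sample index to a base layer position.

    Returns (integer base layer index, fractional phase in [0, 1)).
    Assumes both sample grids start at the same origin (an assumption).
    """
    pos = Fraction(x_el) / ratio
    base = pos.numerator // pos.denominator  # floor of the exact position
    return base, pos - base

def upsample_1d(bl, ratio, n_out):
    """Up-sample one row with a phase-dependent linear filter (stand-in filter)."""
    out = []
    last = len(bl) - 1
    for x in range(n_out):
        i, phase = reference_position(x, ratio)
        left = bl[min(i, last)]
        right = bl[min(i + 1, last)]  # clamp at the picture border
        out.append(float((1 - phase) * left + phase * right))
    return out

# Theoretical phases for the two supported ratios.
phases_2x = sorted({reference_position(x, Fraction(2))[1] for x in range(12)})
phases_1_5x = sorted({reference_position(x, Fraction(3, 2))[1] for x in range(12)})

row = [0, 10, 20]
up = upsample_1d(row, Fraction(2), 6)
```

Under these assumptions the 2:1 case needs phases 0 and 1/2, the 3:2 case needs phases 0, 1/3 and 2/3, and every zero-phase output sample is a copy of a base layer sample, which matches the remark that zero-phase filters are trivial.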

Up-sampling filter

Table 1: Luma Up-Sampling Filters [24]

Table 2: Chroma Up-Sampling Filters [24]

Inter-layer texture prediction

• H.264/AVC-SVC [14] presented inter-layer prediction for spatial and SNR

scalabilities by using intra-BL and residual prediction under the restriction

of a single-loop decoding structure.[20]

• To enable the selection of this up-sampled information for prediction in

the enhancement layer, the scalability extension employs a so-called

“reference index” approach [20]. Conceptually, this approach requires an

enhancement layer decoder to insert the up-sampled reference layer

picture into the enhancement layer RPL. [18]

• The up-sampled picture can then be signaled for reference in the same manner as in ordinary inter-frame prediction. That is, the enhancement layer bitstream signals an inter-mode CU, with the reference index corresponding to the up-sampled picture inserted into the enhancement layer RPL (with a zero motion vector used for this specific reference picture) [18].
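The reference index approach can be illustrated with a small data-structure sketch. It is not the SHVC decoding process: the class `RefPicture`, the helper names, and the choice to append the inter-layer picture at the end of the list are assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass
class RefPicture:
    poc: int           # picture order count
    inter_layer: bool  # True for the up-sampled reference layer picture

def build_el_ref_list(temporal_pocs, upsampled_bl_poc):
    """Build an enhancement layer RPL: temporal references plus the ILR picture.

    Appending the inter-layer picture last is an assumption of this sketch.
    """
    rpl = [RefPicture(poc, False) for poc in temporal_pocs]
    rpl.append(RefPicture(upsampled_bl_poc, True))
    return rpl

def motion_vector_for(rpl, ref_idx, signalled_mv):
    """Return the MV to apply: zero for the inter-layer reference picture."""
    if rpl[ref_idx].inter_layer:
        return (0, 0)
    return signalled_mv

rpl = build_el_ref_list([8, 4], 12)
mv_ilr = motion_vector_for(rpl, 2, (3, -1))
mv_temporal = motion_vector_for(rpl, 0, (3, -1))
```

The point of the design is that inter-layer prediction reuses the ordinary inter-prediction signaling: selecting the up-sampled picture is just a choice of reference index.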

Figure 4[31]

Figure 5 [31]

• Hong et al. [15] proposed a scalable video coding scheme for HEVC, where the residual prediction process is extended to both intra and inter prediction modes within a multi-loop decoding framework. In addition to the intra-BL and residual prediction, two further tools are presented: a combined prediction mode, which uses the average of the EL prediction and the intra-BL prediction as the final prediction, and multi-hypothesis inter prediction, which produces additional predictions for an EL block using BL block motion information.

Intra-BL prediction

• To utilize reconstructed base layer information, two Coding Unit (CU) level modes, namely intra-BL and intra-BL skip, are introduced [1].

• Intra-BL prediction mode is the first scalable coding tool, in which the enhancement layer prediction signal is formed by copying or up-sampling the reconstructed samples of the co-located area in the base layer [2].

• For an enhancement layer CU, the prediction signal is formed by copying or, for spatial scalable coding, up-sampling the co-located base layer reconstructed samples. Since the final reconstructed samples from the base layer are used, a multi-loop decoding architecture is essential [2].

• When a CU in the EL picture is coded using the intra-BL mode, the pixels in the collocated block of the up-sampled BL are used as the prediction for the current CU [1].

• Whereas the scalable extension of H.264/MPEG-4 AVC uses 4-tap FIR filters for up-sampling of the luma signal [8], 8-tap filters are applied in the proposed HEVC extension. For chroma, bilinear filters are used [21].

• To support arbitrary resolution ratios, the filter used for each enhancement layer sample position is selected based on the required phase shift [21].

• The up-sampling filters used for the IntraBL mode are designed to provide good coding efficiency over a wide variety of base and enhancement layer signals. However, even within each picture, video signals may show a high degree of non-stationarity [2].

• The operation is similar to the inter-layer intra prediction in the scalable extension of H.264 | MPEG-4 AVC, except that it is possible to use the samples of both intra and inter predicted blocks from the base layer [2].

• Additionally, quantization errors and noise may show varying characteristics in different parts of a picture. Hence, to adapt the up-sampling filter to local signal characteristics, another inter-layer intra coding mode, referred to as the IntraBLFilt mode, is introduced. This mode is used in the same way as the IntraBL mode.

Figure 6. Intra BL mode [2]

Intra residual prediction

• In the intra residual prediction mode, the difference between the intra prediction reference samples in the EL and the collocated pixels in the up-sampled BL is used to produce a prediction, denoted as the difference prediction, based on the intra prediction mode. The generated difference prediction is then added to the collocated block in the up-sampled BL to form the final prediction [1].

Figure 7. Intra Residual Prediction [1]
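The two steps of intra residual prediction can be sketched as below. For brevity the sketch uses only a DC-style intra mode (the mean of the reference differences), whereas the scheme in [1] applies the signaled intra prediction mode; the function name and argument layout are hypothetical.

```python
import numpy as np

def intra_residual_prediction(el_top, el_left, bl_top, bl_left, bl_block):
    """Predict an EL block from the up-sampled BL block plus a difference prediction.

    el_top/el_left: reconstructed EL reference samples above/left of the block.
    bl_top/bl_left: collocated samples of the up-sampled BL picture.
    bl_block:       collocated block of the up-sampled BL picture.
    Only a DC-style intra mode is implemented (an assumption of this sketch).
    """
    # Step 1: difference between EL reference samples and collocated BL samples.
    diff_refs = np.concatenate([el_top - bl_top, el_left - bl_left]).astype(np.float64)
    # Step 2: intra-predict the difference (DC mode: mean of the differences).
    diff_pred = np.full(bl_block.shape, diff_refs.mean())
    # Step 3: add the difference prediction to the collocated BL block.
    return bl_block + diff_pred

bl_refs = np.full(4, 10)
el_refs = np.full(4, 12)
pred = intra_residual_prediction(el_refs, el_refs, bl_refs, bl_refs, np.full((4, 4), 10))
```

In this toy case the EL neighbourhood is consistently 2 levels brighter than the up-sampled BL, so the final prediction is the BL block shifted by 2.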

Weighted Intra prediction

• In this mode, the (upsampled) base layer reconstructed signal constitutes

one component for prediction. Another component is acquired by regular

spatial intra prediction as in HEVC, by using the samples from the causal

neighborhood of the current enhancement layer block. The base layer

component is low pass filtered and the enhancement layer component is

high pass filtered and the results are added to form the prediction.[2]

• The weights for the base layer signal are set such that the low frequency

components are taken and the high frequency components are

suppressed, and the weights for the enhancement layer signal are set vice

versa. The weighted base and enhancement layer coefficients are added

and an inverse DCT is computed to obtain the final prediction[2].

• In our implementation, both low pass and high pass filtering happen in the

DCT domain, as illustrated in Figure 8. First, the DCTs of the base and

enhancement layer prediction signals are computed and the resulting

coefficients are weighted according to spatial frequencies.[2]

Figure 8: Weighted intra prediction mode. The (up-sampled) base layer

reconstructed samples are combined with the spatially predicted

enhancement layer samples to predict an enhancement layer CU to be coded.

[2]
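A minimal sketch of the DCT-domain weighting described above follows. The linear weight ramp in `lowpass_weights` and the choice of complementary weights (base layer weight plus enhancement layer weight equal to 1) are assumptions for illustration; the actual weights of [2] are not given in these slides.

```python
import numpy as np

def dct_matrix(n):
    """Orthonormal DCT-II matrix of size n x n."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    m = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    m[0, :] = np.sqrt(1.0 / n)
    return m

def lowpass_weights(n):
    """Hypothetical BL weights: 1 at DC, falling linearly to 0 at the highest frequency."""
    u = np.arange(n)[:, None]
    v = np.arange(n)[None, :]
    return 1.0 - (u + v) / (2.0 * (n - 1))

def weighted_intra_prediction(bl_block, el_intra_pred, bl_weights):
    """Combine BL low frequencies with EL high frequencies in the DCT domain."""
    d = dct_matrix(bl_block.shape[0])
    bl_coef = d @ bl_block @ d.T        # forward 2-D DCT of the BL signal
    el_coef = d @ el_intra_pred @ d.T   # forward 2-D DCT of the EL intra prediction
    mixed = bl_weights * bl_coef + (1.0 - bl_weights) * el_coef
    return d.T @ mixed @ d              # inverse 2-D DCT

w = lowpass_weights(4)
flat = weighted_intra_prediction(np.full((4, 4), 100.0), np.full((4, 4), 50.0), w)
```

With two flat blocks all energy sits in the DC coefficient, where the base layer weight is 1, so the result equals the base layer block; for textured blocks the high-frequency detail would come mainly from the spatial intra prediction.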

Difference prediction modes

• The principle in difference prediction modes is to lessen the systematic

error when using the (up-sampled) base layer reconstructed signal for

prediction. It is accomplished by reusing the previously corrected

prediction errors available to both encoder and decoder. [17]

• To this end, a new signal, denoted as the difference signal, is derived as the difference between already reconstructed enhancement layer samples and (up-sampled) base layer samples [17].

• The final prediction is made by adding a component from the (up-sampled) base layer reconstructed signal and a component from the difference signal [17]. This mode can be used for inter as well as intra prediction cases [2].

• In inter difference prediction, shown in Fig. 9, the (up-sampled) base layer reconstructed signal is added to a motion-compensated enhancement layer difference signal corresponding to a reference picture to obtain the final prediction for the current enhancement layer block [2].

• For the enhancement layer motion compensation, the same inter

prediction technique as in single-layer HEVC is used, but with a bilinear

interpolation filter[2].

Figure 9: Inter difference prediction mode. The (upsampled) base layer

reconstructed signal is combined with the motion compensated difference

signal from a reference picture to predict the enhancement layer CU to be

coded. [2]
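Inter difference prediction can be sketched as follows, under simplifying assumptions: 8-bit samples, an integer-pel motion vector that keeps the displaced block inside the picture, and no interpolation (the scheme in [2] uses a bilinear filter for fractional positions). The function name and argument order are hypothetical.

```python
import numpy as np

def inter_difference_prediction(bl_cur_up, el_ref, bl_ref_up, x, y, size, mv):
    """Predict an EL block as up-sampled BL plus a motion-compensated difference.

    bl_cur_up: up-sampled BL reconstruction of the current picture.
    el_ref:    reconstructed EL reference picture.
    bl_ref_up: up-sampled BL reconstruction of the same reference picture.
    mv:        integer-pel motion vector (dx, dy); in-bounds access is assumed.
    """
    # Difference signal of the reference picture: EL minus up-sampled BL.
    diff_ref = el_ref.astype(np.int32) - bl_ref_up.astype(np.int32)
    dx, dy = mv
    # Motion compensation on the difference signal.
    mc_diff = diff_ref[y + dy : y + dy + size, x + dx : x + dx + size]
    # Add the collocated up-sampled BL block of the current picture.
    base = bl_cur_up[y : y + size, x : x + size].astype(np.int32)
    return np.clip(base + mc_diff, 0, 255)

bl_ref_up = np.full((8, 8), 100, dtype=np.uint8)
el_ref = bl_ref_up + 3
bl_cur_up = np.full((8, 8), 110, dtype=np.uint8)
pred = inter_difference_prediction(bl_cur_up, el_ref, bl_ref_up, 2, 2, 4, (1, -1))
```

Here the reference picture's enhancement layer is 3 levels above its base layer, so the prediction for the current block is the current base layer value plus 3.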

Intra difference prediction

• In the intra difference prediction, the (up-sampled) base layer

reconstructed signal constitutes one component for the prediction. The

intra prediction modes that are used for spatial intra prediction of the

difference signal are coded using the regular HEVC syntax. [2]

Fig 10. Intra difference prediction mode. The (upsampled) base layer

reconstructed signal is combined with the intra predicted difference signal to

predict the enhancement layer block to be coded. [2]

Motion vector prediction

• Our scalable video extension of HEVC employs several methods to

improve the coding of enhancement layer motion information by

exploiting the availability of base layer motion information[2]

• In HEVC, two modes can be used for MV coding, namely “merge” and “advanced motion vector prediction (AMVP)”. In both modes, some of the most probable candidates are derived based on motion data from spatially adjacent blocks and the collocated block in the temporal reference picture. The “merge” mode allows the inheritance of MVs from the neighboring blocks without coding the motion vector difference [16].

• In HEVC, TMVP is used to predict motion information for a current PU

from a co-located PU in the reference picture. The process is defined to

require the prediction modes, reference indices, luma motion vectors and

reference picture order counts (POCs) of the co-located PU. [19]

• The goal of the motion field mapping process is then to project this

motion information from the reference layer to the enhancement layer’s

resolution, while also accounting for the 16×16 TMVP storage units in the

reference layer.[18]

• In the proposed scheme, collocated base layer MVs are used in both the merge mode and the AMVP mode for enhancement layer coding. The base layer MV is inserted as the first candidate in the merge candidate list and added after the temporal candidate in the AMVP candidate list. The MV at the center position of the collocated block in the base layer picture is used in both merge and AMVP modes [1].

• In HEVC, the motion vectors are compressed after being coded and the

compressed motion vectors are utilized in the TMVP derivation for

pictures that are coded later. [1]
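The use of the base layer MV as a candidate can be sketched as below. The scaling by the resolution ratio, the removal of duplicate candidates, and the merge list length of 5 are assumptions of this sketch; only the candidate positions (first in the merge list, after the temporal candidate in the AMVP list) are taken from the description above.

```python
def scale_mv(bl_mv, el_size, bl_size):
    """Scale a base layer MV to enhancement layer resolution (Python round())."""
    (el_w, el_h), (bl_w, bl_h) = el_size, bl_size
    return (round(bl_mv[0] * el_w / bl_w), round(bl_mv[1] * el_h / bl_h))

def _dedupe(cands):
    """Drop repeated candidates while keeping the first occurrence order."""
    seen, out = set(), []
    for c in cands:
        if c not in seen:
            seen.add(c)
            out.append(c)
    return out

def merge_candidates(bl_mv_scaled, spatial, temporal, max_len=5):
    """Merge list: the scaled BL MV is inserted as the first candidate."""
    return _dedupe([bl_mv_scaled] + spatial + temporal)[:max_len]

def amvp_candidates(bl_mv_scaled, spatial, temporal):
    """AMVP list: the scaled BL MV is added after the temporal candidate."""
    return _dedupe(spatial + temporal + [bl_mv_scaled])

# QCIF base layer, CIF enhancement layer (2:1 spatial scalability).
bl_mv = scale_mv((4, -2), (352, 288), (176, 144))
merge = merge_candidates(bl_mv, [(1, 0), (8, -4)], [(2, 2)])
amvp = amvp_candidates(bl_mv, [(1, 0)], [(2, 2)])
```

Placing the base layer MV first in the merge list gives it the cheapest index to signal, which reflects the expectation that collocated base layer motion is usually a good predictor.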

Inferred prediction mode

• For a CU in the EL coded in the inferred base layer mode, the motion information (including the inter prediction direction, reference index and motion vectors) is not signaled. Instead, for each 4×4 block in the CU, the motion information is derived from its collocated base layer block. If the motion information of a collocated base layer block is unavailable (e.g., the collocated base layer block is intra predicted), the 4×4 block is predicted in the same way as in the intra-BL mode [1].

Test Sequences:

| No. | Sequence name | Resolution | Type | No. of frames |
|---|---|---|---|---|
| 1 | City | 176×144 | QCIF | 30 |
| | | 352×288 | CIF | 30 |
| 2 | Harbour | 352×288 | CIF | 30 |
| | | 704×576 | 4CIF | 30 |

Fig.11 [34]

Simulation Results:

• To evaluate the efficiency of the proposed scalable HEVC extension, the coding efficiency of the scalable approach with two layers is compared with that of simulcast and single-layer coding. All layers have been coded with a GOP size of 8 pictures. For both scalable coding and simulcast, the same base layers are used. QPs of 22, 27, 32 and 37 are used for the base layer and QPs of 20, 25, 30 and 35 for the enhancement layer, as recommended by JCT-VC [27]. The simulation results are shown for various sequences at a fixed base layer QP of 26. The scalable extension has been implemented in the HEVC reference software HM-16.0, which has also been used for producing the anchor bitstreams.

BD-PSNR

• Bjøntegaard Delta PSNR (BD-PSNR) was proposed to objectively evaluate the coding efficiency of video codecs [26][28][29]. BD-PSNR is a curve-fitting metric based on the rate and distortion of the video sequence, and it provides a good evaluation of rate-distortion (R-D) performance. However, it does not take encoder complexity into account. BD metrics describe the quality of the video sequence. Ideally, BD-PSNR should increase and BD-bitrate should decrease.
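The curve fitting behind the BD metrics can be sketched as follows. This follows the commonly used Bjøntegaard procedure (third-order polynomial fit over the logarithm of the bitrate, averaged over the overlapping interval); it is not the script used to produce the figures in this presentation, and the rate and PSNR values in the example are made up for illustration.

```python
import numpy as np

def _avg_diff(x1, y1, x2, y2):
    """Average of (curve 2 - curve 1) over the x-range shared by both curves."""
    p1 = np.polyfit(x1, y1, 3)  # cubic fit of the anchor R-D points
    p2 = np.polyfit(x2, y2, 3)  # cubic fit of the tested R-D points
    lo = max(min(x1), min(x2))
    hi = min(max(x1), max(x2))
    i1, i2 = np.polyint(p1), np.polyint(p2)
    area1 = np.polyval(i1, hi) - np.polyval(i1, lo)
    area2 = np.polyval(i2, hi) - np.polyval(i2, lo)
    return (area2 - area1) / (hi - lo)

def bd_psnr(rate_a, psnr_a, rate_b, psnr_b):
    """Average PSNR gain (dB) of codec B over anchor A at equal bitrate."""
    return _avg_diff(np.log10(rate_a), psnr_a, np.log10(rate_b), psnr_b)

def bd_rate(rate_a, psnr_a, rate_b, psnr_b):
    """Average bitrate change (%) of codec B relative to anchor A at equal PSNR."""
    d = _avg_diff(psnr_a, np.log10(rate_a), psnr_b, np.log10(rate_b))
    return (10.0 ** d - 1.0) * 100.0

# Made-up R-D points for four QPs (kbps, dB); not measured data.
rates = [100.0, 200.0, 400.0, 800.0]
psnr_anchor = [30.0, 33.0, 36.0, 39.0]
gain = bd_psnr(rates, psnr_anchor, rates, [p + 1.0 for p in psnr_anchor])
saving = bd_rate(rates, psnr_anchor, [r / 2 for r in rates], psnr_anchor)
```

In the synthetic example, a curve that is 1 dB higher at every rate yields a BD-PSNR of about +1 dB, and a curve that reaches the same PSNR at half the rate yields a BD-bitrate of about -50%.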

Fig. 12: BD-PSNR vs. quantization parameter for City with BL-QCIF and EL-CIF

Fig. 13: BD-PSNR vs. quantization parameter for Harbour with BL-CIF and EL-4CIF

BD-bitrate

• Like BD-PSNR, BD-bitrate characterizes the quality of the encoded video sequence. Ideally, BD-bitrate should decrease for good-quality video [28][29]. Figures 14 and 15 illustrate the BD-bitrate for the bitstreams of the proposed algorithm compared with the bitstreams encoded using the unaltered reference software. The figures show that the BD-bitrate has decreased by 17% to 29%, which implies that the quality of the bitstream encoded using the proposed algorithm has not degraded compared to the bitstream encoded with the unaltered reference software.

Fig. 14: BD-bitrate vs. quantization parameter for City with BL-QCIF and EL-CIF

Fig. 15: BD-bitrate vs. quantization parameter for Harbour with BL-CIF and EL-4CIF

PSNR vs. bitrate plots:

Fig. 16: PSNR vs. bitrate for City with BL-QCIF and EL-CIF

Fig. 17: PSNR vs. bitrate for Harbour with BL-CIF and EL-4CIF

Bitstream size:

Fig. 18: Bitstream size vs. quantization parameter for City with BL-QCIF and EL-CIF

Fig. 19: Bitstream size vs. quantization parameter for Harbour with BL-CIF and EL-4CIF

References

[1] J. Chen, K. Rapaka, X. Li, V. Seregin, L. Guo, M. Karczewicz, G. Van der Auwera, J. Sole, X. Wang, C. Tu, Y. Chen, and R. Joshi, “Scalable Video Coding Extension for HEVC”, Qualcomm Technology Inc., IEEE Data Compression Conference (DCC) 2013, 20-22 March 2013.

[2] P. Helle, H. Lakshman, M. Siekmann, J. Stegemann, T. Hinz, H. Schwarz, D. Marpe, and T. Wiegand, Fraunhofer Institute for Telecommunications – Heinrich Hertz Institute, Berlin, Germany, “Scalable Video Coding Extension of HEVC”, IEEE Data Compression Conference (DCC) 2013, 20-22 March 2013. See also T. Hinz et al, “An HEVC Extension for Spatial and Quality Scalable Video Coding”, Proceedings of SPIE, vol. 8666, pp. 866605-1 to 866605-16, Feb. 2013.

[3] J. Nightingale, Q. Wang, and C. Grecos, Centre for Audio Visual Communications & Networks (AVCN), “Scalable HEVC (SHVC)-Based Video Stream Adaptation in Wireless Networks”, 2013 IEEE 24th International Symposium on Personal, Indoor and Mobile Radio Communications: Services, Applications and Business Track.

[4] T. Wiegand et al, "Overview of the H.264/AVC video coding standard," IEEE Trans. Circuits Syst. Video Technol., vol. 13, no. 7, pp. 560-576, July 2003.

[5] T. Hinz et al, "An HEVC extension for spatial and quality scalable video

coding," Proc. SPIE Visual Information Processing and Communication IV, Feb. 2013.

[6] B. Oztas et al, "A study on the HEVC performance over lossy networks," Proc. 19th IEEE

International Conference on Electronics, Circuits and Systems (ICECS), pp.785-788, Dec. 2012.

[7] J. Nightingale et al, "HEVStream: a framework for streaming and

evaluation of high efficiency video coding (HEVC) content in loss-prone networks," IEEE Trans.

Consum. Electron., vol.58, no.2, pp.404-412, May 2012.

[8] H. Schwarz et al, “Overview of the scalable extension of the H.264/AVC standard,” IEEE Trans. Circuits Syst. Video Technol., vol. 17, pp. 1103-1120, Sept. 2007.

[9] J. Nightingale et al, "Priority-based methods for reducing the impact of packet loss on HEVC

encoded video streams," Proc. SPIE Real-Time Image and Video Processing 2013, Feb. 2013.

[10] T.Schierl et al, “Mobile Video Transmission coding”, IEEE Trans. Circuits Syst. Video Technol.,

vol. 1217, Sept 2007.

[11] J. Chen, K. Rapaka, X. Li, V. Seregin, L. Guo, M. Karczewicz, G. Van der Auwera, J. Sole, X.

Wang, C. J. Tu, Y. Chen, “Description of scalable video coding technology proposal by Qualcomm

(configuration 2)”, Joint Collaborative Team on Video Coding, doc. JCTVC- K0036, Shanghai, China,

Oct. 2012.

[12] ISO/IEC JTC1/SC29/WG11 and ITU-T SG 16, “Joint Call for Proposals on Scalable Video

Coding Extensions of High Efficiency Video Coding (HEVC)”, ISO/IEC JTC 1/SC 29/WG 11 (MPEG)

Doc. N12957 or ITU-T SG 16 Doc. VCEG-AS90, Stockholm, Sweden, Jul. 2012.

[13] A. Segall, “BoG report on HEVC scalable extensions”, Joint Collaborative Team on Video

Coding, doc. JCTVC-K0354, Shanghai, China, Oct. 2012 .

[14] H. Schwarz, D. Marpe, T. Wiegand, “Overview of the Scalable Video Coding Extension of the H.264/AVC Standard”, IEEE Trans. Circuits and Syst. Video Technol., vol. 17, no. 9, pp. 1103-1120, 2007.

[15] D. Hong, W. Jang, J. Boyce, A. Abbas, “Scalability Support in HEVC”, Joint Collaborative Team

on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, JCTVC-F290, Torino,

Italy, Jul. 2011.

[16] G. J. Sullivan, J.-R. Ohm, W.-J. Han, T. Wiegand, “Overview of the High Efficiency Video Coding

(HEVC) Standard”, IEEE Trans. Circuits and Syst. Video Technol., to be published.

[17] J. Boyce, D. Hong, W. Jang, A. Abbas, “Information for HEVC scalability extension,” Joint

Collaborative Team on Video Coding, doc. JCTVC-G078, Nov. 2011.

[18] G.J. Sullivan et al, “Standardized extensions of High Efficiency Video Coding (HEVC)”, IEEE JSTSP, vol. 7, no. 6, pp. 1001 – 1016, Dec. 2013.

[19] J. Chen. V. Seregin, L. Guo, and M. Karczewicz, “Non-TE5: on motion mapping in SHVC,” Joint

Collaborative Team on Video Coding (JCTVC) document JCTVC-L0336, 12th Meeting: Geneva, CH,

14–23 Jan. 2013

[20] J. Dong, Y. He, Y. He, G. McClellan, E.-S. Ryu, X. Xiu, and Y. Ye, “Description of scalable video

coding technology proposal by InterDigital,” Communications Joint Collaborative Team on Video

Coding (JCT-VC) document JCTVC-K0034, 11th Meeting: Shanghai, CN, 10–19 Oct. 2012.

[21] I. Unanue et al, “A Tutorial on H.264/SVC Scalable Video Coding and its Tradeoff between

Quality, Coding Efficiency and Performance”, Recent Advances on Video Coding.[online].

Available: http://www.doc88.com/p-516795349043.html

[22] A. Segall, J. Chen, J. Dong, and E. Alshina, "TEA1: Summary Report Upsampling Filter", JCTVC-L0021, Geneva, Switzerland, 14-23 Jan. 2013.

[23] J. Chen, "BoG Report on reference layer sample location derivation in SHVC", JCTVC-M0449, Incheon, Korea, 18-26 Apr. 2013.

[24] E. Alshina, A. Alshin, Y. Cho, and J. Park (Samsung Electronics Co., Ltd.), W. Pu, J. Chen, X. Li, V. Seregin, and M. Karczewicz (Qualcomm Technologies Inc.), “Inter-layer Filtering for Scalable Extension of HEVC”, IEEE, 2013.

[25] Test sequences for scalable video coding. [online]. Available: ftp://ftp.tnt.uni-hannover.de/pub/svc/testsequences/

[26] C-L. Su, T-M. Che and C-Y. Huang, “Cluster-Based Motion Estimation Algorithm With Low Memory

and Bandwidth Requirements for H.264/AVC Scalable Extension”, IEEE Trans. on CSVT, vol. 24, no. 6, pp.

1016-1024, June 2014.

[27] X. Li et al, “Rate-Complexity-Distortion evaluation for hybrid video coding”, IEEE Trans. on CSVT, vol.

21, pp. 957 - 970, July 2011.

[28] K. Shah, “Reducing the complexity of Inter-prediction mode decision for HEVC”, M.S. Thesis,

University of Texas at Arlington, UMI Dissertation Publishing, April 2014. [online]. Available:

http://www-ee.uta.edu/Dip/Courses/EE5359/KushalShah_Thesis.pdf

[29] S.Vasudevan, “Fast intra prediction and fast residual quadtree encoding implementation in HEVC”,

M.S. Thesis, University of Texas at Arlington, UMI Dissertation Publishing, Nov. 2013. [online]. Available

http://www-ee.uta.edu/Dip/Courses/EE5359/index.html

[30] https://hevc.hhi.fraunhofer.de/trac/hevc JCT-VC Document.

[31] Scalable Extension Of HEVC. [online]. Available: http://www.mpeg.or.kr/doc/2011/%EC%A0%9C12%ED%9A%8CMPEG%ED%8F%AC%EB%9F%BC%EC%B4%9D%ED%9A%8C%EB%B0%8F%EA%B8%B0%EC%88%A0%EC%9B%8C%ED%81%AC%EC%83%B5/4-2%ED%95%9C%EC%A2%85%EA%B8%B0-HEVC_extension.pdf

[32] (H.265/HEVC) Tutorial by Madhukar Budagavi m.budagavi@samsung.com

http://www.uta.edu/faculty/krrao/dip/Courses/EE5359/budagaviiscas2014ppt.pdf

[33] H.264 Advanced video coding http://www.vcodex.com/h264.html

[34] Test sequences: https://media.xiph.org/video/derf/

[35] Test Sequences: ftp://ftp.kw.bbc.co.uk/hevc/hm-11.0-anchors/bitstreams/

[36] SHVC software and software manual: The source code for the software and its manual is available in the following

SVN repository.[online]. Available: https://hevc.hhi.fraunhofer.de/svn/svn_SHVCSoftware/

[37] SHVC bitstream layer parser.[online]. Available: http://r2d2n3po.tistory.com/70

[38] S. Riabstev, “Detailed overview of HEVC/H.265”, [online]. Available: https://app.box.com/s/rxxxzr1a1lnh7709yvih

[39] K.R. Rao, D.N. Kim and J.J. Hwang, “Video Coding Standards: AVS China, H.264/MPEG-4 Part10, HEVC, VP6, DIRAC and VC-1”, Springer,

2014.

[40] Access to HM 16.0 Reference Software: http://hevc.hhi.fraunhofer.de/

[41] Website on PSNR: http://en.wikipedia.org/wiki/Peak_signal-to-noise_ratio