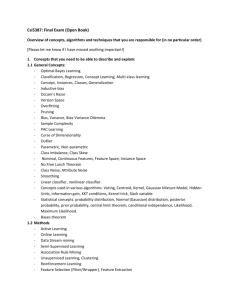

PPT - University of California, Irvine

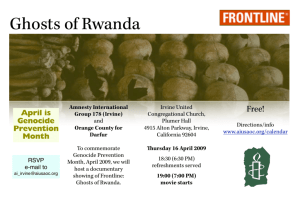

advertisement

CS 277: Data Mining

Text Classification

Padhraic Smyth

Department of Computer Science

University of California, Irvine

Some slides adapted from Introduction to Information Retrieval, by

Manning, Raghavan, and Schutze, Cambridge University Press, 2009,

with additional slides from Ray Mooney.

Progress Report

• New deadline

– In class, Thursday February 18th (not Tuesday)

• Outline

– 3 pages maximum

– Suggested content

• Brief restatement of the problem you are addressing (no need to repeat everything in

your original proposal), e.g., ½ a page

• Summary of progress so far

– Background papers read

– Data preprocessing, exploratory data analysis

– Algorithms/Software reviewed, implemented, tested

– Initial results (if any)

• Challenges and difficulties encountered

• Brief outline of plans between now and end of quarter

– Use diagrams, figures, tables, where possible

– Write clearly: check what you write

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Currently in the News….

• Communications of the ACM, Feb 2010

– Fun short article, “Where the Data is”

• http://cacm.acm.org/magazines/2010/2/69384-where-the-data-is/fulltext

– Technical article on Fast Dimension Reduction

• Introduction:

http://cacm.acm.org/magazines/2010/2/69382-strange-effects-in-high-dimension/fulltext

• Article:

http://cacm.acm.org/magazines/2010/2/69359-faster-dimension-reduction/fulltext

• New York Times, Feb 8, 2010

• Interesting study of which email articles are mailed most often

http://www.nytimes.com/2010/02/09/science/09tier.html?ref=technology

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Conferences related to Text Mining

• ACM SIGIR Conference

• WWW Conference

• ACM SIGKDD Conference

Worth looking at papers in recent years for many different ideas

related to text mining

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Further Reading on Text Classification

•

General references on text and language modeling

– Introduction to Information Retrieval, C. Manning, P. Raghavan, and H. Schutze,

Cambridge University Press, 2008

– Foundations of Statistical Language Processing, C. Manning and H. Schutze, MIT

Press, 1999.

– Speech and Language Processing: An Introduction to Natural Language

Processing, Dan Jurafsky and James Martin, Prentice Hall, 2000.

– The Text Mining Handbook: Advanced Approaches to Analyzing Unstructured

Data, Feldman and Sanger, Cambridge University Press, 2007

•

Web-related text mining in general

– S. Chakrabarti, Mining the Web: Discovering Knowledge from Hypertext Data,

Morgan Kaufmann, 2003.

– See chapter 5 for discussion of text classification

•

SVMs for text classification

– T. Joachims, Learning to Classify Text using Support Vector Machines: Methods,

Theory and Algorithms, Kluwer, 2002

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Text Classification

•

Text classification has many applications

•

Data Representation

•

Classification Methods

– Spam email detection

– Automated tagging of streams of news articles, e.g., Google News

– Online advertising: what is this Web page about?

– “Bag of words” most commonly used: either counts or binary

– Can also use “phrases” (e.g., bigrams) for commonly occurring combinations of

words

– Naïve Bayes widely used (e.g., for spam email)

• Fast and reasonably accurate

– Support vector machines (SVMs)

• Typically the most accurate method in research studies

• But more complex computationally

– Logistic Regression (regularized)

• Not as widely used, but can be competitive with SVMs (e.g., Zhang and Oles, 2002)

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Ch. 13

Types of Labels/Categories/Classes

• Assigning labels to documents or web-pages

– Labels are most often topics such as Yahoo-categories

– "finance," "sports," "news>world>asia>business"

• Labels may be genres

– "editorials" "movie-reviews" "news”

• Labels may be opinion on a person/product

– “like”, “hate”, “neutral”

• Labels may be domain-specific

–

–

–

–

–

Data Mining Lectures

"interesting-to-me" : "not-interesting-to-me”

“contains adult language” : “doesn’t”

language identification: English, French, Chinese, …

search vertical: about Linux versus not

“link spam” : “not link spam”

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Common Data Sets used for Evaluation

•

Reuters

– 10700 labeled documents

– 10% documents with multiple class labels

•

Yahoo! Science Hierarchy

– 95 disjoint classes with 13,598 pages

•

20 Newsgroups data

– 18800 labeled USENET postings

– 20 leaf classes, 5 root level classes

•

WebKB

– 8300 documents in 7 categories such as “faculty”, “course”, “student”.

•

Industry

– 6449 home pages of companies partitioned into 71 classes

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Practical Issues

•

Tokenization

– Convert document to word counts = “bag of words”

– word token = “any nonempty sequence of characters”

– for HTML (etc) need to remove formatting

•

Canonical forms, Stopwords, Stemming

– Remove capitalization

– Stopwords:

• remove very frequent words (a, the, and…) – can use standard list

• Can also remove very rare words, e.g., words that only occur in k or fewer documents,

e.g., k = 5

– Stemming (next slide)

•

Data representation

– e.g., sparse 3 column for bag of words: <docid termid count>

– can use inverted indices, etc

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Toy example of a document-term matrix

database

SQL

index

regression

likelihood

linear

d1

24

21

9

0

0

3

d2

32

10

5

0

3

0

d3

12

16

5

0

0

0

d4

6

7

2

0

0

0

d5

43

31

20

0

3

0

d6

2

0

0

18

7

16

d7

0

0

1

32

12

0

d8

3

0

0

22

4

2

d9

1

0

0

34

27

25

d10

6

0

0

17

4

23

Stemming

• Want to reduce all morphological variants of a word to a single

index term

– e.g. a document containing words like fish and fisher may not be retrieved by a

query containing fishing (no fishing explicitly contained in the document)

• Stemming - reduce words to their root form

• e.g. fish – becomes a new index term

• Porter stemming algorithm (1980)

– relies on a preconstructed suffix list with associated rules

• e.g. if suffix=IZATION and prefix contains at least one vowel followed by a consonant,

replace with suffix=IZE

– BINARIZATION => BINARIZE

• Not always desirable: e.g., {university, universal} -> univers (in Porter’s)

•

WordNet: dictionary-based approach

•

May be more useful for information retrieval/search than classification

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Confusion Matrix and Accuracy

True

Class 1

True

Class 2

True

Class 3

Predicted

Class 1

80

60

10

Predicted

Class 2

10

90

0

Predicted

Class 3

10

30

110

Accuracy = fraction of examples classified correctly

= 280/400

= 70%

Precision

Precision for class k

= fraction predicted as class k that belong to class k

= 90/100

= 90% for class 2 below

True

Class 1

True

Class 2

True

Class 3

Predicted

Class 1

80

60

10

Predicted

Class 2

10

90

0

Predicted

Class 3

10

30

110

Recall

Recall for class k

= fraction that belong to class k predicted as class k

= 90/180

= 50% for class 2 below

True

Class 1

True

Class 2

True

Class 3

Predicted

Class 1

80

60

10

Predicted

Class 2

10

90

0

Predicted

Class 3

10

30

110

Precision-Recall Curves

• Rank our test documents by predicted probability of belonging to

class k

– Sort the ranked list

– For t = 1…. N (N = total number of test documents)

• Select top t documents on the list

• Classify documents above as class k, documents below as not class k

• Compute precision-recall number

– Generates a set of precision-recall values we can plot

– Perfect performance: precision = 1, recall = 1

– Similar concept to ROC

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Precision-recall for category: Crude

1

0.9

0.8

0.7

Precision

0.6

0.5

LSVM

Decision Tree

Naïve Bayes

Rocchio

0.4

0.3

0.2

0.1

0

0

0.2

0.4

0.6

0.8

1

Dumais

(1998)

Recall

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

F-measure

• F-measure = weighted harmonic mean of precision and recall

(at some fixed operating threshold for the classifier)

F1 = 1/ ( 0.5/P + 0.5/R )

= 2PR / (P + R)

Useful as a simple single summary measure of performance

Sometimes “breakeven” F1 used, i.e., measured when P = R

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Sec.13.6

F-Measures on Reuters Data Set

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Probabilistic “Generative” Classifiers

• Model p( x | ck ) for each class and perform classification via Bayes rule,

c = arg max { p( ck | x ) } = arg max { p( x | ck ) p(ck) }

• How to model p( x | ck )?

– p( x | ck ) = probability of a “bag of words” x given a class ck

– Two commonly used approaches (for text):

• Naïve Bayes: treat each term xj as being conditionally independent, given ck

• Multinomial: model a document with N words as N tosses of a p-sided die

– Other models possible but less common,

• E.g., model word order by using a Markov chain for p( x | ck )

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Naïve Bayes Classifier for Text

• Naïve Bayes classifier = conditional independence model

– Assumes conditional independence assumption given the class:

p( x | ck ) = P p( xj | ck )

– Note that we model each term xj as a discrete random variable

– Binary terms (Bernoulli):

p( x | ck ) = P p( xj | ck )

– Non-binary terms (counts) (far less common)

p( x | ck ) = P p( xj = k | ck )

can use a parametric model (e.g., Poisson) or non-parametric model

(e.g., histogram) for p(xj = k | ck ) distributions.

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Simple Example: Naïve Bayes (binary)

• Vocabulary = {complexity, bound, cluster, classifier}

• Two classes: class 1 = algorithms, class 2 = data mining

• Naïve Bayes model:

– Probability that a word appears in a document, given the document class

– Assumes that each word appears independently of the others

Data Mining Lectures

complexity

bound

regression

classifier

Algorithms

0.9

0.7

0.1

0.1

Data Mining

0.2

0.1

0.7

0.8

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Spam Email Classification

• Naïve Bayes widely used in spam filtering

– See Paul Graham’s A Plan for Spam (on the Web)

• Naive Bayes-like classifier with unusual parameter estimation

– Widely used in spam filters

• Naive Bayes works well when appropriately used

• Very easy to update the model: just update counts

– But also many other aspects

• black lists, HTML features, etc.

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Multinomial Classifier for Text

•

Multinomial Classification model

– Assume that the data are generated by a p-sided die (multinomial model)

p(x | ck ) p( xj | ck )

nj

j 1

– where product is over all terms in the vocabulary

nj = number of times term j occurs in the document

– Here we have a single random variable for each class, and the p( xj = i | ck )

probabilities sum to 1 over i (i.e., a multinomial model)

– Note that high correlation can not be modeled well compared to naïve Bayes

• e.g., two words that should almost always appear in a document for a given class

– Probabilities typically only defined and evaluated for non-zero counts

• But “zero counts” could also be modeled if desired

• This would be equivalent to a Naïve Bayes model with a geometric distribution on

counts

Simple Example: Multinomial

• Vocabulary = {complexity, bound, cluster, classifier}

• Two classes: class 1 = algorithms, class 2 = data mining

• Multinomial model:

– Probability of next word in a document, given the document class

– Assumes that words are “memoryless”

Data Mining Lectures

complexity

bound

regression

classifier

Algorithms

0.5

0.4

0.05

0.05

Data Mining

0.1

0.05

0.3

0.55

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Highest Probability Terms in Multinomial Distributions

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Prediction

• Naïve Bayes

–

log [ p( x | c ) p(c) ] = log [ P p( xj | c ) ] + log p(c)

=

S

log p( xj | c ) + log p(c)

Sum over all words in vocabulary

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Prediction

• Naïve Bayes

–

log [ p( x | c ) p(c) ] = log [ P p( xj | c ) ] + log p(c)

=

S

log p( xj | c ) + log p(c)

Sum over all words in vocabulary

• Multinomial

– log [ p( x | c ) p(c) ] = log [ P p( xj | c )nj ] + log p(c)

=

S

nj log p( xj | c ) + log p(c)

Sum over all words that occur in document

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Classification Example

• Document 1 (bag of words)

Counts

complexity

bound

regression

classifier

4

0

0

1

complexity

bound

regression

classifier

2

0

1

3

• Document 2 (bag of words)

Counts

• Compute log p(class |document) for both naïve Bayes and

multinomial model, for each document

– Can assume p(class 1) = p(class 2)

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Classification Example (for document 1)

Naïve Bayes

A

DM

D1

Multinomial

complexity

bound

regression

classifier

-0.11

-0.36

-2.3

-2.3

-1.6

-2.3

-0.36

-0.22

complexity

bound

regression

classifier

4

0

0

1

Data Mining Lectures

A

DM

D1

Notes on Text Classification

complexity

bound

regression

classifier

-0.69

-0.92

-3.0

-3.0

-2.3

-3.0

-1.2

-0.6

complexity

bound

regression

classifier

4

0

0

1

© Padhraic Smyth, UC Irvine

Classification Example (for document 1)

Naïve Bayes

A

DM

D1

Multinomial

complexity

bound

regression

classifier

-0.11

-0.36

-2.3

-2.3

-1.6

-2.3

-0.36

-0.22

complexity

bound

regression

classifier

4

0

0

1

complexity

bound

regression

classifier

-0.69

-0.92

-3.0

-3.0

-2.3

-3.0

-1.2

-0.6

A

DM

complexity

bound

regression

classifier

4

0

0

1

D1

S log p( xj | c ) + log p(c)

Class A:

score = -0.11 – 2.3 = -2.41

Class DM: score = -1.6 – 0.22 = -1.08

Data Mining Lectures

S nj log p( xj | c ) + log p(c)

Class A:

score = -4 * 0.69 – 3.0 ~ -5.8

Class DM: score = -4 * 2.3 – 0.6 ~ -8.9

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Comparing Naïve Bayes and Multinomial models

McCallum and Nigam (1998) Found

that multinomial outperformed naïve

Bayes (with binary features) in text

classification experiments

Note on names used in the literature

- Bernoulli (or multivariate Bernoulli)

sometimes used for binary version of

Naïve Bayes model

- multinomial model is also referred to

as “unigram” model

- multinomial model is also sometimes

(confusingly) referred to as naïve

Bayes

WebKB Data Set

• Classify webpages from CS departments into:

– student, faculty, course,project

• Train on ~5,000 hand-labeled web pages

– Cornell, Washington, U.Texas, Wisconsin

• Crawl and classify a new site (CMU)

• Results with NB

Student

Extracted

180

Correct

130

Accuracy:

72%

Data Mining Lectures

Faculty

66

28

42%

Person

246

194

79%

Notes on Text Classification

Project

99

72

73%

Course

28

25

89%

Departmt

1

1

100%

© Padhraic Smyth, UC Irvine

Comparing Naïve Bayes and Multinomial on Web KB Data

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Sec. 15.2.4

Classic Reuters-21578 Data Set

•

•

•

•

Most (over)used data set

21578 documents

9603 training, 3299 test articles (ModApte/Lewis split)

118 categories

– An article can be in more than one category

– Learn 118 binary category distinctions

•

•

Average document: about 90 types, 200 tokens

Average number of classes assigned

– 1.24 for docs with at least one category

•

Only about 10 out of 118 categories are large

Common categories

(#train, #test)

Data Mining Lectures

•

•

•

•

•

Earn (2877, 1087)

Acquisitions (1650, 179)

Money-fx (538, 179)

Grain (433, 149)

Crude (389, 189)

Notes on Text Classification

•

•

•

•

•

Trade (369,119)

Interest (347, 131)

Ship (197, 89)

Wheat (212, 71)

Corn (182, 56)

© Padhraic Smyth, UC Irvine

Sec. 15.2.4

Example of Reuters document

<REUTERS TOPICS="YES" LEWISSPLIT="TRAIN" CGISPLIT="TRAINING-SET" OLDID="12981"

NEWID="798">

<DATE> 2-MAR-1987 16:51:43.42</DATE>

<TOPICS><D>livestock</D><D>hog</D></TOPICS>

<TITLE>AMERICAN PORK CONGRESS KICKS OFF TOMORROW</TITLE>

<DATELINE> CHICAGO, March 2 - </DATELINE><BODY>The American Pork Congress kicks off

tomorrow, March 3, in Indianapolis with 160 of the nations pork producers from 44 member states determining

industry positions on a number of issues, according to the National Pork Producers Council, NPPC.

Delegates to the three day Congress will be considering 26 resolutions concerning various issues, including the

future direction of farm policy and the tax law as it applies to the agriculture sector. The delegates will also debate

whether to endorse concepts of a national PRV (pseudorabies virus) control and eradication program, the NPPC

said.

A large trade show, in conjunction with the congress, will feature the latest in technology in all areas of the

industry, the NPPC added. Reuter

&#3;</BODY></TEXT></REUTERS>

35

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Comparing Multinomial and Naïve Bayes on Reuter’s Data

(from McCallum and Nigam, 1998)

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Comparing Multinomial and Naïve Bayes on Reuter’s Data

(from McCallum and Nigam, 1998)

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Comparing Naïve Bayes and Multinomial

(slide from Chris Manning, Stanford)

Results from classifying 13,589 Yahoo! Web pages in Science subtree

of hierarchy into 95 different topics

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Feature Selection

•

Performance of text classification algorithms can be optimized by selecting

only a subset of the discriminative terms

– Particularly important for Naïve Bayes (see previous slides)

– Multinomial classifier is less sensitive

•

Greedy search

– Start from empty set or full set and add/delete one at a time

– Heuristics for adding/deleting

• Information gain (mutual information of term with class)

• Chi-square

• Other ideas

Methods tend not to be particularly sensitive to the specific heuristic used for

feature selection, but some form of feature selection often improves

performance

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Sec.13.5.1

Feature selection via Mutual Information

• In training set, choose k words which best discriminate (give most

information about) the categories.

• The Mutual Information between a word, class is:

I ( w, c)

ew {0,1} ec {0,1}

p(ew , ec ) log

p(ew | ec )

p(ew )

For each word w and each category c

p(ew | ec )

Data Mining Lectures

the probability of w being in a document if

the document belongs to class c

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Sec.13.5.1

Example of Feature Selection

• For each category we build a list of k most discriminating terms

– Use the union of these terms in our classifier

– K selected heuristically, or via cross-validation

• For example (on 20 Newsgroups), using mutual information

– sci.electronics: circuit, voltage, amp, ground, copy, battery,

electronics, cooling, …

– rec.autos: car, cars, engine, ford, dealer, mustang, oil, collision,

autos, tires, toyota, …

• Greedy: does not account for correlations between terms

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Example of Feature Selection

(from Chakrabarti, Chapter 5)

9600 documents from US Patent database

20,000 raw features (terms)

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Comments on Generative Models for Text

(Comments applicable to both Naïve Bayes and Multinomial classifiers)

•

Simple and fast => popular in practice

– e.g., linear in p, n, M for both training and prediction

• Training = “smoothed” frequency counts, e.g.,

p(ck | xj 1)

nk , j k

nk m

– e.g., easy to use in situations where classifier needs to be updated regularly

(e.g., for spam email)

•

Numerical issues

– Typically work with log p( ck | x ), etc., to avoid numerical underflow

– Useful trick for naïve Bayes classifier:

• when computing S log p( xj | ck ) , for sparse data, it may be much faster to

– precompute S log p( xj = 0| ck )

– and then subtract off the log p( xj = 1| ck ) terms

•

Note: both models are “wrong”: but for classification are often sufficient

Beyond Independence

•

Naïve Bayes and multinomial both assume conditional independence of

words given class

•

Alternative approaches try to account for higher-order dependencies

– Bayesian networks:

•

•

•

•

•

p(x | c) = Px p(x | parents(x), c)

Equivalent to directed graph where edges represent direct dependencies

Various algorithms that search for a good network structure

Useful for improving quality of distribution model

….however, this does not always translate into better classification

– Maximum entropy models

•

•

•

•

•

•

Data Mining Lectures

p(x | c) = 1/Z Psubsets f( subsets(x) | c)

Equivalent to undirected graph model

Estimation is equivalent to maximum entropy assumption

Feature selection is crucial (which f terms to include) –

can provide high accuracy classification

…. however, tends to be computationally complex to fit (estimating Z is difficult)

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Dumais et al. 1998: Reuters - Accuracy

earn

acq

money-fx

grain

crude

trade

interest

ship

wheat

corn

Avg Top 10

Avg All Cat

Data Mining Lectures

NBayes

Trees

LinearSVM

95.9%

97.8%

98.2%

87.8%

89.7%

92.8%

56.6%

66.2%

74.0%

78.8%

85.0%

92.4%

79.5%

85.0%

88.3%

63.9%

72.5%

73.5%

64.9%

67.1%

76.3%

85.4%

74.2%

78.0%

69.7%

92.5%

89.7%

65.3%

91.8%

91.1%

81.5%

75.2% na

88.4%

Notes on Text Classification

91.4%

86.4%

© Padhraic Smyth, UC Irvine

Sec. 15.2.4

Precision-recall for category: Ship

1

0.9

0.8

Precision

0.7

0.6

0.5

LSVM

Decision Tree

Naïve Bayes

Rocchio

0.4

0.3

0.2

0.1

0

0

0.2

0.4

0.6

Recall

Data Mining Lectures

Notes on Text Classification

0.8

1

Dumais

(1998)

© Padhraic Smyth, UC Irvine

Sec. 15.2.4

Micro- vs. Macro-Averaging

•

If we have more than one class, how do we combine multiple performance

measures into one quantity?

•

Macroaveraging: Compute performance for each class, then average.

•

Microaveraging: Collect decisions for all classes, compute contingency table,

evaluate.

Note that macroaveraging ignores how likely each class is, gives performance

on each class equal weight.

Microaveraging weights according to how likely the classes are.

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Sec. 15.2.4

Micro- vs. Macro-Averaging: Example

Class 1

Truth:

yes

Class 2

Truth:

no

Micro Ave. Table

Truth:

yes

Truth:

no

Truth:

yes

Truth:

no

Classifi 10

er: yes

10

Classifi

er: yes

90

10

Classifier:

yes

100

20

Classifi 10

er: no

970

Classifi

er: no

10

890

Classifier:

no

20

1860

Macroaveraged precision: (0.5 + 0.9)/2 = 0.7

Microaveraged precision: 100/120 = .83

Microaveraged score is dominated by score on common classes

Sec. 15.2.4

Results on Reuters Data

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Sec. 15.2.4

SVM vs. Other Methods

(Yang and Liu, 1999)

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Comparison of accuracy across three classifiers: Naive Bayes, Maximum Entropy and Linear

SVM, using three data sets: 20 newsgroups, the Recreation sub-tree of the Open Directory,

and University Web pages from WebKB. From Chakrabarti, 2003, Chapter 5.

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Sec. 15.3.2

Upweighting

• You can get a lot of value by differentially weighting contributions

from different document zones

– e.g., you count as two instance of a word when you see it in, say, the

abstract

– Upweighting title words helps (Cohen & Singer 1996)

• Doubling the weighting on the title words is a good rule of thumb

– Upweighting the first sentence of each paragraph helps (Murata, 1999)

– Upweighting sentences that contain title words helps (Ko et al, 2002)

Are there principled approaches to learn where and how to upweight for a

specific text classification task and corpus?

Data Mining Lectures

Notes on Text Classification

52

© Padhraic Smyth, UC Irvine

Sec. 15.3.1

Accuracy as a function of data size

• With enough data the choice

of classifier may not matter

much, and the best choice may

be unclear

Scaling to very very large corpora for natural language

disambiguation

Banko and Brill, Annual Meeting of the ACL, 2001

– Data: Banko and Brill on

“confusion-set” disambiguation,

e.g., principle v. principal

• But the fact that you have to

keep doubling your data to

improve performance is a little

unpleasant

53

Learning with Labeled and Unlabeled documents

• In practice, obtaining labels for documents is time-consuming,

expensive, and error prone

– Typical application: small number of labeled docs and a very large

number of unlabeled docs

• Idea:

– Build a probabilistic model on labeled docs

– Classify the unlabeled docs, get p(class | doc) for each class and doc

• This is equivalent to the E-step in the EM algorithm

– Now relearn the probabilistic model using the new “soft labels”

• This is equivalent to the M-step in the EM algorithm

– Continue to iterate until convergence (e.g., class probabilities do not

change significantly)

– This EM approach to classification shows that unlabeled data can help

in classification performance, compared to labeled data alone

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Learning with Labeled and Unlabeled Data

Graph from “Semi-supervised text classification using EM”, Nigam, McCallum, and Mitchell, 2006

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

MultiLabel Text Classification

•

Each document can have 1, 2, 3, … class labels

– E.g., news-articles annotated with “tags”

– Wikipedia categories

– MESH headings for biomedical articles

•

Common approach

– K labels

– Train K different “one versus all” classifiers, e.g., K binary SVMs

– Limitations

• Does not account for label dependencies (e.g. positive and negative correlations in

label patterns)

• Can have trouble with (a) many labels per document, and (b) few documents per label

– Alternatives

• Train K(K-1)/2 binary classifiers – not good computationally

• Capture labels correlations in lower dimensional space

– “compressed sensing” – Hsu, Kakade, Langford, NIPS 2009

– topic models – new research at UCI, will discuss later in quarter

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Other aspects of text classification

•

Real-time constraints:

•

Document length

•

Linked documents

– Being able to update classifiers as new data arrives

– Being able to make predictions very quickly in real-time

– Varying document length can be a problem for some classifiers

– Multinomial tends to be less sensitive than Bernoulli for example

– Can view Web documents as nodes in a directed graph

– Classification can now be performed that leverages the link structure,

• Heuristic = class labels of linked pages are more likely to be the same

– Optimal solution is to classify all documents jointly rather than individually

– Resulting “global classification” problem is typically computationally complex

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Summary of Text Classifiers

•

Naïve Bayes models (Bernoulli or Multinomial)

– Low time complexity (single linear pass through the data)

– Generally good, but not always best

– Widely used for spam email filtering

•

Linear SVMs

– Often produce best results in research studies

– But computationally complex to train

– not so widely used in practice as naïve Bayes

•

Logistic regression

– Can be as accurate as SVMs, computationally better

– A variant of this is sometimes referred to as “maximum entropy”

– Adaptations for sparse text data

• Feature selection or regularization (e.g., L1/Lasso) is essential

• Stochastic gradient descent can be very useful (see earlier lecture)

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Ch. 13

Alternatives: Manual Classification

• Manual classification

–

–

–

–

–

Used by the original Yahoo! Directory

Looksmart, about.com, ODP, PubMed

Very accurate when job is done by experts

Consistent when the problem size and team is small

Difficult and expensive to scale

– Can be noisy when done by different people

• E.g., spam/non-spam categorization by users

Note that this is how our training data is created for classification

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Ch. 13

Rule-Based Systems

Hand-coded rule-based systems

• One technique used by spam filters, Reuters, CIA, etc.

– Widely used in government and industry

• Companies provide “IDE” for writing such rules

• E.g., assign category if document contains a given Boolean

combination of words

• Standing queries: Commercial systems have complex query

languages (everything in IR query languages +score accumulators)

• Accuracy is often very high if a rule has been carefully refined over

time by a subject expert

• Building and maintaining these rules is expensive

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Further Reading on Text Classification

• Web-related text mining in general

– S. Chakrabarti, Mining the Web: Discovering Knowledge from Hypertext

Data, Morgan Kaufmann, 2003.

– See chapter 5 for discussion of text classification

• General references on text and language modeling

– Foundations of Statistical Language Processing, C. Manning and H.

Schutze, MIT Press, 1999.

– Speech and Language Processing: An Introduction to Natural Language

Processing, Dan Jurafsky and James Martin, Prentice Hall, 2000.

• SVMs for text classification

– T. Joachims, Learning to Classify Text using Support Vector Machines:

Methods, Theory and Algorithms, Kluwer, 2002

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine

Ch. 15

Additional Papers and Sources

•

•

•

•

•

•

•

•

•

Christopher J. C. Burges. 1998. A Tutorial on Support Vector Machines for Pattern

Recognition

S. T. Dumais. 1998. Using SVMs for text categorization, IEEE Intelligent Systems, 13(4)

S. T. Dumais, J. Platt, D. Heckerman and M. Sahami. 1998. Inductive learning algorithms and

representations for text categorization. CIKM ’98, pp. 148-155.

Yiming Yang, Xin Liu. 1999. A re-examination of text categorization methods. 22nd Annual

International SIGIR

Tong Zhang, Frank J. Oles. 2001. Text Categorization Based on Regularized Linear

Classification Methods. Information Retrieval 4(1): 5-31

Trevor Hastie, Robert Tibshirani and Jerome Friedman. Elements of Statistical Learning: Data

Mining, Inference and Prediction. Springer-Verlag, New York.

T. Joachims, Learning to Classify Text using Support Vector Machines. Kluwer, 2002.

Fan Li, Yiming Yang. 2003. A Loss Function Analysis for Classification Methods in Text

Categorization. ICML 2003: 472-479.

Tie-Yan Liu, Yiming Yang, Hao Wan, et al. 2005. Support Vector Machines Classification with

Very Large Scale Taxonomy, SIGKDD Explorations, 7(1): 36-43.

Data Mining Lectures

Notes on Text Classification

© Padhraic Smyth, UC Irvine