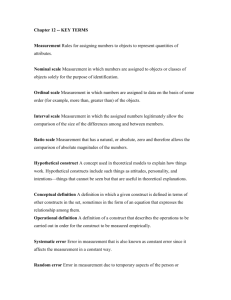

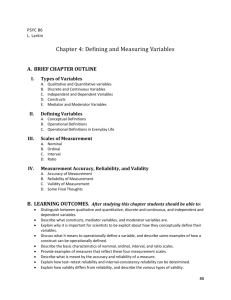

Ch3 Defining and Measuring Variables

Defining and Measuring Variables
Chapter 3

Dusana Rybarova
Psyc 290B
May 17 2006

Outline:
1. An overview of measurement
2. Constructs and operational definitions
3. Validity and reliability of measurement
4. Scales of measurement
5. Modalities of measurement
6. Other aspects of measurement

1. An overview of measurement
• Two aspects of measurement are particularly important in planning a research study or reading a research report:
  – often there is no one-to-one relationship between the variable measured and the measurement obtained (e.g. knowledge, performance, and exam grade)
  – there are usually several different options for measuring any particular variable (e.g. types of exams and questions on exams)
• Direct measurement (height, weight) vs. indirect measurement (motivation, knowledge, memory, marital satisfaction)

2. Constructs and operational definitions
• Theories summarize our observations, explain the mechanisms underlying a particular behavior, and make predictions about the behavior
• Many research variables, particularly variables of interest to behavioral scientists, are hypothetical
• Hypothetical attributes or mechanisms that explain and predict some behavior in a theory are called constructs

[Diagram: external stimulus factor → construct → behavior; example: reward → motivation → performance]

• Constructs cannot be directly observed or measured
• However, researchers can measure external, observable events as an indirect method of measuring the construct itself
• Operational definition
  – a procedure for measuring and defining a construct; an indirect method of measuring something that cannot be measured directly
  – an operational definition specifies a measurement procedure for measuring an external, observable behavior and uses the resulting measurements as a definition and a measurement of the hypothetical construct
  – e.g.
an IQ test is an operational definition for the construct intelligence
  – Exercise: provide an example of a theoretical construct and its operational definition
  – You don’t always have to come up with your own operational definition of a construct; you can use a conventional measurement procedure from previous studies

3. Validity and reliability of measurement
• How do you decide which method of measurement (operational definition of a construct) is the best?
• There are two general criteria for evaluating the quality of any measurement procedure:
  – validity
  – reliability

Validity of measurement
• Validity of measurement
  – concerns the “truth” of the measurement
  – it is the degree to which the measurement process measures the variable it claims to measure
  – Is the IQ score truly measuring intelligence? What about the size of the brain or bumps on the skull?

Different kinds of validity
  – face validity
    • the simplest and least scientific definition of validity
    • it is demonstrated when a measure superficially appears to measure what it claims to measure
    • based on subjective judgment and difficult to quantify
    • e.g. intelligence and reasoning questions on an IQ test
    • problem: when the purpose of a measure is obvious, participants can adjust their answers accordingly
  – concurrent validity (criterion validity)
    • is demonstrated when scores obtained from a new measure are directly related to scores obtained from a more established measure of the same variable
    • e.g. a new IQ test correlates with an older IQ test

Different kinds of validity (cont.)
  – predictive validity
    • is demonstrated when scores obtained from a measure accurately predict behavior according to a theory
    • e.g.
high scores on a need-for-achievement test predict competitive behavior in children (ring toss game)
  – construct validity
    • is demonstrated when scores obtained from a measure are directly related to the variable itself
    • reflects how closely the measure relates to the construct (height and weight example)
    • in one sense, construct validity is achieved by repeatedly demonstrating every other type of validity

Different kinds of validity (cont.)
  – convergent validity
    • is demonstrated by a strong relationship between the scores obtained from two different methods of measuring the same construct
    • e.g. an experimenter’s observations of aggressive behavior in children correlate with teachers’ ratings of the same children’s behavior
  – divergent validity
    • is demonstrated by using two different methods to measure two different constructs
    • convergent validity must be shown for each of the two constructs, and there must be little or no relationship between the scores obtained for the two different constructs when they are measured by the same method
    • e.g.
aggressive behavior and general activity level in children

Convergent validity, divergent validity and construct validity
• By demonstrating strong convergent validity for two different constructs and then showing divergent validity between the two constructs, you obtain strong construct validity for both constructs

[Diagram: two constructs, aggressive behavior and active behavior, each measured by the experimenter’s observation and by the teacher’s ratings. Within each construct, the two methods yield related scores (high convergent validity). Between the two constructs, scores from the same method are unrelated (high divergent validity).]

Reliability of measurement
• Reliability of measurement
  – a measurement procedure is said to be reliable if repeated measurements of the same individual under the same conditions produce identical (or nearly identical) values
  – reliability is the stability or consistency of measurement

  measured score = true score + error
  IQ score = true IQ score + error (mood, fatigue, etc.)

Reliability and error of measurement
• Inconsistency (lack of reliability) of measurement comes from error
• The higher the error, the more unreliable the measurement

Sources of error
• observer error – the individual who makes the measurements can introduce simple human error into the measurement process
• environmental changes – small changes in the environment from one measurement to another (e.g.
time of day, distractions in the room, lighting)
• participant changes – participants change between measurements (mood, hunger, motivation)

Types and measures of reliability
• successive measurements
  – obtaining scores from two successive measurements and calculating a correlation between them
  – the same group, the same measurement at two different times
  – test-retest reliability
• simultaneous measurements
  – obtained by direct observation of behaviors (two or more separate observers at the same time); consistency across raters
  – inter-rater reliability
• internal consistency
  – the degree of consistency of scores from separate items on a test or questionnaire consisting of multiple items
  – you want all the items or groups of items to tap the same processes
  – researchers commonly split the set of items in half, compute a separate score for each half, and then evaluate the degree of agreement between the two scores
  – split-half reliability

The relationship between reliability and validity
  – they are partially related and partially independent
  – reliability is a prerequisite for validity: a measurement procedure cannot be valid unless it is reliable (e.g. if we are truly measuring intelligence, repeated IQ measurements of the same person should not vary widely)
  – a measurement does not have to be valid to be reliable (e.g. height as a measure of intelligence)

4. Scales of measurement
• Scales define the types of categories we use in measurement, and the selection of a scale has a direct impact on our ability to describe relationships between variables
• the nominal scale
  – simply represents qualitative differences in the variable measured
  – can only tell us that a difference exists, without telling us the direction or magnitude of the difference
  – e.g.
majors in college, race, gender, occupation
• the ordinal scale
  – the categories that make up an ordinal scale form an ordered sequence
  – can tell us the direction of a difference but not its magnitude
  – e.g. coffee cup sizes, socioeconomic class, T-shirt sizes, food preferences

Scales of measurement (cont.)
• the interval scale
  – categories on an interval scale are organized sequentially, and all categories are the same size
  – we can determine the direction and the magnitude of a difference
  – may have an arbitrary zero (a convenient point of reference)
  – e.g. temperature in Fahrenheit, time in seconds
• the ratio scale
  – consists of equal, ordered categories anchored by a zero point that is not arbitrary but meaningful (representing the absence of the variable)
  – allows us to determine the direction, the magnitude, and the ratio of a difference
  – e.g. reaction time, number of errors on a test

5. Modalities of measurement
• One can measure a construct by selecting a measure from three basic modalities of measurement:
  – self-report
  – physiological measurement
  – behavioral measurement
    • behavioral observation
    • content analysis and archival research

Self-report measures
  – you ask participants to describe their behavior, express their opinion, or characterize their experience in an interview or on a questionnaire with ratings
  – Positive aspects
    • only the individual has direct access to information about his or her state of mind
    • a more direct measure
  – Negative aspects
    • participants may distort their responses to create a better self-image or to please the experimenter
    • responses can also be influenced by the wording of the questions and other aspects of the situation

Physiological measures
  – physiological manifestations of the underlying construct
  – e.g.
EEG, EKG, galvanic skin response, perspiration, PET, fMRI
  – advantages
    • provide accurate, reliable, and well-defined measurements that do not depend on subjective interpretation
  – disadvantages
    • equipment is usually expensive or unavailable
    • the presence of monitoring devices may create an unnatural situation
    • question: are these procedures a valid measure of the construct? (e.g. does an increase in heart rate reflect fear or general arousal?)

Behavioral measures
  – behaviors that can be observed and measured (e.g. reaction time, reading speed, focus of attention, disruptive behavior, number of words recalled on a memory test)
  – How to select the right behavioral measure?
    • depends on the purpose of the study
      – in a clinical setting, the same disorder can reveal itself through different symptoms
      – in studying memory, we want the same measure for all subjects so that we can compare them
  – Beware of situational changes in behavior (e.g. disruptive behavior in school vs. when observed) and of different behavioral indicators of a construct

6. Other aspects of measurement
• multiple measures
  – sometimes you can use two (or more) different procedures to measure the same variable (e.g. heart rate and a questionnaire as measures of fear)
  – problem: the two measures may not behave in the same way
    • e.g. a specific therapy for treating fear may have a large effect on behavior but no effect on heart rate
  – the lack of agreement between two measures is called desynchrony
    • one measure can be more sensitive than the other
    • different measures may indicate different dimensions of the variable and change at different times during treatment

Sensitivity and range effects
  – are the measures sensitive enough to respond to the type and magnitude of the changes that are expected? (e.g. seconds vs. milliseconds, difficult or easy exams)
  – range effects
    • a ceiling effect (the clustering of scores at the high end of a measurement scale, allowing little or no possibility of increases in value, e.g.
a test that is too easy)
    • a floor effect (the clustering of scores at the low end of a measurement scale, allowing little or no possibility of decreases in value, e.g. a test that is too difficult)
    • range effects are usually a consequence of using a measure that is inappropriate for a particular group (e.g. a fourth-grade test for college students)

Participant reactivity and experimenter bias
  – participant reactivity is the way a participant reacts to the experimental situation (e.g. overly cooperative, overly defensive, or hostile)
    • to avoid these problems, one can try to disguise the true purpose of the experiment or observe individuals without their awareness (beware of ethical issues)
  – experimenter bias is the way the experimenter influences the results (e.g. by being warm and friendly with one group of participants vs. cold and stern with another group)
  – to avoid participant reactivity and experimenter bias we use:
    • standardized procedures (e.g. instructions recorded on tape)
    • ‘blind’ experiments
      – a research study is single blind if the researcher does not know the predicted outcome
      – a research study is double blind if both the researcher and the participants are unaware of the predicted outcome
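
Worked example: computing reliability

The test-retest and split-half procedures from section 3 both come down to correlating two sets of scores. The sketch below is one possible illustration, not a procedure given in the chapter. It assumes Pearson's r as the measure of agreement and an odd/even split of the items; the slides specify neither. The function names (`pearson_r`, `retest_reliability`, `split_half_reliability`) and all scores are made up for the example.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products and sums of squared deviations from the means.
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when all scores are identical")
    return cov / sqrt(var_x * var_y)


def retest_reliability(first, second):
    """Test-retest: same group, same measurement, two different times."""
    return pearson_r(first, second)


def split_half_reliability(item_scores):
    """Split-half: item_scores holds one list of item scores per participant.

    Assumption: items are split into items 1, 3, 5, ... (indices 0, 2, 4, ...)
    and items 2, 4, 6, ... (indices 1, 3, 5, ...). Other splits are possible.
    """
    first_half = [sum(items[0::2]) for items in item_scores]
    second_half = [sum(items[1::2]) for items in item_scores]
    return pearson_r(first_half, second_half)


# Made-up IQ scores for five people measured on two occasions.
time1 = [100, 110, 95, 120, 105]
time2 = [102, 108, 97, 118, 107]
print("test-retest r:", round(retest_reliability(time1, time2), 3))

# Made-up answers (1 = correct, 0 = wrong) of five people on a six-item test.
answers = [
    [1, 1, 1, 1, 1, 1],
    [1, 1, 1, 0, 1, 1],
    [1, 0, 1, 1, 0, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 0, 0, 0, 0],
]
print("split-half r:", round(split_half_reliability(answers), 3))
```

A correlation near 1 indicates consistent (reliable) measurement, while a value near 0 indicates that error dominates the scores. Inter-rater reliability can be estimated the same way by passing the scores of two observers to `pearson_r`.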