SILOs - Distributed Web Archiving & Analysis using Map Reduce

Anushree Venkatesh

Sagar Mehta

Sushma Rao

Motivation

What is Map-Reduce?

Why Map-Reduce?

The HADOOP Framework

Map Reduce in SILOs

SILOs Architecture

Modules

Experiments

Life span of a web page – 44 to 75 days

Limitations of centralized/distributed crawling

Exploring Map-Reduce

Analysis of a subset of the web

Web graph

Search response quality

Tweaked page rank

Inverted Index

Divide and conquer

Functional programming counterparts -> distributed data processing

Plumbing behind the scenes -> Focus on the problem

Map – Division of key space

Reduce – Combine results

Pipelining functionality
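
The map, reduce, and pipelining ideas listed here can be illustrated with a small in-memory sketch. This is not Hadoop code; `run_map_reduce` and the mapper and reducer names are hypothetical helpers, and the grouping step stands in for the shuffle that a real framework performs across nodes.

```python
from collections import defaultdict


def run_map_reduce(records, mapper, reducer):
    """Run one map/reduce stage over an iterable of (key, value) records."""
    grouped = defaultdict(list)
    # Map: each record may emit any number of intermediate (key, value) pairs.
    for key, value in records:
        for out_key, out_value in mapper(key, value):
            grouped[out_key].append(out_value)
    # Reduce: combine all values that share an intermediate key.
    return [reducer(key, values) for key, values in sorted(grouped.items())]


def count_mapper(_, line):
    for word in line.split():
        yield (word, 1)


def sum_reducer(word, counts):
    return (word, sum(counts))


def swap_mapper(word, count):
    # Second stage: re-key by count so words with equal counts are grouped.
    yield (count, word)


def collect_reducer(count, words):
    return (count, sorted(words))


lines = [(0, "a b a"), (1, "b a")]
stage_one = run_map_reduce(lines, count_mapper, sum_reducer)
# Pipelining: the output of one stage is the input of the next.
stage_two = run_map_reduce(stage_one, swap_mapper, collect_reducer)
```

The mapper only decides how the key space is divided and the reducer only combines values per key, so the same driver can be reused for every stage.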

Open source implementation of Map-Reduce in Java

HDFS – Hadoop specific file system

Takes care of

fault tolerance

dependencies between nodes

Setup through VM instance - Problems

Currently Single Node cluster

HDFS Setup

Incorporation of Berkeley DB

[Figure: SILOs architecture diagram]

Modules: Seed List, Distributed Crawler, URL Extractor, Graph Builder, Back Links Mapper, Key Word Extractor, Diff Compression

Map (M) / Reduce (R) labels: <URL, value> -> <URL, page content>; Parse for URL -> <URL, Parent> (remove duplicates); <URL, parent URL>; <Parent, URL> -> <URL, 1>; Parse for key word -> <KeyWord, URL>

Tables: URL Table, Page Content Table, Adjacency List Table, Back Links Table, Inverted Index Table
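
The Back Links Mapper stage in the architecture carries the labels <Parent, URL> on the map side and <URL, 1> on the reduce side. One possible reading, sketched below, is that the map step turns each parent-to-child edge into a <URL, 1> pair and the reduce step sums these into an in-link count per URL. That reading and all function names are assumptions, not something the slides confirm.

```python
from collections import defaultdict


def back_links_map(parent, url):
    # Assumed behaviour: one unit of "in-link credit" per edge parent -> url.
    yield (url, 1)


def back_links_reduce(url, ones):
    return (url, sum(ones))


def count_back_links(edges):
    """edges: iterable of (parent, url) pairs, e.g. rows of an adjacency list."""
    grouped = defaultdict(list)
    for parent, url in edges:
        for key, value in back_links_map(parent, url):
            grouped[key].append(value)
    return dict(back_links_reduce(url, ones) for url, ones in grouped.items())


edges = [("a", "b"), ("c", "b"), ("a", "c")]
back_links = count_back_links(edges)
```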

Map

Input: <url, 1>

if (!duplicate(url)) {
    page_content = http_get(url);
    insert into url_table <hash(url), url, hash(page_content), time_stamp>;
    output intermediate pair <url, page_content>;
}
else if (duplicate(url) && (current_time - time_stamp(url) > threshold)) {
    page_content = http_get(url);
    update url_table(hash(url), current_time);
    output intermediate pair <url, page_content>;
}
else {
    update url_table(hash(url), current_time);
}
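
A minimal Python sketch of the map-side decision logic above. It assumes an in-memory dict in place of the URL table and an injected `fetch` callable in place of `http_get`. The names `crawl_map` and `sha1`, the record layout, and the default threshold are all hypothetical.

```python
import hashlib
import time


def sha1(text):
    return hashlib.sha1(text.encode("utf-8")).hexdigest()


def crawl_map(url, url_table, fetch, now=None, threshold=86400.0):
    """Return the intermediate (url, page_content) pairs for one input URL."""
    now = time.time() if now is None else now
    key = sha1(url)
    entry = url_table.get(key)
    if entry is None:
        # First time this URL is seen: fetch it and record it.
        content = fetch(url)
        url_table[key] = {
            "url": url,
            "content_hash": sha1(content),
            "time_stamp": now,
        }
        return [(url, content)]
    if now - entry["time_stamp"] > threshold:
        # Known URL whose stored time stamp is older than the threshold.
        content = fetch(url)
        entry["time_stamp"] = now
        return [(url, content)]
    # Known and fresh: only the time stamp is updated, as in the pseudocode.
    entry["time_stamp"] = now
    return []
```

Passing `fetch` and `now` as parameters keeps the sketch testable without network access or real clocks.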

Reduce

Input: <url, page_content>

if (!exists hash(url) in page_content_table) {
    insert into page_content_table <hash(page_content), compress(page_content)>;
}
else if (hash(page_content_table(hash(url))) != hash(current_page_content)) {
    insert into page_content_table <hash(page_content), compress(diff_with_latest(page_content))>;
}
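
The reduce step can be sketched in the same style. The sketch assumes a dict keyed by the URL hash in place of the page content table, `zlib` for compression, and a unified diff from `difflib` as the diff format. None of these choices is stated in the slides, and keeping the latest full text uncompressed is a simplification for illustration only.

```python
import difflib
import hashlib
import zlib


def sha1(text):
    return hashlib.sha1(text.encode("utf-8")).hexdigest()


def crawl_reduce(url, page_content, page_content_table):
    """Store the first version in full and later versions as compressed diffs."""
    key = sha1(url)
    record = page_content_table.get(key)
    if record is None:
        page_content_table[key] = {
            "latest": page_content,
            "versions": [zlib.compress(page_content.encode("utf-8"))],
        }
        return "stored_full"
    if sha1(record["latest"]) != sha1(page_content):
        diff = "\n".join(
            difflib.unified_diff(
                record["latest"].splitlines(),
                page_content.splitlines(),
                lineterm="",
            )
        )
        record["versions"].append(zlib.compress(diff.encode("utf-8")))
        record["latest"] = page_content
        return "stored_diff"
    return "unchanged"
```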

Currently outside of Map-Reduce

Manual transfer of files to HDFS

Currently Depth First Search, will be modified for Breadth First Search
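
The difference between the two traversal orders comes down to how the frontier of unvisited URLs is consumed. Below is a minimal sketch over an in-memory link graph. `traverse` and the toy graph are hypothetical, and the stack-based branch is only a depth-first style ordering, not necessarily the authors' implementation.

```python
from collections import deque


def traverse(graph, seed, breadth_first=True):
    """Visit every URL reachable from seed; graph maps a URL to its out-links."""
    frontier = deque([seed])
    seen = {seed}
    order = []
    while frontier:
        # Queue (popleft) gives breadth-first order, stack (pop) depth-first style.
        url = frontier.popleft() if breadth_first else frontier.pop()
        order.append(url)
        for child in graph.get(url, []):
            if child not in seen:
                seen.add(child)
                frontier.append(child)
    return order


graph = {"a": ["b", "c"], "b": ["d"], "c": [], "d": []}
bfs_order = traverse(graph, "a", breadth_first=True)
dfs_order = traverse(graph, "a", breadth_first=False)
```

Breadth-first order visits all pages at one link distance from the seed before going deeper, which tends to keep a bounded crawl close to the seed sites.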

Map

Input: <url, page_content>

List<keywords> = parse(page_content);
for each keyword, output intermediate pair <keyword, url>

Reduce

Combine all <keyword, url> pairs with the same keyword to emit <keyword, List<urls>>
Insert into inverted index table <keyword, List<urls>>
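
The keyword extractor can be sketched as follows. The tokenizer (lowercased alphanumeric runs) is an assumption, since the slides do not say how `parse` selects keywords, and the function names are hypothetical.

```python
import re
from collections import defaultdict


def keyword_map(url, page_content):
    # Emit each distinct keyword of the page once, paired with the page URL.
    for keyword in set(re.findall(r"[a-z0-9]+", page_content.lower())):
        yield (keyword, url)


def keyword_reduce(keyword, urls):
    return (keyword, sorted(set(urls)))


def build_inverted_index(pages):
    """pages: dict mapping url -> page_content."""
    grouped = defaultdict(list)
    for url, content in pages.items():
        for keyword, page_url in keyword_map(url, content):
            grouped[keyword].append(page_url)
    return dict(keyword_reduce(k, v) for k, v in grouped.items())


pages = {"u1": "Alumni news", "u2": "alumni center"}
index = build_inverted_index(pages)
```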

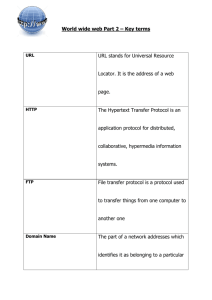

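Top Words Along with their Frequency

| CMU word | Frequency | Cornell word | Frequency | Gatech word | Frequency |
| --- | --- | --- | --- | --- | --- |
| Carnegie | 2456 | Cornell | 742 | Tech | 2704 |
| Mellon | 2107 | University | 378 | Georgia | 1882 |
| University | 1157 | College | 158 | Alumni | 1115 |
| Alumni | 786 | Admissions | 128 | Services | 885 |
| Center | 466 | Research | 99 | Association | 646 |
| News | 395 | Student | 94 | Career | 493 |
| Library | 393 | School | 89 | Baseball | 416 |
| PA | 373 | Information | 77 | Engineering | 408 |
| Research | 357 | York | 74 | Tennis | 222 |
| Pittsburgh, | 352 | Alumni | 71 | Information | 219 |
| Information | 313 | Academics | 62 | students | 198 |
| School | 309 | Ithaca | 59 | Institute | 173 |
| | | | | Atlanta | 164 |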

Top 6 URL domains that get traversed

| CMU domain | Count | Cornell domain | Count | Gatech domain | Count |
| --- | --- | --- | --- | --- | --- |
| alumni.cmu.edu | 92 | www.cornell.edu | 43 | centennial.gtalumni.org | 4 |
| hr.web.cmu.edu | 13 | www.cuinfo.cornell.edu | 2 | cyberbuzz.gatech.edu | 7 |
| www.alumniconnections.com | 16 | www.gradschool.cornell.edu | 2 | georgiatech.searchease.com | 9 |
| www.carnegiemellontoday.com | 10 | www.news.cornell.edu | 7 | gtalumni.org | 236 |
| www.cmu.edu | 170 | www.sce.cornell.edu | 8 | ramblinwreck.cstv.com | 56 |
| www.library.cmu.edu | 69 | www.vet.cornell.edu | 1 | www.gatech.edu | 14 |
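
Per-domain counts like these can be derived from the list of crawled URLs by grouping on host name. The sketch below shows one way to do so. `top_domains` is a hypothetical helper, and the sample URLs are invented for illustration rather than taken from the experiment.

```python
from collections import Counter
from urllib.parse import urlparse


def top_domains(urls, n=6):
    """Return the n most frequent host names among the given URLs."""
    hosts = (urlparse(url).netloc.lower() for url in urls)
    return Counter(host for host in hosts if host).most_common(n)


# Invented sample input, only to show the call shape.
sample = [
    "http://www.cmu.edu/a",
    "http://www.cmu.edu/b",
    "http://alumni.cmu.edu/",
]
counts = top_domains(sample)
```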

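Avg URL Depth

| CMU domain | Avg depth | Cornell domain | Avg depth | Gatech domain | Avg depth |
| --- | --- | --- | --- | --- | --- |
| cmu.edu | 2.73 | cornell.edu | 1.34 | gatech.edu | 1 |
| alumni.cmu.edu | 2.18 | www.gradschool.cornell.edu | 1 | gtalumni.org | 3 |
| www.library.cmu.edu | 2.23 | www.news.cornell.edu | 2.57 | ramblinwreck.cstv.com | 2.57 |
| www.alumniconnections.com | 4.81 | www.sce.cornell.edu | 1 | cyberbuzz.gatech.edu | 2 |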

Questions, Comments, Criticisms

HTML Parser

Hadoop Framework (Apache)

Peer Crawl