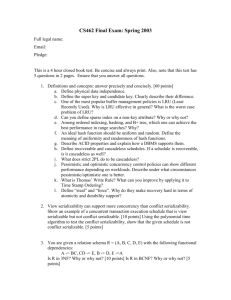

file4 - Department of Computer Science

advertisement

Instructor: Dr T.Y. LIN

One Pass Algorithm

Presented By: Pradhyuman raol

ID : 114

Agenda

One Pass algorithm

Tuple-at-a-Time

Unary Operators

Binary Operations

One-Pass Algorithms

One Pass Algorithm:

• Some methods involve reading the data only once

from disk.

• They work only when at least one of the

arguments of the operation fits in main memory.

Tuple-at-a-Time

We read the blocks of R one at a

time into an input buffer, perform

the operation on the tuple, and

more the selected tuples or the

projected tuples to the output

buffer.

Examples: Selection & Projection

Tuple at a time Diagram

R

Input buffer

Unary

operator

Output buffer

Unary Operators

• The unary operations that apply to relations

as a whole, rather than to one tuple at a time.

• Duplicate Elimination d(R) :Check whether

that tuple is already there or not.

M= Main memory

B(d(R))= Size of Relation R

Assumption: B(d(R)) <= M

Unary Operators

• Grouping : A grouping operation gives us zero

or more grouping attributes and presumably

one or more accumulated value or values for

each aggregation.

• Min or Max

• Count

• Sum

• Average

Binary Operations

Set Union

Set Intersection

Set Difference

Bag Intersection

Bag Difference

Product

Natural join

Nested Loops Joins

Book Section of chapter 15.3

Submitted to : Prof. Dr. T.Y. LIN

Submitted by: Saurabh Vishal

Topic to be covered

•

•

•

•

Tuple-Based Nested-Loop Join

An Iterator for Tuple-Based Nested-Loop Join

A Block-Based Nested-Loop Join Algorithm

Analysis of Nested-Loop Join

15.3.1

Tuple-Based Nested-Loop Join

• The simplest variation of nested-loop join has loops

that range over individual tuples of the relations

involved. In this algorithm, which we call tuple-based

nested-loop join, we compute the join as follows

R

S

Continued

• For each tuple s in S DO

For each tuple r in R Do

if r and s join to make a tuple t THEN

output t;

– If we are careless about how the buffer the blocks of

relations R and S, then this algorithm could require as

many as T(R)T(S) disk .there are many situations where this

algorithm can be modified to have much lower cost.

Continued

• One case is when we can use an index on the join

attribute or attributes of R to find the tuples of R that

match a given tuple of S, without having to read the

entire relation R.

• The second improvement looks much more carefully

at the way tuples of R and S are divided among

blocks, and uses as much of the memory as it can to

reduce the number of disk I/O's as we go through

the inner loop. We shall consider this block-based

version of nested-loop join.

15.3.2

An Iterator for Tuple-Based Nested-Loop Join

• Open() {

– R.Open();

– S.open();

– A:=S.getnext();

}

GetNext() {

Repeat {

r:= R.Getnext();

IF(r= Not found) {/* R is exhausted for the

current s*/

R.close();

s:=S.Getnext();

IF( s= Not found) RETURN Not Found;

/* both R & S are exhausted*/

R.Close();

r:= R.Getnext();

}

}

until ( r and s join)

RETURN the join of r and s;

}

Close() {

R.close ();

S.close ();

}

15.3.3

A Block-Based Nested-Loop Join Algorithm

We can Improve Nested loop Join by compute R

|><| S.

1. Organizing access to both argument

relations by blocks.

2. Using as much main memory as we can to

store tuples belonging to the relation S, the

relation of the outer loop.

The nested-loop join algorithm

FOR each chunk of M-1 blocks of S DO BEGIN

read these blocks into main-memory buffers;

organize their tuples into a search structure whose

search key is the common attributes of R and S;

FOR each block b of R DO BEGIN

read b into main memory;

FOR each tuple t of b DO BEGIN

find the tuples of S in main memory that

join with t ;

output the join of t with each of these

tuples;

END ;

END ;

END ;

15.3.4

Analysis of Nested-Loop Join

Assuming S is the smaller relation, the number

of chunks or iterations of outer loop is B(S)/(M

- 1). At each iteration, we read hf - 1 blocks of

S and

B(R) blocks of R. The number of disk I/O's is

thus

B(S)/M-1(M-1+B(R)) or B(S)+B(S)B(R)/M-1

Continued

Assuming all of M, B(S), and B(R) are large, but

M is the smallest of these, an approximation

to the above formula is B(S)B(R)/M. That is,

cost is proportional to the product of the sizes

of the two relations, divided by the amount of

available main memory.

Example

•

B(R) = 1000, B(S) = 500, M = 101

–

•

•

•

•

•

•

•

Important Aside: 101 buffer blocks is not as unrealistic as it sounds. There

may be many queries at the same time, competing for main memory

buffers.

Outer loop iterates 5 times

At each iteration we read M-1 (i.e. 100) blocks of S and all of R (i.e.

1000) blocks.

Total time: 5*(100 + 1000) = 5500 I/O’s

Question: What if we reversed the roles of R and S?

We would iterate 10 times, and in each we would read 100+500 blocks,

for a total of 6000 I/O’s.

Compare with one-pass join, if it could be done!

We would need 1500 disk I/O’s if B(S) M-1

Continued…….

1. The cost of the nested-loop join is not much

greater than the cost of a one-pass join,

which is 1500 disk 110's for this example. In

fact.if B(S) 5 lZI - 1, the nested-loop join

becomes identical to the one-pass join

algorithm of Section 15.2.3

2. Nested-loop join is generally not the most

efficient join algorithm.

Summary of the topic

In This topic we have learned about how the

nested tuple Loop join are used in database

using query execution and what is the process

for that.

Presentation Topic 18.7 of Book

Tree Protocol

Submitted to: Prof. Dr. T.Y.LIN

Submitted By :Saurabh Vishal

Topic’s That Should be covered in This

Presentation

• Motivation for Tree-Based Locking

• Rules for Access to Tree-Structured Data

• Why the Tree Protocol Works

Introduction

• Tree structures that are formed by the link pattern of the

elements themselves. Database are the disjoint pieces of

data, but the only way to get to Node is through its

parent.

• B trees are best example for this sort of data.

• Knowing that we must traverse a particular path to an

element give us some important freedom to manage

locks differently from two phase locking approaches.

Tree Based Locking

• B tree index in a system that treats individual

nodes( i.e. blocks) as lockable database

elements. The Node Is the right level

granularity.

• We use a standard set of locks modes like

shared,exculsive, and update locks and we use

two phase locking

Rules for access Tree Structured Data

•

•

•

•

There are few restrictions in locks from the tree protocol.

We assume that that there are only one kind of lock.

Transaction is consider a legal and schedules as simple.

Expected restrictions by granting locks only when they do

not conflict with locks already at a node, but there is no

two phase locking requirement on transactions.

Why the tree protocol works.

• A transaction's first lock may be at any node of

the tree.

• Subsequent locks may only be acquired if the

transaction currently has a lock on the parent

node.

• Nodes may be unlocked at any time

• A transaction may not relock a node on which

it has released a lock, even if it still holds a

lock on the node’s parent

A tree structure of Lockable elements

Three transactions following the tree protocol

Why the Tree Protocol works?

• The Tree protocol forces a serial order on the

transactions involved in a schedule.

• Ti <sTj if in schedule S., the transaction Ti and

Tj lock a node in common and Ti locks the

node first.

Example

• If precedence graph drawn from the precedence

relations that we defined above has no cycles, then we

claim that any topological order of transactions is an

equivalent serial schedule.

• For Example either ( T1,T2,T3) or (T3,T1,T2) is an

equivalent serial schedule the reason for this serial order

is that all the nodes are touched in the same order as

they are originally scheduled.

• If two transactions lock several elements in

common, then they are all locked in same

order.

• I am Going to explain this with help of an

example.

Precedence graph derived from Schedule

Example:--4 Path of elements locked by two

transactions

Continued….

• Now Consider an arbitrary set of transactions T1, T2;.. . .

Tn,, that obey the

tree protocol and lock some of the nodes of a tree

according to schedule S.

• First among those that lock, the root. they do also in

same order.

• If Ti locks the root before Tj, Then Ti locks every

node in common with Tj does. That is Ti<sTj, But not

Tj>sTi.

Concurrency Control by

Timestamps

Prepared By: Ronak Shah

Professor :Dr. T. Y Lin

ID: 116

Concurrency

• Concurrency is a property of a systems in which

several computations are executing and overlapping

in time, and interacting with each other.

Timestamp

Timestamp is a sequence of characters, denoting the date

or time at which a certain event occurred.

Example of Timestamp:

20-MAR-09 04.55.14.000000 PM

06/20/2003 16:55:14:000000

Timestamping

We assign a timestamp to transaction and timestamp is

usually presented in a consistent format, allowing for easy

comparison of two different records and tracking

progress over time; the practice of recording timestamps

in a consistent manner along with the actual data is called

timestamping.

Timestamps

To use timestamping as a concurrency-control

method, the scheduler needs to assign to each

transaction T a unique number, its timestamp

TS(T). Here two approaches use to generating

timestamps

1. Using system clock

2. Another approach is for the scheduler to

maintain a counter. Each time when transaction

starts the counter is incremented by 1 and new

value become timestamp for transaction.

Whichever method we use to generate timestamp ,

the scheduler must maintain a table of currently

active transaction and their timestamp.

To use timestamps as a concurrency-control

method we need to associate with each database

element x two timestamps and an additional bit.

• RT(x) The read time of x.

• WT(x) The write time of x.

• C(x) The commit bit of x. which is true if and only

if the most recent transaction to write x has

already committed. The purpose of this bit is to

avoid a situation of “Dirty Read”.

Physically Unrealizable Behaviors

• Read too late

Transaction T tries to read too late

• Write too late

Transaction T tries to write too late

Problem with dirty data

T could perform a dirty read if it is reads X

A write is cancelled because of a write with a later timestamp, but the writer

then aborts

Rules for timestamp based scheduling

Scheduler’s Response to a T’s request for Read(X)/Write(X)

1. Granting Request

2. Aborting T (if T would violate physical reality)

and restarting T with a new timestamp

(Rollback)

3. Delaying T and later deciding whether to abort

T or to grant the request

Rules

Request RT(X):

1.

If TS(T) >= WT(X), the read is physically

realizable

I. If C(X) is true, grant the request. If TS(T) >

RT(X), set RT(X) := TS(T); otherwise do not

change RT(X)

II. If C(X) is false, delay T until C(X) becomes

true or the transaction that wrote X aborts

2.

If TS(T) < WT(X), the read is physically

unrealizable. Rollback T; abort T and restart it

with a new, larger timestamp

Request WT(X):

1. If TS(T) >= RT(X) and TS(T) >= WT(X), the write

is physically realizable and must be performed

1. Write the new value for X

2. Set WT(X) := TS(T), and

3. Set C(X) := false

2. If TS(T) >= RT(X), but TS(T) < WT(X), then the

write is physically realizable, but there is already a

later value in X. If C(X) is true, then ignore the

write by T. If C(X) is false, delay T

3. If TS(T) < RT(X), then the write is physically

unrealizable

Timestamps Vs Locks

Timestamps

Superior if

• most transactions are read-only

Locks

Superior in high-conflict situations

• rare that concurrent transactions

will read or write the same element

In high-conflict situations, rollback will be

frequent, introducing more delays than a

locking system

Frequently delay transactions as they wait

for locks

Two-Pass Algorithms Based on

Sorting

Prepared By: Ronak Shah

ID: 116

Introduction

• In two-pass algorithms, data from the operand

relations is read into main memory, processed in

some way, written out to disk again, and then

reread from disk to complete the operation.

• In this section, we consider sorting as tool from

implementing relational operations. The basic idea

is as follows if we have large relation R, where B(R)

is larger than M, the number of memory buffers we

have available, then we can repeatedly

1. Read M blocks of R in to main memory

2. Sort these M blocks in main memory, using

efficient, main memory algorithm.

3. Write sorted list into M blocks of disk, refer this

contents of the blocks as one of the sorted sub list

of R.

Duplicate elimination using sorting

• To perform δ(R)

operation in two

passes, we sort tuples

of R in sublists. Then

we use available

memory to hold one

block from each stored

sublists and then

repeatedly copy one to

the output and ignore

all tuples identical to

it.

• The no. of disk I/O’s performed by this algorithm,

1). B(R) to read each block of R when creating the

stored sublists.

2). B(R) to write each of the stored sublists to disk.

3). B(R) to read each block from the sublists at the

appropriate time.

So , the total cost of this algorithm is 3B(R).

Grouping and aggregation using sorting

• Reads the tuples of R into memory, M blocks at a time. Sort

each M blocks, using the grouping attributes of L as the sort

key. Write each sorted sublists on disk.

• Use one main memory buffer for each sublist, and initially load

the first block of each sublists into its buffer.

• Repeatedly find least value of the sort key present among the

first available tuples in the buffers.

• As for the δ algorithm, this two phase algorithm for γ takes

3B(R) disk I/O’s and will work as long as B(R) <= M^2

A sort based union algorithm

• When bag-union is wanted, one pass algorithm

is used in that we simply copy both relation,

works regardless of the size of arguments, so

there is no need to consider a two pass

algorithm for Union bag.

• The one pass algorithm for Us only works when

at least one relation is smaller than the

available main memory. So we should consider

two phase algorithm for set union. To compute

R Us S, we do the following steps,

1. Repeatedly bring M blocks of R into main

3.Use one main memory buffer for each sublist

of R and S. Initialize each with first block from

the corresponding sublist.

4.Repeatedly find the first remaining tuple t

among all buffers. Copy t to the output , and

remove from the buffers all copies of t.

A simple sort-based join algorithm

Given relation R(x,y) and S(y,z) to join, and given M blocks

of main memory for buffers,

1. Sort R, using a two phase, multiway merge sort, with y as

the sort key.

2. Sort S similarly

3. Merge the sorted R and S. Generally we use only two

buffers, one for the current block of R and the other for

current block of S. The following steps are done

repeatedly.

a. Find the least value y of the join attributes Y that is

currently at the front of the blocks for R and S.

b. If y doesn’t appear at the front of the other relation,

then remove the tuples with sort key y.

c. Otherwise identify all the tuples from both relation

having sort key y

d. Output all the tuples that can be formed by joining

tuples from R and S with a common Y value y.

e. If either relation has no more unconsidered tuples in

main memory reload the buffer for that relation.

• The simple sort join uses 5(B(R) + B(S)) disk I/O’s

• It requires B(R)<=M^2 and B(S)<=M^2 to work

Summary of sort-based algorithms

Main memory and disk I/O requirements for sort based algorithms

Query Processing

(Two-Pass Algorithms)

Priyadarshini.S

006493851

Cs 257_117_ch 15_15.5

61

Two-Pass Algorithms Using Hashing

• General idea:

– Hash the tuples using an appropriate hash key

– For the common operations, there is a way to choose the

hash key so that all tuples that need to be considered

together has the same hash value

– Do the operation working on one bucket at a time

• Notice that the sort-based algorithms also gain a factor

of M by preprocessing. although the sorting and

hashing approaches achieve their similar gains by

rather different means

62

Partitioning by Hashing

initialize M-1 buckets with M-1 empty buffers

for each block b of relation R do

read block b into the Mth buffer

for each tuple t in b do

if the buffer for bucket h(t) is full then

copy the buffer to disk

initialize a new empty block in that buffer

copy t to the buffer for bucket h(t)

for each bucket do

if the buffer for this bucket is not empty then

write the buffer to disk

63

Duplicate Elimination Using Hashing

• Hash the relation R to M-1 buckets, R1, R2,…,RM-1

• Note: all copies of the same tuple will hash to the

same bu

• Do duplicate elimination on each bucket Ri

independently, using one-pass algorithm

• Return the union of the individual bucket results

64

Analysis of Duplicate Elimination

Using Hashing

• Number of disk I/O's: 3*B(R)

• In order for this to work, we need:

– hash function h evenly distributes the tuples

among the buckets

– each bucket Ri fits in main memory (to allow

the one-pass algorithm)

– i.e., B(R) ≤ M2

65

Grouping Using Hashing

• To perform the Y L ( R)relation,

Hash all the tuples of relation R to M-1 buckets, using a

hash function that depends only on the grouping

attributes

Note: all tuples in the same group end up in the same

bucket!

Use the one-pass algorithm to process each bucket

independently

Uses 3*B(R) disk I/O's, requires B(R) ≤ M2

66

Union, Intersection and Difference

Using Hashing

• Use same hash function for both relations!

• Hash R to M-1 buckets R1, R2, …, RM-1

• Hash S to M-1 buckets S1, S2, …, SM-1

• Do one-pass {set union, set intersection, bag

intersection, set difference, bag difference} algorithm

on Ri and Si, for all i

• 3*(B(R) + B(S)) disk I/O's; min(B(R),B(S)) ≤ M2

67

Join Using Hashing

• Use same hash function for both relations; hash

function should depend only on the join attributes

•

•

•

•

Hash R to M-1 buckets R1, R2, …, RM-1

Hash S to M-1 buckets S1, S2, …, SM-1

Do one-pass join of Ri and Si, for all i

3*(B(R) + B(S)) disk I/O's; min(B(R),B(S)) ≤ M2

68

Comparison of Sort-Based and HashBased

• For binary operations, hash-based only limits size

of smaller relation, not sum

• Sort-based can produce output in sorted order,

which can be helpful

• Hash-based depends on buckets being of equal

size

• Sort-based algorithms can experience reduced

rotational latency or seek time

69

CS 255

Concurrency Control by Validation

(Section 18.9)

Priyadarshini.S

006493851

Cs_257_117_ch 18_18.9

Validation

• Optimistic concurrency control

• Transactions are accessed data without locks.

• Concurrency Control assumes that conflicts

between transactions are rare

• Scheduler maintains record of active

transactions

• Does not require locking

• Check for conflicts just before commit

Phases

Read – Validate - Write

• Read

–

–

–

–

Reads from the database for the elements in its

read set

ReadSet(Ti): It is a Set of objects read by

Transaction Ti.

Whenever the first write to a given object is

requested, a copy is made, and all subsequent

writes are directed to the copy

When the transaction completes, it requests its

validation and write phases

Phases

Read – Validate - Write

• Validation

–

–

–

–

Checks are made to ensure serializability is not

violated

Scheduling of transactions is done by assigning

transaction numbers to each transactions

There must exist a serial schedule in which

transaction Ti comes before transaction Tj

whenever t(i) < t(j)

If validation fails then the transaction is rolled

back otherwise it proceeds to the third phase

Phases

Read - Validate - Write

• Write

–

–

–

Writes the corresponding values for the

elements in its write set

WriteSet(Ti): Set of objects where Transaction

Ti has intend to write on it.

Locally written data are made global

Terminologies

•

Scheduler maintains 3 states

–

•

START

–

•

Transactions that are started but not yet validated

VAL

–

•

START(T), VAL(T), FIN(T)

Transactions that are validated but not yet finished

FIN

–

Transactions that are finished

Validation Rule 1

TimeLine

•

•

•

•

•

Writ

e

Validation

T1

Read

T2

T2 starts before T1 finishes

FIN(T1) > START(T2)

RS(T2) WS(T1) =

Validation Rule 2

TimeLine

T1

Validation

T2

Write

T2 starts before T1 finishes

FIN(T1) > VAL(T2)

WS(T2) WS(T1) =

Interference –

Leads to

Rollback of T2

No Problem

RS(Tj) WS(Ti) =

WS(Tj) WS(Ti) =

FIN(Ti) > START(Tj) Rule 1

FIN(Ti) > VAL(Tj)

Rule 2 where j > i

Example:

TimeLine

T1

RS

A,B

WS

A,C

T2

B

D

T3

B

D,E

T4

A,D

A,C

Validation

Validation

•

T2 & T1

–

RS(T2) WS(T1) = {B} {A,C} =

–

WS(T2) WS(T1) = {D} {A,C} =

•

T3 & T1

–

RS(T3) WS(T1) = {B} {A,C} =

–

WS(T3) WS(T1) = {D,E} {A,C} =

•

T3 & T2

–

RS(T3) WS(T2) = {B} {D} =

–

WS(T3) WS(T2) = {D,E} {D} = D // Rule 2 Can't be applied; FIN(T2) < VAL(T3)

Validation

•

T4 Starts before T1 and T3 finishes. So T4 has to be checked against the sets of T1

and T3

•

T4 & T1

–

RS(T4) WS(T1) = {A,D} {A,C} = {A}

–

•

Rule 2 can not be

applied

T4 & T3

–

RS(T4) WS(T3) = {A,D} {D,E} = {D}

–

WS(T4) WS(T3) = {A,C} {D,E} =

Comparison

• Lock

–

–

–

–

Lock management overhead

Deadlock detection/resolution.

Concurrency is significantly lowered, when

congested nodes are locked. Locks can not be

released until the end of a transaction

Conflicts are rare. (We might get better

performance by not locking, and instead

checking for conflicts at commit time.)

Comparison

• Validation

–

–

–

–

Optimistic Concurrency Control is superior to

locking methods for systems where transaction

conflict is highly unlikely, e.g query dominant

systems.

Avoids locking overhead

Starvation: What should be done when validation

repeatedly fails ?

Solution: If the concurrency control detects a

starving transaction, it will be restarted, but

without releasing the critical section semaphore,

and transaction is run to the completion by write

locking the database

Comparison

• Timestamp

Deadlock is not possible

Prone to restart

– A more careful implementation we treat all

timestamps that are prior to the earliest active

transaction as "minus infinity” and not record

them

–

–

Query Execution

Index Based Algorithms (15.6)

Ashish Sharma

CS-257

ID:118

Clustering and Nonclustering Indexes

A relation is '.clustered" if its tuples are packed into roughly

as few blocks as can possibly hold those tuples.

Clustering Indexes, which are indexes on an attribute or

attributes such that all the tuples with a fixed value for the

search key of this index appear on roughly as few blocks as

can hold them. Note that a relation that isn't clustered

cannot have a clustering index, but even a clustered relation

can have nonclustering indexes.

A clustering index has all tuples with a fixed value packed

into the minimum possible number of blocks

• Note that a relation that isn't clustered cannot have a

clustering index but even a clustered relation can have non

clustering indexes.

Index-Based Selection

• Selection on equality: sa=v(R)

• Clustered index on a: cost B(R)/V(R,a)

– If the index on R.a is clustering, then the number of

disk I/O's to retrieve the set sa=v (R) will average

B(R)/V(R, a). The actual number may be somewhat

higher.

• Unclustered index on a: cost T(R)/V(R,a)

– If the index on R.a is nonclustering, each tuple we

retrieve will be on a different block, and we must

access T(R)/V(R,a) tuples. Thus, T(R)/V(R, a) is an

estimate of the number of disk I/O’s we need.

• Example: B(R) = 2000, T(R) = 100,000, V(R, a) = 20,

compute the cost of sa=v(R)

• Cost of table scan:

– If R is clustered: B(R) = 2000 I/Os

– If R is unclustered: T(R) = 100,000 I/Os

• Cost of index based selection:

– If index is clustered: B(R)/V(R,a) = 100

– If index is unclustered: T(R)/V(R,a) = 5000

• Notice: when V(R,a) is small, then unclustered index

is useless

Joining by Using an Index

R S : This is a Natural Join

Assume S has an index on the join attribute

Iterate over R, for each tuple fetch

corresponding tuple(s) from S

• Assume R is clustered. Cost:

•

•

•

– If index is clustered: B(R) + T(R)B(S)/V(S,a)

– If index is unclustered: B(R) + T(R)T(S)/V(S,a)

• Assume both R and S have a sorted index (B+

tree) on the join attribute

• Then perform a merge join (called zig-zag join)

• Cost: B(R) + B(S)

Example : Let us consider our running example, relations

R(X, Y) and S(Y, Z) covering 1000 and 500 blocks,

respectively. Assume ten tuples of either relation fit on one

block, so T(R) = 10,000 and T(S) = 5000. Also, assume V(S, Y)

= 100; i.e., there are 100 different values of Y among the

tuples of S.

Suppose that R is clustered, and there is a clustering index

on Y for S. Then the approximate number of disk I/O1s,

excluding what is needed to access the index itself, is 1000

to read the blocks of R plus 10,000 x 300 / 100 = 50,000 disk

I/O's. This number is considerably above the cost of other

methods for the same data discussed previously. If either R

or the index on S is not clustered, then the cost is even

higher

Joins Using a Sorted Index

• Still consider R(X,Y) S(Y,Z)

• Assume there's a sorted index on both R.Y and S.Y

– B-tree or a sorted sequential index

• Scan both indexes in the increasing order of Y

– like merge-join, without need to sort first

– if index dense, can skip nonmatching tuples without

loading them

– very efficient

• Used when the index is a B-tree or any other structure

from which we easily can extract the tuples of a

relation in sorted order, we have a number of other

opportunities to use the index.

• When the index is a B-tree. or any other structure

from which we easily can extract the tuples of a

relation in sorted order, we have a number of

other opportunities to use the index. Perhaps the

simplest is when we

want to compute R(X, Y)

S(Y, Z), and we have such an index on Y for either

R or S. We can then perform an ordinary sortjoin, but we do not have to perform the

intermediate step of sorting one of the relations

on Y.

•

• As an extreme case, if we have sorting indexes

on Y for both R and S, then we need to perform

only the final step of the simple sort-based join.

This method is sometimes called zig-zag join,

because we jump back and forth between the

indexes finding Y-values that they share in

common. Notice that tuples from R with a Y-value

that does not appear in S need never be

retrieved, and similarly, tuples of S whose Y-value

does not appear in R need not be retrieved

A zig-zag join using two indexes.

Example: Suppose that we have relations R(X,Y) and S(Y, Z) with indexes on

Y for both relations. In a tiny example, let the search keys (Y-values) for the

tuples of R be in order 1,3,4,4,4,5,6, and let the search key values for S be

2,2,4,4,6,7. We start with the first keys of R and S, which are 1 and 2,

respectively. Since 1 < 2, we skip the first key of R and look at the second

key, 3. Now, the current key of S is less than the current key of R, so we skip

the two 2's of S to reach 4.

At this point, the key 3 of R is less than the key of S, so we skip the key of R.

Now, both current keys are 4. \Ire follow the pointers associated with all

the keys 4 from both relations, retrieve the corresponding tuples, and join

them. Notice that until we met the common key 4, no tuples of the relation

were retrieved.

Having dispensed with the 4's: we go to key 5 of R and key 6 of S. Since 5 <

6, we skip to the next key of R. Now the keys are both 6, so we retrieve the

corresponding tuples and join them. Since R is now exhausted, we know

there are no more pairs of tuples from the two relations that join

Information Integration

Introduction (21.1)

Ashish Sharma

CS-257

ID:118

Why Information Integration ?

• Databases are created independently, even if

they later need to work together.

• The use of databases evolves, so we can not

design a database to support every possible

future use.

• We will understand Information integration

from an example of University Database.

University Database

Earlier we had different databases for different

functions like;

Registrar Database for keeping data about courses and

student grades for generating transcripts.

Bursar Database for keeping data about the tuition

payments by students.

Human Resource Department Database for recording

employees including those students with teaching

assistantship jobs.

Applications were build using these databases like

generation of payroll checks, calculation of taxes

and social security payments to government.

• But these databases independently were of no

use as a change in 1 database would not reflect in

the other database which had to be performed

manually. For e.g. we want to make sure that

Registrar does not record grades of the student

who did not pay the fees at Bursars office.

• Building a whole new database for the system

again is a very expensive and time consuming

process.

• In addition to paying for a very expensive

software the University will have to run both the

old and the new databases together for a long

time to see that the new system works properly

or not.

• A Solution for this is to build a layer of

abstraction, called middleware, on top of all

legacy databases, without disturbing the original

databases.

• Now we can query this middleware layer to

retrieve or update data.

• Often this layer is defined by a collection of

classes and queried in an Object oriented

language.

• New applications can be written to access this

layer for data, while the legacy applications

continue to run using the legacy database.

The Heterogeneity Problem

When we try to connect information sources that were

developed independently, we invariably find that sources

differ in many ways. Such sources are called Heterogeneous

sources. A problem is faced while integrating them, which is

referred to as the Heterogeneity Problem. There are

different levels of heterogeneity viz.

1.

2.

3.

4.

5.

6.

Communication Heterogeneity.

Query-Language Heterogeneity.

Schema Heterogeneity.

Data type differences.

Value Heterogeneity.

Semantic Heterogeneity.

Communication Heterogeneity

• Today, it is common to allow access to your

information using HTTP protocols. However,

some dealers may not make their databases

available on net, but instead accept remote

accesses via anonymous FTP.

• Suppose there are 1000 dealers of Aardvark

Automobile Co. out of which 900 use HTTP while

the remaining 100 use FTP, so there might be

problems of communication between the dealers

databases.

Query Language Heterogeneity

• The manner in which we query or modify a

dealer’s database may vary.

• For e.g. Some of the dealers may have

different versions of database like some might

use relational database some might not have

relational database, or some of the dealers

might be using SQL, some might be using Excel

spreadsheets or some other database.

Schema Heterogeneity

• Even assuming that the dealers use a

relational DBMS supporting SQL as the query

language there might be still some

heterogeneity at the highest level like

schemas can differ.

• For e.g. one dealer might store cars in a single

relation while the other dealer might use a

schema in which options are separated out

into a second relation.

Data type Diffrences

• Serial Numbers might be represented by a

character strings of varying length at one

source and fixed length at another. The fixed

lengths could differ, and some sources might

use integers rather than character strings.

Value Heterogeneity

• The same concept might be represented by

different constants at different sources. The

color Black might be represented by an integer

code at one source, the string BLACK at

another, and the code BL at a third.

Semantic Heterogeneity

• Terms might be given different interpretations

at different sources. One dealer might include

trucks in Cars relation, while the another puts

only automobile data in Cars relation. One

dealer might distinguish station wagons from

the minivans, while another doesn’t.

CS 257

Database Systems Principles

Assignment 1

Instructor:

Student:

Dr. T. Y. Lin

Rajan Vyas (119)

Modes of Information Integration

Information

• Information Integration allows database or other

distributed information to work together.

• Three most common approaches:

Federated Database

DataWareHousin

Mediators

Federated Database System

• Sources are independent, but one source can call on others to

supply information.

• One-to-One connection between the all pairs of databases

DB 1

DB 2

DB 3

DB 4

•

•

•

Dealer 1

NeededCars(mode1, color, autotrans)

Dealer 2

Autos(seria1, model, color)

Options(seria1, option)

Dealer 1 to Dealer 2

f or ( e a ch t u p l e (:m, : c , :a) in neededCars )

i f ( : a = TRUE) { /* automatic transmission wanted */

SELECT s e r i a l

FROM Autos, Options

WHERE Autos.seria1 = Options.seria1 AND

Options.option = 'autoTrans' AND

Autos.mode1 = :m AND

Autos.color = :c;

}

e l s e { /* automatic transmission not wanted */

SELECT serial

FROM Autos

WHERE Autos.mode1 = :m AND

Autos.color = :c AND

NOT EXISTS (

SELECT *

FROM Options

WHERE s e r i a l = Autos.seria1 AND

option = 'autoTrans'

}; } }

•

Dealer 3

Cars(serialN0, model, color, autoTrans, ...)

Data WareHouse

Information

• In the data warehouse integration architecture, data from

several sources is extracted and combined into a global

schema.

• Copies sources of data from

several sources are stored in a

Ware

single database.

House

User Query

Result

Combiner

Extractor 1

Extractor 2

Sour

ce 1

Sour

ce 2

• Dealer 1

Cars(serialN0, model, color, autoTrans, cdPlayer, ... )

• Dealer 2

Autos(seria1, model, color)

Opt ions ( s e r i a l , option)

• WareHouse

AutosWhse(seria1N0, model, color, autoTrans, dealer)

Mediators

• It is a software component that supports a virtual database.

• The mediator doesn't store any data. The mechanics of

mediators and warehouses are rather different

User Query

Result

Mediator

Wrapper

Wrapper

Sour

ce 1

Sour

ce 2

Extractor for translating Dealer-2 data to the warehouse

• INSERT INTO AutosWhse(serialNo, model, color,autoTrans , dealer)

SELECT s e r i a l , model, color, ' y e s ' , 'dealer2'

FROM Autos, Options

WHERE Autos.seria1 = Options.seria1 AND

option = 'autoTrans';

• INSERT INTO AutosWhse(serialNo, model, color,autoTrans , dealer)

SELECT s e r i a l , model, color, 'no', 'dealer2‘ FROM Autos

WHERE NOT EXISTS (

SELECT * FROM Options

WHERE s e r i a l = Autos.seria1 AND

option = 'autoTrans'

);

CS 257

Database Systems Principles

Assignment 2

Instructor:

Student:

Dr. T. Y. Lin

Rajan Vyas (119)

Buffer Management

Introduction

The central task of making main-memory buffers available to processes, such as queries, that act

on the database is given to the buffer manager.

• Buffer Manager manages the required memory for the process with

minimum delay.

Read/Write

Buffers

Buffer Manager

Request

Buffer Management Architecture

• Two types of architecture:

•

Buffer Manager controls main memory directly : as in many relational DBMS

Buffer Manager allocates buffer in Virtual Memory: allowing the OS to decide

which buffers are actually in the main memory at any time and which are in the

swap space on disk that the OS manages.

• In Each method, the Buffer Manager should limit the number

of buffers in use which fit in the available main memory.

• When Buffer Manager controls the main memory directly, it

selects the buffer to empty by returning its content to disk. If

it fails, it may simply be erased from main memory.

• If all the buffers are really in use then very little useful works

gets done.

Buffer Management Strategies

• LRU (Least Recent Used)

It makes buffer free from the block that has not been read or write for the longest

time.

• FIFO(First In First Out)

It makes buffer free that has been occupied the longest and assigned to

new request.

• The “Clock” Algorithm

0

0

1

1

0

0

1

1

The Relationship Between Physical Operator

Selection and Buffer Management

• The query optimizer will eventually select a

set of physical operators that will be used to

execute a given query.

• the buffer manager may not be able to

guarantee the availability of the buffers when

the query is executed.