Diversity and cross cultural sensitivity

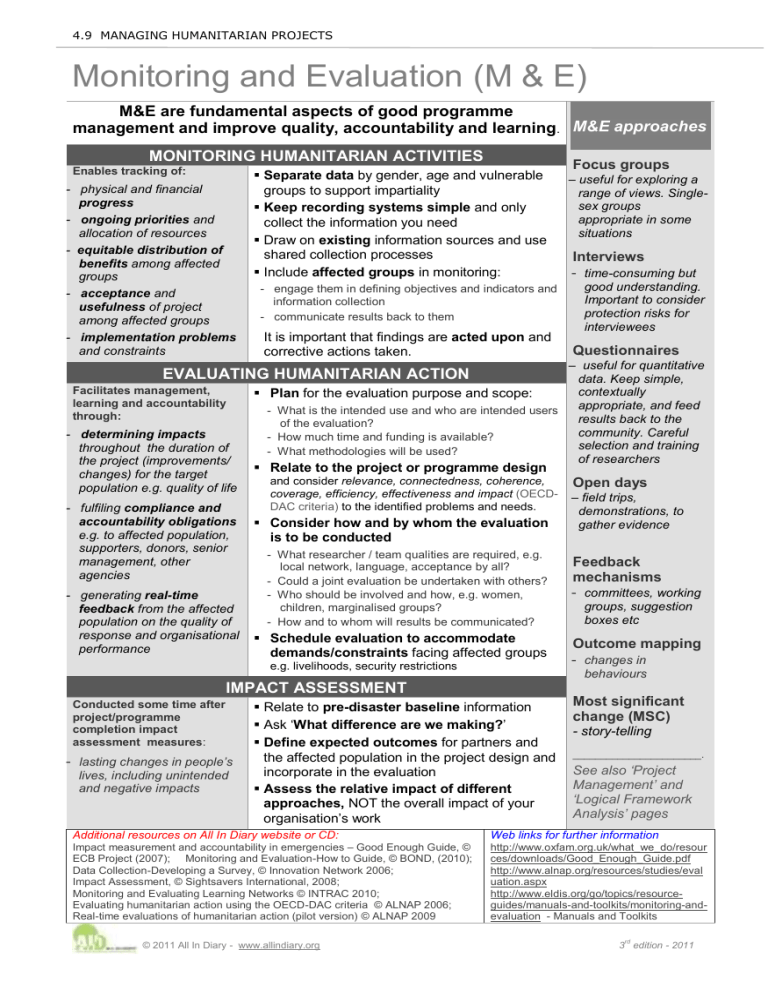

4.9 MANAGING HUMANITARIAN PROJECTS

Monitoring and Evaluation (M&E)

M&E are fundamental aspects of good programme management and improve quality, accountability and learning.

MONITORING HUMANITARIAN ACTIVITIES

Enables tracking of:

- physical and financial progress
- ongoing priorities and allocation of resources
- equitable distribution of benefits among affected groups

Separate data by gender, age and vulnerable groups to support impartiality

Keep recording systems simple and only collect the information you need

Draw on existing information sources and use shared collection processes
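The advice above on separating data by gender, age and vulnerable groups can be illustrated with a small sketch. It is only one possible way to tabulate monitoring records: the field names (`sex`, `age`, `received_kit`), the sample records and the age bands are all assumptions made for illustration, not part of any standard.

```python
from collections import Counter

# Hypothetical monitoring records; field names and values are illustrative only.
records = [
    {"sex": "female", "age": 34, "received_kit": True},
    {"sex": "male", "age": 8, "received_kit": True},
    {"sex": "female", "age": 67, "received_kit": False},
    {"sex": "male", "age": 41, "received_kit": True},
    {"sex": "female", "age": 12, "received_kit": True},
]


def age_group(age):
    """Assumed age bands; replace with the bands agreed for your programme."""
    if age < 18:
        return "0-17"
    if age < 60:
        return "18-59"
    return "60+"


def disaggregate(records):
    """Return {(sex, age group): (people reached, people recorded)}."""
    recorded = Counter()
    reached = Counter()
    for r in records:
        key = (r["sex"], age_group(r["age"]))
        recorded[key] += 1
        if r["received_kit"]:
            reached[key] += 1
    return {key: (reached[key], recorded[key]) for key in recorded}


summary = disaggregate(records)
for (sex, group), (got, total) in sorted(summary.items()):
    print(f"{sex:6} {group:6} reached {got} of {total}")
```

A table like this makes gaps in coverage visible per group, which a single overall total would hide. In practice the same breakdown could be kept in a simple spreadsheet; the point is only that each record carries the fields needed to split the totals.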

Include affected groups in monitoring:

M&E approaches

Focus groups - useful for exploring a range of views. Single-sex groups are appropriate in some situations

Interviews

- acceptance and usefulness of project among affected groups

and constraints

Facilitates management, learning and accountability through:

- determining impacts throughout the duration of the project (improvements/changes) for the target population, e.g. quality of life
- fulfilling compliance and accountability obligations, e.g. to affected population, supporters, donors, senior management, other agencies
- generating real-time feedback from the affected population on the quality of performance

- engage them in defining objectives and indicators and information collection

- communicate results back to them

EVALUATING HUMANITARIAN ACTION

response and organisational

Conducted some time after project/programme completion, impact assessment measures:

Plan for the evaluation purpose and scope:

- What is the intended use and who are the intended users of the evaluation?
- How much time and funding are available?
- What methodologies will be used?

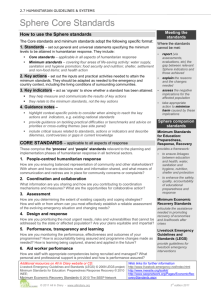

Relate to the project or programme design and consider relevance, connectedness, coherence, coverage, efficiency, effectiveness and impact (OECD-DAC criteria) to the identified problems and needs.

Consider how and by whom the evaluation is to be conducted

- What researcher/team qualities are required, e.g. local network, language, acceptance by all?

- Could a joint evaluation be undertaken with others?

- Who should be involved and how, e.g. women, children, marginalised groups?

- How and to whom will results be communicated?

Schedule evaluation to accommodate demands/constraints facing affected groups e.g. livelihoods, security restrictions

IMPACT ASSESSMENT

- lasting changes in people’s lives, including unintended and negative impacts

It is important that findings are acted upon and corrective actions taken.

Relate to pre-disaster baseline information

Ask ‘What difference are we making?’

Define expected outcomes for partners and the affected population in the project design and incorporate in the evaluation

Assess the relative impact of different approaches, NOT the overall impact of your organisation’s work

Interviews - time-consuming but give a good understanding. Important to consider protection risks for interviewees

Questionnaires - useful for quantitative data. Keep simple, contextually appropriate, and feed results back to the community. Careful selection and training of researchers is needed

Open days - field trips, demonstrations, to gather evidence

Feedback mechanisms - committees, working groups, suggestion boxes etc.

Outcome mapping - changes in behaviours

Most significant change (MSC) - story-telling


See also ‘Project Management’ and ‘Logical Framework Analysis’ pages

Additional resources on All In Diary website or CD:

- Impact measurement and accountability in emergencies - Good Enough Guide, © ECB Project (2007)
- Monitoring and Evaluation - How to Guide, © BOND (2010)
- Data Collection: Developing a Survey, © Innovation Network (2006)
- Impact Assessment, © Sightsavers International (2008)
- Monitoring and Evaluating Learning Networks, © INTRAC (2010)
- Evaluating humanitarian action using the OECD-DAC criteria, © ALNAP (2006)
- Real-time evaluations of humanitarian action (pilot version), © ALNAP (2009)

Web links for further information

- http://www.oxfam.org.uk/what_we_do/resources/downloads/Good_Enough_Guide.pdf
- http://www.alnap.org/resources/studies/evaluation.aspx
- http://www.eldis.org/go/topics/resource-guides/manuals-and-toolkits/monitoring-and-evaluation - Manuals and Toolkits

© 2011 All In Diary - www.allindiary.org

3rd edition - 2011