Nikhil Devanur: Hello, everyone

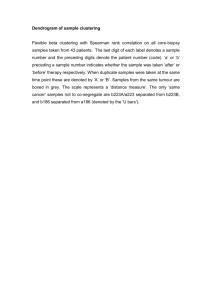


>> Nikhil Devanur: Hello, everyone. It's my great pleasure to introduce Nina Balcan, who is a professor at Georgia Tech. She did her PhD at CMU. Nina moves seamlessly between machine learning, algorithms and game theory, and she's going to tell us very interesting things about clusterings [inaudible] applications. So, Nina.
>> Maria-Florina Balcan: All right. Thank you. Great. So I'll talk about finding low error clusterings. And, in fact, an even better title for this work is approximate clustering without the approximation. And the meaning of this title will become clear later in the talk. And this is --
>>: [inaudible].
>> Maria-Florina Balcan: Yes. And this is a line of work joint with a number of people that I'm going to mention throughout the talk. All right. So this talk is about unsupervised learning, or clustering, which as you probably know is a major research topic in both algorithms and machine learning these days. Why? Because problems of clustering data come up everywhere in many real-world applications, and a few examples of such applications include clustering news articles or Web pages by topic, clustering protein sequences by function or, let's say, clustering images by who is in them. Okay. Now, many of these clustering problems can be formally modelled as follows. We are given a set S of N objects, say N documents. And we'll assume that there exists some unknown correct desired target clustering. So it means that each object has some unknown true label, in this case the topic. And then in this context, our goal will be to find a clustering of low error, where the error of a given clustering C prime is defined as the fraction of the points which are misclassified with respect to the target clustering after re-indexing of the clusters. All right. So again the setup here is as follows: We assume that we have a target clustering C, which is a partition C1, C2, ..., CK of the whole set of points.
And now, if we are given a new clustering C prime, which is another partition C1 prime, C2 prime, ..., CK prime of the same set of points, we define the error of C prime with respect to C as the fraction of the points that you get wrong in the optimal matching between the clusters in C and the clusters in C prime. That's a natural notion of error rate. In the context of clustering it is really the analog of the 0-1 loss in the context of supervised classification. So it's a very natural notion of error rate. In the context of clustering we don't really care about getting the names of the clusters right, we only care about getting the clusters [inaudible] right.
>>: [inaudible].
>> Maria-Florina Balcan: We don't get penalized. We only care about getting the clusters right, not the names right. And this notion of error rate exactly captures it. Because the error is defined as the fraction of the points that you get wrong in the optimal matching between the clusters.
>>: [inaudible]. You need to know the [inaudible].
>> Maria-Florina Balcan: No, no, no, it's the minimum [inaudible] over all possible permutations of the labels. It exactly captures what you want it to capture. All right. So that's a natural goal in the context of clustering, one can argue: to get a clustering of low error according to this notion of error. I'm not claiming that this is the only notion of error that you can consider, but it's definitely a natural one. Okay? So that's our goal, to get a clustering of low error. Now, in order to do so, as is usual in the clustering setting, we assume that we are given a pairwise measure, so a measure of similarity or dissimilarity between pairs of points. So for instance in the document clustering case, this can be something based on the number of keywords in common, or in the protein clustering case it can be something based on the edit distance, and so on.
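As an illustration of the error notion defined above, here is a minimal Python sketch. It assumes clusters are given as integer labels in `0..k-1` and tries every relabeling by brute force, which is only practical for small `k`; the function name and the label encoding are illustrative choices, not something stated in the talk.

```python
from itertools import permutations

def clustering_error(target, proposed, k):
    """Fraction of points misclassified under the best matching of clusters.

    `target` and `proposed` are equal-length lists of labels in 0..k-1.
    Brute force over all k! relabelings, so intended for small k only.
    """
    n = len(target)
    # overlap[i][j] = number of points with target label i and proposed label j
    overlap = [[0] * k for _ in range(k)]
    for t, p in zip(target, proposed):
        overlap[t][p] += 1
    # Best agreement over all ways of matching proposed clusters to target clusters
    best_agree = max(
        sum(overlap[i][perm[i]] for i in range(k))
        for perm in permutations(range(k))
    )
    return (n - best_agree) / n

target = [0, 0, 0, 1, 1, 2]
proposed = [2, 2, 1, 1, 1, 0]   # same grouping up to names, except one point
print(clustering_error(target, proposed, 3))
```

For larger `k`, the same quantity could be computed with a bipartite matching routine instead of enumerating permutations.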
And now clearly, since our goal is to get a clustering of low error, this pairwise measure has to somehow be related to what we are trying to do, has to somehow be related to the topic, because otherwise there will be absolutely no hope to do anything. And actually, for the rest of my presentation, I'm going to focus on the case when the pairwise measure that you are given is a dissimilarity measure, and in particular is a distance function that satisfies the triangle inequality. All right. So that's our setup. Now, a classic approach to solve such a problem, especially in the theoretical computer science community and sometimes in machine learning, is to view the data points as nodes in a weighted graph where the weights are based on the distance function which we are given, and then to pick some objective function to optimize, like k-median, k-means, min-sum and so on. And just to remind you in case you have never seen it, in the k-median clustering problem the goal is to find a partition C1 prime, C2 prime, ..., CK prime and center points, or medians, for these clusters in order to minimize the sum over all points of the distance to their corresponding median. So that's the k-median clustering objective. And in the k-means clustering case the goal is to minimize the sum of the squared distances, while in the min-sum clustering case the goal is to minimize the sum of the intracluster dissimilarities. All right. So again, a standard approach to solve such clustering problems in the theoretical computer science community and also in machine learning is to view the data points as nodes in a weighted graph where the weights are based on the dissimilarity function which we are given, and then to pick some objective to optimize and to develop approximation algorithms for these objectives.
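The three objectives just mentioned can be written down concretely. The sketch below is only illustrative: clusters are lists of point indices, `centers` holds one center index per cluster, `dist` is any distance function, and the min-sum cost counts each unordered pair once (counting ordered pairs would double it). All of these conventions are assumptions made for the example.

```python
from itertools import combinations

def kmedian_cost(points, clusters, centers, dist):
    """Sum over all points of the distance to the center of their cluster."""
    return sum(dist(points[i], points[c])
               for members, c in zip(clusters, centers) for i in members)

def kmeans_cost(points, clusters, centers, dist):
    """Sum over all points of the squared distance to their cluster's center."""
    return sum(dist(points[i], points[c]) ** 2
               for members, c in zip(clusters, centers) for i in members)

def minsum_cost(points, clusters, dist):
    """Sum of pairwise distances inside each cluster (each unordered pair once)."""
    return sum(dist(points[i], points[j])
               for members in clusters for i, j in combinations(members, 2))

points = [0, 1, 2, 10, 12]
clusters = [[0, 1, 2], [3, 4]]      # indices into `points`
centers = [1, 3]                    # index of the chosen center in each cluster
dist = lambda a, b: abs(a - b)

print(kmedian_cost(points, clusters, centers, dist))  # 4
print(kmeans_cost(points, clusters, centers, dist))   # 6
print(minsum_cost(points, clusters, dist))            # 6
```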
So I should say that many of these objectives are actually NP-hard to optimize, and so the best you can hope to do is to design an approximation algorithm for them. And significant effort has been spent in the last few years on developing better approximation algorithms for many of these objectives, as well as developing better hardness results for them. So significant [inaudible] has been spent on this. For example, the best known approximation algorithm for k-median is a 3 plus epsilon approximation, and it's also known that beating one plus two over e is NP-hard. And actually this is a result that appears in the paper by Mohammed [inaudible]. Okay. So this is all right. I mean, this effort is justified sometimes. However, in the clustering problems that I was talking about, our goal is to get a clustering of low error. Our goal is to get the points right, to get close to the target clustering. And so that means that in those problems, if we end up using a C approximation algorithm for an objective phi, say k-median, in order to minimize the error rate, in order to, say, cluster our documents, then that means that we must make an implicit assumption that all the clusterings that are within a factor of C of the optimal solution for objective phi are, in fact, close to our target clustering. I mean, it's implicit, because otherwise the clusterings that we output are not going to be meaningful. So again, if we end up using a C approximation algorithm for objective phi, say k-median, to cluster our documents, what we really want to do is to get a clustering of low error, to get close to our target clustering, which means that we must implicitly assume that any clustering within a factor of C of the optimal solution for objective phi must be epsilon close, according to the symmetric difference distance, to our target clustering.
>>: [inaudible].
>> Maria-Florina Balcan: It's -- so it's a parameter.
It's closeness to the -- it's how close we are to the target clustering.
>>: I have a basic question. [inaudible] clustering --
>> Maria-Florina Balcan: Yeah?
>>: [inaudible].
>> Maria-Florina Balcan: So we have this notion, I introduced the notion of distance between two clusterings. So --
>>: But I don't know that --
>> Maria-Florina Balcan: Right. So the problem is difficult. And that's why people go and come up with surrogate objectives. Right. Exactly. Because it's difficult. I mean, I don't know the ground truth; given the information that I have, I cannot compute the distance to the ground truth, and so that's why people look at these surrogate objectives, let's say, something that I can actually measure. Which is one of the reasons for which people develop approximation algorithms for clustering. But the point that we make is that what we really care about is to get close to the target clustering, and then the question is under what conditions can we get close to the target clustering?
>>: Are you saying [inaudible] epsilon such that [inaudible] exist in epsilon that is like low enough --
>> Maria-Florina Balcan: No, no, no.
>>: [inaudible].
>> Maria-Florina Balcan: So it's an assumption. Okay, so it's an assumption. Let me call this the C epsilon property, which says my instance satisfies the C epsilon property if any clustering within a factor of C of the optimal solution is epsilon close to the target clustering. It's an assumption. It might or might not be satisfied. But the claim is -- it's not a claim. It's more like the motivation here is that if you use a C approximation algorithm for objective phi, it must be the case that something like this is satisfied for a reasonably small epsilon.
Because otherwise the clustering that you are going to output, your C approximation, is going to be far from the target clustering anyway, so it's going to be meaningless.
>>: [inaudible].
>> Maria-Florina Balcan: It's not -- okay. So what I'm going to show is that you're going to be able to cluster well under such an assumption.
>>: [inaudible] you can choose different phis and you -- you say that phis [inaudible] is a good choice. If it gives you back the clustering and this is the C epsilon.
>> Maria-Florina Balcan: Okay. So think about it as being an assumption, and I'll go through some of the theorems and then we'll see how to [inaudible]. So for now, it's an assumption. So what is my instance? My instance is a distance function which I am given and a hidden target clustering that I don't know. And I'm going to say that this instance satisfies this property if, for my instance, all the clusterings that are within a factor of [inaudible] the optimal solution for [inaudible] are close to the target clustering. So it's a property of a given instance. And the type of guarantees that we're going to make is that if this property is satisfied, then we're able to cluster well.
>>: [inaudible] how truthful is that assumption -- of course, it's [inaudible] with epsilon equal to one --
>> Maria-Florina Balcan: Exactly.
>>: But how truthful is it to expect that this is the case for --
>> Maria-Florina Balcan: Okay. So I'll come to that a bit later.
>>: [inaudible].
>> Maria-Florina Balcan: Okay. So there is a question. So I'll come to that later. Okay. But for now, the motivation that I'm trying to give is that if this is not satisfied anyway, using approximation algorithms to cluster well is not a good idea.
So let's assume that this property is satisfied, and let's see what we can do with it. Okay. So given the C epsilon property, first of all, we can show that under the C epsilon property the problem of finding a C approximation to objective phi is as hard as it is in the general case. So the problem of finding a C approximation for objective phi does not become easier. However, under this property we are able to cluster well. We show that we'll be able to get close to the target clustering without approximating the objective at all. So we solve the problem that we really wanted to solve without ever approximating the [inaudible] at all. And that's why we call this approximate clustering without the approximation. And please ask your question again later, Alexander, because I have answers to it. But it's too early to give them now. Okay. And so here is an example of a result. We can show that for the k-median clustering problem, for any C greater than one, under the C epsilon property we'll be able to get order of epsilon close to the target clustering. So we'll be able to output a clustering of low error. And we are able to do so even for values of C for which getting a C approximation to the k-median clustering objective is actually NP-hard. And moreover, if the target clusters are sufficiently large, we are able to get even epsilon close to the target clustering, which given the assumption is the best we can hope to do.
>>: So [inaudible].
>> Maria-Florina Balcan: Yeah. So this is actually order of epsilon over C minus one. So I'm hiding some factors here, right. If the target clusters are sufficiently large we can get exactly epsilon close, which given the assumption is the best you can hope to do.
>>: In those hard cases you would get close to the right cluster but then it would be hard to find the median --
>> Maria-Florina Balcan: Right.
So what we end up doing is we output a clustering which is close to the target clustering, but it has no guarantee; it does not necessarily have a small k-median objective value. So that's why we do approximate clustering, in some sense, without the approximation.
>>: This is [inaudible].
>> Maria-Florina Balcan: Again? I'm sorry?
>>: [inaudible] if we can't fix it, it ain't broke. It sounds very similar to [inaudible]. Do you have any reason to believe in that assumption, the C epsilon property?
>> Maria-Florina Balcan: Okay. So no, I have no reason to believe in this. There are multiple answers actually. One of them is actually we did -- so, let me go for the dessert. I'll come back to this in a second. Okay. Let me comment on this again later. Okay. But that's a very good question. So is this assumption ever satisfied? And again, I'm going to comment on this later. All right. So now, before actually presenting some of our results, let me just make a quick note. From an approximation algorithms perspective, a natural and totally legitimate motivation for trying to improve the approximation ratio from C1 to C2, where C2 is [inaudible] C1, is that the data satisfies this condition for C2 but not for C1. And this is absolutely natural and legitimate because, in fact, for any C2 smaller than C1 we can construct instances that satisfy the C2 epsilon property but do not satisfy even the C1, 0.49 property. Okay? So this is absolutely natural -- yes?
>>: [inaudible] just having a softer version of the assumption or is that a requiring that all clustering [inaudible].
>> Maria-Florina Balcan: Okay. So please, please bear with me for a second. At the end, actually, I have multiple answers for the three questions that I got, which are about the same topic.
So I find that assumption [inaudible] from a theoretical point of view, because we get around inapproximability results by using an implicit assumption that might be present anyway. I'm not promoting it as something -- I'm not saying that this is satisfied in the real world. Although I do have some experimental evidence for it. And I'll comment on that. So I'm going to have a whole part of the talk where I'm going to talk more broadly, and it may be more interesting to consider broader properties. But I find this is a great assumption from a theoretical perspective.
>>: [inaudible].
>>: [inaudible].
>> Maria-Florina Balcan: So it's along the same lines, please wait, okay.
>>: It's not exactly the same line. I just -- so I don't understand what is [inaudible] about the objective phi because if phi is actually the distance, so you know, if phi were to [inaudible] the distance between the clusters and [inaudible] at the beginning then what does that mean?
>> Maria-Florina Balcan: So this assumption -- so here we assume -- so it's an assumption about how these distances and [inaudible] relate. The distance, the objective phi, the [inaudible] objective phi [inaudible].
>>: [inaudible].
>> Maria-Florina Balcan: Yes, it's an assumption. And not all instances are going to satisfy this assumption. But the type of guarantee that we make is that if the assumption is satisfied then we'll be able to cluster well.
>>: The assumption is for a specific objective function?
>> Maria-Florina Balcan: Right. Exactly. It's not a generic assumption. So it's an assumption. Yeah. Okay. Good. So okay. So just a quick comment before I move on to some concrete results and hopefully clarify some of the questions.
So the comment is that from an approximation algorithms perspective, an absolutely legitimate and natural motivation for trying to improve the approximation ratio from, say, C1 to C2, where C2 is smaller than C1, is that maybe our data satisfies the C2 epsilon property but not even the C1, 0.49 property. Okay? And this is absolutely legitimate. We can show such datasets. However, the very nice and distinctive thing is that in our work we can do much better. We are able to cluster well even for values of C for which getting a C approximation to the objective phi is actually NP-hard. So that's an interesting fact. All right. Then let me now give examples of results that we can show in this framework. So here is an example. We can show that for the k-median clustering objective, if the data satisfies the C epsilon property then we can get order of epsilon over C minus one close to the target clustering. And moreover, if the target clusters are sufficiently large, we can even get epsilon close to the target clustering, where the notion of large depends on C.
>>: So does that [inaudible] it needs the C in epsilon?
>> Maria-Florina Balcan: It does. Yeah. So it needs --
>>: [inaudible].
>> Maria-Florina Balcan: It's not clear, because again you need to be careful: if I give you a clustering, you cannot test how close it is to the target clustering because you don't know the target clustering. So you cannot just try binary search. So you need to be more careful and to consider the [inaudible].
>>: It says here is [inaudible] of the target cluster.
>> Maria-Florina Balcan: Yes. We can do something similar for the k-means clustering objective, and these results appear in the original paper. And we also looked at the min-sum clustering objective.
And so here we show again that if the data satisfies the C epsilon property and if the target clusters are sufficiently large, then we can get order of epsilon over C minus one close to the target clustering. Now, in the case of arbitrarily small target clusters, if the number of clusters is smaller than log N over log log N, then again we can get order of epsilon over C minus one close to the target. However, if K is larger than log N over log log N, then what we do is we output a small list such that the target clustering is close to one of the clusterings in the list. And this is actually joint work with Mark Braverman. So these are examples of results. And I should also --
>>: K is the number of clusters?
>> Maria-Florina Balcan: The number of target clusters.
>>: In stone? [inaudible].
>> Maria-Florina Balcan: Yes.
>>: [inaudible].
>> Maria-Florina Balcan: Yes. It's part of the input. Okay. So these are examples of positive theoretical results. And I should also point out that in a recent UAI paper we actually implemented the algorithm for large clusters for the k-median objective, and it turns out that this algorithm, or a variant of this algorithm, provides state of the art results for protein clustering. Okay. So this algorithm seems to be useful. So in other words, we [inaudible] dataset where definitely the assumption is satisfied. Obviously it's not necessarily going to be satisfied on lots of datasets. Okay. And I hope that this provides a partial answer to your question. And I have an even more complete answer at the end of my presentation, once I go through the C epsilon property. All right. So this is an overview of the type of results that I'm going to talk about. And for the rest of my presentation I'm going to pick one objective in particular, the k-median clustering objective, and I'm going to show how we can cluster well if the C epsilon property is satisfied. All right.
So let's assume that indeed for our given dataset the C epsilon property is satisfied; that means any C approximation to the k-median optimal solution is in fact epsilon close to the target clustering. Okay? And just to simplify things, just to avoid technicalities, let's assume that the target clustering is the k-median optimal solution and that all the clusters are large enough, so they have size at least two times epsilon times N. This is again just to avoid technicalities in the presentation. We don't need these assumptions in general. Then let's introduce some notation. For any point X, let's denote by W of X the distance to its own center, and let's denote by W2 of X the distance to its second closest center. And let's moreover denote by W average the average over all points X of W of X. And so that means that just by definition OPT will be N times W average, where OPT is the value of the optimal k-median solution, which by my assumption corresponds to the target clustering. Okay. So this is just notation. Now, let me describe two implications that we can derive. The first one is an implication of the C epsilon property. And it says that if the C epsilon property is true, then we can show that at most epsilon times N points can have W2 smaller than C minus one times W average over epsilon. Okay? Why? Because otherwise, if more than epsilon N points had W2 that small, then what we could do is move epsilon N of those points to their second closest cluster, and we would do so without increasing the objective by more than C minus one times W average over epsilon, times epsilon N, which is C minus one times OPT. And so you get a clustering which is still a C approximation to the k-median optimal solution but which is now epsilon far from the target clustering. So the C epsilon property would be contradicted. Okay?
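The counting argument above can be written out as follows. This is a sketch in the talk's notation, with $B$ standing for a hypothetical set of exactly $\epsilon n$ points whose $w_2$ is below the threshold (the symbol $B$ and the assumption that $\epsilon n$ is an integer are introduced here only for illustration), and with centers held fixed while the points of $B$ are moved to their second closest cluster.

```latex
\begin{align*}
w_{\mathrm{avg}} &= \frac{1}{n}\sum_{x} w(x), \qquad \mathrm{OPT} = n\, w_{\mathrm{avg}},\\[4pt]
\text{increase in cost}
  &\le \sum_{x \in B} \bigl(w_2(x) - w(x)\bigr)
   \le \sum_{x \in B} w_2(x)
   < \epsilon n \cdot \frac{(c-1)\, w_{\mathrm{avg}}}{\epsilon}
   = (c-1)\,\mathrm{OPT},\\[4pt]
\text{new cost} &< \mathrm{OPT} + (c-1)\,\mathrm{OPT} = c \cdot \mathrm{OPT}.
\end{align*}
```

So the modified clustering would be within a factor $c$ of optimal while differing from the target on $\epsilon n$ points, which is what the $(c,\epsilon)$ property rules out.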
So a consequence of the C epsilon property is that at most epsilon times N points can have W2 smaller than C minus one times W average over epsilon. Now, an even simpler fact to prove is that at most five epsilon N over C minus one points can have W greater than C minus one times W average over five epsilon. And this just follows from Markov's inequality. So it's very easy to show. And so that means that for the rest of the points, for what we call the good points, we have a huge gap. For the rest of the points, W of X is smaller than C minus one times W average over five epsilon and W2 of X is greater than C minus one times W average over epsilon, which is five times that quantity. Okay. So let's denote this quantity, C minus one times W average over five epsilon, by D critical. And so what we have is that most of the points, what we call the good points, one [inaudible] epsilon of the points, look like this. They are within distance D critical of their own center. And so that means that by the triangle inequality, any two good points in the same cluster will be within distance two times D critical of each other. Now, we also know that any good point is at distance at least five times D critical from any other center. And so again by the triangle inequality this implies that the distance between any two good points in different clusters is at least four times D critical. And so now that means that if we define a new graph G where we connect two points X and Y if they are within distance two times D critical of each other, then what we get is that the good points in the same cluster are going to form a clique, so they are all going to be connected in that graph G.
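To make the construction of G concrete, here is a small Python sketch. It assumes `w_avg`, `c` and `eps` are known (in practice they are not), represents the graph as a list of neighbor sets, and uses hypothetical helper names that do not come from the talk.

```python
def critical_distance(w_avg, c, eps):
    """d_crit = (c - 1) * w_avg / (5 * eps), as defined in the talk."""
    return (c - 1) * w_avg / (5 * eps)

def threshold_graph(points, dist, tau):
    """Connect two points whenever their distance is at most tau.

    Returns a list of neighbor sets indexed by point.
    """
    n = len(points)
    adj = [set() for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            if dist(points[i], points[j]) <= tau:
                adj[i].add(j)
                adj[j].add(i)
    return adj

# Toy 1-D data: two tight groups that are far apart.
points = [0.0, 0.5, 1.0, 10.0, 10.4]
dist = lambda a, b: abs(a - b)
d_crit = critical_distance(w_avg=0.5, c=2, eps=0.1)
G = threshold_graph(points, dist, 2 * d_crit)
print(d_crit, G)
```

On this toy input each tight group becomes a clique in `G` and there are no edges between the two groups.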
And we also have that good points in different clusters do not even have a neighbor in common in this graph G, because any two good points in two different clusters are at distance at least four times D critical of each other. And so that means that now basically the world will look like this. The good points are going to form cliques in the graph G, so these are the good sets over here. Now, good points might connect to bad points, and bad points might connect to bad points. However, any bad point is only going to connect to one good set, because we know that any two good points in two different sets are at distance at least four times D critical of each other. Okay? So the world basically looks like this. Good points form cliques, they connect to bad points, bad points can connect to bad points; however, any bad point can only connect to one good set. And so now that means that if we furthermore assume that the clusters are large, in particular if we assume that the clusters have size at least two times the size of the bad set, roughly, then what we can do is create a new graph H where we connect two points X and Y if they share enough neighbors in common in the graph G. And then in this new graph, the K largest connected components of H are going to look like this, and we can just output this clustering. And this will be a clustering of low error. In particular, the error that we get will be basically on the order of the fraction of the bad points. Because we know that any connected component of H is going to correspond to a good set plus possibly some bad points. So you correctly cluster all the good points and might make mistakes on the bad points, but that is a small fraction of the points. So we are able to get error of order epsilon over C minus one. Okay. So that's basically the algorithm for the large clusters case.
>>: [inaudible] question.
Are the bad points the ones that are currently labeled incorrectly or the ones that don't fit the sort of separation assumption?
>> Maria-Florina Balcan: Right. So the bad points are defined in the analysis, right. They are all those points that do not satisfy the property that they are much closer to their own center than to any other center. So the bad points are defined in the analysis. Okay. Now, if the target clusters are not so large, then the previous algorithm that I described doesn't really work. Why? Because you could have some clusters that are completely dominated by bad points. However, luckily it turns out that an algorithm that is equally simple is going to do the right thing, okay? And what's the algorithm? Just as before, we construct the graph G where we connect two points within distance two times D critical of each other. Then what we do is we just pick the vertex of the highest degree in the graph G. We pull out its entire neighborhood and we just repeat: again, pick the vertex of the highest degree in G, pull out its entire neighborhood and repeat. And what we can show is that this algorithm gives us a clustering of small error rate. And the main idea here is to basically charge off the errors to the bad points. Okay? And how? So basically here is the analysis. If the vertex V that we picked, the vertex of the highest degree, was a good point, then we are happy, because we know that we pull out an entire good set. Yeah? So that's a good case. However, if the vertex V that we picked was a bad point, like this one here, then we might end up pulling out only part of a good set. Okay? So you might end up missing, say, r_i good points over here. However, since we are greedy, we know that we must have pulled out at least r_i bad points as well.
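The greedy procedure just described can be sketched as below. This is a minimal illustration under a few assumptions not stated in the talk: the graph is a list of neighbor sets, degrees are counted among the points not yet removed, ties go to the smallest index, and the loop stops after `k` rounds with any leftover points returned separately.

```python
def greedy_clusters(adj, k):
    """Repeatedly take the highest-degree remaining vertex plus its neighbors.

    `adj` is a list of neighbor sets; returns (clusters, leftover_points).
    """
    remaining = set(range(len(adj)))
    clusters = []
    while remaining and len(clusters) < k:
        # Degree counted among remaining vertices; ties broken by smallest index.
        v = max(remaining, key=lambda u: (len(adj[u] & remaining), -u))
        ball = (adj[v] & remaining) | {v}
        clusters.append(ball)
        remaining -= ball
    return clusters, remaining

# Two cliques: {0, 1, 2} and {3, 4}.
adj = [{1, 2}, {0, 2}, {0, 1}, {4}, {3}]
clusters, leftover = greedy_clusters(adj, k=2)
print(clusters, leftover)
```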
This is the vertex of the highest degree, and so if we missed r_i good points here, that means that we must have pulled out at least r_i bad points here. And so that means that we can basically charge off all the errors to the bad points. And so we get a clustering of error again on the order of the fraction of the bad points. And here it is essential to use the fact that any bad point attaches to only one good set. So this is the only bad case that we have to consider.
>>: So this [inaudible] two clusters [inaudible] the original clusters?
>> Maria-Florina Balcan: Yes. So that's true, right. If you pick a bad point here, we might end up pulling out only part of a good set plus a bad set. So you might miss some good points. But the claim is that overall, if we sum over all target clusters, we're not going to miss too many good points, because since we are greedy here, if we missed r_i good points here, we must have pulled out at least as many bad points, and so that means that we can charge off such errors to the bad points. Right. And so that means that basically overall we get a clustering of low error. Okay. Now, going back to the large clusters case, it turns out that we can do even better. We can get even epsilon close to the target clustering if the target clusters are large, but now the notion of largeness is going to depend on this parameter C. Okay. And what's the main idea? The main idea is that there are really two kinds of bad points. Epsilon N of the bad points are confused bad points, in the sense that the distance to their second closest center is not too much larger than the distance to their own center. So these are confused points. Now, the rest of the bad points are not really confused: they have W2 large, larger than C minus one times W average over epsilon. However, they are far from their own center.
So these are non-confused bad points. And it turns out, I mean, it's kind of nice, that you can recover the non-confused bad points. In particular, here is what the algorithm does: given the clustering C prime output by the algorithm so far, we just reclassify each point X into the cluster of lowest median distance. Okay? So we just have a [inaudible] step. And why does this give a clustering of error at most epsilon? The idea is that the non-confused bad points have a huge gap, at least five times D critical, between the distance to their own center and the distance to any other center. Now, of course we don't really know what these centers are, but remember that any good point is within distance D critical of its own center. And so combining these two facts we get that the non-confused bad points are much closer to good points in their own cluster than to good points in any other cluster. Now, if the clusters are large enough, then the median will be controlled by good points. And so the non-confused bad points are going to be pulled in the right direction. So we're going to reclassify them correctly.
>>: But you don't compute the median. Do you compute the median?
>> Maria-Florina Balcan: Yeah. Yes. So given the clustering so far, for each point X we compute the median distance to each of the clusters. And we assign it to the one of smallest median distance. I mean, so it's a post-processing step. And if you do so for the large clusters case, this gives you a clustering of small error.
>>: [inaudible].
>> Maria-Florina Balcan: The median. By median I mean the statistical median.
>>: [inaudible].
>> Maria-Florina Balcan: Yes, yes, yes, yes, yes.
>>: Because the word median it seems --
>> Maria-Florina Balcan: Yeah, yeah.
>>: [inaudible].
>> Maria-Florina Balcan: No. Statistical median. All right.
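The reclassification step can be sketched in a few lines of Python. The assumptions here are illustrative: clusters are sets of point indices, the median for a cluster is taken over the distances from X to all of that cluster's current members (including X itself if it is a member), and ties go to the lower-numbered cluster.

```python
from statistics import median

def reassign_by_median(points, clusters, dist):
    """Move every point to the cluster with the smallest median distance to it."""
    new_clusters = [set() for _ in clusters]
    for i, x in enumerate(points):
        best, best_med = None, float("inf")
        for idx, members in enumerate(clusters):
            if not members:
                continue  # an empty cluster has no median distance
            m = median(dist(x, points[j]) for j in members)
            if m < best_med:
                best, best_med = idx, m
        if best is not None:
            new_clusters[best].add(i)
    return new_clusters

# Point 3 (value 0.3) starts in the wrong cluster and gets pulled back.
points = [0.0, 0.2, 0.4, 0.3, 10.0, 10.2, 10.4]
clusters = [{0, 1, 2}, {3, 4, 5, 6}]
dist = lambda a, b: abs(a - b)
fixed = reassign_by_median(points, clusters, dist)
print(fixed)
```

Using the median rather than the mean is what makes the step insensitive to a minority of bad points inside each large cluster.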
And now a number of technical issues. So in particular, the description of the algorithm that I had so far depends on this value D critical, which is C minus one W average over five epsilon, which depends on W average, which in turn depends on knowing OPT, which is the value of the optimal solution, say for the k-median. And now of course we don't really know what that is. Okay. Now, how can we do this? In the large clusters case, what we can do is the following: we can start with a low guess of W and we can keep increasing it until we get to a point where the K largest components of the graph H are large enough and cover a large fraction of the whole set of points. And we can show that if we do so, then we do get a clustering of low error. And at a high level the idea is as follows. So we keep increasing the value of W, and if we do reach the right value of W, then the clustering that we output clearly has low error, because I just argued that. Now, however, our guess might be too small, so we might stop earlier. But because we make sure that all the components that we output are large enough, of size at least, say, the size of the bad set, we can be sure that all the components of the graph H correspond to different good sets. And this, together with the fact that we only output clusters which cover a large fraction of the space, implies that the clustering that we output has a small error rate. And in order to show this we basically use the structure of these graphs G and H, and in particular we use the fact that for smaller values of W these graphs are only sparser. Okay? So that's the high level idea. Yes? >>: So if I remember correctly the algorithm only depended on epsilon and C through D critical?
>> Maria-Florina Balcan: It depends on epsilon and C, which assumes that these are the right [inaudible] parameters, but it also depends on W average, which is OPT over N. So ->>: Which is interdependent on epsilon and C besides the ->>: Besides [inaudible]. >> Maria-Florina Balcan: Besides D critical. It's also on -- so the original algorithm, okay, you mean -- no. No. Well, yeah. Yeah. No. >>: In that case you are using [inaudible] C epsilon. The algorithm is [inaudible] way of finding D critical, so that's an important property for the [inaudible] to know what the assumption is. >> Maria-Florina Balcan: Well, you mean practically speaking, yes. And I guess [inaudible] my student goes for that when he implements the algorithm, right, so he comes up with reasonable guesses of D critical. But theoretically speaking, this depends technically on epsilon and C and moreover also on W average, which is OPT over N, and we don't really know OPT. But luckily it turns out that in the large clusters case we can implement the [inaudible] of this algorithm where we keep increasing the value of W until the K largest connected components of H cover a large portion of the space and are large enough, and if we use the structure of our problem [inaudible], this way we'll get a clustering of low error. But this trick only works in the case where we have large clusters. And ->>: [inaudible]. >> Maria-Florina Balcan: Is the number of bad points. I guess the fraction of bad points. So this should actually be B times that. B is the fraction of bad points. >>: [inaudible] C minus one ->> Maria-Florina Balcan: What's the exact [inaudible] basically, okay, you are asking what's the exact quantity. Let me quickly take a look. Whoops. Right. Epsilon over C minus one times N. Yeah. Okay. Now, in the small clusters case, however, the trick that I had on the previous slide doesn't really work.
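The guessing procedure for the large clusters case described above (increase the guess of W until the K largest components of H are large enough and cover most of the points) could be sketched as follows. This is a hedged sketch under several assumptions: `components_for` is a hypothetical callable standing in for "build G and H for this guess and return the connected components of H", and the thresholds `min_size` and `min_coverage` are placeholders, since the transcript does not give their exact values.

```python
def cluster_with_unknown_w(candidate_ws, components_for, k, n, min_size, min_coverage):
    """Try guesses of w in increasing order and return the first acceptable clustering.

    candidate_ws   : iterable of guesses for w (assumed to be supplied by the caller)
    components_for : hypothetical callable, w -> list of components (lists of point indices)
    k              : number of clusters
    n              : total number of points
    min_size       : every output component must have at least this many points
    min_coverage   : the k output components must cover at least this fraction of n
    """
    for w in sorted(candidate_ws):
        # Keep only the k largest components for this guess.
        comps = sorted(components_for(w), key=len, reverse=True)[:k]
        if len(comps) < k:
            continue
        big_enough = all(len(c) >= min_size for c in comps)
        covered = sum(len(c) for c in comps)
        if big_enough and covered >= min_coverage * n:
            return w, comps
    return None  # no guess passed both checks


# Toy stand-in for the component structure at three guesses of w.
table = {
    1: [[0], [1], [2], [3], [4], [5]],
    2: [[0, 1, 2], [3, 4], [5]],
    3: [[0, 1, 2], [3, 4, 5]],
}
print(cluster_with_unknown_w(table, table.get, k=2, n=6, min_size=2, min_coverage=0.9))
```

The toy table mirrors the remark in the talk that the graphs are only sparser for smaller guesses: components merge as the guess grows, and the loop stops at the first guess that passes both checks.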
And what we have to do in the small clusters case is to first run a constant-factor approximation algorithm for k-median and then use its approximate value in our algorithm, and then the approximation factor only goes into the final error guarantee. But actually it's an interesting, I mean a good technical question to see if in the small clusters case we can get rid of running an approximation algorithm first. Okay. And I should also say that we have also looked at an inductive version of this. So there's an inductive setting where we assume that the set of points that we see is only a small random sample from a much larger abstract instance space, and our goal is to come up with a clustering of the whole instance space. And so algorithmically what we do in this case, we first draw a sample S. We apply our algorithm on the sample and then we insert new points into our clusters as they arrive. So, for example, for the large clusters case we create the graph G, the graph H. We take the largest components of H, and then when new points arrive we assign them based on the median distance to the clusters produced over the sample. And then we can argue that this gives us a clustering of low error. And in particular, the key property which we use in order to argue correctness for the large clusters case is that in each cluster we have more good points than bad points. This is what we needed in the case where we knew the value of W; if you don't really know the value of W, you need twice as many good points as bad points. Now, however, if the clusters are large enough and if the sample itself is large enough, this is also going to be true over the sample as well, and so this helps us to argue correctness overall. Okay. So we also have done an inductive version of this algorithm. And I should also -- okay.
Let me just mention that we also looked at k-means and min-sum. For k-means we can just derive a similar argument as for the k-median clustering objective. For min-sum, however, the argument is a bit more involved. The algorithm is again equally simple. So the first solution that we had for min-sum was to connect it in a standard way to something called the balanced k-median objective, where the goal is to find a partition and center points in order to minimize this quantity, which is the sum over all points of the distance to the corresponding cluster center times the size of the cluster they are in. Okay. So it turns out that for this balanced k-median you can derive similar properties as for k-median. However, the main difference is that in this case we don't really have a uniform D critical. So it turns out that here we could have large clusters with lots of points that are very concentrated, of small diameter, and we could have small clusters with very few points that have a very huge diameter. But luckily it turns out that a similar argument can be applied in this case as well if we [inaudible] extract the largest clusters first, and then the second largest, and so on. And this gives a way to deal with the large clusters case for min-sum or k-median if C is greater than 2. And in order to do the small clusters case we had to do a much more refined argument. All right. And so to summarize this part of the talk, let me go a little bit to a high level.
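To make the balanced k-median objective mentioned above concrete, here is a small sketch that computes it next to the min-sum cost. The function names are made up for illustration, min-sum is taken here over ordered pairs within each cluster, and centers are restricted to the cluster's own points; these are assumptions of the sketch, not details stated in the talk. Under these conventions, in a metric space, the two costs are within a factor of two of each other, which is one way to see why the two objectives can be connected.

```python
def min_sum_cost(clusters, dist):
    """Sum, over clusters, of all ordered pairwise distances inside the cluster."""
    return sum(dist(x, y) for c in clusters for x in c for y in c)


def best_center(cluster, dist):
    """Cluster member minimizing the total distance to the rest of the cluster."""
    return min(cluster, key=lambda c: sum(dist(c, y) for y in cluster))


def balanced_k_median_cost(clusters, centers, dist):
    """Sum over points of (distance to own center) times (size of own cluster)."""
    return sum(
        len(c) * dist(x, ctr)
        for c, ctr in zip(clusters, centers)
        for x in c
    )


d = lambda a, b: abs(a - b)
clusters = [[0.0, 1.0, 2.0], [10.0, 14.0]]
centers = [best_center(c, d) for c in clusters]
bkm = balanced_k_median_cost(clusters, centers, d)
ms = min_sum_cost(clusters, d)
print(centers, bkm, ms)
```

On this toy input the balanced k-median cost is 14 and the min-sum cost is 16, consistent with the factor-of-two sandwich.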
So one can view the usual approximation algorithms approach to clustering as saying: we cannot really measure what we want, that is, closeness to the ground truth, to go back to your comment, so let's set up a proxy objective that we can optimize and let's approximate it. Okay. So this is one way to think about the usual approximation algorithms approach to clustering. So this is almost like in this picture right here. So this guy is about to jump off the building and we tell him: we couldn't get a psychiatrist, but perhaps you'd like to talk about your skin. Dr. Perry here is a dermatologist. Yeah? So one could interpret the usual approximation algorithms approach to clustering in exactly this way. Okay? However, this is perhaps a bit too cynical, because the point is that if we end up using a C-approximation algorithm for clustering, that means that we must make an implicit assumption about how distances and the closeness to the ground truth relate. So we must make an implicit assumption about the structure of our problem. And so what we do in our work is we make it explicit, and if we make it explicit, we get around inapproximability results by using the structure that is implied by assumptions that we are making implicitly anyway. Okay. So this is one way to think about this work. And I think it's kind of cool both from a conceptual point of view and from a theoretical point of view. Now, of course I don't say that this assumption is an assumption that would be satisfied in practice and, in fact, what I say is that we should really analyze other interesting properties, other ways in which the similarity or the dissimilarity information relates to the target clustering. Okay.
And actually let me just make a comment that it would also be interesting to use this framework for other problems where the standard objective is just a proxy and where implicit assumptions could be exploited to get around inapproximability results. It would be nice to apply this to other problems as well. And just as a comment, recently I have been looking at the approximate epsilon-Nash problem in this framework, with some progress. Okay. And I think I have a few more minutes. Maybe I should make a few more comments. So this goes back I guess to one of the questions that I had earlier. So maybe the C epsilon property is not reasonable, but maybe an approximation of this is reasonable. And, in fact, we did analyze a relaxation of this C epsilon property, and in particular we analyzed one that allows for outliers. So for example we say that data satisfies a new C epsilon property if it satisfies the C epsilon property only after a number of outliers, ill-behaved points, have been removed, right. So we say data satisfies the C epsilon property after a number of really bad points have been removed. Okay. And for example in this case we need to output a list of clusterings and we cannot output just one clustering. Now, if we think about it, for the C epsilon property any two clusterings of a given dataset that satisfy the property will be close to each other, on the order of epsilon close to each other. However, it turns out that for this new C epsilon property two different sets of outliers could result in two very different clusterings satisfying this new C epsilon property. And this is true even if most of the points come from large target clusters. And so in this case the best we can hope for is to output a small list of clusterings with the property that any clustering that satisfies this new C epsilon property will be close to one of the clusterings in the list.
So a list of clusterings is necessary here. Okay? And so this is an example of a relaxation of the C epsilon property that we analyzed. And more generally, going back to this general picture, where our goal was to get a clustering of low error, one could imagine analyzing other types of properties about how the target clustering and the similarity information relate, and developing algorithms that can be used to cluster well when those assumptions are satisfied. And, in fact, I have a whole line of work that does this. And the interesting fact is that the C epsilon property was an example of a property where we can output just a single clustering which is close to the target clustering. However, it turns out that once you start analyzing more interesting properties, more realistic properties, it's basically not possible to output just a single clustering which is close to the target clustering, and instead you are looking at clustering well up to a tree or up to a list. And I think that actually this is a really interesting direction to analyze ->>: [inaudible]. >> Maria-Florina Balcan: Up to a tree, good. So what I mean is to output a tree such that the target clustering is close to a pruning of the tree. So let me give you an example, okay? So now I'm going away from the C epsilon property, and let's just look at another property, a natural property that you might hope your target clustering is going to satisfy with respect to your similarity information. So let's assume that you have the following property, which says that all points -- I mean, it sounds very strong -- all points are more similar to points in their own cluster than to points in any other cluster. So we are given similarity information that satisfies this property. Yes. So this sounds extremely strong, really strong.
All points are more similar to points in their own cluster than to points in any other cluster. However, it turns out that even in this case you don't have enough information to just output a clustering which is close to your target clustering. Why? Because you could have multiple different clusterings of the same data that satisfy this property. And since you have no labeled examples it would be impossible for you to know which of them is your target clustering. And I have an example here. So say that you have these four blobs over here, where the similarity within each of the blobs is one, the similarity down is a half, and the similarity across is zero. Now if you think about it, even if I tell you that the number of clusters is exactly three, we still have multiple clusterings, in particular this one here and this one here, that satisfy this property. They both satisfy the property that each point is more similar to points in its own cluster than to points in any other cluster. >>: [inaudible] in this case the assumption that there's two clusters is false and there are really four clusters and we should output the [inaudible] clustering to satisfy the clusters. >> Maria-Florina Balcan: Well, this would be ->>: [inaudible]. >> Maria-Florina Balcan: This will be one way to solve the problem. But a different way to solve the problem is to -- well, actually no. My target clustering to be honest is maybe this one. I mean, this is my target clustering. And this is what I want to see as an output. >>: [inaudible] comes back to my understanding of the [inaudible] if the starting cluster is a [inaudible] objective as [inaudible] so the advantage of the k-means or cluster [inaudible] some objective definition of [inaudible] clustering but here the notion of target clustering which -- how is that.
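The four-blob example above can be checked mechanically. The sketch below is an illustration only: it assumes two points per blob, encodes each point as a hypothetical `(column, row, index)` triple, and uses the similarities stated in the talk (one within a blob, a half between the two blobs in the same column, zero across columns). It then tests which clusterings satisfy the property that every point is strictly more similar to all points in its own cluster than to any point outside it.

```python
def sim(p, q):
    """Similarity from the example: 1 within a blob, 0.5 down a column, 0 across."""
    col_p, row_p, _ = p
    col_q, row_q, _ = q
    if col_p != col_q:
        return 0.0
    return 1.0 if row_p == row_q else 0.5


def satisfies_property(clusters):
    """True if each point is strictly more similar to its own cluster than to any other."""
    for i, cluster in enumerate(clusters):
        for x in cluster:
            inside = [sim(x, y) for y in cluster if y != x]
            outside = [sim(x, z) for j, other in enumerate(clusters) if j != i for z in other]
            if inside and outside and min(inside) <= max(outside):
                return False
    return True


def blob(col, row):
    return [(col, row, 0), (col, row, 1)]


tl, bl, tr, br = blob("L", "T"), blob("L", "B"), blob("R", "T"), blob("R", "B")

left_merged = [tl + bl, tr, br]   # three clusters: left column merged
right_merged = [tl, bl, tr + br]  # three clusters: right column merged
across = [tl + tr, bl, br]        # three clusters: merged across columns

print(satisfies_property(left_merged), satisfies_property(right_merged), satisfies_property(across))
```

Both three-cluster solutions that merge one column pass the check, while merging across columns fails. The same check also passes for the four blobs taken separately and for the two columns, so all of these consistent clusterings sit inside one hierarchy (all points, then columns, then blobs), which is the sense in which a tree can represent them at once.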
>> Maria-Florina Balcan: Well, usually in supervised learning we have the notion of a target function, right? We have the notion of a target function, we use labeled examples and we learn it, right? Similarly here we have the notion of a target clustering. We use the similarity information that we are given and we output something that is close to our target clustering. And by this example ->>: Where this notion comes from. >> Maria-Florina Balcan: I mean the true clustering of proteins by function, for example. I mean it's a target clustering, right? So for example for clustering protein sequences by function, there's a true clustering of protein sequences by function in that case. It's a simple case. Now here, it's a true ->>: [inaudible] how do you tell that this is the true ->> Maria-Florina Balcan: So the assumption is that the user would know to recognize it, right? So I would be able to -- if you are on ->>: The user when he knows [inaudible] I mean, they showcased it so -- his knowledge is not [inaudible] there is some way [inaudible]. >>: Just [inaudible] in different forms. You can get these back on the form of [inaudible] and ask them if this is a cluster or not or you can give them pairs and ask them [inaudible] same cluster or not. So there's multiple ways to [inaudible] from users [inaudible]. [brief talking over]. >>: [inaudible] target function which [inaudible] the function is -- you can see what the function [inaudible]. >> Maria-Florina Balcan: Yes. Similarly here I can see what it does. So I can see the partition, right. >>: No. I mean, you can't see a target as [inaudible] action. They can give you something -- I don't know whether it's [inaudible] target or [inaudible] I never see it in action. >> Maria-Florina Balcan: What do you mean by that?
>>: I cannot clarify -- what it is equivalent of, you know, the [inaudible] function but I see that function being acted on by [inaudible]. >> Maria-Florina Balcan: Okay. >>: So there's nothing -- no equivalent of that here. I can see what this function does on ->> Maria-Florina Balcan: I can see the label of every single -- right. So similarly, exactly like [inaudible] what he said, for example, I can verify it. So maybe I can take a few points from the versatile document and so maybe the algorithm presents me all topics as a proposed cluster. I can draw a few points at random and then I can ask the user, is this really a true cluster? And the answer might be yes, it might be no. In that case, what my algorithm would do would then be to split into two, and then you get a few points at random from [inaudible] and then you verify whether those points are in the same cluster or not. So you can just very -- I mean ->>: But what I'm saying is that is not part of the [inaudible]. >> Maria-Florina Balcan: Well, it is ->>: [inaudible] saying, you know, you can show the customer and ask him if ->> Maria-Florina Balcan: No, it's an assumption -- we assume. No, no, no, it's an assumption. It's an assumption that there is a hidden target clustering. And somebody knowing the truth, the ground truth labels, can measure closeness to it. The algorithm cannot measure closeness to it, but the user can verify closeness to it. >>: This target is not part of ->> Maria-Florina Balcan: It is. It is implicitly. It is. It's just that the algorithm cannot use the labels, cannot use the ground truth labels, but once you output, for example like here, a tree, and you also propose a clustering, the user can verify closeness to it. I mean, that's an implicit assumption. >>: I think that that [inaudible] of this discussion [inaudible].
>> Maria-Florina Balcan: Well, the problem with clustering is [inaudible]. What I try to do in my work is to make it well posed, and actually the way I want to think about this is that analyzing these properties is in a way trying to make it well posed. And by the way, here's an example of a property. So the way I think about one of these properties, or the C epsilon property, is that it's really like a concept class in supervised learning, right? It captures our prior knowledge about the clustering problem, right? In supervised learning, if you assume that the target function is a linear separator, you use a linear separator learning algorithm. If you assume it's a decision tree, you use a decision tree learning algorithm. And similarly here, depending on my assumption, my prior knowledge about how the similarity function relates to the target clustering, I'm going to use a certain clustering algorithm versus another. I mean, the only interesting fact that comes up in the context of clustering is that since we have no labeled examples at all, when you analyze more realistic properties you really want to output not only a clustering but maybe a different structure, say a tree that compactly represents many different clusterings that satisfy the property. >>: [inaudible] the results of [inaudible] if you try to mix it [inaudible]. >> Maria-Florina Balcan: So he mentions axioms about the clustering algorithm, which is a bit different. >>: Yes. >> Maria-Florina Balcan: He's ->>: It's true. But [inaudible] you've taken a different view on that.
But he was trying to say, and this is what we see here, that if you make certain assumptions about what you can expect to happen, so things like, you know, if I just blow up everything [inaudible] expect my cluster to change and stuff like that, and if you make this kind of assumption, still you can come up with different solutions which all satisfy your assumptions. So at this. [brief talking over]. >>: Sorry? >>: In this case [inaudible] this assumption that the number of clusters [inaudible]. [brief talking over]. >> Maria-Florina Balcan: No, in the more general model ->>: [inaudible]. I don't think that it's a done deal, right? But still I think that the sum total that we see here is exactly this type of assumption where, you know ->> Maria-Florina Balcan: Right. So even in Kleinberg's case, first of all, his approach is a bit different. He comes up with axioms about reasonable clustering algorithms which, as you say, are questionable. But he forces the algorithm to output a partition. Even in this case, if you allow the algorithm to output a hierarchy or something more, then these axioms will break. So yeah. And by the way, for this more general model, K is not fixed. That was just for the C epsilon property, the specific first part of my talk. Yeah? >>: Yes. Most NP reductions start with extremely bad problems, the clustering can barely be made to work. >> Maria-Florina Balcan: Yeah. >>: [inaudible] where K is of clustering doesn't make sense. >> Maria-Florina Balcan: So one way to interpret this work is -- okay. So, yeah, thank you, that's a good point. So there are two ways to interpret this work. So the C epsilon property, going back to the first part of the talk. So one way to interpret it is, well, we make implicit assumptions explicit. So this is one way to interpret it. We make an assumption of how the target function -- the [inaudible] with the similarity information.
So this is one way to interpret it. A different way to interpret it is that we show that we can cluster well if natural stability assumptions are satisfied. And in particular, the stability assumption is that any two clusterings that have a good, say, k-median value are close to each other. Right. And in that case we show that you can cluster well. You can -- yeah. So that's a very good point actually. All right. More questions? >>: Sort of a broad question, I guess. So in supervised learning you have labels. >> Maria-Florina Balcan: Yeah. >>: [inaudible] that you try to [inaudible] the function that gives you the label given the data point. And in both supervised and unsupervised learning you have to make an assumption with your model. And you could use like a median -- you could use the same model as your median, supervised learning you could say you're in a [inaudible]. >> Maria-Florina Balcan: Yeah. >>: So you could use the same models in supervised and unsupervised settings? Like [inaudible] ->> Maria-Florina Balcan: Well, it's -- yeah. So in supervised you have a lot more. So the algorithm has a lot more power because it can get labeled examples. And moreover you can test how well -- you know, I do some calculation and come up with a hypothesis and then I can test it, you know. But in the clustering case the problem is somehow more difficult because ->>: Yeah. I guess in the unsupervised case if you have the right model ->> Maria-Florina Balcan: Yeah? >>: So like in this case it's like the -- I guess it seems the C epsilon assumption is basically, did you have the right model for the data, like is the data separable or whatever in feature space. >> Maria-Florina Balcan: Right. So these are assumptions. The C epsilon assumption is an assumption; this would be a different assumption, right.
And the -- and these are ->>: [inaudible] if you have the right model then is the unsupervised case sort of -- like is it at a disadvantage, like is it going to get confused, is the unsupervised case more likely to, say, split a cluster [inaudible] than the supervised case? Is it more likely to make those kinds of mistakes? I mean, other than the fact that you can't get the actual [inaudible] if you have the right model, is it more likely to split clusters or return clusters [inaudible]? I don't know if I'm ->> Maria-Florina Balcan: Well, you need to be more specific, right. Right. So having labels -- even for supervised learning you can use similarity information in addition to labels. And you already have much more power. Like learning with kernel functions, similarity functions. You have similarity functions plus labels. The labels give you a lot more power, right? Because the algorithm can use the labels to check, you know. It does some computations, it comes up with some hypotheses and then you can check, you know, is this close to being something good or not. But for clustering you cannot do that, because the only information you have is the similarity information and so -- I mean there is a clear advantage in that sense. >>: I guess in the supervised case you definitely would not make the kind of mistakes that an unsupervised ->> Maria-Florina Balcan: Right. So maybe split the clusters when they should have been together, for example. >>: Yeah. Okay. >> Maria-Florina Balcan: Yeah. >> Nikhil Devanur: So Nina will be here until Monday and [inaudible]. Thank you. [applause]