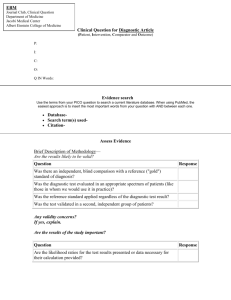

M1-PatientsPopulations-MedicalDecisionMaking
