IRLbot: Scaling to 6 Billion Pages and Beyond

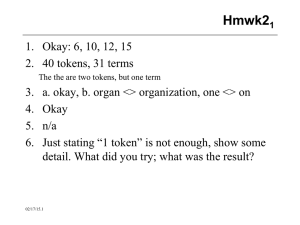

Hsin-Tsang Lee, Derek Leonard, Xiaoming Wang, and Dmitri Loguinov, 2008
Presented by Gleb Borovitsky

Presentation plan
1. Paper content.
2. Web crawler definition.
3. Focus of the paper.
4. Complications and problems.
5. Problems faced.
6. Results of the research.

Paper content
1. Brief introduction.
2. Definition of open problems.
3. Web-crawling algorithms.
4. Performance evaluation of the web crawler.

Search engine
Search engine components:
• Web crawlers
  – find, download, parse content
• Data miners
  – extract keywords
  – rank documents
  – answer user queries

Basic crawler
Crawling algorithm:
1) Remove the next URL u from the queue Q.
2) Download u and extract the new URLs u1 … uk from it.
3) For each ui, check its uniqueness against URLseen.
4) Add the URLs that pass the check to Q and URLseen.
5) Update RobotCache.
May also maintain DNScache.

Crawler examples

Paper focus
Main points:
• Design of a high-performance web crawler
• Definition of the main obstacles
• Implementation and analysis of its effectiveness

Main obstacles
The paper addresses 3 serious problems:
1. Designing a web crawler that can process a large number of pages at high speed while operating with fixed resources.
2. Designing robust algorithms for computing site reputation during the crawl.
3. Avoiding crawler stalls due to politeness restrictions.

Limitations
• Scalability (N) – number of pages
• Performance (S) – speed
• Resource usage (Σ) – CPU and RAM resources

Complications
• Dynamically generated content
  – infinite loops
  – high-density link farms (SEO)
  – high number of hostnames (*.blogspot.com)
• Web spam (spam farms)

BFS ineffectiveness
1. The queue contains many spam links due to high branching.
2. Many hostnames exist within a single domain.
3. Deliberate delays in HTTP and DNS requests.
A better choice is to decide link priority in real time.
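
Sketch: the basic crawler as BFS
When Q in the basic crawling algorithm is a plain FIFO queue, the crawl visits pages in breadth-first order, which is the behavior the BFS ineffectiveness points refer to. The following is only a minimal sketch under stated assumptions: `TOY_WEB`, `fetch_links`, and `basic_crawl` are hypothetical names, the "web" is an in-memory dictionary rather than real downloads, and step 5 (RobotCache) and DNScache are left out.

```python
from collections import deque

# Hypothetical in-memory "web": URL -> outgoing links.
# A real crawler would download and parse pages instead.
TOY_WEB = {
    "a.example/": ["a.example/1", "b.example/"],
    "a.example/1": ["a.example/", "b.example/"],
    "b.example/": ["b.example/x", "a.example/1"],
    "b.example/x": [],
}


def fetch_links(url):
    """Stand-in for step 2: 'download' url and return its extracted links."""
    return TOY_WEB.get(url, [])


def basic_crawl(seed, max_pages=100):
    """Crawl from seed in FIFO (breadth-first) order; return the visit order."""
    queue = deque([seed])   # Q
    url_seen = {seed}       # URLseen
    order = []
    while queue and len(order) < max_pages:
        u = queue.popleft()            # step 1: remove next URL from Q
        order.append(u)
        for ui in fetch_links(u):      # step 2: extract u1 ... uk
            if ui not in url_seen:     # step 3: uniqueness check
                url_seen.add(ui)       # step 4: add to URLseen and Q
                queue.append(ui)
    return order


if __name__ == "__main__":
    print(basic_crawl("a.example/"))
```

Because every newly found link is appended to the back of the same queue, a page with very high branching (for example a link farm) fills Q with its own links, which is why deciding link priority during the crawl is preferable.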
BFS (Breadth-First Search)

Politeness
Incorporating per-website and per-IP hit limits into a crawler is easy; however, preventing the crawler from “choking” […] is much more challenging

IRLbot
Objectives:
• Keeping a fixed crawling rate (1000 pages/s)
• Downloading a large number of pages (1 billion)
• Operation on a single server

Problems faced
• Verifying the uniqueness of URLs
• Backlogging of multimillion-page sites
• Live-locks in processing URLs that exceed their budget

Verifying the uniqueness of URLs
• DRUM (Disk Repository with Update Management)
  – Stores large volumes of hashed data and implements fast check/update using bucket sort.
[Figure: bucket sort]

Backlogging of multimillion-page sites
• STAR (SPAM Tracking and Avoidance through Reputation)
  – Dynamically allocates a budget of allowable pages to each domain (and its subdomains) based on its in-degree (incoming links).

Live-locks in processing URLs
• BEAST (Budget Enforcement with Anti-SPAM Tactics)
  – Sites that significantly exceed their budget are placed at the back of the queue and examined less frequently.

IRLbot performance and results
• Duration = 41.25 days.
• N = 6 380 051 942 HTML pages.
• S = 1 789 pages/second (319 Mbit/s).
• Σ – just one stand-alone server (24-disk RAID5).
“our manual analysis of top-1000 domains shows that most of them are highly-ranked legitimate sites, which attests to the effectiveness of our ranking algorithm.”

Paper evaluation
+
• Really impressive results, backed by an implementation.
• Effective approaches and deep research.
–
• Doesn’t explain much about caching techniques.
• The organization of the paper could be better.

Thank you for your attention!
Questions?
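
Backup: sketch of a DRUM-style batched uniqueness check
The DRUM slide says that hashed data is checked and updated in bulk using bucket sort. The sketch below illustrates only that general idea and is not the paper's implementation: `ToyDrum`, `url_hash`, and `BUCKET_BITS` are hypothetical names, in-memory lists stand in for disk files, and a truncated SHA-1 is an assumed hash choice. URLs are spread over buckets by the top bits of their hash, and each bucket is sorted and merged against its stored hashes in a single pass.

```python
import hashlib
from heapq import merge

BUCKET_BITS = 2                  # assumed value: 2 bits -> 4 buckets
NUM_BUCKETS = 1 << BUCKET_BITS


def url_hash(url):
    """64-bit hash of a URL (truncated SHA-1; an assumption of this sketch)."""
    return int.from_bytes(hashlib.sha1(url.encode("utf-8")).digest()[:8], "big")


class ToyDrum:
    def __init__(self):
        # Per-bucket sorted hash lists; stand-in for sorted files on disk.
        self.store = [[] for _ in range(NUM_BUCKETS)]
        # Per-bucket pending (hash, url) pairs; stand-in for memory buffers.
        self.pending = [[] for _ in range(NUM_BUCKETS)]

    def check_update(self, url):
        """Queue a URL for a later batched check and update."""
        h = url_hash(url)
        self.pending[h >> (64 - BUCKET_BITS)].append((h, url))

    def flush(self):
        """Process all buckets; return the URLs that were not stored before."""
        unique = []
        for b in range(NUM_BUCKETS):
            batch = sorted(self.pending[b])    # sort the bucket's batch
            self.pending[b] = []
            stored = self.store[b]
            new_hashes, i, last = [], 0, None
            for h, url in batch:
                if h == last:
                    continue                   # duplicate inside this batch
                last = h
                while i < len(stored) and stored[i] < h:
                    i += 1                     # sequential scan, no random access
                if i < len(stored) and stored[i] == h:
                    continue                   # already stored
                new_hashes.append(h)
                unique.append(url)
            # Merge two sorted sequences to keep the bucket sorted.
            self.store[b] = list(merge(stored, new_hashes))
        return unique


if __name__ == "__main__":
    drum = ToyDrum()
    for u in ["x.example/a", "x.example/b", "x.example/a"]:
        drum.check_update(u)
    print(sorted(drum.flush()))
```

The point of batching is that each bucket is read and rewritten sequentially once per flush, instead of performing one random lookup per URL.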