Writing Binary Files to HDFS

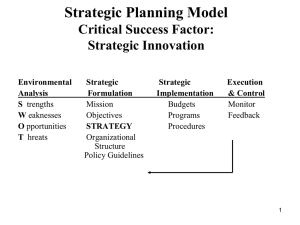

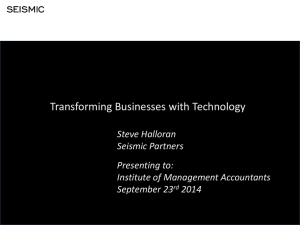

© 2014 Informatica Corporation. No part of this document may be reproduced or transmitted in any form, by any means (electronic, photocopying, recording or otherwise) without prior consent of Informatica Corporation. All other company and product names may be trade names or trademarks of their respective owners and/or copyrighted materials of such owners.

Abstract

You can run an Informatica mapping to write a binary file to HDFS. In the mapping, create a Data Processor transformation to generate the binary file. Define a complex file data object to read the binary file and write it to HDFS.

Supported Versions

• Informatica Big Data Edition 9.6.1

Table of Contents

• Overview
• Example
• Complex File Writer Mapping
• Mapping Run-Time Properties
• Customer Source Files
• Sales Source Files
• Joiner Promotions Transformation
• Joiner Products Transformation
• Customer Sales Data Processor Transformation
• Creating the Data Processor Transformation
• Write Binary Single File
• Creating a Complex File Data Object

Overview

You can run an Informatica mapping to write a binary file to HDFS. In the mapping, create a Data Processor transformation to generate the binary file. Define a complex file data object to read the binary file and write it to HDFS.

Example

The following example describes a sample use case. An organization receives sales data in large flat files. They need to organize the sales data by customer and store the information on HDFS.

The organization has multiple flat files that contain sales information. They need to consolidate the information by customer. The organization has a customer file, a sales file, a promotions file, a products file, and a countries file. The sales, the promotions, and the products files contain a Sale_ID field that identifies a specific sale transaction. The countries file identifies the country for each customer.

A developer creates a mapping to join the customer ID to the product information, and to join the customer ID to the promotions data. A Data Processor transformation consolidates the information from each file and writes an XML file to HDFS.

To write the binary file to HDFS, the developer completes the following steps:

1. Configure an Informatica mapping to process the sales data.
2. Configure the physical data objects that contain the source data.
3. Configure a Joiner transformation to join the customer ID to the products record. Configure another Joiner transformation to join the customer ID to the promotions record.
4. Configure a Data Processor transformation to read the customer data, products, promotions, sales, and countries records. Configure the Data Processor transformation to return an XML file that contains sales data by customer.
5. Configure a complex file data object to read the XML and write the binary stream to HDFS.

The example is part of the Informatica Big Data Trial Sandbox for Hortonworks VM. You can download the VM from Informatica Marketplace in the following location: https://community.informatica.com/solutions/bde_trial_sandbox_for_hortonworks

Complex File Writer Mapping

The Complex File Writer mapping combines multiple flat files into an XML file and writes the XML file to HDFS.

The following image shows the objects in the mapping:

The mapping contains the following objects:

• Customer flat file source files: The customer source files include information about customers. The files are the Customers and Countries flat files.
• Sales flat file source files: The sales source files include information about sales transactions. The files are the Sales, Products, and Promotions flat files.
• Joiner_Promotions: Joiner transformation that joins promotions with sales.
• Joiner_Products: Joiner transformation that joins sales with products.
• Customer_Sales_XML_Generator: Data Processor transformation that generates an XML file of the sales by customer.
• Write_Binary_Single_File: Complex file data object that writes one binary file to HDFS.

Mapping Run-Time Properties

Select the run-time environment to use when the mapping runs. When you run a mapping in the native environment, the Data Integration Service processes the transformation logic. When you run a mapping in the Hive environment, the Data Integration Service pushes the transformation logic to the Hadoop cluster through the Hive connection. The Hadoop cluster processes the data.

For this example, the run-time environment is Hive. You can select either environment.

Customer Source Files

The Customers and Countries flat files contain information about the customer.
The Customers source file contains the customer information, such as the customer ID, the name, and address.

The following table includes example Customer records:

| Cust_Id | Cust_Fir | Cust_Last | Cust_Gender | Cust_Yr | Cust_Street |
| --- | --- | --- | --- | --- | --- |
| 3 | Buick | Emmerson | M | 1939 | 7 South Boyd Circle |
| 4 | Frank | Hardy | M | 1934 | 17 Boyd Court |

The Countries source file contains the country and region information for each customer.

The following table includes example Countries records:

| Customer | CountryID | CountryCD | CountryName | SubRegion | Region |
| --- | --- | --- | --- | --- | --- |
| 3 | 52770 | IT | Italy | Western Europe | Europe |
| 4 | 52770 | IT | Italy | Western Europe | Europe |

Sales Source Files

The Sales, the Products, and the Promotions files contain information about the sales. Each file contains the sales ID that identifies a sales transaction.

The Sales file contains the customer ID, the sales ID, the product ID, and the promotion number.

The following table includes examples of Sales records:

| Customer | Sale_ID | Prod | Date/Time | Promo | Qty | Cost |
| --- | --- | --- | --- | --- | --- | --- |
| 3 | 1 | 18 | 1999-06-03 00:00:00 | 1 | 1.00 | 1758.11 |
| 4 | 169 | 24 | 2000-07-30 00:00:00 | 999 | 1.00 | 47.86 |

The Products file contains product detail information for the products in each sale. The Products file contains the sales ID, the product ID, and the fields that describe the product.

The following table includes examples of Products records:

| Sale_ID | Prod_ID | Prod_Name | Prod_Desc | Prod_Subcat | Prod_Cat |
| --- | --- | --- | --- | --- | --- |
| 1 | 18 | Envoy Amb | Envoy Amb | PCs | Hardware |
| 169 | 24 | PCMCIA modem/fax | PCMCIA modem/fax | Modems/Fax | Accessories |

The Promotions file contains promotion information for each sale. A Promotions record contains the sales ID, the promotion ID, and the fields that describe the promotion.

The following table includes examples of Promotions records:

| Sale_ID | Promo_ID | Promo_Name | Promo_Subcategory | Promo_Category |
| --- | --- | --- | --- | --- |
| 1 | 1 | Promotion 1 | PCs | Hardware |
| 169 | 999 | No Promotion | No Promotion | No Promotion |

Joiner Promotions Transformation

The Joiner_Promotions transformation joins the customer ID from the sales record with the fields from the promotions records.
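
As a rough illustration of this join, the following Python sketch matches the sample Sales and Promotions records from this section on their sale ID and keeps the customer ID together with the promotion fields. It is not how the Joiner transformation is implemented. The function name `join_sales_with_promotions` is hypothetical, and the choice to drop unmatched sales (an inner join) is an assumption made for the example.

```python
# Sample rows copied from the Sales and Promotions records in this section.
sales = [
    {"Customer": 3, "Sale_ID": 1, "Prod": 18, "Promo": 1},
    {"Customer": 4, "Sale_ID": 169, "Prod": 24, "Promo": 999},
]
promotions = [
    {"Sale_ID": 1, "Promo_ID": 1, "Promo_Name": "Promotion 1",
     "Promo_Subcategory": "PCs", "Promo_Category": "Hardware"},
    {"Sale_ID": 169, "Promo_ID": 999, "Promo_Name": "No Promotion",
     "Promo_Subcategory": "No Promotion", "Promo_Category": "No Promotion"},
]


def join_sales_with_promotions(sales_rows, promotion_rows):
    """Hypothetical helper: inner-join sales to promotions on the sale ID."""
    # Index promotions by sale ID so each sale is matched with one lookup.
    promotions_by_sale = {row["Sale_ID"]: row for row in promotion_rows}
    joined = []
    for sale in sales_rows:
        promotion = promotions_by_sale.get(sale["Sale_ID"])
        if promotion is None:
            continue  # assumption: unmatched sales are dropped (inner join)
        # Keep the customer ID from the sale plus every promotion field.
        joined.append({"Customer": sale["Customer"], **promotion})
    return joined


joined_rows = join_sales_with_promotions(sales, promotions)
for row in joined_rows:
    print(row)
```

Each joined row carries one customer ID and the promotion fields for one sale. The same pattern would apply to the Products records, which also carry the sales ID.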
The transformation returns a record that contains the promotion information for each sale for a customer.

The following image shows the ports in the Joiner transformation:

The master source is the sales record. The detail source is the promotions record. The join condition is SALES_ID = SALE_ID. The transformation passes the customer ID and the promotion fields to the Data Processor transformation.

Joiner Products Transformation

The Joiner_Products transformation joins the customer ID from the sales records with the product information from the products record. The transformation returns a record that contains the product information in each sale for a customer.

The following image shows the ports in the Joiner transformation:

The master source is the sales record. The detail source is the products record. The join condition is SALES_ID = SALE_ID. The transformation passes the customer ID and the product fields to the Data Processor transformation.

Customer Sales Data Processor Transformation

The Customer_Sales_XML_Generator transformation is a Data Processor transformation that processes the customers, the sales, the promotions, the products, and the countries files. The transformation returns an XML file that contains sales data by customer.

To process the source rows, enable the Data Processor transformation for relational input. Then, map the input ports to nodes in the XML output hierarchy.

The following image shows how the nodes from the input ports map to the output XML hierarchy and to the output ports in the Data Processor transformation:

The XML output hierarchy contains the customers node. Within each customer node are the customer's country and multiple-occurring sale nodes. Within each sale are product and promotion nodes.

The following text shows the structure of the output XML:

customers
    customer (multiple-occurring)
        customerid
        firstname
        lastname
        gender
        ...
        country
            countryID
            countrycode
            countryname
        sales
            sale (multiple-occurring)
                saleid
                timesold
                quantitysold
                ...
                product
                    productid
                    productname
                    description
                    ...
                promotion
                    promotionid
                    promotionname
                    promotionsubcategory

Note: The sample XML hierarchy does not include all the elements for each complex data type.

Creating the Data Processor Transformation

To create the Data Processor transformation, choose an XML schema that defines the XML output and then define the parser that processes the data.

1. In the Developer tool, click File > New > Transformation.
2. Select the Data Processor transformation and click Next.
3. Choose to create a Data Processor transformation with a wizard.
4. Select Relational Data as the input format and then click Next.
5. Select XML as the output format and click Next.
6. Browse for the XML schema that represents the structure of the target file. The schema for this example is customer_sales_schema.xsd. When you select this schema from the file system, the Developer tool imports the schema to the repository. The Developer tool shows that the customers node is the input hierarchy root.
7. Click Finish. The Developer tool creates the transformation in the repository. The Overview view appears in the Developer tool. You can view the input and the output ports in the Overview view.
8. Change the Output port precision to 65536, which is the maximum port size. The transformation must return an XML file in the port.
9. Click Input Mapping to view how the Developer tool maps the nodes from the input ports to the output hierarchy. The transformation writes the XML data to the binary Output port.
10. Save the transformation.

Write Binary Single File

Write_Binary_Single_File is a complex file data object that reads the XML file from the Data Processor transformation and writes the file to HDFS.

When you create a complex file data object, the Developer tool creates a read and write operation.
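
The hand-off from the Data Processor transformation to Write_Binary_Single_File can be pictured with a short Python sketch: build part of the XML hierarchy shown earlier, serialize it to bytes (standing in for the binary Output port), and write those bytes unchanged to a single file. This is only an illustration under stated assumptions, not Informatica's implementation. The helper names and the file name are hypothetical, a local temporary file stands in for the HDFS target, only a few elements of the hierarchy are populated, and treating the 65536 port precision as a byte limit is an assumption.

```python
import os
import tempfile
import xml.etree.ElementTree as ET

# Port precision from the steps above; treating it as a byte limit is an assumption.
OUTPUT_PORT_PRECISION = 65536


def build_customers_xml(customers):
    """Hypothetical helper: serialize customer records to XML bytes."""
    root = ET.Element("customers")
    for record in customers:
        customer = ET.SubElement(root, "customer")
        ET.SubElement(customer, "customerid").text = str(record["customerid"])
        ET.SubElement(customer, "firstname").text = record["firstname"]
        ET.SubElement(customer, "lastname").text = record["lastname"]
        country = ET.SubElement(customer, "country")
        ET.SubElement(country, "countryname").text = record["countryname"]
        sales = ET.SubElement(customer, "sales")
        for sale_record in record["sales"]:
            sale = ET.SubElement(sales, "sale")
            ET.SubElement(sale, "saleid").text = str(sale_record["saleid"])
            product = ET.SubElement(sale, "product")
            ET.SubElement(product, "productname").text = sale_record["productname"]
            promotion = ET.SubElement(sale, "promotion")
            ET.SubElement(promotion, "promotionname").text = sale_record["promotionname"]
    return ET.tostring(root, encoding="utf-8")  # returns bytes, not str


def write_binary_single_file(payload, path):
    """Hypothetical stand-in for the write operation: one payload, one file."""
    if len(payload) > OUTPUT_PORT_PRECISION:
        raise ValueError("payload exceeds the assumed output port size")
    with open(path, "wb") as target:  # binary mode: bytes pass through as-is
        target.write(payload)


# One customer built from the sample records in this document.
customers = [{
    "customerid": 3, "firstname": "Buick", "lastname": "Emmerson",
    "countryname": "Italy",
    "sales": [{"saleid": 1, "productname": "Envoy Amb",
               "promotionname": "Promotion 1"}],
}]
payload = build_customers_xml(customers)
# Hypothetical file name in a temporary directory that stands in for HDFS.
target_path = os.path.join(tempfile.mkdtemp(), "customer_sales.xml")
write_binary_single_file(payload, target_path)
```

The writer never parses the payload. It treats the XML as an opaque byte stream, which mirrors the idea of passing the binary port to a target that writes one file.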
Use the complex file data object write operation as the target in the mapping. Use an HDFS connection to access the Hadoop distributed file system. For this example, the complex file data object writes a single file to HDFS.

When you open the complex file data object in a mapping, you can view a source transformation and an output transformation in the editor. Click the Source transformation to edit the General, Port, and Sources properties. Select the Output transformation and edit the Advanced properties. The Advanced properties include the output file path, the file format, and the file compression format.

The following image shows the Advanced properties:

Creating a Complex File Data Object

The complex file data object receives the XML file from the Data Processor transformation and writes a binary stream to HDFS.

1. In the Developer tool, select a project or folder in the Object Explorer view.
2. Click File > New > Data Object.
3. Select Complex File Data Object and click Next. The New Complex File Data Object dialog box appears.
4. Click Browse next to the Location option and select a repository location.
5. In the Resource Location list, select Remote.
6. Click Browse next to the Connection option and select an HDFS connection.
7. To add a resource to the data object, click Add next to the Selected Resource option. The resource is the complex file to write to HDFS. You can override the resource in the mapping.
8. Browse for the resource to add to the data object and click OK.
9. Optionally, enter a name for the complex file data object. The default name is Complex_File_Data_Object.
10. Click Finish. A read data object and two write data objects appear under the Physical Data Objects category in the Object Explorer view.
11. Select the write_binary_single_file operation as a target in the mapping.
12. Click Advanced Properties in the complex file write data object. Configure the target file path, the target file type, and the type of compression.
13. Save the complex file data object.

Author

Ellen Chandler
Principal Technical Writer