Spreadsheet Auditing

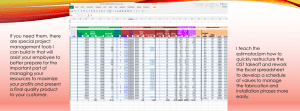
