The Moment Method for Combining Expert Judgements

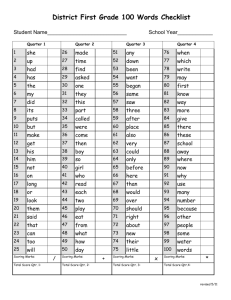

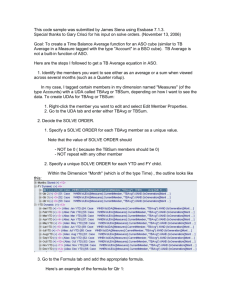

Bram Wisse* & Tim Bedford & John Quigley
University of Strathclyde
*TNO Defence, Security and Safety, The Hague, NL
tim.bedford@strath.ac.uk

Overview
• Expert judgement combination problem
• Discussion of the Classical Method
• Using expected values to specify uncertainties
• Performance based weighting for the combination of assessments of moments
• Trading off location and spread accuracy
• Comparison of methods
• Conclusion

Expert judgement combination problem
• Each expert specifies a distribution for the variable of interest
• “Combined expert” is a weighted mixture
• Good theoretical justification for taking a weighted mixture (linear pool)
• Problem is to choose weights
  – Equal weights
  – User determined
  – Analyst determined
  – Performance based

[Figure: three bar charts (categories 1st Qtr to 4th Qtr); two of them are labelled “Weight 0.5”]

Classical model of Cooke
• Data provided in terms of quantiles – usually 5, 50, 95%
• Model enables us to choose weights by means of an “asymptotically proper scoring rule”
• Scores calculated using quantities known only to the analyst
• Score is the product of two components
  – Calibration
  – Information
• Calibration shows whether experts are assessing probabilities accurately – measured by chi-square likelihood
• Information shows whether experts give narrow or broad uncertainty bands – measured by relative information to “merged” uncertainty bands

Classical Model Exercise
Used every year in Strathclyde risk class…

Experience of the class test
• Protests…
• Chatting…
• Attempted use of internet…
• Lack of geographical knowledge
• Overconfidence
• Almost every time, someone strategizes… deliberately wide bands

Issues with Classical model
• Definitely good at selecting experts who are good at assessing uncertainty
• Usually considered better than equal weights
• But…
• Trade-off of calibration and information is not clear
• Possible to “game” by giving wide uncertainty bands – it's only an asymptotically proper scoring rule

[Figure: two sets of uncertainty bands, each marked at 5%, 50% and 95%]
Wider uncertainty bands do better when there is a low number of calibration questions

Moment based method as alternative
• Moments are a valid theoretical way of representing uncertainties
• Can try to elicit means, variances etc. or get them implicitly from quantiles
• To go from quantiles to moments, use the Extended Pearson-Tukey method…

$$\mu_n = 0.185\,(x_{0.05})^n + 0.63\,(x_{0.50})^n + 0.185\,(x_{0.95})^n$$

where $x_{0.05}$, $x_{0.50}$ and $x_{0.95}$ are the expert's assessments of the 5%-, 50%- and 95%-quantile for variable $x$.

Key idea…
• Natural quadratic loss score for moments:

$$\varphi = \bigl(x - E(X)\bigr)^2$$

• Overall loss for expert $i$ over all variables:

$$\varphi_i = \sum_j c_j \bigl(x_j - E_i(X_j)\bigr)^2$$

Expert weighting
• To convert to a score… high loss implies low score
• Choose cut-off value $\alpha$ and give expert $i$ the score

$$s_i = \begin{cases} 0 & \text{if } \varphi_i > \alpha \\ \alpha - \varphi_i & \text{otherwise} \end{cases}$$

• Expert weight $w_i$ is the normalized score

$$w_i = s_i \Big/ \sum_j s_j$$

• This is a proper scoring rule – an expert who wants to maximize score should say what he/she thinks!
• Choose optimal $\alpha$ to minimize loss of combined expert
• Parameters $c_j$ show relative importance

Geometric interpretation, examples
Assessment of uncertain quantities X and Y, by 4 experts
[Figure: convex hull of the experts' assessments $e_1, \dots, e_4$, with $e_1 = (E_1(X), E_1(Y))$, and a point labelled $r$; a curve shows the linear pool expectations for all cut-offs $\alpha$ in $(\min_i \varphi_i, \infty)$]

Geometric interpretation, examples
Assessment of uncertain quantities X and Y, by 4 experts
[Figure: the same convex hull and linear pool expectations for all cut-offs $\alpha$ in $(\min_i \varphi_i, \infty)$, with a point labelled $r_2$]

Properties of proposed weighting scheme
1. The unnormalised weight of an expert is a proper scoring rule.
2. The expert with the smallest loss always remains in the pool.
3. The DM loss is always smaller than, or equal to, the loss of any individual expert.
4. The DM loss is always smaller than, or equal to, the loss obtained when using equal weights for all experts.
5. The weighting scheme defines a continuous mapping from a vector of expert losses to a vector of expert weights.

Coherent Expectations (based on the notion of prevision (De Finetti, 1974; Lad, 1996))
1. An expectation $E(X)$ for vector $X$ is coherent as long as there is no vector $s$ with allowable scale such that $s^T [X - E(X)] < 0$ for all $X$ in $R(X)$, the realm of $X$.
2. An expectation $E(X)$ for vector $X$ is coherent iff
   ● $\min R(X) \le E(X) \le \max R(X)$, and
   ● $E(X+Y) = E(X) + E(Y)$.
3. The assertion $E(X) = e$ is coherent iff $e$ in $C(R(X))$, where $C(A)$ denotes the convex hull of set $A$.

Example
Convex hull for $(E(X), E(X^2))$, $X$ in $[0,1]$ (recall $\mathrm{Var}(X) = E(X^2) - E(X)^2 \ge 0$)

New scoring rule for means and variances
• If symmetric, then a proper scoring rule for mean $m$ and variance $v$ is

$$\varphi = c_1 (x - m)^2 + c_2 \bigl[(x - m)^2 - v\bigr]^2$$

  – First term: penalty from mis-estimation of mean
  – Second term: penalty from mis-estimation of variance

Scoring rule for means and variances
• In the same rule, $c_1$ is the weight on the location penalty and $c_2$ is the weight on the spread penalty
• Ratio $c_1 / c_2$ represents the trade-off between location and spread penalty

Classical vs Moment methods
• Comparison measures?
• No absolute measures of performance external to methods
• Classical and moment methods each have “own” performance criteria
• General experience from comparing methods is
  – Optimal combination of experts is different
  – Overall performance on the “other” performance criteria is good, so plausible combinations are produced by both methods

Conclusions
• A performance based weighting scheme developed based on moment methods, with good properties
• Cases show comparable performance of the classical model and the moment model on some existing data sets.
• Both models provide a good alternative to choosing equal weights for all experts.
• Moment method has better foundational properties!
• Transparent way of choosing weights that trade off location and spread accuracy – so more flexible than the classical model
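
Worked sketch
The weighting steps from the slides (Extended Pearson-Tukey moments, quadratic loss, cut-off score, normalized weights, choice of α) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the example quantiles and realizations are hypothetical, and the grid search over α is an assumption, since the slides do not say how the optimal cut-off is found.

```python
import numpy as np

# Extended Pearson-Tukey weights for the 5%, 50% and 95% quantiles (from the slides).
EPT_WEIGHTS = np.array([0.185, 0.63, 0.185])


def ept_moment(quantiles, n=1):
    """n-th raw moment from (x_0.05, x_0.50, x_0.95); last axis holds the 3 quantiles."""
    q = np.asarray(quantiles, dtype=float)
    return (q ** n) @ EPT_WEIGHTS


def expert_losses(means, realizations, c=None):
    """phi_i = sum_j c_j * (x_j - E_i(X_j))**2, one value per expert."""
    means = np.asarray(means, dtype=float)        # shape (experts, variables)
    x = np.asarray(realizations, dtype=float)     # shape (variables,)
    c = np.ones_like(x) if c is None else np.asarray(c, dtype=float)
    return ((x - means) ** 2) @ c


def cutoff_weights(losses, alpha):
    """Score s_i = alpha - phi_i (0 if phi_i > alpha), normalized to sum to one."""
    losses = np.asarray(losses, dtype=float)
    scores = np.where(losses > alpha, 0.0, alpha - losses)
    total = scores.sum()
    if total <= 0:
        raise ValueError("alpha must exceed the smallest expert loss")
    return scores / total


def best_alpha(means, realizations, c=None, n_grid=200):
    """Hypothetical grid search for the cut-off that minimizes the pooled loss."""
    means = np.asarray(means, dtype=float)
    losses = expert_losses(means, realizations, c)
    lo = losses.min()
    span = max(losses.max() - lo, 1e-9)
    # Candidates start just above the smallest loss; large values approach equal weights.
    grid = lo + span * np.linspace(1e-6, 10.0, n_grid)
    best = None
    for alpha in grid:
        w = cutoff_weights(losses, alpha)
        pooled = w @ means                        # linear-pool expectations
        dm_loss = expert_losses(pooled[None, :], realizations, c)[0]
        if best is None or dm_loss < best[1]:
            best = (alpha, dm_loss, w)
    return best


def mean_variance_loss(x, m, v, c1=1.0, c2=1.0):
    """phi = c1*(x - m)**2 + c2*((x - m)**2 - v)**2 (location plus spread penalty)."""
    return c1 * (x - m) ** 2 + c2 * ((x - m) ** 2 - v) ** 2


# Made-up data: 3 experts, 2 variables, (5%, 50%, 95%) quantiles each.
quantiles = np.array([
    [[8.0, 10.0, 12.0], [40.0, 50.0, 60.0]],
    [[5.0, 12.0, 30.0], [30.0, 45.0, 80.0]],
    [[9.0, 9.5, 10.0], [60.0, 70.0, 75.0]],
])
realizations = np.array([11.0, 52.0])

means = ept_moment(quantiles, n=1)                # shape (3, 2)
alpha, dm_loss, weights = best_alpha(means, realizations)
print("expert losses:", expert_losses(means, realizations))
print("alpha:", alpha, "DM loss:", dm_loss, "weights:", weights)
```

Because the smallest candidate α sits just above the lowest expert loss, the search always includes the pool that contains only the best expert, which mirrors the property that the DM loss does not exceed any individual expert's loss. The `c` argument plays the role of the relative-importance parameters $c_j$, which matter when variables are on different scales.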