

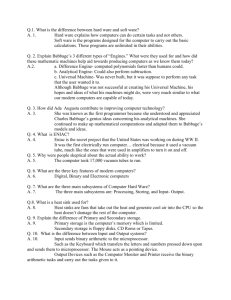

Introduction to the History of Computing
Lecture 34, CSE1301 Computer Programming, 2003
Notes and references

Sundials, Stonehenge

Abacus
http://www.ee.ryerson.ca:8080/~elf/abacus/history.html

Astrolabe
http://www.hps.cam.ac.uk/starry/isaslabe.html

Da Vinci’s Mechanical Calculator
http://www.maxmon.com/1500ad.htm

Da Vinci was a genius: painter, musician, sculptor, architect, engineer, and so on. However, his contributions to mechanical calculation remained hidden until two of his notebooks were rediscovered in 1967. These notebooks, which date from around the 1500s, contain drawings of a mechanical calculator, and working models of da Vinci's device have since been constructed.

Napier’s Bones
http://www.maxmon.com/1600ad.htm

In the early 1600s, a Scottish mathematician called John Napier invented a tool called Napier's Bones, which were multiplication tables inscribed on strips of wood or bone. Napier, who was the Laird of Merchiston, also invented logarithms, which greatly assisted arithmetic calculations.

Slide Rules
http://www.hpmuseum.org/sliderul.htm
http://www.oughtred.org/
http://www.maxmon.com/1600ad.htm

In 1621, an English mathematician and clergyman called William Oughtred used Napier's logarithms as the basis for the slide rule (Oughtred invented both the standard rectilinear slide rule and the less commonly used circular slide rule). However, although the slide rule was an exceptionally effective tool that remained in common use for over three hundred years, like the abacus it does not qualify as a mechanical calculator.

In 1614, John Napier published the logarithm, which made it possible to perform multiplication and division by addition and subtraction: a*b = 10^(log(a)+log(b)) and a/b = 10^(log(a)-log(b)), where log is the base-10 logarithm. This was a great time saver, but it still required quite a lot of work: the mathematician had to look up two logs, add them together, and then look up the number whose log was the sum.
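The log identity above can be checked numerically. The sketch below is a minimal Python illustration, assuming that floating-point base-10 logarithms from the standard `math` module stand in for a printed log table; the helper names (`multiply_by_logs`, `divide_by_logs`, `multiply_with_table`) are hypothetical, and rounding each log to four places is an assumed stand-in for a four-figure table.

```python
import math


def multiply_by_logs(a: float, b: float) -> float:
    """Multiply two positive numbers the way a log-table user would:
    look up log(a) and log(b), add them, then take the antilog."""
    return 10 ** (math.log10(a) + math.log10(b))


def divide_by_logs(a: float, b: float) -> float:
    """Divide two positive numbers by subtracting their logs."""
    return 10 ** (math.log10(a) - math.log10(b))


def multiply_with_table(a: float, b: float, places: int = 4) -> float:
    """Like multiply_by_logs, but each log is rounded to `places` decimal
    places to mimic a printed table (an assumed precision)."""
    log_sum = round(math.log10(a), places) + round(math.log10(b), places)
    return 10 ** log_sum


print(multiply_by_logs(37, 52))     # very close to 1924 (floating-point rounding)
print(divide_by_logs(1924, 52))     # very close to 37
print(multiply_with_table(37, 52))  # about 1923.98: table rounding costs accuracy
```

The rounded version shows why tables with more figures were valued: every lookup discards precision, and the error carries straight into the product.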
Edmund Gunter soon reduced the effort by drawing a number line on which the positions of numbers were proportional to their logs. The scale started at one because the log of one is zero. Two numbers could be multiplied by measuring the distance from the beginning of the scale to one factor with a pair of dividers, then moving the dividers to start at the other factor and reading the number at the combined distance.

Picture of a 2-foot Gunter scale. The yellow spots are brass inserts that provide wear resistance at commonly used points.
Close-up of the Gunter scale.

Soon afterwards, William Oughtred simplified things further by taking two Gunter lines and sliding them relative to each other, thus eliminating the dividers. In the years that followed, others refined Oughtred's design into a sliding bar held in place between two other bars. Circular and cylindrical/spiral slide rules also appeared quickly. The cursor appeared on the earliest circular models but much later on straight versions. By the late 17th century, the slide rule was a common instrument with many variations, and it remained the tool of choice for many for the next three hundred years.

Pascal’s Arithmetic Engine
http://www.maxmon.com/1640ad.htm

In 1640, Pascal started developing a device to help his father add sums of money. The first operating model, the Arithmetic Machine, was introduced in 1642, and Pascal created fifty more devices over the next ten years. (In 1658, Pascal created a scandal when, under the pseudonym Amos Dettonville, he challenged other mathematicians to a contest and then awarded the prize to himself!)

However, Pascal's device could only add and subtract; multiplication and division were implemented by performing a series of additions or subtractions.
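Because the Arithmetic Machine could only add and subtract, multiplication and division had to be built out of those operations. The Python sketch below shows the idea for non-negative integers; the function names are hypothetical, and the code models only the arithmetic, not the machine's wheels or the operator's procedure.

```python
def multiply_by_repeated_addition(a: int, b: int) -> int:
    """Multiply non-negative integers using only addition:
    add `a` into an accumulator `b` times."""
    total = 0
    for _ in range(b):
        total += a
    return total


def divide_by_repeated_subtraction(a: int, b: int) -> tuple[int, int]:
    """Divide non-negative `a` by positive `b` using only subtraction:
    count how many times `b` can be taken away from `a`.
    Returns (quotient, remainder)."""
    if b <= 0:
        raise ValueError("divisor must be positive")
    quotient = 0
    while a >= b:
        a -= b
        quotient += 1
    return quotient, a


print(multiply_by_repeated_addition(23, 7))    # 161
print(divide_by_repeated_subtraction(161, 7))  # (23, 0)
print(divide_by_repeated_subtraction(100, 7))  # (14, 2)
```

The number of additions grows with the size of the multiplier, which is exactly the cost that later machines reduced by shifting.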
In fact, the Arithmetic Machine could really only add, because subtraction was performed using a complement technique: the number to be subtracted is first converted into its complement, which is then added to the first number. Interestingly enough, modern computers employ similar complement techniques.

Leibniz’s Step Reckoner
http://www.maxmon.com/1670ad.htm

Leibniz developed Pascal's ideas and, in 1671, introduced the Step Reckoner, a device which, as well as performing additions and subtractions, could multiply, divide, and evaluate square roots by a series of stepped additions. Leibniz also strongly advocated the use of the binary number system, which is fundamental to the operation of modern computers.

Jacquard’s Punched Card
http://history.acusd.edu/gen/recording/jacquard1.html

Exhibit caption: "Joseph Marie Jacquard's inspiration of 1804 revolutionized patterned textile weaving. For the first time, fabrics with big, fancy designs could be woven automatically by one man working without assistants. Jacquard never obtained a patent for this device. His 1801 patent, issued for an improved drawloom, is often mistaken for the punched-card controlled device that bears his name. Working in Lyon, France, Jacquard had created his machine by combining two earlier French inventors' ideas: he applied Jean Falcon's chain of punched cards to the cylinder mechanism of Jacques Vaucanson. Then he mounted his device on top of a treadle-operated loom. This was the earliest use of punched cards programmed to control a manufacturing process. Although he created his mechanism to aid the local silk industry, it was soon applied to cotton, wool, and linen weaving. It appeared in the United States about 1825 or 1826. William Horstmann, the owner of a Philadelphia firm, may have introduced it here for weaving coach laces. Erastus Bigelow was issued the first patent for a Jacquard power loom in 1842. He used the loom for ingrain carpet weaving."
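Returning to the complement technique used by Pascal's Arithmetic Machine: on a register with a fixed number of decimal wheels, subtracting b is the same as adding its ten's complement and discarding the carry out of the top wheel. The sketch below assumes a six-wheel register and non-negative operands with b <= a; it models the arithmetic only, and the names (`WHEELS`, `nines_complement`, `tens_complement`, `subtract_by_adding`) are hypothetical. Pascal's machine is usually described in terms of nines' complements; the ten's complement is simply the nines' complement plus one.

```python
WHEELS = 6                # assumed register width (number of decimal wheels)
MODULUS = 10 ** WHEELS    # a six-wheel register wraps around at 1,000,000


def nines_complement(n: int) -> int:
    """Replace every digit d of n (padded to WHEELS digits) by 9 - d."""
    return (MODULUS - 1) - n


def tens_complement(n: int) -> int:
    """Nines' complement plus one, wrapped to fit the register."""
    return (nines_complement(n) + 1) % MODULUS


def subtract_by_adding(a: int, b: int) -> int:
    """Compute a - b (0 <= b <= a < MODULUS) using only addition:
    add the ten's complement of b, then drop the carry out of the top wheel."""
    return (a + tens_complement(b)) % MODULUS


print(nines_complement(1234))          # 998765
print(tens_complement(1234))           # 998766
print(subtract_by_adding(5000, 1234))  # 3766
```

The final `% MODULUS` plays the role of the missing seventh wheel: the carry it discards is exactly the 1,000,000 that the complement added.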
Babbage’s Analytical Engine
http://www.cbi.umn.edu/
http://www.fourmilab.ch/babbage/
http://www.ex.ac.uk/BABBAGE/
http://ei.cs.vt.edu/~history/Babbage.html
http://hoc.co.umist.ac.uk/storylines/compdev/earlymechanical/analytengine.html

Difference Engine
http://hoc.co.umist.ac.uk/storylines/compdev/earlymechanical/diffengine.html

Although the Difference Engine was very important in the development of computation, the Analytical Engine was revolutionary. It was the first example of a machine directed by an external program. It arose from the idea that the contents of the result wheel could be fed back into the difference wheels, thereby allowing the automatic calculation of tables whose contents had no elementary analytical solution.

As Babbage thought this problem over, he began to grasp the concept of the mechanical registers as identical, autonomous units. Rather than just adding their quantities to their neighbours, they could be thought of as stores for numbers. These numbers could even be passed from register to register without any arithmetic being done on them. His letter of May 1835 to Mr. Quetelet shows the impact of this revelation; in it he says he had...

"...for six months been engaged in making the drawings of a new calculating engine of far greater power than the first. I am myself astonished at the power I have been enabled to give this machine; a year ago I should not have believed this result possible."

The design of this machine was to remain an academic exercise, Babbage apparently satisfying himself with designing and redesigning the machine until his death. Despite this, the design shows that he had construction in mind, as many components were clearly designed to be produced without undue difficulty. A bird's-eye view of a design close to the final version is shown below.
This shows the number stores, similar to those of the Difference Engine, along with the numerous detailed control axles involved. The components can be divided into the store, the mill, and the control barrel, which correspond to the memory, the arithmetic unit, and the program control unit respectively. The number stores could be expanded; in Babbage's writings he refers to systems holding 100 numbers of 40 digits and 1,000 numbers of 50 digits. The mill consists of three main registers: two to hold operands, and one for housekeeping jobs related to multiplication and similar operations. It also contains nine table axes for intermediate results in multiplication and division.

The control barrels carried the routines for the various operations, arranged so that studs on a barrel pushed different sets of control rods to engage or disengage the levers and gear trains needed to implement each instruction. The control barrel could also control its own action in order to execute different operations. Each step on the barrel was therefore similar to an instruction of today, with the address of the next instruction coded in the current instruction.

Another facility was a counter register, intended to count the number of iterations of a particular operation (the number of times it had been performed). This corresponds directly to the loop counters frequently used in today's programming languages.

A notable advance was also made in dealing with carry propagation, which originally required an additional operation. Babbage devised a novel mechanical system in which a carry bypasses all the digits set to 9 and arrives directly at the first digit it can be added to. This anticipating carriage allowed carries to be propagated regardless of the distance they had to cover. Analogues of this method were used in some early electrical accounting machines too.
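The idea behind the anticipating carriage can be imitated in software by splitting addition into two phases. The sketch below is an assumption-laden model, not a description of Babbage's mechanism: digits are stored least significant first, phase 1 adds each column independently and records which columns generated a carry, and phase 2 sends each carry past any run of 9s straight to the first digit that can absorb it. The function names are hypothetical, and a carry out of the top digit is simply discarded.

```python
def to_wheels(n: int, width: int) -> list[int]:
    """Split n into `width` decimal digits, least significant first."""
    return [(n // 10 ** i) % 10 for i in range(width)]


def from_wheels(digits: list[int]) -> int:
    """Reassemble a number from its digits (least significant first)."""
    return sum(d * 10 ** i for i, d in enumerate(digits))


def add_with_anticipating_carriage(a: list[int], b: list[int]) -> list[int]:
    """Add two equal-width decimal numbers in two phases."""
    width = len(a)
    digits = [0] * width
    carry_from = [False] * width

    # Phase 1: add each column on its own, noting where carries arise.
    for i in range(width):
        s = a[i] + b[i]
        digits[i] = s % 10
        carry_from[i] = s >= 10  # such a column shows at most 8

    # Phase 2: deliver each carry, skipping over every 9 (which becomes 0)
    # and landing on the first digit that can absorb it.
    for i in range(width):
        if carry_from[i]:
            j = i + 1
            while j < width and digits[j] == 9:
                digits[j] = 0
                j += 1
            if j < width:  # otherwise the carry overflows the register
                digits[j] += 1
    return digits


total = add_with_anticipating_carriage(to_wheels(4999, 5), to_wheels(5001, 5))
print(total, from_wheels(total))  # [0, 0, 0, 0, 1] 10000
```

In 4999 + 5001 the single carry from the units column skips three 9s in one move, instead of being re-added column by column.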
As if this wasn't enough, it also used punched cards to input its programs, an idea Babbage took from the automatic Jacquard loom, which loaded its patterns from punched cards. The cards could include not only basic instructions but also conditional and unconditional branches and loops. This meant the machine was easily capable of carrying out any calculation Babbage could ever require of it. Babbage also conceived of multiple card readers for programs, data, and function values. These are all concepts that were used repeatedly in the field for well over 100 years.

Babbage's lithograph of the Analytical Engine

The final machine would have been capable of an addition in about 1 second, and a multiplication would have taken approximately 1 minute. Even 100 years later, as will be seen, the electromechanical Harvard Mark I took 0.3 of a second for an addition. The final machine would have occupied a space of 15 by 6 by 10 to 20 feet, depending on how many numbers it stored. The tolerance of the components would have had to be within 1/500 of an inch, which, although possible at that time, would have been very expensive.

The Analytical Engine

However, in 1906 part of the engine was actually constructed by his son, Major Henry Babbage, with help from a local engineering firm. Its first program calculated and printed the first 25 multiples of pi to 29 decimal places, to demonstrate that it worked. This machine is now housed, along with several other Babbage machines, in the Science Museum in London. The basic design was copied to a large degree in the later Harvard Mark I machine, which was itself almost exactly duplicated electronically in the ENIAC.

Ada Augusta Lovelace (née Byron)

"Sketch of the Analytical Engine" by L. F. Menabrea, translated and with extensive commentary by Ada Augusta, Countess of Lovelace.
This 1842 document is the definitive exposition of the Analytical Engine, which described many aspects of computer architecture and programming more than a hundred years before they were "discovered" in the twentieth century. If you have ever doubted, even for a nanosecond, that Lady Ada was, indeed, the First Hacker, perusal of this document will demonstrate her primacy beyond a shadow of a doubt.

http://www.ex.ac.uk/BABBAGE/ada.html

In 1833 Ada met Babbage and was fascinated with both him and his Engines. Later Ada became a competent student of mathematics, which was most unusual for a woman at the time. She translated a paper on Babbage's Engines by General Menabrea, later to be prime minister of the newly united Italy. Under Babbage's careful supervision Ada added extensive notes (cf. Science and Reform: Selected Works of Charles Babbage, by Anthony Hyman), which constitute the best contemporary description of the Engines and the best account we have of Babbage's views on their general powers. Beautiful, charming, temperamental, an aristocratic hostess, she was considered by the mathematicians of the time a magnificent addition to their number.

It is often suggested that Ada was the world's first programmer. This is nonsense: Babbage was, if programmer is the right term. After Babbage came a mathematical assistant of his, Babbage's eldest son, Herschel, and possibly Babbage's two younger sons. Ada was probably the fourth, fifth or sixth person to write the programmes. Moreover, all she did was rework some calculations Babbage had carried out years earlier. Ada's calculations were student exercises. Ada Lovelace figures in the history of the Calculating Engines as Babbage's interpretress, his "fairy lady". As such her achievement was remarkable.
http://historia.et.tudelft.nl/wggesch/geschiedenis/computer/

Herman Hollerith
http://www.maxmon.com/punch1.htm

The first practical use of punched cards for data processing is credited to the American inventor Herman Hollerith, who decided to use Jacquard's punched cards to represent the data gathered for the American census of 1890, and to read and collate this data using an automatic machine.

Many references state that Hollerith originally made his punched cards the same size as the dollar bills of that era, because he realized that it would be convenient and economical to buy existing office furniture, such as desks and cabinets, that already contained receptacles to accommodate stacks of bills. Other sources consider this to be a popular fiction. Whatever the case, we do know that these cards were eventually standardized at 7 3/8 inches by 3 1/4 inches, and Hollerith's many patents permitted his company (which became International Business Machines (IBM) in 1924) to hold an effective monopoly on punched cards for many years.

Herman Hollerith. Copyright (c) 1997 Maxfield & Montrose Interactive Inc.

Hollerith, who was no one's fool, had quickly realized that the real money was to be made not in the tabulating machines themselves, but in the tens or hundreds of thousands of cards that were used to store data. Although other companies came up with innovative ways to bypass Hollerith's patents, they failed to capitalize on their advances, thereby giving IBM a chance to regain the high ground.

For example, Hollerith's early cards were punched with round holes, because his prototype machine employed cards with holes created using a tram conductor's ticket punch. Hollerith continued to use round holes in his production machines, which effectively limited the amount of data that could be stored on each card. By the early 1900s, Hollerith's cards supported 45 columns, where each column could be used to represent a single character or data value. This set the standard until 1924-1925, when the Remington Rand Corporation evolved a technique for doubling the amount of information that could be stored on each card. But they failed to exploit this advantage to its fullest extent, and, in 1929-1931, IBM responded by using rectangular holes, which allowed them to pack 80 columns of data onto each card. Although other formats appeared sporadically (including some from IBM), the 80-column card overwhelmingly dominated the punched card market from around the 1950s onward.

IBM 80-column punched card format

The figure to the left shows one of the early 80-column IBM cards (not to scale). Each card contains 12 rows of 80 columns, and each column is typically used to represent a single piece of data such as a character. The top row is called the "12" or "Y" row; the second row from the top is called the "11" or "X" row; and the remaining rows are called the "0" to "9" rows (indicated by the numbers printed on the cards).

This figure (which took one heck of a long time to draw, let me tell you) illustrates one of the early, simpler coding schemes, in which each character could be represented using no more than three holes. (Note that we haven't shown all of the different characters that could be represented.) Over the course of time, more sophisticated coding schemes were employed to allow these cards to represent different character sets such as ASCII and EBCDIC; the rows and columns stayed the same, but different combinations of holes were used.

One advantage of punched cards over paper tapes was that the textual equivalent of the patterns of holes could be printed along the top of the card (one character above each column). Another advantage was that it was easy to replace any cards containing errors.
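The 12-row card layout described above can be modelled as a list of punched rows for each column. The Python sketch below uses the widely documented zone-plus-digit pattern for letters (the 12 zone with 1-9 for A-I, the 11 zone with 1-9 for J-R, the 0 zone with 2-9 for S-Z) and a single punch for digits. It is a simplified assumption that covers only blanks, digits, and upper-case letters, not the special characters that needed a third hole, and the function names are hypothetical.

```python
# Row labels on a 12-row card, top to bottom.
ROWS = ["12", "11", "0", "1", "2", "3", "4", "5", "6", "7", "8", "9"]


def punches_for(ch: str) -> list[str]:
    """Rows to punch for one character in a simplified Hollerith-style code."""
    if ch == " ":
        return []                      # a blank column has no holes
    if ch.isdigit():
        return [ch]                    # digits: one punch in rows 0-9
    if "A" <= ch <= "I":
        return ["12", str(ord(ch) - ord("A") + 1)]
    if "J" <= ch <= "R":
        return ["11", str(ord(ch) - ord("J") + 1)]
    if "S" <= ch <= "Z":
        return ["0", str(ord(ch) - ord("S") + 2)]
    raise ValueError(f"{ch!r} is not in this simplified code")


def punch_card(text: str, columns: int = 80) -> list[list[str]]:
    """One list of punched rows per column, padded with blank columns."""
    if len(text) > columns:
        raise ValueError("text does not fit on one card")
    return [punches_for(ch) for ch in text.upper().ljust(columns)]


def render(card: list[list[str]], columns: int = 14) -> str:
    """Draw the first `columns` columns: '#' is a hole, '.' is no hole."""
    lines = []
    for row in ROWS:
        cells = "".join("#" if row in col else "." for col in card[:columns])
        lines.append(f"{row:>2} {cells}")
    return "\n".join(lines)


print(render(punch_card("HOLLERITH 1890")))
```

Reading a picture drawn this way column by column shows why the printed character at the top of each column was so useful: the hole patterns alone are hard for a person to read.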
However, the major disadvantage of working off-line (with both punched cards and paper tapes) was that the turn-around time to actually locate and correct any errors was horrendous.

Punched cards are rarely used now, but we endure their legacies to this day. For example, the first computer monitors were constructed to display 80 characters across the screen. This number was chosen on the basis that you certainly wouldn't want to display fewer characters than were on an IBM punched card, and there didn't appear to be any obvious advantage to being able to display more characters than were on a card (see also John Vincent Atanasoff's burnt offerings).

MIT Differential Analyzer
http://web.mit.edu/mindell/www/analyzer.htm
http://www.coe.uh.edu/courses/cuin7317/students/museum/bbrown.html

Atanasoff-Berry Computer
http://www.ieee.org/organizations/history_center/milestones_photos/atanasoff.html

John Vincent Atanasoff conceived basic design principles for the first electronic digital computer in the winter of 1937 and, assisted by his graduate student, Clifford E. Berry, constructed a prototype at Iowa State College in October 1939. It used binary numbers, direct logic for calculation, and a regenerative memory. It embodied concepts that would be central to the future development of computers.

"It was at an evening of bourbon and 100 mph car rides," Atanasoff said, "when the concept came, for an electronically operated machine, that would use base-two (binary) numbers instead of the traditional base-10 numbers, condensers for memory, and a regenerative process to preclude loss of memory from electrical failure."

Atanasoff wrote most of the concepts of the first modern computer on the back of a cocktail napkin. Then, in late 1939, John V. Atanasoff teamed up with Clifford E. Berry to build a prototype. They created the first computing machine to use electricity, vacuum tubes, binary numbers, and capacitors. The capacitors were in a rotating drum that held the electrical charge for the memory.
Berry, with his background in electronics and his mechanical construction skills, was the ideal partner for Atanasoff. The prototype won the team a grant of $850 to build a full-scale model. They spent the next two years further improving the Atanasoff-Berry Computer (ABC). The final product was the size of a desk, weighed 700 pounds, had over 300 vacuum tubes, and contained a mile of wire. It could perform about one operation every 15 seconds; today a computer can perform 150 billion operations in 15 seconds. Too large to be moved, it remained in the basement of the physics department. The war effort prevented Atanasoff from finishing the patent process and from doing any further work on the computer. When storage space was needed in the physics building, the Atanasoff-Berry Computer was dismantled.

Alan Turing
http://www.turing.org.uk/turing/
Biography: Alan Turing: The Enigma, by Andrew Hodges
http://ei.cs.vt.edu/~history/Turing.html

Konrad Zuse
http://irb.cs.tu-berlin.de/~zuse/Konrad_Zuse/en/Rechner_Z1.html

Computer Generations
http://history.acusd.edu/gen/recording/computer1.html

Moore’s Law
http://www.intel.com/research/silicon/mooreslaw.htm

Gordon Moore made his famous observation in 1965, just four years after the first planar integrated circuit was discovered. The press called it "Moore's Law" and the name has stuck. In his original paper, Moore observed an exponential growth in the number of transistors per integrated circuit and predicted that this trend would continue. Through Intel's relentless technology advances, Moore's Law, the doubling of transistors every couple of years, has been maintained and still holds true today. Intel expects that it will continue at least through the end of this decade. The mission of Intel's technology development team is to continue to break down barriers to Moore's Law.
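The phrase "the doubling of transistors every couple of years" can be checked against two entries in Intel's processor table: the 4004 (1971, 2,250 transistors) and the Pentium 4 (2000, 42,000,000 transistors). The sketch below assumes smooth exponential growth between those two points, which real product data only roughly follows; the function names are hypothetical.

```python
import math


def doubling_time(year0: float, count0: float, year1: float, count1: float) -> float:
    """Years per doubling, assuming steady exponential growth between two points."""
    doublings = math.log2(count1 / count0)
    return (year1 - year0) / doublings


def project(count0: float, years: float, doubling_period: float = 2.0) -> float:
    """Project a count forward, assuming it doubles every `doubling_period` years."""
    return count0 * 2 ** (years / doubling_period)


t = doubling_time(1971, 2_250, 2000, 42_000_000)
print(f"{t:.2f} years per doubling")        # 2.04 years per doubling
print(round(project(2_250, 2000 - 1971)))   # roughly 52 million with an exact 2-year period
```

About 14 doublings in 29 years gives a period close to two years, consistent with the "every couple of years" wording.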
Intel processor         Year   Transistors
4004                    1971         2,250
8008                    1972         2,500
8080                    1974         5,000
8086                    1978        29,000
286                     1982       120,000
386™ processor          1985       275,000
486™ DX processor       1989     1,180,000
Pentium® processor      1993     3,100,000
Pentium II processor    1997     7,500,000
Pentium III processor   1999    24,000,000
Pentium 4 processor     2000    42,000,000

The History of the Computer

The earliest computing instrument is the abacus, which was first used 2,000 years ago. It is a simple wooden rack holding parallel wires on which beads are strung. The beads can be moved along the wires to help the user carry out ordinary arithmetic operations.

In 1642 Blaise Pascal built the first "digital calculating machine". It could add numbers that were entered into the machine using dials. Pascal initially built it to help his father, who was a tax collector.

Gottfried Wilhelm von Leibniz in 1671 invented a computer that could add and multiply. Multiplication was done by successive adding and shifting. This computer was built in 1694, and used a special "stepped gear" mechanism (which is still in use) for introducing the addend digits.
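Multiplication by successive adding and shifting needs far fewer additions than plain repeated addition: for each decimal digit of the multiplier, the multiplicand is added that many times, then shifted one decimal place left before the next digit. The sketch below models only this arithmetic, not the stepped-gear mechanism; the function name is hypothetical, and it also returns the number of additions performed so the saving is visible.

```python
def shift_and_add_multiply(multiplicand: int, multiplier: int) -> tuple[int, int]:
    """Multiply non-negative integers by successive adding and shifting.
    Returns (product, number_of_additions)."""
    product = 0
    additions = 0
    shifted = multiplicand
    while multiplier > 0:
        digit = multiplier % 10       # current multiplier digit, lowest first
        for _ in range(digit):        # add the shifted multiplicand `digit` times
            product += shifted
            additions += 1
        shifted *= 10                 # shift one decimal place for the next digit
        multiplier //= 10
    return product, additions


print(shift_and_add_multiply(347, 26))  # (9022, 8): 8 additions instead of 26
```

The number of additions equals the sum of the multiplier's digits, so it grows with the number of digits rather than with the size of the multiplier.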
The prototypes built by Leibniz and Pascal were not widely used and remained curiosities until more than a century later, when Thomas of Colmar (Charles Xavier Thomas) developed the first commercially successful mechanical calculator in 1820. This calculator was capable of adding, subtracting, multiplying, and dividing. By the 1890s the calculator had developed into an apparatus that could accumulate results, store them, and print them.

Charles Babbage realized (1812) that many long computations, especially those needed to prepare mathematical tables, consisted of routine operations that were regularly repeated; from this he surmised that it ought to be possible to do these operations automatically. He began to design an automatic mechanical calculating machine, which he called a "difference engine," and by 1822 he had built a small working model for demonstration. With financial help from the British government, Babbage started construction of a full-scale difference engine in 1823. It was intended to be steam-powered; fully automatic, even to the printing of the resulting tables; and commanded by a fixed instruction program.

Charles Babbage (1792-1871) (National Portrait Gallery, London)

The difference engine, although of limited flexibility and applicability, was conceptually a great advance. Babbage continued work on it for 10 years, but in 1833 he lost interest because he had a "better idea": the construction of what today would be described as a general-purpose, fully program-controlled, automatic mechanical digital computer. Babbage called his machine an "analytical engine"; the characteristics aimed at by this design show true prescience, although this could not be fully appreciated until more than a century later. The plans for the analytical engine specified a parallel decimal computer operating on numbers (words) of 50 decimal digits and provided with a storage capacity (memory) of 1,000 such numbers.
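The difference engine mentioned above was designed to tabulate polynomials by the method of finite differences, which turns each new table entry into a handful of additions. In the Python sketch below, the `columns` list plays the role of the engine's difference columns: the current value followed by its first, second, and higher differences. The function names and the example polynomial x^2 + x + 41 are illustrative choices, not taken from Babbage's designs.

```python
from typing import Callable


def initial_differences(f: Callable[[int], int], order: int) -> list[int]:
    """Starting settings for the columns: f(0) and its differences at 0,
    computed from the first order + 1 values of a polynomial of that degree."""
    values = [f(x) for x in range(order + 1)]
    columns = []
    while values:
        columns.append(values[0])
        values = [b - a for a, b in zip(values, values[1:])]
    return columns


def difference_engine(initial: list[int], steps: int) -> list[int]:
    """Tabulate `steps` successive values using additions only."""
    columns = list(initial)
    table = []
    for _ in range(steps):
        table.append(columns[0])
        # Add each column into the one on its left, working left to right so
        # every addition uses the old (not yet updated) value of the next column.
        for i in range(len(columns) - 1):
            columns[i] += columns[i + 1]
    return table


settings = initial_differences(lambda x: x * x + x + 41, 2)
print(settings)                        # [41, 2, 2]
print(difference_engine(settings, 6))  # [41, 43, 47, 53, 61, 71]
```

Once the columns are set, no multiplication is ever needed: a polynomial of degree n has a constant n-th difference, so repeated addition reproduces the whole table.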
Built-in operations were to include everything that a modern general-purpose computer would need, even the all-important "conditional control transfer" capability, which would allow instructions to be executed in any order, not just in numerical sequence. The analytical engine was to use punched cards (similar to those used on a Jacquard loom), which were to be read into the machine from any of several reading stations. The machine was designed to operate automatically, by steam power, and it would require only one attendant.

Babbage's computers were never completed. Various reasons are advanced for his failure, most frequently the lack of precision machining techniques at the time. Another conjecture is that Babbage was working on the solution of a problem that few people in 1840 urgently needed to solve.

Babbage's Difference Engine (1833) (The Bettmann Archive)

After Babbage there was a temporary loss of interest in automatic digital computers. Between 1850 and 1900 great advances were made in mathematical physics, and it came to be understood that most observable dynamic phenomena can be characterized by differential equations, so that ready means for their solution, and for the solution of other problems of calculus, would be helpful. Moreover, from a practical standpoint, the availability of steam power caused manufacturing, transportation, and commerce to thrive and led to a period of great engineering achievement. The designing of railroads and the construction of steamships, textile mills, and bridges required differential calculus to determine such quantities as centers of gravity, centers of buoyancy, moments of inertia, and stress distributions; even the evaluation of the power output of a steam engine required practical mathematical integration. A strong need thus developed for a machine that could rapidly perform many repetitive calculations.
A step toward automated computation was the introduction of punched cards, which were first successfully used in connection with computing in 1890 by Herman Hollerith and James Powers, working for the U.S. Census Bureau. They developed devices that could automatically read the information that had been punched into cards, without human intermediation. Reading errors were consequently greatly reduced, work flow was increased, and, more important, stacks of punched cards could be used as an accessible memory store of almost unlimited capacity; furthermore, different problems could be stored on different batches of cards and worked on as needed.

Herman Hollerith (1860-1929) (The Bettmann Archive)

These advantages were noted by commercial interests and soon led to the development of improved punch-card business-machine systems by International Business Machines (IBM), Remington-Rand, Burroughs, and other corporations. These systems used electromechanical devices, in which electrical power provided mechanical motion, such as for turning the wheels of an adding machine. Such systems soon included features to automatically feed in a specified number of cards from a "read-in" station; perform such operations as addition, multiplication, and sorting; and feed out cards punched with results. By modern standards the punched-card machines were slow, typically processing from 50 to 250 cards per minute, with each card holding up to 80 decimal digits. At the time, however, punched cards were an enormous step forward.

By the late 1930s punched-card machine techniques had become well established and reliable, and several research groups strove to build automatic digital computers. One promising machine, constructed of standard electromechanical parts, was built by an IBM team led by Howard Hathaway Aiken. Aiken's machine, called the Harvard Mark I, handled 23-decimal-place numbers (words) and could perform all four arithmetic operations.
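The sorting operation mentioned above was typically done on a card sorter that read one column per pass and dropped each card into a pocket for the digit punched there; sorting on a multi-digit field meant repeating the pass column by column, starting from the rightmost. That procedure is what is now called a least-significant-digit radix sort. The sketch below models a card as a string of digits; the function names are hypothetical, and it ignores blank columns and zone punches.

```python
def sorter_pass(deck: list[str], column: int) -> list[str]:
    """One pass: drop each card into pocket 0-9 according to the digit in
    `column`, then stack the pockets in order. Cards keep their relative
    order within a pocket, so each pass is stable."""
    pockets: list[list[str]] = [[] for _ in range(10)]
    for card in deck:
        pockets[int(card[column])].append(card)
    return [card for pocket in pockets for card in pocket]


def sort_deck(deck: list[str], columns: list[int]) -> list[str]:
    """Sort on a multi-column field, one pass per column, rightmost first."""
    for column in reversed(columns):
        deck = sorter_pass(deck, column)
    return deck


deck = ["0427", "1890", "0051", "1642", "0427", "1204"]
print(sort_deck(deck, columns=[0, 1, 2, 3]))
# ['0051', '0427', '0427', '1204', '1642', '1890']
```

Starting from the rightmost column works only because each pass is stable: ties on the current column keep the order established by the earlier, less significant passes.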
Moreover, it had special built-in programs, or subroutines, to handle logarithms and trigonometric functions. The Mark I was originally controlled from pre-punched paper tape without provision for reversal, so that automatic "transfer of control" instructions could not be programmed. Output was by card punch and electric typewriter. Although the Mark I used IBM rotating counter wheels as key components in addition to electromagnetic relays, the machine was classified as a relay computer. It was slow, requiring 3 to 5 seconds for a multiplication, but it was fully automatic and could complete long computations. Mark I was the first of a series of computers designed and built under Aiken's direction.

Electronic Digital Computers

The outbreak of World War II produced a desperate need for computing capability, especially for the military. New weapons systems were produced for which trajectory tables and other essential data were lacking. In 1942, J. Presper Eckert, John W. Mauchly, and their associates at the Moore School of Electrical Engineering of the University of Pennsylvania decided to build a high-speed electronic computer to do the job. This machine became known as ENIAC, for Electronic Numerical Integrator and Computer (or Calculator). The size of its numerical word was 10 decimal digits, and it could multiply two such numbers at the rate of 300 products per second, by finding the value of each product from a multiplication table stored in its memory. Although difficult to operate, ENIAC was still many times faster than the previous generation of relay computers.

ENIAC, the first high-speed electronic computer (The Bettmann Archive)

ENIAC used 18,000 standard vacuum tubes, occupied 167.3 sq m (1,800 sq ft) of floor space, and consumed about 180,000 watts of electrical power.
It had punched-card input and output and, for arithmetic, 1 multiplier, 1 divider/square-rooter, and 20 adders employing decimal "ring counters," which served as adders and also as quick-access (0.0002 seconds) read-write register storage. The executable instructions composing a program were embodied in the separate units of ENIAC, which were plugged together to form a route through the machine for the flow of computations. These connections had to be redone for each different problem, together with presetting function tables and switches. This "wire-your-own" instruction technique was inconvenient, and only with some license could ENIAC be considered programmable; it was, however, efficient in handling the particular programs for which it had been designed.

ENIAC is generally acknowledged to be the first successful high-speed electronic digital computer (EDC) and was productively used from 1946 to 1955. A controversy developed in 1971, however, over the patentability of ENIAC's basic digital concepts, the claim being made that another U.S. physicist, John V. Atanasoff, had already used the same ideas in a simpler vacuum-tube device he built in the 1930s at Iowa State College. In 1973 the court (in Honeywell v. Sperry Rand) found in favor of the company using the Atanasoff claim.

Intrigued by the success of ENIAC, the mathematician John von Neumann undertook (1945) a theoretical study of computation that demonstrated that a computer could have a very simple, fixed physical structure and yet be able to execute any kind of computation effectively by means of proper programmed control, without the need for any changes in hardware. Von Neumann contributed a new understanding of how practical fast computers should be organized and built; these ideas, often referred to as the stored-program technique, became fundamental for future generations of high-speed digital computers.
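ENIAC's adders were built from decimal ring counters, as described above. The Python sketch below is a behavioural model only, assuming that each decade is a ten-stage ring with exactly one stage "on", that a digit is added by sending that many pulses, and that wrapping from 9 back to 0 produces a carry pulse for the next decade. ENIAC's real pulse timing and carry circuitry differed; the class names are hypothetical, and the default width of 10 decades follows the 10-digit word mentioned earlier.

```python
class RingCounter:
    """Ten stages in a ring; exactly one stage is 'on' at any time."""

    def __init__(self) -> None:
        self.stages = [True] + [False] * 9  # start at 0

    @property
    def value(self) -> int:
        return self.stages.index(True)

    def pulse(self) -> bool:
        """Move the 'on' stage one step; return True when it wraps 9 -> 0."""
        current = self.value
        self.stages[current] = False
        nxt = (current + 1) % 10
        self.stages[nxt] = True
        return nxt == 0


class Accumulator:
    """A row of ring counters, least significant decade first."""

    def __init__(self, decades: int = 10) -> None:
        self.decades = [RingCounter() for _ in range(decades)]

    def add(self, n: int) -> None:
        """Send each decade as many pulses as its digit of n, plus any carry."""
        carry_in = 0
        for i, counter in enumerate(self.decades):
            digit = (n // 10 ** i) % 10
            carry_out = 0
            for _ in range(digit + carry_in):  # at most 10 pulses, so at most one wrap
                if counter.pulse():
                    carry_out = 1
            carry_in = carry_out
        # a carry out of the top decade is lost (overflow)

    @property
    def value(self) -> int:
        return sum(c.value * 10 ** i for i, c in enumerate(self.decades))


acc = Accumulator()
acc.add(12345)
acc.add(99999)
print(acc.value)  # 112344
```

In this model the carry ripples from decade to decade in sequence; it is meant to show the counting principle, not the speed of the real machine.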
John von Neumann (1903-57) (The Bettmann Archive)
The stored-program technique involves many features of computer design and function besides the one named; in combination, these features make very-high-speed operation feasible. Details cannot be given here, but a glimpse may be provided by considering what 1,000 arithmetic operations per second implies. If each instruction in a job program were used only once in consecutive order, no human programmer could generate enough instructions to keep the computer busy. Arrangements must be made, therefore, for parts of the job program called subroutines to be used repeatedly in a manner that depends on how the computation progresses. Also, it would clearly be helpful if instructions could be altered as needed during a computation to make them behave differently. Von Neumann met these two needs by providing a special type of machine instruction called conditional control transfer--which permitted the program sequence to be interrupted and reinitiated at any point--and by storing all instruction programs together with data in the same memory unit, so that, when desired, instructions could be arithmetically modified in the same way as data. As a result of these techniques and several others, computing and programming became faster, more flexible, and more efficient, with the instructions in subroutines performing far more computational work. Frequently used subroutines did not have to be reprogrammed for each new problem but could be kept intact in "libraries" and read into memory when needed. Thus, much of a given program could be assembled from the subroutine library. The all-purpose computer memory became the assembly place in which parts of a long computation were stored, worked on piecewise, and assembled to form the final results. The computer control served as an errand runner for the overall process. As soon as the advantages of these techniques became clear, the techniques became standard practice.
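The two stored-program ideas described above, conditional control transfer and instructions kept in the same memory as data, can be seen together in a toy machine. The sketch below is a hypothetical 100-word machine invented for illustration: the opcode names, the encoding (opcode × 100 + address), and the memory layout are all assumptions. Its program sums a list of numbers by looping with a conditional jump (`JUMPZ`) and by adding 1 to the address field of one of its own instructions on each pass, a way of stepping through data that early machines without index registers commonly relied on.

```python
# Hypothetical opcodes for a toy 100-word machine; an instruction word is opcode * 100 + address.
HALT, LOAD, ADD, STORE, SUB, JUMP, JUMPZ = range(7)


def word(op, addr=0):
    """Encode an instruction as an ordinary number, exactly like data."""
    return op * 100 + addr


def run(memory, max_steps=10_000):
    """Fetch, decode, and execute instructions from the same memory that holds the data."""
    pc, acc = 0, 0
    for _ in range(max_steps):
        op, addr = divmod(memory[pc], 100)
        pc += 1
        if op == HALT:
            return memory
        elif op == LOAD:
            acc = memory[addr]
        elif op == ADD:
            acc += memory[addr]
        elif op == SUB:
            acc -= memory[addr]
        elif op == STORE:
            memory[addr] = acc
        elif op == JUMP:
            pc = addr
        elif op == JUMPZ:  # conditional control transfer: jump only if the accumulator is zero
            if acc == 0:
                pc = addr
        else:
            raise ValueError(f"bad opcode {op} at address {pc - 1}")
    raise RuntimeError("step limit reached without HALT")


def sum_program(values):
    """Build a memory image whose program adds the given values into address 40."""
    assert 1 <= len(values) <= 50
    memory = [0] * 100
    program = [
        word(LOAD, 40), word(ADD, 50), word(STORE, 40),  # 0-2: total += next value
        word(LOAD, 1), word(ADD, 41), word(STORE, 1),    # 3-5: treat instruction 1 as data, bump its address
        word(LOAD, 42), word(SUB, 41), word(STORE, 42),  # 6-8: counter -= 1
        word(JUMPZ, 11), word(JUMP, 0),                  # 9-10: leave the loop when counter is 0
        word(HALT),                                      # 11
    ]
    memory[:len(program)] = program
    memory[40], memory[41], memory[42] = 0, 1, len(values)  # running total, constant 1, loop counter
    memory[50:50 + len(values)] = list(values)
    return memory
```

Running `run(sum_program([3, 1, 4, 1, 5]))` leaves 14 in address 40, and the instruction at address 1 has been rewritten from `ADD 50` to `ADD 55` by the program itself. Later machines replaced this kind of self-modification with index registers, but the sketch shows why keeping instructions and data in one memory made such tricks possible.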
The first generation of modern programmed electronic computers to take advantage of the stored-program improvements appeared in 1947. This group included computers using random access memory (RAM), which is a memory designed to give almost constant access time to any particular piece of information. These machines had punched-card or punched-tape input and output devices and RAMs of 1,000-word capacity with an access time of 0.5 microseconds (0.5 x 10^-6 seconds); some of them could perform multiplications in 2 to 4 microseconds. Physically, they were much more compact than ENIAC: some were about the size of a grand piano and required 2,500 small electron tubes, far fewer than required by the earlier machines. The first-generation stored-program computers required considerable maintenance, attained perhaps 70 to 80 percent reliable operation, and were used for 8 to 12 years. Typically, they were programmed directly in machine language, although by the mid-1950s progress had been made in several aspects of advanced programming. This group of machines included EDVAC and UNIVAC, one of the first commercially available computers.
UNIVAC computer, 1955 (The Bettmann Archive)
Early in the 1950s two important engineering discoveries changed the image of the electronic-computer field, from one of fast but often unreliable hardware to an image of relatively high reliability and even greater capability. These discoveries were the magnetic-core memory and the transistor-circuit element. These new technical discoveries rapidly found their way into new models of digital computers; RAM capacities increased from 8,000 to 64,000 words in commercially available machines by the early 1960s, with access times of 2 or 3 microseconds. These machines were very expensive to purchase or to rent and were especially expensive to operate because of the growing cost of programming.
Such computers were typically found in large computer centers--operated by industry, government, and private laboratories--staffed with many programmers and support personnel. This situation led to modes of operation enabling the sharing of the high capability available; one such mode is batch processing, in which problems are prepared and then held ready for computation on a relatively inexpensive storage medium, such as magnetic drums, magnetic-disk packs, or magnetic tapes. When the computer finishes with a problem, it typically "dumps" the whole problem--program and results--on one of these peripheral storage units and takes in a new problem. Another mode of use for fast, powerful machines is called time-sharing. In time-sharing the computer switches among many waiting jobs in such rapid succession that each user's job appears to progress as if the other jobs did not exist, thus keeping each customer satisfied. Such operating modes require elaborate "executive" programs to attend to the administration of the various tasks. In the 1960s efforts to design and develop the fastest possible computers with the greatest capacity reached a turning point with the completion of the LARC machine for Livermore Radiation Laboratories of the University of California by the Sperry-Rand Corporation, and the Stretch computer by IBM. The LARC had a core memory of 98,000 words and multiplied in 10 microseconds. Stretch was provided with several ranks of memory having slower access for the ranks of greater capacity, the fastest access time being less than 1 microsecond and the total capacity in the vicinity of 100 million words. During this period the major computer manufacturers began to offer a range of computer capabilities and costs, as well as various peripheral equipment--such input means as consoles and card feeders; such output means as page printers, cathode-ray-tube displays, and graphing devices; and optional magnetic-tape and magnetic-disk file storage.
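The difference between batch processing and time-sharing can be illustrated with a round-robin scheduler, one simple policy among many. The text does not say how executive programs chose the next job, so the fixed time slice (`quantum`), the job names, and the units of work in this sketch are assumptions. Choosing a quantum larger than any job makes each job run to completion in turn, which behaves like batch processing.

```python
from collections import deque


def time_share(jobs, quantum):
    """Round-robin time slicing (an assumed scheduling policy).

    jobs maps a job name to the units of work it still needs. Each turn a job
    runs for at most `quantum` units and, if unfinished, rejoins the back of
    the queue. Returns (name, finish_time) pairs in the order jobs complete.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    queue = deque(jobs.items())
    clock = 0
    finished = []
    while queue:
        name, remaining = queue.popleft()
        work = min(quantum, remaining)  # run this job for one time slice at most
        clock += work
        remaining -= work
        if remaining > 0:
            queue.append((name, remaining))  # not done: wait for another turn
        else:
            finished.append((name, clock))
    return finished
```

With `jobs = {"A": 3, "B": 1, "C": 2}`, `time_share(jobs, quantum=1)` returns `[('B', 2), ('C', 5), ('A', 6)]`, while `time_share(jobs, quantum=10)` returns `[('A', 3), ('B', 4), ('C', 6)]`. The short job B finishes at time 2 under time slicing instead of waiting until time 4 behind A, which is the sense in which each user sees steady progress.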
Computers of this kind found wide use in business for such applications as accounting, payroll, inventory control, ordering supplies, and billing. Central processing units (CPUs) for such purposes did not need to be very fast arithmetically and were primarily used to access large amounts of records on file, keeping these up to date. By far the greatest number of computer systems were delivered for the more modest applications, such as hospital systems for keeping track of patient records, medications, and treatments given. Computers were also used in automated library systems, such as MEDLARS, the retrieval system of the National Library of Medicine, and in the Chemical Abstracts system, where computer records now on file cover nearly all known chemical compounds. The trend during the 1970s was, to some extent, away from extremely powerful, centralized computational centers and toward a broader range of applications for less-costly computer systems. Most continuous-process industries, such as petroleum refining and electrical-power distribution, now use computers of relatively modest capability for controlling and regulating their activities. In the 1960s the programming of applications problems was an obstacle to the self-sufficiency of moderate-sized on-site computer installations, but great advances in applications programming languages are removing these obstacles. Applications languages are now available for controlling a great range of manufacturing processes, for computer operation of machine tools, and for many other tasks. Moreover, a new revolution in computer hardware came about, involving miniaturization of computer-logic circuitry and of component manufacture by what are called large-scale integration, or LSI, techniques. In the 1950s it was realized that "scaling down" the size of electronic digital computer circuits and parts would increase speed and efficiency and thereby improve performance--if only manufacturing methods were available to do this.
About 1960 photoprinting of conductive circuit boards to eliminate wiring became highly developed. Then it became possible to build resistors and capacitors into the circuitry by photographic means (printed circuit boards). In the 1970s vacuum deposition of transistors became common, and entire assemblies, such as adders, shift registers, and counters, became available on tiny "chips." In the 1980s very large-scale integration (VLSI), in which hundreds of thousands of transistors are placed on a single chip, became increasingly common. Many companies, some new to the computer field, introduced in the 1970s the programmable minicomputer supplied with software packages. The size-reduction trend continued with the introduction of personal computers, which are programmable machines small enough and inexpensive enough to be purchased and used by individuals. Many companies, such as Apple Computer and Radio Shack, introduced very successful personal computers in the 1970s. Spurred in part by a fad for computer, or video, games, the development of these small computers expanded rapidly. In the 1980s the enormous success of the personal computer and resultant advances in microprocessor technology initiated a process of attrition among giants of the computer industry. That is, as a result of advances continually being made in the manufacture of chips, rapidly increasing amounts of computing power could be purchased for the same basic costs. Microprocessors equipped with ROM, or read-only memory (which stores constantly used, unchanging programs), now were also performing an increasing number of process-control, testing, monitoring, and diagnosing functions, as in automobile ignition systems, automobile-engine diagnosis, and production-line inspection tasks. By the early 1990s these changes were forcing the computer industry as a whole to make striking adjustments.
Long-established and more recent giants of the field--most notably, such companies as IBM, Digital Equipment Corporation, and Italy's Olivetti--were reducing their work staffs, shutting down factories, and dropping subsidiaries. At the same time, producers of personal computers continued to proliferate and specialty companies were emerging in increasing numbers, each company devoting itself to some special area of manufacture, distribution, or customer service. These trends will probably continue for the foreseeable future. Computers continue to dwindle to increasingly convenient sizes for use in offices, schools, and homes. Programming productivity has not increased as rapidly, and as a result software has become the major cost of many systems. New programming techniques such as object-oriented programming, however, have been developed to help alleviate this problem. The computer field as a whole continues to experience tremendous growth. As computer and telecommunications technologies continue to integrate, computer networking, electronic mail, and electronic publishing are just a few of the applications that have matured in recent years. Source: