Operating System Concepts

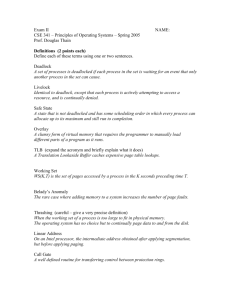

advertisement