Non-Visual User Interfaces

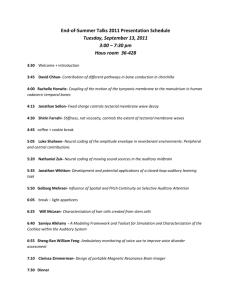
