A review of recent developments in integrity test research

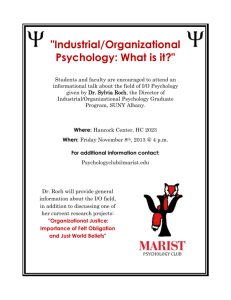
