252regrext

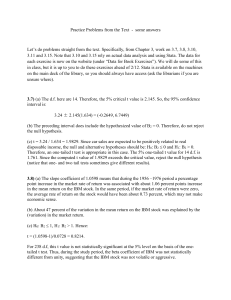

252regrext.doc 12/6/99

K. REGRESSION EXTENSIONS

1. Residual Analysis
   a. Presence of Outliers
   b. Patterns of Residuals
      (i) Nonlinear Equations
      (ii) Heteroskedasticity
      (iii) Missing Variables
      (iv) Autocorrelation
2. Dummy Variables
   a. Limits on numbers of Dummy Variables (Overdetermination)
   b. Use in ANOVA
3. Nonlinear regression
   Semilog, Double log, Reciprocal, Polynomial and Cyclical Forms.
4. Runs test

Consider the sequence + + + - - + + - - or AAABBAABB. The Runs Test is a test to see whether a sequence of two kinds of item, plusses and minuses in the first case and 'A's and 'B's in the second case, is random. If the sequence is not random, the alternation of the two kinds of item tends to follow a predictable pattern. Our null hypothesis is randomness.

Let $n$ be the total number of items, $n_1$ be the total number of items of the first kind (plusses or 'A's), $n_2$ be the total number of items of the second kind (minuses or 'B's) and $r$ be the total number of runs, that is, the number of unbroken sequences of one kind. In the sequences above there are two runs of plusses or 'A's and two of minuses or 'B's, so that $r = 4$. (Note that the sequence ABA is three runs in itself.) In the sequences above, we can also see that $n_1 = 5$, $n_2 = 4$ and $n = n_1 + n_2 = 9$.

To test the null hypothesis of randomness for a small sample, assume that the significance level is 5% and use the table entitled 'Critical values of r in the Runs Test.' For $n_1 = 5$ and $n_2 = 4$, the top part of the table gives a critical value of 2 and the bottom part of the table gives a critical value of 9. This means that we reject the null hypothesis if $r \le 2$ or $r \ge 9$. In this case, since $r = 4$, we do not reject the null hypothesis.

For a larger problem (if $n_1$ and $n_2$ are too large for the table), $r$ follows the normal distribution with

$$\mu = \frac{2 n_1 n_2}{n} + 1 \quad \text{and} \quad \sigma^2 = \frac{(\mu - 1)(\mu - 2)}{n - 1}.$$

Example: Assume that the significance level is 5% and that we find that $r = 5$, $n_1 = 25$, $n_2 = 30$ and $n = n_1 + n_2 = 55$. Then

$$\mu = \frac{2 n_1 n_2}{n} + 1 = \frac{2(25)(30)}{55} + 1 = 28.27273$$

and

$$\sigma^2 = \frac{(\mu - 1)(\mu - 2)}{n - 1} = \frac{(27.27273)(26.27273)}{54} = 13.26905.$$

So

$$z = \frac{r - \mu}{\sigma} = \frac{5 - 28.27273}{\sqrt{13.26905}} = -6.39.$$

Since this value of $z$ is not between $\pm z_{\alpha/2} = \pm 1.960$, we reject $H_0$: Randomness.

5. Durbin-Watson test

This is a test for randomness of regression residuals. The alternative hypothesis is (first-order) autocorrelation. From the residuals $e_t$, the computer will compute the Durbin-Watson statistic,

$$DW = \frac{\sum_{t=2}^{n} (e_t - e_{t-1})^2}{\sum_{t=1}^{n} e_t^2}.$$

Use a Durbin-Watson table to fill in the diagram below.

$$0 \;\big|\; \rho > 0 \;\big|\; d_L \;\big|\; ? \;\big|\; d_U \;\big|\; \rho = 0 \;\big|\; 2 \;\big|\; \rho = 0 \;\big|\; 4 - d_U \;\big|\; ? \;\big|\; 4 - d_L \;\big|\; \rho < 0 \;\big|\; 4$$

For example, suppose that the significance level is .05 and that we want a 2-sided test for a regression with a sample size of $n = 20$ observations, $k = 4$ independent variables and $DW = 1.01$. We go to a Durbin-Watson table for $\alpha/2 = .025$. There we find for these values of $n$ and $k$ that $d_L = 0.79$ and $d_U = 1.70$, so that $4 - d_U = 4 - 1.70 = 2.30$ and $4 - d_L = 4 - 0.79 = 3.21$. Our diagram is now

$$0 \;\big|\; \rho > 0 \;\big|\; 0.79 \;\big|\; ? \;\big|\; 1.70 \;\big|\; \rho = 0 \;\big|\; 2 \;\big|\; \rho = 0 \;\big|\; 2.30 \;\big|\; ? \;\big|\; 3.21 \;\big|\; \rho < 0 \;\big|\; 4$$

Because $DW = 1.01$ is between 0.79 and 1.70, it is in an area marked with a question mark, and we cannot be sure whether to reject $H_0$: randomness. If, however, DW were between 0 and 0.79 or between 3.21 and 4, we could reject the null hypothesis, while if it were between 1.70 and 2.30 we could say that we cannot reject the null hypothesis.

We can also do a one-sided test for an alternative hypothesis of positive autocorrelation by looking up critical values for $\alpha = .05$ and saying that we reject the null hypothesis if DW is below $d_L$, that we do not reject it if DW is above $d_U$ and that we are not sure if DW is between $d_L$ and $d_U$. If the alternative hypothesis is negative autocorrelation, we use the same critical values, but we reject the null hypothesis if DW is above $4 - d_L$, we do not reject it if DW is below $4 - d_U$ and we are not sure if DW is between $4 - d_U$ and $4 - d_L$.

For example, if $DW = 0.85$ and $H_1$ is $\rho > 0$, and we again have a regression with a sample size of $n = 20$ observations with $k = 4$ independent variables, a one-sided 5% table gives us $d_L = 0.90$ and $d_U = 1.83$.
Thus, since our computed DW is below $d_L$, we reject the null hypothesis and conclude that there is positive autocorrelation. The most accessible D-W table can be found through D-W table.
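
The arithmetic in the runs test and the Durbin-Watson decision rule can be checked with a few lines of Python. The sketch below is only illustrative: the function names (`count_runs`, `runs_z`, `durbin_watson`, `dw_two_sided`) are hypothetical and not part of these notes, the critical values $d_L$ and $d_U$ must still be looked up in a table, and the treatment of values that fall exactly on a boundary is an assumption.

```python
import math

def count_runs(seq):
    """Return (n1, n2, r) for a sequence of two kinds of item.
    n1 counts the kind that appears first in the sequence."""
    first = seq[0]
    n1 = sum(1 for s in seq if s == first)
    n2 = len(seq) - n1
    # A new run starts wherever two neighbouring items differ.
    r = 1 + sum(1 for a, b in zip(seq, seq[1:]) if a != b)
    return n1, n2, r

def runs_z(r, n1, n2):
    """Large-sample runs test: return (mu, sigma squared, z)."""
    n = n1 + n2
    mu = 2 * n1 * n2 / n + 1
    var = (mu - 1) * (mu - 2) / (n - 1)
    return mu, var, (r - mu) / math.sqrt(var)

def durbin_watson(residuals):
    """Sum of squared successive differences over sum of squared residuals."""
    num = sum((e1 - e0) ** 2 for e0, e1 in zip(residuals, residuals[1:]))
    den = sum(e * e for e in residuals)
    return num / den

def dw_two_sided(dw, d_l, d_u):
    """Two-sided decision from tabled d_L and d_U.
    Assumption: values exactly on a boundary count as inconclusive."""
    if dw < d_l or dw > 4 - d_l:
        return "reject"
    if d_u < dw < 4 - d_u:
        return "do not reject"
    return "inconclusive"

if __name__ == "__main__":
    print(count_runs("AAABBAABB"))         # small-sample example: n1, n2, r
    print(runs_z(5, 25, 30))               # large-sample example: mu, variance, z
    print(dw_two_sided(1.01, 0.79, 1.70))  # two-sided example with n = 20, k = 4
```

With the figures used above, `runs_z(5, 25, 30)` gives a $z$ of about $-6.39$, and `dw_two_sided(1.01, 0.79, 1.70)` lands in the region marked with a question mark. A one-sided test would compare DW with $d_L$ and $d_U$ only (or with $4 - d_U$ and $4 - d_L$ for negative autocorrelation).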