Memory-Based Embodied Cognition: a Computational

advertisement

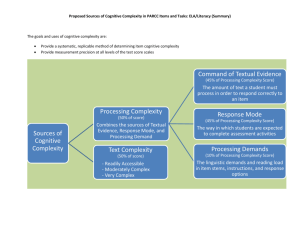

MEMORY-BASED EMBODIED COGNITION: A COMPUTATIONAL ARCHITECTURE Paul Baxter* and Will Browne C.I.R.G., *p.e.baxter@reading.ac.uk ABSTRACT At its most fundamental, cognition as displayed by biological agents (such as humans) may be said to consist of the manipulation and utilisation of memory. Recent discussions in the field of cognitive robotics have emphasised the role of embodiment and the necessity of a value or motivation for autonomous behaviour. This work proposes a computational architecture – the Memory-Based Cognitive (MBC) architecture – based upon these considerations for the autonomous development of control of a simple mobile robot. This novel architecture will permit the exploration of theoretical issues in cognitive robotics and animal cognition. Furthermore, the biological inspiration of the architecture is anticipated to result in a mobile robot controller which displays adaptive behaviour in unknown environments. 1. INTRODUCTION The development of artificial agents with autonomous and adaptive behaviour is an ongoing goal for cognitive robotics research. Biological agents (most notably mammals) provide arguably the best examples of these properties, and so are the source of design concepts and principles [1]. In addition, the implementation of these concepts allows an examination of their theoretical bases in the biological agents themselves. One such concept obtained from biological theory is that cognition is fundamentally concerned with the manipulation and utilisation of memory: this forms the basis of the present work. This paper proposes an explicit memory-based control architecture for a mobile robot based on functional neurobiological principles. It is proposed that this will result in an agent capable of autonomous and adaptive behaviour. Furthermore, it is anticipated that this architecture will establish a platform for exploring theoretical issues in both cognitive robotics and animal behaviour. There is a particular emphasis on allowing the specific functionality of the architecture to develop through interaction with the world, and not through an a priori or otherwise human-centred approach [2, 3]. 1.1 Motivation One of the most widespread approaches to implementing the desired autonomy and adaptivity in artificial agents is behaviour-based robotics. For example, the subsumption architecture [4, 5] has proven very successful in producing real time and real world behaviour [6], but is unable to account for biological observations relating to the flexibility of mammalian cognition. There have thus been numerous endeavours to incorporate neurobiological principles and models into robotics work, with the dual goals of capturing the desirable behaviours of the biological systems, e.g. [711], and as an alternate means of understanding these systems and their constituent processes, e.g. [12-14]. Whilst these approaches implement the neural or behavioural dynamics of the cognitive processes of interest, the consideration of autonomy and embodiment as playing a central role in these is often overlooked. The underlying thesis for this architecture is relatively uncontroversial. It is that cognitively flexible agents are constrained both by their physical instantiation and their environment, meaning that developed and learned behaviours must emerge from the interaction of the two [3]. Therefore, it is not enough to consider the agent, and indeed the development of its cognitive abilities, as separable from its interaction with the environment in pursuit of its goals. On this basis, the presented Memory Based Cognitive (MBC) architecture is formulated in an attempt to address some of these difficulties, and provide a means of examining the issues involved (both theoretical and practical) in the learning of basic sensorimotor competences, upon which more complex behaviours may subsequently be learned [15]. It is hypothesised that a number of benefits exist through the use of this approach. Firstly, the biologically inspired approach is envisaged to produce a behaviourally flexible artificial agent. The resulting platform will also allow the investigation of theoretical issues because it brings together both the neural considerations for a cognitive architecture, and the more philosophical considerations such as embodiment and autonomy. Furthermore, the use of explicit representations of memory (in the form of a rule-base) affords a detailed analysis of the development of functionality. For the remainder of this paper, the following terms are defined for clarity. “Architecture” is used to refer to the software-implemented element of the work. “Model” is analogous to this, but emphasis is on the theoretical underpinnings. “System” refers to the ensemble of architecture and the physical mobile robot: the behaviour of the system is emergent from the interaction of robot (be it real or simulated), the architecture, and the environment. The remainder of this paper is organised into four sections. In the first, a more detailed overview is given of the motivation of the novel architecture, and how it relates to existing approaches. In the following section, the neuroscientific theory upon which the architecture is founded is reviewed. Thirdly, the theoretical concepts of embodiment, autonomy and value (or motivation) are discussed in relation to the present work. Finally, the MBC architecture is introduced, with a discussion of its potential applications to both practical and theoretical issues. 2. THE DEVELOPMENT OF A MEMORYBASED COGNITIVE COMPUTATIONAL ARCHITECTURE When incorporating biologically-inspired functionality into robotics work, two broad approaches have been used: neural modelling, and behavioural modelling. In the first, a model of neural connectivity serving the function of interest is created, and implemented as an artificial neural network. This approach is essentially one of learn-by-building: further understanding of the biological system of interest may be gained through the implementation of biologically-plausible neural mechanisms for performance of a behavioural task. For example, a model of the mammalian nervous system has been implemented [16], in which a number of brain regions are simulated. The architecture as a whole is embedded in a mobile robot which interacts with the real world [17], which allowed the successful learning of a spatial navigation task. This approach has allowed the functional characterisation of a number of hippocampal pathways [18], which demonstrates its utility both to the understanding of biological systems, but also to the creation of behaviourally flexible artificial agents. In the second approach, computational architectures are implemented based on higher level cognitive psychological theories. This approach emphasises the behavioural functionality over the specific neural implementation. For example, Kawamura et al. [19-21] have implemented a model of human working memory in an upper-torso humanoid robot, with the aim of providing cognitive control. Whilst a more engineering solution to the problem of intelligent/cognitive control of mobile robots (no claims are being made concerning the biological theory upon which it is based), this example demonstrates the applicability of biologicallybased theories to robot control problems. Both of these approaches collectively stress the need for embodiment in the real world as a prerequisite for the emergence of intelligent behaviour through learning. However, this form of embodiment does not necessarily place the constraints on the computational architecture that the 'body' of a biological agent would place on its nervous system – in general, in these approaches the body provides the necessary sensory and motor spaces, but the relation between the software architecture and hardware body is wholly defined by the designer, rather than providing mutual constraints. Furthermore, in terms of autonomy of learning and behaviour, the predefined nature of the chunks of information in the second approach proves problematic if the task environment changes significantly. Finally, it may be argued that the transparency (in terms of ability to explain the causes of produced behaviour) of neural system-based modelling approaches, such as that presented in the first approach, is reduced as the fidelity (and hence complexity) of the model increases. As a general observation, these approaches tend to focus on what may be described as higher-level cognitive functions. The novel MBC architecture allows these issues to be addressed by incorporating two considerations. Firstly, the utility of neural systems-based architectures for the potential elucidation of biological mechanisms (at least in terms of function). Secondly, the theoretical and philosophical considerations of autonomy and embodiment that describes the conditions under which the biological agents have developed – an aspect frequently overlooked in current robotics work. By combining these two elements, the architecture my be used to explore issues which arise at the intersection of the two approaches discussed, and more theoretical considerations. The following sections describe the basis of the MBC architecture. Firstly, the network memory theory is reviewed as a broad neuroscientifically-supported theory of human cognition, upon which the main functional mechanisms of the architecture may be established. Since the theoretical considerations are of central importance in addressing these issues, these are discussed afterwards. Finally, these two strands are brought together with a discussion of the MBC architecture, its structure, and functionality. 3. THE NETWORK MEMORY THEORY Based on neuroanatomical and neuroscientific evidence, Joaquin Fuster has proposed the “Network Memory” theory [22]. This theory of human cortical and subcortical organisation and functioning is based largely on single-unit recording studies conducted on primates, which on an anatomical level display many similarities with human neuroanatomy [23]. It is of particular applicability to the present work as it provides a wide ranging theory of human cognition. The MBC architecture is based upon these principles in order to create a biologically non-implausible framework within which it can take shape. There are three central ideas which underlie the Network Memory theory: that memory is at its most basic an associative process that memory is not localised in specific regions of the brain, but distributed across the cortex (and to a lesser extent sub-cortical regions) that the organisation of these distributed memories is roughly hierarchical. In addition to this, Fuster postulates the presence of “phyletic memory”, in other words, memory of the species – that which is genetically encoded [24]. This type of memory is the basis of all of the initial associative units, however, as it is similarly encoded neurally, it is subject to the same potential for change as subsequently learned information. The first point is not a controversial one in itself as it is a widely held view. It is held that a process akin to hebbian learning (such as long-term potentiation [25]) is the underlying process of memory formation. The second point is in contrast to traditional theories of memory, which, inspired by the Turing machine metaphor, views brain organisation as essentially modular, where specific memories are locatable in particular parts of the cortex. In the network memory theory, it is postulated that through the use of the associative processes, incident sensory (or indeed afferent motor) information causes the formation of 'units' of memory which store that particular sensory (or motor) experience. Known as “cognits” [26], these units may be associated with one another as required through experience to form further associative units. These cognits are not spatially located in particular regions or consist of single neuronal units – rather, they are made up of networks of neurons (where individual neurons may be a part of multiple cognits) which are distributed across the cortex [27]. Consequently, cognits are not fundamentally discreet, as there may be significant overlap (in terms of neural substrate) between cognits. Thus cognits are distributed, overlapping neural representations. Another important point made in the Network Memory theory is that memory and perception share the same neural substrate. This is an extension of the cognit idea, where cognits are created or activated when their 'conditions' (i.e. the conditions in which they were created) are met, which is the process of perception. The third central idea of the Network Memory theory is the self organisation of cognits into loose hierarchies: one for sensory-based associations, and one for motor-based associations. These two hierarchies are not however entirely dissociable: whereas somewhat separable neural substrates may be identified, there are multiple bi-directional interconnections, and a common apex. Based on neuroanatomical evidence [28], the prefrontal cortex (PFC) has been suggested to be this common apex – the main function ascribed to it is the temporal integration of behaviour [29], which allows novel goal directed behaviours to be concatenated from previously learned behaviours. Koechlin and associates have provided neuroimaging evidence in support of a functional model of PFC functionality in line with this proposal [30]. Note that the Network Memory theory proposes that well-rehearsed (or otherwise automatic) actions may be executed without the mediation of the hierarchy apex, occurring as a result of activity in the lower echelons of both the sensory and motor hierarchies, and with the involvement of sub-cortical regions [29]. 3.1. Related work to the Network Memory theory There exist an increasing number of theories that emphasise the widely distributed nature of memory and cognitive processes over the competing modular view (indeed, the network memory theory was itself inspired by the work of Lashley, e.g. [31], who proposed the principles of mass action and equipotentiality). As examples of this trend, Postle [32] and Chadderdon and Sporns [33] have recently presented theories of Working Memory (WM) which emphasise the coordination of distributed brain regions to provide the observed functionality, rather than proposing a specialised neural system, as occurs in more traditional psychological theories of WM. The approach of the present work in emphasising the distributed nature of memory and cognition is thus justified. 4. THEORETICAL AND PHILOSOPHICAL CONSIDERATIONS 4.1. Autonomy and ‘Emotion’ Autonomy is considered to be a desirable trait for artificial systems, despite differences over precise definition, as it broadly implies the ability to act without explicit direction by the human designer [34]. constrained by the particular physical instantiation of the architecture (and therefore the interaction with the environment). The resultant system is then dependant on the interaction of the embodiment of the architecture with the functionality of the architecture itself. 5. THE MBC ARCHITECTURE 5.1. An Overview The creation of autonomous systems, or systems in which there is a significant autonomous component, has thus been the subject of much work, e.g. [35, 36]. However, it has been argued that since autonomous systems result from processes which maintain the identity of the system, autonomy can not be modelled as a function – consequently, approaches that do so are inherently problematic [37]. An alternative approach would be the introduction of homeostatic processes, or ‘emotion’, into artificial systems, thereby providing the necessary link (in terms of autonomy) between system behaviours (or ‘cognition’) and system implementation (or ‘embodiment’) [37, 38]. The involvement of emotion and homeostatic processes with cognition is now generally accepted, largely due to [39]. Indeed, there is a growing consensus that these processes are not independent from cognition, but rather intrinsically integrated [40]. Robotics work has followed this trend, allowing an advance towards autonomy in artificial agents, and providing an insight into the nature of emotions themselves in the process [41]. The central role of an ‘emotion’ system for supporting autonomy of the system is thus established. The MBC architecture is implemented on the basis of the discussed characteristics of the Network Memory theory, which essentially provide the framework and fundamental functional guidelines. It is memory-based in the sense that its functionality revolves around the acquisition and manipulation of information gained through experience in its environment. Whilst there is no information on the environment set a priori, any information which is embedded in the architecture (such as the architecture framework for example), is analogous to the phyletic memory proposed by the network memory theory. The association hierarchy is based in the particular sensory and motor spaces of the physical device in which the architecture is embodied, Figure 1. These associations are encoded in the form of ‘rules’ – which are Learning Classifier System [45] -inspired constructs which explicitly encode a relationship (as defined by temporal or spatial adjacency during operation) between two sensory and/or motor space elements. This organisation is viewed to be an abstraction of the ‘cognit’ concept introduced by the Network Memory theory: where a cognit is the fundamental unit of memory in the biological neural system, so is the ‘rule’ 4.2. Embodiment and Environmental Interaction This emphasis on homeostatic (and emotion) processes underlines the requirement for the strong embodiment of artificial systems, as a means of integrating the ‘cognitive’ part of the system with the physical system in which it is instantiated. A discussion of the requirement and meaning of embodiment is relevant. Whilst numerous concepts of embodiment exist, with some definitions being far more restrictive than others [42], it has been argued that even if the most restrictive definition holds (organismic embodiment, which holds that only living organisms can be truly embodied), there remains utility in examining ‘weakly’ embodied systems as if they were ‘strongly’ embodied [43]. With respect to the discussion of autonomy, embodiment is proposed to ground cognition – cognitive processes are inherently body-based [44]. In line with this, embodiment of the MBC architecture is required to define sensory and motor spaces upon which the development of environmental ‘knowledge’ is founded. This development is thus Figure 1. Functional diagram of MBC architecture. The sensory and motor spaces tightly integrate the architecture with its physical instantiation. The credit, or value, system plays a central role in the development of the hierarchy of associations. proposed here the fundamental unit of memory and association in the MBC architecture. In particular, the functional properties of the cognit are of importance: the overlapping nature, the manner in which cognits may be combined to form other cognits, and the way in which cognits form the basis of memory and perception. Furthermore, where a cognit can be activated, so too does a 'rule' have an associated activation value. Whilst the property of distributed basis (in terms of neural substrate) is also central to the cognit idea, for the ‘rules’ here proposed, this does not apply since the intention is to capture the essential functional nature of cognits, and so no claims are being made regarding their fundamental neural properties. 5.2. Implementational Details The architecture is implemented using the objectoriented programming language C++. The intention is to create a generic library which can be used to easily create instantiations of the MBC architecture for operation with different hardware platforms. At the present time, this library is subject to extension, particularly in the areas outlined below. A high-level description of the main system execution steps in the current version of the architecture may be seen in Figure 2. This execution scheme applies irrespective of the hardware embodiment. After an initialisation stage that defines the sensory and motor spaces (thus tying the architecture to the physical embodiment), each iteration of the loop performs operations from sense to act. This loop is closed through interaction with the environment. At the current stage of development, this loop incorporates relatively simplistic incarnations of the functional aspects presented in Figure 1. A number of extensions may be made to these mechanisms, which are outlined below. The following points refer to the labelled stages in Figure 2: Figure 2. High- level program flow indicating six main stages of processing. Within the functional architecture framework, Figure 1, the development of the hierarchy of associations is constrained by the particular physical embodiment. In other words, since each mobile robot in which the architecture is embodied has (although perhaps very subtle) differences in its array of sensors and effectors (either physical differences in the units or the topological arrangement thereof), the associations that are formed would differ. This also indicates the necessity for interaction with the environment to form the associations in the first place. From the discussion of autonomy and value systems in the previous section, and given the requirement of the architecture to autonomously develop, the value/credit system indicated has central importance. Because the development of the association rules is initially essentially random (see Implementational details), this system is required for the evaluation of associations for the creation of behaviour, e.g. [46]. The information contained within this value system is implemented as part of the framework of the architecture, and is thus defined explicitly. However, this is goal and environment independent, thus maintaining the autonomy of the system in this respect. 1) Given a motor command executed on the previous time-step, this stage evaluates the effect on the system, and updates the value element of the relevant associations. Whilst in the current implementation this update concerns only that association which was selected, this may be extended to related associations (on the basis of similarity). Additionally, the value system may itself be extended to account for a number of biological theories of emotion, e.g. [47]. 2) Associations in MBC architecture are explicitly represented by an object containing two elements, which may be either sensory or motor, or indeed another association. In this way, the loose hierarchies may be formed in line with the proposal of no explicit layers in the network memory theory. An open question remains as to which associations are formed on a given time-step – as the number of sensory and motor spaces increase, the number of possible associations increases combinatorially, which leads to the potential for exceeding available computational power. A possible solution to this would be to take into account spatial arrangement and type of sensors in order to reduce this number. In the current version of the architecture, where the mobile robot used allows small sensory and motor spaces, all possible associations are encoded on each time-step, and no such problems are anticipated. 3) Because the association rules have a coupled activation value, this stage updates them according to the recency of last use. The activation is in this way a time decaying function, which is allocated not just to those associations which exactly match the incident sensory states, but also those adjacent (in a scaled fashion). In a similar manner, a scaled activation level may be spread to related associations. 4) The associations whose activation values exceed a certain threshold form the association match set. This is in accordance with a number of biological action selection schemes, such as the affordance competition hypothesis [48]. Because this selection is based on activation and not directly the sensory state, there is a possibility that rules not directly related to the current sensory state are part of the action set, thereby allowing the potential for chaining of rules. 5) The action selection procedure operates on the match set produced in the previous stage. In the current version of the architecture, the action selection procedure selects the associations for each system effector with the highest value element. However, a number of other action selection schemes may be implemented in the future – indeed, the architecture may serve as a platform for comparing alternative actions selection schemes. 6) From the selected rules, the motor commands are executed, and preparations are made for the next time-step. As the implemented system is merely an abstraction of the natural neural system from which it is inspired, the relevant neural dynamics (for example the suppression of competing activation profiles) must be explicitly implemented. In this way, the action selection procedures (as discussed in points four and five) may be viewed as explicit representations of functions which are performed neurally, even though no claim is made as to their particular neural implementation. on each time-step. This use of explicit representations of association also has an inherent cost relating to computational load. Because associations are encoded on each time step from the systems' experience, the number of rules would increase rapidly, especially given more complex sensory and motor spaces, thus requiring greater computational power. In this way, the system may lag in terms of realtime performance. However, a number of alternative schemes for the creation of associations, and indeed their removal once created, would alleviate the problem. This need not reduce the biological plausibility of the architecture, as such schemes may be implemented in accordance with current knowledge of nervous system operation: for example, strong attention and affective effects in memory processes, e.g. [49, 50], and the largescale synaptic pruning which occurs in early childhood [51] may provide suitable mechanisms. The placement of the architecture in the trade-off between speed of operation (as may be afforded by an equivalent ANN implementation) and explanatory power (afforded by this rule-based setup) is justified given the purpose of the model: to provide adaptive behaviour in unknown environments, but also for use as a platform for investigating theoretical issues, for which explanatory power is desirable. The utility of the architecture is thus maintained despite the potentially slow real-time behaviour. For the development of cognitive robotics, as in the present work, the emergent behaviour is of critical importance. Techniques from artificial ethology [52] are thus required to provide metrics for the characterisation of the behaviour. This ability to compare the internal processes with the resulting behaviour is one which is not (currently at least) possible with biological agents, so the use artificial agents in this regard is a major advantage to the study of general principles of cognition and intelligence [53], and thus the applicability to the present work. 6. DISCUSSION 7. APPLICATIONS AND FURTHER WORK ‘Rules' are abstracted analogues of cognits, and explicitly encode associations. This has a notable advantage over artificial neural network (ANN) approaches in the transparency of operation: internal methods and operations are easier to interrogate, thus affording a more detailed analysis of the functionality of the architecture. Whereas similar methods exist for artificial nervous system ANN's (such as the backtrack method proposed in [18]), these may generally only be applied retrospectively on recorded data, whereas with the rule-base system here proposed, it may be conducted on-line (i.e. during run time) for an analysis of the internal events which result in the observed behaviour. This analysis may of course also be conducted retrospectively given a suitable recording of the events One of the central tenets of the MBC architecture is that no a priori knowledge of the environment is required for the system to produce a 'useful' behaviour. In this context, 'useful' would in the first instance refer to the ability to develop the necessary sensory-motor contingencies to move around the environment without collisions or becoming trapped. While this in itself is a constructive behaviour from a real world application point of view (autonomous rescue vehicles for example), it is hypothesised that the development of these most basic of behaviours would be prerequisite for more complex cognitive abilities, as postulated by the fields of developmental and epigenetic robotics [3, 54], and particularly the concept of ongoing emergence [15]. The application of the MBC architecture to this task, and an analysis of the manner in which successful performance is attained, would then provide a proof-ofconcept experiment. This would demonstrate the utility of this approach, and the MBC architecture in particular, by showing how general behaviours can be developed from individual instances, over the course of experience. One particular application envisaged for the MBC architecture is an issue in animal (and to a lesser extent, human) cognition: the question of whether the representation of knowledge for spatial information is represented topologically (i.e. through relations, or associatively) or in a euclidean manner (i.e. an explicit map). For example, it is widely held that place cells in the hippocampi of rodents encode an explicit representation of space, or a cognitive map [55]. However, a competing view exists which states that rather than being an explicit map, place cells actually encode the associative properties of spatial locations [56]. When place cell functionality is implemented, or observed to emerge, in robotics work through the implementations of ANN's, the euclidean view is implicitly assumed, e.g. [12]. These studies therefore do not address the possibility that the observed place cell behaviour encodes not a specific spatial location in an environment-grid (as it is commonly seen), but the sensory (and possibly also motor) associations applicable when the agent is in a specific place – indeed, it would be very difficult to do so in an ANN. This is where the rule-based implementation of the MBC architecture demonstrates its utility. If through experience in the environment, higher level associations are observed to be applicable (i.e. activated) only in specific spatial locations, then this would lend support to the topological view of hippocampal place cells. It would not deny the existence of explicit maps, but would lend support to the alternative paradigm. For this application, a suitable spatial task would be an adaptation of the Morris water maze [57], in which an agent attempts to learn the position of a hidden (i.e. detectable only when the agent is actually there) location within an enclosed arena, with static spatial cues. This setup has been applied to previous robotics work, for example [18, 58]. The processing stages of the currently implemented version of the architecture are in some respects simplistic. An ongoing task is the extension of the architecture to incorporate further biological evidence to further expand its biological plausibility, and hence its explanatory power. Particular regard is first to be made to the action selection mechanism. developmental cognitive architecture, with a framework which incorporates the central role that autonomy and embodiment play in these processes. As such, this architecture is based on neuropsychologically plausible mechanisms, which are provided by the Network Memory theory of cognition. Furthermore, an analysis of the theoretical issues of autonomy and embodiment has demonstrated their applicability to the study of cognitive architectures in general. These elements combined provide the foundation of the MBC architecture. The resulting system is to be used as a platform for investigating the interplay of embodiment with cognition (or more specifically, the development of cognition), in addition to providing a model of autonomous behaviour for application to artificial agents. A novel memory-based cognitive architecture has been introduced which will allow the investigation of theoretical issues in cognitive robotics and animal cognition, in addition to providing an autonomous and adaptive mobile robot controller. Acknowledgements: The authors would like to thank Prof. Richard Sibly (School of Biological Sciences, University of Reading) and Dr. Phil Beaman (Department of Psychology, University of Reading) for their helpful comments and discussions. 9. REFERENCES [1] A. Guillot and J.-A. Meyer, "The Animat contribution to Cognitive Systems Research," Journal of Cognitive Systems Research, vol. 2, pp. 157-165, 2001. [2] L. Berthouze and G. Metta, "Epigenetic robotics: modelling cognitive development in robotic systems," Cognitive Systems Research, vol. 6, pp. 189-192, 2005. [3] J. Weng, J. McClelland, A. Pentland, O. Sporns, I. Stockman, M. Sur, and E. Thelen, "Autonomous mental development by robots and animals," Science, vol. 291, pp. 599-600, 2001. [4] R. A. Brooks, "A robust layered control system for a mobile robot," IEEE Journal of Robotics and Automation, vol. 2, pp. 14-23, 1986. [5] R. A. Brooks, "Intelligence without Representation," Artificial Intelligence, vol. 47, pp. 139-159, 1991. 8. CONCLUSIONS [6] R. A. Brooks, "Elephants don't play chess," Robotics and Autonomous Systems, vol. 6, pp. 3-15, 1990. The MBC architecture was developed in response to an identified need to combine a biologically inspired [7] D.-A. Jirenhed, G. Hesslow, and T. Ziemke, "Exploring internal simulation of perception in mobile robots," presented at Fourth European Workshop on Advanced Mobile Robotics, Lund University, 2001. [8] N. L. Cassimatis, J. G. Trafton, M. D. Bugajska, and A. C. Schultz, "Integrating Cognition, Perception and Action through Mental Simulation in Robots," Robotics and Autonomous Systems, vol. 49, pp. 13-23, 2004. [9] M. Milford, R. Schulz, D. Prasser, G. Wyeth, and J. Wiles, "Learning spatial concepts from RatSLAM representations," Robotics and Autonomous Systems, vol. 55, pp. 403-410, 2007. [10] J. L. Phillips and D. C. Noelle, "A Biologically Inspired Working Memory Framework," presented at 27th Annual meeting of the Cognitive Science Society, Stresa, Italy, 2005. [11] M. Shanahan, "Consciousness, Emotion, and Imagination: A brain inspired architecture for cognitive robotics," presented at AISB 2005 Workshop: Next Generation to machine consciousness, University of Hertfordshire, 2005. [12] N. Burgess, J. G. Donnett, K. J. Jeffery, and J. O'Keefe, "Robotic and neuronal simulation of the hippocampus and rat navigation," Philosophical Transactions Of the Royal Society of London: Biological Sciences, vol. 352, pp. 1535-1543, 1997. [13] C. May, J. C. Shank, S. Joshi, J. Tran, R. J. Taylor, and I.-E. Scott, "Rat pups and random robots generate similar self-organized and intentional behavior," Complexity, vol. 12, pp. 53-66, 2006. [14] R. Wyss, P. Konig, and P. F. M. J. Verschure, "A model of the ventral visual system based on temporal stability and local memory," PLoS Biology, vol. 4, pp. e120:1-8, 2006. [15] C. G. Prince, N. E. Helder, and G. J. Hollich, "Ongoing Emergence: A core concept in epigenetic robotics," presented at Fifth International Workshop on Epigenetic Robotics, Nara, Japan, 2005. [16] J. L. Krichmar and G. Edelman, "Principles Underlying the Construction of Brain-Based Devices," presented at AISB06, Bristol, 2006. [17] J. L. Krichmar and G. M. Edelman, "Brain-Based Devices: Intelligent Systems based on Principles of the Nervous System," presented at Intl. Conf. on Intelligent Robots and Systems, Las Vegas, Nevada, 2003. [18] J. L. Krichmar, D. A. Nitz, J. A. Gally, and G. M. Edelman, "Characterising functional hippocampal pathways in a brain-based device as it solves a spatial memory task," Proceedings of the National Academy of Sciences of the USA, vol. 102, pp. 2111-2116, 2005. [19] K. Kawamura, R. A. Peters, R. E. Bodenheimer, N. Sarkar, J. Park, C. A. Clifton, A. W. Spratley, and K. A. Hambuchen, "A parallel distributed cognitive control system for a humanoid robot," International Journal of Humanoid Robotics, vol. 1, pp. 65-93, 2004. [20] K. Kawamura and S. Gordon, "From Intelligent Control to Cognitive Control," presented at ISORA 2006 - International Symposium on Robotics and Applications, Budapest, Hungary, 2006. [21] M. Skubic, D. Noelle, M. Wilkes, K. Kawamura, and J. M. Keller, "A biologically inspired adaptive working memory for robots," presented at AAAI Fall Symposium, Washington D.C., 2004. [22] J. M. Fuster, "Network Memory," Trends in Neuroscience, vol. 20, pp. 451-459, 1997. [23] J. M. Fuster, The Prefrontal Cortex: anatomy, physiology, and neuropsychology of the frontal lobe. New York: Raven Press Books, 1980. [24] J. M. Fuster, "Cortical Dynamics of Memory," International Journal of Psychophysiology, vol. 35, pp. 155-164, 2000. [25] S. F. Cooke and T. V. P. Bliss, "Plasticity in the human central nervous system," Brain, vol. 129, pp. 1659-1673, 2006. [26] J. M. Fuster, "The Cognit: a network model of cortical representation," International Journal of Psychophysiology, vol. 60, pp. 125-132, 2006. [27] J. M. Fuster, "Distributed Memory for both Short and Long term," Neurobiology of Learning and Memory, vol. 70, pp. 268-274, 1998. [28] J. M. Fuster, "Frontal lobe and cognitive development," Journal of Neurocytology, vol. 31, pp. 373-385, 2002. [29] J. Fuster, "The Prefrontal Cortex - An Update: Time is of the Essence," Neuron, vol. 30, pp. 319333, 2001. [30] E. Koechlin, C. Ody, and F. Kouneiher, "The architecture of cognitive control in the human prefrontal cortex," Science, vol. 302, pp. 11811185, 2003. [31] K. S. Lashley, Brain Mechanisms and Intelligence: a quantitative study of injuries to the brain. Chicago: The University of Chicago Press, 1929. [32] B. R. Postle, "Working memory as an emergent property of the mind and brain," Neuroscience, vol. 139, pp. 23-38, 2006. [33] G. L. Chadderdon and O. Sporns, "A large-scale neurocomputational model of task-oriented behavior selection and working memory in prefrontal cortex," Journal of Cognitive Neuroscience, vol. 18, pp. 242-257, 2006. [34] M. Boden, "Autonomy: What is it?," BioSystems, vol. 91, pp. 305-308, 2008. [35] J. L. Krichmar and G. M. Edelman, "Machine Psychology: Autonomous behavior, perceptual categorisation and conditioning in a brain-based device," Cerebral Cortex, vol. 12, pp. 818-830, 2002. [36] A. Jacoff, E. Messina, and J. Evans, "Performance evaluation of autonomous mobile robots," Industrial Robot, vol. 29, pp. 259-267, 2002. [37] E. Di Paolo and H. Iizuka, "How (not) to model autonomous behaviour," BioSystems, vol. 91, pp. 409-423, 2008. [38] T. Ziemke, "On the role of emotion in biological and robotic autonomy," BioSystems, vol. 91, pp. 401-408, 2008. [39] A. Damasio, Descartes' error : emotion, reason and the human brain. New York: Grosset/Putnam, 1994, 1994. [40] L. Pessoa, "On the relationship between emotion and cognition," Nature Reviews: Neuroscience, vol. 9, pp. 148-158, 2008. [41] L. Canamero, "Emotion understanding from the perspective of autonomous robots research," Neural Networks, vol. 18, pp. 445-455, 2005. [42] T. Ziemke, "What's that thing called Embodiment?," presented at 25th Annual Meeting of the Cognitive Science Society, 2002. [43] N. E. Sharkey and T. Ziemke, "Mechanistic versus Phenomenal embodiment: can robot embodiment lead to strong AI?," Journal of Cognitive Systems Research, vol. 2, pp. 251-262, 2001. [44] H. Svensson and T. Ziemke, "Making sense of Embodiment: simulation theories and the sharing of neural circuitry between sensorimotor and cognitive processes," presented at 26th Annual Cognitive Science Society Conference, Chicago, IL, 2004. [45] P. L. Lanzi, W. Stolzmann, and S. W. Wilson, "Learning Classifier Systems: from foundations to applications," in Lecture notes in Artificial Intelligence, J. G. Carbonell and J. Siekmann, Eds. Berlin: Springer-Verlag, 2000. [46] K. J. Friston, G. Tononi, J. G.N. Reeke, O. Sporns, and G. M. Edelman, "Value-dependent selection in the brain: Simulation in a synthetic neural model " Neuroscience, vol. 59, pp. 229-243, 1994. [47] E. T. Rolls, The Brain and Emotion. Oxford: Oxford University Press, 1999. [48] P. Cisek, "Cortical mechanisms of action selection: the affordance competition hypothesis," Philosophical Transactions Of the Royal Society B, vol. 362, pp. 1585-1599, 2007. [49] M. Mather and L. L. Carstensen, "Aging and motivated cognition: the positivity effect in attention and memory," Trends in cognitive sciences, vol. 9, pp. 496-502, 2005. [50] N. Fragopanagos, N. Korsten, and J. G. Taylor, "A neural model of the enhancement of perception caused by emotional cues," presented at International Joint Conference on Neural Networks, Vancouver, Canada, 2006. [51] G. Chechik, I. Meilijson, and E. Ruppin, "Neuronal Regulation: a mechanism for synaptic pruning during brain maturation," Neurocomputing, vol. 2627, pp. 633-639, 1999. [52] O. Holland and D. McFarland, Artificial Ethology. Oxford: Oxford University Press, 2001. [53] K. Warwick and S. J. Nasuto, "Historical and Current Machine Intelligence," in IEEE Instrumentation and Measurement Magazine, vol. 9, 2006, pp. 20-26. [54] L. A. Meeden and D. S. Blank, "Introduction to Developmental Robotics," Connection Science, vol. 18, pp. 93-96, 2006. [55] J. O'Keefe and L. Nadel, The Hippocampus as a Cognitive Map: Oxford University Press, 1978. [56] H. Eichenbaum, P. Dudchenko, E. Wood, M. Shapiro, and H. Tanila, "The Hippocampus, Memory, and Place Cells: Is it Spatial Memory, or a Memory Space?," Neuron, vol. 23, pp. 209-226, 1999. [57] R. Morris, "Developments of a water-maze procedure for studying spatial learning in the rat," Journal of Neuroscience Methods, vol. 11, pp. 4760, 1984. [58] M. A. Busch, M. Skubic, J. M. Keller, and K. E. Stone, "A robot in a water maze: Learning a spatial memory task," presented at IEEE Conference on Robotics and Automation, Roma, Italy, 2007.