A Machine Learning Approach to Object Recognition in the Context

advertisement

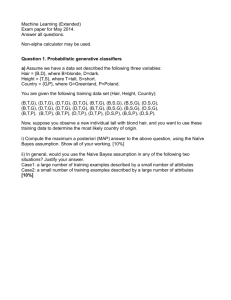

A Machine Learning Approach to Object Recognition in the Context of Visual Road Scene Analysis from a Moving Vehicle Jivko Sinapov Dept. of Computer Science Iowa State University I. Introduction This project address an object recognition task related to visual road scene analysis in the context of a moving vehicle. The goal is to implement and evaluate a robust object recognition technique for detection of various objects of interest. Object recognition tasks such as detecting other cars and traffic signs are very important when designing driving assistive systems or autonomous driving agents. In this project we implement and evaluate an object detection scheme utilizing a cascade of Haar feature classifiers, as well as a boosting technique utilizing SVM. II. Background and Motivation The success of several of the challengers in last year’s DARPA Grand Challenge shows that computer vision can be used effectively in solving many of the problems associated with autonomous driving. Many problems remain unsolved, however. For example, the computers that processed the data in the autonomous vehicles participating in the challenge are far superior to the average PC and it is unlikely that people at large would be able to outfit theirs cars with such systems. In the coming years we are likely to see various driving assistive technologies appear on the market and there is currently a large overlap between the set of problems associated with autonomous driving and that of problems associated in the area of driving assistive technology. A large fraction of accidents occurs because the driver is not paying attention to the road and cars in front of their vehicle. For example, a driver not paying attention can easily veer off course and enter an undesired lane, or fail to stop at a traffic light. As such, real time traffic light, and vehicle detection are very appropriate problems to tackle since any driving assistive or autonomous driving system would have to be able to perform those tasks. The primary goal for this project is to provide appropriate solutions for these problems which can work in real time on a regular PC. In particular, we’ll take a look at the problems of detecting traffic lights and other cars in the field of view. It is conceivable that in the near future cars would come equipped with systems which monitor the road, as well as the driver in order to determine if he or she is not paying attention to the road. In such cases, the system must be able to detect situations which demand the driver’s immediate attention – for example, if the car is approaching a red light at high speed or if the car in front is suddenly slowing down. In order for such a system to work, it will need to be able to accurately detect the objects of interest and a machine learning approach is likely to provide such a solution. III. Object Recognition Using a Haar Cascade Classifier In the task of object recognition, we implement an approach which classifies objects based on an extended set of Haar features. This approached was originally proposed by Viola and Jones [1] and extended by Lienhart [2]. The detection scheme uses the values of Haar-like features in an image in order to classify an object as a positive or negative instance. A subset of simple features used in this model is shown in Figure 1. Figure 1: Some simple examples of Haar-based features Each of these features consists of a geometric representation of two regions – black and white. The value of each feature at a given position in the image is the difference between the sums of the pixels within the two regions. Haar features can take arbitrarily complex shapes and the size of the full set available in this model is in the order of tens of thousands. In order to compute the value of each feature at a given location of the image, the image is represented in an integral form: the value at position (x, y) in the integral image will contain the sum of pixels that are above y and to the left of x. The general formula for the integral image representation is the following: ii ( x, y) I ( x' , y ' ) x 'x , y ' y The integral image representation is chosen for several reasons. First, it allows for efficient computation of a given Haar feature at a given position of the test image. In addition, it allows for robust object detection regardless of global lightning conditions, since the Haar features take into account only the differences of sums of pixels, which are invariant in terms of the global intensity of the image. Last but not least, the integral image representation allows for the object detection algorithm to scan for objects at different scales very efficiently since scaling the integral image can be done much faster than scaling the RGB image [1]. This is a very desirable property since real-time usability is a major goal for this object recognition system. The classifier is built in stages – at each stage, an AdaBoost-like approach is applied to selecting one or more Haar-features, as well as determining appropriate thresholds which can be applied to reject a large number of negative training instances. Important input parameters for the training procedure are the minimum hit ratio and maximum false alarm rate – the search for optimal feature and threshold selection will continue until those two requirements are met, at which point the remaining training examples will be passed on to the next stage. For example, if those parameters are set to 0.995 and 0.5 respectively, at each stage, feature selection and threshold optimization will be applied until the resulting stage is capable of classifying 99.5% of the positive instances as positive and does not classify more than 50% of the negative images as positive. For more precise details regarding feature selection and training, consult Viola and Jones [1]. In the extended model of the classifier implemented in the OpenCV C++ library, each stage of the classifier can make use of more than one feature in order to meet the requirements set by the input parameters, in which case each stage Figure 2: Schematic description of the classifier. At each stage, the classifier either rejects the instance can be viewed as a decision tree, rather (represented as a sub-window from a given test image) than a decision stump [3]. It is also based on a given feature value or sends the instance important to note that at each stage, the further down the tree for more processing. At the classifier uses a different set of negative initial stages a large number of negative examples are training images which are sampled from a eliminated [1]. given database of images that do not contain the specified object. After training the desired number of stages, the result is a cascade of tree-like classifiers, as show in Figure 2. The structure of the resulting classifier is essentially that of a degenerate decision tree or a decision list. Each added stage to the classifier tends to reduce the false positive rate, but also reduces the detection rate [3]. As such, it is essential to train the classifier with the appropriate number of stages for the given task. Once a classifier is trained, detection is done by sliding a window across an input image and passing the cropped sub-image through the classifier. In order for classification to be size-invariant, the same procedure is also performed on the input integral image at different scales. Given this scheme, the output of classification is a series of sub-windows of the test image which contain the desired object. In the following two sections we outline how this model was applied to the problems of traffic light and car detection. IV. Traffic Light Detection The problem of traffic light detection is important in the area of driving assistive technology. A system which is tasked with preventing accidents when a driver is not paying attention must always know whether there is a traffic light in the scene and what its state is. To solve this task, a Haar cascade classifier for traffic lights was trained. The dataset used in these experiments consists of real time video taken from a camcorder positioned inside a passenger car. The camera resolution is low (320 by 240) and so is the image quality, thus adding another challenge to this problem. Figure 3: Positive examples of traffic lights used for training. Negative samples are randomly selected from an image collection that does include traffic lights. The classifier was trained with 5 stages on 120 positive examples, and 120 negative examples. The minimum hit ratio at each stage is set to 0.95 and the maximum false alarm rate is set to 30%. To improve results and decrease computation time, the area of the image being searched through is restricted to the portion where a traffic light could actually occur – there is no point at looking for that object on the actual road, for example. Once a traffic light is detected in the input stream, the image is analyzed to determine its state. Ideally, we would want to identify the area of the traffic light which contains the actual color signal. In our case, however, the resolution was low enough such that the number of pixels that actually correspond to the light in the traffic light is usually about 5 or 6 which makes it quite difficult to analyze. Nevertheless, a simple scheme for determining the color of the light is implemented which works the following way: Input: cropped image of detected traffic light Steps: 1. G = Sum the green components of the cropped image 2. R = Sum of the red components 3. If (G/R) < t1, then output RED 4. If (G/R) > t2, then output GREEN 5. Else, output YELLOW The thresholds t1 and t2 were automatically optimized based on a training set of traffic light images. In practice this scheme worked well in determining the color of a given light, although if we had better resolution, it is conceivable that a much better and more robust algorithm could be devised. The classifier was evaluated on about 20 minutes of continuous input stream recorded while driving in Ames, IA. The detection scheme works quite comfortably in real time due to the small size of the trained classifier and small area of the image that is being processed. In all the occasions on which a traffic light was passed, the classifier is able to detect it and almost always outputs the correct color, so long it is green or red. The low-quality of the video input makes it difficult to recognize yellow since the pixels of the light signal actually assume white color in such cases. The good detection performance is likely due to the fact the traffic light shape is very distinct and there are almost no other objects present in the portion of the image that is being searched. One obvious drawback is that only traffic lights of this particular shape can be detected – while most traffic lights in Ames follow this standard, the same might not be true for other cities. Figure 4 shows some example results. At the end of this paper there is a discussion about some available online demos of this system that demonstrate how it works in practice. Fig.4. Example results from running the traffic light detection procedure V. Car Detection In this particular problem, we are interested in detecting vehicles in front of the observer. A series of Haar cascade classifiers are trained and evaluated on two different datasets. The first dataset, as in the previous problem, consists of low-quality video taken while driving in Ames and the surrounding areas. The low quality, however, makes it difficult to detect objects further in the distance and as such a second dataset of good quality images was used in order to evaluate the detection scheme in more detail. 5.1. Car Detection in low-quality and low-resolution video stream As in the previous section, the experiments are performed on a dataset comprising of a recorded video from a camera installed in a passenger car while driving in Ames. A classifier with 10 stages is trained on 200 sample images of cars taken from half the amount of video available. The training parameters minimum hit ratio and maximum false alarm rate are set to 0.995 and 0.3 respectively. The resulting classifier is tested on about 20 minutes of video recorded while driving on the freeway. Once again, since the position of the road relative to the observer is known in this context, we are able to restrict the image area in which a car is hypothesized to be. Restricting the region of interest allows for greater speed of computation and for elimination of false positives which could not possibly be actual cars due to their location. Once the region of interest is identified, it is scanned by a widnow at different scales, and any sub-windows which are marked as positive by the Haar cascade Figure 7: Identifying region of interest, and performing detection with trained Haar cascade classifier classifier are deemed to be detected cars. Restricting the search area helps eliminate almost all false positives. A passing car was always detected as such, although once it gets far ahead enough, the detection scheme fails due to the small size of the object and low image quality. Even though large semi-trucks were not part of the training set, they generally tended to be recognized as cars by the classifier, if close enough. The demos available online can give an accurate illustration of how well this detection and classification scheme works. Some sample screenshots are included in Appendix I. Overall, with a large data set and good quality video stream, such system could be fairly robust although it will never be absolutely perfect and hence an autonomous driving agent would need a much smarter framework in order to detect vehicles on the road. In the next section, we evaluate this object recognition and detection scheme much more precisely with mid- to good- quality input data. 5.2. Car Detection in mid- to good-quality images The dataset used in the following experiments consist of 526 images taken from inside the driver seat of a vehicle, each of which contains at least one car in front of the observer. The images are not sequential frames from a video feed. Sample images from this dataset are shown in Figure 5. The dataset was split into 2/3 training and 1/3 test sets. Overall, 300 sample images of cars were extracted which were used for training each classifier. Knowing that detection rate can decrease as the number of stages in a classifier increase, our task is to determine the optimal number of stages for this given problem. The training parameters minimum hit ratio and maximum false alarm rate are set to 0.995 and 0.3 respectively for all trained classifiers. Following, classifier with number of stages ranging from 5 to 10 are trained and evaluated on the test set. Figure 5: Samples from a car image database. Evaluation is performed by running the detection scheme on the test set and taking note of the type of results that are outputted at each frame. Each output result falls within one of three categories: positive, negative, or partial. Positive results are those that contain a car in a well-defined box. Negative results are such outputs that do not contain any major distinguishable portion of a car. Partial results contain everything in between – if the result contains a major portion of the car, or if it contains a car, but also lots of other stuff, then it is labeled as partial. Figure 6 shows examples of each type of outputs. (a) (b) (c) Figure 6: Examples of a positive (a), negative (b) and a partial (c) detected object. Each trained classifier was tasked with detecting cars in the test set and the resulting outputs were saved and manually labeled as positive, negative or partial. Figure 7 shows the results of each run. As we can see from the chart, the 7-stage classifier detects the highest number of cars in the test set, while the 10-stage classifier detects the lowest false alarm rate, as expected. Performance of Haar-cascade classifiers with varied number of stages 350 300 # detected 250 200 150 100 50 0 n=5 n=6 n=7 n=8 n=9 n = 10 Num . of Stages of Classifier positive partial negative Figure 7: Summary of classifiers’ performance. The results of these experiments illustrate the tradeoff between the hit rate and the false alarm rate of each classifier. Ideally, we want to detect as many actual appearances of the target object in the input stream without reporting too many false positives. In both, driving assistive technology and autonomous driving applications, a false positive error is not nearly as bad as a complete miss of an actual object of interest. Following, we explore an approach to boost the classifier in order to minimize the false alarm rate while maintaining a good hit ratio. One such approach would be to reinsert samples of false positive outputs into the training set and further train the Haar cascade classifier. Retraining the classifier, however, is a highly time-consuming process when compared to other machine learning techniques. A 10-stage Haar cascade classifier, for example, can take up to one hour train on an average PC, even when faced with only a small dataset of 300 positive and 300 negative samples. If a real-time system is being told by the user that some of its findings are false positives, it would not have the luxury of time to adapt to those results. Our approach to improving performance in real time utilizes an SVM which is trained on labeled detected outputs resulting from running the Haar cascade classifier detection scheme. This technique proves time-efficient and it improves performance. A good question at this point is why not use SVM from the very beginning? An SVM approach would likely yield better results than Haar cascade classification. However, we note that it is difficult to efficiently search through an RGB image at different scales for potential candidates. If the SVM makes use of global and local features instead of the raw pixel values, then there would be even extra computational overhead (in addition to scaling the image) when sliding a window and looking for a match. Real-time usability is a requirement for any driving assistive technology or autonomous driving system. We also have to note that object detection and recognition is only a small portion of such a system and as such, we need an efficient algorithm which saves computational resources for other tasks such as object tracking and decision making. We perform experiments to initially validate whether an SVM can be used to distinguish between positive and negative results of the Haar cascade classifier and determine what image representation is best to use. The set of 232 positive and 194 negative output samples from the 5-stage classifier is used as a dataset in this experiment. Each sample is scaled to size 15 by 15, converted to gray-scale image, and undergoes histogram equalization. The equalized gray-scale image is used as the raw input, which each attribute corresponding to a particular pixel with value of 0 to 255 scaled to a real value between 0 and 1. We also perform an experiment to see whether it is better to use the edges in the image as a representation of the instances, rather than the gray-scale image itself. Overall, there are 225 attributes per instance (1 for each pixel), regardless of which representation we use. Figure 8 (a) (b) (c) illustrates the way instances are Figure 5: Samples’ preprocessing: (a) gray scale, (b) preprocessed for input into the SVM histogram equalization, (c) Canny edge detection. algorithm. The experiment suggests that using the equalized gray-scale representation yields better classification results. Using 5-fold cross-validation with a polynomial kernel SVM, we can achieve 93.8% accuracy which is illustrated in the following confusion matrix: predicated positive negative 5 positive 16 178 negative actual 237 Using the detected edges representation of the samples, on the other hand, yielded accuracy of only 83%. Experiments were also performed to determine the optimal scale of the samples and the results show that increasing the image dimensions beyond 15 by 15 does not produce a significant increase in accuracy, but as expected, slows down training and testing due to the quadratic increase of the number of attributes. Following this result, we attempt to boost the 7-stage classifier by training and evaluating an SVM on the dataset comprised of the Haar cascade classifier’s output results. The dataset contains 512 instances, of which 250 are positive, 221 are partial, and 41 are negative. We conduct a 5-fold cross-validation experiment with a multi-class SVM with polynomial kernel of 4th degree and the result is the following confusion matrix: predicted negative 0 24 3 partial 10 14 192 positive negative partial actual positive 240 3 26 All positive instances get classified as either positive or partial, while only a small fraction of negative and partial instances gets classified as positive. No positive instance is classified as negative, which is a highly desirable property in the applications discussed previously. The results are promising and show that boosting a Haar cascade classifier with an SVM can increase performance. The boosted 7-stage Haar cascade classifier is clearly superior to the 10-stage classifier in terms of quality of results. VI. Discussion We have shown that a machine learning approach utilizing a Haar cascade classifier and an SVM can be an efficient and accurate method for performing object detection in real time input video stream. While the detection rate achieved is not high enough for an autonomous driving agent, the proposed scheme could be utilized within a driving assistive technology system. For demos of the currently developed framework, visit: http://www.cs.iastate.edu/~jsinapov/Vision/ The question of whether boosting a Haar cascade classifier with an SVM is more efficient than using SVM for detection itself still remains to be answered. Intuitively, searching for an object within the image would be faster if using a Haar cascade classifier for recognition, but this hypothesis is yet to be validated. Object recognition with SVM and local features (such as SIFT features, for example) has been shown to have very high performance, but it is still a question of whether localizing the target object in an input image can be done efficiently if the features used for recognition are not easy to compute [4]. Ultimately, the goal is to design a system which can efficiently detect an object in the input video stream, as well as efficiently update its model of the target to be detected. SVM is the most likely candidate to achieve this task, as long as we can implement an efficient search routine through the input image. From this standpoint, we can view the Haar cascade object detection scheme as a search technique which identifies the areas most likely to contain the target we are looking for. Once those candidates are localized, they can be passed on to a stronger classifier which would not only produce better results, but also be able to adapt its model based on user feedback. An alternative approach would be to still use AdaBoost for feature selection during training but utilize SVM directly instead of constructing a cascade of decision trees. This has the potential to combine the efficiency of Haar cascade classifier detection scheme with the classification power and robustness of the SVM. References [1] Viola, P., and Jones, M., “Rapid Object Detection using a Boosted Cascade of Simple Features,” IEEE CVPR, 2001. [2] Lienhart, R., and Maydt, J. “An Extended Set of Haar-like Features for Rapid Object Detection,” Submitted to ICIP2002. [3] Bradski, G., Kaehler, A., Pisarevsky, V. "Learning-Based Computer Vision with Intel's Open Source Computer Vision Library." Intel Technology Journal. 2005. [4] Serre, T., Wolf, L., Poggio, T., “Object Recognition with Features Inspired by Visual Cortex”. Proceedings to IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 2005. Appendix I: Sample results from performing car detection on real-time video input feed.