A Co-Chunk based method

advertisement

A CO-CHUNK BASED METHOD

FOR SPOKEN-LANGUAGE TRANSLATION1

CHENG Wei2, ZHAO Jun, LIU Feifan and XU Bo

National Laboratory of Pattern Recognition

Institute of Automation, Chinese Academy of Sciences, Beijing, China

Wcheng@bcu.edu.cn , jzhao@nlpr.ia.ac.cn , ffliu@nlpr.ia.ac.cn , xubo@hitic.ia.ac.cn

ABSTRACT

More flexible speech styles: slow or rapid speeches

Chunking is a useful step for natural language

processing. The paper puts forward a definition of cochunks for Chinese-English spoken-language translation,

based on both the characteristics of spoken-language and

the differences between Chinese and English. An

algorithm is proposed to identify the co-chunks

automatically, which combines the rules into a statistical

method and makes a co-chunk has both syntactical

structure and perfect meaning. Using the co-chunk

alignment corpus, we present the framework of our

translation system. In the framework, the word-based

translation mode is employed to smooth the co-chunkbased translation model. A series of experiments show

that the proposed definition and the co-chunking method

can lead to great improvement to the quality of the

Chinese-English spoken-language translation.

KEYWORDS

chunking; spoken-language

machine translation.

translation;

statistical

1. INTRODUCTION

It is well known that speech-to-speech translation faces

more problems in comparison with pure text translation,

such as:

More irregular spoken utterances: There are much

more pauses, repetitions, omitting etc. in spoken

language.

1

2

with different stresses, accent, appears.

No punctuation to segment the utterances.

Confronted with these problems, more robust

technologies are needed to be developed to achieve an

acceptable performance in the spoken-language

translation system. In recent years, some data-driven

methods are taken as the effectual ways for machine

translation, such as the example-based machine

translation (EBMT, proposed by ATR) and the statistical

machine translation (SMT). The statistical approach is an

adequate framework for introducing automatic learning

techniques in spoken-language translation. It has been

studied for many years[1][2][3][4][5]. However, its

performance isn’t very satisfactory[6].

In this paper, we introduce text chunking into the SMT

model to improve the translation quality. Chunking is a

useful step for natural language processing. There are

many researches dealing with chunk parsing for single

language[7][8][9]. However, in machine translation, we need

a definition correlative with both the source language and

the target language. Therefore, we first present the cochunk definition for Chinese-English spoken-language

translation. Then a co-chunking method based on the

definition is investigated. Finally a SMT system based on

co-chunks is built to improve the translation quality.

The paper is organized as follows. Section 2 describes the

definition and the features of the co-chunk. Section 3

presents an automatic algorithm for the co-chunk

identification. And section 4 presents a statistical

translation framework based on the co-chunk. In section 5

experimental results are presented and analyzed. Some

remarks are given in section 6.

This work is sponsored by the Natural Sciences Foundation of China under grant No. 60272041, 60121302, 60372016

This author is now working in the Artificial Intelligence Laboratory of Beijing City University.

3)

2. DEFINITION OF CO-CHUNKS

In this paper, a co-chunk is composed of a source subchunk and a target sub-chunk. Each of them has both the

syntactic structure and the low ambiguous meaning. The

definition can be described by the following formula:

BC { bs, bt | bs ws0 ,, wsl , bt wt0 ,, wt m ;

bs bt; wsi wsl0 , wt j wt0m ;

(1)

l [0, NS ], m [0, NT ]}

Where, BC denotes a set of co-chunks. “bs” is the source

sub-chunk and l is its length. wsi is a word in the source

sentence. “bt” is the target sub-chunk and m is its length.

wti is a word in the target sentence. NS is the number of

source sub-chunks in the source sentence. And NT is the

number of target sub-chunks in the target sentence. The

detailed explanations are as follows.

1)

2)

3)

Meaning. The typical sub-chunk consists of a single

content word and its contextual environment.

Therefore the meaning of the sub-chunk is the less

ambiguous. This definition can be used for

disambiguation in the machine translation.

Transition. Meanwhile, the meaning of the target

sub-chunk should be as the same as that of the

corresponding source sub-chunk, except that a

source sub-chunk is corresponding to a null target

sub-chunk and vice versa.

NP

VP

NP

ADVP

VP

ADJP

PUN

两 人 || 住 || 这 房间 || 可 || 是 || 小 了 点儿 || 。

I am afraid || this room || is || too small || for two || .

ID

NP

VP

ADJP

PP

PUN

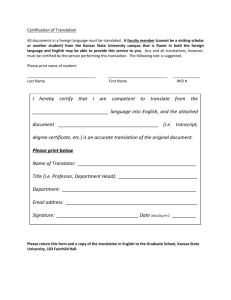

Fig. 1 An example of Chinese-English co-chunk

2)

3. THE AUTOMATIC IDENTIFICATION OF

CO-CHUNKS

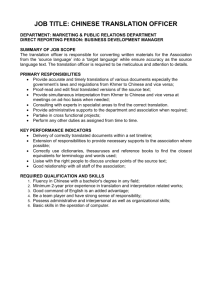

Figure 2 gives the process of the automatic identification

of the co-chunks. It includes three parts: 1) source

chunking, 2) searching the target chunks according to the

source chunks, 3) proof-checking of the co-chunks.

Structure. The sub-chunk is defined as a syntactic

structure which can be described as a connected subgraph of the sentence’s parse-tree. None of them in a

sentence overlaps each other.

An example of Chinese-English co-chunk is given in

figure 1. From it we can see some features of the cochunk:

1)

It integrates the syntactic rules of two different

languages. Here we define 8 kinds of basic subchunks for Chinese as noun sub-chunk, verb subchunk, interrogative sub-chunk, adjective subchunk, preposition sub-chunk, adverb sub-chunk,

modal/punctuation sub-chunk and idiom sub-chunk.

While in English, a SBAR sub-chunk is added and

the modal/punctuation sub-chunk is renamed as the

interjection sub-chunk. The definitions of all kinds

of the sub-chunk are according to the character of

both the Chinese and the English.

It builds the semantic relation between two

languages.

It keeps one of the characteristics in the most

definitions of monolingual chunk, that is, chunks

have a legal syntax structure. Therefore, we can use

the shallow analysis to extract the co-chunk.

The automatic identification system of co-chunks

Bilingual

sentences

Parsing by Possible Search for Possible Proofsource chunk source co-chunks co-chunks checking

co-chunks

chunks

Rules of the

source language

Stochastic

parameter

Rules of the

target language

Bilingual corpse

Fig. 2 Structure of the identification system for co-chunks

3.1. Searching for co-chunk

The finite state machine (FSM) can be employed in the

stages of source chunking and proof-checking. Dynamic

programming together with heuristic function is used in

the searching for the co-chunks. The search algorithm is

as follows.

1)

OPEN := (s), g(s) := 0;

2)

LOOP: IF OPEN = () THEN EXIT (FAIL);

3)

n := FIRST(OPEN);

4)

IF END OF SENTENCE THEN EXIT (SUCCESS);

5)

REOMOVE (n, OPEN), ADD(n, CLOSED);

6)

EXPAND(n)--->{ml}.

7)

IF CHUNK(ml) follows syntactic rules, ADD(ml,

OPEN), and tag POINTER(ml, n);

f ( n, ml ) g ( n, ml ) h(ml ) ;

8)

SAVE min f(PATHi), SORT(NODEj);

9)

GOTO step 2).

f ( k ) g ( k ) h( k )

[ log p(bt k | bs k ) log p(bt rest | bs rest)]

(9)

k

3.2. Calculation algorithm

4. THE CO-CHUNK BASED TRANSLATION

We define

g (k ) log p(btk | bsk )

(2)

k

Where, bsk is the source sub-chunk of the kth co-chunk

and btk is the target sub-chunk of the kth co-chunk. The

objective of the search can be described as

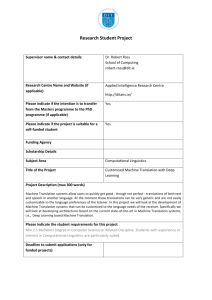

Fig.3 gives the structure of the translation system based

on the co-chunks. It includes two steps:

1)

Training: First, some preprocessing steps are applied

to the Chinese-English corpus, such as, sentence

segmentation and word segmentation. Then the

identification system is employed to identify the cochunks in the corpus automatically. Therefore, the

statistical models can be trained with the co-chunkbased corpus.

2)

Translation: This step consists of the chunk

matching and the translation decoding. The chunk

matching is similar to Chinese word segmentation. It

can be done by the maximum matching algorithm

according to a Chinese chunking corpus. The

translation decoding is the co-chunk-based SMT

whose unit is not a word but a co-chunk.

K

min{ log p (bt k | bs k )}

(3)

k 1

S bs1 bs K ; T bt1 bt K

According to Bayesian formula

p( ) p (bs k | bt k )

p (bt k | bs k ) bt k

p (bs k )

(4)

Where p(bsk) and p(btk) can be estimated by the bigram

language model as

mk

p(bs k ) p( ws j | ws j 1);

j 1

(5)

lk

p(bt k ) p( wt i | wt i 1)

I TRAINING

i 1

p(bsk|btk) is the translation probability of the source subchunk on condition that the target sub-chunk occurs.

mk l k

p(bs k | bt k ) p(l k | m k ) p( ws j | wt i )

(6)

j 1i 1

Where,

can

p ( ws j | wt i )

be

estimated

by

EM

[10]

. p(l k | mk ) is the probability of length

and can be estimated by Possion distribution.

algorithm

Hence, estimation from start node to middle node k is

Bilingual

corpse

Test

sentence

Word-based

corpse

Identification system Chinese Chinese chunk

Chunks

of the co-chunks

matching

Co-chunk

based corpse

Model training

lk

II TRANSLATION

Preprocessing

Parameters

g ( k ) { log p( wti | wti 1) log p(l k | mk )

Statistical Translation

machine

results

translation

i 1

k

mk

lk

j 1

i 1

log p( ws j | wti )

(7)

Fig. 3 Structure of the SMT system based on co-chunks

mk

log p( ws j | ws j 1)]}

4.1. Co-chunk-based translation model

j 1

On the other hand,

h(k ) log p(bt rest | bsrest)

NT

NS

NT

p( wti | wti 1) p( ws j | wti )

log

i k 1

j k 1 i k 1

NS

p( ws j | ws j 1)

j k 1

Thus, we get

(8)

In statistical opinions, translation task can be described as

follow. Given a source (“ Chinese ”) string

C : c1M wc1 , wc2 , wc M , we choose the string E* among

all

possible

target

(“

English

”)

strings

E : e1L we1, we2 ,, weL with the highest probability that is

given by Bayes’ decision rule [1]

E* arg max {Pr( e1L | c1M )}

e1L

arg max {Pr( e1L ) Pr( c1M | e1L )}

e1L

(10)

This is the word-based SMT approach. Pr( e1L ) is the

probability of the language model produced by the target

language. Pr( c1M | e1L ) is the probability of the string

translation model from the target language to the source

language. The argmax operation denotes the decoding

problem, i.e. the generation of the output sentence in the

target language.

In our system, a dynamic programming algorithm is used

as the decoding method which is the same as the fast stack

decoder[11].

Then we define the sentences as

In this section, some results of the automatic identification

system for the co-chunks are presented. A corpus of

66061 sentence pairs is used to train the parameters. A

close test set includes 2487sentences. And the open test

set includes 845 sentences. The precision and the recall is

defined as

N

N

precision r 100 %; recall r 100 % (15)

Np

Na

C : bc1J

bc j wc1, wc2 ,;

E : be1I

bei we1, we2 ,

Where, bcj is a Chinese chunk, bei is an English chunk. J

is the co-chunk number in the Chinese sentence. It is the

co-chunk number in the English sentence. Because a

source sub-chunk can correspond to a null target subchunk, J isn’t always as same as I. Then the equation 10

can be rewritten as

(11)

E* arg max P(be1I ) P(bc1J | bt1I )

E

As the word-based SMT, P(be1I ) is the probability of the

5. EXPERIMENTS AND DISCUSSION

5.1. Experiment of the co-chunk identification

Where N p is the co-chunk number of the identification

result. N a is the co-chunk number of the answers. And

N r is the co-chunk number of the right identification.

Table 1 Results of the co-chunk identification

Test set

Closed Test

Open Test

83.86

81.20

Precision (%)

84.65

81.19

Recall (%)

co-chunk language model. P (bc1J | bt1I ) is the probability

of the co-chunk translation model.

4.2. Smoothing

Because the unit number of co-chunk-based system is

larger than that of the word-based system, the data

sparseness problem is a severe problem for the co-chunkbased translation. That is to say, it needs to be smoothed

in both the co-chunk language model and the co-chunk

translation model.

In our system, the trigram model is used as the co-chunk

language model.

I

P( E ) p(be1) p(be2 | be1) p(bei | bei 2 bei 1)

(12)

i 3

Table 1 shows that the automatic identification method

can deal with parallel corpus effectively. The following

are some analysis and advices for improving the

performances.

1)

2)

3)

And its smoothing algorithm is the back-off method.

Moreover, the co-chunk translation model just likes the

model 1 of IBM[10].

P (C | E ) P (bc1J | be1I )

J

I

p(bc j | bei )

( I 1) J j 0 i 0

(13)

presents some small, fixed number. p (bc j | bei ) is the

translation probability of bcj given bei. It can be estimated

from the EM algorithm. And we smooth this model

according to the word-based translation model.

~

p (bc | be)

p(bc | be) 0

p(bc | be)

m n

p

(

|

)

wc j wei p(bc | be) 0

( n 1)m j 0 i 0

(14)

4)

Accuracy rate and callback rate reach 84.5%

simultaneously for close testing. In the open test its

performance degrades to about 80% which is still

attractive for machine translation.

Most errors are caused by mapping errors between

Chinese chunks and English chunks.

Probability parameters are not accurate enough, due

to the sparse training data we used. It is another

source of mapping errors.

Error rate can be alleviated if more training data are

employed.

Table 2 Examples of the identification results

Examples

麻烦 您 (4)|| 把 预约 (3)|| 推迟 (2)|| 到 三 天 后 (1)|| 。

please (4)|| postpone (2)|| my reservation (3)|| for three days (1)|| .

预定 (10)|| 是 (9)|| 住 (8)|| 两 个 晚上 (7)|| , (6)|| 但 (5)|| 想 (4)||

改为 (3)|| 住 (2)|| 三 个 晚上 (1)|| 。

I (4)|| had (8)|| a reservation (10)|| for (2)|| two nights (7)|| , (6)||

but (5)|| please (-1)|| change (3)|| it (9)|| to three nights (1)|| .

我 (7)|| 今天 (6)|| 订 了 房间 (5)|| 但是 (4)|| 突然 (3)|| 有 了 (2)||

急事 (1)|| 。

I (7)|| have a reservation (5)|| for tonight (6)|| but (4)|| due to (2)||

urgent business (1)|| I am unable (3)|| to make it (-1)|| .

Three examples of identification results are laid out in

Table 2. The numbers in the table are the specific number

of the co-chunks in the sentences.

3)

By formalizing the co-chunks definition, it is

possible to find the better balance point of the

statistical and rule-based methods.

Table 4 Results of the co-chunk-based translation

5.2. Experiment of the co-chunk-based translation

These experiments are carried out on a Chinese-English

parallel corpus. The corpus consists of spontaneous

utterances from hotel reservation dialogs. Although this

task is a limited-domain task, it is difficult for several

reasons: first, the syntactic structures of the sentences are

less restricted and highly variable; second, it covers a lot

of spontaneous speech characters, such as hesitations,

repetitions and corrections. The summary of the corpus is

given in the tables 3.

Table 3 Training corpus

Chinese

Sentences

English

2655

Vocabulary Size

1237

932

Chunk List Size

2785

1775

The system is tested by the test set of 1000 sentences and

evaluated by both subjective judgments and the automatic

evaluation algorithm.

1)

2)

Subjective judgment. The performance measure of

the subjective judgment is the indication of the

closeness of the output to the original with four

grades: (A) All contents of the source sentence are

conveyed perfectly. (B) The contents of the source

sentence are generally conveyed, but some

unimportant details are missing or awkwardly

translated. (C) The contents are not adequately

conveyed. Some important expressions are missing

and the meaning of the output is not clear. (D)

Unacceptable translation or no translation is given.

Automatic evaluation. An automatic evaluation

approach is employed to measure the output quality

of the spoken-language translation. The equation 16

describes its final score. And the detail is in the

reference [12].

N ( 2 1) precision recalli

i

score F

/ N (16)

i 1

2 precisioni recalli

Table 4 shows the results of the examination. From it we

can see:

1)

Co-chunk-based model outperforms word-based

alignment model significantly.

2)

In spoken language, the processing unit for human

maybe is chunks rather than words.

training

corpse

Automatic

evaluation

word-based

Co-chunkbased

Subjective judgments (%)

A

B

C

D

0.589

29.2

22.9

33.3

14.6

0.794

66.7

22.9

10.4

0.01

Three examples of the experiments are laid out as follows.

Here, <c> is the Chinese sentence; <tw>is the translation

result of the word-based system; and <tb> is the

translation result of the co-chunk-based system.

Exp1: <c> 靠 河边 风景 漂亮 的 房间 有没有 ?

<tw> any of the river from the room good view

<tb> are there any rooms with a good view of the

river ?

Exp2: <c> 没有 收到 日本 佐藤 来 的 房间 预约 吗 ?

<tw> [Fail. No translation]

<tb> in the name of Sato from Japan ?

Exp3: <c> 只要 带有 淋浴 的 房间 都 行 。

<tw> is that all the rooms with a shower .

<tb> all the rooms with a shower will be fine .

6. CONCLUSION

In this paper, we give a brief overview on recent progress

of our work. These are mainly based on the definition of

the co-chunk according to the spoken-language translation.

A novel co-chunk identification algorithm in SMT

framework is described in detail. Experimental results

show that it can identify the co-chunks effectively. Then a

series of co-chunk-based statistical machine translation

experiments are presented which show that the proposed

definition can lead to great improvement to the quality of

the Chinese-English spoken-language translation.

7. REFERENCES

[1] P. F. Brown, J. Cocke, V. J. Della Pietra, S. A. Della

Pietra, F. Jelinek, J. D. Lafferty and R. L. Mercer, “A

statistical approach to machine translation.” Comput.

Linguist., vol. 16, no. 2, pp. 79-85, 1990.

[2] Sue J. Ker and Jason S. Chang. A class-based

approach to word alignment. Computational Linguistics,

1997, 23(2): 313-343

[3] Y. Wang. Grammar inference and statistical machine

translation. [thesis of Doctor degree]. CMU-LTI-98-160,

1998

[4] H. Ney, S. Nieβen, F. J. Och, et al. Algorithms for

statistical translation of spoken language. IEEE Trans. on

Speech and Audio Processing, 2000, 8(1): 24-36

[5] Cheng Wei and Xu Bo. Statistical Approach to

Chinese-English Spoken-language Translation in Hotel

Reservation Domain. The International Symposium of

Chinese Spoken Language Processing (ISCSLP’00), 2000.

271-274

[6] M. Carl, “A model of competence for corpus-based

machine translation,” In: Proceedings of COLING’2000,

Saarbrücken, Germany, 2000.

[7] Steven Abney, “Parsing by Chunks,” In: Robert

Berwick, Steven Abney and Carol Tenny (eds.),

Principle-Based Parsing, Kluwer Academic Publishers,

1991.

[8] Erik F. Tjong Kim Sang and Sabine Buchholz,

“Introduction to CoNLL-2000 Shared Task: Chunking,”

In: Proceedings of CoNLL-2000, Lisbon, Portugal, pp.

127-132, 2000.

[9] Zhou Q, Sun Mao-S, and Huang Chang-N, “Chunk

parsing scheme for Chinese sentences,” Chinese J.

Computers, vol. 22, no.11, pp: 1158-1165, 1999 (in

Chinese with English Abstract).

[10] P. F. Brown, V. J. Della Pietra, S. A. Della Pietra,

and R. L. Mercer, “The mathematics of statistical machine

translation: Parameter estimation.” Comput. Linguist.,

vol. 19, no. 2, pp. 263-311, 1993.

[11] Y. Y. Wang, A. Waibel, “Fast Decoding For

Statistical Machine Translation.” In Proceedings of

ICSLP’98, Sydney, Australia, 1998.

[12] CHENG Wei and XU Bo, “Automatic Evaluation of

Output Quality for Speech Translation Systems”, Journal

of Chinese Information processing, vol. 16, no.2, pp.

47-53, 2002.