A Statistician's Apology

JEROME CORNFIELD*

Statistics is viewed as an activity using a variety of formulations to give incomplete, but revealing,

descriptions of phenomena too complex to be completely modeled. Examples are given to stress the depth and

variety of such activities and the intellectual satisfactions to be derived from them.

1. CRITIC'S COMPLAINT

As may be apparent from my title, which is taken from G.H. Hardy's little masterpiece

[17], this address is on a subject covered by numerous predecessors on this occasion: what

is statistics and where is it going? When one tries, spider-like, to spin such a thread

out of his viscera, he must say, first, according to Dean Acheson,

"What do I know, or think I know, from my own experience and not by literary osmosis?" An honest answer

would be, "Not much; and I am not too sure of most of it."

In that spirit, I shall start by considering a nonstatistical critic, perhaps a

colleague on a statistics search committee, who is struck by what he calls the dependent

character of statistics. Every other subject in the arts and sciences curriculum, he

explains, appears self-contained, but statistics does not. Although it uses mathematics,

its criteria of excellence are not those of mathematics but rather impact on other

subjects. Similarly, while other subjects are concerned with accumulating knowledge

about the external world, statistics appears concerned only with methods of accumulating

knowledge but not with what is accumulated. I don't deny, he says, that such activity

can be useful (here he shudders), but why would anyone wish to do it? At this point he

begins to wonder whether statisticians have any proper place in a faculty of arts and

sciences, but being too tactful to say so, he tells, instead, one of those stories about

lies, damned lies and statistics. He ends by quoting from the decalogue of Auden's Phi

Beta Kappa poem for 1946 in which academics are warned

"...

Thou shalt not sit/With statisticians nor commit/a social science."

Such an attitude is not uncommon. Most statisticians have wondered at one time or

another about their choice of profession. Is it merely the result of a random walk or

are there major elements of rationality to it? Would one, in later years, in full

knowledge of one's interests and abilities, agree that the choice was correct? I must

admit that in my more Walter Mitty-like moments I have contemplated the cancer cures

I might have discovered had I gone into one of the biomedical sciences, and similar

marvels for other fields. But advancing years bring realism, and I am convinced that

the outcome of the random walk which made me a statistician was inevitable. The only

state that was an absorbing one was statistics, and I am pleased that the passage time

was so brief. To say why this is so, I must talk about both statistics and myself.

2. DIVERSITY OF INTERESTS

Considering myself first, I point to an affinity with the quantum theorist Pauli, between

whom and all forms of laboratory equipment, it has been said, a deep antagonism subsisted.

He had only to appear between the door-jambs of any physics laboratory in Europe for

some piece of glassware to shatter. Similarly, in my undergraduate class in analytic

chemistry, no matter what solution I was given to analyze, even if only triply distilled

water, I was sure to find sodium, a literal product, I was later told, of the sweat of

my brow. In biology, I retraced the path of James Thurber, the result of whose first

effort to draw what he saw in a microscope was, it will be remembered, a picture of his

own eye. Observational science was clearly not for me, unless I intended to publish

exclusively in the Journal of Irreproducible Results, the famed journal which first

reported the experiment in which one-third of the mice responded to treatment, one-third

did not, and the other was eaten by a cat.

* Jerome Cornfield is chairman, Department of Statistics, George Washington University, and director, Biostatistics Center, 7979 Old Georgetown

Road, Bethesda, Md. 20014. This article is the Presidential Address delivered at the 134th Annual Meeting of the American Statistical Association,

August 27, 1974, St. Louis, Mo. Preparation of this article was partially supported by National Institutes of Health Grant HL15191.

Although I enjoyed mathematics, I enjoyed many other subjects just as much, and a

part-time mathematician ends by being no mathematician at all. This interest in many

phenomena and the sense that mathematics by itself is intellectually confining (as Edmund

Burke said of the law, it sharpens the mind by narrowing it) is characteristic of many

statisticians. Francis Galton, for example, was active in geography, astronomy,

meteorology and, of course, genetics, to mention only a few. I have always thought that

his investigation on the efficacy of prayer, in which he, among other things, compared

the shipwreck rate for vessels carrying and not carrying missionaries, exhibits the

quintessence of statistics [13]. Charles Babbage, the first chairman of the Statistical

Section of the British Association for the Advancement of Science and a leading spirit

in the founding of the Royal Statistical Society, was another from the same mold. He

is perhaps the earliest example of a statistician lost to the field because of the greater

attractions of computation. His autobiography [1] lists an awe-inspiring number of other

subjects in which he was interested, and no doubt the loss to each of them because of

his youthful reaction to erroneous astronomical tables ("I wish to God these calculations

had been executed by steam") was equally lamentable.

A wide diversity of interests is neither necessary nor sufficient for excellence in

a statistician, but a positive association does exist. Statisticians thus tend to be

hybrids, and it was my own hybrid qualities, together with a disinclination to continue

the drift into what Henry Adams called the mental indolence of history, that made the

outcome of my random walk inevitable.

3. COMPLEX SYSTEMS AND STATISTICS

Coming now to statistics, it clearly embraces a spectrum of activities

indistinguishable from pure mathematics at one end and from substantive involvement with

a particular subject matter area at the other. It is not easy to identify the common

element that binds these activities together. Quantification is clearly a key feature

but is hardly unique to statistics. The distinction between statistical and

nonstatistical quantification is elusive, but it seems to depend essentially on the

complexity of the system being quantified; the more complex the system, the greater the

need for statistical description.

Once, many people believed that the necessity for statistical description in a system

was due solely to the primitive state of its development. Eventually, it was felt, biology

would have its Newton, then psychology, then economics. But since Newtonian-like

description has turned out to be inadequate even for physics, few believe this anymore.

Some phenomena are inherently incapable of simple description. A number of years ago

I gave as an example of such a class of phenomena the geography of the North American

continent [9]. I was not suggesting that the Lewis and Clark expedition would have

benefited from the presence of a statistician (if its objectives had been quantitative

it might very well have) but only that many scientific fields that are now descriptive

and statistical may remain so for the indefinite future.

4. LIFE SCIENCES AND STATISTICS

In the life sciences, the somewhat naive 19th century expectations as expressed most

forcibly perhaps by the great French physiologist, Claude Bernard, have not been

realized. Bernard loathed statistics as inconsistent with the development of scientific

medicine. In his Introduction to the Study of Experimental Medicine [2, pp. 137 ff.]

he wrote:

"A great surgeon performs operations for stones by a single method; later he makes a statistical summary

of deaths and recoveries, and he concludes from these statistics that the mortality law for this operation

is two out of five. Well, I say that this ratio means literally nothing scientifically and gives no certainty

in performing the next operation. What really should be done, instead of gathering facts empirically,

is to study them more accurately, each in its special determinism ... by statistics, we get a conjecture

of greater or less probability about a given case, but never any certainty, never any absolute determinism

... only basing itself on experimental determinism can medicine become a true science, i.e., a sure science

... but indeterminacy knows no laws: laws exist only in experimental determinism, and without laws there

can be no science."

It is ironic that more than a century later, scientific understanding of the

biochemical factors governing the formation of gallstones has progressed to the point

that their dissolution by chemical means alone has become a possibility [22], but one

whose investigation, even now, must include a modern clinical trial with randomized

allocation of patients and statistical analysis of results. In the view of many,

Bernard's vision of a complete nonstatistical description of medical phenomena will not

be realized in the foreseeable future. Sir William Osler was at least as close to the

truth when he said that medicine will become a science when doctors learn to count.

Although the exclusively reductionist philosophy of Bernard still dominates

preclinical teaching in most medical schools, many important biomedical problems not

amenable, in the present state of knowledge, to this form of attack have been and are

being successfully investigated. In his presidential address to the American Association

for Cancer Research [21], Michael Shimkin discussed one of the most well known of them,

smoking and health, and ended by suggesting that a Nobel Prize for accomplishments on

this problem be awarded and shared by Richard Doll and Bradford Hill, Cuyler Hammond

and Daniel Horn, and Ernest Wynder, three of whom are members of either the ASA or the

Royal Statistical Society.

A less well-known biomedical activity, in which the impact of statistics is also

becoming considerable, is the evaluation of food additives. Even the most traditional

of toxicologists has been compelled to admit that an experiment on n laboratory animals,

none of whom showed an adverse reaction to an additive, does not establish, even for

that species of animal, the absolute safety of that additive for any finite n, much less

that the risk is less than 1 in n [18]. A more fundamental problem than either of these,

that of brain function, is, according to one investigator, unlikely to be resolved by

deterministic methods since its primary mode of function is believed by many to be

probabilistic [4]. For none of these problems, nor for many others that could be listed,

is a reductionist approach, let alone solution, anywhere in sight, and if the problems

are to be attacked at all, they must be attacked statistically.
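The toxicologist's point about n animals can be made concrete with an elementary binomial calculation. The sketch below is not taken from [18]. It assumes that the animals respond independently with a common risk p, and the function names and the 95 percent confidence level are chosen here purely for illustration.

```python
def prob_zero_events(n, p):
    """Probability that none of n independent animals reacts when the true risk is p."""
    return (1.0 - p) ** n


def upper_bound_zero_events(n, confidence=0.95):
    """Largest risk p not excluded, at the given confidence, by 0 reactions in n animals.

    Solves (1 - p)**n = 1 - confidence for p.
    """
    return 1.0 - (1.0 - confidence) ** (1.0 / n)


if __name__ == "__main__":
    n = 100
    print(prob_zero_events(n, 1.0 / n))  # chance of a clean experiment when the risk is 1/n
    print(upper_bound_zero_events(n))    # upper confidence bound on the risk
```

With n = 100, a true risk of 1 in n still produces a wholly negative experiment about 37 percent of the time, and the 95 percent upper bound on the risk is close to 3 in n rather than 1 in n.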

This, of course, does not mean they must be attacked by statisticians. Osler was

questioning the unwillingness and not the inability of physicians to count, and many

of them have shown that when they become involved in a problem that requires counting

they can do a pretty good job of it. No one, not even our hypothetical critic,

questions that statisticians have a useful auxiliary role to play in such investigations.

But the heart of his question and of my inquiry is why should any person of spirit, of

ambition, of high intellectual standards, take any pride or receive any real stimulation

and satisfaction from serving an auxiliary role on someone else's problem. To prepare

the ground for an answer, I shall first consider two examples taken from my own

experience.

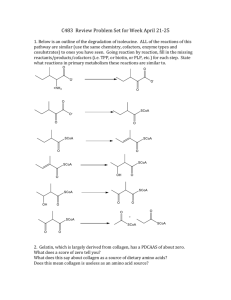

5. EXAMPLE: TOXICITY OF AMINO ACIDS

The first example concerns a series of investigations on the toxicity of the essential

amino acids undertaken by the Laboratory of Biochemistry of the National Cancer Institute

[15]. They were motivated by the finding that synthetic mixtures of pure amino acids

could have adverse effects when administered to patients following surgery. An initial

series of experiments had determined the dosage-mortality curve in rats for each of the

ten amino acids. The next step, the investigation of mixtures, threatened to founder

at conception because there were over 1,000 possible mixtures of the ten amino acids

that could be formed, and to study so many mixtures was clearly beyond the bounds of

practicability.

At the time these experiments were done, several methods for measuring joint effects

had been proposed. Their common feature was that they did not depend on detailed

biochemical understanding of joint modes of action. The primitive but not very

well-defined notion to be quantified was that combinations may have effects that are

not equal to the sum of the parts and that knowing this may lead to better understanding

of modes of action. J. H. Gaddum, the British pharmacologist who was one of the inventors

of the probit method, discussed the quantification of the primitive notion in his

pharmacology text with a simplicity and generality that contrasted with most of the other

discussions then available [12]. He started with the idea that if drugs A and B were

the same drug, even if in different dilutions, their joint effects were by definition

additive. That definition implied that if amounts xA and xB of drugs A and B individually

led to the same response, e.g., 50 percent mortality, then they were additive at that

level of response if and only if the mixture consisting of some fraction, p, of xA and

fraction (1 - p) of xB led to that same level of response for all p between zero and unity.

This concept of additivity is different from that customarily used in statistics as may

be seen by noting that, in the customary concept, if responses are additive, then log

responses are not; but with Gaddum's concept, if drugs are additive with respect to a

response, they are additive with respect to all one-to-one functions of that response.

I shall refer to his concept of additivity as dosewise additivity to distinguish it from

the customary statistical concept which is of response-wise additivity.
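The two concepts can be set side by side in symbols. The notation is introduced here for illustration and is not Gaddum's: f_A(x) and f_B(x) denote the response to dose x of each drug given alone, and f_AB(a, b) the response to a mixture of dose a of A with dose b of B. The response-wise form shown is one simple reading of the customary statistical concept.

```latex
% Dosewise additivity at response level r
\[
f_A(x_A) = f_B(x_B) = r
\quad\Longrightarrow\quad
f_{AB}\bigl(p\,x_A,\;(1-p)\,x_B\bigr) = r
\quad\text{for all } 0 \le p \le 1 .
\]

% Invariance: for any one-to-one function g of the response
\[
g\bigl(f_{AB}(p\,x_A,\,(1-p)\,x_B)\bigr) = g(r) = g\bigl(f_A(x_A)\bigr) = g\bigl(f_B(x_B)\bigr) .
\]

% Response-wise additivity, which is not preserved by the logarithm
\[
f_{AB}(a, b) = f_A(a) + f_B(b),
\qquad
\log f_{AB}(a, b) = \log\bigl(f_A(a) + f_B(b)\bigr) \neq \log f_A(a) + \log f_B(b)
\ \text{in general.}
\]
```

The dosewise definition refers only to doses that produce equal responses, which is why relabeling the response by g leaves it unchanged.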

An alternative, then, to investigating the 1,023 possible mixtures of amino acids

was to start with the concept of dosewise additivity. If the ten amino acids were in

fact dosewise additive in their effects on mortality, further investigation of mixtures

might be unrewarding, while large departures from additivity in either direction might

provide insights into possible joint modes of action. My biochemical colleagues were

initially quite unimpressed with this proposal. But since the dosage-mortality curves

for the individual amino acids were so steep that one-half an LD99.9 (the dose estimated

to be lethal for 99.9 percent of the animals) would elicit no more than a one to three

percent mortality, they did agree to test it in a small experiment. The experiment

consisted of using a mixture of one-half the LD99.9's of each of two amino acids. If

response-wise additivity held, this should have led to a two to six percent mortality,

but dose-wise additivity would have led to 99.9 percent mortality. Although they didn't

say so at the time, the biochemists regarded this experiment as worth the investment

of just two rats. But they did call me the next day to report that both rats had died,

and then added in an awe-stricken tone that would have gratified Merlin himself, "and

so fast."

Once the usefulness of the model of dose-wise additivity as a standard against which

to appraise actual experimental results was accepted, my role became that of passive

observer. But here the real biochemical fun began. The biochemists started with a single

experiment in which a mixture of the ten amino acids, each at a dosage of one-tenth their

individual LD50, was administered to a group of rats. On the hypothesis of dosewise

additivity, 50 percent should have died; in fact, none did. Clearly, the different amino

acids were not augmenting each other in the same way that each increment of a single

amino acid augments the preceding amounts of that amino acid. A 50 percent kill was

obtained only when the amount of each ingredient in the mixture was increased by about

70 percent.
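The predictions compared in these experiments can be reproduced in a small sketch. It rests on assumptions that are not in the original investigation: each dosage-mortality curve is taken to be log-probit, all curves share an illustrative slope of 17 probits per tenfold change in dose (chosen so that one-half an LD99.9 gives roughly 2 percent mortality), and every LD50 is set to 1 in arbitrary units. The function names are hypothetical.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()


def dose_for_response(r, ld50, slope):
    """Dose of a single agent that gives mortality r on an assumed log-probit curve."""
    return ld50 * 10 ** (STD_NORMAL.inv_cdf(r) / slope)


def mortality(dose, ld50, slope):
    """Mortality from a single agent at the given dose on the same assumed curve."""
    return STD_NORMAL.cdf(slope * math.log10(dose / ld50))


def dosewise_additive_mortality(doses, ld50s, slopes, tol=1e-10):
    """Mortality r at which the dose fractions sum to one: sum_i dose_i / x_i(r) = 1."""
    def fraction_sum(r):
        return sum(d / dose_for_response(r, ld50, s)
                   for d, ld50, s in zip(doses, ld50s, slopes))

    lo, hi = 1e-12, 1.0 - 1e-12
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        # fraction_sum falls as r rises, so a sum above one means the response exceeds mid.
        if fraction_sum(mid) > 1.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


SLOPE = 17.0  # assumed probit slope per log10 dose, illustrative only
LD50 = 1.0    # arbitrary dose units

# Two amino acids, each at one-half of its LD99.9.
half_ld999 = 0.5 * dose_for_response(0.999, LD50, SLOPE)
single = mortality(half_ld999, LD50, SLOPE)
response_wise = 2 * single
dose_wise = dosewise_additive_mortality([half_ld999] * 2, [LD50] * 2, [SLOPE] * 2)

# Ten amino acids, each at one-tenth of its LD50.
ten_way = dosewise_additive_mortality([0.1 * LD50] * 10, [LD50] * 10, [SLOPE] * 10)
```

Under these assumed curves the response-wise prediction for the two-rat experiment is a few percent, while the dosewise prediction is 99.9 percent; for the ten-acid mixture the dosewise prediction is 50 percent. The observation that each ingredient had to be raised by about 70 percent corresponds to a dose-fraction sum of about 1.7 at the LD50, where dosewise additivity would give 1.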

To investigate further the nature of this joint effect, ten separate mixtures were

prepared, each containing nine of the amino acids at a dosage of one-ninth × 1.7 their

individual LD50's. At this point a wholly unexpected result emerged. For all but one

of the ten mixtures, the departures from additivity were of about the same magnitude

as for the mixture containing all ten. But one of the mixtures, the one lacking

L-arginine, was much more toxic than the other nine, suggesting that, despite its

toxicity when given alone, in a mixture L-arginine was protective. A direct experiment

confirmed this. The hypothesis was now advanced that the toxicity of each of the amino

acids was due to the formation and accumulation of ammonia and that the protective effect

of L-arginine was a result of its ability, previously demonstrated in isolated

biochemical systems, to speed up the metabolism of ammonia. A later series of experiments fully confirmed this hypothesis.

A major advance in the understanding of the metabolism of amino acids was thus

crucially dependent on a wholly abstract concern with appropriate ways to quantify the

joint effects of two or more substances.

6. EXAMPLE: THE ELECTROCARDIOGRAM

The other example is taken from ongoing work in the computerized interpretation of

the electrocardiogram, or ECG [8]. It is convenient to consider computerization as

involving two nonoverlapping tasks. The first is measurement of the relevant variables,

or in the specialized terminology of the field, wave recognition. The second is the

combination of the variables to achieve an interpretation, or as it is sometimes called,

a diagnosis. The function of the wave recognition program is to summarize the electrical

signal which shows voltage as a function of time for each of a number of leads. There

are characteristic features to this signal which take the form of maxima and minima and

which can be related to various phases of the heart cycle. These features are referred

to as waves and the wave recognition program locates the beginning and end of each wave.

From these, a series of variables is defined and their values computed. The signal has

thus been transformed into a vector of variables.

The wave recognition procedures of the different groups that have developed

computerized interpretations all agree in principle, although there are important

differences in detail, and hence, in accuracy. The interpretation phase of the different

programs does involve an important difference of principle, however. Traditional reading

of the ECG by a cardiologist involves the use of certain decision points. Thus, the ECG

is traditionally considered "consistent with an infarct" if it manifests one or more

special characteristics, such as a Q wave of 0.03 sec. or more in certain leads, but

normal if it manifests none of these characteristics. Most groups have simply taken such

rules and written them into their programs. Headed by Dr. Hubert Pipberger, the Veterans

Administration group, with which I have been involved, has elected, instead, to proceed

statistically by collecting ECG's on a large number of individuals of known diagnostic

status (normals, those having had heart attacks, etc.) and basing the rules on the data

by using standard multivariate procedures rather than on a priori decision points. This

statistical approach distinguishes the Veterans Administration program from all the

others now available.

At this point, I shall consider just a secondary aspect of the general program, the

detection of arrhythmias, and a restricted class of arrhythmias at that: those which

manifest themselves in disturbances of the RR rhythm. The time between the R peaks of

the ECG for two successive heart beats is termed an RR interval, and the problem was

to base a diagnosis on the lengths of the successive RR intervals. From a formal

statistical point of view, each patient is characterized by a sequence of values t1,

t2, ..., tn, which can be considered a sample from a multivariate distribution. If the

distribution is multivariate normal and stationary, the sufficient statistics for any

sequence of intervals are given by the mean interval, the standard deviation and the

successive serial correlations. Thus, the RR arrhythmias should be classifiable in terms

of these purely statistical attributes.

Even before looking at any data, this formal scheme seemed to provide an appropriate

quantification for this class of arrhythmias. High and low mean values for a patient

correspond to what are called tachycardias and bradycardias. High standard deviations

characterize departures from normal rhythm, while the different types of departures,

the atrial fibrillations and the premature ventricular contractions (which must be

distinguished because of their different prognoses), could be distinguished by their

differing serial correlations. A statistician familiar only with the purely formal

aspects of stationary time series and multivariate normal distributions and entirely

innocent of cardiac physiology, as I am, is nevertheless led to an appropriate

quantification. When these ideas are tested by classifying ECG's from patients with known

rhythmic disturbances, about 85 percent are correctly classified [16]. Because of the

simplicity of the scheme, a small special purpose computer, suitable for simultaneous

bedside monitoring of eight to ten patients in a coronary care unit, seems feasible and

is under active investigation.
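The summary statistics named above are simple to compute from a sequence of RR intervals. The following is a minimal sketch, not the Veterans Administration program: the function name, the choice of two lags and the made-up interval sequence are assumptions, and no classification thresholds are given because none appear in the text.

```python
from statistics import fmean, pstdev


def rr_summary(intervals, max_lag=2):
    """Return (mean, standard deviation, serial correlations) of RR intervals in seconds."""
    n = len(intervals)
    if n <= max_lag:
        raise ValueError("need more intervals than the largest lag")
    mean = fmean(intervals)
    sd = pstdev(intervals, mu=mean)
    deviations = [t - mean for t in intervals]
    sum_sq = sum(d * d for d in deviations)
    serial = []
    for lag in range(1, max_lag + 1):
        if sum_sq == 0.0:
            serial.append(None)  # correlation is undefined for a perfectly regular rhythm
        else:
            cross = sum(deviations[i] * deviations[i + lag] for i in range(n - lag))
            serial.append(cross / sum_sq)
    return mean, sd, serial


# Made-up sequence alternating short and long intervals, for illustration only.
alternating = [0.8, 1.2] * 10
mean_rr, sd_rr, serial_rr = rr_summary(alternating)
```

A strictly alternating sequence like this one gives a strongly negative correlation at lag one and a strongly positive one at lag two. The mean interval is the reciprocal of the heart rate (60 divided by the mean in seconds gives beats per minute), so a long mean interval indicates a slow rate. Which patterns of serial correlation separate the different arrhythmias is a matter for the data of [16], not for this sketch.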

7. KEY ROLE OF INTERACTIONS

Having placed this much emphasis on the examples, I am a little embarrassed at the

possibility that they will have failed to show that there is stimulation and satisfaction in working on someone else's problem. Compared with, say, the revolution in

molecular biology and the breaking of the genetic code, the activities described are

admittedly pretty small potatoes. Nevertheless, both the scientific problems and the

statistical ideas involved are nontrivial and the essential dependence of the final product on the blending of the two is characteristic. The role of statistics was central,

not auxiliary. The same results could not have been attained without statistics simply

by collecting more data. Important though efficient design and minimization of the

required number of observations are, they are by no means all that statistics has to

contribute, and both examples highlight this. They also seem to refute the common

statement, "If you need statistics, do another experiment," if yet another refutation

were needed.

The variety of mathematical ideas and applications in science and human affairs that

flows from the primitive notion of quantifying characteristics of populations as opposed

to explaining each individual event in its own "special determinism" would come as a

surprise to William Petty, John Graunt, Edmund Halley and the other founding fathers.

The steps from them to the idea of a population of measurements, described by theoretical

multivariate distributions, and then to the theory of optimal decisions based on samples

from these distributions (all of which were needed to lay the foundations for the ECG

application) resulted from the efforts of some of the best mathematical talents of our

era. With these developments at our disposal, we are, astonishingly, at the heart of

the problem of computerizing the interpretation of the ECG. For if we take as our goal

minimum misclassification error or minimum cost of misclassification, statistical

procedures of the type used by the Veterans Administration group can be shown to be

preferable to computerization of the traditional cardiologic procedures [10]. A

statistician who finds himself at one of the many symposia devoted to this problem is

prepared to address the central and not the peripheral issues involved. And in that rapid

shift from theory to practice, so characteristic of statistics, he can also sketch out

the design of a collaborative study comparing the performance of the different programs,

which could confirm or deny this claim [19].

I must, however, not exaggerate. Statistics, although necessary for this problem,

is certainly not sufficient. The problem requires clinical engineering and many other

skills as well. A test relates to the entire package and not just the statistical

component, and this is true for most statistical applications. It will be remembered

that a well-designed, randomized test of the effectiveness of cloud seeding foundered

on the fact that hunters used as targets the receptacles intended for the collection

and measurement of the amount of rainfall, thus fatally impairing the variables needed

for evaluation.

It is for this as well as for other reasons that no one has ever claimed that statistics

was the queen of the sciences, a claim which, even for mathematics, was dependent on

a somewhat restricted view of what constituted the sciences. I have been groping for

a more appropriate noun than "queen," something less austere and authoritarian, more

democratic and more nearly totally involved, and, while it may not be the mot juste,

the best alternative that has occurred to me is "bedfellow." "Statistics: bedfellow of

the sciences" may not be the banner under which we would choose to march in the next

academic procession, but it is as close to the mark as I can come.

8. EXAMPLES OF INTERACTIONS

How can a person so engaged find the time to maintain and develop his or her grasp

of statistical theory, without which one can scarcely talk about the stimulation and

satisfaction that comes from successful work on someone else's problem? The usual answer

is to distinguish between consultation and research and to insist that time must be

reserved for the latter. This answer comprehends only part of the truth, however, because

it overlooks the complementary relation between the two. Application requires

understanding, and the search for understanding often leads to, and cannot be

distinguished from, research.

The true joy is to see the breadth of application and breadth of understanding grow

together, with the unplanned fallout (the pure gravy, so to speak) being the new research

finding. It sometimes turns out not to be so new, but aside from the embarrassing light

this casts on one's scholarship, that should not be regarded as more than a minor misfortune.

It is hard to give examples of these interactions which don't become unduly technical

or too long, but some of the issues involved in sequential experimentation may give a

glimpse of the process. Many years ago in trying to construct a sequential screening

test which would require fewer observations than the standard six-mouse screen then being

used by an NIH colleague, I was both crushed and baffled to find that, using the sequential

t test, more than six observations were required to come to a decision no matter what

the first six showed. This was crushing because the increase in efficiency promised could

not be realized, and baffling because inability to reach a decision after six

observations, no matter how adverse to the null hypothesis they might be, seemed not

only inefficient, but downright ridiculous.

Much fruitless effort was spent in the following years (long after the problems at

which the six-mouse screen had been directed had been solved) trying to understand the

difficulty; but only after I realized that the sequential test, unlike the fixed sample

size one, was choosing between two alternatives was the problem clarified. Observations

really adverse to the null hypothesis were almost equally adverse to the alternative

and, hence, because of the analytic machinery of the sequential test, considered

indicative of a large standard deviation, even if all the observations were identical.

In light of this large standard deviation, the test naturally called for more data before

coming to a decision. But if a composite rather than a simple alternative with respect

to the mean were considered, or, as I now prefer to say it, if prior probabilities were

assigned to the entire range of alternatives with respect to the mean, rejection of the

null hypothesis even after two sufficiently adverse observations was possible, and the

puzzle disappeared [6].

The problem was intimately connected with the role of stopping rules in the

interpretation of data, a question of great importance for the clinical trials with which

I was becoming involved. From this line of investigation, I concluded, as had others

who had previously considered the problem, that in choosing among two or more

hypotheses, stopping rules were largely irrelevant to interpretation and, in

particular, that it made no difference whether the experiment was a fixed sample size

or a sequential one. Data is data, just as in the old story, "pigs is pigs."
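A standard textbook illustration, which is not taken from this address, shows why the stopping rule drops out when the choice is between specified alternatives. Suppose a series of independent trials yields 3 successes in 12 trials. The experiment may have fixed the number of trials in advance, or it may have continued until the third success. The sketch below, with hypothetical function names and made-up numbers, computes the likelihood ratio between two hypothesized success probabilities under each design.

```python
from math import comb


def fixed_sample_likelihood(p, successes, trials):
    """Binomial likelihood: the number of trials was fixed in advance."""
    return comb(trials, successes) * p ** successes * (1 - p) ** (trials - successes)


def sequential_likelihood(p, successes, trials):
    """Negative binomial likelihood: sampling stopped at the last success, on the final trial."""
    return comb(trials - 1, successes - 1) * p ** successes * (1 - p) ** (trials - successes)


def likelihood_ratio(likelihood, p1, p0, successes, trials):
    """Ratio of the likelihoods of the same data under p1 and under p0."""
    return likelihood(p1, successes, trials) / likelihood(p0, successes, trials)


# Made-up data: 3 successes in 12 trials, comparing p = 0.5 with p = 0.25.
ratio_fixed = likelihood_ratio(fixed_sample_likelihood, 0.5, 0.25, 3, 12)
ratio_sequential = likelihood_ratio(sequential_likelihood, 0.5, 0.25, 3, 12)
```

The two designs differ only in a combinatorial factor that does not involve p, so it cancels in the ratio and the two ratios agree. A tail-area calculation, by contrast, sums over outcomes that were not observed, and those outcomes differ between the two designs.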

But the problem does not end there. As Piet Hein puts it, "Any problem worthy of

attack/Proves its worth by hitting back..."

It is not always easy or even possible to cast all hypothesis testing problems in

the form of a choice between alternatives. Isolated hypotheses, for which alternatives

cannot be specified, also arise and for these the stopping rule does matter. Thus, the

original form of the VA algorithm for the computerized interpretation of the ECG computed

the posterior probability that an individual fell in one of a number of predesignated

diagnostic entities, and this probability provided the basis for a choice between these

alternative hypotheses. But a difficulty was posed by the occasional patient who did

not fall into any of the diagnostic entities built into the algorithm, which then tended,

in desperation, to spread the posterior probabilities over all the entities that were

built in.

To avoid this, we might have tried to incorporate more entities into the algorithm,

but even in principle this appeared impossible. Nobody knows them all. What seemed

clearly required, and is now being added to the algorithm, is a classical tail area test

of the hypothesis that a patient falls in one of these predesignated diagnostic entities,

to be used as a preliminary screen. If this hypothesis is rejected, the posterior

probabilities, being inappropriate, would not be computed. But this commits one to a

dualism in which stopping rules sometimes matter and sometimes don't, which is

philosophically distasteful, but which seemingly cannot be avoided. There is perhaps

comfort in the story about the physicist, who, in a similar dilemma, considered light

a particle on Mondays, Wednesdays, and Fridays, a wave on Tuesdays, Thursdays, and

Saturdays and who, on Sunday, prayed.
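The two-stage logic described above, a tail-area screen followed by posterior probabilities, can be sketched in a toy setting. Everything specific here is an assumption made for illustration: each diagnostic entity is described by a single normally distributed measurement, the means, standard deviations and prior probabilities are invented, the significance level is arbitrary, and the screen rejects only when the largest tail area over all entities falls below that level. The actual algorithm is multivariate and the text does not specify the form of its screen.

```python
from statistics import NormalDist

# Hypothetical diagnostic entities described by one measurement each: (mean, sd, prior).
ENTITIES = {
    "normal": (0.0, 1.0, 0.7),
    "infarct": (3.0, 1.0, 0.3),
}


def tail_area(x, mean, sd):
    """Two-sided tail area of x under a normal entity."""
    z = abs(x - mean) / sd
    return 2.0 * (1.0 - NormalDist().cdf(z))


def posterior(x, entities=ENTITIES):
    """Posterior probability of each entity given x, by Bayes' theorem."""
    joint = {name: prior * NormalDist(mean, sd).pdf(x)
             for name, (mean, sd, prior) in entities.items()}
    total = sum(joint.values())
    return {name: value / total for name, value in joint.items()}


def screened_posterior(x, entities=ENTITIES, alpha=0.001):
    """Return posteriors only if x is compatible with at least one entity, else None."""
    best = max(tail_area(x, mean, sd) for mean, sd, _ in entities.values())
    if best < alpha:
        return None  # x lies in the tail of every entity: do not classify
    return posterior(x, entities)
```

A measurement near one of the entities passes the screen and receives posterior probabilities that sum to one; a measurement far from all of them is returned unclassified rather than being forced into one of the predesignated entities.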

However sketchy, this illustration of the interaction between theory and practice

should indicate that the usual dichotomy is a false one, detrimental to both theory and

practice. Critical attention to application exposes shortcomings and limitations in

existing theory which, when corrected, lead to new theory, new applications and a new

orthodoxy in a loop which may be never-ending, and whose convergence properties are in

any event unknown.

9. NONINFERENTIAL ASPECTS OF STATISTICS

This discussion has the disadvantage of giving more emphasis than I would wish to

the inferential and, in particular, to the hypothesis testing aspects of statistics.

Statistical theory, instruction and practice have tended to suffer from overemphasis

on hypothesis testing. Its major attraction in some applications appears to be that it

is admirably suited for the allaying of insecurities which might better have been left

unallayed, or, as Tufte puts it, for sanctification. I recall one unhappy situation in

which an investigator castigated the statistician whose computer-based P-values had not

alerted him to the possibility that his data interpretation had overlooked some

well-known, not merely hypothetical, hidden variables. Both investigator and

statistician might have been better off without the P-values and with a more quantitative

orientation to the actual problem at hand.

The concept of dosewise additivity of the first example provides another

noninferential example of quantification in statistics. Of course, multivariate

normality implies linear relations so that, even if additivity were not a constant theme

in most branches of science, an interest in it is a natural consequence of a

statistician's other interests. But it is doubtful if the concept of dosewise additivity

would ever have been developed abstractly as a natural consequence of other statistical

ideas, if not for its importance in interpreting joint effects in certain kinds of medical

experimentation.

There are many other problems in quantification which are noninferential in which

statisticians could nevertheless profitably be more active. The wave recognition aspects

of the ECG interpretation, referred to earlier, provide one such example. Many of the

computer-based image processing techniques, e.g., for chromosomes and for trans-axial

reconstruction of brain lesions, provide others. Engineers and computer scientists have

been more heavily involved in such problems than statisticians, largely, I am afraid,

because they are in closer contact with the real problems and more willing to pursue

nontraditional lines of inquiry. Besides computer-based applications, many problems

in modeling biochemical, physiological or clinical processes are mathematically

interesting and substantively rewarding. I was involved in a few such applications [20,

11, 7], as were other statisticians, but as a profession we could do more. If we don't,

others will.

10. NECESSITY FOR OUTSIDE IDEAS

It is much more respectable than it once was to acknowledge that statistical theory

cannot fruitfully stand by itself. There was a time when many statisticians would have

applauded George Gamow's account [14, p. 34] of David Hilbert's opening speech at the

Joint Congress of Pure and Applied Mathematics, at which Hilbert was asked to help break

down the hostility that existed between the two groups. As Gamow tells it, Hilbert began

by saying,

"We are often told that pure and applied mathematics are hostile to each other. This is not

true. Pure and applied mathematics are not hostile to each other. Pure and applied mathematics

have never been hostile to each other. Pure and applied mathematics will never be hostile to

each other. Pure and applied mathematics cannot be hostile to each other because, in fact,

there is absolutely nothing in common between them."

But I think that today most of us would be more sympathetic with Von Neumann's account

[23, p. 2063]:

"As a mathematical discipline travels far from its empirical source, or still more, if it is

a second and third generation only indirectly inspired by ideas coming from 'reality', it is

beset with very grave dangers. It becomes more and more purely aestheticizing, more and more

purely l'art pour l'art. This need not be bad, if the field is surrounded by correlated subjects,

which still have closer empirical connections, or if the discipline is under the influence

of men with an exceptionally well-developed taste. But there is a grave danger that the subject

will develop along the line of least resistance, that the stream, so far from its source, will

separate into a multitude of insignificant branches, and that the discipline will become a

disorganized mass of details and complexities. In other words, at a great distance from its

empirical source, or after much 'abstract' inbreeding, a mathematical subject is in danger

of degeneration. At the inception the style is usually classical; when it shows signs of

becoming baroque, then the danger signal is up.... Whenever this stage is reached, the only

remedy seems to me to be the rejuvenating return to the source: the reinjection of more or

less directly empirical ideas. I am convinced that this was a necessary condition to conserve

the freshness and the vitality of the subject and that this will remain equally true in the

future."

A rejuvenating return to the source will involve more, however, than sitting in on

a few consultations or leafing through a subject matter article or two in the hope that

they will suggest interesting mathematical problems. A definite expenditure of time and

intellectual energy in one or more subject matter areas is required, not because they

will necessarily generate mathematical problems but because the areas are of intrinsic

interest. This is well understood and would hardly be worth repeating if there were not

some corollaries that are sometimes overlooked.

The first is that the certainties of pure theory are not attainable in empirical

science or the world of affairs. More subtle or at least different critical faculties

are needed to appraise scientific evidence than are needed for evaluating a formal

mathematical proof. For example, in his Double Helix [24], Watson speaks scornfully of

those contemporaries who were unable to see that Avery's experiment made virtually

inevitable the idea that the nucleic acids rather than the proteins were the source of

the genetic material. However it may have seemed at the time, the subsequent course of

events has confirmed Watson's judgment. Ability to see where evidence is pointing before

the last piece is in, and, contrariwise, ability to see the numerous directions in which

it is pointing from only the first piece, requires as much cultivation by the statistician

interested in the field as by the specialists, perhaps more.

Taking an example a little closer to mathematics, the well-known set theorist Zermelo

was also interested in physics and, in fact, translated Willard Gibbs' work on

statistical mechanics into German. He was so overwhelmed with its logical deficiencies,

however, that his remaining contributions to statistical mechanics consisted of attacks

on Gibbs. It remained for other physicists, who, although also interested in logical

purity, were equally interested in the subject matter, to take the constructive step

of modifying Gibbs' formulation to eliminate the deficiencies [3].

In statistical applications one can detect this same search for purity, as manifested

in a reluctance to make assumptions, even though they can lead to important

simplifications when approximately true, or in an unwillingness to admit prior

probabilities even when, as in the diagnosis problem, they have a well-defined frequency

interpretation and are required for optimum properties. It also shows up in a certain

type of statistical criticism of scientific results, in which pointing to a potential

weakness is considered equivalent to demolition. Bross's insistence [5] that some effort to demonstrate the

reality as well as the potentiality of the weakness be required does not seem to have

dimmed this quest for purity, at least as manifested in some recent statistical

criticisms.

A second corollary is that any but a nominal movement towards applications will have

profound implications for graduate education. Students need a thorough grounding in

theory, but they need a perhaps equally thorough supervised exposure to the world of

applications, and this latter exposure will compete with time now devoted to theory.

Everyone will have his own choice of dispensable subjects, but the major problem is

how to provide the applications. All too often we have behaved like a hypothetical medical

school which turned out physicians with no internship or residency training, and in some

cases with no training beyond the pre-clinical basic science courses. The better students

would no doubt overcome this deficiency in time, but the patients could scarcely be

expected to welcome their initial ministrations. A formal statistical internship with

a commitment of time comparable to that of the medical intern is a desirable step in

the direction of applications. But we need the equivalent of the teaching hospital as

a source of problems as well, and in most cases this will require arrangements with

government and/or industry. The ASA, I am pleased to report, has taken some significant

steps in this direction; but a great deal more remains to be done, not only by ASA, but

by everyone interested.

11. THE APOLOGY

But I have wandered a bit from my main theme, the apology. I have no new ideas to add

to what I have already said and if my hypothetical critic, like some of my real ones,

is still unconvinced, he must remain so. As Justice Holmes said, "You cannot argue a

man into liking a glass of beer."

But I do want to amplify slightly my earlier expression of pleasure at having spent

so little time in finding out that I was destined to be a statistician and not any of

the other things that my friends and relatives thought I might be. I came to Washington

during the Great Depression, taking a job in the Bureau of Labor Statistics, at the

princely salary of $1,368 per year, not per month. It didn't take long, however, to become

interested in a variety of statistical problems embedded in the social and economic

problems of the time. The Department of Agriculture was then publishing what it called

an adequate diet at minimum cost, but I doubted, in the arrogance of youth, that anyone

involved knew how to find a minimum. It soon turned out that neither did I. The

minimization of a linear function subject to a set of linear constraints was not covered

in my calculus text and was beyond my powers to develop although it is now made clear

in texts for freshmen. But there were plenty of other problems to keep one occupied,

some within my powers. Nobody knew how many unemployed there were, and sampling seemed

the way to find out. Learning, developing and applying sampling theory was my first really

exciting post-school intellectual experience. Statistics had me hooked, and the monkey

has been on my back ever since.

In later years, it came as a pleasant surprise to find out that other people were

watching appreciatively. It would have been less than human not to take pleasure in the

consultantships, memberships in review and advisory committees and, most of all, in the

very great honor you have done me by electing me President of the American Statistical

Association. But, of course, these are simply the visible symbols.

Whitehead [25, p. 225], at one point, speaks of

"... the human mind in action, with its ferment of vague obviousness, of hypothetical formulation,

of renewed insight, of discovery of relevant detail, of partial understanding, of final conclusion,

with its disclosure of deeper problems as yet unsolved."

I can perhaps avoid presumption and still suggest that the portrait I have tried to

sketch is not wholly unlike Whitehead's image of a mind in action, and that the

statistician's apology and justification is neither more nor less than that the practice

of his profession requires exactly that.

REFERENCES

[1] Babbage, C., "Passages from the Life of a Philosopher," reprinted in part in Morrison, P.

and Morrison, E., eds., Charles Babbage and His Calculating Engines, New York: Dover

Publications, Inc., 1961.

[2] Bernard, C., An Introduction to the Study of Experimental Medicine, New York: The MacMillan

Co., 1927.

[3] Born, M., Natural Philosophy of Cause and Chance, Oxford: Clarendon Press, 1949.

[4] Brazier, M.A.B., "Analysis of Brain Waves," Scientific American, 206 (June 1962), 142-53.

[5] Bross, I.D.J., "Statistical Criticism," Cancer, 13, No. 2 (March-April 1960), 394-400.

[6] Cornfield, J., "A Bayesian Test of Some Classical Hypotheses-With Applications to Sequential

Clinical Trials," Journal of the American Statistical Association, 61 (September 1966), 577-94.

[7] Cornfield, J., Steinfeld, J. and Greenhouse, S., "Models for the Interpretation of Experiments Using Tracer

Compounds," Biometrics, 16, No. 2 (June 1960), 212-34.

[8] Cornfield, J., Dunn, R.A., Batchlor, C.D. and Pipberger, H.V., "Multigroup Diagnosis of

Electrocardiograms," Computers and Biomedical Research, 6, No. 1 (February 1973), 97-120.

[9] Cornfield, J., "Principles of Research," American Journal of Mental Deficiency, 64, No. 2 (September 1959),

240-52.

[10] Cornfield, J., "Statistical Classification Methods," in Jacquez, J.A., ed., Computer Diagnosis and

Diagnostic Methods, Springfield, Ill.: Charles C Thomas, 1972, 355-73.

[11] Folk, J.E., et al., "The Kinetics of Carboxypeptidase B Activity, III. Effect of Alcohol

on the Peptidase and Esterase Activities: Kinetic Models," Journal of Biological Chemistry,

237, No. 10 (October 1962), 3105-9.

[12] Gaddum, J.H., Pharmacology, London: Oxford University Press, 1953.

[13] Galton, F., Inquiries into Human Faculty and Its Development, London: MacMillan and Co.,

Ltd., 1883.

[14] Gamow, G., One, Two, Three ... Infinity, New York: New American Library, 1953.

[15] Gullino, P., et al., "Studies in the Metabolism of Amino Acids, Individually and in Mixtures,

and the Protective Effect of L-Arginine," Archives of Biochemistry and Biophysics, 64, No.

2 (October 1956), 319-32.

[16] Haisty, W.K., Jr., et al., "Discriminant Function Analysis of RR Intervals: An Algorithm

for On-Line Arrhythmia Diagnosis," Computers and Biomedical Research, 5, No. 3 (June 1972),

247-55.

[17] Hardy, G.H., A Mathematician's Apology, London: Cambridge University Press, 1940.

[18] Panel on Carcinogenesis of the Advisory Committee on Protocols for Safety Evaluation of the

Food and Drug Administration, Toxicology and Applied Pharmacology, 20, 1971, 419-38.

[19] Pipberger, H.V. and Cornfield, J., "What ECG Computer Program to Choose for Clinical

Application," Circulation, 47 (May 1973), 918-20.

[20] Scow, R.O. and Cornfield, J., "Quantitative Relations Between the Oral and Intravenous

Glucose Tolerance Curves," American Journal of Physiology, 179, No. 3 (December 1954), 435-8.

[21] Shimkin, M., "Upon Man and Beast-Adventures in Cancer Epidemiology; Presidential Address,"

Cancer Research, 34, No. 7 (July 1974), 1525-35.

[22] Thistle, J.L. and Hofmann, A.F., "Efficacy and Specificity of Chenodeoxycholic Acid Therapy

for Dissolving Gallstones," New England Journal of Medicine, 289, No. 13 (September 27, 1973),

655-60.

[23] Von Neumann, J., "The Mathematician," reprinted in J.R. Newman, ed., The World of Mathematics,

Vol. 4, New York: Simon and Schuster, 1956, 2053-63.

[24] Watson, J.D., The Double Helix, New York: Atheneum, 1968.

[25] Whitehead, A.N., "Harvard, the Future," Science and Philosophy, New York: The Wisdom Library,

1948.

Journal of the American Statistical Association March 1975, Volume 70, Number 349, pp. 7-14, Presidential

Address.
