Significance of Attributes and Similarity Based Rough Sets


Rough Sets Similarity Based Learning

Jaroslaw Stepaniuk

Institute of Computer Science

Bialystok University of Technology

Wiejska 45A, 15-351 Bialystok, Poland

email: jstepan@ii.pb.bialystok.pl

ABSTRACT: The first part of this paper presents the basic rough set model methodology and thus serves as an introduction to the other parts. In the second part we discuss the similarity based rough set model. We define similarity relations and the significance of attributes in this model. In the third part we present some applications of the introduced notions in similarity based learning.

1 ROUGH SETS

Rough sets (Pawlak 1991) have been introduced as a tool to deal with inexact, uncertain or vague knowledge in

artificial intelligence applications. In this section we recall some basic notions related to information systems and rough

sets.

An information system is a pair $\mathcal{A} = (U, A)$, where $U$ is a non-empty, finite set called the universe and $A$ is a non-empty, finite set of attributes, i.e. $a: U \to V_a$ for $a \in A$, where $V_a$ is called the value set of $a$. Elements of $U$ are called objects and are interpreted as, for example, cases, states, processes, patients or observations. Attributes are interpreted as features, variables, characteristic conditions, etc.

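Every information system $\mathcal{A} = (U, A)$ and non-empty set $B \subseteq A$ determine a $B$-information function

$$\mathrm{Inf}_B : U \to P\Big(B \times \bigcup_{a \in B} V_a\Big) \quad \text{defined by} \quad \mathrm{Inf}_B(x) = \{(a, a(x)) : a \in B\}.$$

We define the $B$-indiscernibility relation as follows: $x\,\mathrm{IND}(B)\,y$ iff $\mathrm{Inf}_B(x) = \mathrm{Inf}_B(y)$.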
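
The two definitions above can be illustrated with a minimal Python sketch. The toy table `TABLE`, its attribute names and the helper names `inf` and `ind_classes` are assumptions made for illustration only; they do not come from the paper.

```python
from collections import defaultdict

# Hypothetical toy information system: object -> {attribute: value}.
TABLE = {
    "x1": {"a": 1, "b": 0},
    "x2": {"a": 1, "b": 0},
    "x3": {"a": 0, "b": 1},
    "x4": {"a": 1, "b": 1},
}

def inf(table, x, B):
    """B-information function: the set of (attribute, value) pairs of x."""
    return frozenset((a, table[x][a]) for a in B)

def ind_classes(table, B):
    """Group objects with equal B-information into indiscernibility classes."""
    classes = defaultdict(set)
    for x in table:
        classes[inf(table, x, B)].add(x)
    return list(classes.values())

# Objects x1 and x2 agree on both attributes, so they fall into one class.
print(sorted(sorted(c) for c in ind_classes(TABLE, ["a", "b"])))
# [['x1', 'x2'], ['x3'], ['x4']]
```

Dropping attribute `b` from the attribute list merges `x4` into the class of `x1` and `x2`, which shows how a smaller attribute set gives a coarser partition.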

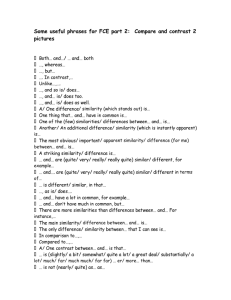

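For every subset $X \subseteq U$ we define the lower approximation $\underline{\mathrm{IND}(B)}X$ and the upper approximation $\overline{\mathrm{IND}(B)}X$ as follows:

$$\underline{\mathrm{IND}(B)}X = \{x \in U : [x]_B \subseteq X\},$$

$$\overline{\mathrm{IND}(B)}X = \{x \in U : [x]_B \cap X \neq \emptyset\},$$

where $[x]_B$ denotes the $B$-indiscernibility class of $x$.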

Figure 1: The lower and the upper approximations of a set X in the basic rough set model

Figure 1 illustrates the approximations: the set $U$ of all objects is represented as the outer rectangle and the $B$-indiscernibility classes are represented as small rectangles.
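
As a rough illustration of the lower and upper approximations in the basic model, the following Python sketch computes both for a small table. The data and the function names are hypothetical and serve only as an example.

```python
# Hypothetical toy information system: object -> {attribute: value}.
TABLE = {
    "x1": {"a": 1, "b": 0},
    "x2": {"a": 1, "b": 0},
    "x3": {"a": 0, "b": 1},
    "x4": {"a": 1, "b": 1},
}

def ind_class(table, x, B):
    """Indiscernibility class of x: objects equal to x on every attribute in B."""
    return {y for y in table if all(table[y][a] == table[x][a] for a in B)}

def lower_approximation(table, B, X):
    """Objects whose whole indiscernibility class is contained in X."""
    return {x for x in table if ind_class(table, x, B) <= X}

def upper_approximation(table, B, X):
    """Objects whose indiscernibility class has at least one element in X."""
    return {x for x in table if ind_class(table, x, B) & X}

X = {"x1", "x3"}
print(sorted(lower_approximation(TABLE, ["a", "b"], X)))  # ['x3']
print(sorted(upper_approximation(TABLE, ["a", "b"], X)))  # ['x1', 'x2', 'x3']
```

Object `x1` belongs to `X` but is indiscernible from `x2`, which does not, so `x1` appears only in the upper approximation.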

We consider a special case of information systems called decision tables. A decision table (Pawlak 1991) is any information system of the form $\mathcal{A} = (U, A \cup \{d\})$, where $d \notin A$ is a distinguished attribute called the decision. The elements of $A$ are called conditions. One can interpret a decision attribute as a kind of classification of the universe of objects given by an expert, decision-maker, operator, physician, etc. The cardinality of the image $d(U) = \{k : d(x) = k \text{ for some } x \in U\}$ is called the rank of $d$ and is denoted by $r(d)$. We assume that the set $V_d$ of values of the decision $d$ is equal to $\{1, \ldots, r(d)\}$. Let us observe that the decision $d$ determines the partition $\mathrm{CLASS}_{\mathcal{A}}(d) = \{X_1, \ldots, X_{r(d)}\}$ of the universe $U$, where $X_k = \{x \in U : d(x) = k\}$ for $1 \le k \le r(d)$. $\mathrm{CLASS}_{\mathcal{A}}(d)$ will be called the classification of objects in $\mathcal{A}$ determined by the decision $d$. The set $X_k$ is called the $k$-th decision class of $\mathcal{A}$. The set $\mathrm{POS}(B, \{d\})$ is called the positive region of the classification $\mathrm{CLASS}_{\mathcal{A}}(d)$ and is equal to the union of all lower approximations of decision classes, that is, $\mathrm{POS}(B, \{d\}) = \bigcup_{k=1}^{r(d)} \underline{\mathrm{IND}(B)}X_k$. An example of a positive region is presented in Figure 2 (the set $U$ of all objects is represented as the outer rectangle, indiscernibility classes are represented as small rectangles, and there are three decision classes).
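
The positive region can be sketched in Python as the union of the lower approximations of all decision classes. The decision table below and all identifiers are hypothetical and chosen only to keep the example small.

```python
# Hypothetical decision table: conditions "a", "b" and decision "d".
TABLE = {
    "x1": {"a": 1, "b": 0, "d": 1},
    "x2": {"a": 1, "b": 0, "d": 2},
    "x3": {"a": 0, "b": 1, "d": 2},
    "x4": {"a": 1, "b": 1, "d": 1},
}

def ind_class(table, x, B):
    """Indiscernibility class of x with respect to the attributes in B."""
    return {y for y in table if all(table[y][a] == table[x][a] for a in B)}

def positive_region(table, B, d):
    """Union of the lower approximations of all decision classes of d."""
    pos = set()
    for k in {row[d] for row in table.values()}:
        X_k = {x for x in table if table[x][d] == k}  # k-th decision class
        pos |= {x for x in table if ind_class(table, x, B) <= X_k}
    return pos

print(sorted(positive_region(TABLE, ["a", "b"], "d")))  # ['x3', 'x4']
```

Objects `x1` and `x2` share the same condition values but carry different decisions, so neither of them enters the positive region.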

Different attributes may play different roles in determining the dependency between the condition and decision attributes. The basic idea behind attribute weights is that the more information an attribute provides about the decision attribute, the more weight it receives. Rough set theory provides the background for calculating attribute weights and supplies a variety of tools that measure, as a form of significance, the amount of information each attribute gives about the other attributes. Let $B \subseteq A$. The relative significance of an attribute $a \in B$ can be defined in many ways. Here we present two natural coefficients:

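$$\mathrm{SRC}(B, d, a) = \frac{\mathrm{card}\,\mathrm{POS}(B, \{d\}) - \mathrm{card}\,\mathrm{POS}(B \setminus \{a\}, \{d\})}{\mathrm{card}\,U}$$

and

$$\mathrm{SGF}(B, d, a) = \frac{\mathrm{card}\,\mathrm{POS}(B, \{d\}) - \mathrm{card}\,\mathrm{POS}(B \setminus \{a\}, \{d\})}{\mathrm{card}\,\mathrm{POS}(B, \{d\})}.$$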

In both cases we assume that the significance of an attribute reflects the degree to which the positive region shrinks when attribute $a$ is removed from $B$. In practice, the stronger the influence of attribute $a$ on the relationship between $B$ and $d$, the higher the value of both coefficients. Let us observe that the following inequalities also hold:

$$0 \le \mathrm{SRC}(B, d, a) \le \mathrm{SGF}(B, d, a) \le 1.$$
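
A minimal sketch of the two coefficients follows, taking SRC as the decrease of the positive region relative to card U and SGF as the decrease relative to card POS(B,{d}). The table and function names are hypothetical; exact fractions are used so the values can be read off directly, and SGF is assumed to be evaluated only when the positive region of B is non-empty.

```python
from fractions import Fraction

# Hypothetical decision table: conditions "a", "b" and decision "d".
TABLE = {
    "x1": {"a": 0, "b": 0, "d": 1},
    "x2": {"a": 0, "b": 1, "d": 1},
    "x3": {"a": 1, "b": 0, "d": 2},
    "x4": {"a": 1, "b": 1, "d": 2},
    "x5": {"a": 1, "b": 1, "d": 1},
}

def positive_region(table, B, d):
    """Objects whose B-indiscernibility class carries a single decision value."""
    pos = set()
    for x in table:
        cls = [y for y in table if all(table[y][a] == table[x][a] for a in B)]
        if all(table[y][d] == table[x][d] for y in cls):
            pos.add(x)
    return pos

def src(table, B, d, a):
    """Decrease of the positive region after removing a, relative to card U."""
    drop = len(positive_region(table, B, d)) - len(positive_region(table, B - {a}, d))
    return Fraction(drop, len(table))

def sgf(table, B, d, a):
    """The same decrease, relative to card POS(B, {d}) (assumed non-zero)."""
    pos = len(positive_region(table, B, d))
    return Fraction(pos - len(positive_region(table, B - {a}, d)), pos)

B = {"a", "b"}
print(src(TABLE, B, "d", "a"), sgf(TABLE, B, "d", "a"))  # 3/5 1
print(src(TABLE, B, "d", "b"), sgf(TABLE, B, "d", "b"))  # 1/5 1/3
```

In this toy table removing `a` empties the positive region, while removing `b` still leaves two objects in it, so `a` receives the higher significance under both coefficients.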

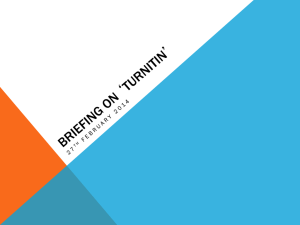

Figure 2: Positive region of partition in the basic rough set model

Figure 3: General scheme for similarity based rough set data mining. Flowchart steps: Problem Analysis; Attribute Determination and Decision Table Construction; Basic Rough Set Analysis; decision "Results of analysis are good enough?" (Yes: Stop; No: Definition of Similarity Measures for Attributes, followed by Similarity Based Rough Set Analysis).

2 SIMILARITY BASED ROUGH SET MODEL

It has been observed that considering a similarity relation instead of an indiscernibility relation is quite relevant. The main argument for using a similarity relation instead of the indiscernibility relation is connected with the existence of quantitative attributes in the decision table. Very often these attributes carry uncertain information because of inadequate definition, imprecise measurement or random fluctuation of some parameters. On the other hand, in order to create a generalized description of the decision table and to discover some regularities in the data, the user may wish to translate numerical values of attributes into qualitative terms. Therefore, when using the indiscernibility relation, the quantitative attributes should be discretized using some norms translating the attribute domains into sub-intervals corresponding to qualifiers: very low, low, medium, high, very high, etc. In medicine, for example, the use of norms is quite frequent and there are many conventions establishing them. In those applications, however, where the definition of norms is arbitrary, it is more natural to define a relative similarity with respect to a given value of the attribute. Moreover, the use of norms introduces an undesirable effect: very close objects may be separated into two consecutive sub-intervals. The similarity based extension of rough set theory should be applied when the results obtained by standard rough set methods are not satisfactory. Figure 3 provides a summary of rough set based methods of data mining (standard model and similarity based model).

In the next two subsections we describe constructions of a similarity relation and basic properties of the similarity based rough set model.

2.1 CONSTRUCTIONS OF SIMILARITY RELATION

The construction of a similarity relation can start from setting relations between attribute values for each attribute. We propose to use as a base a similarity measure that can be adapted to different types of attributes.

Let $\mathcal{A} = (U, A \cup \{d\})$ be a decision table and let $r(d)$ be the number of decision values. We can define similarity measures between two values of a given attribute $a \in A$. For example, for an attribute $a \in A$ with numeric values one can define the similarity measure

$$s_a(v_i, v_j) = 1 - \frac{|v_i - v_j|}{a_{\max} - a_{\min}},$$

where $a_{\min}$ and $a_{\max}$ denote the minimum and maximum values of attribute $a$, respectively.

For more examples of similarity measures see (Stepaniuk 1996).

We assume that value $v_i$ is similar to $v_j$ when $s_a(v_i, v_j) \ge t(a)$, where $t(a) \in [0,1]$ is a similarity threshold for values of attribute $a$.
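
The numeric similarity measure and the threshold test can be sketched as follows. The function names are hypothetical, and returning 1.0 for a constant attribute (a_max equal to a_min) is an assumption of this sketch, since that case is not covered by the formula.

```python
def numeric_similarity(v_i, v_j, a_min, a_max):
    """Similarity 1 - |v_i - v_j| / (a_max - a_min) between two numeric values."""
    if a_max == a_min:
        # Assumption of this sketch: a constant attribute makes all values alike.
        return 1.0
    return 1.0 - abs(v_i - v_j) / (a_max - a_min)

def similar_values(v_i, v_j, a_min, a_max, t_a):
    """True when the similarity of the two values reaches the threshold t(a)."""
    return numeric_similarity(v_i, v_j, a_min, a_max) >= t_a

print(numeric_similarity(2, 4, 0, 8))   # 0.75
print(similar_values(2, 4, 0, 8, 0.7))  # True
print(similar_values(0, 8, 0, 8, 0.5))  # False
```

Unlike discretization into fixed sub-intervals, the test depends only on the distance between the two values, so two close values are never separated by an arbitrary interval boundary.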

Next we describe the aggregation process leading to the definition of the similarity relation on the set of objects.

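Let $B \subseteq A$. To construct a global similarity relation (that is, a relation between objects) we use, for example, the following operators (Stepaniuk 1996):

$$x\,\mathrm{SIM}_B\,y \quad \text{iff} \quad \sum_{a \in B} s_a(a(x), a(y)) \ge t,$$

$$x\,\mathrm{SIM}_B\,y \quad \text{iff} \quad \prod_{a \in B} s_a(a(x), a(y)) \ge t,$$

where $t \in [0,1]$ is a similarity threshold for objects.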

2.2 ROUGH SETS AND SIMILARITY RELATIONS

In this section we present basic notions of the rough set concept based on similarity relations (Skowron and Stepaniuk 1995). The standard rough set model can be generalized by considering any type of binary relation on attribute values instead of the trivial equality relation (Skowron and Stepaniuk 1995, Slowinski and Vanderpooten 1995). We propose a similarity relation on attribute values in an information system (Skowron and Stepaniuk 1995, Stepaniuk and Kretowski 1995).

Let $\mathcal{A} = (U, A \cup \{d\})$ be a decision table, let $V_a$ be the set of values of attribute $a \in A$ and let $r(d)$ be the number of decision values.

A similarity based decision table is defined by $(\mathcal{A}, \mathrm{SIM}_A)$, where $\mathrm{SIM}_A$ is a similarity relation on the set of objects. We define $\mathrm{SIM}_A(x) = \{y \in U : y\,\mathrm{SIM}_A\,x\}$; the set $\mathrm{SIM}_A(x)$ contains all objects similar to $x$.

The set approximations (Skowron and Stepaniuk 1995, Slowinski and Vanderpooten 1996) are defined below.

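The lower approximation of $X \subseteq U$ by $\mathrm{SIM}_A$ is defined as follows:

$$\underline{\mathrm{SIM}_A}X = \{x \in X : \mathrm{SIM}_A(x) \subseteq X\}.$$

The upper approximation of $X \subseteq U$ by $\mathrm{SIM}_A$ is defined as follows:

$$\overline{\mathrm{SIM}_A}X = \bigcup_{x \in X} \mathrm{SIM}_A(x).$$

The set $\underline{\mathrm{SIM}_A}X$ is the set of all elements of $U$ which can be classified with certainty as elements of $X$ with respect to $\mathrm{SIM}_A$. The set $\overline{\mathrm{SIM}_A}X$ is the set of elements of $U$ which can possibly be classified as elements of $X$, employing the knowledge included in $\mathrm{SIM}_A$.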
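
The similarity based approximations can be sketched for any similarity predicate on objects. In the hypothetical example below each object has a single numeric attribute with range 0..10, and two objects are taken to be similar when the numeric similarity measure is at least 0.75; the data, the threshold and the function names are illustrative assumptions.

```python
# Hypothetical universe: object -> value of one numeric attribute (range 0..10).
VALUES = {"x1": 0, "x2": 2, "x3": 4, "x4": 10}
A_MIN, A_MAX, THRESHOLD = 0, 10, 0.75

def sim(x, y):
    """x and y are similar when 1 - |difference| / range reaches the threshold."""
    return 1 - abs(VALUES[x] - VALUES[y]) / (A_MAX - A_MIN) >= THRESHOLD

def sim_class(x):
    """SIM(x): all objects of the universe similar to x."""
    return {y for y in VALUES if sim(y, x)}

def lower_approximation(X):
    """Elements of X whose whole similarity class lies inside X."""
    return {x for x in X if sim_class(x) <= X}

def upper_approximation(X):
    """Union of the similarity classes of the elements of X."""
    return set().union(*(sim_class(x) for x in X))

X = {"x1", "x2"}
print(sorted(lower_approximation(X)))  # ['x1']
print(sorted(upper_approximation(X)))  # ['x1', 'x2', 'x3']
```

The relation is not transitive here: `x1` is similar to `x2` and `x2` to `x3`, but `x1` is not similar to `x3`. Similarity classes therefore overlap instead of forming a partition, which is the main difference from the indiscernibility based model.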

Let $X_i = \{x \in U : d(x) = i\}$. The set

$$\mathrm{POS}(\mathrm{SIM}_A, \{d\}) = \bigcup_{i=1}^{r(d)} \underline{\mathrm{SIM}_A}X_i$$

is called the $\mathrm{SIM}_A$-positive region of the partition $\{X_i : i = 1, \ldots, r(d)\}$. The positive region, as the union of the lower approximations of decision classes, includes only those objects which belong to the corresponding decision classes without any ambiguity.

Now we introduce the notion of a relative reduct in the similarity based rough set model.

A subset $R \subseteq A$ is a relative reduct for $(\mathrm{SIM}_A, d)$ iff

1) $\mathrm{POS}(\mathrm{SIM}_A, \{d\}) = \mathrm{POS}(\mathrm{SIM}_R, \{d\})$,

2) for every proper subset $R' \subset R$ condition 1) is not true.

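Let $B \subseteq A$. The relative significance of an attribute $a \in B$ can be defined as follows:

$$\mathrm{SRC}(\mathrm{SIM}_B, d, a) = \frac{\mathrm{card}\,\mathrm{POS}(\mathrm{SIM}_B, \{d\}) - \mathrm{card}\,\mathrm{POS}(\mathrm{SIM}_{B \setminus \{a\}}, \{d\})}{\mathrm{card}\,U}$$

and

$$\mathrm{SGF}(\mathrm{SIM}_B, d, a) = \frac{\mathrm{card}\,\mathrm{POS}(\mathrm{SIM}_B, \{d\}) - \mathrm{card}\,\mathrm{POS}(\mathrm{SIM}_{B \setminus \{a\}}, \{d\})}{\mathrm{card}\,\mathrm{POS}(\mathrm{SIM}_B, \{d\})}.$$

Thus in both cases we assume that the significance of an attribute reflects the degree to which the positive region shrinks when attribute $a$ is removed from $B$. Let us observe that if $R \subseteq A$ is a relative reduct, then for every $a \in R$ we obtain

$$\mathrm{SRC}(\mathrm{SIM}_R, d, a) > 0, \quad \mathrm{SGF}(\mathrm{SIM}_R, d, a) > 0.$$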
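
The following sketch computes the similarity based positive region and the two significance coefficients on a hypothetical decision table. It assumes, purely for illustration, that two objects are similar with respect to B when every attribute in B has value similarity of at least 0.75; this aggregation rule, the data and all names are assumptions of the sketch and not necessarily the operators used in the paper.

```python
from fractions import Fraction

# Hypothetical decision table: numeric conditions "a", "b" (range 0..10), decision "d".
TABLE = {
    "x1": {"a": 0, "b": 0, "d": 1},
    "x2": {"a": 1, "b": 9, "d": 2},
    "x3": {"a": 9, "b": 1, "d": 1},
    "x4": {"a": 10, "b": 10, "d": 2},
    "x5": {"a": 5, "b": 5, "d": 1},
}
RANGE = {"a": (0, 10), "b": (0, 10)}
T = 0.75  # assumed threshold, applied to every attribute

def similar(x, y, B):
    """Assumed aggregation: x and y are similar on every attribute in B."""
    return all(
        1 - abs(TABLE[x][a] - TABLE[y][a]) / (RANGE[a][1] - RANGE[a][0]) >= T
        for a in B
    )

def positive_region(B):
    """Objects whose similarity class carries only their own decision value."""
    return {
        x for x in TABLE
        if all(TABLE[y]["d"] == TABLE[x]["d"] for y in TABLE if similar(y, x, B))
    }

def src(B, a):
    """Decrease of the positive region after removing a, relative to card U."""
    return Fraction(len(positive_region(B)) - len(positive_region(B - {a})), len(TABLE))

def sgf(B, a):
    """The same decrease, relative to card POS(SIM_B, {d}) (assumed non-zero)."""
    pos = len(positive_region(B))
    return Fraction(pos - len(positive_region(B - {a})), pos)

B = {"a", "b"}
print(src(B, "a"), src(B, "b"))  # 0 4/5
```

In this toy table attribute `b` alone preserves the whole positive region, so `a` has significance 0 and can be dropped, while removing `b` leaves only one object in the positive region.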

3 SIMILARITY BASED LEARNING

Many data mining algorithms are based on inductive learning methods. Far fewer are based on similarity-based learning. However, similarity-based learning offers advantages such as simple representations of decision class descriptions, low incremental learning costs and small storage requirements.

Similarity based learning algorithms consist at least of the following main components:

Similarity function: given two normalized objects, this yields their numeric-valued similarity.

Classification function: given an object xnew to be classified and its similarity with each saved object, this yields a

classification for xnew.

In our approach similarity based learning is performed in three steps.

1. Compute some reduct (with minimal number of attributes).

2. Calculate the weights of attributes (using significance of attributes).

3. Calculate the similarity function of the new object with each object in the reduced decision table and classify the

object to the corresponding decision class.

Similarity between objects can be defined in many ways (Stepaniuk 1996). Let $x$ and $y$ be objects described by attribute set $A$ and let $R \subseteq A$ be some reduct. We consider, for example, the following similarity functions:

$$\mathrm{sim}(x, y) = \min_{a \in R} w_a \cdot s_a(a(x), a(y)),$$

$$\mathrm{sim}(x, y) = \sum_{a \in R} w_a \cdot s_a(a(x), a(y)),$$

$$\mathrm{sim}(x, y) = \prod_{a \in R} w_a \cdot s_a(a(x), a(y)),$$

where $0 \le w_a \le 1$ is a weight assigned to attribute $a \in R$. We use the significance of attribute $a$ as $w_a$ (see Section 2).

For a new object $x_{new}$, the value of the decision attribute $d$ is taken to be $d(x)$ for the object $x \in U$ most similar to $x_{new}$.

In other words, we classify $d(x_{new}) = d(x)$, where $\mathrm{sim}(x_{new}, x) = \max\{\mathrm{sim}(x_{new}, y) : y \in U\}$.
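
The classification rule can be sketched with the minimum based similarity function. The training objects, the attribute weights and all identifiers below are hypothetical; in particular the weights are fixed by hand here rather than computed as attribute significance, and the value ranges are taken from the training data.

```python
# Hypothetical reduced decision table: (condition values, decision).
TRAIN = [
    ({"a": 1, "b": 10}, 1),
    ({"a": 2, "b": 12}, 1),
    ({"a": 8, "b": 30}, 2),
    ({"a": 9, "b": 28}, 2),
]
# Hypothetical weights standing in for the significance of each attribute.
WEIGHTS = {"a": 0.6, "b": 1.0}

def value_similarity(v, w, lo, hi):
    """Numeric similarity 1 - |v - w| / (hi - lo); 1.0 if the range is empty."""
    return 1.0 if hi == lo else 1.0 - abs(v - w) / (hi - lo)

def sim(x, y, weights, ranges):
    """Minimum over the attributes of weight times value similarity."""
    return min(weights[a] * value_similarity(x[a], y[a], *ranges[a]) for a in weights)

def classify(x_new, train, weights):
    """Return the decision of the stored object most similar to x_new."""
    ranges = {
        a: (min(obj[a] for obj, _ in train), max(obj[a] for obj, _ in train))
        for a in weights
    }
    _, decision = max(train, key=lambda pair: sim(x_new, pair[0], weights, ranges))
    return decision

print(classify({"a": 7, "b": 27}, TRAIN, WEIGHTS))  # 2
print(classify({"a": 1, "b": 11}, TRAIN, WEIGHTS))  # 1
```

Only the stored objects and the weights are needed at classification time, which reflects the low storage and incremental learning costs mentioned at the start of this section.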

CONCLUSIONS

This paper has focused on the standard rough set model and the similarity based rough set model. We discussed properties of basic notions in both models. We also presented an application of the introduced notions in similarity based learning.

REFERENCES

Hu X., Cercone N. 1995. Rough Sets Similarity-Based Learning from Databases, Proceedings of the First International

Conference on Knowledge Discovery and Data Mining, Montreal, Canada, August 20-21 1995, pp. 162-167.

Krawiec K., Slowinski R., Vanderpooten D. 1996. Construction of Rough Classifiers Based on Application of a

Similarity Relation, Proceedings of the Fourth International Workshop on Rough Sets, Fuzzy Sets, and Machine

Discovery, November 6-8, 1996, Tokyo, Japan, pp. 23-30.

Kretowski M., Stepaniuk J. 1996. Selection of Objects and Attributes, a Tolerance Rough Set Approach, Proceedings of

the Poster Session of Ninth International Symposium on Methodologies for Intelligent Systems, June 10-13, 1996,

Zakopane, Poland, pp. 169-180.

Pawlak Z. 1991. Rough Sets. Theoretical Aspects of Reasoning about Data, Kluwer Academic Publishers, 1991.

Skowron A., Stepaniuk J. 1995. Generalized Approximation Spaces, In: Soft Computing, T.Y.Lin, A.M.Wildberger

(eds.), San Diego Simulation Councils, Inc., 1995, pp. 18-21.

Skowron A., Stepaniuk J. 1996. Tolerance Approximation Spaces, Fundamenta Informaticae, 27 (1996) pp. 245-253.

Slowinski R., Vanderpooten D. 1995. Similarity Relation as a Basis for Rough Approximations, Proceedings of the Second Annual Joint Conference on Information Sciences, Wrightsville Beach, N. Carolina, USA, September 28 - October

1, 1995, pp. 249-250, also ICS Research Report 53, 1995.

Slowinski R., Vanderpooten D. 1996. A Generalized Definition of Rough Approximations, ICS Research Report 4,

1996.

Stepaniuk J., Kretowski M. 1995. Decision System Based on Tolerance Rough Sets, Proceedings of the Fourth

International Workshop on Intelligent Information Systems, Augustow, Poland, June 5-9, 1995, pp. 62-73.

Stepaniuk J. 1996. Similarity Based Rough Sets and Learning, Proceedings of the Fourth International Workshop on

Rough Sets, Fuzzy Sets, and Machine Discovery, November 6-8, 1996, Tokyo, Japan, pp. 18-22.