YJB Practice Classification Framework

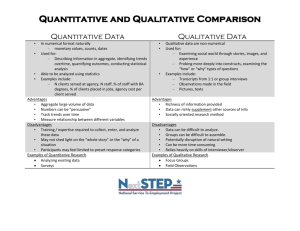

Youth Justice Board

YJB Practice Classification System

Ben Archer, Youth Justice Board

YJB Practice Classification Framework v2.8

Contents

- Introduction
- Effective Practice Classification Panel
- The Practice Classification Framework
- YJB Categories
- Quantitative methods
- Qualitative methods
- Background to development
- Factors to consider when using practice classifications
- Glossary
- References
- Appendix A

© Youth Justice Board for England and Wales 2013

The material featured in this document is subject to copyright protection under UK Copyright Law unless otherwise indicated. Any person or organisation wishing to use YJB materials or products for commercial purposes must apply in writing to the YJB at ipr@yjb.gov.uk for a specific licence to be granted.

Introduction

The YJB’s Practice Classification System is designed to give the youth justice sector more information about the effectiveness of programmes and practices in use not only across the youth justice system in England and Wales, but also across the broader range of children’s services (and, where applicable, internationally).

The system is made up of the following two components:

- The Effective Practice Classification Panel
- The Practice Classification Framework

This document describes how the system operates and the information it provides for use by youth justice practitioners and commissioners.

Effective Practice Classification Panel

The Effective Practice Classification Panel comprises independent academics and members of the YJB Effective Practice and Research teams. The role of the panel is to classify practice examples in accordance with the Practice Classification Framework, following a thorough consideration of the evidence in support of them.
Drawing on its expertise and knowledge of evaluation methods, the panel classifies examples that appear to fall close to the threshold for the ‘promising evidence’ category (see the categories set out in the next section) and recommends any that appear close to the ‘research-proven’ threshold for consideration by the Social Research Unit [1]. The academic representation on the panel is decided through a process of open procurement, and academic representatives serve on the panel for a period of one year.

The YJB Effective Practice Team will classify those examples of practice that are clearly ‘emerging’ examples (i.e. those not suitable for evaluation or where there is no evaluation information available).

[1] The YJB has an existing partnership with the Social Research Unit (Dartington), whose own standards of evidence we use to govern classification in the ‘research-proven’ category.

The Practice Classification Framework

To inform the judgements of the Effective Practice Classification Panel, we developed the Practice Classification Framework to assist with categorising practice examples according to the quality of evaluation evidence in support of their effectiveness. A classification is given to every example of practice in the YJB’s Effective Practice Library.

YJB Categories

Examples of practice are placed into one of the following five categories. The gatekeeper for each category is listed alongside it.

| Grouping | Category | Description | Classification route |
| --- | --- | --- | --- |
| Proof of effectiveness | Research-proven | These practices and programmes have been proven, through the highest standards of research and evaluation, to be effective at achieving the intended results for the youth justice system. | Social Research Unit |
| Proof of effectiveness | Promising evidence | These practices and programmes show promising evidence of their ability to achieve the intended results, but do not meet the highest standards of evidence required to be categorised as ‘research-proven’. | Effective Practice Classification Panel |
| Proof of effectiveness | Emerging evidence | These practices and programmes have limited or no evaluation information available, but are nevertheless regarded as examples of successful innovation or robust design by the sector or other stakeholders. | YJB Effective Practice Team |
| Proof of ineffectiveness | Treat with caution | These practices and programmes show some evidence of ineffectiveness but have been evaluated to a lesser extent than those in the ineffective category. In some cases, they may contain features of known ineffective methods (see below), or contravene core legal or moral principles and values that the YJB considers fundamental to maintaining an ethical and legal youth justice system. | YJB Effective Practice Governance Group |
| Proof of ineffectiveness | Ineffective | These practices and programmes have been found, on the basis of rigorous evaluation evidence, to have no effect with regard to their intended outcomes, or to be harmful to young people. | Social Research Unit |

The categories are arranged above to show how each relates to evidence of either effectiveness or ineffectiveness.

The first three categories are what the YJB refers to as ‘effective practice’ [2]. The lower two categories are used to classify practice that we either believe to be ineffective or have concerns about for other reasons (see below for further details).

The thresholds between classification categories are deliberately loosely defined, to reflect the Effective Practice Classification Panel’s role in judging individual practice examples on a combination of their theoretical basis, the quality of the evaluation design, and the findings of the evaluation.

Ineffective or harmful practice

As well as providing information about effective practices and methods, this framework also aims to provide information about practices and programmes that, on the evidence available, we know not to be effective or have some concerns about.
In some cases, evaluation evidence will demonstrate that certain practices are not effective or are even harmful to young people [3]. In such cases we will classify the practice as ‘ineffective’, as per the definition above, and clearly state that the programme or practice should not be used. Given that a judgement such as this by the YJB carries considerable implications, we will only make it on the basis of the most rigorous evaluation evidence (i.e. that which would meet the criteria for the ‘research-proven’ category) and once the evidence has been considered by the Effective Practice Classification Panel.

There may also be cases where we have concerns about a certain practice or programme (for example, if it uses methods drawn from known ineffective models) but it has not yet been evaluated to the extent required to provide a greater level of confidence in its ineffectiveness. In these cases, we will classify the practice as ‘treat with caution’ until further evidence is available to support either its effectiveness or its ineffectiveness.

Examples of practice that the YJB believes contravene the core legal or moral principles and values fundamental to maintaining a legal and ethical youth justice system will also be placed in this category. The YJB’s Effective Practice Governance Group, which oversees the YJB’s Effective Practice Framework, identifies contraventions of such legal and ethical principles and assigns practices to this category on that basis.

The following two sections of the document outline the factors that the Effective Practice Classification Panel considers in relation to the quantitative and qualitative evaluation evidence supplied with practice examples.
[2] The YJB defines effective practice as ‘practice which produces the intended results’ (Chapman and Hough, 1998: chapter 1, para. 1.9).

[3] For example, repeated evaluations have shown that ‘Scared Straight’ programmes are not only ineffective but in fact likely to increase young people’s likelihood of re-offending (Petrosino et al., 2003).

Quantitative methods

The version of the Scientific Methods Scale (Farrington et al., 2002) shown in Figure 1, as adapted by the Home Office for reconviction studies, currently forms the basis for appraising the quality of evidence from impact evaluations in government social research in criminal justice. This scale is used by the YJB’s Effective Practice Classification Panel when considering the quality of quantitative evidence contained within evaluations of youth justice practice and programmes.

Quantitative research evidence [4]

| Level | Description |
| --- | --- |
| Level 1 | A relationship between the intervention and intended outcome. Compares the difference between outcome measure before and after the intervention (intervention group with no comparison group) |
| Level 2 | Expected outcome compared to actual outcome for intervention group (e.g. risk predictor with no comparison group) |
| Level 3 | Comparison group present without demonstrated comparability to intervention group (unmatched comparison group) |
| Level 4 | Comparison group matched to intervention group on theoretically relevant factors, e.g. risk of reconviction (well-matched comparison group) |
| Level 5 | Random assignment of offenders to the intervention and control conditions (Randomised Controlled Trial) |

Figure 1: The Scientific Methods Scale (adapted for reconviction studies)

[4] Home Office Research Study 291: ‘The impact of corrections on re-offending: a review of ‘what works’’ (Home Office, 2005) (http://webarchive.nationalarchives.gov.uk/20110218135832/rds.homeoffice.gov.uk/rds/pdfs04/hors291.pdf)

Qualitative methods

Whereas quantitative methods are designed to show the extent to which a practice or programme is effective (the ‘what’ or ‘how much’), qualitative methods are designed to find out how and why a particular intervention was successful (or not).

Qualitative research methods differ from quantitative approaches in many important respects, not the least of which is the latter’s emphasis on numbers. Quantitative research often involves capturing a shallow band of information from a wide range of people and objectively using correlations to understand the data. Qualitative research, on the other hand, generally involves many fewer people but delving more deeply into the individuals, settings, subcultures and scenes, hoping to generate a subjective understanding of the ‘how’ and ‘why’. Both research strategies offer possibilities for generalization, but about different things, and both approaches are theoretically valuable (Adler and Adler, 2012).

Quantitative and qualitative methods should not be considered mutually exclusive. Indeed, when used together to answer a single research question, the respective strengths of the two approaches can combine to offer a more robust methodology.

As qualitative methods involve a very different approach to data collection and analysis, often using words and observation as opposed to numerical data, they are not so easily placed on a scale of rigour.
Qualitative evaluation evidence must therefore be considered in a different way, by looking at different characteristics, in order to ascertain its quality and its potential for factors such as generalizability.

The following areas of consideration (adapted from Spencer et al., 2003), which explore the various stages of evaluation design, data collection and analysis, provide a framework for examining the quality of qualitative research evidence. Please note that, depending on the focus and nature of the research in question, some of the quality indicators may not be applicable.

Each appraisal question below is followed by its quality indicators.

FINDINGS

How credible are the findings?

- The findings/conclusions are supported by data/study evidence (the reader can see how the researcher arrived at his/her conclusions)
- The findings/conclusions ‘make sense’/have a coherent logic
- Use of corroborating evidence to support or refine findings (i.e. other data sources have been used to examine phenomena)

How well does the evaluation address its original aims and purpose?

- A clear statement of the study’s aims and objectives
- The findings are clearly linked to the purposes of the study (and to the intervention or practice being studied)
- The summary or conclusions are directed towards the aims of the study
- Where appropriate, a discussion of the limitations of the study in meeting its aims (e.g. limitations due to gaps in sample coverage, missed or unresolved areas of questioning, incomplete analysis)

Is there scope for generalisation?

- A discussion of what can be generalised to the wider population from which the sample is drawn
- Detailed description of the contexts in which the study was conducted to allow applicability to other settings/contextual generalities to be assessed
- Discussion of how hypotheses/propositions/findings may relate to wider theory; consideration of rival explanations
- Evidence supplied to support claims for wider inference (either from the research or corroborating sources)
- Discussion of the limitations on drawing wider inference

DESIGN

How defensible is the research design?

- A discussion of the rationale for the design and how it meets the aims of the study
- Clarification and rationale of the different features of the design (e.g. reasons given for different stages of research; purpose of particular methods or data sources, multiple methods etc.)
- Discussion of the limitations of the research design and their implications for the study evidence

SAMPLE

Sample selection/composition: how well is the eventual coverage described?

- Rationale for the basis of selection of the target sample
- Discussion of how sample allows required comparisons to be made
- Detailed profile of achieved sample/case coverage
- Discussion of any missing coverage in sample and the implications for study evidence (e.g. through comparison of target and achieved samples, comparison with population etc.)
- Documentation of any reasons for non-participation among sample approached or non-inclusion of cases
- Discussion of access and methods or approach and how these might have affected participation/coverage

DATA COLLECTION

How well was the data collection carried out?

- Details of who collected the data and the procedures used for collection/recording
- Description of conventions for taking fieldnotes
- Discussion of how fieldwork methods or settings may have influenced the data collected
- Demonstration, through use and portrayal of data, that depth, detail and richness were achieved in collection

ANALYSIS

How well has the detail, depth and complexity of the data been conveyed?

- Detection of underlying factors/influences
- Identification and discussion of patterns of association/conceptual linkages within the data
- Presentation of illuminating textual extracts/observations

How clear are the links between data, interpretation and conclusions, i.e. how well can the route to the conclusion be seen?

- Clear links between the analytic commentary and presentations of the original data
- Discussion of how explanations/theories/conclusions were derived, and how they relate to interpretations and content of original data; whether alternative explanations were explored
- Display and discussion of any negative cases

REPORTING

How clear and coherent is the reporting?

- Demonstrates a link to the aims of the study/research questions
- Provides a narrative/story or clearly constructed thematic account

Background to development

Most youth justice practice in the UK has received minimal evaluation, with very little undergoing the kind of rigorous research needed to be classified as research-proven. However, our approach does not set out to discredit the good work that goes on within youth justice services; its aim is to encourage practitioners to be more aware of which practice is underpinned by theory and evidence, and which is less so.

On this basis, although it is tempting to do so, the classification framework outlined here should not be seen or used as a hierarchy in which practice appearing in the research-proven category is ‘better’ than practice in the other categories.
Rather, it should be used as a guide to what is available and to assist practitioners and managers to make decisions about how to develop their services. In some cases, this will include actively discouraging practice that has been shown to be counterproductive.

Classification under the YJB’s system is based upon two considerations:

- Design
- Evaluation

The first of these is a prerequisite for inclusion in the Effective Practice Library, irrespective of the quality and results of any evaluation. The second determines which classification the practice example ultimately receives.

Design

The YJB’s expectations in relation to programme design are articulated in our published products Programmes in Youth Justice: Development, Evaluation and Commissioning and the Programme Development and Evaluation Toolkit. Both are based on the principles presented within the Centre for Effective Services’ (CES) child and family services multi-dimensional review, The What Works Process and The What Works Tool (2011). This material has been adapted by the YJB to fit the youth justice context.

Evaluation

Our Classification Framework is designed to guide the Effective Practice Classification Panel when considering the quality of evaluation evidence in support of a particular example of practice, including both quantitative and qualitative evidence.

Quantitative evidence is appraised using a version of the Scientific Methods Scale (Farrington et al., 2002), outlined in the ‘Quantitative methods’ section and adapted by the Home Office for reconviction studies, which assess impact as measured by reconviction data and other outcomes (Friendship et al., 2005). The scale is also currently applied across government departments for use in outcome research; the original version is described in detail in HM Treasury’s Magenta Book: Guidance for evaluation (HM Treasury, 2011).
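As an illustration only, the five levels of the Scientific Methods Scale shown in Figure 1 can be written down as a small data structure. This is a minimal sketch, not a YJB tool: the names `SMSLevel`, `is_experimental_or_quasi` and `strongest_design` are hypothetical, and the level at which a design counts as experimental or quasi-experimental (levels 4 and 5) is taken from the discussion of quantitative methods later in this document.

```python
from enum import IntEnum
from typing import Iterable


class SMSLevel(IntEnum):
    """Scientific Methods Scale levels as adapted for reconviction studies.

    Member names are hypothetical labels chosen for this sketch.
    """

    BEFORE_AFTER = 1                 # intervention group, no comparison group
    EXPECTED_VS_ACTUAL = 2           # e.g. risk predictor, no comparison group
    UNMATCHED_COMPARISON = 3         # comparison group, comparability not shown
    MATCHED_COMPARISON = 4           # well-matched comparison group
    RANDOMISED_CONTROLLED_TRIAL = 5  # random assignment to conditions


def is_experimental_or_quasi(level: SMSLevel) -> bool:
    """Levels 4 and 5 are the experimental and quasi-experimental designs."""
    return level >= SMSLevel.MATCHED_COMPARISON


def strongest_design(levels: Iterable[SMSLevel]) -> SMSLevel:
    """Return the highest level among several evaluations of one practice.

    Raises ValueError if no levels are supplied.
    """
    return max(levels)
```

A level on this scale describes the evaluation design only. It informs the panel's judgement but does not by itself determine a classification, since the thresholds between categories are deliberately loosely defined.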
During development, the framework was subject to review by academics and youth justice practitioners to gather their views on this approach to categorising examples of practice. The consensus was that, while the requirement on us to use the research standards applied in government social research was recognised, these standards (i.e. the Scientific Methods Scale) fail to place the same value on qualitative research methods as they do on experimental or quasi-experimental quantitative designs, on the premise that only the latter can demonstrate the true impact of an intervention or policy.

While this is acknowledged, it is the YJB’s aim that this framework can be applied equally to rigorously evaluated programmes in the ‘research-proven’ category and to programmes developed at a local level that will not have been evaluated using rigorous research designs.

Due to their nature, qualitative research methods are much more difficult to arrange in a scale of rigour, as the Scientific Methods Scale attempts to do with quantitative methods. The Effective Practice Classification Panel therefore plays a crucial role in using its judgement and expertise to ascribe practice and programmes to a particular category, informed by this framework.

The framework is intended to act as a guide for the panel in making these judgements about the quality of the evaluation, and about what the evaluation says about whether the practice in question can deliver the intended results. It aims to provide a basis for these decisions and to promote consistency across classifications, which would be hard to achieve if each case were considered without a framework based on standards of evidence.

The classification framework will continue to be reviewed to ensure that it remains up to date with developments in research and valid for the broad range of practice in use across the youth justice system.
Factors to consider when using practice classifications

When considering the classifications that have been ascribed to examples of practice, attention must be given to certain factors to ensure that claims regarding the effectiveness of the practice in question are not wrongly assumed. As discussed previously, both quantitative and qualitative methods have their respective strengths and limitations when applied in certain ways. A general awareness of these is useful when using this classification framework to look for practice or programmes that could be used in your local context.

Using the YJB categories

Firstly, it is very important to state that the categories set out under ‘YJB Categories’ (and this document in general) are about the evaluation evidence and not the practice itself. We are not saying that practice appearing in the ‘research-proven’ category is ‘better’ than practice in the other categories. Classification is a reflection of the strength of the evaluation evidence. The categories should be used as a guide to what is available and to assist practitioners and managers to make decisions about how to develop their services.

The strengths and limitations of a quantitative methodology

Quantitative data can provide a rigorous statistical measure of the extent to which a specific intervention has achieved (or not achieved) its intended outcomes. Information is gathered from a large number of sources, meaning that the size and representativeness of the sample allow generalisations to be made (although this also depends on the scale of the evaluation). However, in order to maximise sample size, the information gathered is often ‘shallow’, meaning that little is understood about the participants’ experiences or the local contexts and processes involved.
Experimental and quasi-experimental quantitative methods (those at levels 4 and 5 of the Scientific Methods Scale in Figure 1) are also strong at controlling for variables (known as ‘internal validity’) in order to increase certainty that it was the practice or programme being tested that produced the results seen. This scientific method produces a rigorous evaluation, but arguably decreases the extent to which results can be generalised (known as ‘external validity’), as the environment created is a highly constructed one, and not always typical of the social context in which we work.

The strengths and limitations of a qualitative methodology

Qualitative data is typically captured from a smaller sample, due to the greater depth and level of detail involved. This means that the ability of these methods to offer generalizable results is reduced, as they are often specific to a few local contexts. However, they are more useful for understanding these specific local contexts and processes in detail, and how they may have played a part in the success (or otherwise) of the practice or programme being evaluated.

Qualitative methods do not offer the same scientifically rigorous certainty that it was the practice or programme being evaluated that produced the results seen, or to what extent those results were achieved. They are, however, useful in understanding why or how a particular practice or programme may have been successful, due to the emphasis placed on capturing the local context and the experiences of the participants involved.

Does this mean that ‘practice x’ will definitely produce the intended results for me?

The million-dollar question! Here are some key factors to bear in mind when considering the use of a programme that has evidence of effectiveness.

- Context – The role played by the context in which an intervention takes place should not be underestimated. Such things as local service delivery structures and the culture of the organisation in which the intervention is delivered can play a vital role in the effectiveness of a programme (depending on its scale).
- Fidelity – ‘Programme fidelity’ refers to the extent to which a programme is delivered as it was originally intended. Deviating from the original template risks tampering with aspects of the programme vital to its success.
- The practitioner! – Another factor that should never be underestimated is the role of high-quality staff, skilled in the delivery of a programme or intervention. A frequent obstacle to the ‘scaling up’ of interventions (implementing them on a large scale) is how to maintain the quality of the staff who deliver them, a key ‘ingredient’ in the success of the intervention.
- The evaluation – Some evaluation methods offer more certainty than others about the potential to replicate results across wider populations. For example, the evaluation of a programme conducted in several different geographical areas can claim greater potential for generalisation across the wider population than one conducted in a single location (even if the latter uses a rigorous design, such as a Randomised Controlled Trial). However, the context must always be considered: although findings from the most rigorous evaluations may be highly capable of generalisation across the wider population, this does not guarantee that they automatically apply to your particular context, and to the unique systems and processes you may have in your local area. This caveat applies equally to evaluations using qualitative methods, including those using large representative samples.

In summary, the category applied to an example of practice reflects the view of the panel based on the information that was provided to them about evaluations completed up to that point.
Therefore, it should not be taken as a guarantee that the practice in question will always deliver the same results in the future; it is more a reflection of the effectiveness of the practice or programme to date.

Glossary

Comparison group: A group of individuals whose characteristics are similar to those of a programme's participants (intervention group). These individuals may not receive any services, or they may receive a different set of services, activities, or products; in no instance do they receive the service being evaluated. As part of the evaluation process, the intervention group and the comparison group are assessed to determine which types of services, activities, or products provided by the intervention produced the intended results.

Evaluation (impact): A process that takes place before, during and after an activity and that assesses the impact of a particular practice, programme or intervention on the audience(s) or participants by measuring specific outcomes.

Evaluation (process): A form of evaluation that assesses the extent to which a programme is operating as it was intended. It typically assesses whether programme activities conform to statutory and regulatory requirements, programme design, and professional standards or customer expectations (also known as an implementation evaluation).

External validity: The extent to which findings from an evaluation can be generalised and applied to other contexts. Randomised Controlled Trials (RCTs), for example, have a low level of external validity due to the unique environments created by controlling the number of variables required to produce high internal validity.

Generalizability: The extent to which findings from an evaluation can be applied across other contexts and populations.

Internal validity: The level to which variables in the evaluation have been controlled for, allowing conclusions to be drawn that it was the intervention in question that produced the difference in outcomes. RCTs have a high level of internal validity.

Intervention group: The group of participants receiving an intervention or programme (also known as the treatment group).

Practice example: This can be a particular resource, a way of working, or a method or example of service delivery. Practice examples can be defined as things such as programmes or resources that can be shared with other youth justice services.

Qualitative data: Detailed, in-depth information expressed in words and often collected from interviews or observations. Qualitative data is more difficult to measure, count or express in numerical terms. Qualitative data can be coded, however, to allow some statistical analysis.

Quantitative data: Information expressed in numerical form, which is easier to count and analyse using statistical methods. Evaluations using quantitative data can produce effect sizes (i.e. the difference in outcomes between the control and treatment groups).

Randomised Controlled Trial (RCT): An experimental research design that randomly assigns participants to either the control or treatment groups. Groups are closely matched on their characteristics, and variables are tightly controlled, to demonstrate that the outcome was produced by the intervention being delivered to the treatment group (i.e. the only difference between the two groups).

References

Adler, P. and Adler, P. (2012) in Baker, S. and Edwards, E. (eds) How many qualitative interviews is enough? National Centre for Research Methods.

Centre for Effective Services (2011) The What Works Process – evidence-informed improvement for child and family services. Dublin: CES.

Farrington et al. (2002) ‘The Maryland Scientific Methods Scale’ in Sherman, L.W., Farrington, D.P., Welsh, B.C. and MacKenzie, D.L. (eds) Evidence-based Crime Prevention. London and New York: Routledge.

Friendship et al. (2005) ‘Introduction: the policy context and assessing the evidence’ in Home Office Research Study 291: ‘The impact of corrections on re-offending: a review of ‘what works’’. London: Home Office.

HM Treasury (2011) The Magenta Book: Guidance for evaluation. London: HM Treasury.

Innes Helsel et al. (2006) Identifying evidence-based, promising and emerging practices that use screen-based and calculator technology to teach Mathematics in grades K-12: a research synthesis. Washington: American Institutes for Research.

Petrosino et al. (2003) ‘Scared Straight’ and other juvenile awareness programs for preventing juvenile delinquency. Campbell Collaboration.

Spencer et al. (2003) Quality in Qualitative Evaluation: A framework for assessing research evidence. London: Government Chief Social Researcher’s Office.

APPENDIX A

Practice example submission and classification process

1. Practice example submitted to YJB
2. Submission and supporting evidence checked by YJB Effective Practice team
3. Effective Practice team select required classification route:
   - Effective Practice Classification Panel (if potentially ‘promising evidence’; uploaded to Effective Practice Library pending classification)
   - Effective Practice Governance Group (if potentially ‘treat with caution’)
4. Panel refer to Social Research Unit (if potentially ‘research-proven’ or ‘ineffective’)
5. Panel categorise submissions
6. Feedback to submitter
7. Classification updated on Effective Practice Library

Figure 2: YJB practice example submission and classification process
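The routing in Appendix A, read together with the gatekeepers listed under ‘YJB Categories’, can be sketched in a few lines of code. This is a hedged illustration rather than a description of any YJB system: the names `Category`, `GATEKEEPER` and `classification_route` are hypothetical, and the order of bodies in each route is one reading of the flattened flowchart in Figure 2.

```python
from enum import Enum
from typing import List


class Category(Enum):
    RESEARCH_PROVEN = "research-proven"
    PROMISING = "promising evidence"
    EMERGING = "emerging evidence"
    TREAT_WITH_CAUTION = "treat with caution"
    INEFFECTIVE = "ineffective"


TEAM = "YJB Effective Practice Team"
PANEL = "Effective Practice Classification Panel"
GOVERNANCE = "YJB Effective Practice Governance Group"
SRU = "Social Research Unit"

# Gatekeeper for each category, as listed in the categories table.
GATEKEEPER = {
    Category.RESEARCH_PROVEN: SRU,
    Category.PROMISING: PANEL,
    Category.EMERGING: TEAM,
    Category.TREAT_WITH_CAUTION: GOVERNANCE,
    Category.INEFFECTIVE: SRU,
}


def classification_route(potential: Category) -> List[str]:
    """Bodies a submission passes through, in order (assumed reading)."""
    route = [TEAM]  # every submission is first checked by the team
    if potential is Category.EMERGING:
        return route  # clearly 'emerging' examples are classified by the team
    if potential is Category.TREAT_WITH_CAUTION:
        return route + [GOVERNANCE]
    route.append(PANEL)
    if potential in (Category.RESEARCH_PROVEN, Category.INEFFECTIVE):
        route.append(SRU)  # the panel refers these to the Social Research Unit
    return route
```

In this sketch the last body in each route is always the gatekeeper for that category, which is a quick way to check the routing against the categories table.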