
Cognitive Illusions and the Welcome Psychologism

of Logicist Artificial Intelligence

Selmer Bringsjord & Yingrui Yang

The Minds & Machines Laboratory

Dept. of Philosophy, Psychology & Cognitive Science

Department of Computer Science (S.B.)

Rensselaer Polytechnic Institute (RPI)

Troy, NY 12180

selmer@rpi.edu yangyri@rpi.edu

2.19.02

Abstract:

We begin by using Johnson-Laird’s ingenious cognitive illusions (in which it seems that certain

propositions can be deduced from given information, but really can’t) to raise the spectre of a naive brand

of psychologism. This brand of psychologism would seem to be unacceptable, but an attempt to refine it in

keeping with some suggestive comments from Jacquette eventuates in a welcome version of psychologism

-- one that can fuel logicist (or logic-based) AI, and thereby lead to a new theory of context-independent

reasoning (mental metalogic) grounded in human psychology, but one poised for unprecedented

exploitation by machines.

Introduction

The overall goal of this chapter is two-fold: (i) sketch a perfectly acceptable brand of psychologism that can

function as a productive foundation for logicist (= logic-based) artificial intelligence (AI); (ii) introduce a

new theory of human context-independent reasoning as the cornerstone of this foundation. To reach our

goal, we begin by using Johnson-Laird’s ingenious cognitive illusions (in which it seems -- to most,

anyway -- that certain propositions can be deduced from given information, but really can’t) to raise the

spectre of an unpalatable brand of psychologism. Once this brand of psychologism is refined, the promised

welcome version of psychologism is produced (and, in fact, in part displayed in action), as is our new

theory of human reasoning: mental metalogic, or just ‘MML,’ for short. We conclude by briefly presenting

the context of our belief that though MML is firmly grounded in human reasoning and empirical

psychology, it’s a theory poised for unprecedented exploitation by machines.

Logical Illusions and a Naive Brand of Psychologism

Due in no small part to ingenious experimentation carried out by Johnson-Laird, one of the dominant

themes in current psychology of reasoning is the notion of a cognitive or logical illusion. In a visual illusion

of the sort with which you’re doubtless familiar, one seems to see something that, as a matter of objective

fact, simply isn’t there. (In the Sahara, what seems to be a lovely pool of water is just the same dry-as-a-

bone expanse of sand -- and so on.) In illusions of the sort that Johnson-Laird has brought to our attention,

what seems to hold on the basis of inference doesn’t. Here’s a first specimen: 1

Illusion 1

(1)

If there is a king in the hand, then there is an ace, or else if there isn’t a king in the hand, then there is an ace.

(2)

There is a king in the hand.

Given these premises, what can you infer?

Johnson-Laird has recently reported that

Only one person among the many distinguished cognitive scientists to whom we have given

[Illusion 1] got the right answer; and we have observed it in public lectures -- several hundred

individuals from Stockholm to Seattle have drawn it, and no one has ever offered any other

conclusion (Johnson-Laird 1997b, p. 430).

The conclusion that nearly everyone draws (including, perhaps, you) is that there is an ace in the hand.

Bringsjord (and, doubtless, many others) has time and time again, in public lectures, replicated Johnson-Laird's numbers -- presented in (Johnson-Laird & Savary, 1995) -- among those not formally trained in

logic. (The reason for the italicized text will become clear later.) This conclusion is wrong because ‘or else’ is to be

understood as exclusive disjunction,2 so (using obvious symbolization) the two premises become

(1')

((K → A) ∨ (¬K → A)) ∧ ¬((K → A) ∧ (¬K → A))

(2')

K

It follows from the right (main) conjunct in (1') by DeMorgan's Laws that either K → A or ¬K → A is false. But by the truth-table3 for ‘→,’ in which a conditional is false only when its antecedent is true while its consequent is false, it then follows that either way A is false, i.e., there is not an ace in the hand. Technical philosophers and logicians, in our experience, invariably succeed in carrying out this deduction. In fact, when Bringsjord presented this problem at the 2000 annual International Computing and Philosophy Conference, two logicians rapidly not only produced the proof in question, but commented that “Of course, this assumes that you’re dealing with an exclusive disjunction.” Predictably, both these thinkers symbolized the problem in first-order logic (FOL).

1

Illusion 1 is from (Johnson-Laird & Savary, 1995). Variations are presented and discussed in (Johnson-Laird, 1997a).

2

Even when you make the exclusive disjunction explicit, the results are the same. E.g., you still have an illusion if you use

Illusion 1'

(1) If there is a king in the hand then there is an ace, or if there isn't a king in the hand then there is an ace, but not both.

(2) There is a king in the hand.

Given these premises, what can you infer?

3

Of course, a fully syntactic proof is easy enough to give; indeed we give and discuss it below (see Figure 1).
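The deduction for Illusion 1 can also be checked semantically by brute force. The following Python sketch is only an illustration under stated assumptions: the helper names (`implies`, `entails`) and the encoding of propositions as functions over truth assignments are ours, ‘or else’ is read as exclusive disjunction, and a truth-table check is a semantic shortcut rather than the syntactic proof the text refers to.

```python
from itertools import product

def implies(antecedent, consequent):
    """Material conditional: false only when the antecedent is true and the consequent false."""
    return (not antecedent) or consequent

def entails(premises, conclusion, atoms):
    """Semantic entailment by exhaustive truth table (hypothetical helper)."""
    for values in product([True, False], repeat=len(atoms)):
        v = dict(zip(atoms, values))
        if all(p(v) for p in premises) and not conclusion(v):
            return False
    return True

atoms = ["K", "A"]
king_then_ace = lambda v: implies(v["K"], v["A"])
no_king_then_ace = lambda v: implies(not v["K"], v["A"])

# (1'): exactly one of the two conditionals holds (exclusive disjunction).
premise_1 = lambda v: king_then_ace(v) != no_king_then_ace(v)
# (2'): there is a king in the hand.
premise_2 = lambda v: v["K"]

ace_follows = entails([premise_1, premise_2], lambda v: v["A"], atoms)
no_ace_follows = entails([premise_1, premise_2], lambda v: not v["A"], atoms)
print(ace_follows, no_ace_follows)
```

Under this encoding the only assignment satisfying both premises makes K true and A false, so the popular answer (an ace) is not entailed while its negation is.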

From the standpoint of discussing psychologism -- put roughly for now, the view that correct reasoning is

reasoning in accord not with abstract formalisms, but rather in accord with how humans in fact reason -- Illusion 1 is uneventful. (On the other hand, from the standpoint of those who know a thing or two about

logic, the fact that so many cognitive scientists went awry on this problem is remarkable.) But now

consider a second logical illusion, one given again by Johnson-Laird (1997b, p. 430):

Illusion 2

(*)

Only one of the following assertions is true:

(3) Albert is here or Betty is here, or both.

(4) Charlie is here or Betty is here, or both.

(5)

This assertion is definitely true: Albert isn't here and Charlie isn't here.

Given these premises, what can you infer?

Johnson-Laird says: “These premises [in Illusion 2] yield the illusion that Betty is here” (1997b, p. 431).

But upon closer inspection this pronouncement is peculiar. To see this, using A, B, and C as obvious

abbreviations, (5) becomes ¬A ∧ ¬C. We know from (*) that (3) is true, or (4) is, but not both. Suppose

that (3) is true; then by disjunctive syllogism on (3) and ¬A we obtain that Betty is here (B). Suppose

instead that (4) is true; then by disjunctive syllogism on (4) and ¬C we obtain that Betty is here (B). Either

way, contra Johnson-Laird, Betty is here.4
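Both the derivation of B and the inconsistency of the premises reported in footnote 4 can be confirmed by enumeration. The sketch below is a minimal illustration, assuming the symbolization just used ((*) as an exclusive disjunction of (3) and (4), and (5) as ¬A ∧ ¬C); the helper names `models` and `entails` are ours.

```python
from itertools import product

ATOMS = ["A", "B", "C"]

def models(premises):
    """Return every truth assignment over ATOMS that satisfies all premises."""
    found = []
    for values in product([True, False], repeat=len(ATOMS)):
        v = dict(zip(ATOMS, values))
        if all(p(v) for p in premises):
            found.append(v)
    return found

def entails(premises, conclusion):
    """Semantic entailment: the conclusion holds in every model of the premises."""
    return all(conclusion(v) for v in models(premises))

assertion_3 = lambda v: v["A"] or v["B"]      # Albert is here or Betty is here, or both
assertion_4 = lambda v: v["C"] or v["B"]      # Charlie is here or Betty is here, or both
only_one_true = lambda v: assertion_3(v) != assertion_4(v)   # (*)
premise_5 = lambda v: (not v["A"]) and (not v["C"])          # (5)

premises = [only_one_true, premise_5]
satisfying = models(premises)
betty_follows = entails(premises, lambda v: v["B"])
betty_absent_follows = entails(premises, lambda v: not v["B"])
print(satisfying, betty_follows, betty_absent_follows)
```

Because no assignment satisfies both premises, every proposition is (vacuously) entailed, which matches the student's observation that both "Betty is here" and "Betty isn't here" can be obtained.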

Bringsjord showed this simple proof (and other similar ones in connection with other “illusions” offered by

Johnson-Laird) to Johnson-Laird himself; Bringsjord’s assumption was that Johnson-Laird would say that

an error on his part had been made. That assumption turned out to be wrong. Johnson-Laird replied in

personal communication that people don’t make inferences as sophisticated as the ones Bringsjord had

made in dissecting Illusion 2, and that the illusion stands. Incredulous, Bringsjord pointed out that surely a

logical illusion I is classified as such because the premises Φ in I are taken by most subjects to entail φ,

while in fact φ cannot be deduced from Φ using laws of logic. Johnson-Laird replied that logic is not the

arbiter in illusions; the arbiter by his lights is what most logically untrained subjects believe about what

must be true given the truth of the propositions in Φ. As we see it, this position, and the attitude toward

logic that accompanies it, is about as good a candidate for an instance of (a presumably objectionable)

psychologism as can be found anywhere.

4

In a recent presentation of Illusion 2 in a lecture by Bringsjord, the reasoning just given was provided by a

high school student. Everyone else in the audience declared that Betty is here, “Obviously” -- but they

provided, at best, exceedingly murky arguments. The same student went on to demonstrate, on the basis of

the given information, that Betty isn't here. His reasoning this time involved indirect proof: The premises

are inconsistent, as you may wish to verify. In addition, this clever student declared that he could prove that

the moon is made of green cheese, given the premises in question (reductio again, of course). Figure 2

shows a proof in HYPERPROOF, the fourth line of which confirms that a contradiction is a logical

consequence of the given info.

But let’s slow down. Is Johnson-Laird’s position even coherent? Suppose that there is some purported

illusion I with premises Φ, and that all subjects declare that φ can be deduced from Φ. If in fact all subjects

make this declaration, then we have here a powerful illusion. (After all, that Illusion 2 is powerful is

something Johnson-Laird attempts to substantiate by pointing out that audience after audience has declared

that Betty is here can be inferred.) But if all subjects make this declaration, then by Johnson-Laird’s

psychologistic stance it follows that it’s valid to infer φ from Φ. But since by hypothesis we’re dealing with

an illusion, by hypothesis the inference isn’t valid! We thus have a contradiction: incoherence.5

Toward a Palatable Brand of Psychologism

However, there’s a reading of Johnson-Laird’s response that provides him with a possible escape, and

points the way toward a welcome version of psychologism. To begin, we need to be more precise about

what an illusion is. Let E1, E2, … denote declarative sentences in English (actually, any natural language will

do, but that needn’t detain us). (So for example in Illusion 2 the sentence “Only one of the following two

assertions is true.”6 could be E1.) Let Q stand for some question in English. Following the

notation we used above, we can let capital Greek letters Φ, Ψ, … range over sets of formulas in some

logical system (e.g., first-order logic, or just ‘FOL’), and lowercase Greek letters φ, ψ, … refer to

individual formulas. Next, notice that there is some variation in the questions. Sometimes the question isn’t

open-ended, as it is in Illusions 1 and 2. Instead, sometimes it is a query about whether a particular

proposition (or state-of-affairs) is “possible.” Here's an example: 7

Illusion 3

(6)

Only one of the following premises is true about a particular hand of cards.

(7)

There is a king in the hand or there is an ace, or both.

(8)

There is a queen in the hand or there is an ace, or both.

(9)

There is a jack in the hand or there is a ten, or both.

Is it possible that there is an ace in the hand?

Now obviously, at least at this point, we don’t want to call upon some of the recherché machinery of

symbolic logic (e.g., modal logic) to formally analyze the question here as one involving a possibility

operator. Fortunately, the question is clearly intended to be a question about consistency in the standard,

5

Another route to reach apparent incoherence is to simply point out that Illusion 1 is ruled an illusion by

Johnson-Laird because the reasoning we gave above yields, while subjects don't apprehend this, and the

reasoning is based on formal logic, not on what is going on in the minds of subjects.

6

While Johnson-Laird apparently likes to use the colon, if we use a period we lose nothing, and gain the

fact that we're dealing with clearly demarcated sentences.

7

From page 1053 of (Yang & Johnson-Laird, 2000).

first-order, extensional sense.8 That is, the question here is whether the declarative sentence There is

an ace in the hand is consistent with (6)-(9). (Is it?)9 More generally, we will say that the question

Q can be one of three types: Q1: open-ended (it can ask what can be inferred); Q2: a question about whether

(a) given inferential relation(s) hold(s) between the Ei; or Q3: a question about whether (a) given inferential

relation(s) hold(s) between the Ei and another sentence (such as There is an ace in the hand in

Illusion 3). Because the Qi are concerned with an inferential relation, it would seem to make sense to

stipulate that one of the arguments in this relation is the “single turnstyle,” which traditionally denotes

provability; that is, ‘ |- ’ indicates that can be proved from . Putting together what we have so far

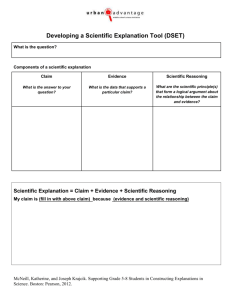

gives us the following scheme for cognitive illusions:

English Version

Formal Version

E1

E2

…

En

Q 1/Q2/Q 3

|-?/R(,|-)/R(,|-,

But how can we describe the structure of the illusions themselves, rather than just the presentation of them?

Well, first, to ease exposition, but without loss of generality, hereafter suppose that we have an illusion

specifically of the Q3 variety; and suppose, specifically, that the inferential relation in question is |-.

Assuming this framework, we might venture that an illusion obtains when two conditions are met:

Def1

A cognizer c succumbs to a logical illusion

E1

…

En

Q3

if and only if:

1. c believes that φ can be inferred from Φ, i.e., Belc[Φ |- φ];

2. not Φ |- φ.

8

And the idiosyncratic use of ‘possible’ may stem from what might be called “mental model mindset,” in

which subjects are presupposed to be conceiving of possibilities in the mental model sense. Syntactically, a

set Φ of formulas is inconsistent if and only if a contradiction can be derived from Φ. Semantically, a set Φ

of first-order formulas is inconsistent just in case there is no interpretation on which all of Φ are true. The

syntactic and semantic senses of inconsistency are equivalent in first-order logic.

9

If you're letting yourself function as a subject in connection with this illusion, don't read any further in this

footnote. The answer is “No.”
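The consistency reading of the question in Illusion 3 lends itself to a mechanical check (readers still pondering the illusion may wish to skip this). The sketch below is an assumption-laden illustration: the atom names K, A, Q, J, T and the variable names are ours, "only one of the following premises is true" is encoded as "exactly one of (7)-(9) holds," and consistency is tested semantically by searching for a satisfying assignment.

```python
from itertools import product

ATOMS = ["K", "A", "Q", "J", "T"]   # king, ace, queen, jack, ten

def assignments():
    """Yield every truth assignment over ATOMS."""
    for values in product([True, False], repeat=len(ATOMS)):
        yield dict(zip(ATOMS, values))

premise_7 = lambda v: v["K"] or v["A"]
premise_8 = lambda v: v["Q"] or v["A"]
premise_9 = lambda v: v["J"] or v["T"]

def exactly_one_true(v):
    """(6): only one of premises (7)-(9) is true."""
    return [premise_7(v), premise_8(v), premise_9(v)].count(True) == 1

# The premises by themselves are satisfiable ...
premises_satisfiable = any(exactly_one_true(v) for v in assignments())
# ... but no satisfying assignment also puts an ace in the hand.
ace_possible = any(exactly_one_true(v) and v["A"] for v in assignments())
print(premises_satisfiable, ace_possible)
```

The reason no model contains an ace is that an ace would make both (7) and (8) true, violating (6).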

Unfortunately, this account doesn't help Johnson-Laird: on it, Illusion 2 isn't a cognitive illusion, since

Betty is here can be derived from the relevant knowledge (as we’ve shown). 10 However, some

additional elements can change the story dramatically -- elements that reflect sensitivity to Johnson-Laird’s

seemingly unpalatable notion that how reasoners reason should determine what’s “valid” and “invalid.”

Specifically, here’s what we have in mind: We can turn to a more sophisticated version of our first

definition, by including some indication of what system S underlies ‘|-’:

Def2 A cognizer c succumbs to a logical illusion

E1

…

En

Q3

if and only if:

1. c believes that φ can be inferred from Φ in S, i.e., Belc[Φ |-S φ];

2. not Φ |-S φ.

For example, given Def2, we can speak of deduction in accordance with a full, standard set of natural

deduction rules -- a set that includes the rules of inference we used in our proof that Betty is here. In

such a set, for example in the elegantly presented set that’s part of the natural deduction system F in

Barwise and Etchemendy's Language, Proof, and Logic (1999), there is always a rule for introducing a

logical connective, and a rule for eliminating such a connective. Specifically, then, there is a rule for

eliminating ‘∨,’ and a rule for introducing ‘∨.’ The first of these corresponds to so-called constructive

dilemma, and it’s a rule that is used in our proof that Betty is here. To see this, a formal version of

the proof in F is shown in Figure 1. Where Φ is here composed of the two Givens, this proof establishes that

Φ |-F B.

But now we are finally in position to see how to make respectable Johnson-Laird’s response to this result:

We can read him to be presupposing that the S in Def2 is simply indexed to the population in question. For

example, suppose that a particular implication can only be proved if rule r is employed; to completely fix

the situation, suppose that this implication goes through only if r’ = ∨ Elimination is available. In this case,

not Φ |-F-r’ B,

but the subjects in question may nonetheless believe that B is provable in the relevant manner. It seems

possible that what Johnson-Laird may have in mind is that untrained reasoners at least generally believe

that Betty is here can be inferred in a system without constructive dilemma, and that this belief may

be incorrect.
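The idea of indexing provability to a rule set S can be made concrete with a toy forward-chaining prover. The sketch below is not the system F of Barwise and Etchemendy and not PSYCOP; it is a hypothetical miniature with three rules (conjunction elimination, disjunctive syllogism, and disjunction elimination by cases), all names are ours, and the two Givens are assumed to be (A ∨ B) ∨ (C ∨ B) and ¬A ∧ ¬C. It only illustrates how removing one rule can turn a provable goal into an unprovable one.

```python
# Formulas are nested tuples; the constructors below are illustrative helpers.
def atom(name): return ("atom", name)
def Not(x): return ("not", x)
def And(x, y): return ("and", x, y)
def Or(x, y): return ("or", x, y)

def closure(facts, rules, goal, depth=3):
    """Forward-chain the enabled rules until no new formula is derived."""
    facts = set(facts)
    while True:
        new = set()
        for f in facts:
            if f[0] == "and" and "and_elim" in rules:
                new.update([f[1], f[2]])
            if f[0] == "or" and "disj_syllogism" in rules:
                if Not(f[1]) in facts:
                    new.add(f[2])
                if Not(f[2]) in facts:
                    new.add(f[1])
            # Disjunction elimination: the goal follows if it follows in both cases.
            if (f[0] == "or" and "or_elim" in rules and depth > 0
                    and f[1] not in facts and f[2] not in facts):
                left = closure(facts | {f[1]}, rules, goal, depth - 1)
                right = closure(facts | {f[2]}, rules, goal, depth - 1)
                if goal in left and goal in right:
                    new.add(goal)
        if new <= facts:
            return facts
        facts |= new

def derivable(premises, goal, rules):
    """Toy stand-in for 'premises |-S goal', where S is the given rule set."""
    return goal in closure(premises, rules, goal)

A, B, C = atom("A"), atom("B"), atom("C")
givens = [Or(Or(A, B), Or(C, B)), And(Not(A), Not(C))]

full_rules = {"and_elim", "disj_syllogism", "or_elim"}
restricted_rules = full_rules - {"or_elim"}

with_or_elim = derivable(givens, B, full_rules)
without_or_elim = derivable(givens, B, restricted_rules)
print(with_or_elim, without_or_elim)
```

In this miniature, B is reached only when reasoning by cases is available, which mirrors the contrast between Φ |-F B and not Φ |-F-r’ B; a reasoner who nonetheless believes B to be derivable in the restricted set would satisfy both clauses of Def2.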

10

It’s interesting to note that the account just given, with modifications of the sort we momentarily express

in subsequent definitions, apparently captures the general phenomena that have long driven psychology of

reasoning. For example, puzzles like the Wason selection task can be precisely represented in the

machinery we have introduced and crystallized in successors to Def1. Indeed, it would seem that apparently

all the puzzles, problems, and tasks at the heart of psychology of reasoning can be formalized along the

lines set out in the present chapter. See (Stanovich & West, 2000) for an excellent catalogue of these

puzzles and problems.

The general notion that untrained reasoners lack an understanding of highly specific patterns of primitive

inference is not unprecedented in psychology. Indeed, apparently the view that such reasoners lack an

understanding of reductio ad absurdum is widespread among psychologists of reasoning. Johnson-Laird, in

personal communication, has told us that that is in fact his view; many other eminent psychologists of

reasoning are of the same view (e.g., Braine). And more concretely, there is the example of what Lance

Rips (1994) seems to have done in devising his PSYCOP system, for this system includes (e.g.) conditional

proof, set out on page 116 of (Rips, 1994) as

+P

…

Q

IF P THEN Q

but not the rule which sanctions passing from the denial of a conditional to the truth of this conditional’s

antecedent (a rule that will be central to our concluding illusion, Illusion 4). For example, Rips (1994,

pp. 125-126) says that this inference cannot be made by PSYCOP:

NOT (IF Calvin passes history THEN Calvin will graduate).

Calvin passes history.

At this point it may be safe to say that we have sketched a formal account of logical illusions on which

Johnson-Laird’s response to the provability of B in Illusion 2 is coherent. But how does this account relate

to the promised welcome version of psychologism? We answer this question now.

Toward a Welcome Brand of Psychologism

Jacquette anticipates an approximation of the brand of psychologism we have in mind when he writes:

In engineering one learns how to build a bridge ‘correctly’ so that it will bear its load properly,

withstand wind shear and other kinds of stress, minimize metal fatigue and the like; similarly, in

logic one learns how to reason ‘correctly’ to draw sound inferences and avoid fallacious ones.

When we learn to build bridges or perform heart surgery or reason correctly, we also learn what not

to do relative to presumed practical purposes. We want the bridge to span that gorge safely and not

collapse, the patient to recover with improved health and not die on the operating table, and our

reasoning to expand our knowledge and improve our decision-making ability, and not lead us from

truth to falsehood. Now, I think that most theorists would be reluctant to conclude that engineering

and medicine are not reducible to physics and biology, but rather that their respective practices

combine these sciences with an assumption about the goals they should help their practitioners to

achieve. Why should things be different with respect to logic as an applied discipline grounded in

the psychology of reasoning? (Jacquette 1997, pp. 324-325)

The version of psychologism that we have in mind involves a relationship not between what Jacquette calls

“applied logic” and psychology of reasoning, but rather between a field that marks the marriage of

engineering and logic, and the psychology of reasoning; the field is: logicist (= logic-based) AI. Logicist

AI, or just ‘LAI’ for short, is devoted to engineering machines capable of simulating intelligent behaviors

of various sorts, where these machines carry out their relevant work via formal reasoning. (A full

introduction to LAI can be found in (Bringsjord & Ferrucci, 1998). For a book-length treatment of LAI as

an approach to machine creativity see (Bringsjord & Ferrucci, 2000).) Though LAI isn’t exactly what

Jacquette ascribed the term ‘applied logic’ to, this term is certainly an appropriate one for the kind of AI

we’re talking about. We believe that LAI is, or at least ought to be, to use Jacquette’s phrase, “grounded in

the psychology of reasoning.” On this view, the process of building the intelligent machines in question

involves looking to human reasoning for guidance; as this building proceeds, new knowledge of value to

psychologists of reasoning is produced; and the process iterates, back and forth.

Now, in order to see this looping reciprocity in action here, let’s suppose that we wish to build a computing

machine capable of solving English-expressed logic problems, including logical illusions. A bit more

precisely, we want to construct an artificial intelligent agent A that takes some such problem P as input,

and produces for output an answer that constitutes a solution. 11 We would also like to be able to modify A

so that it succumbs to logical illusions, and thereby offers a mechanical instantiation of the human

reasoning that takes place when a logical illusion “tricks” some human. What we have learned so far about

the underlying structure of logical illusions should be helpful in working toward such an intelligent agent,

but is it? Not very; for only a little reflection from the perspective of LAI and the goal of building A reveals

that Def2 is inadequate. Why? There are many reasons; here's a fatal one.

When you aim at building A you immediately see that we have swept under the rug the thorny issue of how

to go from the English to (in this case) FOL. Our artificial agent is going to scan the English in, parse it,

and represent the English in FOL. 12 But we have completely left this process out of Def1 and Def2. Suppose

(and notice now that we’re moving back for guidance to the human sphere) that a human looks at Illusion 1

and represents English sentences (1) and (2) as P and Q, respectively; and suppose that she represents

There is an ace in the hand as A. Suppose as well that the set of inference rules in question, S,

is a standard Fitch-style set at the propositional level (such as propositional F). Clearly, not {P, Q} |- A in

this set; this implies that the second clause of Def2 is satisfied. But now suppose that this human also

happens to believe that A can be derived from these premises, for (irrational) reasons having to do with the

price of tea in China. At this point, both clauses in the definition are satisfied -- but no one would want to

say that this human has fallen prey to the illusion in question. Ergo, Def2 can't be right, and should

presumably be supplanted with:

Def3 A cognizer c succumbs to a logical illusion

E1

…

En

Q3

if and only if:

1a. c represents the Ei as Φ and the “target” English sentence in the query as φ;

1. c believes that φ can be inferred from Φ in S, i.e., Belc[Φ |-S φ];

2. not Φ |-S φ.

11

Such an agent would have lots of practical value, but such value isn’t our concern herein. For example, a great many standardized tests consist in giving humans logic problems; the Graduate Record Exam (GRE) and Law School Admission Test (LSAT) are two examples. An agent able to solve these problems would presumably point the way to agents able to automatically generate the problems in question, which would presumably save many institutions lots of money.

12

For coverage of how this would work, in general, see (Russell & Norvig, 1994).

Do we have here an acceptable account? Unfortunately, no; counterexamples are still easy to come by:

Suppose that Jones “correctly” represents the premises in Illusion 1 as Φ, believes that Φ |-S A, where S is

appropriately instantiated -- but: suppose that this belief, as before, has an irrational justification, namely

that because the price of tea in China has reached exorbitant levels, the implication in question is correct.

Certainly we wouldn't want to say that Jones has been affected by Illusion 1. What’s missing is that Jones

doesn’t have in mind the right sort of justification for believing Φ |-S A. It would seem that those who

succumb to Illusion 1 believe that A follows from the premises on the strength of a relevant argument – it’s

just that the argument isn’t sound. For example, in our experience, when interviewed subsequent to giving

their response in Illusion 1 that the presence of the ace in the hand can be deduced, most subjects give such

rationales as: “Well look, either there is a king in the hand or there isn’t, but we know that either way there

is an ace.” It would seem that subjects are tripped up because they have in mind an inchoate, enthymematic

argument establishing

{(K → A) ∧ (¬K → A)} |- A,

a true equation.

Let’s take stock of where we are, then, from the standpoint of LAI. Apparently there are two tasks to

consider: the representation of the English in an illusion in formal form, and the judgment as to whether

this formal knowledge can be used to (given, as you’ll recall, that we are limiting ourselves to illusions of

Q3 form) establish φ. Given this, there are three possibilities, in general: 13

Label            Representation    Reasoning    “Tricked”?

Possibility 1    correct           incorrect    yes

Possibility 2    incorrect         correct      yes

Possibility 3    correct           correct      no

Possibility 4    incorrect         incorrect    yes

Obviously, our definition needs to take account of these possibilities via suitable disjunctions. Where

Φ stands for a correct representation and Φ’ an incorrect one, we have:14

Def4 A cognizer c succumbs to a logical illusion

E1

…

En

Q3

if and only if either

1a'. c represents the Ei as Φ and the “target” English sentence in the query as φ;

1'. c believes, on the basis of a relevant invalid argument, that φ can be inferred from Φ in S, i.e., Belc[Φ |-S φ];

2. not Φ |-S φ.

or

1a. c represents the Ei as Φ’ and the “target” English sentence in the query as φ;

1''. c believes, on the basis of a relevant valid argument, that φ can be inferred from Φ in S, i.e., Belc[Φ |-S φ];

2. not Φ |-S φ.

or

1a. c represents the Ei as Φ’ and the “target” English sentence in the query as φ;

1'. c believes, on the basis of a relevant invalid argument, that φ can be inferred from Φ in S, i.e., Belc[Φ |-S φ];

2. not Φ |-S φ.

13

We say “in general” because of such finer-grained possibilities as that the subject could correctly represent the premises but incorrectly represent the proposition the query concerns, and so on. In the fourth possibility, Possibility 3 in our table, the cognizer isn’t tricked, so this possibility has no bearing on the definition we’re seeking.

14

For ease of exposition and to work within the space constraints of this chapter, we leave aside certain niceties. For example, to be more precise, we would need to distinguish between representation of one premise versus another, between the premises versus the proposition implicit in Q, etc.

This account is perhaps approaching respectability, but it’s doing only that: approaching; lots of work

remains to be done. We can perhaps assume that standard natural language processing techniques will

secure Φ for A from the Ei. But what about the incorrect representation of the English (denoted by Φ’)?

How is this representation produced, specifically? And what about the notion of a “relevant argument”?

What does this amount to, exactly? Without answers to these questions, our agent A will not be attainable.

Though we haven’t the space to provide the answers, we can say a few words, with help from our core

examples. Take Illusion 1 first. In this case, as we’ve already indicated,

Φ = {(K → A) ∨ (¬K → A), ¬((K → A) ∧ (¬K → A)), K}

and

Φ’ = {(K → A) ∧ (¬K → A), K}

and the “relevant valid argument” is shown in Figure 3.
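The contrast between a correct and an incorrect representation of Illusion 1 can be illustrated with a small semantic check. The sketch below rests on assumptions that should be flagged: the incorrect representation is taken to read premise (1) as the conjunction of the two conditionals, entailment is tested by truth table rather than by proof in a fixed system S, and the names `entails` and `diagnose` are ours.

```python
from itertools import product

ATOMS = ("K", "A")

def implies(antecedent, consequent):
    """Material conditional."""
    return (not antecedent) or consequent

def entails(premises, conclusion):
    """Semantic entailment by exhaustive truth table."""
    for values in product([True, False], repeat=len(ATOMS)):
        v = dict(zip(ATOMS, values))
        if all(p(v) for p in premises) and not conclusion(v):
            return False
    return True

k_then_a = lambda v: implies(v["K"], v["A"])
not_k_then_a = lambda v: implies(not v["K"], v["A"])
ace = lambda v: v["A"]

# Correct representation: one conditional or the other holds, but not both, plus K.
correct_rep = [
    lambda v: k_then_a(v) or not_k_then_a(v),
    lambda v: not (k_then_a(v) and not_k_then_a(v)),
    lambda v: v["K"],
]
# Assumed incorrect representation: both conditionals are taken to hold, plus K.
incorrect_rep = [lambda v: k_then_a(v) and not_k_then_a(v), lambda v: v["K"]]

def diagnose(correct, subject, target):
    """Compare what follows from the subject's representation with what follows from the correct one."""
    from_subject = entails(subject, target)
    from_correct = entails(correct, target)
    return {
        "valid_on_subject_representation": from_subject,
        "follows_from_correct_representation": from_correct,
        "tricked": from_subject and not from_correct,
    }

report = diagnose(correct_rep, incorrect_rep, ace)
print(report)
```

On these assumptions the subject's argument is valid relative to her own representation while the target fails to follow from the correct one, which is the pattern labeled Possibility 2 in the table.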

Now, what about Illusion 2? Can we analyze it in terms of Def4? Yes, as follows. First, there are two

possibilities: either Johnson-Laird didn't err, and our charitable reading of him on this illusion is correct, or

he did in fact simply slip up. Either way we can make sense of Illusion 2.

Suppose first that Johnson-Laird did make a mistake in writing that Illusion 2 is an illusion despite the fact

that B is provable in elementary logic. Then (with a standard set of inference rules) what he apparently

wanted was English that when represented would yield (A B) (C B) and ¬A ∧ ¬C. Our hypothetical

cognizers could for example then produce the incorrect representation: (A B) (C B), along with ¬A ∧

¬C. There would then be a straightforward valid proof of B.

On the other hand, if Johnson-Laird didn't err (because he had in mind the subtle variations on the variable

S in Def4), then B can’t be obtained because constructive dilemma is unavailable. 15 But would our cognizer

have a correct or incorrect representation? She can’t have a correct representation, and at the same time

believe that she can obtain B. The reason is that this belief would be based on an argument that itself

deploys constructive dilemma. Hence, if Johnson-Laird didn’t make a mistake, cognizers must have on

hand some Φ’ from which you can get B in this restricted set of inference rules.

At this point, perhaps Def4 can be said to be at least somewhat promising. But surely it’s still incomplete, if

for no other reason than that it simply doesn’t present all the permutations that arise from the formal

structure we have set out. For example, it should be obvious that we would have an illusion if we simply

“flip the switches” in Def4’s first disjunction in straightforward ways. To be more specific, we would have

an illusion of the Q3 variety if subjects were tricked into thinking that some proposition φ can’t be derived

from the correct representation Φ of the premises, when in fact Φ |- φ. This would be the relevant variation

on Def4’s disjunction:

1a'. c represents the Ei as Φ and the “target” English sentence in the query as φ;

1'. c believes, on the basis of a relevant invalid argument, that φ cannot be inferred from Φ in S, i.e., Belc not[Φ |-S φ];

2'. Φ |-S φ.

Now, what about generating illusions? If Def4 is any good, we should be able to use it to construct an

artificial agent that generates bona fide illusions before any data is gathered. This agent should yield some

rather tricky illusions, given that we have now identified six variations to instantiate (two for each of the

disjuncts in Def4), and that individual illusions can actually instantiate at least two of these variations

simultaneously. Of course, without a specification of the relationship between correct and incorrect

representations, and of justifying arguments, we concede that we can’t literally construct the agents we

seek, but we can certainly at this point “hand simulate” the processes involved. Accordingly, here’s a new

illusion Bringsjord derived from the analysis we’ve produced to this point:

Illusion 4

(10)

The following three assertions are either all true or all false:

If Billy helped, Doreen helped.

If Doreen helped, Frank did as well.

If Frank helped, so did Emma.

(11)

The following assertion is definitely true: Billy helped.

Can it be inferred from (10) and (11) that Emma helped?

15

There are of course other ways to obtain B. For example, ignoring every fixed proof-theoretic system S, one could produce a “semantic” proof, one based, say, on a truth table. But we are assuming in accordance with |- that we are restricting the space of proofs in such a way that these kinds of proofs are inadmissible.

(If you're interested in pondering this illusion without knowing the answer, pause before reading the rest of

this paragraph.) Sure enough, in our preliminary results, most students, and even most professors, often

including, in both groups, even those who have had a course in logic, answer with “No.” The rationale they

give is that, “Well, if all three of the if-thens are true, then you can chain through them, starting with ‘Billy

helped,’ to prove that Emma helped. But you can’t do that if the three are false, and we’re told they might

be.” But this is an illusion. In the case of reports like these, the premises are represented correctly, but there

is an invalid argument for the belief that E can’t be deduced from the conjoined negated conditionals (while

they do have in mind a valid argument from the true conditionals and Billy helped to Emma

helped). This argument is invalid because if the conditionals are negated, then we have

¬(B → D)

¬(D → F)

¬(F → E)

But the first two of these yield by propositional logic the contradiction D ∧ ¬D (the second gives D, the

first gives ¬D). Given this, if we assume

that Emma failed to help, that is, ¬E, we can conclude by reductio ad absurdum that she did help, that is,

E.16 Notice that this solution makes use of both the rule that Rips says humans don’t have (a negated

conditional to the truth of its antecedent) and the rule that most psychologists of reasoning say humans

don’t have (reductio). In light of this, it’s noteworthy that a number of those to whom we presented Illusion

4 did produce the full solution we just explained.
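The answer to Illusion 4 can also be sanity-checked by brute force. The following is only a minimal sketch, and it is a semantic (truth-table) check rather than a proof in a fixed proof-theoretic system, so it is not the kind of derivation discussed above. The function names are hypothetical, and the conditionals are assumed to be material conditionals over four propositional variables (b, d, f, e for Billy, Doreen, Frank, and Emma helping).

```python
from itertools import product


def implies(p, q):
    """Material conditional: false only when p is true and q is false."""
    return (not p) or q


def premises_hold(b, d, f, e):
    """(10): the three conditionals are all true or all false; (11): Billy helped."""
    conditionals = [implies(b, d), implies(d, f), implies(f, e)]
    all_true = all(conditionals)
    all_false = not any(conditionals)
    return (all_true or all_false) and b


def models_of_premises():
    """Every truth assignment (b, d, f, e) that satisfies (10) and (11)."""
    return [v for v in product([True, False], repeat=4) if premises_hold(*v)]


def all_false_case_models():
    """Assignments that make all three conditionals false at once."""
    return [
        (b, d, f, e)
        for b, d, f, e in product([True, False], repeat=4)
        if not any([implies(b, d), implies(d, f), implies(f, e)])
    ]


def emma_helped_follows():
    """True when 'Emma helped' holds in every model of the premises."""
    return all(e for (_b, _d, _f, e) in models_of_premises())


if __name__ == "__main__":
    print(models_of_premises())
    print(all_false_case_models())
    print(emma_helped_follows())
```

Under these assumptions no assignment makes all three conditionals false, which is the semantic counterpart of the contradiction exploited in the reductio, so the only surviving case is the one in which the conditionals are all true.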

We next draw your attention to an interesting feature of Illusion 4, and use it as a stepping stone to our

theory of human reasoning: mental metalogic.

Mental MetaLogic: A Glimpse

Illusion 4 instantiates a variation in which cognizers conceive of disproofs. More specifically, a cognizer

fooled by Illusion 4 imagines an argument for the view that there is no way to derive Emma helped from

the negated trio of conditionals and Billy helped. Such arguments cannot be expressed in F. And when

-- in keeping with the version of psychologism that is guiding us -- we look to psychology of reasoning for

help in making sense of such arguments, we draw a blank. The reason for this is that psychology of

reasoning is dominated by two competing models of human reasoning (especially deductive reasoning),

and neither one allows for the expression of disproofs. The two theories are mental logic (ML) and mental

models (MM). ML is championed by Braine (1998) and Rips (1994), who hold that human reasoning is

based on syntactic rules like modus ponens (recall yet again the rule we earlier pulled from (Rips, 1994)

and displayed). MM is championed by Johnson-Laird (1983), who holds that humans reason on the basis

not of sequential inferences over sentence-like objects, but rather over possibilities or scenarios they in

some sense imagine. As many readers will know, the meta-theoretical component of modern symbolic

logic covers formal properties (e.g., soundness and completeness) that bridge the syntactic and semantic

components of logical systems. So, in selecting from either but not both of the syntactic or semantic

components of logical systems, psychologists of reasoning have, in a sense, broken the bridges built by

logicians and mathematicians. Mental logic theory and mental model theory are incompatible from the

perspective of symbolic logic: the former is explicitly and uncompromisingly syntactic, while the latter is

explicitly and uncompromisingly semantic. Our theory, mental metalogic (or just ‘MML'), bridges between

these two theories (see Figure 5).

16

A fully formal proof in F is shown in Figure 4. The predicate letter H is of course used here for ‘helped.’

More concretely, MML provides a mechanism which our agent A will

need if it is to simulate human cognizers who have beliefs about provability on the basis of valid and

invalid disproofs. In this mechanism, step-by-step proofs (including, specifically, disproofs) can at once be

syntactic and semantic, because situations can enter directly into line-by-line proofs. The HYPERPROOF

system of Barwise and Etchemendy (1994) can be conveniently viewed as a simplistic instantiation of part

of MML.17 In HYPERPROOF, one can prove such things as that not[Φ |- ψ]. Accordingly, let's suppose that in

Illusion 4, a “tricked” cognizer moves from a correct representation of the premises when (10)’s

conditionals are all true along with (11), to an incorrect representation when the conditionals in (10) are

false. Suppose, specifically, that the negated conditionals give rise to a situation, envisaged by the cognizer,

in which four people (objects represented as cubes) b, d, f, and e are present, the sentence Billy is

happy is explicitly represented by a corresponding formula, and the issue is whether it follows from this

given information that Emma is happy. This situation is shown in Figure 6. (We have moved from helping

to happiness because HYPERPROOF has ‘Happy’ as a built-in predicate.) Notice that the full logical import of

the negated conditionals is nowhere to be found in this figure. Next, given this starting situation, a disproof

in HYPERPROOF is shown in Figure 7. Notice that a new, more detailed situation has been constructed, one

which is consistent with the original given info (hence the CTA rule), and in which it is directly observed

that Emma isn’t happy. This demonstrates that Emma’s being happy can’t be deduced from the original

given information. Note that such demonstrations can be created and processed by computer programs in

general, and hence can be created and processed by our agent A.
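To illustrate how such a demonstration might be produced mechanically, here is a minimal sketch of a countersituation search. It is not HYPERPROOF and uses none of its interfaces; all names are hypothetical. It assumes the impoverished starting representation described above, in which the only given information is that Billy is happy, and it models a situation as an assignment of happy or not happy to each of the four people b, d, f, and e.

```python
from itertools import product

# The four people in the envisaged situation (hypothetical encoding).
PEOPLE = ("b", "d", "f", "e")


def consistent_with_given(situation):
    """The only given information in the impoverished representation: b is happy."""
    return situation["b"]


def find_countersituation(goal="e"):
    """Return a situation consistent with the givens in which `goal` is not happy.

    Finding one shows that 'goal is happy' cannot be deduced from the givens.
    Returns None when no such situation exists.
    """
    for values in product([True, False], repeat=len(PEOPLE)):
        situation = dict(zip(PEOPLE, values))
        if consistent_with_given(situation) and not situation[goal]:
            return situation
    return None


if __name__ == "__main__":
    print(find_countersituation("e"))
    print(find_countersituation("b"))
```

The search succeeds for Emma only because the full logical import of the negated conditionals is absent from the starting situation; a tricked cognizer's disproof is valid relative to that incorrect representation, not relative to the actual premises.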

We will stop at this point on the road toward A. Hopefully the reader now has a sense for how the kind of

psychologism we are promoting can be constructive from the standpoint of logicist AI. We end with a brief

discussion of our promised final point.

Mental MetaLogic and Machine Reasoning: Future Work for Our Brand of Psychologism

How productive can our version of psychologism be? What grander things might it enable in LAI? Well,

our hope is that it can make “Simon's Dream” a reality. What is Simon’s Dream? It is a dream that Herb

Simon, one of the visionary founders of AI (and, for that matter, of LAI, specifically) expressed in the

Summer before he died: viz., to build an AI system able to produce conjectures, theorems, and proofs of

these theorems as intricate, interesting, subtle, robust, profound, etc. as those produced by human

mathematicians and logicians. In this talk Simon pointed out something that everybody in the relevant

disciplines readily concedes: machine reasoning, when stacked against professional human reasoning, is

laughably primitive. For example, John Pollock, before delivering a recent talk at RPI on his system for

automated reasoning, OSCAR, conceded that all theorem provers, including his own, are indeed primitive

when stacked against expert human reasoners, and he expressed a hunch that one of the reasons is that such

provers don’t use diagrammatic, pictorial, or spatial reasoning: they can all be viewed as machine

incarnations of mental logic. Simon’s “condemnation” of today's machine reasoning has also been carefully

expressed in specific connection with Gödel’s incompleteness theorems: Bringsjord (1998) has explained

why the well-known and highly regarded prover OTTER, despite claims to the contrary, doesn’t really prove

Gödel's incompleteness results in the least.

We take this quote from Jacquette very seriously: “Logic can be understood as descriptive of how some

reasoning occurs, at the very least the reasoning of certain logicians.” (Jacquette 1997, p. 324) We

specifically submit that mental metalogic, used in conjunction with our Jacquette-inspired variety of

psychologism, is AI's best bet for reaching Simon's Dream. As we have said, expert reasoners often

explicitly work within a system that is purely syntactic (and hence within a system that relates only to

mental logic) -- but it's also undeniable that such reasoners often work on the semantic side.

17

We have our own, more sophisticated instantiations of MML, but HYPERPROOF is simpler, is widely

used in teaching philosophy and computer science, and in the present context is perfectly sufficient.

Roger Penrose

has recently provided us with an interesting example of semantic reasoning: He gives the case of the

mathematician who is able to see via images of the sort that are shown in Figures 8 and 9 that adding

together successive hexagonal numbers, starting with 1, will always yield a cube.
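The arithmetic claim itself is easy to check numerically. The sketch below assumes that "hexagonal numbers" here means the centered hexagonal numbers 1, 7, 19, 37, ..., given by 3n(n-1)+1; the function names are hypothetical. A finite check of this kind is of course not a proof, and it is precisely not the diagrammatic insight attributed to the mathematician; it only confirms the pattern for the cases tested.

```python
def centered_hexagonal(n):
    """n-th centered hexagonal number (1, 7, 19, 37, ...), for n >= 1."""
    return 3 * n * (n - 1) + 1


def sum_of_first(n):
    """Sum of the first n centered hexagonal numbers."""
    return sum(centered_hexagonal(k) for k in range(1, n + 1))


def check_up_to(limit):
    """True when the sum of the first n such numbers equals n**3 for n = 1..limit."""
    return all(sum_of_first(n) == n ** 3 for n in range(1, limit + 1))


if __name__ == "__main__":
    print([centered_hexagonal(k) for k in range(1, 5)])
    print(check_up_to(200))
```

The identity holds in general because 3n(n-1)+1 equals n^3 - (n-1)^3, so the sum telescopes.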

MML (Rinella et al., 2001; Yang & Bringsjord, in press), as we’ve said, is a theory of reasoning that draws

from the proof theoretic side of symbolic logic, the semantic (and therefore diagrammatic) side, and the

content in between: metatheory. Furthermore, while theories of reasoning in psychology and cognitive

science have to this point been restricted to elementary reasoning (e.g., the propositional and predicate

calculi), MML includes psychological correlates to all the different forms of deductive reasoning that we

find in relevant human experts; for example, modal, temporal, deontic, and conditional reasoning. Accordingly,

MML can serve as the basis of an automated reasoning system that realizes Simon’s Dream, and we will

attempt to demonstrate this -- not now, but in tomorrow's work arising from the psychologism we have

described today.

Bibliography

Barwise, J. & Etchemendy, J. (1994), Hyperproof, CSLI, Stanford, CA.

Barwise, J. & Etchemendy, J. (1999), Language, Proof, and Logic, Seven Bridges, New York, NY.

Braine, M. (1998), Steps toward a mental predicate-logic, in M. Braine & D. O'Brien, eds, ‘Mental Logic’,

Lawrence Erlbaum Associates, Mahwah, NJ, pp. 273-331.

Bringsjord, S. (1998), Is Gödelian model-based deductive reasoning computational?, Philosophica 61, 51-76.

Bringsjord, S. & Ferrucci, D. (1998), Logic and artificial intelligence: Divorced, still married, separated...?,

Minds and Machines 8, 273-308.

Bringsjord, S. & Ferrucci, D. (2000), Artificial Intelligence and Literary Creativity: Inside the Mind of

Brutus, a Storytelling Machine, Lawrence Erlbaum, Mahwah, NJ.

Jacquette, D. (1997), Psychology and the philosophical shibboleth, Philosophy and Rhetoric 30(3), 312-331.

Johnson-Laird, P. (1997a), Rules and illusions: A critical study of Rips's The Psychology of Proof, Minds

and Machines 7, 387-407.

Johnson-Laird, P. N. (1983), Mental Models, Harvard University Press, Cambridge, MA.

Johnson-Laird, P. N. (1997b), An end to the controversy? A reply to Rips, Minds and Machines 7, 425-432.

Johnson-Laird, P. & Savary, F. (1995), How to make the impossible seem probable, in ‘Proceedings of the

17th Annual Conference of the Cognitive Science Society’, Lawrence Erlbaum Associates, Hillsdale, NJ,

pp. 381-384.

Rinella, K., Bringsjord, S. & Yang, Y. (2001), Efficacious logic instruction: People are not irremediably

poor deductive reasoners, in J. D. Moore & K. Stenning, eds, ‘Proceedings of the Twenty-Third Annual

Conference of the Cognitive Science Society’, Lawrence Erlbaum Associates, Mahwah, NJ, pp. 851-856.

Rips, L. (1994), The Psychology of Proof, MIT Press, Cambridge, MA.

Russell, S. & Norvig, P. (1994), Artificial Intelligence: A Modern Approach, Prentice Hall, Saddle River,

NJ.

Stanovich, K. E. & West, R. F. (2000), Individual differences in reasoning: Implications for the rationality

debate, Behavioral and Brain Sciences 23(5), 645-665.

Yang, Y. & Bringsjord, S. (2001), Mental metalogic: A new paradigm for psychology of reasoning, in ‘The

Proceedings of the Third International Conference on Cognitive Science’, Press of the University of

Science and Technology of China, Hefei, China, pp. 199-204.

Yang, Y. & Johnson-Laird, P. N. (2000), How to eliminate illusions in quantified reasoning, Memory &

Cognition 28(6), 1050-1059.