SMA 5505 Project Final Report

Parallel SMO for Training Support Vector Machines

May 2003

Deng Kun

Lee Yih

Asankha Perera

Table of Contents

1 Introduction
2 Support Vector Machines (SVM)
    2.1 Introduction to SVM
    2.2 General Problem Formulation
    2.3 The Karush-Kuhn-Tucker (KKT) Condition
    2.4 A Brief Survey of SVM Algorithms
3 Sequential Minimal Optimization (SMO)
    3.1 The SMO Algorithm
    3.2 Keerthi's Improvement to SMO
    3.3 Parallel SMO
        3.3.1 Partitioning
        3.3.2 Combination and Retraining
4 An Implementation of Parallel SMO using Cilk
5 An Implementation of Parallel SMO using Java Threads
    5.1 Java Implementation details
    5.2 Kernel Cache
    5.3 Java Results
        5.3.1 Results on Sunfire
6 Conclusion

Abstract

The Support Vector Machine (SVM) is a technique for pattern classification that has grown in popularity in recent years and has been used in many fields, including bioinformatics. Several recent results improve on various SVM training algorithms. Sequential Minimal Optimization (SMO) is one of the fastest and most popular of these algorithms. This report describes a parallelization of SMO. The main idea is to partition the training data into several parts, train on these parts in parallel, combine the results, and then retrain on the combined result. We have implemented this idea both in Cilk [13] and with Java threads. We also consider newer techniques such as Keerthi's improvement [8] to SMO.

1 Introduction

The Support Vector Machine (SVM) is an algorithm that was developed for pattern classification but has recently been adapted for other uses, such as regression and distribution estimation. It has been used in many fields, such as bioinformatics, and is currently a very active research area in many universities and research institutes, including the National University of Singapore (NUS) and the Massachusetts Institute of Technology (MIT).

Since its introduction in 1979 by Vapnik [12], various improvements and new algorithms have been developed. Currently, the most popular algorithms are based on the decomposition idea of [10]. One example is the Sequential Minimal Optimization (SMO) algorithm [11].

The rest of the report is organized as follows. Section 2 introduces the Support Vector Machine (SVM). Section 3 discusses the SMO algorithm and describes how we parallelize it. Section 4 describes the Cilk implementation, and Section 5 describes an implementation using Java threads. Section 6 concludes.

2 Support Vector Machines (SVM)

2.1 Introduction to SVM

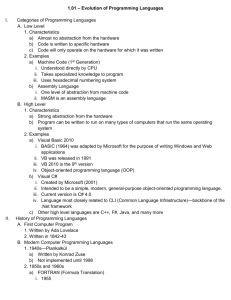

Although the SVM can be applied to various optimization problems such as regression,

the classic problem is that of data classification. The basic idea is shown in figure 1. The

data points are identified as being positive or negative, and the problem is to find a hyperplane that separates the data points by a maximal margin.

Figure 1: Data Classification

The above figure only shows the 2-dimensional case where the data points are linearly

separable. The mathematics of the problem to be solved is the following:

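$$
\begin{aligned}
\min_{w,b} \quad & \frac{1}{2}\lVert w \rVert^{2} \\
\text{s.t.} \quad & y_i = +1 \;\Rightarrow\; w \cdot x_i - b \ge 1, \\
& y_i = -1 \;\Rightarrow\; w \cdot x_i - b \le -1, \\
\text{i.e.} \quad & y_i \,(w \cdot x_i - b) \ge 1, \quad \forall i
\end{aligned}
\tag{1}
$$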

The label of each data point $x_i$ is $y_i$, which takes the value +1 or -1 (representing positive or negative, respectively). The solution hyperplane is the following:

$$
u = w \cdot x - b \tag{2}
$$

The scalar $b$ is also termed the bias.

A standard method to solve this problem is to apply the theory of Lagrange to convert it

to a dual Lagrangian problem. The dual problem is the following:

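$$
\begin{aligned}
\min_{\alpha} \Psi(\alpha) = \min_{\alpha} \quad & \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} y_i y_j \,(x_i \cdot x_j)\, \alpha_i \alpha_j - \sum_{i=1}^{N} \alpha_i \\
\text{s.t.} \quad & \sum_{i=1}^{N} y_i \alpha_i = 0, \\
& \alpha_i \ge 0, \quad \forall i
\end{aligned}
\tag{3}
$$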

The variables $\alpha_i$ are the Lagrange multipliers, one for each data point $x_i$.

2.2 General Problem Formulation

In general, we want non-linear separators. A solution is to map the data points into a higher-dimensional space (depending on the non-linearity required) so that the problem is linear in this space. For certain classes of mappings, the dot product in equation (3) can be computed easily with a corresponding "kernel function". This means that instead of explicitly mapping a pair of data points (xi, xj) into the higher-dimensional space before performing the dot product, we can simply evaluate the kernel K(xi, xj).

Some of the common kernel functions are:
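As an illustration, three kernels that are widely used in the SVM literature (linear, polynomial and Gaussian RBF) can be sketched as follows. The function names and the default values of `degree`, `coef0` and `gamma` are assumptions made for this example, not parameters taken from this report.

```python
import math


def linear_kernel(x, z):
    """Plain dot product: K(x, z) = x . z"""
    return sum(a * b for a, b in zip(x, z))


def polynomial_kernel(x, z, degree=2, coef0=1.0):
    """Polynomial kernel: K(x, z) = (x . z + coef0) ** degree"""
    return (linear_kernel(x, z) + coef0) ** degree


def rbf_kernel(x, z, gamma=0.5):
    """Gaussian (RBF) kernel: K(x, z) = exp(-gamma * ||x - z||^2)"""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * sq_dist)
```

Each function takes two points as plain Python sequences of numbers, so any of them can stand in for K(xi, xj) in the formulas of this section.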

In the real world, data points may not be separable at all. What is generally done is to introduce slack variables into the objective function, together with a new parameter C. This parameter C allows the user to assign a level of penalty to erroneous classifications. A large value of C gives a high penalty to erroneous classifications of data points, while a small value gives a smaller penalty.

The general optimization problem can also be solved by means of the dual Lagrangian. The optimization problem to be solved is as follows:

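$$
\begin{aligned}
\min_{\alpha} \Psi(\alpha) = \min_{\alpha} \quad & \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} y_i y_j \,K(x_i, x_j)\, \alpha_i \alpha_j - \sum_{i=1}^{N} \alpha_i \\
\text{s.t.} \quad & \sum_{i=1}^{N} y_i \alpha_i = 0, \\
& C \ge \alpha_i \ge 0, \quad \forall i
\end{aligned}
\tag{4}
$$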

The solution is given by the formula:

$$
u(x) = \sum_{i=1}^{N} \alpha_i y_i K(x_i, x) - b \tag{5}
$$
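A minimal sketch of how equation (5) is evaluated, assuming the sign convention u(x) = Σ αi yi K(xi, x) - b and a linear kernel by default. The names `decision_function` and `classify` are hypothetical helpers introduced only for this illustration.

```python
def dot(x, z):
    """Linear kernel used as the default K(x, z)."""
    return sum(a * b for a, b in zip(x, z))


def decision_function(x, alphas, ys, xs, b, kernel=dot):
    """Evaluate u(x) = sum_i alpha_i * y_i * K(x_i, x) - b."""
    return sum(a * y * kernel(x_i, x) for a, y, x_i in zip(alphas, ys, xs)) - b


def classify(x, alphas, ys, xs, b, kernel=dot):
    """Predict +1 or -1 from the sign of u(x)."""
    return 1 if decision_function(x, alphas, ys, xs, b, kernel) >= 0 else -1
```

For example, with the two one-dimensional training points x = 3 (label +1) and x = 1 (label -1), the multipliers are both 0.5 and the bias is 2, so u(3) = 1 and u(1) = -1, which places both points exactly on the margin.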

2.3 The Karush-Kuhn-Tucker (KKT) Condition

The theory of quadratic programming shows that the KKT condition is both necessary and sufficient for a solution of the problem described by equation (4) to be optimal. The KKT condition for equation (4) is the following set of conditions:

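$$
\begin{aligned}
\alpha_i = 0 \;&\Rightarrow\; y_i u_i \ge 1, \\
0 < \alpha_i < C \;&\Rightarrow\; y_i u_i = 1, \\
\alpha_i = C \;&\Rightarrow\; y_i u_i \le 1,
\end{aligned}
\tag{6}
$$

where $u_i = u(x_i)$.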

As a note, the dual Lagrangian problem solves for the Lagrange multipliers $\alpha_i$ and does not directly yield the bias $b$ that is used in the function $u$ (equation (5)). The bias can be computed from the KKT conditions, because when $0 < \alpha_i < C$ (the second statement in equation (6)), $u_i = 1/y_i = y_i$. For each such $\alpha_i$ we can compute a value $b_i$, and we can set $b$ to the average of these $b_i$. Keerthi's improvement to the SMO algorithm, described in Section 3.2, instead computes a lower bound and an upper bound on $b$ rather than $b$ itself.
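The averaging of the bias described above can be sketched as follows. This is only an illustration under stated assumptions: it uses the sign convention u(x) = Σ αj yj K(xj, x) - b, so that each non-bound point gives bi = Σ αj yj K(xj, xi) - yi, and the function name `average_bias` and the tolerance `eps` are hypothetical.

```python
def dot(x, z):
    """Linear kernel used as the default K(x, z)."""
    return sum(a * b for a, b in zip(x, z))


def average_bias(alphas, ys, xs, C, kernel=dot, eps=1e-12):
    """Average b_i over the non-bound multipliers (0 < alpha_i < C).

    For a non-bound point, y_i * u_i = 1 and therefore u_i = y_i, which
    gives b_i = sum_j alpha_j * y_j * K(x_j, x_i) - y_i.
    """
    estimates = []
    for a_i, y_i, x_i in zip(alphas, ys, xs):
        if eps < a_i < C - eps:
            s = sum(a * y * kernel(x, x_i) for a, y, x in zip(alphas, ys, xs))
            estimates.append(s - y_i)
    if not estimates:
        raise ValueError("no non-bound multipliers to estimate the bias from")
    return sum(estimates) / len(estimates)
```

With the training points x = 3 (label +1) and x = 1 (label -1), multipliers 0.5 and 0.5, and C = 1, both points are non-bound and each gives bi = 2, so the averaged bias is 2.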

2.4 A Brief Survey of SVM Algorithms

For small problems, traditional algorithms from optimization theory exist. Examples include conjugate gradient descent and interior-point methods. For larger problems, these algorithms do not work well because of the large space required to store the kernel matrix. They are also often slow because they do not exploit the characteristics of real-world SVM problems, for example that the support vectors are usually sparse.

Existing algorithms can be classified into three classes:

1. Algorithms where the kernel components are evaluated and discarded during learning

These methods are reportedly slow and require multiple scans of the dataset.

2. Decomposition and chunking methods

The main idea of these algorithms is that a small subset of the data is selected heuristically for local training. If the result of the local training does not give a global optimum, the subset is reselected or modified and trained again. The process iterates until a global optimum is achieved. The SMO belongs to this class.

3. Other methods

There are currently many new methods under research and development.

Some of the SVM algorithms can be parallelized, but very few parallel SVM algorithms exist. In [5], a possible parallelization of a conjugate gradient method using Matlab*P is described.

3 Sequential Minimal Optimization (SMO)

3.1 The SMO Algorithm

The SMO algorithm was developed by Platt [11], based on Osuna's idea [10], and later refined by Keerthi [8]. A more detailed description of the SVM can be found in [4], while [2] provides an introduction.

Osuna's decomposition algorithm works by choosing a small subset of the data set, called the working set, and solving the related subproblem defined by the variables in the working set. At each iteration, a strategy replaces some of the variables in the working set with variables not in the working set. Osuna's results show that the algorithm converges to the globally optimal solution.

Platt took the decomposition to the extreme by using a working set of only 2 variables. This allows each subproblem to be solved analytically in closed form. In the general case, when the working set is large, an analytical solution may not exist, and a numerical solution must be computed. Platt's idea greatly improves efficiency, and the SMO is now one of the fastest SVM algorithms available.

A very simplified pseudo code of the SMO algorithm is as follows:

1. Loop until no improvements are possible
2.     Use heuristics to select two multipliers a1 and a2
3.     Optimize by assuming all other multipliers are constant
4. End Loop

In our implementation, we use the exact algorithm used by Platt. Two different heuristics are used to choose a1 and a2. In step 3, the analytic solution is applied to determine the optimal values of a1 and a2. Step 3 is called "take step" because it is like taking a small step towards an optimal solution. The SMO algorithm is therefore a sequential process in which a small step towards the optimization target is taken each time.
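The analytic update performed in step 3 can be sketched as below. This is a simplified reading of the two-multiplier update from Platt's SMO paper [11], not the code used in this project: the errors are assumed to be e_i = u_i - y_i, degenerate cases (no feasible interval, or a non-positive second derivative `eta`) are simply skipped, and the bias and error-cache updates are left out. The function and parameter names are hypothetical.

```python
def take_step(a1, a2, y1, y2, e1, e2, k11, k12, k22, C):
    """Jointly optimize two multipliers while all others stay fixed.

    e1 and e2 are the current errors u_i - y_i of the two points, and
    k11, k12, k22 are the kernel values between them.
    Returns the pair (new_a1, new_a2).
    """
    # Feasible interval for a2 so that both multipliers stay in [0, C]
    # and the constraint sum_i y_i * alpha_i = 0 is preserved.
    if y1 != y2:
        low, high = max(0.0, a2 - a1), min(C, C + a2 - a1)
    else:
        low, high = max(0.0, a1 + a2 - C), min(C, a1 + a2)

    # Second derivative of the objective along the constraint line.
    eta = k11 + k22 - 2.0 * k12
    if low >= high or eta <= 0.0:
        return a1, a2  # simplification: no step in degenerate cases

    # Unconstrained optimum for a2, clipped to [low, high].
    new_a2 = a2 + y2 * (e1 - e2) / eta
    new_a2 = min(high, max(low, new_a2))

    # Move a1 in the opposite direction to keep the equality constraint.
    new_a1 = a1 + y1 * y2 * (a2 - new_a2)
    return new_a1, new_a2
```

For the two points x = 3 (label +1) and x = 1 (label -1) with a linear kernel, starting from a1 = a2 = 0 and b = 0, the errors are -1 and +1, and a single call with C = 1 moves both multipliers to 0.5, which is the optimum of this two-point problem.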

3.2 Keerthi's Improvement to SMO

One major problem with averaging the bias b is that it lacks a theoretical basis. Another is that, when retraining starts from the averaged bias, the convergence speed is not guaranteed: sometimes it is faster and sometimes it is slower. Keerthi's paper [8] suggests a better way to handle the bias, and the method is also suitable for our parallelized algorithm.

Suppose our classifier is

$$
u(x) = \sum_{i=1}^{N} \alpha_i y_i K(x_i, x) - b .
$$

We define

$$
F_i = \sum_{j=1}^{N} \alpha_j y_j K(x_j, x_i) - y_i
$$

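The KKT condition can be rewritten as

$$
\begin{array}{lll}
\text{Case 1:} & \alpha_i = 0 & (F_i - b)\, y_i \ge 0 \\
\text{Case 2:} & 0 < \alpha_i < C & (F_i - b)\, y_i = 0 \\
\text{Case 3:} & \alpha_i = C & (F_i - b)\, y_i \le 0
\end{array}
$$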

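By further classifying the points according to the possible combinations of $\alpha_i$ and $y_i$, we have:

$$
\begin{aligned}
b \le F_i \quad & \text{for } i \in I_0 \cup I_1 \cup I_2 \\
b \ge F_i \quad & \text{for } i \in I_0 \cup I_3 \cup I_4
\end{aligned}
$$

where $I_0, I_1, I_2, I_3, I_4$ are defined as follows:

$$
\begin{aligned}
I_0 &= \{ i : 0 < \alpha_i < C \} \\
I_1 &= \{ i : y_i = +1,\ \alpha_i = 0 \} \qquad I_2 = \{ i : y_i = -1,\ \alpha_i = C \} \\
I_3 &= \{ i : y_i = +1,\ \alpha_i = C \} \qquad I_4 = \{ i : y_i = -1,\ \alpha_i = 0 \}
\end{aligned}
$$

So if we define

$$
\begin{aligned}
b_{low} &= \max \{ F_i : i \in I_0 \cup I_3 \cup I_4 \} \\
b_{up} &= \min \{ F_i : i \in I_0 \cup I_1 \cup I_2 \}
\end{aligned}
$$

the optimality condition is equivalent to $b_{low} \le b_{up}$.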

We use this fact as follows. First, our basic SMO solver is rewritten to use b_low ≤ b_up as the stopping criterion. Then, when retraining, instead of averaging the values of b, we simply recalculate b_low and b_up for the new problem, as they are determined only by the multipliers and labels of the samples. In this case, the algorithm is guaranteed to converge. Experiments show that the improved algorithm runs about 2 to 3 times faster than the original one, and we did not encounter any case of non-convergence with this method.
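The stopping criterion can be sketched as follows, assuming the values Fi are already available (for example from an error cache). The index sets follow the definitions of I0 to I4 given above. The function names, the bound tolerance `eps`, and the slack `tol` added to the comparison are assumptions for this illustration; a numerical solver needs some tolerance of this kind, but its form here is not taken from this report.

```python
def bias_bounds(alphas, ys, fs, C, eps=1e-12):
    """Return (b_low, b_up) from the multipliers, labels and values F_i."""
    b_low, b_up = float("-inf"), float("inf")
    for a, y, f in zip(alphas, ys, fs):
        at_zero = a <= eps
        at_c = a >= C - eps
        in_i0 = not at_zero and not at_c
        # I0, I1 (y = +1, alpha = 0) and I2 (y = -1, alpha = C): b <= F_i
        if in_i0 or (y == 1 and at_zero) or (y == -1 and at_c):
            b_up = min(b_up, f)
        # I0, I3 (y = +1, alpha = C) and I4 (y = -1, alpha = 0): b >= F_i
        if in_i0 or (y == 1 and at_c) or (y == -1 and at_zero):
            b_low = max(b_low, f)
    return b_low, b_up


def is_optimal(alphas, ys, fs, C, tol=1e-3):
    """Check b_low <= b_up, allowing a small numerical slack."""
    b_low, b_up = bias_bounds(alphas, ys, fs, C)
    return b_low <= b_up + 2.0 * tol
```

For the two points x = 3 (label +1) and x = 1 (label -1) with a linear kernel, the starting point alpha = (0, 0) gives F = (-1, 1), so b_low = 1 and b_up = -1 and the check fails. At the solution alpha = (0.5, 0.5), both F values equal 2 and the check passes.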

3.3 Parallel SMO

At each step (step 3 in the pseudo code), the algorithm solves the optimization problem by considering just two multipliers. We parallelize by partitioning the training set into smaller parts, optimizing the parts in parallel, and combining the results.
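The overall structure of the scheme can be sketched with a thread pool, in the spirit of the spawn/sync pattern. Here `train` stands for any sequential solver applied to one part, and both `train_in_parallel` and `train` are hypothetical names. Note that in CPython, threads do not speed up CPU-bound pure-Python code, so this only illustrates the structure. A process pool would be needed for a real speed-up.

```python
from concurrent.futures import ThreadPoolExecutor


def train_in_parallel(parts, train, max_workers=None):
    """Run train(part) on every part concurrently.

    The returned list has one result per part, in the same order as parts.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() submits all parts, and list() waits for all of them,
        # which plays the role of a "sync" after several "spawn"s.
        return list(pool.map(train, parts))
```

A call such as `train_in_parallel([part_a, part_b], train)` returns the two sub-solutions, which are then passed to the combination step.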

3.3.1 Partitioning

If we partition the data randomly, the solutions of the different parts should be close to each other. Probabilistically, it is unlikely that the data set is partitioned so badly that the solutions are very different. An example of a bad partition is shown in figure 2 below.

Figure 2: Bad Partitioning

The two closed curves define 2 partitions of the data set. The partitions are labeled "Dataset1" and "Dataset2". The linear separator for Dataset1 is a line going from bottom left to top right, while the linear separator for Dataset2 is a line going from top left to bottom right. Therefore, the solutions of the 2 partitions are completely different and incompatible.

Although we are aware of this problem, it is unlikely to occur if the partitioning is random and each partition is large enough. If random partitioning is used and the different partitions still give very different results, it probably means that each partition is too small. This can be because the dataset is too small, or because the dataset has been partitioned into too many parts.
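A random partition of the kind discussed above can be sketched by shuffling the point indices and dealing them out in turn. The function name `random_partition` and the `seed` parameter are assumptions for this illustration.

```python
import random


def random_partition(n_points, n_parts, seed=None):
    """Shuffle the indices 0..n_points-1 and deal them into n_parts lists.

    The part sizes differ by at most one, and every index appears in
    exactly one part.
    """
    indices = list(range(n_points))
    random.Random(seed).shuffle(indices)
    return [indices[k::n_parts] for k in range(n_parts)]
```

For example, `random_partition(2000, 4)` returns four lists of 500 indices each, which can be used to select the rows of the training set for each sub-problem.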

3.3.2 Combination and Retraining

The combination is quite easy. The lists of multipliers can simply be joined together. The main problem is how to combine the different biases from the different partitions. We use a weighted average of all the biases, where the weight is the proportion of non-bounded multipliers. Experimentally, this is slightly better than a simple average, and the combined result is quite an accurate estimate of an optimal solution for the test datasets that we use. We show the results in Section 5.

We also tried training either the entire data set or parts of the data set again after combination. In other words, we use the results from the parallel computation as starting values for the final optimization. Our results show that this does allow faster optimization, as shown in Sections 4 and 5.
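The combination step can be sketched as follows. The weighting is one possible reading of the description above: each partition's bias is weighted by its share of the non-bounded multipliers (those strictly between 0 and C). The function name `combine`, the tolerance `eps`, and the fallback to a simple average when no multiplier is non-bounded are assumptions for this illustration.

```python
def combine(results, C, eps=1e-12):
    """Join the sub-solutions of all partitions.

    results is a list of (alphas, bias) pairs, one per partition.
    Returns (all_alphas, combined_bias).
    """
    all_alphas = []
    weighted_sum = 0.0
    total_non_bound = 0
    for alphas, bias in results:
        all_alphas.extend(alphas)
        non_bound = sum(1 for a in alphas if eps < a < C - eps)
        weighted_sum += non_bound * bias
        total_non_bound += non_bound
    if total_non_bound == 0:
        # Assumed fallback: simple average of the biases.
        return all_alphas, sum(b for _, b in results) / len(results)
    return all_alphas, weighted_sum / total_non_bound
```

The joined list of multipliers must follow the same order as the joined data points, so that each multiplier still refers to its own point when the result is used as the starting value for retraining.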

4 An Implementation of Parallel SMO using Cilk

The parallel SMO has been implemented using Cilk [13]. We used the NUS sunfire machine for both development and testing.

This implementation does not include Keerthi's improvement. Due to time constraints, it also does not include many of the caching techniques that we use in our Java implementation.

We tested the program on a test data set, in two cases: without any retraining, and with retraining of all data after combination. The test data consisted of 2000 points, each with 9947 dimensions. The following chart (Figure 3) shows the result when we partition the data into 2 parts.

The sequential program is the same Cilk program without the Cilk keywords. The same optimization flag (-O3) is used for compiling both programs.

[Plot: time (sec) versus number of data points. Curves: Sequential; 1 Proc, no Retrain; 2 Proc, no Retrain; 2 Proc, Retrain.]

Figure 3: Cilk Result (2 Partitions)

The result shows that retraining the combined result performs quite poorly: there is in fact a slowdown relative to the sequential program. The difference between the line for parallel computation using 2 processors without retraining and the line for the same computation with retraining indicates that the final retraining process takes a lot of time. In fact, training on the entire data set is faster than retraining starting from the combined result.

The next graph (Figure 4), however, shows a different result. For this graph, we ran the program again, this time partitioning the data into 4 parts. The result shows good improvements in the timings: the overall training time is actually reduced.

Due to time constraints, we did not test the program on various data sets. Thus, the result is not very conclusive.

[Plot: time (sec) versus number of data points. Curves: Sequential; 1 Proc, no Retrain; 4 Proc, no Retrain; 4 Proc, Retrain.]

Figure 4: Cilk Result (4 Partitions)

The parallelism as reported by the Cilk program shows the following result:

| | No-Retrain | Retrain |
|---|---|---|
| 2 SubProblems | 1.8 | 1.1 |
| 4 SubProblems | 2.5 | 1.1 |

The result is not unexpected. It shows that the final retraining takes up the bulk of the time: with retraining, the parallelism is close to 1.

5 An Implementation of Parallel SMO using Java Threads

We make use of the Colt distribution, a high-performance Java package that provides functions similar to Cilk's "spawn" and "sync" commands. Furthermore, we implemented Keerthi's improvement. We also use a caching technique to further improve efficiency.

5.1 Java Implementation details

When planning the implementation, we considered Java. We chose Java as one of the implementation languages because of its growing popularity, even in scientific computing. Thanks to its platform independence and the conveniences of the language itself (for example, dynamic arrays and garbage collection), scientists can code their algorithms without worrying much about unrelated issues such as memory allocation, and without repeatedly designing standard data structures such as lists or hash tables. The spirit of "write once, run anywhere" is also appealing for scientific research, because it lets people exchange their research results more quickly. One example from our courses is that, since the release of Matlab 6, JIT compilation technology for Java has also been used in the Matlab environment to boost performance.

a. However, a big problem with Java is still speed. There have been many efforts to improve it, which can be summarized as follows:

i. Concurrent Java packages, that is, Java with a thread library.

ii. High-performance Java Virtual Machines with JIT and "hot-spot" compilation.

iii. Native and optimizing Java compilers.

iv. Parallel Java Virtual Machines and distributed grid computing.

v. Java with parallel computation libraries.

vi. Java dialects with special support for parallel and scientific computing.

b. In this project, we chose the simplest option, namely a concurrent Java package. Part of our goal was to gain experience with the following questions:

i. How slow or fast can Java be? Can we improve it by using the above methods?

ii. What is the bottleneck of a Java program? How can it be improved?

c. From experiments, we have gained some experience (or lessons) that might be useful for people who want to solve mathematical problems in Java.

i. For the sequential version of the SMO algorithm, we also tried both IBM's fast JVM and a native compiler for Java called Jet™. We tested our SMO program against the corresponding C++ program compiled with -O3 optimization, and found that with these techniques Java achieves at most 70% of the performance of the equivalent C++ program. So one lesson is: if speed matters more than anything else and the solution is not parallelized either, do not use Java.

ii. According to our statistics, the main bottleneck is dynamic object creation. To write faster Java code, we have to avoid unnecessary temporary variables as much as possible. Sometimes we also have to avoid the object-oriented programming style and stick to C's simple function-partitioning style. The dilemma is that programs written this way tend to be more difficult to understand and debug.

iii. However, for our parallel version of the SMO algorithm, we found Java very good for fast prototyping. The coding time can be as little as ¼ of that for the same program in C++. Because SMO is mainly a mathematical problem, most of the time is spent understanding the algorithm and the theory behind it. Once we understood the algorithm, coding it in Java was extremely fast. Also, the speed-up ratio gained in Java (sequential Java vs. parallel Java) is about the same as when we coded the whole program in Cilk (sequential C vs. Cilk).

iv. More speed-up can be achieved by improving the algorithm than by using a faster language implementation. This is an extremely important lesson that we learned in this project. We discuss this in the next section.

5.2 Kernel Cache

Profiling the Java code using JProbe [14], we found that the evaluation of kernel values takes close to 90% of the total execution time. Hence, efficiently caching the kernel values evaluated for pairs of points could improve the overall performance of the system by saving time during error cache updates and the "take step" process (see Section 3.1). However, a straightforward implementation of a cache using a Java hash table, for data points with thousands of dimensions, is expensive both in time (computation for lookup and checking for equivalence) and in space (number of objects created and garbage collection overhead). Hence, we assigned a unique prime number to each point as an ID when the points were loaded into the system. Subsequently, as new kernel values are evaluated for pairs of points, we store each result in a hash table, using the product of the (prime) IDs of the two points as the key. When the code was profiled again, this was shown to improve the performance of the cache drastically. We also maintained the cache as a Least Recently Used (LRU) cache, to limit the amount of space used by the cache while still providing adequate performance.
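The caching idea can be sketched as follows. This is an illustration in Python rather than the Java code of the project, and the class name `KernelCache`, the helper `first_primes` and the `misses` counter are hypothetical. Because every integer has a unique prime factorization, the product of two prime IDs identifies the unordered pair of points, so K(xi, xj) and K(xj, xi) share one entry. Python integers do not overflow, whereas a fixed-width integer key would need care for large data sets.

```python
from collections import OrderedDict
from itertools import count


def first_primes(n):
    """Return the first n primes by trial division (adequate for a sketch)."""
    primes = []
    for candidate in count(2):
        if len(primes) == n:
            return primes
        if all(candidate % p for p in primes if p * p <= candidate):
            primes.append(candidate)


class KernelCache:
    """LRU cache of kernel values keyed by the product of prime point IDs."""

    def __init__(self, points, kernel, capacity):
        self.points = points
        self.kernel = kernel
        self.capacity = capacity
        self.ids = first_primes(len(points))  # one prime ID per point
        self.store = OrderedDict()            # key -> kernel value, LRU order
        self.misses = 0

    def get(self, i, j):
        key = self.ids[i] * self.ids[j]       # same key for (i, j) and (j, i)
        if key in self.store:
            self.store.move_to_end(key)       # mark as most recently used
            return self.store[key]
        self.misses += 1
        value = self.kernel(self.points[i], self.points[j])
        self.store[key] = value
        if len(self.store) > self.capacity:
            self.store.popitem(last=False)    # evict the least recently used
        return value
```

A solver would create one `KernelCache` per training run and call `cache.get(i, j)` wherever it would otherwise evaluate the kernel directly. The capacity bounds the memory used, at the cost of recomputing evicted values.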

5.3 Java Results

The Java program was evaluated according to the following four methods.

Method 0: Sequential algorithm

Method 1: Combine and retrain support vectors

Method 2: Combine and retrain all error points

Method 3: Combine without retraining

| Method | Validation accuracy (points) | Validation accuracy (%) | Duration (s) |
|---|---|---|---|
| Method 0 | 1971 | 98.6 | 270.914 |
| Method 1 | 1975 | 98.8 | 253.757 |
| Method 2 | 1975 | 98.8 | 329.265 |
| Method 3 | 1980 | 99 | 199.422 |

The above table shows the results for a data set of 2000 points, each with 9947 dimensions. The accuracy of all methods is comparable, and the parallel methods achieve the same level of accuracy as the sequential algorithm. Methods 1 and 3 do so in less time, while Method 2 takes longer. The durations stated above include the time to load the data.

5.3.1 Results on Sunfire

[Plot: time (ms, axis scaled by 10^5) versus number of points. Curves: Method 0 (Sequential); Method 1 (Combine + Retrain support vectors); Method 2 (Combine + Retrain error points); Method 3 (Combine, no retraining).]

The above graph shows the results obtained on the "sunfire" machine of the School of Computing (NUS). We used Java "bound threads" on Solaris, which effectively map each user-level thread defined in Java directly to a kernel-level thread on Solaris, rather than multiplexing it over a pool of Solaris Light Weight Processes (LWPs).

In the experiment, we select 200 random points and build a classifier, then repeat this process, each time increasing the number of points by 40, until all 2000 data points are considered. Since "sunfire" limits the duration of jobs to 30 minutes, some of the tests failed to complete for the whole data set.

When a large number of points is considered, all methods perform better than the sequential version. Combining and retraining error points seems to add too much overhead for smaller numbers of points. Combining without retraining (Method 3) gives the best performance, many times faster than the sequential version.

Method 0 and Method 2 could not complete the test case, since they exceeded the maximum job duration allowed on "sunfire". Hence we repeated the same test case on a dual-CPU PC (2 x Intel 2.4 GHz), and the following graph shows the complete test results.

[Plot: time (ms, axis scaled by 10^4) versus number of points. Legend entries: data0, data1, data2, data3.]

Method 3 (no retraining) performs best in this test case too, and is about two times faster than the sequential version for the 2000 data points. Method 2 (combine + retrain error points) performs close to the sequential version, due to increased overhead.

6 Conclusion

The results show that the method we devised is able to build a classifier comparable in accuracy to the classifier obtained with the sequential algorithm, but in much shorter time. The kernel caching and Keerthi's improvement implemented in the Java version of the parallel algorithm show that significant performance gains can be achieved using these optimizations. The performance increase over the sequential algorithm is larger for the Cilk implementation than for the Java implementation. However, owing to the optimizations implemented in the Java version and to the various configurable parameters of the Java runtime environment, the Java implementation can handle larger datasets on which the Cilk implementation fails.

References

[1] Bennett K, Campbell C. "Support Vector Machines: Hype or Hallelujah?", SIGKDD Explorations 2(2), p1-13, 2000.

[2] Burges C. "A Tutorial on Support Vector Machines for Pattern Recognition", Data Mining and Knowledge Discovery, 2, p121-167, 1998. Also available at http://www.kernel-machines.org/papers/Burges98.ps.gz

[3] Collobert R, Bengio S. SVMTorch. http://www.idiap.ch/learning/SVMTorch.html

[4] Cristianini N, Shawe-Taylor J. "An Introduction to Support Vector Machines and other Kernel-based Learning Methods", Cambridge University Press, 2000.

[5] Edelman A, Wen T, Gorsich D. "Fast Projected Conjugate Gradient Support Vector Machine Training Algorithms", Technical Report N, AMSA, 2002.

[6] Joachims T. "Making large-scale support vector machine learning practical", Advances in Kernel Methods: Support Vector Machines. MIT Press, Cambridge, MA, 1998.

[7] Joachims T. SVMLight. http://svmlight.joachims.org/

[8] Keerthi et al. "Improvements to Platt's SMO Algorithm for SVM Classifier Design", Technical Report CD00-01, Control Division, Dept of Mechanical and Production Engineering, National University of Singapore.

[9] Keerthi S, Shevade S, Bhattacharyya C, Murthy K. "A Fast Iterative Nearest Point Algorithm for Support Vector Machine Classifier Design", Technical Report TR-ISL-9903, Intelligent Systems Lab, Dept of Computer Science and Automation, Indian Institute of Science, Bangalore, India, 1999.

[10] Osuna E, Freund R, Girosi F. "Improved Training Algorithm for Support Vector Machine", Proc IEEE NNSP 97, 1997.

[11] Platt J. "Sequential Minimal Optimization: A Fast Algorithm for Training Support Vector Machines". Available at http://www.research.microsoft.com/users/jplatt/smo.html

[12] Vapnik V. "Estimation of Dependences Based on Empirical Data" [in Russian], Nauka, Moscow, 1979. (English translation: Springer Verlag, New York, 1982).

[13] The Cilk Project, http://supertech.lcs.mit.edu/cilk/

[14] JProbe Profiler, http://java.quest.com/jprobe/jprobe.shtml