
Neural Network Classifier for Segmentation and Classification of Skin Lesions from Digital Images

Jain Elza Jose
Department of Computer Science
4th Semester MTech Student
Caarmel Engineering College, Perunad

Anina John
Department of Computer Science
Assistant Professor
Caarmel Engineering College, Perunad

Abstract- Melanoma is a cancer that begins in the melanocytes, the cells that make melanin, so melanoma tumors are often brown or black. There is a need for an automated system to assess a patient’s risk of melanoma using images of their skin lesions captured with a standard digital camera. Locating the skin lesion in such an image is challenging, and the segmentation accuracy is limited. The existing system segments the skin lesions and classifies the skin as lesion or normal skin. In the proposed system, a texture distinctiveness lesion segmentation (TDLS) algorithm is used to segment the skin lesions. The TDLS algorithm consists of two steps. First, a set of sparse texture distributions of normal skin and lesion skin is learned, and a texture distinctiveness (TD) metric is calculated to measure the dissimilarity of each texture distribution from all other texture distributions. Second, the TD metric is used to classify regions in the image as part of the skin class or the lesion class. Finally, a neural network classifier is used to classify the lesion as benign or malignant. The proposed framework has higher segmentation accuracy than all other tested algorithms.

Index Terms- Melanoma, neural network, segmentation, texture.

I. INTRODUCTION

Image processing [17] is a form of signal processing in which the input is an image, such as a photograph, and the output is either an image or a set of characteristics or parameters related to the image. Most image-processing techniques treat the image as a two-dimensional signal and apply standard signal-processing techniques to it. Image processing usually refers to digital image processing, but optical and analog image processing are also possible; the general techniques discussed here apply to all of them. The acquisition of images (producing the input image in the first place) is referred to as imaging.

Image segmentation [5][16] is the process of partitioning a digital image into multiple segments (sets of pixels, also known as superpixels). The goal of segmentation is to simplify or change the representation of an image into something that is more meaningful and easier to analyze. Image segmentation is typically used to locate objects and boundaries (lines, curves, etc.) in images. The result of image segmentation is a set of segments that collectively cover the entire image, or a set of contours extracted from the image, as in edge detection. The pixels in a region are similar with respect to some characteristic or computed property, such as color, intensity, or texture, while adjacent regions differ significantly with respect to the same characteristics.

Melanoma [1][2] is a cancer that begins in the melanocytes. Most of these cells still make melanin, so melanoma tumors are often brown or black. Melanoma most often starts on the trunk (chest or back) in men and on the legs in women, but it can start in other places, too. Having dark skin lowers the risk of melanoma, but a person with dark skin can still get melanoma. Melanoma can almost always be cured in its early stages, but it is likely to spread to other parts of the body if it is not caught early. Because incidence rates are increasing, early detection of melanoma is essential. To reduce the cost of screening, automated melanoma screening algorithms [2] have been proposed.

A dermatoscope [3] is a handheld device that optically magnifies, illuminates, and enhances skin lesions, allowing the dermatologist to better view the lesion features. Use of the dermatoscope has been found to improve diagnosis compared to the naked eye, and dermatologists use it to screen for melanoma; the main reasons against using it include a lack of training or interest. Recent work screens for melanoma from digital images, using segmentation algorithms to locate the border of the skin lesion. An accurate border is important when the lesion is classified based on its features. The main features follow the ABCD scale: asymmetry, border irregularity, color variegation, and diameter. Examples of digital images of melanoma are shown in Fig. 1(a) and (b).


Fig. 1. (a) and (b) are examples of digital images of melanoma.

II. RELATED WORKS

Existing illumination correction algorithms [6] adjust pixel intensities in an image based on an estimated illumination map. The goal of these algorithms is to remove any external illumination, so that the resulting image is independent of illumination effects such as shadows and bright areas caused by illumination variation. The motivation for this preprocessing step is to improve the performance of subsequent steps, including lesion segmentation and classification. Existing general illumination correction algorithms focus on correcting illumination variation in standard digital images; they are general and can be applied to any image.

Recent work by Cavalcanti et al. [6] proposes a correction algorithm specific to skin lesion images. The algorithm fits pixel intensities from the four corners of the photograph to a parametric surface. The disadvantage of this algorithm is that only a very small subset of pixels is used to fit the parametric surface. This results in the estimated illumination map being over- or underestimated.

The purpose of image segmentation algorithms is to find and outline distinct objects of importance in an image. For example, in images of skin lesions, the border of the skin lesion should be identified. Segmentation in general is a well-researched area, and many different algorithms have been proposed.
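The corner-based illumination correction attributed above to Cavalcanti et al. [6] can be sketched as a least-squares surface fit. This is a minimal sketch, assuming a quadratic surface fitted to a single intensity channel and a hypothetical corner window size `corner`; the function names are illustrative and are not taken from [6].

```python
import numpy as np

def corner_mask(h, w, size):
    """Boolean mask selecting a size x size window in each image corner."""
    m = np.zeros((h, w), dtype=bool)
    m[:size, :size] = m[:size, -size:] = m[-size:, :size] = m[-size:, -size:] = True
    return m

def fit_quadratic_surface(channel, mask):
    """Least-squares fit of z = a x^2 + b y^2 + c xy + d x + e y + f to masked pixels."""
    h, w = channel.shape
    y, x = np.mgrid[0:h, 0:w].astype(float)

    def design(xx, yy):
        return np.stack([xx ** 2, yy ** 2, xx * yy, xx, yy, np.ones_like(xx)], axis=-1)

    coef, *_ = np.linalg.lstsq(design(x[mask], y[mask]), channel[mask], rcond=None)
    return design(x, y) @ coef          # estimated illumination map for every pixel

def correct_illumination(channel, corner=20):
    """Divide the channel by the surface fitted to its four corner windows."""
    channel = channel.astype(float)
    surface = fit_quadratic_surface(channel, corner_mask(*channel.shape, corner))
    return channel / np.maximum(surface, 1e-6)
```

Because only the corner windows drive the fit, any shading that the corners do not capture remains in the corrected image, which is the limitation noted above.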

Image segmentation [5][16] is perhaps the most studied area in computer vision, with numerous methods reported. A segmentation method is usually designed taking into consideration the properties of a particular class of images. The majority of algorithms use only features derived from pixel color to drive the segmentation. Segmentation is made difficult by illumination variation, and thresholding algorithms are used to handle bright areas caused by reflection of the camera flash. In preprocessing, a color image is first transformed into an intensity image such that the intensity at each pixel reflects the color distance between that pixel and the background color. The background color is taken to be the median color of the pixels in small windows at the four corners of the image. The purpose of this preprocessing step is to correct shadows and bright spots caused by illumination variation.
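The corner-based preprocessing described above can be sketched as follows. This sketch assumes Euclidean distance in RGB space and a hypothetical square corner window of `win` pixels; the text does not specify the color space or the window size.

```python
import numpy as np

def background_color(rgb, win=10):
    """Median color of the pixels in the four win x win corner windows."""
    corners = [rgb[:win, :win], rgb[:win, -win:], rgb[-win:, :win], rgb[-win:, -win:]]
    pixels = np.concatenate([c.reshape(-1, rgb.shape[2]) for c in corners])
    return np.median(pixels, axis=0)

def color_distance_image(rgb, win=10):
    """Intensity image: Euclidean color distance of each pixel from the background."""
    rgb = rgb.astype(float)
    return np.linalg.norm(rgb - background_color(rgb, win), axis=2)
```

Pixels resembling the surrounding skin map to low intensities, while the lesion maps to high intensities, which makes the result suitable for thresholding.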

Most segmentation algorithms use color variation to identify skin lesions. Texture is also used to distinguish lesions from normal skin, since normal skin and lesion skin have different textures, which differ in properties such as smoothness, roughness, bumps, or ridges. Stoecker et al. [7] analyzed texture in skin images using basic statistical approaches, such as the gray-level co-occurrence matrix. They found that texture analysis could accurately find regions with a smooth texture and that texture analysis is applicable to segmentation and classification of dermatological images.
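A smoothness measure in the spirit of the gray-level co-occurrence analysis of Stoecker et al. [7] can be computed as follows. This is a minimal sketch assuming 8-bit grayscale input, a hypothetical quantization to eight gray levels, and a single horizontal pixel offset; the exact statistics used in [7] may differ. Low contrast and high homogeneity indicate a smooth texture.

```python
import numpy as np

def glcm(gray, levels=8, dy=0, dx=1):
    """Normalized symmetric GLCM for a non-negative pixel offset (dy, dx).

    gray: 2-D array of 8-bit intensities (0..255), quantized to `levels` bins.
    """
    q = np.clip(gray.astype(int) * levels // 256, 0, levels - 1)
    h, w = q.shape
    first = q[:h - dy, :w - dx].ravel()    # reference pixels
    second = q[dy:, dx:].ravel()           # neighbours at the given offset
    m = np.zeros((levels, levels))
    np.add.at(m, (first, second), 1)       # count co-occurring level pairs
    m = m + m.T                            # count both directions
    return m / m.sum()

def glcm_features(p):
    """Contrast, homogeneity and energy of a normalized GLCM."""
    i, j = np.indices(p.shape)
    contrast = (p * (i - j) ** 2).sum()
    homogeneity = (p / (1.0 + np.abs(i - j))).sum()
    energy = (p ** 2).sum()
    return contrast, homogeneity, energy
```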

Existing texture analysis methods extract features and measurements of a texture, allowing textures from different regions to be compared. Texture analysis is useful for image segmentation because different parts of the same object usually match in texture. Statistical algorithms include first-order statistics, the gray-level co-occurrence matrix, and Haralick statistics. Model-based algorithms use probability models, such as the autoregressive model or the Markov random field model [4][8], to characterize textures. Structural algorithms deconstruct and characterize the texture as a number of texture elements. The algorithm proposed by Xu et al. [9] learns a model of the normal skin texture using pixels in the four corners of the image, which is later used to find the lesion. Hwang and Celebi [10] use Gabor filters to extract texture features and a G-means clustering approach to segment the lesion.
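Gabor filtering, as used by Hwang and Celebi [10], can be illustrated with a small filter bank. This sketch assumes a real (cosine) Gabor kernel, hypothetical defaults for `sigma`, `wavelength`, and the number of orientations, and FFT-based convolution; it stops at per-pixel feature vectors and does not implement the G-means clustering step of [10].

```python
import numpy as np

def gabor_kernel(sigma, theta, wavelength):
    """Real (cosine) Gabor kernel, shifted to zero mean so flat regions give no response."""
    half = int(np.ceil(3 * sigma))
    y, x = np.mgrid[-half:half + 1, -half:half + 1].astype(float)
    xr = x * np.cos(theta) + y * np.sin(theta)      # coordinate along the wave direction
    yr = -x * np.sin(theta) + y * np.cos(theta)
    envelope = np.exp(-(xr ** 2 + yr ** 2) / (2 * sigma ** 2))
    kernel = envelope * np.cos(2 * np.pi * xr / wavelength)
    return kernel - kernel.mean()

def convolve_same(image, kernel):
    """Linear 2-D convolution via zero-padded FFTs, cropped to the image size."""
    h, w = image.shape
    kh, kw = kernel.shape
    shape = (h + kh - 1, w + kw - 1)
    full = np.real(np.fft.ifft2(np.fft.fft2(image, shape) * np.fft.fft2(kernel, shape)))
    return full[kh // 2:kh // 2 + h, kw // 2:kw // 2 + w]

def gabor_features(gray, sigma=3.0, wavelength=8.0, n_orient=4):
    """Per-pixel feature vector: |response| at n_orient evenly spaced orientations."""
    thetas = np.arange(n_orient) * np.pi / n_orient
    responses = [np.abs(convolve_same(gray.astype(float), gabor_kernel(sigma, t, wavelength)))
                 for t in thetas]
    return np.stack(responses, axis=-1)    # shape (H, W, n_orient)
```

Each pixel's feature vector could then be passed to a clustering algorithm to separate lesion texture from normal skin texture.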

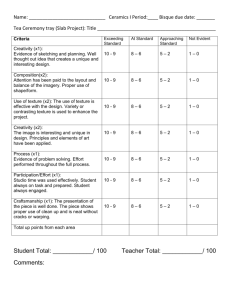

Fig. 2. Architecture of the System

This paper proposes a texture distinctiveness lesion segmentation (TDLS) algorithm to locate skin lesions in digital images. The TD metric measures the dissimilarity of each texture distribution from all other distributions and is used to classify regions as normal skin or lesion skin. A probabilistic neural network classifier is then used to classify the lesion as benign or malignant. Section III describes the system design: learning the sparse texture model, calculating the TD metric, separating the lesion, and classifying it as benign or malignant with the neural network classifier. Section IV presents the experimental results and discussion, and Section V concludes with the scope of future work.

III. SYSTEM DESIGN

The proposed system uses a texture distinctiveness lesion segmentation (TDLS) algorithm to learn the texture distributions and to calculate the TD metric, which is used to classify the image into normal skin and lesion skin. Finally, a neural network classifier is used to classify the lesion as benign or malignant.

A. Texture distributions

To learn the texture distributions, the input RGB image is first converted to the XYZ color space. For each pixel in the image, a local texture vector is obtained. The texture vector contains the pixels in an n × n neighbourhood centered on the pixel of interest, with each row of the neighbourhood concatenated sequentially. An unsupervised algorithm, k-means clustering, is then used to learn the textures by finding k clusters in the texture data. Clustering is also used to increase robustness and to reduce the number of iterations.

The mean and standard deviation of each learned texture distribution are calculated to find the texture distinctiveness (TD) metric, which is used to classify the image into normal skin and lesion skin. For normal skin, the dissimilarity from the other textures is very small, so the TD metric is also small. For lesion skin, the TD metric is large because of its dissimilarity from the other textures.
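The texture-vector construction and k-means learning step described above can be sketched as follows. This is a minimal sketch, assuming sRGB input scaled to [0, 1] with the standard D65 sRGB-to-XYZ matrix, edge padding at the image border, an odd neighbourhood size `n`, and plain Lloyd iterations with random initialization; the function names are hypothetical and this is not the authors' implementation.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

# Standard linear-sRGB (D65) to CIE XYZ matrix
SRGB_TO_XYZ = np.array([[0.4124, 0.3576, 0.1805],
                        [0.2126, 0.7152, 0.0722],
                        [0.0193, 0.1192, 0.9505]])

def rgb_to_xyz(rgb):
    """rgb: float array in [0, 1] of shape (H, W, 3), assumed to be sRGB."""
    linear = np.where(rgb <= 0.04045, rgb / 12.92, ((rgb + 0.055) / 1.055) ** 2.4)
    return linear @ SRGB_TO_XYZ.T

def texture_vectors(img, n=3):
    """One vector per pixel: its n x n neighbourhood (n odd), rows concatenated in order."""
    r = n // 2
    padded = np.pad(img, ((r, r), (r, r), (0, 0)), mode="edge")
    windows = sliding_window_view(padded, (n, n), axis=(0, 1))   # (H, W, C, n, n)
    windows = windows.transpose(0, 1, 3, 4, 2)                    # (H, W, n, n, C)
    h, w = img.shape[:2]
    return windows.reshape(h * w, -1)

def kmeans(data, k, iters=100, seed=0):
    """Plain Lloyd's k-means; returns (centers, labels)."""
    rng = np.random.default_rng(seed)
    centers = data[rng.choice(len(data), size=k, replace=False)].astype(float)
    for _ in range(iters):
        labels = ((data[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2).argmin(axis=1)
        # Keep the old center if a cluster becomes empty
        new = np.array([data[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                        for j in range(k)])
        if np.allclose(new, centers):
            break
        centers = new
    labels = ((data[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2).argmin(axis=1)
    return centers, labels
```

The distance computation holds an (N, k, D) array in memory, so for full-resolution images one would subsample pixels before clustering.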

Fig. 3. Maps of the texture distinctiveness metric. (a) and (c) show the original images; (b) and (d) show the corresponding maps of the texture distinctiveness metric.

Fig. 3(b) and (d) display the texture distinctiveness metric for each pixel of the original images in Fig. 3(a) and (c). In both maps the lesion appears white, meaning that it has the highest texture distinctiveness metric.
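The text does not give the exact TD formula, so the following is one plausible form and not the formulation of [15]: each learned cluster is modelled as a diagonal Gaussian from its mean and standard deviation, and a cluster's TD is its dissimilarity from all clusters, weighted by the fraction of pixels in each. Mapping the per-cluster values back to pixels gives a TD map of the kind shown in Fig. 3. The function names are hypothetical.

```python
import numpy as np

def td_metric(data, labels, k):
    """Hypothetical TD: weighted dissimilarity of each cluster from all clusters.

    data: (N, D) texture vectors; labels: (N,) cluster index; every cluster assumed non-empty.
    """
    means = np.array([data[labels == j].mean(axis=0) for j in range(k)])
    stds = np.array([data[labels == j].std(axis=0) for j in range(k)]) + 1e-6
    weights = np.bincount(labels, minlength=k) / len(labels)   # fraction of pixels per cluster
    td = np.zeros(k)
    for j in range(k):
        for m in range(k):
            # Mean squared z-score of cluster j's mean under cluster m's diagonal Gaussian
            z2 = (((means[j] - means[m]) / stds[m]) ** 2).mean()
            similarity = np.exp(-0.5 * z2)
            td[j] += weights[m] * (1.0 - similarity)
    return td

def td_map(labels, td, shape):
    """Assign each pixel the TD value of its cluster (row-major pixel order)."""
    return td[labels].reshape(shape)
```

Because normal skin covers most of the image, a skin cluster is similar to the heavily weighted clusters and receives a small TD, while a lesion cluster is dissimilar from them and receives a large TD, matching the behaviour described in the text.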

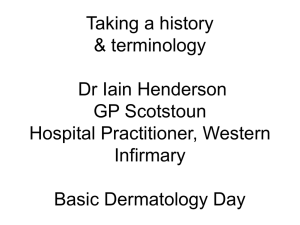

Fig. 4 shows the resulting performance analysis graph, which compares the proposed classifier with existing classification algorithms.

B. Statistical region merging

First, the image is oversegmented, that is, divided into a number of regions, using the statistical region merging (SRM) algorithm. SRM consists of two steps: a sorting step and a merging step. In the sorting step, a four-connected graph is constructed, and the pairs of horizontally and vertically adjacent pixels are sorted by their similarity. In the merging step, regions are merged based on their pixel intensities. The resulting regions are then classified as normal skin or lesion skin.
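The sorting and merging steps can be sketched for a grayscale image using the merging predicate of the original SRM algorithm by Nock and Nielsen, in the simplified form used by common reference implementations. This is a sketch, not the implementation used in this paper: it assumes a single intensity channel, `g = 256` gray levels, δ = 1/(6N)² for an image of N pixels, and a hypothetical complexity parameter `Q`; the pure-Python union-find is only practical on small images.

```python
import math
import numpy as np

def srm_segment(gray, Q=32.0, g=256.0):
    """Simplified statistical region merging; returns labels numbered 0..K-1."""
    h, w = gray.shape
    n = h * w
    values = gray.astype(float).ravel()
    parent = list(range(n))      # union-find forest over pixels
    size = [1] * n               # pixel count per region root
    mean = list(values)          # mean intensity per region root

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]   # path halving
            i = parent[i]
        return i

    log_delta = 2.0 * math.log(6.0 * n)     # ln(1/delta) with delta = 1/(6n)^2
    factor = g * g / (2.0 * Q)

    def b2(r):
        # Squared deviation bound for a region with size[r] pixels
        s = size[r]
        return factor * (min(g, s) * math.log(1.0 + s) + log_delta) / s

    # Sorting step: 4-connected pixel pairs ordered by intensity difference
    pairs = []
    for y in range(h):
        for x in range(w):
            i = y * w + x
            if x + 1 < w:
                pairs.append((abs(values[i] - values[i + 1]), i, i + 1))
            if y + 1 < h:
                pairs.append((abs(values[i] - values[i + w]), i, i + w))
    pairs.sort(key=lambda p: p[0])

    # Merging step: merge two regions when their means are statistically close
    for _, a, b in pairs:
        ra, rb = find(a), find(b)
        if ra != rb and (mean[ra] - mean[rb]) ** 2 <= b2(ra) + b2(rb):
            if size[ra] < size[rb]:
                ra, rb = rb, ra
            total = size[ra] + size[rb]
            mean[ra] = (mean[ra] * size[ra] + mean[rb] * size[rb]) / total
            size[ra] = total
            parent[rb] = ra

    roots = np.array([find(i) for i in range(n)])
    _, labels = np.unique(roots, return_inverse=True)
    return labels.reshape(h, w)
```

Smaller values of `Q` give a looser bound and therefore fewer, larger regions; larger values oversegment more finely.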

Fig.4. Performance Analysis Graph

C. Segmentation refinement

Segmentation refinement applies postprocessing to refine the lesion border. It includes two steps: morphological dilation and region selection. Morphological dilation is used to fill holes and smooth the border of the lesion. Region selection is used to select the lesion region and eliminate unwanted regions: regions that touch the edge of the photograph can be eliminated, since the lesion is assumed not to touch the image edge.
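The two refinement steps can be sketched on a binary lesion mask. This minimal sketch assumes a 3 × 3 square structuring element, 4-connected components, and, as a hypothetical selection rule, keeping the largest component that does not touch the image border; dilation here only closes holes smaller than the structuring element.

```python
from collections import deque
import numpy as np

def dilate(mask, iterations=1):
    """Binary dilation with a 3 x 3 square structuring element."""
    out = mask.astype(bool)
    h, w = out.shape
    for _ in range(iterations):
        padded = np.pad(out, 1)                  # pads with False
        grown = np.zeros_like(out)
        for dy in range(3):
            for dx in range(3):
                grown |= padded[dy:dy + h, dx:dx + w]
        out = grown
    return out

def label_components(mask):
    """4-connected component labelling by breadth-first search; 0 = background."""
    h, w = mask.shape
    labels = np.zeros((h, w), dtype=int)
    count = 0
    for sy in range(h):
        for sx in range(w):
            if mask[sy, sx] and labels[sy, sx] == 0:
                count += 1
                labels[sy, sx] = count
                queue = deque([(sy, sx)])
                while queue:
                    y, x = queue.popleft()
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                        if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and labels[ny, nx] == 0:
                            labels[ny, nx] = count
                            queue.append((ny, nx))
    return labels, count

def refine_lesion(mask, iterations=1):
    """Dilate, drop components touching the border, keep the largest remaining one."""
    grown = dilate(mask, iterations)
    labels, count = label_components(grown)
    edge = np.concatenate([labels[0], labels[-1], labels[:, 0], labels[:, -1]])
    touching = {int(v) for v in edge if v != 0}
    best, best_area = 0, 0
    for k in range(1, count + 1):
        area = int((labels == k).sum())
        if k not in touching and area > best_area:
            best, best_area = k, area
    if best == 0:
        return np.zeros_like(grown)
    return labels == best
```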

D. Neural network classifier

In this research, a probabilistic feed-forward neural network classifier is used as a future enhancement. After the lesion is segmented, features such as the lesion area, orientation, major axis length, minor axis length, eccentricity, centroid, moments, and average red value are extracted. The classifier is trained on benign and melanoma images and then tested, and it is finally used to classify the lesion as benign or malignant.
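The feature extraction and classification steps can be sketched as follows. This sketch assumes moment-based shape features computed from the segmented mask (axis lengths use the common 4√λ convention, where λ are the eigenvalues of the pixel-coordinate covariance), the mean of the red channel inside the mask, and a probabilistic neural network implemented as a Gaussian Parzen-window classifier with a hypothetical smoothing width `sigma`. The function names are illustrative, not the authors' code.

```python
import numpy as np

def region_features(mask, rgb):
    """Shape features from image moments plus mean red value for one lesion mask.

    Returns [area, centroid_x, centroid_y, orientation, major_axis, minor_axis,
    eccentricity, mean_red]; orientation is in radians from the image x-axis.
    """
    ys, xs = np.nonzero(mask)
    area = float(xs.size)
    cx, cy = xs.mean(), ys.mean()
    # Second-order central moments divided by the area (coordinate covariance)
    mu20 = ((xs - cx) ** 2).mean()
    mu02 = ((ys - cy) ** 2).mean()
    mu11 = ((xs - cx) * (ys - cy)).mean()
    lam_minor, lam_major = np.linalg.eigvalsh(np.array([[mu20, mu11], [mu11, mu02]]))
    lam_minor = max(lam_minor, 0.0)
    major = 4.0 * np.sqrt(lam_major)
    minor = 4.0 * np.sqrt(lam_minor)
    ecc = np.sqrt(1.0 - lam_minor / lam_major) if lam_major > 0 else 0.0
    orientation = 0.5 * np.arctan2(2.0 * mu11, mu20 - mu02)
    mean_red = rgb[..., 0][mask].mean()
    return np.array([area, cx, cy, orientation, major, minor, ecc, mean_red])

def pnn_predict(train_x, train_y, x, sigma=1.0):
    """Probabilistic neural network: Gaussian Parzen-window score per class."""
    scores = {}
    for label in np.unique(train_y):
        samples = train_x[train_y == label]                        # pattern units
        d2 = ((samples - x) ** 2).sum(axis=1)
        scores[label] = np.exp(-d2 / (2.0 * sigma ** 2)).mean()   # summation unit
    return max(scores, key=scores.get)                             # decision unit
```

The features lie on very different scales (pixel counts, radians, intensities), so they would normally be standardized before being passed to `pnn_predict`.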

IV. EXPERIMENTAL RESULTS AND DISCUSSIONS

The objective of this experiment is to measure the accuracy of classifying lesions as benign or malignant. The experiment uses 50 benign images and 50 malignant images. The proposed system achieves about 98% accuracy in classifying lesions as benign and 99% accuracy in classifying lesions as malignant, whereas the existing systems achieve only 80% for classifying lesions as benign and 81% for classifying lesions as malignant.

V. CONCLUSION AND SCOPE OF THE WORK

In summary, a novel lesion segmentation algorithm based on learned texture distributions is proposed. The texture distinctiveness lesion segmentation algorithm captures the dissimilarity between texture distributions; the image is then divided into smaller regions, which are classified as lesion or skin based on the TD map. As a future enhancement, a neural network classifier extracts features and classifies the lesion as benign or malignant. Learning from experience and training is an important characteristic of neural networks that makes them well suited to diagnosis applications: from the segmented images, the neural network learns skin and lesion pixel values. The proposed framework achieves higher segmentation and classification accuracy. Future work is expected to address the border accuracy of benign and malignant images.

REFERENCES

[1]. D. S. Rigel, R. J. Friedman, and A. W. Kopf, “The incidence of melanoma in the United States: Issues as we approach the 21st century,” J. Amer. Acad. Dermatol., vol. 34, no. 5, pp. 839–847, 1996.

[2]. R. Amelard, J. Glaister, A. Wong, and D. A. Clausi, “Melanoma decision support using lighting-corrected intuitive feature models,” in Computer Vision Techniques for the Diagnosis of Skin Cancer, J. Scharcanski and M. E. Celebi, Eds. Springer, accepted, 2013.

[3]. Starck, M. Elad, and D. Donoho, “Image decomposition via the combination of sparse representations and a variational approach,” IEEE Trans. Image Process., vol. 14, no. 10, pp. 1570–1582, Oct. 2005.

[4]. C. Serrano and B. Acha, “Pattern analysis of dermoscopic images based on Markov random fields,” Pattern Recog., vol. 42, no. 6, pp. 1052–1057, 2009.

[5]. L. Xu, M. Jackowskia, A. Goshtasby, D. Roseman, S. Bines, C. Yu, A. Dhawan, and A. Huntley, “Segmentation of skin cancer images,” Image Vis. Comput., vol. 17, pp. 65–74, 1999.

[6]. P. G. Cavalcanti and J. Scharcanski, “Automated prescreening of pigmented skin lesions using standard cameras,” Computerized Medical Imaging and Graphics, vol. 35, no. 6, pp. 481–491, Sep. 2011.

[7]. W. V. Stoecker, C.-S. Chiang, and R. H. Moss, “Texture in skin images: Comparison of three methods to determine smoothness,” Comput. Med. Imag. Graph., vol. 16, no. 3, pp. 179–190, 1992.

[8]. C. Serrano and B. Acha, “Pattern analysis of dermoscopic images based on Markov random fields,” Pattern Recog., vol. 42, no. 6, pp. 1052–1057, 2009.

[9]. L. Xu, M. Jackowskia, A. Goshtasby, D. Roseman, S. Bines, C. Yu, A. Dhawan, and A. Huntley, “Segmentation of skin cancer images,” Image Vis. Comput., vol. 17, pp. 65–74, 1999.

[10]. S. Hwang and M. E. Celebi, “Texture segmentation of dermoscopy images using Gabor filters and G-means clustering,” in Proc. Int. Conf. Image Process., Comput. Vision, Pattern Recog., Jul. 2010, pp. 882–886.

[11]. G. Peyre, “Sparse modeling of textures,” Journal of Mathematical Imaging and Vision, vol. 34, no. 1, pp. 17–31, 2009.

[12]. M. E. Celebi, H. A. Kingravi, H. Iyatomi, Y. A. Aslandogan, W. V. Stoecker, R. H. Moss, J. M. Malters, J. M. Grichnik, A. A. Marghoob, H. S. Rabinovitz, and S. W. Menzies, “Border detection in dermoscopy images using statistical region merging,” Skin Res. Technol., vol. 14, no. 3, pp. 347–353, 2008.

[13]. P. G. Cavalcanti, J. Scharcanski, and C. B. O. Lopes, “Shading attenuation in human skin color images,” in Advances in Visual Computing, G. Bebis, R. Boyle, B. Parvin, D. Koracin, R. Chung, R. Hammoud, M. Hussain, T. Karhan, R. Crawfis, D. Thalmann, D. Kao, and L. Avila, Eds. (Ser. Lecture Notes in Computer Science), vol. 6453. Heidelberg, Germany: Springer, 2010, pp. 190–198.

[14]. P. G. Cavalcanti and J. Scharcanski, “Automated prescreening of pigmented skin lesions using standard cameras,” Comput. Med. Imag. Graph., vol. 35, no. 6, pp. 481–491, Sep. 2011.

[15]. J. Glaister and A. Wong, “Segmentation of skin lesions from digital images using joint statistical texture distinctiveness,” IEEE Trans. Biomed. Eng., vol. 61, no. 4, Apr. 2014.

[16]. C. Carson, S. Belongie, H. Greenspan, and J. Malik, “Blobworld: Image segmentation using expectation-maximization and its application to image querying,” IEEE Trans. Pattern Anal. Mach. Intell., vol. 24, no. 8, pp. 1026–1038, 2002.

[17]. J. R. Jensen, Introduction to Digital Image Processing: A Remote Sensing Perspective. New Jersey: Prentice Hall, 1996.