- CLU-IN

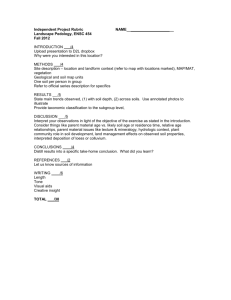
