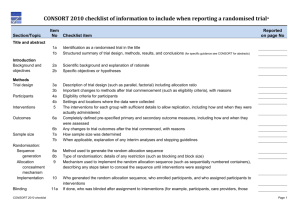

19 – Harms

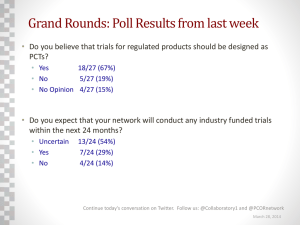
