hw_05n_sol


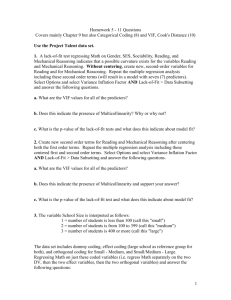

Homework 5 (11 questions)

Covers mainly Chapter 9, but also Categorical Coding (Chapter 8) and VIF and Cook's Distance (Chapter 10).

Use the Project Talent data set.

1. A lack-of-fit test regressing Math on Gender, SES, Sociability, Reading, and Mechanical Reasoning indicates possible curvature in the variables Reading and Mechanical Reasoning. Without centering, create new second-order (squared) variables for Reading and for Mechanical Reasoning. Repeat the multiple regression analysis including these second-order terms (this results in a model with seven predictors). Under Options, select both Variance Inflation Factor and Lack-of-Fit > Data Subsetting, and answer the following questions.

a. What are the VIF values for all of the predictors?

| Predictor | Coef | SE Coef | T | P | VIF |
|---|---|---|---|---|---|
| Gender | -1.238 | 1.895 | -0.65 | 0.522 | 1.542 |
| Reading | -1.6071 | 0.6963 | -2.31 | 0.034 | 63.366 |
| Mech | -2.040 | 1.159 | -1.76 | 0.096 | 43.422 |
| Social | 0.5179 | 0.2971 | 1.74 | 0.099 | 1.365 |
| SES | 0.22559 | 0.09012 | 2.50 | 0.023 | 1.543 |
| r2 | 0.03581 | 0.01264 | 2.83 | 0.011 | 69.894 |
| me2 | 0.14575 | 0.06323 | 2.30 | 0.034 | 52.568 |

b. Does this indicate the presence of multicollinearity? Why or why not?

Yes. The VIF values for Reading, Mechanical Reasoning, and their second-order terms (r2 and me2) all exceed 10.

c. What is the p-value of the lack-of-fit test, and what does this indicate about model fit?

Minitab reports no evidence of lack of fit (P >= 0.1), so there is no indication that the model fits poorly.

2. Create new second-order terms for Reading and Mechanical Reasoning after centering both first-order terms. Repeat the multiple regression analysis including these centered first- and second-order terms. Under Options, select both Variance Inflation Factor and Lack-of-Fit > Data Subsetting, and answer the following questions.

a. What are the VIF values for all of the predictors?
| Predictor | Coef | SE Coef | T | P | VIF |
|---|---|---|---|---|---|
| Gender | -1.238 | 1.895 | -0.65 | 0.522 | 1.542 |
| Social | 0.5179 | 0.2971 | 1.74 | 0.099 | 1.365 |
| SES | 0.22559 | 0.09012 | 2.50 | 0.023 | 1.543 |
| cR | 0.5960 | 0.1435 | 4.15 | 0.001 | 2.692 |
| cM | 1.2242 | 0.4320 | 2.83 | 0.011 | 6.033 |
| cR2 | 0.03581 | 0.01264 | 2.83 | 0.011 | 2.651 |
| cM2 | 0.14575 | 0.06323 | 2.30 | 0.034 | 2.963 |

b. Does this indicate the presence of multicollinearity? Support your answer.

No. With all VIF values below 10, there is no indication of multicollinearity. Centering reduced the correlation between the first- and second-order terms for Reading and Mechanical Reasoning.

c. What is the p-value of the lack-of-fit test, and what does this indicate about model fit?

Minitab reports no evidence of lack of fit (P >= 0.1), so there is no indication that the model fits poorly.

3. The variable School Size is coded as follows:

1 = fewer than 100 students (call this "small")
2 = 100 to 399 students (call this "medium")
3 = 400 or more students (call this "large")

The data set includes dummy coding and effect coding (with Large schools as the reference group for both), and orthogonal coding for the contrasts Small vs. Medium and Small/Medium vs. Large. Regress Math on just these coded variables (i.e., regress Math separately on the two dummy variables (DV), then on the two effect variables, then on the two orthogonal variables) and answer the following questions.

a: Provide an interpretation of the t-tests for regressing Math on the DV.

| Predictor | Coef | SE Coef | T | P |
|---|---|---|---|---|
| Constant | 31.571 | 3.495 | 9.03 | 0.000 |
| Small_DV | -13.429 | 4.943 | -2.72 | 0.013 |
| Medium_DV | -8.481 | 4.471 | -1.90 | 0.071 |

Because the variables are dummy coded, each t-test compares the mean for the level in the model with the mean for the level left out of the model. Here, the mean Math score of the Small schools and the mean Math score of the Medium schools are each compared with the mean Math score of the Large schools. Using alpha = 0.05, we conclude that there is a significant difference between the mean Math scores of the Small and Large schools.
The negative slope for Small indicates that the mean Math score for Small schools is lower than the mean Math score for Large schools. Since the p-value for Medium (0.071) exceeds 0.05, we cannot conclude that mean Math scores differ between Medium and Large schools. Note that in this model the intercept is the mean Math score for the Large schools.

b: Provide an interpretation of the t-tests for regressing Math on the effect variables.

| Predictor | Coef | SE Coef | T | P |
|---|---|---|---|---|
| Constant | 24.268 | 1.892 | 12.83 | 0.000 |
| Small_eff | -6.126 | 2.766 | -2.21 | 0.037 |
| Med_eff | -1.177 | 2.484 | -0.47 | 0.640 |

Because the variables are effect coded and the sample sizes are unequal, each t-test compares the mean for the level in the model with the unweighted average of the three group means (not the grand mean of all observations). From the dummy-coded model, the group means are 31.571 - 13.429 = 18.14 (Small), 31.571 - 8.481 = 23.09 (Medium), and 31.57 (Large); their average, about 24.27, is the intercept of this model. The mean Math scores of the Small schools and of the Medium schools are each compared with this average. Using alpha = 0.05, we conclude that the mean Math score for Small schools differs significantly from the average of the three group means, and the negative slope for Small indicates that it is lower than this average. Since the p-value for Medium (0.640) exceeds 0.05, we cannot conclude that the mean Math score for Medium schools differs from this average.

c: Provide an interpretation of the t-tests for regressing Math on the orthogonal variables.

| Predictor | Coef | SE Coef | T | P |
|---|---|---|---|---|
| Constant | 24.080 | 1.850 | 13.02 | 0.000 |
| Small-Med | -0.2749 | 0.2484 | -1.11 | 0.280 |
| SM-Large | -0.4162 | 0.1648 | -2.53 | 0.019 |

Because the variables are orthogonally coded, each t-test compares the means of the levels involved in that specific contrast.
For Small-Med, this compares the mean Math scores of Small and Medium schools; for SM-Large, it compares the mean Math score of the Small/Medium schools with the mean Math score of the Large schools. Again using alpha = 0.05, we reject the null hypothesis only for the SM-Large contrast and conclude that mean Math scores for Small/Medium schools differ from those for Large schools. Because the slope is negative and the contrast is calculated as "SM minus Large," the mean Math score of the Large schools is higher than that of the Small/Medium schools. Note that the intercept in this model is the overall mean of the Math scores, i.e., the grand mean for Math.

4. Regress Math on each orthogonal coded variable separately (i.e., conduct two simple linear regressions) and answer the following:

a. What is the R-squared value for regressing Math on Small-Med?
R-sq = 4.1%

b. What is the R-squared value for regressing Math on SM-Large?
R-sq = 21.6%

c. What is the R-squared value for the multiple regression model when regressing Math on both orthogonal variables (i.e., the model in 3c)?
R-sq = 25.7%

d. Did the sum of the R-squared values in parts a and b equal the R-squared value in c?
Yes: 4.1% + 21.6% = 25.7%. NOTE: this is the result of having orthogonal predictors (i.e., the correlation between the predictors is zero). When all the model predictors are orthogonal, the R-squared values are additive.

Use the High School and Beyond (HSB) data set. The data are explained in the HSB Read Me file. USE MATH AS RESPONSE.

1. With Math as the response and the remaining variables as predictors (excluding ID, which serves only as an identifier), how many models are possible (assume an intercept for all models)?

Each of the 13 candidate predictors is either in or out of a model, giving $2^{13}$ subsets; excluding the intercept-only model leaves $2^{13} - 1 = 8191$ possible models.

2. Using Math as the response, analyze the data using Minitab Backward, Forward, and Stepwise Regression (keep the default settings). Specify the “best” regression equation identified by each of these three methods.
How many steps did each method take? Do they agree?

Backward: Math = 9.28 – 1.42SEX + 0.83SES + 0.26RDG + 0.28WRTG + 0.22SCI + 0.07CIV (Steps: 8)
Forward: Math = 8.79 + 0.25RDG + 0.28WRTG + 0.22SCI – 1.41SEX + 0.78SES + 0.07CIV + 0.09CAR (Steps: 7)
Stepwise: Math = 8.79 + 0.25RDG + 0.28WRTG + 0.22SCI – 1.41SEX + 0.78SES + 0.07CIV + 0.09CAR (Steps: 7)

Agree? No, they do not all agree: the Forward and Stepwise methods select the same model, but Backward does not (it omits CAR).

3. In the Backward Elimination analysis, which variable was removed first and why?

After the full model is run, School Type (SCTYP) is removed first because it has the largest p-value (0.981), which exceeds the alpha-to-remove of 0.1.

4. In the Forward and Stepwise analyses, which variable entered first and why?

Starting from the model with no predictors, Reading (RDG) enters first because it has the smallest p-value (0.000), below the alpha-to-enter of 0.25. (Note: with rounding, four other variables may also show p-values of 0.000. In such cases, the variable that enters first is the one with the largest absolute t-statistic, i.e., the smallest unrounded p-value.)

5. In the Backward Elimination analysis, how much does R-squared change between the model in Step 4 and the final model?

After Step 4 the R-sq is 57.33%, and in the final step it is 58.20%, a change of only 0.87 percentage points.

6. Now regress Math on all of the predictors and use Best Subsets in Minitab to determine the variables that make up the best model using R-squared, adjusted R-squared, and lowest Cp. What are the variables and criterion values, and are the models the same? [Remember that the goal is to reduce the number of variables from the full model.]

Lowest Cp: SEX, SES, CAR, RDG, WRTG, SCI, CIV (Cp = 4.0)
R-squared: SEX, SES, LOCUS, CAR, RDG, WRTG, SCI, CIV (R-sq = 58.3%)
Adjusted R-squared: SEX, SES, CAR, RDG, WRTG, SCI, CIV (R-sq(adj) = 57.7%)

All models the same? No. The models based on lowest Cp and adjusted R-squared are the same, but the model chosen using R-squared differs (it adds LOCUS).

7. Regress Math on Reading, Writing, and Science. Click Storage and select Cook’s Distance (Di). Determine whether any observation is influential by checking whether its Di exceeds the 50th percentile (median) of the F-distribution with p and n - p degrees of freedom. That is, find the cumulative F probability for each value in the column of Di values; if any cumulative probability exceeds 0.5, that observation would be considered an influential outlier. Also, in the output under Unusual Observations, any observation marked with an “X” is flagged as influential because of high leverage. Do any influential observations exist in this regression analysis?

DF: 4, 596 (p = 4 parameters including the intercept, n = 600)
Number of Di values with a cumulative F probability greater than 0.5: None
Observations considered influential outliers: Using Cook’s Distance, there are no observations that would be classified as influential outliers.

NOTE, however, that under Unusual Observations in the output, three observations are marked with an ‘X’, indicating they are influential using the high-leverage method. If you stored the leverages and compared them with 0.02 (from min[0.99, 3p/n] = min[0.99, 3(4)/600]), you would find that the leverages for these three observations exceed 0.02.
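The two influence checks in Question 7 (Cook's Di against the median of F(p, n - p), and leverage against min[0.99, 3p/n]) can be sketched in plain Python, assuming the stored Di values have been exported from Minitab as a list. This is only an illustration, not Minitab's own computation: the F cumulative probability is obtained from the regularized incomplete beta function using a standard continued-fraction evaluation so that no third-party packages are needed, the helper names (`f_cdf`, `flag_influential`, `leverage_cutoff`) are hypothetical, and the Di values in the example are made up rather than taken from the HSB data.

```python
import math


def _beta_cf(a, b, x, max_iter=500, eps=3e-14):
    """Continued fraction for the incomplete beta function (modified Lentz method)."""
    tiny = 1e-300
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # Even term of the continued fraction.
        aa = m * (b - m) * x / ((qam + m2) * (a + m2))
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        h *= d * c
        # Odd term of the continued fraction.
        aa = -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < eps:
            break
    return h


def reg_inc_beta(a, b, x):
    """Regularized incomplete beta function I_x(a, b)."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    log_front = (math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                 + a * math.log(x) + b * math.log1p(-x))
    front = math.exp(log_front)
    # Use I_x(a, b) = 1 - I_{1-x}(b, a) on the side where the fraction converges fast.
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _beta_cf(a, b, x) / a
    return 1.0 - front * _beta_cf(b, a, 1.0 - x) / b


def f_cdf(x, df1, df2):
    """Cumulative probability P(F <= x) for an F(df1, df2) random variable."""
    if x <= 0.0:
        return 0.0
    return reg_inc_beta(df1 / 2.0, df2 / 2.0, df1 * x / (df1 * x + df2))


def flag_influential(cooks_d, p, n):
    """Hypothetical helper: indices whose Di has cumulative F(p, n - p) probability > 0.5."""
    return [i for i, d in enumerate(cooks_d) if f_cdf(d, p, n - p) > 0.5]


def leverage_cutoff(p, n):
    """High-leverage cutoff min(0.99, 3p/n) used in the solution above."""
    return min(0.99, 3.0 * p / n)


# Made-up Di values (NOT from the HSB data); p = 4 and n = 600 as in Question 7.
example_d = [0.001, 0.02, 1.5]
print(flag_influential(example_d, p=4, n=600))  # -> [2]
print(leverage_cutoff(4, 600))  # -> 0.02
```

Comparing each Di with the F median is the same test as asking whether its cumulative F probability exceeds 0.5, which is why the sketch only needs the F cumulative distribution and not its inverse.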