home2-13

advertisement

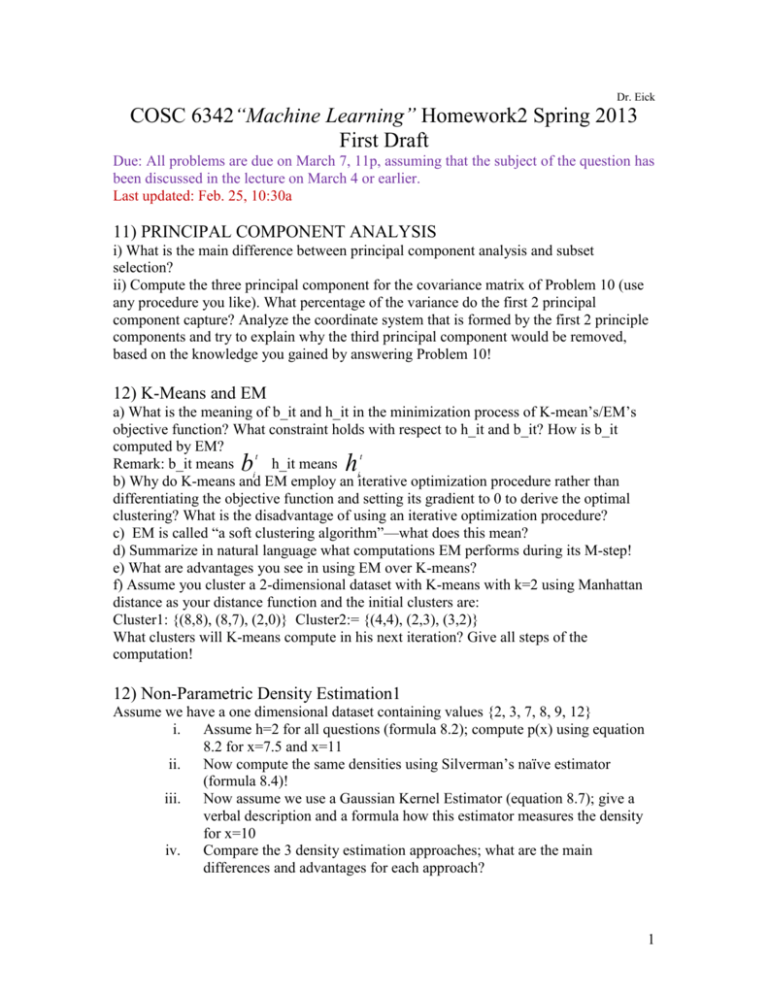

Dr. Eick

COSC 6342“Machine Learning” Homework2 Spring 2013

First Draft

Due: All problems are due on March 7, 11p, assuming that the subject of the question has

been discussed in the lecture on March 4 or earlier.

Last updated: Feb. 25, 10:30a

11) PRINCIPAL COMPONENT ANALYSIS

i) What is the main difference between principal component analysis and subset

selection?

ii) Compute the three principal component for the covariance matrix of Problem 10 (use

any procedure you like). What percentage of the variance do the first 2 principal

component capture? Analyze the coordinate system that is formed by the first 2 principle

components and try to explain why the third principal component would be removed,

based on the knowledge you gained by answering Problem 10!

12) K-Means and EM

a) What is the meaning of b_it and h_it in the minimization process of K-mean’s/EM’s

objective function? What constraint holds with respect to h_it and b_it? How is b_it

computed by EM?

t

t

Remark: b_it means

h_it means

i

i

b) Why do K-means and EM employ an iterative optimization procedure rather than

differentiating the objective function and setting its gradient to 0 to derive the optimal

clustering? What is the disadvantage of using an iterative optimization procedure?

c) EM is called “a soft clustering algorithm”—what does this mean?

d) Summarize in natural language what computations EM performs during its M-step!

e) What are advantages you see in using EM over K-means?

f) Assume you cluster a 2-dimensional dataset with K-means with k=2 using Manhattan

distance as your distance function and the initial clusters are:

Cluster1: {(8,8), (8,7), (2,0)} Cluster2:= {(4,4), (2,3), (3,2)}

What clusters will K-means compute in his next iteration? Give all steps of the

computation!

b

h

12) Non-Parametric Density Estimation1

Assume we have a one dimensional dataset containing values {2, 3, 7, 8, 9, 12}

i.

Assume h=2 for all questions (formula 8.2); compute p(x) using equation

8.2 for x=7.5 and x=11

ii. Now compute the same densities using Silverman’s naïve estimator

(formula 8.4)!

iii. Now assume we use a Gaussian Kernel Estimator (equation 8.7); give a

verbal description and a formula how this estimator measures the density

for x=10

iv.

Compare the 3 density estimation approaches; what are the main

differences and advantages for each approach?

1

13) Non-parametric Density Estimation2

a) Assume a dataset X={xt,rt}consisting of 4 examples (0,1), (1,3), (2,7),(4,1) is given

and the bin-width is 2.5: assume that x and x’ belong to the same bin if |x-x’|2.5.

a1) Compute the values (also give the formula) for the regressogram for inputs 0.2, 1.7,

and 4.6 for the mean smoother (see formula 8.19 on page 175 of the textbook).

ĝ(0.2)=

ĝ(1.7)=

ĝ(4.2)=

Now assume the bin-width is only 1. Recompute the prediction for input 1.7!

ĝ(1.7)=

a2) In general the function obtained using the above approach has discontinuities. What

could be done to obtain a continuous function?

b) What is the main difference between the Gaussian Kernel Density function approach

as described in Section 8.2.2 of the textbook and the k-nearest Neighbor Density

Estimator that has been described in Section 8.2.3?

c) What advantages you see in using non-parametric density estimation approach

compared to parametric density approaches, such as multivariate Gaussians? [3]

14) DBSCAN

a) How does DBSCAN form clusters?

b) What is a border point when using DBSCAN?

2