Basics of face detection and facial recognition


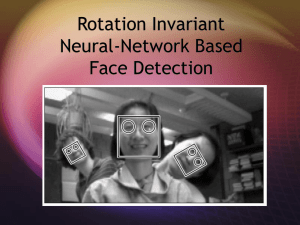

Roland Matusinka
Óbuda University, John von Neumann Faculty of Informatics
Phone: (36)-(30)-782-4470, matusinka.roland@freemail.hu

Abstract: Face detection and recognition are among the most widely used technologies today. From social networking sites to CCTV systems, they can be found almost anywhere. The goal of this paper is to introduce the reader to the basics of these technologies.

I. INTRODUCTION

The aim of face detection algorithms is to determine the size and location of human faces in digital images, ignoring everything else, such as trees or other objects in the image [1]. This can be achieved either by using a reference image containing known faces or by finding key features of a face, such as the eyes, the nose, or the mouth. In the first case the faces are matched bitwise, meaning that any slight alteration in the processed image (such as a different facial expression) can cause the matching to fail.

Once a face has been found in the image, facial recognition can be used to determine who that face belongs to. Facial recognition systems extract landmarks and unique features from a face and try to match them against a facial database. This database can consist of geometric (structural) data of faces or of a gallery of images used as reference data.

II. FACE DETECTION

Given an arbitrary image, the goal of face detection is to determine whether or not there are any faces in the image and, if present, to return the image location and extent of each face. Some challenges of face detection are the following [2]:

Pose: There is no guarantee that a person will look straight into the camera every time a picture is taken of him or her. More often than not, a person's face will be at an angle to the camera (frontal, 45-degree, profile, upside-down, etc.), and thus the proportions of the face will be altered.
Presence or absence of features: Facial features such as beards, mustaches, and glasses may or may not be present, and these features vary greatly in size, color, and shape.

Facial expression: The appearance of a face is affected by the person's facial expression.

Occlusion: Faces can be partially occluded by other objects. In an image of a group of people, some faces may be partially occluded by other faces.

Image orientation: Face images vary with different rotations about the camera's optical axis.

Image condition: When the image is formed, factors such as the light sources and their distribution, as well as the properties of the camera, affect the appearance of a face.

Face detection has many closely related problems. Face localization aims to determine the image position of a single face; it is a simplified detection problem that assumes the image contains only one face. The goal of facial feature detection is to detect the presence and location of certain facial features, such as the eyes, nose, nostrils, eyebrows, lips, mouth, and ears, again assuming that there is only one face in the image. Face recognition, or face identification, compares an input image (probe) against a database (gallery) and reports a match, if any. Face authentication, face tracking, and facial expression recognition are also worth mentioning as common related problems. Face detection is the first step in any automated system that solves the above problems [2].

There are different approaches to face detection. They are often divided into four categories. These categories may overlap, so an algorithm can belong to two or more of them [3]:

Knowledge-based methods: Rule-based methods that capture our knowledge of human faces and translate it into a set of rules.

Feature-invariant methods: Algorithms that try to find structural features of a face that persist under any condition (different pose, different lighting, etc.).
The above methods are mainly used for face localization.

Template matching methods: These algorithms compare input images with stored patterns of faces or features. They are used for both face localization and face detection.

Appearance-based methods: A specialization of template matching methods in which the pattern database is learnt from a set of training images. These methods are designed for use in face detection.

III. FACE RECOGNITION

The core problem of face recognition is to extract information from photographs. This feature extraction process can be defined as the procedure of extracting relevant information from a face image. This information must be valuable for the later step of identifying a subject with an acceptable error rate.

There are many feature extraction algorithms, and most of them are also used in areas other than face recognition. Researchers in face recognition have used, modified, and adapted many algorithms and methods for their purposes.

Feature selection algorithms aim to select the subset of the extracted features that yields the smallest classification error. Because the criterion is the classification error, feature selection depends on the classification method used. The most straightforward approach would be to examine every possible subset and choose the one that best satisfies the criterion function. However, the number of subsets grows exponentially with the number of features (n features have 2^n subsets), so this can become unaffordable in terms of computational time. Therefore researchers aim to create a satisfactory algorithm rather than an optimal one [3].

Once features are extracted and selected, the next step is to classify the image. Appearance-based face recognition algorithms use a wide variety of classification methods, and sometimes two or more classification methods are combined to achieve better results. Model-based methods, on the other hand, match the sample with a template or model, after which a learning method can be used to improve the algorithm. Either way, classifiers have a big impact on face recognition.
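The trade-off between exhaustive and greedy feature selection described in Section III can be illustrated with a small Python sketch. It is a toy built on assumptions, not the method of [3]: the criterion function is taken to be the leave-one-out error of a 1-nearest-neighbor classifier (one possible choice, which also shows why feature selection depends on the classifier), the greedy strategy is standard sequential forward selection, and the feature vectors and the helper names `loo_1nn_error`, `exhaustive_selection` and `forward_selection` are hypothetical.

```python
import math
from itertools import combinations


def loo_1nn_error(samples, labels, features):
    """Leave-one-out error rate of a 1-nearest-neighbor classifier that only
    looks at the feature indices in `features` (the assumed criterion function)."""
    errors = 0
    for i, x in enumerate(samples):
        best_dist, best_label = math.inf, None
        for j, y in enumerate(samples):
            if i == j:
                continue  # leave the probe itself out
            dist = sum((x[f] - y[f]) ** 2 for f in features)
            if dist < best_dist:
                best_dist, best_label = dist, labels[j]
        if best_label != labels[i]:
            errors += 1
    return errors / len(samples)


def exhaustive_selection(samples, labels, k):
    """Optimal search: evaluate every k-feature subset (C(n, k) evaluations)."""
    n = len(samples[0])
    return min(combinations(range(n), k),
               key=lambda subset: loo_1nn_error(samples, labels, subset))


def forward_selection(samples, labels, k):
    """Greedy sequential forward selection: in each round, add the single
    feature that lowers the criterion most. Fast, but not guaranteed optimal."""
    n = len(samples[0])
    selected = []
    while len(selected) < k:
        remaining = [f for f in range(n) if f not in selected]
        best = min(remaining,
                   key=lambda f: loo_1nn_error(samples, labels, selected + [f]))
        selected.append(best)
    return tuple(sorted(selected))


# Hypothetical feature vectors of two subjects: features 0 and 2 separate
# the subjects, features 1 and 3 are noise.
X = [
    [1.0, 5.0, 0.0, 3.0], [1.1, 1.0, 0.1, 7.0], [0.9, 9.0, 0.2, 1.0],  # subject A
    [3.0, 5.2, 2.0, 6.9], [3.1, 1.1, 2.1, 1.1], [2.9, 8.9, 1.9, 3.1],  # subject B
]
y = ["A", "A", "A", "B", "B", "B"]

print(exhaustive_selection(X, y, 2))  # (0, 2)
print(forward_selection(X, y, 2))     # (0, 2)
```

On this toy data both strategies find the two informative features. The difference is cost: the exhaustive search evaluates every subset, whose number grows combinatorially with the number of features, while forward selection evaluates at most n candidates per round. On real data the greedy result can be worse than the optimum, which is exactly the "satisfactory rather than optimal" compromise described above.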
Three concepts are key to building a classifier: similarity, probability, and decision boundaries [3].

Similarity: Patterns that are similar should belong to the same class. The idea is to establish a metric that defines similarity and a representation of each class.

Probability: Some classifiers are built on a probabilistic approach, often using the Bayes decision rule. The rule can be modified to take into account different factors that could lead to misclassification. When the underlying probability distributions are known, Bayesian decision rules give an optimal classifier, and the Bayes error can be the best criterion for evaluating features. In this sense, classifiers based on probability functions can be optimal.

Decision boundaries: Depending on the chosen metric, this approach can become equivalent to a Bayesian classifier. The main idea behind it is to minimize a criterion (a measurement of error) between the candidate pattern and the testing patterns.

IV. PROBLEMS OF FACE RECOGNITION

Illumination. Many algorithms rely on color information to recognize faces. Features are extracted from color images, although some input images may be grayscale. The color that we perceive from a given surface depends not only on the nature of the surface but also on the light falling on it. As many feature extraction methods rely on measures of color or intensity variability between pixels to obtain relevant data, they depend strongly on lighting changes. Not only can the light sources vary, but light intensities may also increase or decrease, and new light sources may be added. Entire face regions may be obscured or in shadow, and feature extraction can become impossible because of solarization. The main problem is that two images of the same subject taken under different illumination can differ more from each other than from an image of another subject [3].

Pose. Pose variation and illumination are the two main problems researchers face in face recognition. The majority of face recognition methods are based on frontal face images.
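To make the illumination problem of Section IV concrete, the Python sketch below compares raw pixel distances between tiny, hypothetical six-pixel "face images". Halving the light makes two images of the same subject farther apart than images of two different subjects, which is the effect [3] warns about. Normalizing each image to zero mean and unit variance (a simple global illumination compensation chosen here for illustration, not a technique taken from the paper) removes that effect, but only for uniform brightness and contrast changes; it does not handle shadows or solarization. The pixel values and the helper names `euclidean` and `zscore` are assumptions.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two flattened images of equal size."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def zscore(image):
    """Shift and scale pixel values to zero mean and unit variance.
    Compensates only for global (uniform) brightness/contrast changes."""
    mean = sum(image) / len(image)
    std = math.sqrt(sum((p - mean) ** 2 for p in image) / len(image))
    if std == 0:
        return [0.0] * len(image)  # flat image: nothing to normalize
    return [(p - mean) / std for p in image]


# Hypothetical six-pixel grey-level "face images" (0-255).
face_a = [100, 150, 200, 120, 180, 90]     # subject A, normal lighting
face_b = [110, 140, 190, 130, 170, 100]    # subject B, same lighting
face_a_dark = [0.5 * p for p in face_a]    # subject A, half the light

raw_same = euclidean(face_a, face_a_dark)  # same subject, different light
raw_diff = euclidean(face_a, face_b)       # different subjects, same light
norm_same = euclidean(zscore(face_a), zscore(face_a_dark))
norm_diff = euclidean(zscore(face_a), zscore(face_b))

print(f"raw:        same subject {raw_same:.2f}, other subject {raw_diff:.2f}")
print(f"normalized: same subject {norm_same:.2f}, other subject {norm_diff:.2f}")
```

Without normalization, the distance between the two images of subject A is several times larger than the distance to subject B; after normalization the two images of subject A practically coincide and the ordering is reversed.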
Recognition algorithms must take into account the constraints of the recognition application. Many applications, such as video surveillance, video security systems, or augmented reality home entertainment systems, take input image data from an uncontrolled environment, which poses several obstacles for face recognition.

Other problems worth mentioning are occlusion, optical technology (the format of input images), and facial expressions.

REFERENCES

[1] Face detection, Wikipedia. http://en.wikipedia.org/wiki/Face_detection Last visited: 2014. 04. 27.
[2] Ming-Hsuan Yang, David J. Kriegman, Narendra Ahuja: Detecting Faces in Images: A Survey. http://homepages.cae.wisc.edu/~ece738/notes/Yang02.pdf Last visited: 2014. 04. 27.
[3] Ion Marqués: Face Recognition Algorithms. http://www.ehu.es/ccwintco/uploads/e/eb/PFC-IonMarques.pdf Last visited: 2014. 04. 27.