ON ASSURING VALID MEASURES FOR THEORETICAL MODELS

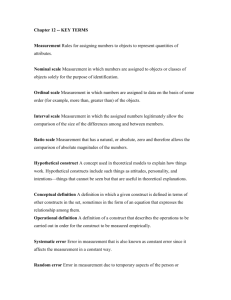
