simplex3

advertisement

(User.programs)

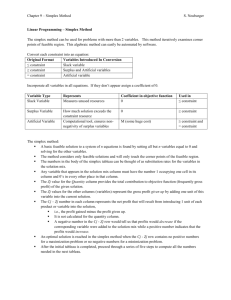

Item: 437 by akcs.rmarimon@hpcvbbs.cv.hp.com [Ricardo Jose Marimon]

Subj: Solving Linear Programming Problems with SIMPLEX3

Date: 14 Nov 1992

This solves linear programs using a "pseudo revised" simplex method.

It works very fast. Take a look at it!!!

[Note: "Linear Programming Problems" is mathematical jargon for what is

simply a system of linear inequalities with the goal of maximizing a

particular variable.

Concrete example: Suppose that the Sierra Cement Company has several

cement trucks, drivers, delivery routes, and types of cement. It is

known that the sum of the costs of the delivery routes plus the drivers'

pay must fall below some particular maximum. And the other variables

are

similarly tied to given maximum values. These inequalities are called

"constraints". If Sierra Cement wants to maximize their profit, they

could spend all day doing "what-if" tinkering on a spreadsheet on a

computer, or they could solve it in a few seconds using the Simplex

method, which kicks out the absolute maximum possible profit, and the

exact values of all the variables required to produce that maximum.

Nice,

eh? Every business should use it, but few have even heard about it.

Complete details can be found in any textbook about "Finite

Mathematics". -jkh-]

SIMPLEX3 can solve a problem with 8 variables and 5 constraints in less

than 15 seconds performing 5 iterations on the tableau.

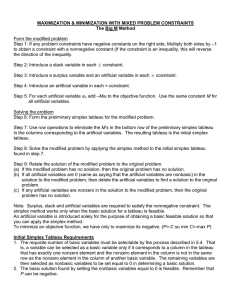

The problem this program is intended to solve is of the form,

min

st

c.x

A.x = b

x >= 0

(* b must be greater than zero *)

The way the problem is entered is as a matrix of the following form,

[ c | 0 | 0 ]

[---+---+---]

[ A | I | b ]

Where I is the matrix formed by adding the slack and excess variables.

I is NOT necessarily the identity matrix.

For example to solve the following problem

max x1 + 2 x2

st

x1 + x2

x1 - x2 +

x2 +

x1 + x2 +

+ x3

<= 3

x3 <= 3

x3 <= 3

x3 >= 3

x2

<= 2

x1, x2, x3 >=0

We convert it to the standard form

min - x1 - 2 x2 - x3

st

x1 + x2

+ x4

x1 - x2 + x3

+ x5

x2 + x3

+ x6

x1 + x2 + x3

- x7

x2

+ x8

x1, x2, x3 >= 0

=

=

=

=

=

3

3

3

3

2

And write it as a matrix to enter it in the program.

[[ -1 -2 -1 0 0 0 0 0

[ 1 1 0 1 0 0 0 0

[ 1 -1 1 0 1 0 0 0

[ 0 1 1 0 0 1 0 0

[ 1 1 1 0 0 0 -1 0

[ 0 1 0 0 0 0 0 1

0

3

3

3

3

2

]

]

]

]

]

]]

This example matrix is included in the SIMPLEX3 directory under the

name "P".

To solve this problem right away, enter the matrix [just press {alpha}

P {enter} -jkh-], press the [INIT] key which initializes some of the

variables used by the program and then press the [SOLVE] key which

iterates and gives the solution in three parts as follows,

3:

2:

1:

:X: [ 2 .999999999998 2 0 0 0 2 1 ]

:\Gl: [ -1 0 -1 0 0 ]

:Z: -6

Where x is the actual point that minimizes the problem, the \Gl (lambda)

is the solution to the dual problem or shadow prices of each of the

constraints, and Z is the objective function value at the point found to

be the minimum.

This is the end of the quick start procedure to solve linear programming

problems with my program. Now lets go to some details...

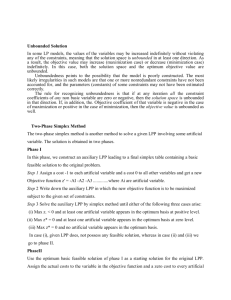

The solutions of LP (linear programming problems) can be classified into:

unfeasible, when there is no point satisfying the set of constraints

unbounded, when the set of constraints can not restrict the decrease

of the objective function (c.x)

feasible & bounded, when there is a solution to the set of

constraints

which minimizes the objective function value.

The problem can handle all three cases, giving the solution when it is

feasible and bounded, providing a ray (which is a vector through which

you can infinitely decrease the objective function while remaining in

the feasible region) when the solution is unbounded, or flagging that

there is no feasible solution.

To achieve the different solutions, the program goes through the two

phase simplex method automatically (you don't have to include artificial

variables). Phase 1 is used to derive a starting basic feasible solution

by introducing a set of artificial variables whith the objective of

minimizing its sum; when this is achieved, the set of artificial

variables is removed and Phase 2 starts with the original objective

function. (refer to any LP book for more details into the 2 phase

simplex method) One important thing to notice, is that the columns

corresponding to the artificial variables, although not taken into

account on phase 2, are not removed from the problem because it is

sometimes usefull to have these columns at the end of the problem in

order to perform sensitivity analysis.

The basic algorithm that I used to solve the problem is a pseudo

revised simplex method, where all that is maintained is the inverse of

the base, the set of basic and non basic variables, and the rest of

the data provided at the initialization process. I think this is

the most effective procedure in terms of time because it requires less

computations...

Here's a brief explanation of what the programs in the directory do.

This first set of programs are the ones intended to be used directly

to solve the problem:

[INIT]

Initializes the set of variables that are needed by the

program.

[TABL] Provides the current tableau.

[SOLU] Extracts the solution from the current tableau.

[SRAY] Extracts a ray from an unbounded LP.

[TRACE] Performs one iteration at a time; it is equivalent to solve,

but you can take a look at the partial tableaus that are

generated by the procedure. [Great for homework or exams in

Finite Mathematics courses, where you must show "your" work!

-jkh-]

[SOLVE] Gives the answer to the LP if it exists.

This second set of programs should not be used directly unless you

REALLY know what you're doing. There are some system-RPL programs

that can crash your system...

[ITER]

Given which variable enters and which variable exits,

this program performs the necessary iterations. Be careful

because the numbers that this programs expects are the

indices into the BVAR and NVAR variables corresponding to

the basic and non-basic variables.

[INVAR] Calculates which variable enters the base. It returns 0

if it is an optimum.

[OUTVAR] Given which variable enters the base, it gives which

variable must exit the base. It returns 0 if the solution

is unbounded.

[PH1]

Performs all the calculations necessary to start phase 1.

[PH2]

Same but for phase 2.

[MCHOP] Given a matrix and the contents of the 'ZERO' variable

if an element of the matrix is less than 'ZERO' in absolute

value it changes it to 0.

[MVIN] Calculates the min element such that it is strictly less

than zero. It returns the index into the array corresponding

to the element. It returns 0 if no negative elements are

found.

[MVOUT] It performs the ratio test among two vectors.

2: v2

1: v1

Returns i s.t. min over all i [ v1[i]/v2[i] for v2[i]>0 ]

Returns 0 if all v2[i] are less or equal to 0.

[MJOC] Given two matrices it joins them in the standard form

2: [ A ]

1: [ B ]

It returns [ A | B ]

[MSBC] It takes the columns of a column as specified by a list

with the numbers of the columns.

2: [ A ]

1: { 3 2 2 1} It returns [ A3 A2 A2 A1 ] where Ai represents

the ith column of A.

[MELM] This program is used to calculate the matrix that when

multiplied by the inverse of the base, gives the new

inverse.

2: [1 2 4]

1: 1

It returns [[ 1 0 0]

[-2 1 0]

[-4 0 1]

Multyplying by this matrix is equivalent to performing

row reduction in row 1 (as specified by element in level 1)

given that the column involved is specified by the element

in level 2.

[MBASE] It quickly calculates wether there is a base in the system

and if there is, which one it is. It returns a list such that

its elements correspond to the elements of the original matrix

that corresponds to the the columns of the identity.

[MSPL] It just separates the original problem form,

[ c | 0 | 0 ]

[---+---+---]

[ A | I | b ]

Into the matrices [ A | I ], [ b ], and [ c ].

[CLR14] Clears the top 14 rows of the display.

Here are the variables used by the above procedures. Do not play with

these variables during a run of the problem because you might get

incorrect answers.

[ZERO]

[BINV]

Threshold after which numbers are considered to be zero.

This in important because due to numeric problems, results

tend to show some rounding errors.

This is the inverse of the base.

[ACST]

[NVAR]

[BVAR]

[COST]

[DATA]

[RSRC]

[ARTI]

[COLS]

[ROWS]

Actual cost function being used, it changes from phase 1

to phase 2.

Non basic variables.

Basic variables, in the order in which they are in the

inverse of the base.

The original objective function coefficients of the problem.

The matrix [ c ]

The matrix [ A | I ]

The matrix [ b ]

Number of artificial variables included.

Number of variables in the problem, excluding artificials.

Number of constraints in the problem.

The custom menu provides a quick access to all of these variables.

I hope that most of the question will be answered by the above brief

description, but you can always contact me, and if I have time I will

get back yo you fairly soon.

Ricardo Marimon

28-D Escondido Village

Stanford CA 94305

Email: rmarimon@leland.stanford.edu

Most of the bugs have already been worked out, but as Murphy says, there

is always one more bug... Please send any bug reports to my email

address...

Of course, I am not responsible for any damage that this program might

cause to your calculator, your job, yout studies, or your life... :-)

But again, I haven't had any troubles for months.