4.10 Some Derivations and Proofs (Optional)

4.10.1 Proof that the Probability Function of the Hypergeometric Distribution Sums to 1

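We consider the identity

$$(1+a)^{N_1}(1+a)^{N-N_1} = (1+a)^{N} \tag{4.10.1}$$

The coefficient of $a^n$ in the expansion of the right-hand side is $\binom{N}{n}$. The left-hand side of (4.10.1) can be written as

$$\left[1 + \binom{N_1}{1}a + \cdots + \binom{N_1}{x}a^{x} + \cdots + a^{N_1}\right]\left[1 + \binom{N-N_1}{1}a + \cdots + \binom{N-N_1}{n-x}a^{n-x} + \cdots + a^{N-N_1}\right] \tag{4.10.2}$$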

Thus, the coefficient of $a^n$ in the expansion of the left-hand side of (4.10.1) is seen to be

$$\sum_{x}\binom{N_1}{x}\binom{N-N_1}{n-x}$$

where the summation is over values of $x$ satisfying

$$\max[0,\; n-(N-N_1)] \le x \le \min(n,\; N_1)$$
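As a quick numerical illustration of this coefficient argument, the sketch below multiplies the two binomial expansions as coefficient lists, reads off the coefficient of $a^n$, and compares it with the sum over the admissible range of $x$. The helper names `poly_mul` and `coeff_via_sum` and the values of `N`, `N1`, and `n` are illustrative assumptions, not part of the text.

```python
from math import comb


def poly_mul(p, q):
    """Multiply two polynomials given as coefficient lists (index = power of a)."""
    out = [0] * (len(p) + len(q) - 1)
    for i, pi in enumerate(p):
        for j, qj in enumerate(q):
            out[i + j] += pi * qj
    return out


def coeff_via_sum(N, N1, n):
    """Sum of C(N1, x) * C(N - N1, n - x) over the admissible range of x."""
    lo, hi = max(0, n - (N - N1)), min(n, N1)
    return sum(comb(N1, x) * comb(N - N1, n - x) for x in range(lo, hi + 1))


# Illustrative values (assumed for this sketch, not taken from the text).
N, N1, n = 12, 5, 6

# Coefficient lists of (1 + a)^N1 and (1 + a)^(N - N1).
left = poly_mul([comb(N1, k) for k in range(N1 + 1)],
                [comb(N - N1, k) for k in range(N - N1 + 1)])

# Coefficient of a^n from the product, from the sum, and from (1 + a)^N.
print(left[n], coeff_via_sum(N, N1, n), comb(N, n))
```

The third printed number is $\binom{N}{n}$, the coefficient of $a^n$ on the right-hand side of (4.10.1); for any valid choice of `N`, `N1`, and `n` the three numbers should coincide.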

As the coefficients of $a^n$ on the left and right sides of (4.10.1) must be equal, we have

$$\sum_{x}\binom{N_1}{x}\binom{N-N_1}{n-x} = \binom{N}{n}$$

and division by $\binom{N}{n}$ yields

$$\sum_{x} h(x) = 1 \tag{4.10.3}$$

4.10.2 Mean and Variance of the Hypergeometric Distribution

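Given the hypergeometric distribution having probability function

$$h(x) = \frac{\binom{N\theta}{x}\binom{N(1-\theta)}{n-x}}{\binom{N}{n}} \tag{4.10.4}$$

we wish to show that its mean $\mu$ and variance $\sigma^2$ are given by

$$\mu = n\theta, \qquad \sigma^2 = \left(\frac{N-n}{N-1}\right) n\theta(1-\theta)$$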
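Before working through the derivation, the two claimed formulas can be sanity-checked by direct summation. The sketch below uses exact rational arithmetic so that the comparison is free of rounding; the function names and the values of `N`, `N1` (standing for $N\theta$), and `n` are assumptions made only for illustration.

```python
from fractions import Fraction
from math import comb


def hypergeom_pmf(x, N, N1, n):
    """h(x) of (4.10.4), with N*theta written as the integer N1."""
    return Fraction(comb(N1, x) * comb(N - N1, n - x), comb(N, n))


def hypergeom_moments(N, N1, n):
    """Return (total probability, mean, variance) by direct summation."""
    lo, hi = max(0, n - (N - N1)), min(n, N1)
    probs = {x: hypergeom_pmf(x, N, N1, n) for x in range(lo, hi + 1)}
    total = sum(probs.values())
    mean = sum(x * p for x, p in probs.items())
    var = sum(x * x * p for x, p in probs.items()) - mean ** 2
    return total, mean, var


# Illustrative values (assumed, not from the text): N = 50, N1 = 20, n = 8.
N, N1, n = 50, 20, 8
theta = Fraction(N1, N)
total, mean, var = hypergeom_moments(N, N1, n)

# Direct sums on the first line, closed-form expressions on the second.
print(total, mean, var)
print(1, n * theta, Fraction(N - n, N - 1) * n * theta * (1 - theta))
```

Because `Fraction` is exact, agreement between the two printed lines is an exact equality for the chosen parameters rather than a floating-point coincidence. It is a check on one case, not a proof.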

Using (4.2.1), note that to find the mean we must evaluate the sum

$$\sum_{x} x\,h(x),$$

where the sum extends over the entire sample space of $X$. Since $h(x)$ is a probability distribution, we have

$$\sum_{x} h(x) = 1,$$

and hence

$$\sum_{x}\binom{N\theta}{x}\binom{N(1-\theta)}{n-x} = \binom{N}{n} \tag{4.10.5}$$

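To evaluate $\sum_x x\,h(x)$, we must consider the sum

$$\sum_{x} x\binom{N\theta}{x}\binom{N(1-\theta)}{n-x} \tag{4.10.6}$$

But

$$x\binom{N\theta}{x} = N\theta\binom{N\theta-1}{x-1}$$

for $x > 0$, and hence the sum in (4.10.6) may be expressed as

$$N\theta\sum_{x}\binom{N\theta-1}{x-1}\binom{N(1-\theta)}{(n-1)-(x-1)} \tag{4.10.7}$$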

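It can be seen from (4.10.5), applied with $N$, $N\theta$, and $n$ replaced by $N-1$, $N\theta-1$, and $n-1$, that the sum in (4.10.7) has the value $N\theta\binom{N-1}{n-1}$. Hence,

$$\mu = \frac{N\theta\binom{N-1}{n-1}}{\binom{N}{n}} \tag{4.10.8}$$

which reduces to

$$\mu = n\theta \tag{4.10.8a}$$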
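The reduction of (4.10.8) to (4.10.8a) is a short factorial computation. Written out as a supplementary step (not part of the original derivation):

$$\mu = N\theta\,\frac{\binom{N-1}{n-1}}{\binom{N}{n}} = N\theta\cdot\frac{(N-1)!}{(n-1)!\,(N-n)!}\cdot\frac{n!\,(N-n)!}{N!} = N\theta\cdot\frac{n}{N} = n\theta$$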

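Using (4.2.2a) for $\sigma^2$, we have

$$\begin{aligned}
\sigma^2 &= \sum_{x} x^2 h(x) - (n\theta)^2 \\
&= \sum_{x} [x(x-1) + x]\,h(x) - (n\theta)^2 \\
&= \sum_{x} x(x-1)\,h(x) + n\theta - (n\theta)^2
\end{aligned} \tag{4.10.9}$$

since $\sum_x x\,h(x) = n\theta$ and $x^2 = x(x-1) + x$.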

Thus, the problem of obtaining the value of $\sigma^2$ for the hypergeometric distribution reduces to the evaluation of $\sum_x x(x-1)\,h(x)$, that is, the evaluation of

$$\sum_{x} x(x-1)\binom{N\theta}{x}\binom{N(1-\theta)}{n-x} \tag{4.10.10}$$

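But for $x \ge 2$, we have that

$$x(x-1)\binom{N\theta}{x} = (N\theta)(N\theta-1)\binom{N\theta-2}{x-2}$$

Hence, (4.10.10) reduces to

$$(N\theta)(N\theta-1)\sum_{x}\binom{N\theta-2}{x-2}\binom{N(1-\theta)}{(n-2)-(x-2)} = (N\theta)(N\theta-1)\binom{N-2}{n-2}$$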
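The two steps just used, the term-by-term identity for $x(x-1)\binom{N\theta}{x}$ and the closed form of the resulting sum, can be checked with integer arithmetic. In this sketch `N1` stands for $N\theta$; the function names and parameter values are illustrative assumptions.

```python
from math import comb


def lhs_sum(N, N1, n):
    """Sum (4.10.10): x(x-1) * C(N1, x) * C(N - N1, n - x) over admissible x."""
    lo, hi = max(0, n - (N - N1)), min(n, N1)
    return sum(x * (x - 1) * comb(N1, x) * comb(N - N1, n - x)
               for x in range(lo, hi + 1))


def rhs_closed_form(N, N1, n):
    """Closed form N1 * (N1 - 1) * C(N - 2, n - 2), valid for n >= 2."""
    return N1 * (N1 - 1) * comb(N - 2, n - 2)


# Illustrative values (assumed, not from the text).
N, N1, n = 30, 12, 7

# Term-by-term identity x(x-1) C(N1, x) = N1 (N1-1) C(N1-2, x-2) for x >= 2.
identity_holds = all(
    x * (x - 1) * comb(N1, x) == N1 * (N1 - 1) * comb(N1 - 2, x - 2)
    for x in range(2, N1 + 1)
)

print(identity_holds, lhs_sum(N, N1, n), rhs_closed_form(N, N1, n))
```

Dividing either printed integer by $\binom{N}{n}$ gives $\sum_x x(x-1)\,h(x)$, the quantity needed in (4.10.9).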

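Thus, we have, from (4.10.9) and the above, that

$$\sigma^2 = \frac{(N\theta)(N\theta-1)\binom{N-2}{n-2}}{\binom{N}{n}} + n\theta - (n\theta)^2$$

which, since $\binom{N-2}{n-2}\big/\binom{N}{n} = \dfrac{n(n-1)}{N(N-1)}$, simplifies after some algebra to

$$\sigma^2 = \left(\frac{N-n}{N-1}\right) n\theta(1-\theta) \tag{4.10.11}$$

4.10.3 Mean and Variance of the Binomial Distribution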

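The binomial distribution has probability function

$$b(x) = \binom{n}{x}\theta^{x}(1-\theta)^{n-x}, \qquad x = 0, 1, \ldots, n \tag{4.10.12}$$

and we wish to show that its mean $\mu$ and variance $\sigma^2$ are given by

$$\mu = n\theta, \qquad \sigma^2 = n\theta(1-\theta)$$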

The mean $\mu$ is defined as

$$\mu = \sum_{x=0}^{n} x\binom{n}{x}\theta^{x}(1-\theta)^{n-x} \tag{4.10.13}$$

We note that for $x = 0$ the first term in (4.10.13) is zero, so the summation is effectively from $x = 1$ to $n$. Further, for $x \ge 1$, we have that

$$x\binom{n}{x} = n\binom{n-1}{x-1}.$$

Hence

$$\mu = n\theta\sum_{x=1}^{n}\binom{n-1}{x-1}\theta^{x-1}(1-\theta)^{(n-1)-(x-1)} = n\theta[(1-\theta)+\theta]^{n-1}$$

That is,

$$\mu = n\theta \tag{4.10.14}$$

Using the same technique as in (4.10.9), namely that $x^2 = x(x-1) + x$, we have that the variance $\sigma^2$ is given by

$$\sigma^2 = \sum_{x=0}^{n} x(x-1)\,b(x) + n\theta - (n\theta)^2 \tag{4.10.15}$$

since $\sum_{x=0}^{n} x\,b(x) = n\theta$. Inserting the expression for $b(x)$, we obtain for the first term of the right-hand side of (4.10.15),

$$\sum_{x=0}^{n} x(x-1)\binom{n}{x}\theta^{x}(1-\theta)^{n-x}$$

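Now since the terms in the above summation for which $x = 0$ and $x = 1$ are zero, this summation reduces to

$$n(n-1)\theta^2\sum_{x=2}^{n}\binom{n-2}{x-2}\theta^{x-2}(1-\theta)^{(n-2)-(x-2)} = n(n-1)\theta^2[(1-\theta)+\theta]^{n-2} = n(n-1)\theta^2$$

Hence we obtain

$$\sigma^2 = n(n-1)\theta^2 + n\theta - (n\theta)^2$$

and we then have

$$\sigma^2 = n\theta(1-\theta) \tag{4.10.16}$$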
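The binomial results can be confirmed for a particular case by evaluating the defining sums exactly. The sketch below follows the same route as the derivation, computing $\sum x\,b(x)$ and $\sum x(x-1)\,b(x)$ and then combining them via $x^2 = x(x-1) + x$. The function names and the values of `n` and `theta` are assumptions chosen for illustration.

```python
from fractions import Fraction
from math import comb


def binom_pmf(x, n, theta):
    """b(x) of the binomial distribution; exact when theta is a Fraction."""
    return comb(n, x) * theta ** x * (1 - theta) ** (n - x)


def binom_mean_var(n, theta):
    """Mean, second factorial moment, and variance from the defining sums."""
    mean = sum(x * binom_pmf(x, n, theta) for x in range(n + 1))
    second_factorial = sum(x * (x - 1) * binom_pmf(x, n, theta)
                           for x in range(n + 1))
    # Variance via x^2 = x(x-1) + x, as in (4.10.15).
    var = second_factorial + mean - mean ** 2
    return mean, second_factorial, var


# Illustrative values (assumed, not from the text).
n, theta = 12, Fraction(3, 10)
mean, second_factorial, var = binom_mean_var(n, theta)

# Direct sums on the first line, closed forms on the second.
print(mean, second_factorial, var)
print(n * theta, n * (n - 1) * theta ** 2, n * theta * (1 - theta))
```

Passing a `Fraction` for `theta` keeps every term exact, so the two printed lines can be compared as exact rationals.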

4.10.4 Mean and Variance of the Poisson Distribution

The Poisson distribution has probability function

$$p(x) = \frac{e^{-\lambda}\lambda^{x}}{x!}, \qquad x = 0, 1, 2, \ldots \tag{4.10.17}$$

Its mean and variance both have the value $\lambda$ (the parameter appearing in the distribution itself).

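To obtain the value of the mean, we must evaluate the sum

$$\mu = \sum_{x=0}^{\infty} x\,\frac{e^{-\lambda}\lambda^{x}}{x!} = \sum_{x=1}^{\infty} x\,\frac{e^{-\lambda}\lambda^{x}}{x!}$$

which reduces to

$$\mu = \lambda e^{-\lambda}\sum_{(x-1)=0}^{\infty}\frac{\lambda^{x-1}}{(x-1)!} = \lambda e^{-\lambda}e^{\lambda} = \lambda \tag{4.10.18}$$

That is, the mean of the Poisson distribution is $\lambda$.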

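The variance $\sigma^2$ of the Poisson distribution (4.10.17) is (again using $x^2 = x(x-1) + x$) given by

$$\sigma^2 = \sum_{x=2}^{\infty} x(x-1)\,\frac{e^{-\lambda}\lambda^{x}}{x!} + \lambda - \lambda^2$$

But the sum reduces to

$$\lambda^2 e^{-\lambda}\sum_{(x-2)=0}^{\infty}\frac{\lambda^{x-2}}{(x-2)!} = \lambda^2 e^{-\lambda}e^{\lambda} = \lambda^2$$

We then have $\sigma^2 = \lambda^2 + \lambda - \lambda^2$, so that the variance of the Poisson distribution is

$$\sigma^2 = \operatorname{Var}(X) = \lambda.$$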
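Since the Poisson sums are infinite, a numerical check has to truncate them. The sketch below builds the probabilities recursively through $p(x) = p(x-1)\,\lambda/x$ and evaluates the truncated sums for the mean and the second factorial moment. The function names, the truncation point of 200 terms, and the rate `lam` are assumptions made for illustration.

```python
from math import exp


def poisson_pmf_terms(lam, terms):
    """First `terms` Poisson probabilities via p(x) = p(x-1) * lam / x."""
    probs = [exp(-lam)]
    for x in range(1, terms):
        probs.append(probs[-1] * lam / x)
    return probs


def truncated_moments(lam, terms=200):
    """Mean and variance from truncated sums, using x^2 = x(x-1) + x."""
    probs = poisson_pmf_terms(lam, terms)
    mean = sum(x * p for x, p in enumerate(probs))
    second_factorial = sum(x * (x - 1) * p for x, p in enumerate(probs))
    return mean, second_factorial + mean - mean ** 2


# Illustrative rate (assumed, not from the text).
lam = 3.5
mean, var = truncated_moments(lam)
print(mean, var)
```

For moderate values of `lam` the neglected tail is far below floating-point precision, so both printed values should match `lam` to many decimal places. For very large rates the truncation point would need to grow accordingly.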

4.10.5 Derivation of the Poisson Distribution

Suppose we assume that the probability of occurrence of an insulation break in a piece of wire $\Delta L$ units long, $\lambda\Delta L$, is independent of both the position on the wire (of length $L$ units) and the number of insulation breaks that occur elsewhere on the wire. Under these assumptions, and dividing up a piece of wire of length $L$ units into $n$ small pieces of length $\Delta L = L/n$ units each, we further assume that:

1. The probability that exactly one insulation break occurs in a specified piece of length $\Delta L$ units ($\Delta L$ small) is $\lambda\Delta L$.

2. The probability that no breaks occur in a piece of length $\Delta L$ is $1 - \lambda\Delta L$.

3. The probability that two or more breaks occur in a piece of wire of length $\Delta L$, which we express by $o(\Delta L)$, is such that $o(\Delta L)/\Delta L \to 0$ as $\Delta L \to 0$.

The event that $x$ breaks occur in a piece of wire of length $L + \Delta L$ is the union of the following mutually exclusive events:

(a) Exactly $x$ breaks occur in the piece of wire of length $L$ and none in the “bordering” piece of length $\Delta L$;

(b) Exactly $x - 1$ breaks occur in the piece of wire of length $L$ and exactly one in the “bordering” piece of length $\Delta L$;

(c) Exactly $x - i$ breaks occur in the piece of length $L$ and exactly $i$ in the “bordering” piece of length $\Delta L$, where $i = 2, \ldots, x$.

The probabilities of the events described in (a), (b), and (c) are respectively $p_x(L)(1 - \lambda\Delta L)$, $p_{x-1}(L)\lambda\Delta L$, and a quantity that we denote by $o(\Delta L)$, which goes to zero faster than $\Delta L$ goes to zero. Hence

$$p_x(L + \Delta L) = p_x(L)(1 - \lambda\Delta L) + p_{x-1}(L)\lambda\Delta L + o(\Delta L)$$

if $x = 1, 2, \ldots$. For $x = 0$,

$$p_0(L + \Delta L) = p_0(L)(1 - \lambda\Delta L) + o(\Delta L)$$

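These equations may be rewritten as follows:

$$\frac{p_x(L + \Delta L) - p_x(L)}{\Delta L} = \lambda[p_{x-1}(L) - p_x(L)] + \frac{o(\Delta L)}{\Delta L}, \qquad x = 1, 2, \ldots$$

$$\frac{p_0(L + \Delta L) - p_0(L)}{\Delta L} = -\lambda p_0(L) + \frac{o(\Delta L)}{\Delta L}, \qquad x = 0$$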

As $\Delta L \to 0$, that is, as we allow the length $L + \Delta L$ to shrink to $L$, we obtain the differential equations

$$\frac{d\,p_x(L)}{dL} = \lambda[p_{x-1}(L) - p_x(L)]$$

and

$$\frac{d\,p_0(L)}{dL} = -\lambda p_0(L).$$

If we define $p_0(0) = 1$ and let $p_x(0) = 0$ for $x \ge 1$, we obtain the solution

$$p_x(L) = \frac{(\lambda L)^{x} e^{-\lambda L}}{x!}, \qquad x = 0, 1, \ldots,$$

which is the Poisson distribution $p(x)$ given in (4.8.1) with distribution parameter $\lambda$ replaced by $\lambda L$.
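The passage from the difference equations to the Poisson solution can be illustrated numerically. The sketch below drops the $o(\Delta L)$ terms and iterates $p_x(L+\Delta L) = p_x(L)(1-\lambda\Delta L) + p_{x-1}(L)\lambda\Delta L$ over $n$ pieces of length $\Delta L = L/n$, starting from $p_0(0) = 1$, then compares the result with the closed-form solution. The function names, the rate, the wire length, and the cut-off `x_max` are illustrative assumptions.

```python
from math import exp, factorial


def iterate_break_probs(lam, L, n_pieces, x_max):
    """Iterate the difference equations without the o(dL) terms.

    Starts from p_0(0) = 1 and p_x(0) = 0 for x >= 1 and returns the
    list [p_0(L), ..., p_{x_max}(L)] after n_pieces steps of size L / n_pieces.
    """
    dL = L / n_pieces
    p = [1.0] + [0.0] * x_max
    for _ in range(n_pieces):
        new_p = [p[0] * (1 - lam * dL)]
        for x in range(1, x_max + 1):
            new_p.append(p[x] * (1 - lam * dL) + p[x - 1] * lam * dL)
        p = new_p
    return p


def poisson_pmf(x, mean):
    """Closed-form solution mean^x * e^{-mean} / x!, with mean = lam * L."""
    return mean ** x * exp(-mean) / factorial(x)


# Illustrative values (assumed): 0.8 breaks per unit length, wire length 5.
lam, L, x_max = 0.8, 5.0, 10
errors = {}
for n_pieces in (10, 100, 10_000):
    p = iterate_break_probs(lam, L, n_pieces, x_max)
    errors[n_pieces] = max(abs(p[x] - poisson_pmf(x, lam * L))
                           for x in range(x_max + 1))
    print(n_pieces, errors[n_pieces])
```

The largest discrepancy should shrink as the number of pieces grows, which mirrors the limit $\Delta L \to 0$ taken in the text. Truncating at `x_max` does not disturb the lower-order probabilities, because each $p_x$ depends only on $p_x$ and $p_{x-1}$.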

4.10.6 Approximation of the Hypergeometric by the Binomial Distribution

We can obtain the probability function of the binomial distribution by applying a limiting process to that of the hypergeometric distribution. We first give the method and then interpret the result. It is convenient to use (4.4.2) for this purpose. There we obtained

$$h(x) = \frac{\binom{N\theta}{x}\binom{N(1-\theta)}{n-x}}{\binom{N}{n}}$$

which may be written as

$$\frac{(N\theta)!}{x!\,(N\theta - x)!}\cdot\frac{[N(1-\theta)]!}{(n-x)!\,[N(1-\theta) - (n-x)]!}\cdot\frac{n!\,(N-n)!}{N!}$$

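After expanding the factorials and performing some algebra, this may be re-expressed as

$$\binom{n}{x}\,\frac{\theta\left(\theta-\frac{1}{N}\right)\cdots\left(\theta-\frac{x-1}{N}\right)\,(1-\theta)\left(1-\theta-\frac{1}{N}\right)\cdots\left(1-\theta-\frac{n-x-1}{N}\right)}{1\left(1-\frac{1}{N}\right)\left(1-\frac{2}{N}\right)\cdots\left(1-\frac{n-1}{N}\right)}.$$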

If $N$ increases indefinitely while $\theta$, the proportion of articles of type A, remains constant, we find

$$\lim_{N\to\infty} h(x) = \binom{n}{x}\theta^{x}(1-\theta)^{n-x} = b(x)$$

This result shows that, if $N$ (the population size) is large, the probability function $h(x)$ of the hypergeometric distribution can be approximated by the probability function $b(x)$ of the binomial distribution for any value of $x$ that can occur, the approximation error tending to zero as $N \to \infty$. This implies that, for very large $N$, sampling with replacement gives approximately the same probabilities as sampling without replacement.
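The shrinking approximation error can be observed directly by holding $\theta$ and $n$ fixed and letting $N$ grow. The sketch below reports the largest absolute difference between $h(x)$ and $b(x)$ over $x = 0, \ldots, n$ for several population sizes. The function names and the particular values of `theta`, `n`, and `N` are illustrative assumptions.

```python
from math import comb


def hypergeom_pmf(x, N, N1, n):
    """h(x): probability of x type-A articles, sampling without replacement."""
    return comb(N1, x) * comb(N - N1, n - x) / comb(N, n)


def binom_pmf(x, n, theta):
    """b(x): probability of x type-A articles, sampling with replacement."""
    return comb(n, x) * theta ** x * (1 - theta) ** (n - x)


def max_abs_error(N, theta, n):
    """Largest |h(x) - b(x)| over x = 0, ..., n, with N1 = N * theta."""
    N1 = round(N * theta)
    return max(abs(hypergeom_pmf(x, N, N1, n) - binom_pmf(x, n, theta))
               for x in range(n + 1))


# Illustrative settings (assumed): theta and n fixed, population size growing.
theta, n = 0.05, 10
errors = {N: max_abs_error(N, theta, n) for N in (100, 1_000, 10_000, 100_000)}
for N, err in errors.items():
    print(N, err)
```

The values of `N` are chosen so that `N * theta` is a whole number, which keeps $\theta$ exactly constant across the runs.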


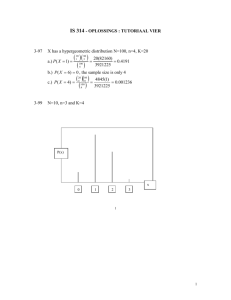

Example 4.6.1 (Approximating Hypergeometric Probabilities) To give some idea of the closeness of the approximation, suppose we have a lot of 10,000 articles, of which 500 are defective. If a sample of size 10 is taken at random, the probabilities of obtaining $x$ defectives for $x = 0, 1, 2, 3, 4,$ and $5$ are given in Table 4.10.1 for the cases of sampling with replacement (binomial) and without replacement (hypergeometric). The table shows that, if we use the probability function of the binomial distribution to approximate that of the hypergeometric distribution for this case, the error is very small indeed. To use the approximating binomial when $N$ is large, we set the binomial parameter $\theta$ equal to $N_1/N$ and take $n$ to be the sample size.

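TABLE 4.10.1 Values of $b(x)$ with $n = 10$, $\theta = .05$ and $h(x)$ with $N = 10{,}000$, $N_1 = 500$, $n = 10$.

| $x$ | $b(x)$ | $h(x)$ |
|---|---|---|
| 0 | .5987 | .5986 |
| 1 | .3151 | .3153 |
| 2 | .0746 | .0746 |
| 3 | .0105 | .0104 |
| 4 | .0009 | .0007 |
| 5 | .0001 | .0000 |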

4.10.7 Moment Generating Function of the Poisson Distribution

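The moment generating function of the Poisson distribution is

$$\begin{aligned}
M_X(t) = E(e^{tX}) &= \sum_{x=0}^{\infty} e^{tx}\,\frac{e^{-\lambda}\lambda^{x}}{x!} \\
&= e^{-\lambda}\sum_{x=0}^{\infty}\frac{(\lambda e^{t})^{x}}{x!} \\
&= e^{-\lambda}e^{\lambda e^{t}} = e^{\lambda(e^{t}-1)}
\end{aligned} \tag{4.8.6}$$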

To find moments, we proceed by differentiating $M_X(t)$, and in particular we find

$$\frac{d\,M_X(t)}{dt} = \lambda e^{\lambda(e^{t}-1)+t}$$

$$\frac{d^2 M_X(t)}{dt^2} = \lambda\left\{(\lambda e^{t} + 1)\,e^{\lambda(e^{t}-1)+t}\right\}$$

Hence

$$\mu_1' = E(X) = \frac{d\,M_X(0)}{dt} = \lambda, \tag{4.8.7}$$

$$\mu_2' = E(X^2) = \frac{d^2 M_X(0)}{dt^2} = [\lambda(\lambda+1)] = \lambda^2 + \lambda.$$

From (4.2.2a), we have that

$$\operatorname{Var}(X) = E(X^2) - [E(X)]^2 = \lambda^2 + \lambda - (\lambda)^2 = \lambda \tag{4.8.8}$$
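The moment calculation can be cross-checked numerically without symbolic differentiation. The sketch below approximates the first two derivatives of $M_X(t)$ at $t = 0$ by central finite differences, and also compares the closed form $e^{\lambda(e^t-1)}$ with a truncated version of the defining series. The function names, the step size `h`, the truncation point, and the rate `lam` are assumptions made for illustration.

```python
from math import exp


def poisson_mgf(t, lam):
    """Closed form M_X(t) = exp(lam * (e^t - 1))."""
    return exp(lam * (exp(t) - 1.0))


def poisson_mgf_series(t, lam, terms=200):
    """Truncated defining sum of e^{tx} p(x), built term by term."""
    term = exp(-lam)  # x = 0 term
    total = term
    for x in range(1, terms):
        term *= lam * exp(t) / x  # ratio of consecutive terms
        total += term
    return total


def moments_by_central_differences(lam, h=1e-4):
    """Approximate M'(0) and M''(0) with central finite differences."""
    m_plus, m_zero, m_minus = (poisson_mgf(s, lam) for s in (h, 0.0, -h))
    first = (m_plus - m_minus) / (2 * h)
    second = (m_plus - 2 * m_zero + m_minus) / (h * h)
    return first, second


# Illustrative rate (assumed, not from the text).
lam = 2.0
first, second = moments_by_central_differences(lam)
variance = second - first ** 2
print(first, second, variance)
print(poisson_mgf(0.3, lam), poisson_mgf_series(0.3, lam))
```

Finite differences carry a small discretization and rounding error, so the first printed line should be close to, but not exactly, $\lambda$, $\lambda^2 + \lambda$, and $\lambda$.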