CS 6293 Advanced Topics: Translational Bioinformatics


Intro & Ch2 - Data-Driven View of Disease Biology

Jianhua Ruan

Road map

• What is translational bioinformatics

• Probability and statistics background

• Data-driven view of disease biology

– Bayesian Inference

– Network of functionally related genes

– Evaluation of the network

What is translational bioinformatics?

– Advances in biological technology (for high-throughput data collection) and computing technology (for cheap and efficient large-scale data storage, processing, and management) have shifted modern biomedical research towards integrative and translational research

– Translational medical research:

• the process of moving discoveries and innovations generated during research in the laboratory, and in preclinical studies, to the development of trials and studies in humans, leading to improved diagnosis, prognosis, and treatment

– Barriers to translating our molecular understanding into technologies that impact patients:

• understanding health market size and forces, the regulatory milieu, how to harden the technology for routine use, and how to navigate an increasingly complex intellectual property landscape

• connecting the findings of molecular biology to the clinical world

– The book chapters in this PLoS Comput Biol collection deal mostly with computational methodologies that are likely to have an impact on clinical research and practice

Topic 1: Network-based understanding of disease mechanisms

• Chapter 2: Data-Driven View of Disease Biology

• Chapter 4: Protein Interactions and Disease

• Chapter 5: Network Biology Approach to Complex Diseases

• Chapter 15: Disease Gene Prioritization

Topic 2: Drug design / discovery using computational / systems approaches

• Chapter 3: Small Molecules and Disease

• Chapter 7: Pharmacogenomics

• Chapter 17: Bioimage Informatics for Systems Pharmacology

Topic 3: Genome sequencing and disease

• Chapter 6: Structural Variation and Medical Genomics

• Chapter 12: Human Microbiome Analysis

• Chapter 14: Cancer Genome Analysis

Topic 4: Automated knowledge discovery and representation

• Chapter 8: Biological Knowledge Assembly and Interpretation

• Chapter 9: Analyses Using Disease Ontologies

• Chapter 13: Mining Electronic Health Records in the Genomics Era

• Chapter 16: Text Mining for Translational Bioinformatics

Ch2: Data-Driven View of Disease Biology

• Diverse genome-scale datasets exist

– Genome sequences

– Microarrays

– Genome-wide association studies

– RNA interference screens

– Proteomics databases

– Databases of gene functions, pathways, chemicals, protein interactions, etc.

• Promise to provide a systems-level understanding of disease mechanisms

– Modeling (understand)

– Inference (make predictions)

• Integration is the key challenge

– Experimental noise

– Biological heterogeneity: e.g., source of material (cells in culture or biopsied tissues?)

– Computational heterogeneity: e.g., data format (discrete or continuous?)

Bayesian Inference

• Powerful tool used to make predictions based on experimental evidence

• Simple yet elegant probabilistic theory

• Easy to understand and implement

• Data-driven modeling

– No explicit assumptions about the underlying biological mechanisms

Probability Basics

• Definition (informal)

– Probabilities are numbers assigned to events that indicate “how likely” it is that the event will occur when a random experiment is performed

– A probability law for a random experiment is a rule that assigns probabilities to the events in the experiment

– The sample space S of a random experiment is the set of all possible outcomes

Example

0 ≤ P(Ai) ≤ 1

P(S) = 1

Random variable

• A random variable is a function from the sample space to the set of possible values of the variable

– When we toss a coin several times, the number of heads we see is a random variable

– Can be discrete or continuous

• Discrete: the resulting number after rolling a die

• Continuous: the weight of an individual

Cumulative distribution function (cdf)

• The cumulative distribution function FX(x) of a random variable X is defined as the probability of the event {X ≤ x}:

FX(x) = P(X ≤ x) for −∞ < x < +∞

Probability density function (pdf)

• The probability density function of a continuous random variable X, if it exists, is defined as the derivative of FX(x):

fX(x) = dFX(x) / dx

• For discrete random variables, the equivalent of the pdf is the probability mass function (pmf):

pX(x) = P(X = x)

Probability density function vs probability

• What is the probability of somebody weighing 200 lb?

• The figure shows about 0.62, but this is the value of the density, not a probability

– What is the probability of 200.00001 lb?

• The right question would be:

– What is the probability of somebody weighing between 199 and 201 lb?

• The probability mass function is a true probability

– The chance of getting any particular face of a fair die is 1/6
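The distinction between a density value and an interval probability can be checked numerically. A minimal sketch using Python's `statistics.NormalDist`; the weight distribution (mean 180 lb, standard deviation 30 lb) is an assumption for illustration and is not the distribution in the slide's figure.

```python
from statistics import NormalDist

# Hypothetical weight distribution (assumed values, not taken from the figure)
weight = NormalDist(mu=180, sigma=30)

density_at_200 = weight.pdf(200)                   # a density value, not a probability
p_199_to_201 = weight.cdf(201) - weight.cdf(199)   # P(199 <= X <= 201) = F(201) - F(199)

# Shrinking the interval around 200 lb drives the probability toward 0
for half_width in (1, 0.01, 0.00001):
    p = weight.cdf(200 + half_width) - weight.cdf(200 - half_width)
    print(f"P(|X - 200| <= {half_width}) = {p:.3g}")

print(f"pdf(200) = {density_at_200:.5f}")
print(f"P(199 <= X <= 201) = {p_199_to_201:.5f}")
```

For a narrow interval, the probability is roughly the density times the interval width, which is why a single exact value such as 200.00001 lb has probability zero.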

Some common distributions

• Discrete:

– Binomial

– Multinomial

– Geometric

– Hypergeometric

– Poisson

• Continuous:

– Normal (Gaussian)

– Uniform

– EVD (extreme value distribution)

– Gamma

– Beta

– …
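Two of the discrete distributions listed have short closed-form pmfs. A minimal sketch with Python's standard library; the parameters (10 fair coin tosses, Poisson rate 3) are arbitrary illustrative choices.

```python
from math import comb, exp, factorial


def binomial_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p): k successes in n independent trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def poisson_pmf(k, lam):
    """P(X = k) for X ~ Poisson(lam): k events when lam are expected."""
    return lam**k * exp(-lam) / factorial(k)


# Number of heads in 10 fair coin tosses
heads = [binomial_pmf(k, 10, 0.5) for k in range(11)]
print(sum(heads))   # pmf values are true probabilities, so they sum to 1
print(heads[5])     # P(exactly 5 heads) = 252/1024

# Poisson pmf truncated at k = 50; the remaining tail is negligible for lam = 3
poisson_total = sum(poisson_pmf(k, 3.0) for k in range(51))
print(poisson_total)
```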

Probabilistic Calculus

• If A, B are mutually exclusive:

– P(A ∪ B) = P(A) + P(B)

• Thus, since A and its complement Ac are mutually exclusive and A ∪ Ac = S: P(not(A)) = P(Ac) = 1 − P(A)

Probabilistic Calculus

• P(A ∪ B) = P(A) + P(B) − P(A ∩ B)

Conditional probability

• The joint probability of two events A and B, P(A ∩ B) or simply P(A, B), is the probability that events A and B occur at the same time.

• The conditional probability P(A | B) is the probability that A occurs given that B has occurred:

P(A | B) = P(A ∩ B) / P(B), provided P(B) > 0

Example

• Roll a die

– If I tell you the number is less than 4

– What is the probability of an even number?

• P(d = even | d < 4) = P(d = even ∩ d < 4) / P(d < 4)

• P(d = 2) / P(d = 1, 2, or 3) = (1/6) / (3/6) = 1/3
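The die example can be reproduced by enumerating equally likely outcomes. A minimal sketch, assuming a fair six-sided die and representing events as predicates on the face value; exact fractions avoid rounding.

```python
from fractions import Fraction

FACES = range(1, 7)  # fair six-sided die: each face has probability 1/6


def prob(event):
    """P(event) for a fair die, where event is a predicate on the face value."""
    return Fraction(sum(1 for d in FACES if event(d)), len(FACES))


def cond_prob(a, b):
    """P(A | B) = P(A ∩ B) / P(B)."""
    return prob(lambda d: a(d) and b(d)) / prob(b)


def is_even(d):
    return d % 2 == 0


def less_than_4(d):
    return d < 4


print(cond_prob(is_even, less_than_4))  # 1/3
```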

Independence

• P(A | B) = P(A ∩ B) / P(B)

=> P(A ∩ B) = P(B) * P(A | B)

• A, B are independent iff

– P(A ∩ B) = P(A) * P(B)

– That is, P(A) = P(A | B)

• Also implies that P(B) = P(B | A)

– P(A ∩ B) = P(B) * P(A | B) = P(A) * P(B | A)

Examples

• Are the events d = even and d < 4 independent?

– P(d = even and d < 4) = 1/6

– P(d = even) = ½

– P(d < 4) = ½

– ½ * ½ = ¼ > 1/6, so they are not independent

• If your die actually has 8 faces, will the events d = even and d < 5 be independent?

• Are the events “even in first roll” and “even in second roll” independent?

• For a playing card, are the suit and the rank independent?
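The questions above can be answered by checking P(A ∩ B) = P(A) * P(B) over equally likely outcomes. A minimal sketch, assuming fair dice and a standard 52-card deck; for suit and rank, the check is repeated for every suit/rank pair, since independence of the two variables requires all of them to factor.

```python
from fractions import Fraction
from itertools import product


def independent(outcomes, a, b):
    """Check P(A ∩ B) == P(A) * P(B) over a list of equally likely outcomes."""
    n = len(outcomes)
    p_a = Fraction(sum(1 for o in outcomes if a(o)), n)
    p_b = Fraction(sum(1 for o in outcomes if b(o)), n)
    p_ab = Fraction(sum(1 for o in outcomes if a(o) and b(o)), n)
    return p_ab == p_a * p_b


six_faces = list(range(1, 7))
eight_faces = list(range(1, 9))
two_rolls = list(product(range(1, 7), repeat=2))   # (first roll, second roll)
ranks = ["A"] + [str(r) for r in range(2, 11)] + ["J", "Q", "K"]
suits = ["clubs", "diamonds", "hearts", "spades"]
deck = list(product(ranks, suits))                  # (rank, suit)

print(independent(six_faces, lambda d: d % 2 == 0, lambda d: d < 4))    # False
print(independent(eight_faces, lambda d: d % 2 == 0, lambda d: d < 5))  # True
print(independent(two_rolls, lambda r: r[0] % 2 == 0, lambda r: r[1] % 2 == 0))  # True

# Default arguments bind s and r at definition time inside the generator
suit_rank_independent = all(
    independent(deck, lambda c, s=s: c[1] == s, lambda c, r=r: c[0] == r)
    for s in suits
    for r in ranks
)
print(suit_rank_independent)  # True
```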

Bayes theorem

• P(A ∩ B) = P(B) * P(A | B) = P(A) * P(B | A)

=> P(B | A) = P(A | B) P(B) / P(A)

– P(B | A): posterior probability of B

– P(A | B): likelihood

– P(B): prior of B

– P(A): normalizing constant

This is known as Bayes’ Theorem or Bayes’ Rule, and is (one of) the most useful relations in probability and statistics

Bayes’ Theorem is the fundamental relation in statistical pattern recognition

Example

• Prosecutor’s fallacy

– Some crime happened

– The perpetrator did not leave any evidence, except some hair

– The police got the perpetrator’s DNA from the hair

• An expert matched the DNA with that of a suspect

– The expert said that both the false-positive and false-negative rates are 10⁻⁶

• Can this be used as evidence of guilt against the suspect?

Prosecutor’s fallacy

• Prob(match | innocent) = 10⁻⁶

• Prob(no match | guilty) = 10⁻⁶

• Prob(match | guilty) = 1 − 10⁻⁶ ≈ 1

• Prob(no match | innocent) = 1 − 10⁻⁶ ≈ 1

• Prob(guilty | match) = ?

Prosecutor’s fallacy

P(g | m) = P(m | g) * P(g) / P(m)

≈ P(g) / P(m)

• P(g): the probability for someone to be guilty with no other evidence

• P(m): the probability of a DNA match

• How to get these two numbers?

– We don’t really care about P(m)

– We want to compare two models:

• P(g | m) and P(i | m)

Prosecutor’s fallacy

• P(i | m) = P(m | i) * P(i) / P(m)

= 10⁻⁶ * P(i) / P(m)

• Therefore, using P(m | g) ≈ 1,

P(i | m) / P(g | m) = 10⁻⁶ * P(i) / P(g)

• P(i) + P(g) = 1

• It is clear, therefore, that whether we can conclude the suspect is guilty depends on the prior probability P(i)

• How do you get P(i)?

Prosecutor’s fallacy

• How do you get P(i)?

• It depends on what other information you have on the suspect

• Say the suspect has no other connection with the crime, and the overall crime rate is 10⁻⁷

• That’s a reasonable prior for P(g)

• P(g) = 10⁻⁷, P(i) ≈ 1

• P(i | m) / P(g | m) = 10⁻⁶ * P(i) / P(g) ≈ 10⁻⁶ / 10⁻⁷ = 10

Prosecutor’s fallacy

• P(i | m) / P(g | m) ≈ 10⁻⁶ / 10⁻⁷ = 10

• Therefore, we would say the suspect is more likely to be innocent than guilty, given only the DNA sample

• We can also explicitly calculate P(i | m):

P(m) = P(m | i) * P(i) + P(m | g) * P(g)

≈ 10⁻⁶ * 1 + 1 * 10⁻⁷

= 1.1 × 10⁻⁶

P(i | m) = P(m | i) * P(i) / P(m) ≈ 10⁻⁶ / (1.1 × 10⁻⁶) = 1 / 1.1 ≈ 0.91
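The calculation above can be repeated numerically without the ≈ approximations. A short sketch using the slide's numbers (error rates of 10⁻⁶, prior P(g) = 10⁻⁷); the exact results agree with the approximate ones.

```python
p_g = 1e-7               # prior probability of guilt (overall crime rate)
p_i = 1 - p_g            # prior probability of innocence
p_m_given_i = 1e-6       # false-positive rate: P(match | innocent)
p_m_given_g = 1 - 1e-6   # 1 - false-negative rate: P(match | guilty)

# Normalizing constant by the law of total probability
p_m = p_m_given_i * p_i + p_m_given_g * p_g

# Bayes' theorem for each hypothesis
p_i_given_m = p_m_given_i * p_i / p_m
p_g_given_m = p_m_given_g * p_g / p_m

posterior_odds = p_i_given_m / p_g_given_m   # P(i | m) / P(g | m)
print(f"P(m) = {p_m:.4g}")
print(f"P(i | m) = {p_i_given_m:.3f}")
print(f"P(i | m) / P(g | m) = {posterior_odds:.3f}")
```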

Another example

• A test for a rare disease claims that it will report a positive result for 99.5% of people with the disease, and a negative result for 99.9% of those without

• The disease is present in the population at 1 in 100,000

• What is P(disease | positive test)?

• What is P(disease | negative test)?
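Both questions follow from Bayes’ theorem once sensitivity and specificity are plugged in. A minimal sketch, assuming “99.9% of those without” means the test is negative for 99.9% of healthy people (specificity 0.999); the function name is hypothetical.

```python
def posterior_disease(prior, sensitivity, specificity):
    """Return (P(disease | +), P(disease | -)) via Bayes' theorem."""
    p_pos_given_d = sensitivity          # P(+ | disease)
    p_pos_given_h = 1 - specificity      # P(+ | healthy), the false-positive rate
    p_pos = p_pos_given_d * prior + p_pos_given_h * (1 - prior)
    p_neg = 1 - p_pos
    p_d_given_pos = p_pos_given_d * prior / p_pos
    p_d_given_neg = (1 - sensitivity) * prior / p_neg
    return p_d_given_pos, p_d_given_neg


pos, neg = posterior_disease(prior=1 / 100_000, sensitivity=0.995, specificity=0.999)
print(f"P(disease | positive) = {pos:.4f}")   # under 1%
print(f"P(disease | negative) = {neg:.2e}")
```

Even with a highly accurate test, a positive result leaves the disease unlikely, because false positives from the large healthy population far outnumber true positives.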

Relation to multiple testing problem

• When searching a DNA sequence against a database, you get a high score, with a significant p-value

• P(unrelated | high score) / P(related | high score) = [P(high score | unrelated) * P(unrelated)] / [P(high score | related) * P(related)]

– P(high score | unrelated) / P(high score | related) is the likelihood ratio

• P(high score | unrelated) is much smaller than P(high score | related)

• But your database is huge, and most sequences should be unrelated, so P(unrelated) is much larger than P(related)
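The tension between a small likelihood ratio and large prior odds can be made concrete. A minimal sketch in which every number (database size, number of related sequences, score probabilities) is a hypothetical assumption chosen only for illustration.

```python
# All values below are hypothetical, chosen only to illustrate the effect
db_size = 10_000_000            # sequences searched (assumed)
n_related = 100                 # truly related sequences in the database (assumed)
p_high_given_unrelated = 1e-4   # chance an unrelated sequence scores this high (assumed)
p_high_given_related = 0.9      # chance a related sequence scores this high (assumed)

likelihood_ratio = p_high_given_unrelated / p_high_given_related
prior_odds = (db_size - n_related) / n_related    # P(unrelated) / P(related)
posterior_odds = likelihood_ratio * prior_odds    # P(unrelated | high) / P(related | high)

print(f"likelihood ratio = {likelihood_ratio:.2e}")
print(f"prior odds       = {prior_odds:.0f}")
print(f"posterior odds   = {posterior_odds:.1f}")  # > 1: the hit is still more likely unrelated
```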

Combining Diverse Data Using Bayesian Inference

• Want to calculate the probability that a gene of unknown function is involved in a disease

• Collect positive and negative genes (gold standard)

• Measure their activities under three hypothetical conditions

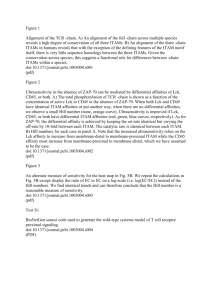

Figure 1. Potential distributions of experimental results obtained for datasets collected under three different conditions.

Greene CS, Troyanskaya OG (2012) Chapter 2: Data-Driven View of Disease Biology. PLoS Comput Biol 8(12): e1002816.

doi:10.1371/journal.pcbi.1002816

http://www.ploscompbiol.org/article/info:doi/10.1371/journal.pcbi.1002816

Higher score in condition A and lower score in condition C ⇒ likely involved in disease

P(involved in disease | experimental data)?

Table 1. A contingency table for the experimental results for Condition A.

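• Probability that gene i is involved in the disease (D) given the experimental result for gene i under condition A (EA):

P(D | EA) = P(EA | D) P(D) / P(EA)

P(EA) = P(EA | D) P(D) + P(EA | ~D) P(~D)

– P(EA | D): likelihood

– P(D), P(~D): priors

– P(EA): normalizing factor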
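The posterior above can be estimated directly from a gold-standard contingency table. A minimal sketch; Table 1’s values are not reproduced in the text, so the counts below are invented for illustration, and the function name is hypothetical.

```python
# Hypothetical contingency counts for condition A (NOT the values in Table 1):
# rows = gold-standard class, columns = discretized experimental result
counts = {
    "disease": {"high": 30, "low": 10},        # positive gold-standard genes
    "not_disease": {"high": 20, "low": 140},   # negative gold-standard genes
}


def posterior_from_counts(counts, result):
    """P(D | EA = result) estimated from a gold-standard contingency table."""
    n_d = sum(counts["disease"].values())
    n_nd = sum(counts["not_disease"].values())
    prior_d = n_d / (n_d + n_nd)                          # P(D)
    lik_d = counts["disease"][result] / n_d               # P(EA | D)
    lik_nd = counts["not_disease"][result] / n_nd         # P(EA | ~D)
    evidence = lik_d * prior_d + lik_nd * (1 - prior_d)   # P(EA), normalizing factor
    return lik_d * prior_d / evidence


print(posterior_from_counts(counts, "high"))
print(posterior_from_counts(counts, "low"))
```

Here the prior is estimated from the same gold standard; if the class balance of the gold standard differs from the true genome-wide rate, a separately chosen P(D) may be more appropriate.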

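Combining datasets using Naïve Bayes

• Assume the datasets are conditionally independent given the class (D or ~D):

P(D | EB, EC) ∝ P(EB, EC | D) P(D) = P(EB | D) P(EC | D) P(D)

P(~D | EB, EC) ∝ P(EB, EC | ~D) P(~D) = P(EB | ~D) P(EC | ~D) P(~D)

• Normalize using P(D | EB, EC) + P(~D | EB, EC) = 1.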
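The naive Bayes combination amounts to multiplying per-dataset likelihoods and then normalizing. A minimal sketch; the function name and the likelihood values for conditions B and C are hypothetical.

```python
def naive_bayes_posterior(prior_d, likelihoods):
    """P(D | E1, ..., En), assuming the evidence sources are conditionally
    independent given D and given ~D.

    likelihoods: list of (P(Ek | D), P(Ek | ~D)) pairs, one per dataset.
    """
    score_d = prior_d          # unnormalized P(D | E...)
    score_nd = 1 - prior_d     # unnormalized P(~D | E...)
    for lik_d, lik_nd in likelihoods:
        score_d *= lik_d
        score_nd *= lik_nd
    # Normalize so that P(D | E...) + P(~D | E...) = 1
    return score_d / (score_d + score_nd)


# Hypothetical likelihoods for one gene's results under conditions B and C
evidence = [
    (0.6, 0.3),   # (P(EB | D), P(EB | ~D)), assumed values
    (0.7, 0.2),   # (P(EC | D), P(EC | ~D)), assumed values
]
result = naive_bayes_posterior(prior_d=0.1, likelihoods=evidence)
print(result)
```

With many datasets, the products can underflow; summing log-likelihood ratios instead is a common alternative.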

Define Gold Standard (training samples) for gene-gene network

• Positive examples: genes within the same biological process

– Rely on expert-selected Gene Ontology terms

• biological regulation

• response to stimulus

• cell-matrix adhesion involved in tangential migration using cell-cell interactions

• response to DNA damage stimulus

• aldehyde metabolism

• Negative examples: random gene pairs

– Assuming most gene pairs are not related
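Building such a gold standard reduces to enumerating co-annotated pairs and sampling random pairs. A minimal sketch; the gene identifiers g1 to g10 and their term assignments are hypothetical toy data, not real GO annotations.

```python
import itertools
import random

# Toy annotations (hypothetical genes, NOT real GO data): term -> annotated genes
annotations = {
    "response to DNA damage stimulus": {"g1", "g2", "g3", "g4"},
    "aldehyde metabolism": {"g5", "g6", "g7"},
}


def positive_pairs(annotations):
    """Unordered gene pairs co-annotated to at least one selected term."""
    pairs = set()
    for genes in annotations.values():
        for a, b in itertools.combinations(sorted(genes), 2):
            pairs.add(frozenset((a, b)))
    return pairs


def negative_pairs(all_genes, positives, n, seed=0):
    """n random gene pairs, skipping known positives (assumes most random
    pairs are functionally unrelated and all positives lie within all_genes)."""
    genes = sorted(all_genes)
    available = len(genes) * (len(genes) - 1) // 2 - len(positives)
    if n > available:
        raise ValueError("not enough non-positive pairs to sample")
    rng = random.Random(seed)
    negatives = set()
    while len(negatives) < n:
        pair = frozenset(rng.sample(genes, 2))
        if pair not in positives:
            negatives.add(pair)
    return negatives


all_genes = set().union(*annotations.values()) | {"g8", "g9", "g10"}
pos = positive_pairs(annotations)
neg = negative_pairs(all_genes, pos, n=10)
print(len(pos), len(neg))
```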

Building a Network of Functionally Related Genes

• P(FRij | Eij) = P(Eij | FRij) P(FRij) / P(Eij)

• FRij: gene i and gene j are functionally related

• Eij: evidence (score) for a functional relationship between gene i and gene j from a particular dataset

• For some datasets, e.g., physical interaction data, obtaining the score Sij is trivial

• In general, Sij can be calculated using gene-wise correlation

Fisher's z-transformation

• Pearson correlation coefficient: r = cov(X, Y) / (σX σY)

• Z-transformation: z = ½ ln((1 + r) / (1 − r)) = arctanh(r)

Purpose: stabilizing variance

Source: Wikipedia
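The two formulas above can be applied to a pair of expression profiles. A minimal sketch; the profiles of gene i and gene j are hypothetical values, and the transform is undefined at r = ±1.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length profiles."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def fisher_z(r):
    """Fisher's z-transformation: z = 0.5 * ln((1 + r) / (1 - r)) = atanh(r)."""
    return math.atanh(r)


# Hypothetical expression profiles of gene i and gene j across 5 conditions
gene_i = [1.0, 2.0, 3.0, 4.0, 5.0]
gene_j = [2.0, 4.0, 5.0, 4.0, 5.0]
r = pearson(gene_i, gene_j)
print(f"r = {r:.4f}, z = {fisher_z(r):.4f}")
```

For n samples, z is approximately normal with standard error about 1/√(n − 3) regardless of r, which is the variance-stabilizing property that makes scores from datasets of different sizes easier to compare.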

Figure 4. The highest and lowest contributing datasets for the pair of APOE and PLTP are shown

(http://hefalmp.princeton.edu/gene/one_specific_gene/18543?argument=21697&context=0).


Figure 5. The diseases that are significantly connected to APOE through the guilt by association strategy used in HEFalMp.

Significance was assessed with Fisher’s exact test

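A one-sided Fisher’s exact test for gene-set overlap is the upper tail of a hypergeometric distribution. A minimal sketch; the exact 2×2 design used by HEFalMp is not given here, so the framing (genes strongly connected to a query gene vs. genes annotated to a disease) and the counts are hypothetical.

```python
from math import comb


def fisher_exact_greater(k, n, K, N):
    """One-sided Fisher's exact test (hypergeometric upper tail).

    N: total genes; K: genes annotated to the disease;
    n: genes strongly connected to the query gene; k: overlap of the two sets.
    Returns P(X >= k) where X ~ Hypergeometric(N, K, n).
    """
    upper = min(n, K)
    tail = sum(comb(K, x) * comb(N - K, n - x) for x in range(k, upper + 1))
    return tail / comb(N, n)


# Hypothetical counts for illustration only
p = fisher_exact_greater(k=4, n=5, K=5, N=20)
print(f"p = {p:.3g}")
```

The result should agree with `scipy.stats.fisher_exact` using `alternative='greater'` on the table [[k, n − k], [K − k, N − K − n + k]].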

Figure 6. The genes that are most significantly connected to Alzheimer disease genes using the HEFalMp network and OMIM disease gene annotations (http://hefalmp.princeton.edu/disease/all_genes/55?context=0).


Evaluating Functional Relationship Networks

• TPR vs FPR plot (ROC curve) and the area under the curve (AUC)

• Separate the gold standard into training and testing sets

• Cross-validation

• Literature evaluation
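The ROC curve and AUC can be computed from posterior scores on held-out gold-standard pairs. A minimal sketch; the scores are hypothetical, and the pairwise AUC formulation (probability that a random positive outscores a random negative, ties counting ½) equals the trapezoidal area under the ROC curve.

```python
def roc_auc(pos_scores, neg_scores):
    """AUC as the probability that a random positive outscores a random
    negative (ties count 1/2)."""
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


def roc_points(pos_scores, neg_scores):
    """(FPR, TPR) at each distinct score threshold, from strictest to loosest."""
    points = [(0.0, 0.0)]
    for t in sorted(set(pos_scores) | set(neg_scores), reverse=True):
        tpr = sum(s >= t for s in pos_scores) / len(pos_scores)
        fpr = sum(s >= t for s in neg_scores) / len(neg_scores)
        points.append((fpr, tpr))
    return points


# Hypothetical posterior scores for held-out gold-standard pairs
positives = [0.9, 0.8, 0.4]
negatives = [0.7, 0.3, 0.2]
print(roc_auc(positives, negatives))
print(roc_points(positives, negatives))
```

In cross-validation, the scores would come from a model trained without the held-out pairs, and the AUC would be averaged over folds.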

Summary

• We talked about

– Probability / statistics background

– Bayesian inference methods to integrate multiple large-scale, noisy datasets to predict

• gene-disease associations

• gene-gene associations

– The resulting networks are useful for discovering novel gene functions and directing experimental follow-ups

• Advantage over curated literature or analyses based on a single dataset

• Limited by the availability / quality of gold standard data