Parallel-Architectures-Performence-Analysis

advertisement

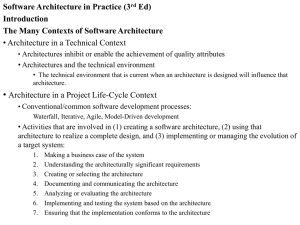

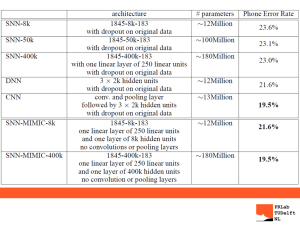

Prepared 7/28/2011 by T. O’Neil for 3460:677, Fall 2011, The University of Akron. Parallel computer: multiple-processor system supporting parallel programming. Three principle types of architecture Vector computers, in particular processor arrays Shared memory multiprocessors Specially designed and manufactured systems Distributed memory multicomputers Message passing systems readily formed from a cluster of workstations Parallel Architectures and Performance Analysis – Slide 2 Vector computer: instruction set includes operations on vectors as well as scalars Two ways to implement vector computers Pipelined vector processor (e.g. Cray): streams data through pipelined arithmetic units Processor array: many identical, synchronized arithmetic processing elements Parallel Architectures and Performance Analysis – Slide 3 Natural way to extend single processor model Have multiple processors connected to multiple memory modules such that each processor can access any memory module So-called shared memory configuration: Parallel Architectures and Performance Analysis – Slide 4 Parallel Architectures and Performance Analysis – Slide 5 Type 2: Distributed Multiprocessor Distribute primary memory among processors Increase aggregate memory bandwidth and lower average memory access time Allow greater number of processors Also called non-uniform memory access (NUMA) multiprocessor Parallel Architectures and Performance Analysis – Slide 6 Parallel Architectures and Performance Analysis – Slide 7 Complete computers connected through an interconnection network Parallel Architectures and Performance Analysis – Slide 8 Distributed memory multiple-CPU computer Same address on different processors refers to different physical memory locations Processors interact through message passing Commercial multicomputers Commodity clusters Parallel Architectures and Performance Analysis – Slide 9 Parallel Architectures and Performance Analysis – Slide 10 Parallel Architectures and Performance Analysis – Slide 11 Parallel Architectures and Performance Analysis – Slide 12 Michael Flynn (1966) created a classification for computer architectures based upon a variety of characteristics, specifically instruction streams and data streams. Also important are number of processors, number of programs which can be executed, and the memory structure. Parallel Architectures and Performance Analysis – Slide 13 Control unit Control Signals Arithmetic Processor Results Instruction Memory Data Stream Parallel Architectures and Performance Analysis – Slide 14 Control Unit Control Signal PE 1 PE 2 Data Stream 1 Data Stream 2 PE n Data Stream n Parallel Architectures and Performance Analysis – Slide 15 Control Unit 1 Instruction Stream 1 Control Unit 2 Instruction Stream 2 Control Unit n Instruction Stream n Processing Element 1 Processing Element 2 Data Stream Processing Element n Parallel Architectures and Performance Analysis – Slide 16 S1 S2 S3 S4 S1 S2 S3 S4 Serial execution of two processes with 4 stages each. Time to execute T = 8 t , where t is the time to execute one stage. S1 S2 S3 S4 S1 S2 S3 S4 Pipelined execution of the same two processes. T=5t Parallel Architectures and Performance Analysis – Slide 17 Control Unit 1 Instruction Stream 1 Control Unit 2 Instruction Stream 2 Control Unit n Instruction Stream n Processing Element 1 Processing Element 2 Processing Element n Data Stream 1 Data Stream 2 Data Stream n Parallel Architectures and Performance Analysis – Slide 18 Multiple Program Multiple Data (MPMD) Structure Within the MIMD classification, which we are concerned with, each processor will have its own program to execute. Parallel Architectures and Performance Analysis – Slide 19 Single Program Multiple Data (SPMD) Structure Single source program is written and each processor will execute its personal copy of this program, although independently and not in synchronism. The source program can be constructed so that parts of the program are executed by certain computers and not others depending upon the identity of the computer. Software equivalent of SIMD; can perform SIMD calculations on MIMD hardware. Parallel Architectures and Performance Analysis – Slide 20 Architectures Vector computers Shared memory multiprocessors: tightly coupled Centralized/symmetrical multiprocessor (SMP): UMA Distributed multiprocessor: NUMA Distributed memory/message-passing multicomputers: loosely coupled Asymmetrical vs. symmetrical Flynn’s Taxonomy SISD, SIMD, MISD, MIMD (MPMD, SPMD) Parallel Architectures and Performance Analysis – Slide 21 A sequential algorithm can be evaluated in terms of its execution time, which can be expressed as a function of the size of its input. The execution time of a parallel algorithm depends not only on the input size of the problem but also on the architecture of a parallel computer and the number of available processing elements. Parallel Architectures and Performance Analysis – Slide 22 The speedup factor is a measure that captures the relative benefit of solving a computational problem in parallel. The speedup factor of a parallel computation utilizing p processors is defined as the following ratio: In other words, S(p) is defined as the ratio of the sequential processing time to the parallel processing time. Parallel Architectures and Performance Analysis – Slide 23 Speedup factor can also be cast in terms of computational steps: Maximum speedup is (usually) p with p processors (linear speedup). Parallel Architectures and Performance Analysis – Slide 24 Given a problem of size n on p processors let Inherently sequential computations (n) Potentially parallel computations Communication operations (n) (n,p) Then: Parallel Architectures and Performance Analysis – Slide 25 Computation Time Communication Time “elbowing out” Number of processors Parallel Architectures and Performance Analysis – Slide 26 The efficiency of a parallel computation is defined as a ratio between the speedup factor and the number of processing elements in a parallel system: E Exec. time using one processor p Exec. time using p processors Ts p Tp S ( p) p Efficiency is a measure of the fraction of time for which a processing element is usefully employed in a computation. Parallel Architectures and Performance Analysis – Slide 27 Since E = S(p)/p, by what we did earlier Since all terms are positive, E > 0 Furthermore, since the denominator is larger than the numerator, E < 1 Parallel Architectures and Performance Analysis – Slide 28 Parallel Architectures and Performance Analysis – Slide 29 As before since the communication time must be non-trivial. Let f represent the inherently sequential portion of the computation; then Parallel Architectures and Performance Analysis – Slide 30 Limitations Ignores communication time Overestimates speedup achievable Amdahl Effect Typically (n,p) has lower complexity than (n)/p So as p increases, (n)/p dominates (n,p) Thus as p increases, speedup increases Parallel Architectures and Performance Analysis – Slide 31 As before Let s represent the fraction of time spent in parallel computation performing inherently sequential operations; then Parallel Architectures and Performance Analysis – Slide 32 Then Parallel Architectures and Performance Analysis – Slide 33 Begin with parallel execution time instead of sequential time Estimate sequential execution time to solve same problem Problem size is an increasing function of p Predicts scaled speedup Parallel Architectures and Performance Analysis – Slide 34 Both Amdahl’s Law and Gustafson-Barsis’ Law ignore communication time Both overestimate speedup or scaled speedup achievable Gene Amdahl John L. Gustafson Parallel Architectures and Performance Analysis – Slide 35 Performance terms: speedup, efficiency Model of speedup: serial, parallel and communication components What prevents linear speedup? Serial and communication operations Process start-up Imbalanced workloads Architectural limitations Analyzing parallel performance Amdahl’s Law Gustafson-Barsis’ Law Parallel Architectures and Performance Analysis – Slide 36 Based on original material from The University of Akron: Tim O’Neil, Kathy Liszka Hiram College: Irena Lomonosov The University of North Carolina at Charlotte Barry Wilkinson, Michael Allen Oregon State University: Michael Quinn Revision history: last updated 7/28/2011. Parallel Architectures and Performance Analysis – Slide 37