Slides - Washington University Engineering

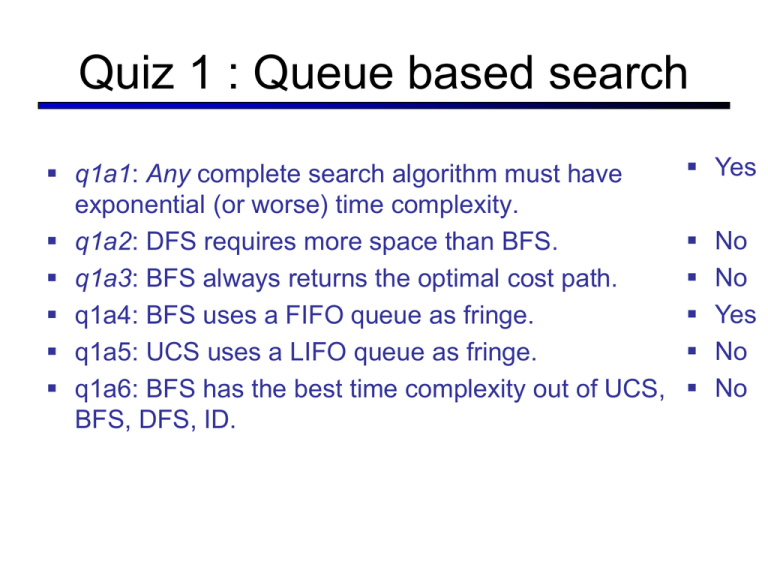

Quiz 1: Queue-based search

- q1a1: Any complete search algorithm must have exponential (or worse) time complexity. Yes
- q1a2: DFS requires more space than BFS. No
- q1a3: BFS always returns the optimal cost path. No
- q1a4: BFS uses a FIFO queue as fringe. Yes
- q1a5: UCS uses a LIFO queue as fringe. No
- q1a6: BFS has the best time complexity out of UCS, BFS, DFS, ID. No

CSE511a: Artificial Intelligence, Spring 2013
Lecture 3: A* Search
1/24/2012
Robert Pless – Wash U.
Multiple slides over the course adapted from Kilian Weinberger, originally by Dan Klein (or Stuart Russell or Andrew Moore)

Announcements

- Projects:
  - Project 0 due tomorrow night.
  - Project 1 (Search) is out soon, will be due Thursday 2/7.
  - Find project partner
  - Try pair programming, not divide-and-conquer
- Exercise more and try to eat more fruit.

Today

- A* Search
- Heuristic Design

Recap: Search

- Search problem:
  - States (configurations of the world)
  - Successor function: a function from states to lists of (action, state, cost) triples; drawn as a graph
  - Start state and goal test
- Search tree:
  - Nodes: represent plans for reaching states
  - Plans have costs (sum of action costs)
- Search algorithm:
  - Systematically builds a search tree
  - Chooses an ordering of the fringe (unexplored nodes)

Machinarium

Machinarium: Search Problem

Youtube

A*-Search

General Tree Search

Uniform Cost Search

- Strategy: expand lowest path cost
- [figure: cost contours c1, c2, c3]
- The good: UCS is complete and optimal!
- The bad:
  - Explores options in every “direction”
  - No information about goal location
- [figure: Start and Goal] [demo: contours UCS]

Best First (Greedy)

- Strategy: expand a node that you think is closest to a goal state
  - Heuristic: estimate of distance to nearest goal for each state
- A common case: best-first takes you straight to the (wrong) goal
- Worst case: like a badly-guided DFS
- [demo: contours greedy]

Example: Heuristic Function

- h(x)

Combining UCS and Greedy

- Uniform-cost orders by path cost, or backward cost g(n)
- Best-first orders by goal proximity, or forward cost h(n)
- [figure: example graph with edge costs and heuristic values S h=6, a h=5, b h=6, c h=7, d h=2, e h=1, G h=0]
- A* Search orders by the sum: f(n) = g(n) + h(n)
- Example: Teg Grenager

Three Questions

- When should A* terminate?
- Is A* optimal?
- What heuristics are valid?

When should A* terminate?

- Should we stop when we enqueue a goal?
- [figure: small graph over S, A, B, G with edge costs and h values]
- No: only stop when we dequeue a goal

Is A* Optimal?

- [figure: graph over S (h=7), A (h=6), G (h=0) with edge costs 1, 3, 5]
- What went wrong?
- Actual bad goal cost < estimated good goal cost
- We need estimates to be less than actual costs!

Admissible Heuristics

- A heuristic h is admissible (optimistic) if h(n) ≤ h*(n), where h*(n) is the true cost to a nearest goal
- Example: [figure, value 15]
- Coming up with admissible heuristics is most of what’s involved in using A* in practice.

Example: Pancake Problem

- Cost: Number of pancakes flipped

Pancake: State Space Object

Example: Pancake Problem

- State space graph with costs as weights
- [figure: state space graph with edge weights between 2 and 4]

Example: Heuristic Function

- Heuristic: the largest pancake that is still out of place
- [figure: the same state space graph annotated with h(x) values between 0 and 4]

Example: Pancake Problem

Optimality of A*: Blocking

- Notation:
  - g(n) = cost to node n
  - h(n) = estimated cost from n to the nearest goal (heuristic)
  - f(n) = g(n) + h(n) = estimated total cost via n
  - G*: a lowest cost goal node
  - G: another goal node

Optimality of A*: Blocking

- Proof: What could go wrong?
- We would have to pop a suboptimal goal G off the fringe before G*
- This can’t happen:
  - Imagine a suboptimal goal G is on the queue
  - Some node n which is a subpath of G* must also be on the fringe (why?)
  - n will be popped before G

Properties of A*

- [figure: Uniform-Cost and A* search trees, each with branching factor b]

UCS vs A* Contours

- Uniform-cost expands in all directions
- A* expands mainly toward the goal, but does hedge its bets to ensure optimality
- [figure: Start and Goal contours for each algorithm] [demo: contours UCS / A*]

Creating Admissible Heuristics

- Most of the work in solving hard search problems optimally is in coming up with admissible heuristics
- Often, admissible heuristics are solutions to relaxed problems, where new actions are available
- Inadmissible heuristics are often useful too (why?)

Example: 8 Puzzle

- What are the states?
- How many states?
- What are the actions?
- What states can I reach from the start state?
- What should the costs be?

Search State

8 Puzzle I

- Heuristic: Number of tiles misplaced
- Why is it admissible?
- h(start) = 8
- This is a relaxed-problem heuristic

Average nodes expanded when optimal path has length…

|       | …4 steps | …8 steps | …12 steps |
|-------|----------|----------|-----------|
| UCS   | 112      | 6,300    | 3.6 × 10^6 |
| TILES | 13       | 39       | 227       |

8 Puzzle II

- What if we had an easier 8-puzzle where any tile could slide any direction at any time, ignoring other tiles?
- Total Manhattan distance
- Why admissible?
- h(start) = 3 + 1 + 2 + … = 18

Average nodes expanded when optimal path has length…

|           | …4 steps | …8 steps | …12 steps |
|-----------|----------|----------|-----------|
| TILES     | 13       | 39       | 227       |
| MANHATTAN | 12       | 25       | 73        |

[demo: eight-puzzle]

8 Puzzle III

- How about using the actual cost as a heuristic?
  - Would it be admissible?
  - Would we save on nodes expanded?
  - What’s wrong with it?
- With A*: a trade-off between quality of estimate and work per node!

Trivial Heuristics, Dominance

- Dominance: h_a ≥ h_c if h_a(n) ≥ h_c(n) for every node n
- Heuristics form a semi-lattice:
  - Max of admissible heuristics is admissible
- Trivial heuristics
  - Bottom of lattice is the zero heuristic (what does this give us?)
  - Top of lattice is the exact heuristic

Other A* Applications

- Pathing / routing problems
- Resource planning problems
- Robot motion planning
- Language analysis
- Machine translation
- Speech recognition
- …

Graph Search

Tree Search: Extra Work!

- Failure to detect repeated states can cause exponentially more work. Why?

Graph Search

- In BFS, for example, we shouldn’t bother expanding the circled nodes (why?)
- [figure: search tree from S to G in which repeated states are circled]

Graph Search

- Very simple fix: never expand a state twice

Graph Search

- Idea: never expand a state twice
- How to implement:
  - Tree search + list of expanded states (closed list)
  - Expand the search tree node-by-node, but…
  - Before expanding a node, check to make sure its state is new
- Python trick: store the closed list as a set, not a list
- Can graph search wreck completeness? Why/why not?
- How about optimality?

Consistency

- [figure: graph over S, A, B, C, G with edge costs and h values]
- What went wrong?
- Taking a step must not reduce f value!

Consistency

- Stronger than admissibility
- Definition:
  - C(A→C) + h(C) ≥ h(A)
  - C(A→C) ≥ h(A) - h(C)
- Consequence:
  - The f value on a path never decreases
  - A* graph search is optimal
- [figure: path A, C, G with h values 4, 1, 0 and edge costs 1, 3]

Optimality of A* Graph Search

Proof:

- New possible problem: nodes on path to G* that would have been in queue aren’t, because some worse n’ for the same state as some n was dequeued and expanded first (disaster!)
- Take the highest such n in tree
- Let p be the ancestor which was on the queue when n’ was expanded
- f(p) ≤ f(n) (by consistency)
- f(n) < f(n’) because n’ is suboptimal
- p would have been expanded before n’
- So n would have been expanded before n’, too
- Contradiction!
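The consistency definition above, C(A→C) + h(C) ≥ h(A), can be checked mechanically on a small graph. The following is only a sketch: the edge list is one reading of the second Consistency figure (A to C with cost 1, C to G with cost 3, h values 4, 1, 0), which is an assumption because the figure did not survive extraction. The function names and the `h_fixed` values are hypothetical, chosen for illustration.

```python
import heapq


def true_costs_to_goal(edges, goal):
    """Cheapest cost from each state to `goal` (Dijkstra over reversed edges)."""
    reverse = {}
    for (u, v), cost in edges.items():
        reverse.setdefault(v, []).append((u, cost))
    best = {goal: 0}
    queue = [(0, goal)]
    while queue:
        dist, state = heapq.heappop(queue)
        if dist > best.get(state, float("inf")):
            continue  # stale queue entry
        for prev, cost in reverse.get(state, []):
            if dist + cost < best.get(prev, float("inf")):
                best[prev] = dist + cost
                heapq.heappush(queue, (dist + cost, prev))
    return best


def is_admissible(h, edges, goal):
    """h never overestimates the true cost to the goal."""
    true = true_costs_to_goal(edges, goal)
    return all(h[s] <= true.get(s, float("inf")) for s in h)


def is_consistent(h, edges):
    """Every edge u -> v satisfies cost(u, v) + h(v) >= h(u)."""
    return all(cost + h[v] >= h[u] for (u, v), cost in edges.items())


# Assumed reading of the figure: A --1--> C --3--> G.
edges = {("A", "C"): 1, ("C", "G"): 3}
h_bad = {"A": 4, "C": 1, "G": 0}    # h values read from the figure
h_fixed = {"A": 2, "C": 1, "G": 0}  # hypothetical repair, not from the slides

print(is_admissible(h_bad, edges, "G"), is_consistent(h_bad, edges))
print(is_admissible(h_fixed, edges, "G"), is_consistent(h_fixed, edges))
```

Under this reading, `h_bad` is admissible but not consistent: stepping from A to C drops f from 4 to 2, which is the "taking a step must not reduce f value" failure.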
Optimality

- Tree search:
  - A* optimal if heuristic is admissible (and nonnegative)
  - UCS is a special case (h = 0)
- Graph search:
  - A* optimal if heuristic is consistent
  - UCS optimal (h = 0 is consistent)
- Consistency implies admissibility
- In general, natural admissible heuristics tend to be consistent

Summary: A*

- A* uses both backward costs and (estimates of) forward costs
- A* is optimal with admissible heuristics
- Heuristic design is key: often use relaxed problems
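The pieces from this lecture (fringe ordered by f(n) = g(n) + h(n), goal test on dequeue, closed set of expanded states) can be combined into a minimal A* graph search sketch. The h values below are the ones shown in the "Combining UCS and Greedy" example. The edge list is a guess at that figure's layout and should be treated as hypothetical, as should the names `a_star`, `GRAPH`, and `H`.

```python
import heapq
from itertools import count


def a_star(start, goal, graph, h):
    """A* graph search; returns (path, cost), or (None, inf) if no path exists."""
    tie = count()  # tie-breaker so the heap never has to compare paths
    fringe = [(h(start), next(tie), 0, start, [start])]
    closed = set()  # expanded states, stored as a set rather than a list
    while fringe:
        _, _, g, state, path = heapq.heappop(fringe)
        if state == goal:  # stop when a goal is dequeued, not when enqueued
            return path, g
        if state in closed:  # never expand a state twice
            continue
        closed.add(state)
        for nxt, cost in graph.get(state, []):
            if nxt not in closed:
                g2 = g + cost
                heapq.heappush(
                    fringe, (g2 + h(nxt), next(tie), g2, nxt, path + [nxt])
                )
    return None, float("inf")


# Hypothetical edges; only the h values are taken from the slide.
GRAPH = {
    "S": [("a", 1)],
    "a": [("b", 1), ("d", 3), ("e", 5)],
    "b": [("c", 1)],
    "d": [("G", 2)],
    "e": [("d", 1)],
}
H = {"S": 6, "a": 5, "b": 6, "c": 7, "d": 2, "e": 1, "G": 0}

path, cost = a_star("S", "G", GRAPH, H.get)
print(path, cost)

# With h = 0 the same code behaves as uniform cost search.
ucs_path, ucs_cost = a_star("S", "G", GRAPH, lambda s: 0)
print(ucs_path, ucs_cost)
```

On this assumed graph the heuristic is consistent, so skipping already-expanded states does not cost optimality. With an admissible but inconsistent heuristic, the closed-set check could discard a cheaper path to a state.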