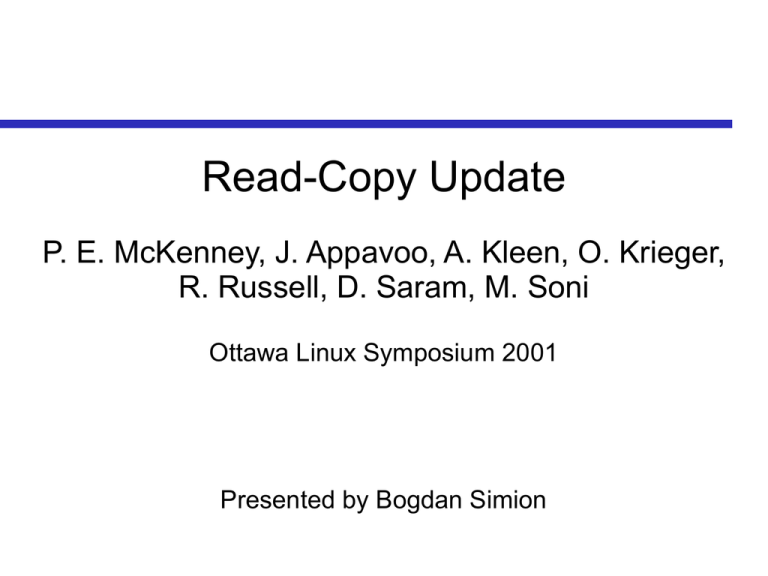

Read-Copy Update

P. E. McKenney, J. Appavoo, A. Kleen, O. Krieger, R. Russell, D. Sarma, M. Soni

Ottawa Linux Symposium 2001

Presented by Bogdan Simion

Motivation

Locking can be expensive

Overhead of locking code

Cache bouncing

Linux uses locking to protect against infrequent destructive modifications

e.g., racy accesses to unloaded modules

Want to avoid locking expense for reads of data that are infrequently modified

Key Idea

Example: module unloading

Give ongoing operations a grace period to finish

Grace Periods

Starts when new operations see new state

e.g., remove pointer to module from a list

First phase of the update

No new references made once period starts

Extends until all operations that started before the grace period have finished

Operations with outstanding references finish safely

When the period ends, the system may clean up

Second phase of the update, e.g., free module data

Grace Period Duration

Safe to end the grace period when all CPUs have finished prior operations

In a non-preemptive kernel, every operation running on a CPU has finished by the time that CPU context switches

Thus, the grace period ends after all CPUs have context switched at least once

Zero reference count deduced without using any shared data!
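The counting argument above can be sketched in userspace C. This is a minimal single-threaded simulation under stated assumptions, not kernel code: `NCPUS`, `ctxt_switches`, `context_switch()`, `gp_start()` and `gp_done()` are hypothetical names, and each "CPU" is just an array slot whose counter the caller bumps to stand in for a context switch.

```c
#include <stdbool.h>
#include <string.h>

#define NCPUS 4  /* hypothetical CPU count for the simulation */

/* Per-CPU count of context switches. Each slot is written only by its
 * own (simulated) CPU, so readers never write to shared data. */
static unsigned long ctxt_switches[NCPUS];

struct grace_period {
    unsigned long snapshot[NCPUS];  /* counters when the period began */
};

/* Simulate one context switch on the given CPU. */
static void context_switch(int cpu)
{
    ctxt_switches[cpu]++;
}

/* Phase 1 of the update is done: record where every CPU stands. */
static void gp_start(struct grace_period *gp)
{
    memcpy(gp->snapshot, ctxt_switches, sizeof(ctxt_switches));
}

/* The grace period is over once every CPU has context switched at
 * least once since the snapshot was taken. */
static bool gp_done(const struct grace_period *gp)
{
    for (int cpu = 0; cpu < NCPUS; cpu++)
        if (ctxt_switches[cpu] == gp->snapshot[cpu])
            return false;
    return true;
}
```

An updater would call `gp_start()` right after unlinking the old state and defer cleanup until `gp_done()` returns true. Repeated switches on one CPU do not help: every CPU has to move at least once.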

RCU So Far

RCU performs updates in two phases:

– Update enough so new operations see new state but old operations can proceed using old state

– Complete the update after the grace period

RCU works well when

– Updates can be done in two phases

– Operations still work with stale state

– Destructive updates are infrequent

Let's look at an example of how it's used

Example: Reference Counted Search

• Simple circular doubly linked-list

• Compare a reference-counting locking algorithm taken from Linux with its read-copy-update equivalent

Reference Counted Search

• For each algorithm:

• search()

• delete()

• search(): returns a pointer to an element in the list given its addr, and ensures that element is not being freed up

• delete(): arranges for the specified element to eventually be freed up

• delete() may not be able to free the element immediately due to concurrent searches

Reference Counted Search

Reference-Counted Usage

• Read-only and update (including delete) operation

RCU Search / Delete

Search / Delete Discussion

Searching scales perfectly

No locks – scales well

No cache line bouncing

Clear advantage over reference counting

Search can return stale data

There is a race between search and delete

Reference counting + locks does not have this problem

Delete is similar – global lock

Good speedups only if there are many more searches than deletes

kfree_rcu is neither trivial nor inexpensive

Read-Copy Deletion Scenario

• To delete element B, the updater task acquires the list lock to exclude other list manipulation, unlinks element B from the list and releases the list lock

Read-Copy Deletion Scenario

List After Grace Period

List After Element B Returned to Freelist

• The updater task passes a pointer to B to the kfree_rcu() primitive, which adds the memory to a list waiting to be freed.

• Safe to return B to the freelist at the end of the grace period (when all pre-existing ops complete)
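The deletion scenario above can be sketched as a small userspace C program. This is not the code from the paper or the kernel: `struct elem`, `list_insert()`, `list_search()`, `list_delete()` and `free_pending()` are hypothetical names, the lock is a simple C11 `atomic_flag` spinlock, and the sketch is only exercised single-threaded. A real concurrent version would also need attention to memory ordering, and `free_pending()` must only run once a grace period has elapsed.

```c
#include <stdatomic.h>
#include <stddef.h>
#include <stdlib.h>

struct elem {
    struct elem *next, *prev;
    long addr;               /* search key */
    struct elem *free_next;  /* link on the deferred-free list */
};

static struct elem head = { &head, &head, 0, NULL };  /* circular list */
static atomic_flag list_lock = ATOMIC_FLAG_INIT;      /* updaters only */
static struct elem *pending_free;  /* unlinked, awaiting grace period */

static void lock_list(void)   { while (atomic_flag_test_and_set(&list_lock)) ; }
static void unlock_list(void) { atomic_flag_clear(&list_lock); }

/* Updater: insert a new element at the front of the list. */
static struct elem *list_insert(long addr)
{
    struct elem *p = malloc(sizeof(*p));
    if (!p)
        return NULL;
    p->addr = addr;
    p->free_next = NULL;
    lock_list();
    p->next = head.next;
    p->prev = &head;
    head.next->prev = p;
    head.next = p;
    unlock_list();
    return p;
}

/* Reader: no lock and no reference count. May return an element that
 * a concurrent delete has already unlinked (stale data). */
static struct elem *list_search(long addr)
{
    for (struct elem *p = head.next; p != &head; p = p->next)
        if (p->addr == addr)
            return p;
    return NULL;
}

/* Updater, phase 1: unlink p under the lock but leave p's own pointers
 * intact so a reader already at p can keep walking. Then queue p. */
static void list_delete(struct elem *p)
{
    lock_list();
    p->next->prev = p->prev;
    p->prev->next = p->next;
    p->free_next = pending_free;
    pending_free = p;
    unlock_list();
}

/* Updater, phase 2: call only after the grace period has ended.
 * Returns the number of elements actually freed. */
static int free_pending(void)
{
    int n = 0;
    lock_list();
    struct elem *p = pending_free;
    pending_free = NULL;
    unlock_list();
    while (p) {
        struct elem *next = p->free_next;
        free(p);
        p = next;
        n++;
    }
    return n;
}
```

The key design point is in `list_delete()`: B's `next` and `prev` are not cleared, so a search that reached B before the unlink can still get back onto the live list.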

Implementing kfree_rcu

• Basic idea:

Implementing kfree_rcu

• Execute updater on each CPU:

Implementing kfree_rcu

Delay deletion until the end of the grace period:

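void wait_for_rcu(void) {
    ...
    /* Start with every CPU in the allowed mask. */
    current->cpus_allowed = (1 << num_cpus) - 1;
    while (true) {
        /* Running here means this CPU has context switched: drop it. */
        current->cpus_allowed &= ~(1 << cpu_index());
        if (current->cpus_allowed == 0) break;
        schedule();  /* move to a CPU still in the mask */
    }
    /* Grace period now over. Now it's safe to delete. */
    ...
}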

Doesn't work with preemptible kernels. Why?

Can't be called from an interrupt handler or while a spin lock is held. Why?

Can be relatively slow. Why?

Deferring wait_for_rcu

struct rcu_head { struct tq_struct task; };

void *kmalloc_rcu(size_t size, int flags) {
    /* Allocate room for a hidden header in front of the object. */
    struct rcu_head *ret = kmalloc(size + sizeof(*ret), flags);
    if (!ret) return NULL;
    return ret + 1;
}

void sync_and_destroy(void *head) {
    wait_for_rcu();
    kfree(head);
}

void kfree_rcu(void *obj) {
    /* Step back from the object to its hidden header. */
    struct rcu_head *head = ((struct rcu_head *) obj) - 1;
    head->task.routine = &sync_and_destroy;
    head->task.data = head;
    schedule_task(&head->task);
}

Why is kmalloc_rcu necessary?
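One plausible reading of the question: the deferred free needs per-object bookkeeping that must already exist when the free is requested, so the allocator reserves it up front. The following userspace sketch illustrates that layout under stated assumptions. `malloc_rcu()`, `free_rcu()` and `reclaim_after_grace_period()` are hypothetical stand-ins built on `malloc`/`free`, the header holds only a list link rather than a `tq_struct`, and the caller is trusted to invoke the reclaim step only after a grace period.

```c
#include <stddef.h>
#include <stdlib.h>

/* Hidden header placed in front of every object. It is aligned like
 * max_align_t so the object that follows it is suitably aligned too. */
struct rcu_head {
    _Alignas(max_align_t) struct rcu_head *next;  /* pending-list link */
};

static struct rcu_head *pending;  /* objects waiting for a grace period */

/* Allocate size bytes plus the header; hand back the object part. */
static void *malloc_rcu(size_t size)
{
    struct rcu_head *h = malloc(sizeof(*h) + size);
    if (!h)
        return NULL;
    h->next = NULL;
    return h + 1;
}

/* Request a deferred free. No allocation happens here, so this cannot
 * fail: the header reserved by malloc_rcu() carries the bookkeeping.
 * Passing a pointer from plain malloc() would step back into memory
 * that is not a header at all. */
static void free_rcu(void *obj)
{
    struct rcu_head *h = (struct rcu_head *)obj - 1;
    h->next = pending;
    pending = h;
}

/* Free everything queued so far; returns how many objects were freed.
 * Assumed to be called only after a grace period has elapsed. */
static int reclaim_after_grace_period(void)
{
    int n = 0;
    while (pending) {
        struct rcu_head *h = pending;
        pending = h->next;
        free(h);
        n++;
    }
    return n;
}
```

Until the reclaim step runs, the object's contents stay intact, which is what lets readers that started before the delete finish safely.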

RCU Application: File Descriptors

Kernel maintains mapping of file descriptors to instances of struct file with an array

Expansion of the array is a destructive update:

Copies the old elements into a new array

Updates pointers and deletes the old array

RCU employed:

Phase 1: Create new arrays and update pointers

Phase 2: Delete the old arrays
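The two phases above can be sketched in userspace C. This is an illustration under stated assumptions, not the 2.4 kernel's data structures: `struct fd_table`, `struct files`, `fd_lookup()`, `fd_expand()` and `fd_reclaim()` are hypothetical names, the array and its size are bundled behind one pointer so a reader sees a consistent pair with a single load, and the sketch is exercised single-threaded.

```c
#include <stddef.h>
#include <stdlib.h>
#include <string.h>

struct file { int unused; };  /* placeholder for the real struct file */

struct fd_table {
    struct fd_table *retired_next;  /* link on the retired list */
    size_t max_fds;
    struct file *fd[];              /* max_fds slots */
};

struct files {
    struct fd_table *table;    /* current table, read by lookups */
    struct fd_table *retired;  /* old tables awaiting the grace period */
};

/* Allocate a zeroed table with n slots. */
static struct fd_table *table_alloc(size_t n)
{
    struct fd_table *t = calloc(1, sizeof(*t) + n * sizeof(t->fd[0]));
    if (t)
        t->max_fds = n;
    return t;
}

/* Reader: one pointer load selects either the old or the new table;
 * both are valid until the old one is reclaimed. */
static struct file *fd_lookup(const struct files *f, size_t fd)
{
    const struct fd_table *t = f->table;
    return fd < t->max_fds ? t->fd[fd] : NULL;
}

/* Phase 1: build the larger table, copy, publish, retire the old one.
 * Returns 0 on success, -1 if allocation fails. */
static int fd_expand(struct files *f, size_t new_max)
{
    struct fd_table *old = f->table;
    if (new_max <= old->max_fds)
        return 0;
    struct fd_table *grown = table_alloc(new_max);
    if (!grown)
        return -1;
    memcpy(grown->fd, old->fd, old->max_fds * sizeof(old->fd[0]));
    f->table = grown;               /* new lookups see the new table */
    old->retired_next = f->retired;
    f->retired = old;               /* old table left intact for now */
    return 0;
}

/* Phase 2: after the grace period, free the retired tables.
 * Returns the number of tables freed. */
static int fd_reclaim(struct files *f)
{
    int n = 0;
    while (f->retired) {
        struct fd_table *t = f->retired;
        f->retired = t->retired_next;
        free(t);
        n++;
    }
    return n;
}
```

Between `fd_expand()` and `fd_reclaim()` both tables exist, so a lookup that loaded the old pointer just before the expansion still reads valid memory.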

RCU Performance: File Descriptors

Chat benchmark, 2.4.2 SMP Kernel

Why does R/W lock incur so much overhead?

RCU Performance Improvements

A number of improvements to the basic mechanism

Batch grace period measurements

wait_for_rcu is expensive

A single measurement satisfies multiple deferred free requests

Maintain per-CPU request lists

Faster grace period algorithm

See the paper for details
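The batching idea can be sketched as follows. This is a hedged userspace illustration, not the algorithm from the paper: `struct rcu_req`, `call_deferred()`, `process_batch()` and `count_and_free()` are hypothetical names, `wait_for_grace_period()` is a stub that only counts how often it is called, and "per-CPU" lists are plain array slots in a single-threaded program.

```c
#include <stddef.h>
#include <stdlib.h>

#define NCPUS 4  /* hypothetical CPU count for the simulation */

/* One deferred request; embedded in (or allocated with) the object so
 * that queuing it never needs a fresh allocation. */
struct rcu_req {
    struct rcu_req *next;
    void (*func)(void *);
    void *arg;
};

static struct rcu_req *req_list[NCPUS];    /* per-CPU request lists */
static unsigned long grace_periods_waited; /* how many waits we paid for */
static int callbacks_run;                  /* bumped by count_and_free() */

/* Stub: a real version would block until all CPUs context switch. */
static void wait_for_grace_period(void)
{
    grace_periods_waited++;
}

/* Example callback: free the object and count the call. */
static void count_and_free(void *arg)
{
    free(arg);
    callbacks_run++;
}

/* Queue a request on the given CPU's list. */
static void call_deferred(int cpu, struct rcu_req *r,
                          void (*func)(void *), void *arg)
{
    r->func = func;
    r->arg = arg;
    r->next = req_list[cpu];
    req_list[cpu] = r;
}

/* Detach everything queued so far, wait for ONE grace period, then run
 * all detached callbacks. Returns the number of callbacks run. */
static int process_batch(void)
{
    struct rcu_req *batch[NCPUS];
    int queued = 0, run = 0;

    for (int cpu = 0; cpu < NCPUS; cpu++) {
        batch[cpu] = req_list[cpu];
        req_list[cpu] = NULL;
        if (batch[cpu])
            queued = 1;
    }
    if (!queued)
        return 0;  /* nothing to do: skip the expensive wait */

    wait_for_grace_period();

    for (int cpu = 0; cpu < NCPUS; cpu++) {
        while (batch[cpu]) {
            struct rcu_req *r = batch[cpu];
            batch[cpu] = r->next;  /* read before func may free r */
            r->func(r->arg);
            run++;
        }
    }
    return run;
}
```

Requests queued after the lists are detached wait for the next batch, since the current grace period may have started before they were unlinked.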

Comparing RCU to other Locking Algorithms

Data locking

Does not avoid reader locks

Also prone to deadlocks

Although list elements can be manipulated in parallel, searches cannot be done in parallel

Can be used to prevent stale reads in RCU

brlock

Effectively lock-free reads

Not clear how its performance differs from RCU

i.e., Can't brlock be used for the file descriptor arrays?

Conclusions

RCU is an effective approach for avoiding locking for read-mostly data structures

An elegant method for implicit reference counting

Main advantage: readers need not acquire locks, perform any atomic ops, write to shared memory or use barriers.

The destructive update is delayed until the grace period finishes, i.e., until all CPUs have context switched (in a non-preemptible kernel)

Since 2001, it has been used in hundreds of places in the Linux kernel