Representation of Numbers

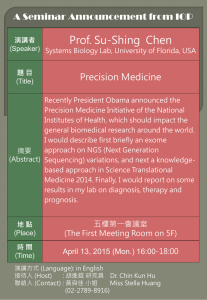


Computational Physics 1

Limits of computers

Computers are powerful but finite: there is a limited amount of storage space. This affects how numbers can be represented and any calculations we carry out. We first look at how numbers are stored and represented on computers.

Number Representation

Computers represent all numbers, other than integers and some fractions, with imprecision. Numbers are stored as approximations that fit in a fixed number of bits or bytes. There are different types of “representations” or “data types”. Data types vary in the number of bits used (word length) and in the representation: fixed point (int or long) or floating point (float or double) format.

Fixed point Integer Representation

A common method of integer representation is the sign and magnitude representation: one bit is used for the sign and the remaining bits for the magnitude. Clearly there is a restriction on the numbers that can be represented. With 7 bits reserved for the magnitude (an 8-bit word), the largest and smallest numbers represented are +127 and -127:

+127 = 0 1111111   (sign bit 0: positive number)
-127 = 1 1111111   (sign bit 1: negative number)

Things to note:

1. Fixed point numbers are represented exactly.
2. Arithmetic between fixed point numbers is also exact, provided the answer is within range.
3. Division is also exact if it is interpreted as producing an integer and discarding any remainder.

Floating point Representation

In floating point representation, numbers are represented by a sign bit $s$, an integer exponent $e$ and a positive integer mantissa $M$. Together these represent the number

$$s \times M \times B^{e-E}$$

where $e$ is the exact exponent, $E$ is the bias (a fixed integer, machine dependent) and $B$ is the base, usually 2 or 16.

Most computers use the IEEE representation, in which the floating point number is normalized. The two most common IEEE representations are:

1. IEEE Short Real (Single Precision): 32 bits, with 1 for the sign, 8 for the exponent and 23 for the mantissa.
2. IEEE Long Real (Double Precision): 64 bits, with 1 sign bit, 11 for the exponent and 52 for the mantissa.

Layout of a single precision number:

bit 31       sign       1 bit
bits 30-23   exponent   8 bits   (11111111)
bits 22-0    mantissa   23 bits  (11111111111111111111111)

For example, 0.5 is represented as

0 01111111 10000000000000000000000

where the bias is $01111111_2 = 127_{10}$. The mantissa is read here with its leading bit written out, as the binary fraction $0.1_2 = 0.5$, multiplied by $2^{127-127} = 1$. (IEEE 754 itself leaves the leading 1 implicit, so its stored pattern for 0.5 is 0 01111110 00000000000000000000000.)

By convention a floating point number is normalized, in the same way that 31278 is written as $3.1278 \times 10^{4}$. As a result the leftmost bit of the mantissa is always 1 and does not have to be stored. The computer only needs to remember that there is a phantom bit.

Precision

A consequence of the storage scheme is that there is a limit to precision. Consider the following example to see how machine precision affects calculations: the addition of two 32-bit words (single precision), $7 + 1.0 \times 10^{-7}$.
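The single precision layout described above (1 sign bit, 8 exponent bits, 23 mantissa bits) can be inspected directly. The following is a minimal Python sketch, not part of the original slides; the helper name `float32_fields` is an assumption made for illustration. It uses the standard `struct` module to obtain the IEEE 754 bit pattern. Because IEEE 754 leaves the leading 1 of the normalized mantissa implicit (the phantom bit), the stored exponent is one lower, and the mantissa field is shifted one place to the left, compared with a pattern that writes the leading bit out.

```python
import struct


def float32_fields(x):
    """Return the (sign, exponent, mantissa) bit strings of x stored as IEEE 754 single precision.

    Hypothetical helper for illustration only.
    """
    # Pack x as a big-endian 32-bit float, then reinterpret the same
    # four bytes as an unsigned 32-bit integer to get at the raw bits.
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    pattern = format(bits, "032b")
    # Bit 31 is the sign, bits 30-23 the exponent, bits 22-0 the mantissa.
    return pattern[0], pattern[1:9], pattern[9:]


for value in (0.5, 7.0, 1.0e-7):
    sign, exponent, mantissa = float32_fields(value)
    print(f"{value:>8g} = {sign} {exponent} {mantissa}")
```

For 0.5 the fields are 0, 01111110 and 23 zeros: the stored exponent is 126, one below the bias of 127, and the mantissa field is empty because only the implicit leading 1 is set.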
The two numbers, 7 and $1.0 \times 10^{-7}$, are stored as:

$7$       = 0 10000010 11100000000000000000000
$10^{-7}$ = 0 01101000 11010110101111111001010

(The mantissa is written with its leading bit and the bias is 127. Since $10^{-7} \approx 0.8389 \times 2^{-23}$, the stored exponent of the smaller number is $127 - 23 = 104 = 01101000_2$.)

Because the exponents of the two numbers are different, the exponent of the smaller number is made larger while its mantissa is made smaller (shifting bits to the right while inserting zeros) until the exponents are the same:

$10^{-7}$ = 0 10000010 00000000000000000000000 (0001101…)

NB: The bits in the brackets are lost.

As a result, when the calculation is carried out the computer will get

$$7 + 1.0 \times 10^{-7} = 7$$

Effectively the $10^{-7}$ term has been ignored. The machine precision $\epsilon_m$ can be defined as the maximum positive value that can be added to 1 without changing its stored value.

Errors and Uncertainties

Living with errors

The problem: errors and uncertainty are an unavoidable part of computation. Some are human errors while others are computer errors (e.g. due to precision). We therefore look at the types of errors and ways of reducing them.

There are five types of errors in computation:

1. Mistakes
2. Random error
3. Truncation error
4. Roundoff error
5. Propagated error

Mistakes: these are typographical errors entered with the program, or running the program with the wrong data, etc.

Random errors: these are caused by random fluctuations in electronics due to, for example, power surges. They are rare, but there is no control over them.
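The errors due to precision mentioned above are governed by the machine precision $\epsilon_m$, which can be estimated numerically. The following is a minimal Python sketch under stated assumptions: the names `to_float32` and `machine_precision` are hypothetical, Python's `float` is taken to be an IEEE 754 double with round-to-nearest arithmetic, and single precision is emulated by rounding through the standard `struct` module rather than by a native 32-bit type.

```python
import struct


def to_float32(x):
    """Round a Python float (double precision) to the nearest single precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def machine_precision(round_fn=float):
    """Halve eps until adding it to 1.0 no longer changes the stored result.

    round_fn models the storage format: float for double precision,
    to_float32 for (emulated) single precision.
    """
    eps = 1.0
    while round_fn(1.0 + eps) != 1.0:
        eps /= 2.0
    return eps  # largest power of two for which 1.0 + eps is stored as 1.0


print("double precision:", machine_precision())
print("single precision:", machine_precision(to_float32))

# The single precision addition 7 + 1.0e-7: the small term is lost.
print(to_float32(7.0 + to_float32(1.0e-7)) == 7.0)
```

The search only tries powers of two, so it gives $2^{-53} \approx 1.1 \times 10^{-16}$ for double precision and $2^{-24} \approx 6.0 \times 10^{-8}$ for single precision. The last line checks the addition $7 + 1.0 \times 10^{-7}$ and confirms that the result is stored as 7.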
Truncation or approximation errors: these arise from simplifications of the mathematics made so that the problem can be solved, for example the replacement of an infinite series by a finite one:

$$e^{x} = \sum_{n=0}^{\infty} \frac{x^{n}}{n!} \simeq \sum_{n=0}^{N} \frac{x^{n}}{n!} = e^{x} + \epsilon(x, N)$$

where $\epsilon(x, N)$ is the total absolute error. The truncation error vanishes as $N$ is taken to infinity. For $N$ much larger than $x$ the error is small; if $x$ and $N$ are close, the truncation error will be large.

Roundoff error: since computers represent most numbers with imprecision (in addition to the general restrictions on range), some digits of a number are lost. The error resulting from the rounding or truncation of digits is known as the roundoff error. For example, when seven decimal digits are kept:

$$2\left(\frac{1}{3}\right) - \frac{2}{3} = 0.6666666 - 0.6666667 = -0.0000001 \neq 0$$

Propagated error: this is an error in later steps of a program due to an earlier error. It is added to the local error (e.g. to a roundoff error). Propagated error is critical because errors may be magnified, making the results invalid. The stability of the program determines how errors are propagated.

Stability of algorithms

For stable methods, early errors die out as the program runs. Unstable programs, however, continuously magnify any error.
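The dependence of the truncation error on $N$ described above can be checked numerically. The following is a minimal Python sketch, not taken from the slides: the function name `exp_series` and the choice $x = 10$ are assumptions made for illustration, and `math.exp` is used as a stand-in for the true value.

```python
import math


def exp_series(x, n_max):
    """Sum x**n / n! for n = 0 .. n_max (the truncated series for e**x)."""
    total = 0.0
    term = 1.0  # holds x**n / n!, starting with n = 0
    for n in range(n_max + 1):
        total += term
        term *= x / (n + 1)  # build the next term from the current one
    return total


x = 10.0
for n_max in (5, 10, 20, 40):
    # epsilon(x, N): truncated sum minus the reference value of e**x
    error = exp_series(x, n_max) - math.exp(x)
    print(f"N = {n_max:2d}   error = {error: .3e}")
```

Each term is obtained from the previous one, which avoids computing large powers and factorials separately. With $N$ close to $x$ the error is a sizeable fraction of $e^{x}$ itself, while for $N$ several times larger than $x$ it shrinks to the level of the roundoff error.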
In a program, some or all of these types of error occur and interact to some degree.

Calculating the Error

A simple way of looking at the error is as the difference between the true value and the approximate value, i.e.

Error (e) = True value - Approximate value

Example: Find the truncation error for $e^{x}$ if the first 3 terms in the expansion are retained.

Solution: Error = True value - Approximate value

$$\text{Error} = \left(1 + x + \frac{x^{2}}{2!} + \frac{x^{3}}{3!} + \dots\right) - \left(1 + x + \frac{x^{2}}{2!}\right) = \frac{x^{3}}{3!} + \frac{x^{4}}{4!} + \frac{x^{5}}{5!} + \dots$$

Three other ways of defining the error are the absolute error, the relative error and the percentage error. In what follows $X$ is the true value and $X'$ the approximate value.

Absolute error:

$$e_a = |\text{True value} - \text{Approximate value}| = |X - X'| = |\text{Error}|$$

Relative error:

$$e_r = \frac{|\text{Error}|}{|\text{True value}|} = \frac{|X - X'|}{|X|}$$

Percentage error:

$$e_p = 100\, e_r = 100\, \frac{|X - X'|}{|X|}$$

Example: Suppose 1.414 is used as an approximation to $\sqrt{2}$. Find the absolute, relative and percentage errors.

$$\sqrt{2} = 1.41421356$$

$$e_a = |\text{True value} - \text{Approximate value}| = |1.41421356 - 1.414| = 0.00021356 \quad \text{(absolute error)}$$

$$e_r = \frac{|\text{Error}|}{|\text{True value}|} = \frac{0.00021356}{\sqrt{2}} = 0.151 \times 10^{-3} \quad \text{(relative error)}$$

$$e_p = e_r \times 100 = 0.151 \times 10^{-1}\,\% \quad \text{(percentage error)}$$
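The three error measures can be written as small functions and applied to the $\sqrt{2}$ example. The following is a minimal Python sketch; the function names are assumptions made for illustration, and `math.sqrt(2.0)` is used as the true value.

```python
import math


def absolute_error(true_value, approx_value):
    """e_a = |true value - approximate value|"""
    return abs(true_value - approx_value)


def relative_error(true_value, approx_value):
    """e_r = e_a / |true value|; not defined when the true value is zero."""
    return absolute_error(true_value, approx_value) / abs(true_value)


def percentage_error(true_value, approx_value):
    """e_p = 100 * e_r"""
    return 100.0 * relative_error(true_value, approx_value)


true_value = math.sqrt(2.0)
approx_value = 1.414

print(f"absolute error   = {absolute_error(true_value, approx_value):.8f}")
print(f"relative error   = {relative_error(true_value, approx_value):.3e}")
print(f"percentage error = {percentage_error(true_value, approx_value):.3e} %")
```

The relative and percentage errors divide by the true value, so they are only meaningful when the true value is non-zero.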
Well posed (conditioned) vs ill posed problems

Conditioning of a problem and stability

The accuracy of a solution depends on how the problem is stated (as well as on the computer’s accuracy). Not all problems are well posed and hence stable. A problem is well posed if a solution

(a) exists,
(b) is unique, and
(c) varies continuously as the parameters of the problem vary continuously.

If the problem is ill-posed, it should be replaced by an alternative form, or by another problem whose solution is close enough. An example is simplifying complicated functions having values which are almost the same.
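One way to read the last remark is as the subtraction of two values that are almost the same, where replacing the expression by an algebraically equivalent form gives a more accurate result. The following is a minimal Python sketch of that idea. The example function $\sqrt{x+1} - \sqrt{x}$ and both function names are assumptions chosen for illustration; they do not appear in the slides.

```python
import math


def difference_direct(x):
    """sqrt(x + 1) - sqrt(x), computed as written: two nearly equal values are subtracted."""
    return math.sqrt(x + 1.0) - math.sqrt(x)


def difference_rewritten(x):
    """The same quantity rewritten as 1 / (sqrt(x + 1) + sqrt(x)), with no subtraction."""
    return 1.0 / (math.sqrt(x + 1.0) + math.sqrt(x))


for x in (1.0e4, 1.0e8, 1.0e12, 1.0e16):
    print(f"x = {x:.0e}   direct = {difference_direct(x):.6e}   "
          f"rewritten = {difference_rewritten(x):.6e}")
```

The two forms are equal in exact arithmetic, since multiplying and dividing by $\sqrt{x+1} + \sqrt{x}$ turns the difference into a quotient. In double precision the direct form loses digits as $x$ grows and returns exactly zero at $x = 10^{16}$, where $x + 1$ can no longer be distinguished from $x$; the rewritten form stays close to $1/(2\sqrt{x})$.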