slides - Department of Computer Science


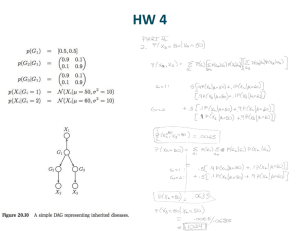

Bayesian Generative Modeling
Jason Eisner
Summer School on Machine Learning, Lisbon, Portugal – July 2011

Bayesian Generative Modeling: what's a model? What's a generative model? What's Bayesian?

Task-centric view of the world. Input x → task model and decoder, e.g., p(y|x) → output y → evaluation (loss function). This is a great way to track progress and compare systems. But it may fracture us into subcommunities: our systems are incomparable, and my semantics != your semantics. There is room for all of AI when solving any NLP task. Spelling correction could get some benefit from deep semantics, unsupervised grammar induction, active learning, discourse, etc. In practice, though, we focus on raising a single performance number, within strict, fixed assumptions about the type of available data. Do we want to build models and algorithms that are good for just one task?

Variable-centric view of the world. When we deeply understand language, what representations (type and token) does that understanding comprise?

Bayesian view of the world: a probability distribution over observed data and hidden data.

Different tasks merely change which variables are observed and which ones you care about inferring. Sentence-level example: the variables are the sentence, its syntax tree, its semantics, facts about the speaker/world, and facts about the language. Comprehension, production, and learning each observe some of these variables and infer others, leaving the rest latent.

Word-level example (same tasks: comprehension, production, learning): surface form of the word; alignment between surface and underlying forms; underlying form of the word; abstract morphemes in the word; underlying forms of the morphemes (the lexicon); constraint ranking (the grammar).

MT example (tasks: MT decoding, MT training, cross-lingual projection): Chinese sentence, Chinese parse, English parse, English sentence, translation and language models. In MT decoding and MT training, both parses are latent.

All you need is "p". Science = a descriptive theory of the world. Write down a formula for p(everything), where everything = observed, needed, and latent variables. Given observed, what might needed be? The most probable settings of needed are those that give comparatively large values of ∑latent p(observed, needed, latent). Formally, we want p(needed | observed) = p(observed, needed) / p(observed). Since observed is constant, the conditional probability of needed varies with p(observed, needed), which is the sum given above. (What do we do then?)

p can be any non-negative function you care to design, as long as it sums to 1 (or to another finite positive number: just rescale). But it's often convenient to use a graphical model: a flexible modeling technique that is well understood, and we know how to (approximately) compute with it.

Graphical model notation (slide thanks to Zoubin Ghahramani). Factor graphs (slide thanks to Zoubin Ghahramani).

Rather basic NLP example. First, a familiar example: a Conditional Random Field (CRF) for POS tagging. The observed input sentence (shaded) is "… find preferred tags …". One possible tagging (i.e., an assignment to the remaining variables) is v v v; another possible tagging is v a n.

Conditional Random Field (CRF). A "binary" factor measures the compatibility of 2 adjacent tags, and the model reuses the same parameters at every position. Its values, for previous tag → next tag, are: v→v 0, v→n 2, v→a 1; n→v 2, n→n 1, n→a 0; a→v 0, a→n 3, a→a 1. A unary factor at each position measures how compatible each tag is with the observed word: find: v 0.3, n 0.02, a 0; preferred: v 0.3, n 0, a 0.1; tags: v 0.2, n 0.2, a 0 ("tags" can't be an adjective).

p(v a n) is proportional to the product of all factors' values on v a n: … 1*3*0.3*0.1*0.2 … (binary factors v→a = 1 and a→n = 3; unary factors find = v 0.3, preferred = a 0.1, tags = n 0.2). MRF vs. CRF?

Inference: what do you know how to compute with this model? Maximize, sample, sum …

Back to the variable-centric view: semantics, lexicon (word types), entailment, correlation, inflection, cognates, transliteration, abbreviation, neologism, language evolution, tokens, sentences, translation, alignment, editing, quotation, discourse context, resources, speech, misspellings/typos, formatting, entanglement, annotation. To recover these variables, model and exploit their correlations.

How do you design the factors? It's easy to connect "English sentence" to "Portuguese sentence" … but you have to design a specific function that measures how compatible a pair of sentences is. Often, you can think of a generative story in which the individual factors are themselves probabilities. This may require some latent variables.
Directed graphical models (Bayes nets). Under any model, by the chain rule: p(A, B, C, D, E) = p(A) p(B|A) p(C|A,B) p(D|A,B,C) p(E|A,B,C,D). The model drawn on the slide then drops some of these conditioning variables, so each variable is conditioned only on its parents. (Slide thanks to Zoubin Ghahramani, modified.)

Unigram model for generating text: words w1 w2 w3 … are generated with probability p(w1) p(w2) p(w3) ….

Explicitly show the model's parameters: "θ is a vector that says which unigrams are likely." The joint probability is p(θ) p(w1 | θ) p(w2 | θ) p(w3 | θ) ….

"Plate notation" simplifies the diagram: a single node w inside a plate repeated N1 times.

Learn θ from the observed words (rather than vice versa).

Explicitly show a prior over θ (e.g., a Dirichlet): θ given α ~ Dirichlet(α); wi given θ ~ θ. "Even if we didn't observe word 5, the prior says that θ5 = 0 is a terrible guess." The joint probability is now p(θ | α) p(w1 | θ) p(w2 | θ) p(w3 | θ) ….

Dirichlet distribution. Each point on a k-dimensional simplex is a multinomial probability distribution, e.g., (0.2, 0.5, 0.3) over {dog, the, cat}, where each θi ≥ 0 and ∑i θi = 1. A Dirichlet distribution is a distribution over the multinomial distributions in the simplex. (Slides thanks to Nigel Crook; figure thanks to Percy Liang and Dan Klein.)

Example draws from a Dirichlet distribution over the 3-simplex: Dirichlet(5,5,5), Dirichlet(0.2, 5, 0.2), Dirichlet(0.5,0.5,0.5). (Slide thanks to Nigel Crook.)

Explicitly show a prior over θ (e.g., a Dirichlet). The posterior distribution p(θ | α, w) is also a Dirichlet, just like the prior p(θ | α): prior = Dirichlet(α), posterior = Dirichlet(α + counts(w)). The mean of the posterior is like the maximum-likelihood estimate of θ, except that it smooths the corpus counts by adding "pseudocounts" α. (But it is better to use the whole posterior, not just the mean.)
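The conjugate update on the posterior slide (prior Dirichlet(α), posterior Dirichlet(α + counts(w))) can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions. The vocabulary, the corpus, and α = 1 for every word are hypothetical. Draws use the standard construction in which independent Gamma(αk, 1) variables are normalized to sum to 1, via the standard-library `random.gammavariate`. The posterior mean for word k is (αk + ck) / (∑α + N).

```python
import random
from collections import Counter

def posterior_params(alpha, words):
    """Dirichlet(alpha) prior + observed words -> Dirichlet(alpha + counts) posterior."""
    counts = Counter(words)
    return {w: a + counts[w] for w, a in alpha.items()}

def dirichlet_mean(params):
    """Mean of a Dirichlet: each parameter divided by the sum of all parameters."""
    total = sum(params.values())
    return {w: a / total for w, a in params.items()}

def sample_dirichlet(params, rng):
    """Draw one multinomial theta ~ Dirichlet(params) by normalizing Gamma(a, 1) draws."""
    draws = {w: rng.gammavariate(a, 1.0) for w, a in params.items()}
    total = sum(draws.values())
    return {w: g / total for w, g in draws.items()}

# Hypothetical vocabulary, pseudocounts, and corpus; "mouse" is never observed.
alpha = {"the": 1.0, "dog": 1.0, "cat": 1.0, "mouse": 1.0}
corpus = ["the", "dog", "the", "cat", "the"]

post = posterior_params(alpha, corpus)  # the: 4, dog: 2, cat: 2, mouse: 1
mean = dirichlet_mean(post)             # the: 4/9, dog: 2/9, cat: 2/9, mouse: 1/9

# Maximum-likelihood estimate, for comparison: "mouse" gets no probability at all.
mle = {w: c / len(corpus) for w, c in Counter(corpus).items()}

# One plausible theta drawn from the whole posterior, not just its mean.
rng = random.Random(0)
theta = sample_dirichlet(post, rng)
```

The unobserved word keeps probability 1/9 under the posterior mean, which is the "θ5 = 0 is a terrible guess" point. Scaling all pseudocounts up, as in Dirichlet(5,5,5), concentrates draws near the uniform distribution. Values below 1, as in Dirichlet(0.5,0.5,0.5), push draws toward the corners of the simplex.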
Training and test documents: "Learn θ from document 1, use it to predict document 2." The training document has N1 words and the test document has N2 words. What do good configurations look like if N1 is large? What if N1 is small?

Many documents: "Each document has its own unigram model" θ1, θ2, θ3, with N1, N2, N3 words. Does observing docs 1 and 3 still help predict doc 2? Only if α learns that all the θ's are similar (low variance). And in that case, why even have separate θ's?

Many documents, in plate notation: α is given, or tuned to maximize training or dev set likelihood; θd given α ~ Dirichlet(α); wdi given θd ~ θd. The word plate (Nd words) is nested inside the document plate (D documents).

Bayesian text categorization: "Each document chooses one of only K topics (unigram models)." θk given α ~ Dirichlet(α), for k = 1…K; wdi ~ θk, but which k?

Bayesian text categorization, continued: a distribution over topics 1…K is drawn from a Dirichlet; each document's topic zd is drawn from that distribution; θk given α ~ Dirichlet(α); wdi given θ and zd ~ θzd. This allows documents to differ considerably while some of them still share the parameters of a topic in 1…K. We can also infer the probability that two documents have the same topic z. We might observe some of the topics.

Latent Dirichlet Allocation (Blei, Ng & Jordan 2003): "Each document chooses a mixture of all K topics; each word gets its own topic." Each word's topic z and the word w sit inside the word plate (Nd), which is inside the document plate (D); the K topics sit in their own plate.

(Part of) one assignment to LDA's variables (slides thanks to Dave Blei).

Latent Dirichlet Allocation: inference? Within each of the D documents, topics z1 z2 z3 … generate words w1 w2 w3 …, drawing on the K topics.

Finite-State Dirichlet Allocation (Cui & Eisner 2006): "A different HMM for each document." The topics form a chain z1 → z2 → z3 … that emits w1 w2 w3 …, within each of the D documents, with K topics.

Variants of Latent Dirichlet Allocation:

Syntactic topic model: a word or its topic is influenced by its syntactic position.

Correlated topic model, hierarchical topic model, …: some topics resemble other topics.

Polylingual topic model: all versions of the same document use the same topic mixture, even if they're in different languages. (Why useful?)
Relational topic model: documents on the same topic are generated separately but tend to link to one another. (Why useful?)

Dynamic topic model: we also observe a year for each document. The K topics used in 2011 have evolved slightly from their counterparts in 2010.

Dynamic Topic Model (slides thanks to Dave Blei).

Remember: Finite-State Dirichlet Allocation (Cui & Eisner 2006), "A different HMM for each document."

Bayesian HMM: "Shared HMM for all documents" (or just have 1 document). A chain z1 → z2 → z3 … emits w1 w2 w3 …, in each of the D documents. We have to estimate the transition parameters and the emission parameters.

FIN
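The Bayesian HMM's generative story, and the "∑latent" computation from the "All you need is p" slides, can be sketched together in Python. The sketch is minimal and everything in it is an assumption for illustration. Each row of the transition and emission matrices gets a symmetric Dirichlet prior. The start distribution reuses the transition hyperparameter. K, the vocabulary size V, and the hyperparameter values are all hypothetical. The sketch only draws parameters from the prior, generates one document, and scores it. Actually estimating the transition and emission parameters from data would need an inference method such as Gibbs sampling or variational Bayes, which is not shown. The forward algorithm sums over all K^n latent state sequences in O(nK²) time, and a brute-force enumeration checks it.

```python
import random
from itertools import product

def sample_dirichlet(alpha, k, rng):
    """Symmetric Dirichlet(alpha, ..., alpha) draw over k outcomes via normalized gammas."""
    g = [rng.gammavariate(alpha, 1.0) for _ in range(k)]
    s = sum(g)
    return [x / s for x in g]

def sample_hmm(K, V, alpha_trans, alpha_emit, rng):
    """Draw HMM parameters from Dirichlet priors (assumed: start reuses alpha_trans)."""
    start = sample_dirichlet(alpha_trans, K, rng)
    trans = [sample_dirichlet(alpha_trans, K, rng) for _ in range(K)]  # trans[j][k]
    emit = [sample_dirichlet(alpha_emit, V, rng) for _ in range(K)]    # emit[k][w]
    return start, trans, emit

def generate(start, trans, emit, n, rng):
    """Generate n latent states and n observed word ids from one shared HMM."""
    states, words = [], []
    z = rng.choices(range(len(start)), weights=start)[0]
    for _ in range(n):
        states.append(z)
        words.append(rng.choices(range(len(emit[z])), weights=emit[z])[0])
        z = rng.choices(range(len(trans[z])), weights=trans[z])[0]
    return states, words

def forward_prob(start, trans, emit, words):
    """p(words | parameters): sum over all latent state sequences in O(n K^2)."""
    K = len(start)
    f = [start[k] * emit[k][words[0]] for k in range(K)]
    for w in words[1:]:
        f = [sum(f[j] * trans[j][k] for j in range(K)) * emit[k][w] for k in range(K)]
    return sum(f)

def brute_force_prob(start, trans, emit, words):
    """Same quantity by enumerating all K**n state sequences (tiny n only)."""
    K, total = len(start), 0.0
    for zs in product(range(K), repeat=len(words)):
        p = start[zs[0]] * emit[zs[0]][words[0]]
        for i in range(1, len(words)):
            p *= trans[zs[i - 1]][zs[i]] * emit[zs[i]][words[i]]
        total += p
    return total

rng = random.Random(1)
start, trans, emit = sample_hmm(K=3, V=5, alpha_trans=1.0, alpha_emit=0.5, rng=rng)
states, words = generate(start, trans, emit, n=6, rng=rng)
p_fast = forward_prob(start, trans, emit, words)
p_slow = brute_force_prob(start, trans, emit, words)
```

The forward recursion keeps, for each state k, the total probability of all prefixes that end in k. That bookkeeping is what turns the exponential sum over latent sequences into a linear-time pass.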