perceptron dichotomizer

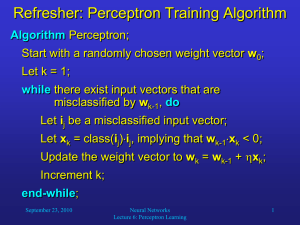

Multivariate linear models for regression and classification

Outline:
1) multivariate linear regression
2) linear classification (perceptron)
3) logistic regression

Multivariate Linear Classification
The perceptron (lecture 3 on amlbook.com)

Review: perceptron applied to credit approval
• Integrate the "threshold" into the input vector by including x0 = 1, so the threshold becomes the weight w0

Perceptron learning algorithm (PLA)
• The boundary separates the region where sign(wᵀx) is negative from the region where sign(wᵀx) is positive
• Each iteration pulls the boundary in a direction that tends to correct a misclassification
• If the data are linearly separable, iteration terminates when Ein(g) = 0
• Otherwise, terminate after a maximum number of iterations
• A graphical demo of the Perceptron Learning Algorithm (in Octave) is on AMLbook.com

Classification for digit recognition
• Examples of hand-written digits from zip codes
• Given a 16x16 pixel image, could have a model with 257 weights (256 pixel attributes plus w0)
• Better to develop a small number of attributes (features) from the raw data
• Regardless of the attributes chosen, x0 = 1 is included

2-attribute model: intensity and symmetry
• Intensity: how much black is in the image
• Symmetry: how similar the image is to its mirror images

PLA is unstable
• No obvious trend toward minimum Ein
• Correcting the misclassification of one example may cause others to become misclassified

Pocket algorithm (text p80)
• Keeps the best PLA result obtained thus far
• Must evaluate Ein (number of misclassified examples) after each update to determine whether it is better than the previous best

PLA & Pocket final results of 1 vs 5 classification

• Just like in Assignment 4, multivariate linear regression can be used as an alternative to PLA and the Pocket algorithm.
• PLA and the Pocket algorithm require class labels to be ±1.
• Multivariate linear regression can use any 2 distinct real numbers r1 and r2.
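
The Pocket algorithm described above (run PLA updates, re-evaluate Ein after each one, and keep the best weights seen so far) might be sketched as follows. This is a minimal illustration in plain Python, not the textbook's code. The function names, the random choice of which misclassified example to correct, the zero starting weights, and the convention that sign(0) counts as -1 are all assumptions made for the example. Each input vector is assumed to already include x0 = 1, and labels are assumed to be -1 or +1.

```python
import random


def sign(v):
    # Assumed convention: a score of exactly 0 is treated as -1.
    return 1 if v > 0 else -1


def predict(w, x):
    # x is assumed to already contain the constant x0 = 1 as its first entry.
    return sign(sum(wi * xi for wi, xi in zip(w, x)))


def count_errors(w, X, y):
    # Ein measured as the number of misclassified examples.
    return sum(1 for xi, yi in zip(X, y) if predict(w, xi) != yi)


def pocket(X, y, max_iter=1000, seed=0):
    """Run PLA updates, keeping the best weights found so far "in the pocket"."""
    rng = random.Random(seed)
    w = [0.0] * len(X[0])
    best_w, best_err = list(w), count_errors(w, X, y)
    for _ in range(max_iter):
        wrong = [i for i in range(len(X)) if predict(w, X[i]) != y[i]]
        if not wrong:
            break  # Ein = 0: the data are separated, nothing left to correct
        i = rng.choice(wrong)  # assumption: pick a misclassified example at random
        # PLA update: w <- w + y_i * x_i
        w = [wj + y[i] * xj for wj, xj in zip(w, X[i])]
        err = count_errors(w, X, y)
        if err < best_err:  # only replace the pocket when Ein improves
            best_w, best_err = list(w), err
    return best_w, best_err
```

Calling `pocket(X, y)` returns the pocketed weights together with their Ein count. Re-counting the errors after every update is what makes Pocket slower than plain PLA, but it is also what prevents a late, unlucky update from discarding a better earlier boundary.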
Classification boundaries
• yfit(x) = w0 + w1 x1 + w2 x2
• yfit(x) = (r1 + r2)/2 defines a function of x1 and x2 that is the boundary
• Solve this equation for x2 as a function of x1

Assignment 5: due 10-21-14

Part 1: Apply the Pocket algorithm (text p80) to 1 vs 5 classification
Two attribute files (intensity, symmetry) are on the class webpage. Use the smaller (424 examples) for training. Use the larger (1561 examples) to get the following results:
1) Etest as percent misclassified
2) Confusion matrix as percentages
3) Scatter plot with boundary (as in slide 12)

Part 2: Compare the Pocket algorithm results with those obtained by multivariate linear regression. Again, use the smaller set for training and the larger for testing. Report the same results as above. Calculate Etest as the mean of squared residuals by leave-one-out (424 runs). Compare to Etest as the mean of squared residuals calculated from the test set.
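
The boundary calculation and the confusion-matrix report can be illustrated with a short sketch. Setting yfit(x) = (r1 + r2)/2 and solving for x2 gives x2 = ((r1 + r2)/2 - w0 - w1 x1) / w2, which requires w2 to be nonzero. The helper names below are hypothetical, the rule of assigning the class whose target value is closer to yfit is an assumed reading of the boundary definition, and expressing the confusion matrix as percentages of all examples (rather than per row) is likewise an assumption.

```python
def boundary_x2(w, x1, r1, r2):
    """Return x2 on the boundary yfit = (r1 + r2)/2 for a given x1."""
    w0, w1, w2 = w
    if w2 == 0:
        raise ValueError("w2 is zero: the boundary cannot be solved for x2")
    return ((r1 + r2) / 2 - w0 - w1 * x1) / w2


def classify(w, x1, x2, r1, r2):
    """Assign the class whose target value is closer to the fitted value."""
    yfit = w[0] + w[1] * x1 + w[2] * x2
    return r1 if abs(yfit - r1) <= abs(yfit - r2) else r2


def confusion_percent(actual, predicted, labels):
    """Confusion matrix keyed by (actual, predicted), as percent of all examples."""
    n = len(actual)
    table = {(a, p): 0 for a in labels for p in labels}
    for a, p in zip(actual, predicted):
        table[(a, p)] += 1
    return {key: 100.0 * count / n for key, count in table.items()}
```

With illustrative weights w = (0, 1, 2) and targets r1 = 1, r2 = 5, the boundary value is 3, so `boundary_x2(w, 1.0, 1, 5)` gives x2 = 1.0. Evaluating `boundary_x2` at the smallest and largest x1 in the data yields the two endpoints needed to draw the boundary line on the scatter plot.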