Learning from Example - Computing Science and Mathematics

Learning from Example

• Given some data – build a model to make predictions

• Linear Models (Perceptrons).

• Support Vector Machines.

House Price for a given size

Many relationships we know are linear:

V=IR (Ohm’s law)

F=ma (Newton’s 2nd law)

PV=nRT (ideal gas law)

Can you think of any more?

Other laws are non-linear, e.g. Newton’s law of gravity, Moore’s law for processors, and the time for a ball to hit the ground.

Can you think of any more?

What is the price of a house at 2750 square feet?

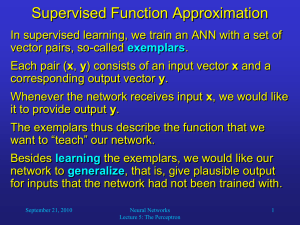

Perceptron – how to calculate?

Given an input, what is the output?

• Input vector X = (x1, x2, …, xn)

• Weight vector W = (w1, w2, …, wn)

• X.W = x1.w1 + x2.w2 + … + xn.wn

If X.W > 0 return true (1), else return false (0).
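The calculation above can be sketched in Java as follows. This is a minimal illustration, not code from the course: the class name `PerceptronOutput` and the example weights are hypothetical.

```java
class PerceptronOutput {
    // Dot product X.W = x1*w1 + x2*w2 + ... + xn*wn.
    static double dot(double[] x, double[] w) {
        if (x.length != w.length) throw new IllegalArgumentException("length mismatch");
        double sum = 0.0;
        for (int i = 0; i < x.length; i++) sum += x[i] * w[i];
        return sum;
    }

    // Threshold rule: true (1) if X.W > 0, otherwise false (0).
    static int output(double[] x, double[] w) {
        return dot(x, w) > 0 ? 1 : 0;
    }

    public static void main(String[] args) {
        double[] w = {0.5, -1.0, 0.25}; // hypothetical weights
        System.out.println(output(new double[]{1.0, 0.2, 1.0}, w)); // 0.5 - 0.2 + 0.25 > 0, prints 1
        System.out.println(output(new double[]{1.0, 1.0, 0.0}, w)); // 0.5 - 1.0 < 0, prints 0
    }
}
```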

Perceptron – how to learn

• Learning is about how to change the weights.

• This corresponds to moving the decision boundary around to find a better separation.

• This is linear algebra.

• Is there anything wrong with this approach?

While epoch produces an error
    Present network with next inputs (pattern) from epoch
    Err = T - O
    If Err <> 0 then
        Wj = Wj + LR * Ij * Err
    End If
End While

(T is the target output, O the actual output, LR the learning rate and Ij the jth input.)
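A minimal Java sketch of this learning loop, under assumptions that are ours rather than the slide's: weights start at zero, the first input is a constant 1 acting as a bias (the slide's rule has no separate threshold), and `maxEpochs` is an added safety cap. The class name `PerceptronTraining` is hypothetical.

```java
class PerceptronTraining {
    // Threshold output: 1 if X.W > 0, else 0.
    static int output(double[] x, double[] w) {
        double sum = 0.0;
        for (int j = 0; j < x.length; j++) sum += x[j] * w[j];
        return sum > 0 ? 1 : 0;
    }

    // Repeat epochs until one produces no error. maxEpochs is an assumed cap:
    // without it the loop never ends on data that is not linearly separable.
    static double[] train(double[][] inputs, int[] targets, double lr, int maxEpochs) {
        double[] w = new double[inputs[0].length]; // initial weights all zero (assumption)
        for (int epoch = 0; epoch < maxEpochs; epoch++) {
            boolean anyError = false;
            for (int p = 0; p < inputs.length; p++) {         // next pattern from the epoch
                int err = targets[p] - output(inputs[p], w);  // Err = T - O
                if (err != 0) {
                    anyError = true;
                    for (int j = 0; j < w.length; j++) {
                        w[j] += lr * inputs[p][j] * err;      // Wj = Wj + LR * Ij * Err
                    }
                }
            }
            if (!anyError) break;
        }
        return w;
    }
}
```

For example, training on logical AND with inputs `{1,0,0}, {1,0,1}, {1,1,0}, {1,1,1}` (bias first), targets `{0,0,0,1}` and `lr = 0.1` converges within a few epochs to weights that reproduce the AND truth table.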

2 layers for Boolean functions

A perceptron can only represent linearly separable functions (AND, OR, NAND, NOR).

It cannot represent all Boolean functions (e.g. XOR).

However, 2 layers are enough to represent any Boolean function.

This is disjunctive normal form, DNF (only two levels of expression are needed), e.g.

(x1 AND !x2) OR (!x2 AND x3) OR (x3 AND !x4)

2 layers for Boolean functions

• For each input vector, create a hidden node which fires if and only if this specific input vector is presented to the network.

• This produces a hidden layer which will always have exactly one node active (effectively a look up table)

• Implement the output layer as an OR function that activates just for the desired input patterns.
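The lookup-table construction above can be sketched in Java. This is an illustration under our own assumptions: threshold units that output 1 when the weighted sum is > 0, inputs coded 0/1, weights chosen by hand rather than learned, and hypothetical names (`TwoLayerBoolean`, `hidden`, `evaluate`).

```java
class TwoLayerBoolean {
    // Threshold unit: 1 if the weighted sum is > 0, else 0.
    static int step(double sum) { return sum > 0 ? 1 : 0; }

    // Hidden node for input pattern p (bit i of p = input i). Weights are +1 where p
    // has a 1 and -1 where it has a 0; the bias is 0.5 - (number of 1s in p). The sum is
    // 0.5 for an exact match and at most -0.5 otherwise, so the node fires only for p.
    static int hidden(int p, int[] x) {
        double sum = 0.5 - Integer.bitCount(p);
        for (int i = 0; i < x.length; i++) {
            double w = ((p >> i) & 1) == 1 ? 1.0 : -1.0;
            sum += w * x[i];
        }
        return step(sum);
    }

    // Output node: an OR over the hidden nodes of the patterns whose desired output is 1
    // (weight 1 from those, weight 0 from the rest, bias -0.5).
    // truthTable must have 2^n entries for n inputs.
    static int evaluate(int[] truthTable, int[] x) {
        double sum = -0.5;
        for (int p = 0; p < truthTable.length; p++) {
            sum += truthTable[p] * hidden(p, x);
        }
        return step(sum);
    }
}
```

With `int[] xor = {0, 1, 1, 0}` (patterns (0,0), (1,0), (0,1), (1,1), since input 0 is bit 0), the network computes XOR, which a single perceptron cannot.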

From Boolean to Real Values

• A perceptron can learn Boolean functions. It returns 0 or 1 (false or true).

• However, a robot might need to use real (continuous) values, e.g. to control angles/speeds.

• We can use perceptrons and artificial neural networks to learn real-valued functions.

• Actually, neural nets AND computers ARE continuous machines (electrical devices), but we have made them digital (to reduce copying errors, with great success).

Boolean to Real-Valued Functions

• We can interpret 0 = false and 1 = true.

• How else could we do it, e.g. +1 = T, -1 = F (any benefit?)

• What function does this represent? (Input is black, output is red.)

• How many decision boundaries are there?

• How many 2-input Boolean functions are linearly separable?

In Java: Boolean AND(Boolean x, Boolean y)

[Figure: the four input points labelled T/F, with the decision boundaries]
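One way to check the last question is brute force: try many threshold units and record which of the 16 possible truth tables they produce. The sketch below is our own, with a hypothetical class name `SeparableCount`. It assumes that weights in {-1, 0, 1} and biases in {-1.5, -0.5, 0.5, 1.5} are enough for two inputs (they are: each of the 14 separable functions has a unit on this grid).

```java
class SeparableCount {
    // Threshold unit on two Boolean inputs: 1 if w1*x1 + w2*x2 + b > 0, else 0.
    static int unit(double w1, double w2, double b, int x1, int x2) {
        return w1 * x1 + w2 * x2 + b > 0 ? 1 : 0;
    }

    // Encode the unit's truth table as a 4-bit code: bit p holds the output for
    // input pattern p, where p = 2*x1 + x2 runs over (0,0), (0,1), (1,0), (1,1).
    static int truthTable(double w1, double w2, double b) {
        int code = 0;
        for (int p = 0; p < 4; p++) {
            code |= unit(w1, w2, b, p >> 1, p & 1) << p;
        }
        return code;
    }

    // Mark every truth table reachable from the small weight/bias grid.
    static boolean[] realisable() {
        double[] ws = {-1, 0, 1};
        double[] bs = {-1.5, -0.5, 0.5, 1.5};
        boolean[] seen = new boolean[16];
        for (double w1 : ws)
            for (double w2 : ws)
                for (double b : bs)
                    seen[truthTable(w1, w2, b)] = true;
        return seen;
    }

    static int countSeparable() {
        int count = 0;
        for (boolean s : realisable()) if (s) count++;
        return count;
    }
}
```

The count comes out as 14 of 16: the two missing codes are 6 (XOR) and 9 (XNOR), which no single threshold unit can produce.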

Boolean to Real-Valued Functions

• Now all input values can be given real values.

In Java: Double AND(Double x, Double y), e.g. AND(0.5, 0.5) = -4.97

[Figure: the unit square with 0 and 1 marked on each axis; all points on one side of the decision boundary are 1 (POSITIVE), all points on the other side are 0 (NEGATIVE).]
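A real-valued AND can be sketched as a linear unit with no threshold, so any real inputs give a real output whose sign says which side of the boundary the point lies on. The weights here (w1 = w2 = 1, b = -1.5) are hypothetical and are not the weights behind the slide's AND(0.5, 0.5) = -4.97 example; the class name `RealValuedAnd` is also ours.

```java
class RealValuedAnd {
    // Hypothetical weights for the linear unit w1*x + w2*y + b.
    static final double W1 = 1.0, W2 = 1.0, B = -1.5;

    // Real-valued output: no threshold is applied, so inputs and output are continuous.
    static double and(double x, double y) {
        return W1 * x + W2 * y + B;
    }

    // The sign of the real output gives the Boolean class: positive -> 1, otherwise 0.
    static int classify(double x, double y) {
        return and(x, y) > 0 ? 1 : 0;
    }

    public static void main(String[] args) {
        System.out.println(and(1.0, 1.0)); // 0.5 (positive side)
        System.out.println(and(0.5, 0.5)); // -0.5 (negative side)
    }
}
```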

Regression

• Linear regression is the problem of being given a set of data points (x, y) and finding the best line y = mx + c that fits the data.

• You have to find m and c

• There is a well-known formula for this (18.3 in the book)

• It is also easy to prove (basic calculus).
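A sketch of the closed-form least-squares fit in Java, using the standard form m = (nΣxy - ΣxΣy) / (nΣx² - (Σx)²) and c = (Σy - mΣx) / n. We have not checked that this matches the notation of formula 18.3 in the book; the class name `LeastSquares` is hypothetical.

```java
class LeastSquares {
    // Returns {m, c} for the least-squares line y = m*x + c.
    static double[] fit(double[] x, double[] y) {
        int n = x.length;
        if (n < 2 || y.length != n) throw new IllegalArgumentException("need >= 2 paired points");
        double sx = 0, sy = 0, sxy = 0, sxx = 0;
        for (int i = 0; i < n; i++) {
            sx += x[i];
            sy += y[i];
            sxy += x[i] * y[i];
            sxx += x[i] * x[i];
        }
        double denom = n * sxx - sx * sx; // zero when all x are equal (vertical line)
        if (denom == 0) throw new IllegalArgumentException("all x values are equal");
        double m = (n * sxy - sx * sy) / denom;
        double c = (sy - m * sx) / n;
        return new double[]{m, c};
    }
}
```

For the points (0, 1), (1, 3), (2, 5), which lie exactly on y = 2x + 1, `fit` returns m = 2 and c = 1.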

Finding the weights.

• The weights w0 and w1 have a smooth (continuous and differentiable) error surface.

• The best value is unique.

• We can gradually move toward the global optimum.

• LOSS = error

[Figure panels: small learning rate; large learning rate]
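Moving gradually toward the optimum can be sketched as batch gradient descent on the squared loss Σ(y - (w0 + w1·x))². This is a minimal illustration: starting from zero weights, a fixed iteration count, the factor 2 of the gradient folded into the learning rate, and the class name `GradientDescent` are all our assumptions.

```java
class GradientDescent {
    // Batch gradient descent on the squared loss sum (y - (w0 + w1*x))^2.
    // Update rule: w0 += lr * sum(err), w1 += lr * sum(err * x).
    static double[] fit(double[] x, double[] y, double lr, int iterations) {
        double w0 = 0.0, w1 = 0.0; // start from zero (assumption)
        for (int it = 0; it < iterations; it++) {
            double g0 = 0.0, g1 = 0.0;
            for (int i = 0; i < x.length; i++) {
                double err = y[i] - (w0 + w1 * x[i]);
                g0 += err;
                g1 += err * x[i];
            }
            w0 += lr * g0;
            w1 += lr * g1;
        }
        return new double[]{w0, w1};
    }
}
```

On the points (0, 1), (1, 3), (2, 5) with `lr = 0.05`, 2000 iterations reach w0 = 1, w1 = 2 (the unique optimum) to well within 1e-6. For this particular data any rate above about 0.28 makes the updates overshoot and grow without bound, which is the large-learning-rate picture.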

Earthquake or Nuclear Explosion?

• X1 is body wave magnitude

• X2 is surface wave magnitude

• White circles are earthquakes.

• Black circles are nuclear explosions.

• Given the dotted line we can make predictions about new wave data.

More Noisy Data

• With more data we cannot divide the two types of vibration into two separate sets – but we can still make quite good predictions.

Linearly Separable

• Look at where the teacher and students are in the class now.

• Is it possible to draw a straight line between the teacher and the students?

• Is it possible to draw a straight line between the female students and the male students?

A Geometry Problem

• A man walks one mile south, one mile east and one mile north, and ends up back where he started.

• Is this possible? HOW?

• Are there 0, 1, or many solutions?

Linearly Separable – in one dimension

[Figure: the marks 25, 35, 45, 55 on a number line, split into FAIL and PASS]

• If a student gets less than 40 percent in an exam they FAIL otherwise they PASS.

• Imagine some students get the following marks

• 25, 35, 45, 55 – can we linearly separate them?

• If MARK < 40 FAIL else PASS.

• Which is better for students: a binary (Boolean) value or a numeric mark (an integer or real value)?
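In one dimension, a labelled set is linearly separable exactly when one class lies entirely below the other, so a single threshold splits them. A small Java sketch of that check, plus the slide's marking rule; the class and method names are hypothetical.

```java
class Separable1D {
    // True if some threshold puts every 'true' value on one side and every
    // 'false' value on the other (in either direction).
    static boolean separable(double[] v, boolean[] label) {
        double minT = Double.POSITIVE_INFINITY, maxT = Double.NEGATIVE_INFINITY;
        double minF = Double.POSITIVE_INFINITY, maxF = Double.NEGATIVE_INFINITY;
        for (int i = 0; i < v.length; i++) {
            if (label[i]) { minT = Math.min(minT, v[i]); maxT = Math.max(maxT, v[i]); }
            else          { minF = Math.min(minF, v[i]); maxF = Math.max(maxF, v[i]); }
        }
        return maxF < minT || maxT < minF;
    }

    // The slide's rule: if MARK < 40 FAIL else PASS.
    static String grade(int mark) { return mark < 40 ? "FAIL" : "PASS"; }
}
```

For the marks 25, 35, 45, 55 labelled FAIL, FAIL, PASS, PASS, `separable` returns true.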

Boolean or Real-valued feedback

• Boolean gives only two types of feedback (pass/fail).

• A real value gives more detailed information and makes learning easier.

• Example of parking a car: indicating the distance versus shouting "stop".

• Example of a sniper and bullets: with tracers you can see where a shot hit, not just whether it hit or missed.

25: BAD FAIL, 35: JUST FAIL, 45: JUST PASS, 55: GOOD PASS

EVEN ODD

• 0, 2, 4, 6 are even; 1, 3, 5 are odd (not even).

• What about 8 and 7? What about 7.5?

• In Java: (x%2==0)

• (5! = 1.2.3.4.5 = 120; what is 5.4!?)

• Can we linearly separate the two classes of numbers?

2, 3, 4, 5: even, odd, even, odd
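A Java sketch of the slide's even test, plus a count of how often the class flips along consecutive integers. A single threshold can only separate the classes if the class changes at most once, and here it changes at every step. The names `EvenOdd` and `classChanges` are hypothetical.

```java
class EvenOdd {
    // The slide's test. It also works for negative numbers, because -4 % 2 == 0 in Java.
    // Beware the "odd" test x % 2 == 1: it fails for negatives, since -3 % 2 == -1.
    static boolean isEven(int x) { return x % 2 == 0; }

    // Number of times the class changes between consecutive integers from..to.
    // A single threshold can separate the classes only if this is at most 1.
    static int classChanges(int from, int to) {
        int changes = 0;
        for (int x = from; x < to; x++) {
            if (isEven(x) != isEven(x + 1)) changes++;
        }
        return changes;
    }
}
```

For 2, 3, 4, 5 the class changes 3 times, so no single threshold separates even from odd. Note that `isEven` takes an `int`, so 7.5 has no answer until we choose to define one.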

Are prime numbers linearly separable?

• Prime numbers: natural numbers greater than 1 that have no positive divisors other than 1 and themselves.

• Examples: 2, 3, 5, 7, 11, 13, 17, 19, 23, 29

• Is 2.3 prime? What does this mean?

• We can give it a meaning (if we want to!) by defining a function on floats/doubles/reals that agrees with the prime function on integers.
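A Java sketch of the prime test by trial division, and one possible (hypothetical) extension to doubles in which any non-integer is simply not prime. This is only one choice of meaning; the names `Primes` and `isPrimeReal` are ours.

```java
class Primes {
    // Trial division: n is prime if n > 1 and no d with d*d <= n divides it.
    static boolean isPrime(long n) {
        if (n < 2) return false;
        for (long d = 2; d * d <= n; d++) {
            if (n % d == 0) return false;
        }
        return true;
    }

    // Hypothetical extension to reals: a non-integer (or NaN/infinity) is not prime;
    // an integer-valued double is tested as a long.
    static boolean isPrimeReal(double x) {
        return x == Math.rint(x) && Math.abs(x) < 9.0e15 && isPrime((long) x);
    }
}
```

Under this definition 2.3 is not prime and 7.0 is, so the set of "prime reals" is still scattered along the line, with no single threshold separating it from the non-primes.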

Types of Neural Network Architecture

• Typically, textbooks introduce one-layer neural networks (perceptrons) and multi-layer neural networks.

• Do we need more?

• Ragged and recurrent networks.

1, 2 or 3 layer Neural Networks

• One layer (a perceptron) defines a linear surface.

• Two layers can define convex hulls (and therefore any Boolean function).

• Three layers can define any function.

In general, for the 2- and 3-layer cases, there is no simple way to determine the weights.

Hyper-planes

• Given two classes of data (o, x) which are linearly separable,

• there are typically many (infinitely many) ways in which they can be separated.

• With a perceptron, each of the following can lead to a different decision boundary:

– a different initial set of weights

– a different learning parameter (alpha)

– a different order of presenting the input/output data.
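The effect of presentation order can be shown with a small Java sketch: the same perceptron rule, the same zero initial weights and the same data (logical AND, with a constant bias input of 1 as the first component, which is our assumption) give two different but equally correct weight vectors. The class name `DifferentBoundaries` is hypothetical.

```java
import java.util.Arrays;

class DifferentBoundaries {
    static int output(double[] x, double[] w) {
        double s = 0.0;
        for (int j = 0; j < x.length; j++) s += x[j] * w[j];
        return s > 0 ? 1 : 0;
    }

    // Perceptron rule Wj = Wj + LR * Ij * Err, presenting patterns in the given order.
    static double[] train(double[][] x, int[] t, int[] order, double lr) {
        double[] w = new double[x[0].length]; // start from zero weights
        boolean error = true;
        for (int epoch = 0; error && epoch < 100; epoch++) {
            error = false;
            for (int p : order) {
                int err = t[p] - output(x[p], w);
                if (err != 0) {
                    error = true;
                    for (int j = 0; j < w.length; j++) w[j] += lr * x[p][j] * err;
                }
            }
        }
        return w;
    }

    public static void main(String[] args) {
        double[][] x = {{1, 0, 0}, {1, 0, 1}, {1, 1, 0}, {1, 1, 1}}; // bias input first
        int[] t = {0, 0, 0, 1};                                       // logical AND
        System.out.println(Arrays.toString(train(x, t, new int[]{0, 1, 2, 3}, 1.0))); // [-2.0, 2.0, 1.0]
        System.out.println(Arrays.toString(train(x, t, new int[]{3, 2, 1, 0}, 1.0))); // [-2.0, 1.0, 2.0]
    }
}
```

Both weight vectors classify all four AND patterns correctly, yet they define different lines. In this particular sketch, because training starts from zero weights, changing the learning rate only rescales the weights and leaves the boundary unchanged; the learning rate matters once the initial weights are non-zero.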

Decision Boundary for AND

• On the right is a graphical version of LOGICAL AND.

• Where is the ‘best’ position for the decision boundary?

[Figure: the four input points of logical AND, labelled T/F, with candidate decision boundaries]

The decision boundary

• The best decision boundary can be described informally.

• In this case the solid black line has the maximum separation between the two classes.

Geometric Interpretation

• Maximize the distance between the two classes.

• The margin is the width of the gap that separates the two classes.

• We choose the separating line parallel to the edges of the margin and midway between them, as this is the line that is most likely to represent the samples of data.
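The margin of a candidate line can be computed directly: it is the smallest signed distance from the line to any labelled point. A Java sketch, with labels coded +1/-1 and the hypothetical class name `Margin`, lets us compare two boundaries for logical AND.

```java
class Margin {
    // Geometric margin of the line w1*x1 + w2*x2 + b = 0 on labelled points (label +1 or -1):
    // the smallest value of label * (w.x + b) / |w| over all points.
    // A negative result means some point is on the wrong side.
    static double margin(double w1, double w2, double b, double[][] pts, int[] labels) {
        double norm = Math.sqrt(w1 * w1 + w2 * w2);
        double min = Double.POSITIVE_INFINITY;
        for (int i = 0; i < pts.length; i++) {
            double d = labels[i] * (w1 * pts[i][0] + w2 * pts[i][1] + b) / norm;
            min = Math.min(min, d);
        }
        return min;
    }
}
```

For AND (only (1,1) positive), the line x1 + x2 = 1.5 has margin 0.5/√2 ≈ 0.354, with (1,0), (0,1) and (1,1) all at that distance (the support vectors). The line x1 + x2 = 1.2 also separates the data but has a smaller margin, about 0.141.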

[Figure: the decision boundary with the maximum margin, with the support vectors and the margin labelled]

Transform from 2D to 3D

[Figure: a non-linear decision boundary in 2D becomes a linear decision boundary in 3D]

A 2D (x1, x2) coordinate maps to a 3D coordinate (f1, f2, f3)

CLASS EXERCISE: DO THE FOLLOWING EXAMPLES (ANSWERS ON THE NEXT SLIDE). f1 = x1*x1, f2 = x2*x2, f3 = 1.41*x1*x2

(0,0) -> (?,?,?)

(0,1) -> (?,?,?)

(-1, -1) -> (?,?,?)

Mapping from 2D to 3D

f1 = x1*x1, f2 = x2*x2, f3 = 1.41*x1*x2

(0,0) -> (0,0,0)

(0,1) -> (0,1,0)

(-1, -1) -> (1,1, 1.41)

This is like lifting the middle of a bed-sheet up!

A linear separator in F-space is non-linear in x-space
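The mapping can be sketched in Java, together with one hypothetical separator: the plane f1 + f2 = r² in F-space, which is the circle x1² + x2² = r² back in x-space. The constant 1.41 follows the slides (it approximates √2); the class name `FeatureMap` and the choice of separator are ours.

```java
class FeatureMap {
    // f1 = x1*x1, f2 = x2*x2, f3 = 1.41*x1*x2 (1.41 approximates the square root of 2).
    static double[] map(double x1, double x2) {
        return new double[]{x1 * x1, x2 * x2, 1.41 * x1 * x2};
    }

    // Hypothetical linear separator in F-space: f1 + f2 < r*r (f3 has weight 0).
    // In x-space this is the inside of the circle x1^2 + x2^2 = r^2: a non-linear boundary.
    static boolean inside(double x1, double x2, double r) {
        double[] f = map(x1, x2);
        return f[0] + f[1] < r * r;
    }
}
```

`map(-1, -1)` gives (1, 1, 1.41), matching the slide, and with r = 1 the point (0, 0) is inside while (-1, -1) is outside.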

Terminology

[Figure: x and o points split by the maximum-margin separator, with the support vectors and the margin labelled]

Activation Functions

• Different activation functions can be used.

• Usually monotonic and non-linear.
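Some common activation functions, sketched in Java. The selection is ours, not the slide's: the step function used by the perceptron, the logistic sigmoid, tanh, and ReLU. All four are non-decreasing and non-linear. The class name `Activations` is hypothetical.

```java
class Activations {
    static double step(double s) { return s > 0 ? 1.0 : 0.0; }               // threshold, as in the perceptron
    static double sigmoid(double s) { return 1.0 / (1.0 + Math.exp(-s)); }   // smooth, range (0, 1)
    static double tanh(double s) { return Math.tanh(s); }                    // smooth, range (-1, 1)
    static double relu(double s) { return Math.max(0.0, s); }                // 0 for s < 0, else s
}
```

The smooth ones (sigmoid, tanh) are differentiable everywhere, which is what gradient-based weight updates need; the step function is not.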

ANN and Game Playing.

• How can we get an ANN to play Othello, Connect 4 or tic-tac-toe (noughts and crosses)?

• The input is an n × n board (fixed, e.g. 3 by 3 in OXO).

• We could give the value 1 for your piece, -1 for the opponent's piece, and 0 for an empty square.

• We could take 9 outputs and place the piece at the unoccupied square whose output has the maximum value.
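The input coding and move selection above can be sketched in Java for a 3 × 3 board stored as 9 characters. The network itself is not shown: the `outputs` array stands for whatever 9 values a trained network produces, and the class name `TicTacToeInput` and the character coding (`' '` for empty) are assumptions.

```java
class TicTacToeInput {
    // Encode the board as 9 inputs: 1 = your piece, -1 = opponent's piece, 0 = empty (' ').
    static double[] encode(char[] board, char me) {
        double[] in = new double[board.length];
        for (int i = 0; i < board.length; i++) {
            if (board[i] == me) in[i] = 1.0;
            else if (board[i] == ' ') in[i] = 0.0;
            else in[i] = -1.0;
        }
        return in;
    }

    // Pick the unoccupied square whose network output is largest; -1 if the board is full.
    static int chooseMove(char[] board, double[] outputs) {
        int best = -1;
        for (int i = 0; i < board.length; i++) {
            if (board[i] == ' ' && (best == -1 || outputs[i] > outputs[best])) best = i;
        }
        return best;
    }
}
```

Skipping occupied squares matters: the largest output may well point at a square that is already taken.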

Hidden layers

• Often we have no guidance about the number of hidden nodes, or the way they are connected.

• Typically they are fully connected.

• With image recognition, typically neighboring cells on the retina are locally connected.

• How can we code a color image for an ANN?
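One common answer to the last question, sketched in Java under our own assumptions: use three inputs per pixel (red, green, blue), each scaled from 0..255 to 0.0..1.0, concatenated row by row. Pixels are assumed to be packed as `0xRRGGBB` ints; the class name `ColorImageInput` is hypothetical. Other codings (e.g. a different color space or separate input planes) are equally possible.

```java
class ColorImageInput {
    // rgb[row][col] holds a pixel packed as 0xRRGGBB.
    // Output: 3 inputs per pixel (R, G, B), each scaled to 0.0..1.0, row by row.
    static double[] encode(int[][] rgb) {
        int rows = rgb.length, cols = rgb[0].length;
        double[] in = new double[rows * cols * 3];
        int k = 0;
        for (int r = 0; r < rows; r++) {
            for (int c = 0; c < cols; c++) {
                int p = rgb[r][c];
                in[k++] = ((p >> 16) & 0xFF) / 255.0; // red
                in[k++] = ((p >> 8) & 0xFF) / 255.0;  // green
                in[k++] = (p & 0xFF) / 255.0;         // blue
            }
        }
        return in;
    }
}
```

A 100 × 100 color image therefore needs 30,000 inputs, which is one reason locally connected layers are attractive for images.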

Advantages of ANN

• A computer program is brittle: if you change one instruction, the function computed can change completely, so learning by changing a program can be hard.

• With an ANN, we can gradually alter the weights and the function changes gradually (so-called graceful degradation; cf. human memory).

Advantages of SVM

A perceptron depends on the initial weights and the learning rate.

A perceptron may give a different answer each time; an SVM gives a unique and best answer.

A perceptron can oscillate when training, and will not converge if the data is not linearly separable. An SVM will find the best solution it can, given the data it has.

• U:\2nd Year Share\Computer Science Division\Semester Two\IAI(Introduction to Artificial Intelligence)\BostonDynamics