Chapter 4.
#1 Pipeline stalls are necessary when an instruction must wait due to unresolved hazards or resource conflicts, effectively pausing the instruction flow and allowing the pipeline to synchronize and resolve the _________.
#2 An _________ occurs when instructions i and j write the same register or memory location. The ordering between the instructions must be preserved to ensure that the value finally written corresponds to instruction j.
#3 __________ is a technique to get more performance from loops that access arrays, in which multiple copies of the loop body are made and instructions from different iterations are scheduled together.
#4 Many superscalars extend the basic framework of dynamic issue decisions to include __________, which chooses which instructions to execute in a given clock cycle while trying to avoid hazards and stalls.
#5 Explain the three primary units of a dynamically scheduled pipeline.
#6 _______ is a situation in pipelined execution when an instruction blocked from executing does not cause the following instructions to wait.
#7 _______ is a small memory that is indexed by the lower portion of the address of the branch instruction and that contains one or more bits indicating whether the branch was recently taken or not.
#8 Consider a loop branch that branches nine times in a row, and then is not taken once. What is the prediction accuracy for this branch, assuming the prediction bit for this branch remains in the prediction buffer?
#9 Explain the 2-bit prediction scheme.
#10 Generally, we can use the term ________ to refer to any unexpected change in control flow without distinguishing whether the cause is internal or external; we can use the term ________ only when the event is externally caused.
#11 In a __________, the address to which control is transferred is determined by the cause of the exception, possibly added to a base register that points to the memory range for them.
#12 ________ is a style of instruction set architecture that launches many operations that are defined to be independent in a single wide instruction, typically with many separate opcode fields.
#13 An __________ between instructions i and j occurs when instruction j writes a register or memory location that instruction i reads. The original ordering must be preserved to ensure that i reads the correct value.
#14 Generally, the term _________ can refer to any unexpected change in control flow without distinguishing the cause, while the term _________ refers to externally caused events.
#15 In a _________, the address to which control is transferred is determined by the cause of the exception, possibly added to a base register.
#16 A pipeline _________ is a temporary halt in the execution of instructions to resolve data hazards or control hazards.
#17 Dynamic issue decisions in superscalars are extended to include _________, which chooses which instructions to execute in a given clock cycle to avoid hazards.
#18 Static branch prediction relies on predetermined patterns, such as assuming a branch will always be taken or not taken, based on typical branch behavior. This simplifies branch prediction but may not accurately reflect dynamic _________ patterns.
#19 Dynamic branch prediction, on the other hand, uses hardware to track the actual behavior of branches over time and adapt predictions accordingly, handling branches more accurately by learning from recent _________ outcomes.
#20 Critical path analysis determines the longest delay in a pipeline stage, which sets the clock period and overall pipeline performance, highlighting the importance of minimizing delays to enhance _________.
#21 Forwarding, also referred to as bypassing, is a technique used to resolve data hazards by directly routing the output of one pipeline stage to a subsequent stage without waiting for it to be written back to the register file, thus reducing unnecessary _________.
#22 The three primary units of a dynamically scheduled pipeline include the _________ unit, _________ unit, and the _________ unit.
#23
#24
#25

Chapter 5
#1. ________ locality is the locality principle stating that if a data location is referenced then it will tend to be referenced again soon.
#2. ________ locality is the locality principle stating that if a data location is referenced, data locations with nearby addresses will tend to be referenced soon.
#3. ________ is a structure that uses multiple levels of memories; as the distance from the processor increases, the size of the memories and the access time both increase while the cost per bit decreases.
#4. Which of the following statements are generally true? 1. Memory hierarchies take advantage of temporal locality. 2. On a read, the value returned depends on which blocks are in the cache. 3. Most of the cost of the memory hierarchy is at the highest level. 4. Most of the capacity of the memory hierarchy is at the lowest level.
#5. _______ is the time required for the desired sector of a disk to rotate under the read/write head; usually assumed to be half the rotation time.
#6. How many total bits are required for a direct-mapped cache with 16 KiB of data and four-word blocks, assuming a 64-bit address?
#7. Consider a cache with 64 blocks and a block size of 16 bytes. To what block number does byte address 1200 map?
#8. _______ is a scheme that handles writes by updating values only to the block in the cache, then writing the modified block to the lower level of the hierarchy.
#9. _______ is a scheme in which a level of the memory hierarchy is composed of two independent caches that operate in parallel with each other, with one handling instructions and one handling data.
#10. Assume the miss rate of an instruction cache is 2% and the miss rate of the data cache is 4%. If a processor has a CPI of 2 without any memory stalls, and the miss penalty is 100 cycles for all misses, determine how much faster a processor would run with a perfect cache that never missed. Assume the frequency of all loads and stores is 36%.
#11. Find the average memory access time (AMAT) for a processor with a 1 ns clock cycle time, a miss penalty of 20 clock cycles, a miss rate of 0.05 misses per instruction, and a cache access time (including hit detection) of 1 clock cycle. Assume that the read and write miss penalties are the same and ignore other write stalls.
#12. Assume there are three small caches, each consisting of four one-word blocks. One cache is fully associative, a second is two-way set associative, and the third is direct-mapped. Find the number of misses for each cache organization given the following sequence of block addresses: 0, 8, 0, 6, and 8.
#13. Increasing associativity requires more comparators and more tag bits per cache block. Assuming a cache of 4096 blocks, a four-word block size, and a 32-bit address, find the total number of sets and the total number of tag bits for caches that are direct-mapped, two-way and four-way set associative, and fully associative.
#14. Suppose we have a processor with a base CPI of 1.0, assuming all references hit in the primary cache, and a clock rate of 4 GHz. Assume a main memory access time of 100 ns, including all the miss handling. Suppose the miss rate per instruction at the primary cache is 2%. How much faster will the processor be if we add a secondary cache that has a 5 ns access time for either a hit or a miss and is large enough to reduce the miss rate to main memory to 0.5%?
#15. Assume one byte data value is 10011010₂. First show the Hamming ECC code for that byte, and then invert bit 10 and show that the ECC code finds and corrects the single bit error. Leaving spaces for the parity bits, the 12-bit pattern is _ _ 1 _ 0 0 1 _ 1 0 1 0.
#16. ______ is a cache that keeps track of recently used address mappings to try to avoid an access to the page table.
#17. In a memory hierarchy like that of Figure 5.30, which includes a TLB and a cache organized as shown, a memory reference can encounter three different types of misses: a TLB miss, a page fault, and a cache miss. Consider all the combinations of these three events with one or more occurring (seven possibilities). For each possibility, state whether this event can actually occur and under what circumstances.
#18. ______ addressed cache: A cache that is accessed with a virtual address rather than a physical address. ______ addressed cache: A cache that is addressed by a physical address.
#19. ______ is a changing of the internal state of the processor to allow a different process to use the processor that includes saving the state needed to return to the currently executing process.
#20. ______ is a cache miss that occurs in a set-associative or direct-mapped cache when multiple blocks compete for the same set and that is eliminated in a fully associative cache of the same size.
#21. Given that a multicore multiprocessor means multiple processors on a single chip, these processors very likely share a common physical address space. Caching shared data introduces a new problem, because the view of memory held by two different processors is through their individual caches, which, without any additional precautions, could end up seeing two distinct values. Figure 5.40 illustrates the problem and shows how two different processors can have two different values for the same location. This difficulty is generally referred to as the _______ problem.
#22. In _________ architecture, multiple instructions can be issued per clock cycle, increasing the instruction throughput of the processor.
#23. _________ execution allows instructions to be executed as resources become available, rather than strictly in the order they appear, improving performance by better utilizing available execution units.
#24. ________ is a special cache that stores recent translations of virtual addresses to physical addresses, speeding up memory access.
#25. When data that is not reused frequently fills up the cache, it leads to _________, which wastes cache space and degrades performance.
#26. If a virtual memory system has 2^48 bytes of virtual address space and a page size of 4 KiB, how many pages can it address?
#27. A processor has a CPI of 2 without memory stalls, and the miss penalty is 50 cycles. If the instruction cache has a miss rate of 3%, what is the effective CPI?
#28. Consider a two-level cache hierarchy where the Level 1 (L1) cache has a hit rate of 95% and the Level 2 (L2) cache has a hit rate of 90%. The access time for the L1 cache is 1 ns, the access time for the L2 cache is 10 ns, and the access time for main memory is 100 ns. Calculate the overall average memory access time (AMAT).
#29. A disk has a track-to-track seek time of 3 ms and a full stroke seek time of 10 ms. If the average seek time is typically one-third of the full stroke seek time, calculate the average seek time for this disk.

Chapter 6
#1 According to _______ law, the potential speedup of a process using multiple processors is limited by the time needed for the sequential portions of the process.
#2 ______ scaling is speed-up achieved on a multiprocessor without increasing the size of the problem. ____ scaling is speed-up achieved on a multiprocessor while increasing the size of the problem proportionally to the increase in the number of processors.
#3 Strong scaling evaluates a system's performance improvement as more processors are added while keeping the problem size _______. In weak scaling, the system's performance is measured as the problem size increases in _______ with the number of processors, maintaining a constant workload per processor.
#4 To achieve the speed-up of 20.5 on the previous larger problem with 40 processors, we assumed the load was perfectly balanced. That is, each of the 40 processors had 2.5% of the work to do. Instead, show the impact on speed-up if one processor’s load is higher than all the rest. Calculate at twice the load (5%) and five times the load (12.5%) for that hardest working processor. How well utilized are the rest of the processors?
#5 ______ is a set of computers connected over a local area network that function as a single large multiprocessor.
#6 True or false: Clusters have separate memories and thus need many copies of the operating system.
#7 _______ is a parallel computing architecture developed by NVIDIA, allowing developers to use GPUs for general-purpose processing tasks beyond graphics.
#8 True or false: GPUs rely on graphics DRAM chips to reduce memory latency and thereby increase performance on graphics applications.
#9 A _______ Architecture is designed to handle specific types of tasks or applications more efficiently than general-purpose processors, often used in areas like AI and graphics.
#10 A _______ Processing Unit is specialized hardware developed by Google to accelerate machine learning workloads and improve the efficiency of neural network computations.
#11 True or false: DSAs are more effective than CPUs or GPUs in their domains primarily because you can justify using a much larger die for a domain.
#12 Explain the roofline model.
#13 The _______ model provides a graphical representation of a computer system’s performance based on its computational intensity and memory bandwidth.
#14 _______ Memory Access describes a memory architecture where the access time to memory is the same for all processors in the system. In _______ Memory Access, memory access times vary depending on the processor’s distance from the memory location, impacting performance.
#15 ______ is a type of single address space multiprocessor in which some memory accesses are much faster than others depending on which processor asks for which word.
#16 True or false: Shared memory multiprocessors cannot take advantage of task-level parallelism.
#17 ______ is a parallel processor with a single physical address space.
#18 _______ allows multiple processors to access shared memory and I/O devices in a symmetrical and balanced manner, enhancing performance.
#19 Cache _______ mechanisms ensure that all processors in a multiprocessor system have a consistent view of shared memory, preventing data inconsistency.
#20 ______ is a version of multithreading that lowers the cost of multithreading by utilizing the resources needed for multiple issue, dynamically scheduled microarchitecture.
#21 ______ is a version of hardware multithreading that implies switching between threads after every instruction. ______ is a version of hardware multithreading that implies switching between threads only after significant events, such as a last-level cache miss.
#22 True or false: Both multithreading and multicore rely on parallelism to get more efficiency from a chip.
#23 True or false: Simultaneous multithreading (SMT) uses threads to improve resource utilization of a dynamically scheduled, out-of-order processor.
#24 ______ is the conventional MIMD programming model, where a single program runs across all processors.
#25 True or false: As exemplified in the x86, multimedia extensions can be thought of as a vector architecture with short vectors that support only contiguous vector data transfers.
#26 _______ is a cloud computing model where software applications are delivered over the Internet, making them accessible from anywhere and scalable on demand.
#27 In a shared memory system, each of the 16 processors has a local cache. If the cache hit rate is 95% and the average memory access time is 100 nanoseconds for a cache hit and 500 nanoseconds for a cache miss, what is the average memory access time?
#28 Calculate the speedup achieved on a 20-processor system if the workload is 85% parallelizable and the remaining 15% must be executed sequentially.
#29 In a 32-processor NUMA system, the average remote memory access latency is 300 nanoseconds, and the local memory access latency is 100 nanoseconds. If 25% of the memory accesses are remote, what is the overall average memory access latency?
#30 A program that initially takes 250 seconds to run on a single processor has a parallel fraction of 70%. How long will it take to run on 25 processors?
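
For checking work on the numeric Chapter 6 questions (#27 to #30), the arithmetic can be sketched in a few lines of Python. This is a minimal sketch, not an official answer key. The function names `speedup` and `weighted_access_time` are hypothetical, the speedup formula is the standard 1 / ((1 - f) + f / p) with perfectly balanced load and no parallel overhead, and #27 and #29 are read as simple weighted averages of the two stated latencies.

```python
def speedup(parallel_fraction: float, processors: int) -> float:
    """Speedup when only `parallel_fraction` of the work scales with processors.

    Assumes perfect load balance and no communication overhead.
    """
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


def weighted_access_time(fast_fraction: float, fast_ns: float, slow_ns: float) -> float:
    """Average latency when `fast_fraction` of accesses take `fast_ns`
    and the remainder take `slow_ns` (assumed interpretation of #27 and #29)."""
    return fast_fraction * fast_ns + (1.0 - fast_fraction) * slow_ns


if __name__ == "__main__":
    # #27: 95% hits at 100 ns, 5% misses at 500 ns -> about 120 ns
    print(f"#27 average access time: {weighted_access_time(0.95, 100, 500):.1f} ns")

    # #28: 85% parallel on 20 processors -> about 5.19x
    print(f"#28 speedup: {speedup(0.85, 20):.2f}x")

    # #29: 75% local at 100 ns, 25% remote at 300 ns -> about 150 ns
    print(f"#29 average latency: {weighted_access_time(0.75, 100, 300):.1f} ns")

    # #30: 250 s on one processor, 70% parallel, 25 processors -> about 82 s
    print(f"#30 run time: {250 / speedup(0.70, 25):.1f} s")
```

The processor counts given in #27 (16) and #29 (32) do not enter these averages; under the assumptions above only the hit or locality fractions and the two latencies matter.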

0

0

advertisement

Download

advertisement

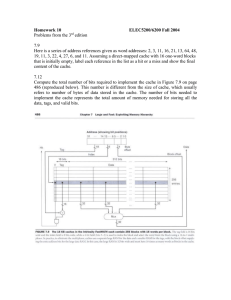

Add this document to collection(s)

You can add this document to your study collection(s)

Sign in Available only to authorized usersAdd this document to saved

You can add this document to your saved list

Sign in Available only to authorized users