
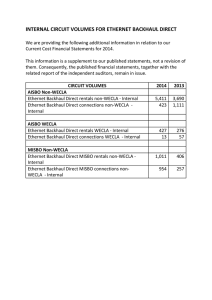

5G Backhaul and Fronthaul: Technologies and Architectures
